
16.322 Stochastic Estimation and Control, Fall 2004

Prof. Vander Velde

Lecture 10

Last time: Random Processes

With f ( x, t ) we can compute all of the usual statistics.

Mean value:

$$\overline{x(t_1)} = \int_{-\infty}^{\infty} x\, f(x, t_1)\, dx$$

Mean squared value:

$$\overline{x(t_1)^2} = \int_{-\infty}^{\infty} x^2 f(x, t_1)\, dx$$
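As a rough numerical illustration of the two integrals above, the sketch below evaluates them with the trapezoidal rule. The density is an assumption made only for this example: a unit-variance Gaussian whose mean equals t, so the expected answers are t1 and t1² + 1. The names `gaussian_density` and `moment` are hypothetical helpers, not part of the lecture.

```python
import math

def gaussian_density(x, t):
    """Hypothetical first-order density f(x, t): unit-variance Gaussian with mean t."""
    return math.exp(-0.5 * (x - t) ** 2) / math.sqrt(2.0 * math.pi)

def moment(order, t, density=gaussian_density, half_width=12.0, steps=20000):
    """Approximate the integral of x**order * f(x, t) dx with the trapezoidal rule."""
    lo, hi = t - half_width, t + half_width
    dx = (hi - lo) / steps
    total = 0.0
    for i in range(steps + 1):
        x = lo + i * dx
        weight = 0.5 if i in (0, steps) else 1.0  # end points get half weight
        total += weight * x ** order * density(x, t)
    return total * dx

t1 = 2.0
mean_value = moment(1, t1)          # analytically t1 for this assumed density
mean_squared_value = moment(2, t1)  # analytically t1**2 + 1 for this assumed density
```

Because the assumed density depends on t, both statistics change with the sampling time t1, which is the general (non-stationary) situation.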

Higher order distribution and density functions. You can define these

distributions of any order.

$$F(x_1, t_1; x_2, t_2; \ldots; x_n, t_n) = P\left[x(t_1) \le x_1,\; x(t_2) \le x_2,\; \ldots,\; x(t_n) \le x_n\right]$$

F is the probability that one member of the ensemble x satisfies each of these constraints at times $t_i$.

$$f(x_1, t_1; x_2, t_2; \ldots; x_n, t_n) = \frac{\partial^n}{\partial x_1\, \partial x_2 \cdots \partial x_n} F(x_1, t_1; x_2, t_2; \ldots; x_n, t_n)$$

But we rarely work with distributions higher than second order.

A very important statistic of a random process for the study of random processes in linear systems is the autocorrelation function, $R_{xx}$: the correlation of $x(t_1)$ and $x(t_2)$.

$$R_{xx}(t_1, t_2) = \int_{-\infty}^{\infty} dx_1 \int_{-\infty}^{\infty} dx_2\; x_1 x_2\, f(x_1, t_1; x_2, t_2) = E\left[x(t_1)\, x(t_2)\right]$$

This could be computed as a moment of the second order density function (as

above), but we usually just specify or measure the autocorrelation function

directly.

Notice that the autocorrelation function and the first order probability density

function express different kinds of information about the random process. Two

different processes might have the same pdfs but quite different Rxx (τ ) s.

Conversely, they might have the same Rxx (τ ) but completely different pdfs.
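A small simulation can make the first of these statements concrete. The two processes below are assumptions chosen for illustration only: in process A each member holds one random sign for all time, while in process B each member draws a fresh independent sign at every sample time. Both have the same first-order distribution (±1 with probability 1/2, a discrete stand-in for a pdf), yet their autocorrelations at distinct times differ completely.

```python
import random

def constant_member(rng, n_samples):
    """Member of process A: one random sign held for all time."""
    level = rng.choice((-1.0, 1.0))
    return [level] * n_samples

def flipping_member(rng, n_samples):
    """Member of process B: an independent random sign at every sample time."""
    return [rng.choice((-1.0, 1.0)) for _ in range(n_samples)]

def ensemble_autocorrelation(members, i, j):
    """Estimate R_xx(t_i, t_j) as the ensemble average of x(t_i) * x(t_j)."""
    return sum(m[i] * m[j] for m in members) / len(members)

rng = random.Random(0)  # fixed seed so the sketch is repeatable
ensemble_a = [constant_member(rng, 4) for _ in range(20000)]
ensemble_b = [flipping_member(rng, 4) for _ in range(20000)]

r_a = ensemble_autocorrelation(ensemble_a, 0, 3)  # equals 1: the two samples are identical
r_b = ensemble_autocorrelation(ensemble_b, 0, 3)  # near 0: the two samples are independent
```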


This is called the autocorrelation function for the random process {x (t )} . Note

some useful properties:

$$R_{xx}(t, t) = E\left[x(t)\, x(t)\right] = E\left[x(t)^2\right] = \overline{x(t)^2}$$

$$R_{xx}(t_2, t_1) = E\left[x(t_2)\, x(t_1)\right] = E\left[x(t_1)\, x(t_2)\right] = R_{xx}(t_1, t_2)$$

Also note that $x(t_2)$ is likely to be independent of $x(t_1)$ if $|t_2 - t_1|$ is large. For that case:

$$\lim_{|t_2 - t_1| \to \infty} R_{xx}(t_1, t_2) = E\left[x(t_1)\right] E\left[x(t_2)\right] = \overline{x(t_1)}\;\overline{x(t_2)}$$

To study the statistical interrelation among more than one random process we need to consider their joint distributions.

The members of the processes {x(t)} and {y(t)} must be associated as corresponding pairs of functions: there is a particular y which goes with each x. Examples include the elevation and azimuth of a radar tracker.

The general joint distribution function for two processes {x(t)} and {y(t)}, defined over the sample space of the same experiment, is:

$$F_{xy}^{(m,n)}(x_1, t_1; \ldots; x_m, t_m; y_1, t_1'; \ldots; y_n, t_n') = P\left[x(t_1) \le x_1, \ldots, x(t_m) \le x_m,\; y(t_1') \le y_1, \ldots, y(t_n') \le y_n\right]$$

The general joint density function is:

$$f_{xy}^{(m,n)}(x_1, t_1; \ldots; x_m, t_m; y_1, t_1'; \ldots; y_n, t_n') = \frac{\partial^{m+n}}{\partial x_1 \cdots \partial x_m\, \partial y_1 \cdots \partial y_n} F_{xy}^{(m,n)}(\ldots)$$


Any of the lower ordered joint or single distributions can be derived from

this by integration over the variables to be eliminated.

The mathematical expectation of a function of these random variables is:

$$E\left\{g\left[x(t_1), \ldots, x(t_m), y(t_1'), \ldots, y(t_n')\right]\right\} = \int_{-\infty}^{\infty} dx_1 \cdots \int_{-\infty}^{\infty} dy_n\; g(x_1, \ldots, y_n)\, f_{xy}^{(m,n)}(\ldots)$$

By far the most important statistical parameter involved in the joint

consideration of more than one random process is the cross correlation

function.

$$R_{xy}(t_1, t_2) = E\left[x(t_1)\, y(t_2)\right] = \int_{-\infty}^{\infty} dx \int_{-\infty}^{\infty} x\, y\, f_{xy}^{(1,1)}(x, t_1; y, t_2)\, dy$$

$$R_{xy}(t_2, t_1) = E\left[x(t_2)\, y(t_1)\right] = E\left[y(t_1)\, x(t_2)\right] = R_{yx}(t_1, t_2)$$

If $x(t)$ and $y(t)$ are statistically independent,

$$R_{xy}(t_1, t_2) = E\left[x(t_1)\, y(t_2)\right] = E\left[x(t_1)\right] E\left[y(t_2)\right] = \overline{x(t_1)}\;\overline{y(t_2)} = 0$$

if either $x(t)$ or $y(t)$, or both, is zero mean.

If $w(t) = x(t) + y(t) + z(t)$, then

$$\begin{aligned}
R_{ww}(t_1, t_2) &= E\left[w(t_1)\, w(t_2)\right] \\
&= R_{xx}(t_1, t_2) + R_{xy}(t_1, t_2) + R_{xz}(t_1, t_2) \\
&\quad + R_{yx}(t_1, t_2) + R_{yy}(t_1, t_2) + R_{yz}(t_1, t_2) \\
&\quad + R_{zx}(t_1, t_2) + R_{zy}(t_1, t_2) + R_{zz}(t_1, t_2)
\end{aligned}$$

If any two of $x(t)$, $y(t)$ or $z(t)$ have zero mean and they are all mutually independent, all the cross correlation terms vanish:

$$R_{ww}(t_1, t_2) = R_{xx}(t_1, t_2) + R_{yy}(t_1, t_2) + R_{zz}(t_1, t_2)$$

Thus for independent processes with zero mean, the autocorrelation of the

sum is the sum of the autocorrelations. This has special relevance since it

implies that for independent processes with zero mean, the mean square of

a sum is the sum of the mean squares. This simplifies the problem of

minimizing the mean squared error when more than one random process

is to be considered.
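The mean-square statement can be checked with a quick Monte Carlo sketch. The three distributions below are assumptions picked only to give independent, zero-mean samples of x(t1), y(t1) and z(t1) with different shapes; nothing about them comes from the lecture.

```python
import random

rng = random.Random(1)  # fixed seed so the sketch is repeatable
n_members = 50000

# Hypothetical independent, zero-mean samples across the ensemble at one time t1.
xs = [rng.gauss(0.0, 1.0) for _ in range(n_members)]      # mean square 1
ys = [rng.uniform(-1.0, 1.0) for _ in range(n_members)]   # mean square 1/3
zs = [rng.choice((-2.0, 2.0)) for _ in range(n_members)]  # mean square 4

def mean_square(values):
    """Ensemble estimate of the mean squared value."""
    return sum(v * v for v in values) / len(values)

ws = [x + y + z for x, y, z in zip(xs, ys, zs)]
lhs = mean_square(ws)                                      # mean square of the sum
rhs = mean_square(xs) + mean_square(ys) + mean_square(zs)  # sum of the mean squares
```

The two numbers agree only up to sampling error, because the estimated cross terms are small but not exactly zero for a finite ensemble.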


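A bound on the correlation functions follows from the fact that a mean square cannot be negative:

$$\overline{\left[x(t_1) \pm y(t_2)\right]^2} = \overline{x(t_1)^2} \pm 2\,\overline{x(t_1)\, y(t_2)} + \overline{y(t_2)^2} = R_{xx}(t_1, t_1) \pm 2 R_{xy}(t_1, t_2) + R_{yy}(t_2, t_2) \ge 0$$

$$\mp R_{xy}(t_1, t_2) \le \frac{1}{2}\left[R_{xx}(t_1, t_1) + R_{yy}(t_2, t_2)\right]$$

$$\left|R_{xy}(t_1, t_2)\right| \le \frac{1}{2}\left[R_{xx}(t_1, t_1) + R_{yy}(t_2, t_2)\right] = \frac{1}{2}\left[\overline{x(t_1)^2} + \overline{y(t_2)^2}\right]$$

$$\left|R_{xx}(t_1, t_2)\right| \le \frac{1}{2}\left[\overline{x(t_1)^2} + \overline{x(t_2)^2}\right]$$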

Intuitively we feel that if the conditions under which an experiment is

performed are time independent, then the statistical quantities associated

with a random process resulting from the experiment should be

independent of time.

Analytically we say that a process is stationary if every translation in time

transforms members of the process into other members of the process in

such a way that probability is preserved.

Equivalently, all distribution functions associated with the process,

$$F^{(n)}(x_1, t_1; x_2, t_2; \ldots; x_n, t_n) = F^{(n)}(x_1, t_1; x_2, t_1 + \tau_2; \ldots; x_n, t_1 + \tau_n)$$

must be functions of the differences in the $t_i$ only, independent of the actual values of the $t_i$. Define the $n - 1$ differences $\tau_i = t_i - t_1$. Then $f^{(n)}$ is independent of $t_1$. In particular:

$$f(x, t) \Rightarrow f(x)$$

$$\overline{x(t)} \Rightarrow \overline{x}$$

$$\overline{x(t)^2} \Rightarrow \overline{x^2}$$


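Higher-order densities still depend on the differences between sample times, but not on the time at which sampling starts:

$$f(x_1, t_1; x_2, t_2; \ldots; x_n, t_n) = f(x_1, t_1; x_2, \tau_2; x_3, \tau_3; \ldots; x_n, \tau_n), \qquad t_2 = t_1 + \tau_2$$

$$f(x_1, t_1; x_2, t_2) \Rightarrow f(x_1, x_2, \tau), \qquad \tau = t_2 - t_1$$

$$R_{xx}(t_1, t_2) \Rightarrow R_{xx}(\tau)$$

$$\begin{aligned}
R_{xx}(\tau) &= \overline{x(t)\, x(t + \tau)} \\
&= \overline{x(t - \tau)\, x(t)} \\
&= \overline{x(t)\, x(t - \tau)} \\
&= R_{xx}(-\tau)
\end{aligned}$$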

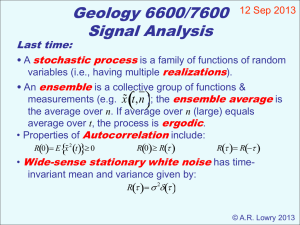


Autocorrelation of a stationary function is an even function of its time

argument.

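$$\overline{x^2} = R_{xx}(0)$$

$$\left|R_{xy}(t_1, t_2)\right| \le \frac{1}{2}\left[R_{xx}(t_1, t_1) + R_{yy}(t_2, t_2)\right]$$

$$\left|R_{xy}(\tau)\right| \le \frac{1}{2}\left[R_{xx}(0) + R_{yy}(0)\right] = \frac{1}{2}\left[\overline{x^2} + \overline{y^2}\right]$$

$$\left|R_{xx}(\tau)\right| \le \overline{x^2} = R_{xx}(0)$$

$$\begin{aligned}
R_{xy}(\tau) &= \overline{x(t)\, y(t + \tau)} \\
&= \overline{x(t - \tau)\, y(t)} \\
&= \overline{y(t)\, x(t - \tau)} \\
&= R_{yx}(-\tau)
\end{aligned}$$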
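The stationary autocorrelation properties listed above (independence of the starting time, evenness in τ, and the bound by the value at τ = 0) can be checked numerically on a concrete process. The sketch below assumes a sinusoid with fixed amplitude and frequency and a phase spread uniformly over one cycle; the constants `A`, `OMEGA` and `N_PHASES` are illustrative choices, and a uniform grid of phases stands in for the ensemble.

```python
import math

A, OMEGA = 2.0, 3.0  # hypothetical amplitude and angular frequency
N_PHASES = 360       # uniform grid of phases standing in for the ensemble

def x(t, theta):
    """One member of the assumed ensemble, identified by its phase theta."""
    return A * math.sin(OMEGA * t + theta)

def autocorrelation(t, tau):
    """Ensemble average of x(t) * x(t + tau) over uniformly spaced phases."""
    thetas = (2.0 * math.pi * k / N_PHASES for k in range(N_PHASES))
    return sum(x(t, th) * x(t + tau, th) for th in thetas) / N_PHASES

taus = [0.1 * k for k in range(-30, 31)]
r0 = autocorrelation(0.0, 0.0)  # mean squared value, A**2 / 2 for this process

# Stationarity: the result does not depend on the starting time t.
is_time_invariant = all(
    abs(autocorrelation(0.7, tau) - autocorrelation(0.0, tau)) < 1e-9 for tau in taus
)
# Evenness: R_xx(tau) = R_xx(-tau).
is_even = all(
    abs(autocorrelation(0.0, tau) - autocorrelation(0.0, -tau)) < 1e-9 for tau in taus
)
# Bound: |R_xx(tau)| <= R_xx(0).
is_bounded = all(abs(autocorrelation(0.0, tau)) <= r0 + 1e-9 for tau in taus)
```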

Page 6 of 9

16.322 Stochastic Estimation and Control, Fall 2004

Prof. Vander Velde

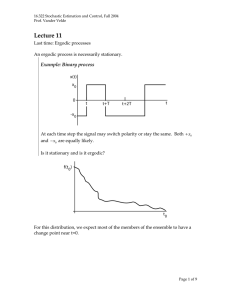

Ergodic property

A stationary random process is further said to have the ergodic property if the

time average of any function over a randomly selected member of the ensemble

is equal to the ensemble average of the function with probability 1.

This means there must be probability 1 of picking a function which represents

the ensemble. Note that a “representative” member of the ensemble excludes

any with special properties which belong to a set of zero probability. A

representative member must display at various points in time the same

distributions of amplitude and rates of change of amplitude as are displayed in

the entire ensemble at any one point in time. This is why no member of the

ensemble of constant functions is representative of the ensemble.

Example: Constants


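Stationary, but not ergodic.

Ergodicity would require time average = ensemble expectation with probability 1, but the time average of each member is just that member's own constant value.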
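A short simulation illustrates why an ensemble of constants fails the ergodic test. The uniform distribution of levels on [-1, 1] is an assumption for this sketch only; any non-degenerate distribution would show the same effect.

```python
import random

rng = random.Random(2)  # fixed seed so the sketch is repeatable

# Hypothetical ensemble: each member is a constant drawn uniformly from [-1, 1].
levels = [rng.uniform(-1.0, 1.0) for _ in range(10000)]

def time_average(level, n_samples=1000):
    """Time average of the constant member x(t) = level."""
    return sum(level for _ in range(n_samples)) / n_samples

ensemble_mean = sum(levels) / len(levels)  # close to 0 for this assumed ensemble
member_average = time_average(levels[0])   # reproduces that member's own level

# Fraction of members whose time average misses the ensemble mean by more than 0.1.
fraction_far = sum(abs(c - ensemble_mean) > 0.1 for c in levels) / len(levels)
```

Most members have a time average well away from the ensemble mean, so no single member represents the ensemble.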

Example: Sinusoids

x (t ) = A sin(ωt + θ )

A, ω fixed

θ random

Ensemble expectation of g ( x (t ) ) :

$$E\left[g(A \sin(\omega t + \theta))\right] = \int_{0}^{2\pi} g\left[A \sin(\omega t + \theta)\right] \frac{1}{2\pi}\, d\theta$$

Time average of g ( x (t ) ) :


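$$\text{Ave}\left[g(A \sin(\omega t + \theta))\right] = \frac{1}{T} \int_{0}^{T} g\left[A \sin(\omega t + \theta)\right] dt$$

With $\phi = \omega t$:

$$\text{Ave}\left[g(A \sin(\omega t + \theta))\right] = \frac{1}{T} \int_{0}^{\omega T} g\left[A \sin(\phi + \theta)\right] \frac{d\phi}{\omega}$$

With $\omega T = 2\pi$:

$$\text{Ave}\left[g(A \sin(\omega t + \theta))\right] = \frac{1}{2\pi} \int_{0}^{2\pi} g\left[A \sin(\phi + \theta)\right] d\phi = \text{ensemble expectation of } g(x(t))$$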

So when $\theta$ is uniformly distributed over $[0, 2\pi)$, the process is stationary and ergodic.
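The equality of the two averages can be checked numerically for a particular choice of g. The sketch below assumes g(x) = x², for which both averages should equal A²/2, and approximates each integral with a Riemann sum; the constants `A`, `OMEGA` and `N` are illustrative values, not from the lecture.

```python
import math

A, OMEGA = 1.5, 4.0  # hypothetical fixed amplitude and angular frequency
N = 2000             # points in each Riemann sum

def g(value):
    """Example function of the process: g(x) = x**2."""
    return value * value

def ensemble_expectation(t):
    """Average g(x(t)) over theta uniform on [0, 2*pi), at a fixed time t."""
    return sum(
        g(A * math.sin(OMEGA * t + 2.0 * math.pi * k / N)) for k in range(N)
    ) / N

def time_average(theta):
    """Average g(x(t)) over one period T = 2*pi / OMEGA, for a single member theta."""
    period = 2.0 * math.pi / OMEGA
    return sum(
        g(A * math.sin(OMEGA * (period * k / N) + theta)) for k in range(N)
    ) / N

ensemble_value = ensemble_expectation(t=0.3)  # same for any t (stationary)
member_value = time_average(theta=1.1)        # same for any theta (ergodic)
```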
