Learning Powerpoint - Miami Arts Charter School

advertisement

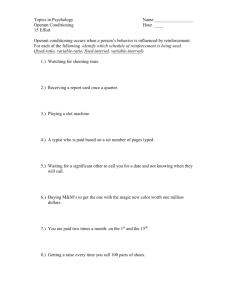

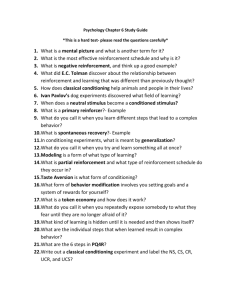

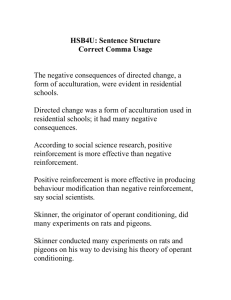

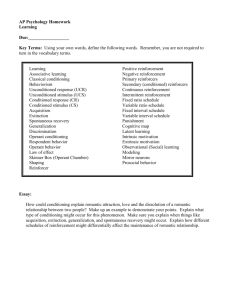

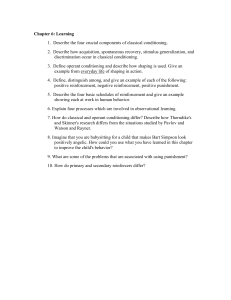

Learning Created by David Silverman Classical Conditioning Ivan Pavlov found that dogs learned to connect the sounds in the environment where they were fed with the food that was given to them. They began to salivate when they were hearing the sounds. The stimulus that evokes a response is the unconditioned stimulus (US or UCS). The US in this case is the food. Food creates the natural response of salivation. This response is called the unconditioned response (UR or UCR). Through repeated pairings with a neutral stimulus such as a bell, animals will come to associate the two stimuli together. Ultimately, animals will salivate when hearing the bell alone. Once the bell elicits salivation, a conditioned response (CR), it is no longer a neutral stimulus but rather a conditioned stimulus (CS) Learning occurs once the animals react to the CS without being shown the US. This learning is also called acquisition since the animals have acquired a new behavior. Pavlov Conditioning The process of unlearning a behavior is known as extinction. Extinction occurs when the CS no longer elicits the CR Animals conditioned to respond to particular stimulus will also respond to similar stimuli. The dogs may salivate to a number of bells, not just the one with which they were trained. This tendency to respond to similar CSs is known as generalization. Subjects can be trained, however, to tell the difference, or discriminate, between various stimuli. The unethical experiment conducted by John Watson and Rosalie Rayner conditioned a little boy named Albert to fear a white rat. This is known as aversive conditioning. Whereas Pavlov’s dogs were conditioned with something pleasant (food), baby Albert was conditioned to have a negative response to the white rat. Aversive conditioning can also be used in healthy ways. To stop biting their nails, some people paint them with something horrible-tasting. Little Albert The office classical conditioning- Jim conditions Dwight College roommate conditioning Contingency Model of Classical Conditioning The Pavlovian model of classical conditioning is known as the contiguity model because it states that the more times two things are paired, the greater the learning that will take place. Robert Rescorla revised the Pavlovian model to take into account a more complex set of circumstances. This model is known as the contingency model. Dog A is presented with a bell paired with food ten times in a row. Dog B also experiences ten pairings of bell and food. However, intermixed with those ten trials are five trials in which food is presented without the bell and five more trials in which the bell is rung but no food is presented. Once these training periods are over, which dog will have a stronger salivation response to the bell? Operant Conditioning Operant conditioning is a kind of learning based on the association of consequences with one’s behaviors. Edward Thorndike was one of the first people to research this kind of learning with his cat experiment The hungry cat was locked in a cage next to a dish of food. The cat had to get out of the cage in order to get the food. Thorndike found that the amount of time decreased gradually; the cat didn’t seem to understand after success, how to get out. This meant that the cat learned the new behavior without mental activity but rather simply connected a stimulus and a response. Thorndike’s law of effect says that if the consequences of a behavior are pleasant, the stimulus-response (S-R) will be strengthened and the likelihood of the behavior will increase. If the consequences of a behavior are unpleasant, the S-R connection will weaken and the likelihood of the behavior will decrease. He used the term instrumental learning to describe his work because he believed the consequence was instrumental in shaping future behaviors. BF SKINNER B. F. Skinner is the best-known psychologist to research operant conditioning. Skinner invented the Skinner box to use in his research of animal learning. A Skinner box usually has a way to deliver food to an animal and a lever to press or disk to peck in order to get the food. The food is called a reinforcer, and the process of giving the food is called reinforcement. Anything that makes a behavior more likely to occur is called a reinforcer. Two kinds of reinforcement exist. Positive reinforcement refers to the addition of something pleasant. Negative reinforcement refers to the removal of something unpleasant. For instance, if we give a rat in a Skinner box food when it presses a lever, we are using positive reinforcement. However, if we terminate a loud noise or shock in response to a press of the lever, we are using negative reinforcement. The latter example results in escape learning. Escape learning allows one to terminate an aversive stimulus. Avoidance learning is when one tries to avoid the unpleasant stimulus altogether. If Davey creates a ruckus in the English class he hates and is asked to leave, he is using escape learning. An example of avoidance learning would be if Sammy cut English class. B.F. Skinner explains operant conditioning Reinforcement Primary reinforcers are, in and of themselves, rewarding. They include things like food, water, and rest. Secondary reinforcers are things we have learned to value like praise or the chance to play a video game. Money is a special kind of secondary reinforcer, called a generalized reinforcer, because it can be traded for virtually anything. One example of generalized reinforcers is known as a token economy. In a token economy, every time people perform a desired behavior, they are given a token. They are allowed to trade their tokens for any one of a variety of reinforcers. What serves as a reinforcer for some may not have the same effect on others. The idea that the reinforcing properties of something depend on the situation, is shown in the Premack principle. Reinforcement Schedules The most effective way of teaching a new behavior at first is by rewarding the behavior each time. This process is known as continuous reinforcement. Once the behavior is learned however, you can actually get higher response rates by using a partial-reinforcement schedule Also, the partial-reinforcement effect says behaviors will be more resistant to extinction if the animal has not been reinforced continuously. Reinforcement schedules differ in two ways: What determines when reinforcement is delivered—the number of responses made (ratio schedules) or the passage of time (interval schedules). The pattern of reinforcement—either constant (fixed schedules) or changing (variable schedules). A fixed-ratio (FR) schedule gives reinforcement after a set number of responses. For example, if a pigeon is on an FR-5 schedule, it will be rewarded after the fifth bar press. A variable-ratio (VR) schedule also gives reinforcement based on the number of bar presses, but that number varies. A pigeon on a VR-5 schedule might be rewarded after the second press, then the ninth press, then the third press, then the sixth press. A fixed-interval (FI) schedule requires that a certain amount of time elapse before a bar press will result in a reward. In an FI-3 minute schedule the pigeon will be reinforced for the first bar press that occurs after three minutes have passed. A variable-interval (VI) schedule varies the amount of time required to pass before a response will result in reinforcement. In a VI-3 minute schedule, the pigeon will be reinforced for the first response made after an average time of three minutes. Variable schedules are more resistant to extinction than fixed schedules. Classical Conditioning vs Operant Conditioning Observational Learning People and animals learn many things simply by observing others. This observational learning is also known as modeling and was studied a great deal by Albert Bandura in formulating his sociallearning theory. This type of learning is said to be species-specific; it only occurs between members of the same species. Modeling has two basic components, observation and then imitation. There is a lot of research that indicate that children learn violent behaviors from watching violent television programs and violent adult models. Bandura, Ross, and Ross’s (1963) Bobo doll experiment illustrated this point. Latent, Abstract, and Insight Learning Latent learning (latent meaning hidden) was studied by Edward Tolman. Abstract learning involves understanding concepts such as tree or same rather than learning simply to press a bar or peck a disk in order to secure a reward. Latent learning is learning that becomes obvious only once a reinforcement is given for demonstrating it. Behaviorists had previously held that learning is evidenced by gradual changes in behavior, but Tolman conducted a famous experiment illustrating that sometimes learning occurs but is not immediately evidenced. Pigeons have learned to peck pictures they had never seen before if those pictures were of chairs. Wolfgang Kohler is well known for his studies of insight learning in chimpanzees. Insight learning occurs when one suddenly realizes how to solve a problem. Resources Mr. Duez- powerpoints and videos References This powerpoint presentation was adapted using information from the Barron’s AP Psychology 5th edition prep book. http://appsych.mrduez.com/p/4.h tml Crash Course Psychology https://www.youtube.com/watch? v=qG2SwE_6uVM&index=11&list=PL 8dPuuaLjXtOPRKzVLY0jJYuHOH9KVU6 Weseley, Allyson, Robert McEntarffer, and Robert McEntarffer. AP® Psychology. Hauppauge, N.Y.: Barron's Educational Series, 2014. Print. Fineburg, A., & Myers, D. (2010). Myers' Psychology for AP*: Teacher's edition (Teacher's ed.). New York: Worth /BFW.