Chord+DHash+Ivy - MIT Parallel & Distributed Operating Systems

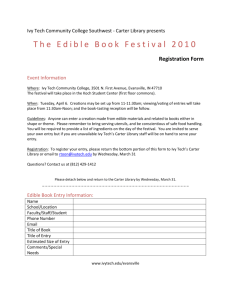

Chord+DHash+Ivy: Building Principled Peer-to-Peer Systems
Robert Morris
rtm@lcs.mit.edu
Joint work with F. Kaashoek, D. Karger, I. Stoica, H. Balakrishnan, F. Dabek, T. Gil, B. Chen, and A. Muthitacharoen

What is a P2P system?
[Diagram: five nodes connected through the Internet]
• A distributed system architecture:
  • No centralized control
  • Nodes are symmetric in function
• Large number of unreliable nodes
• Enabled by technology improvements

The promise of P2P computing
• High capacity through parallelism:
  • Many disks
  • Many network connections
  • Many CPUs
• Reliability:
  • Many replicas
  • Geographic distribution
• Automatic configuration
• Useful in public and proprietary settings

Distributed hash table (DHT)
[Diagram: three layers. The distributed application (Ivy) calls put(key, data) and get(key) → data on the distributed hash table (DHash); the DHT calls lookup(key) → node IP address on the lookup service (Chord); each layer runs across many nodes]
• Application may be distributed over many nodes
• DHT distributes data storage over many nodes

A DHT has a good interface
• Put(key, value) and get(key) → value
• Call a key/value pair a “block”
• API supports a wide range of applications
• DHT imposes no structure/meaning on keys
• Key/value pairs are persistent and global
• Can store keys in other DHT values
• And thus build complex data structures

A DHT makes a good shared infrastructure
• Many applications can share one DHT service
• Much as applications share the Internet
• Eases deployment of new applications
• Pools resources from many participants
• Efficient due to statistical multiplexing
• Fault-tolerant due to geographic distribution

Many recent DHT-based projects
• File sharing [CFS, OceanStore, PAST, …]
• Web cache [Squirrel, ..]
• Backup store [Pastiche]
• Censor-resistant stores [Eternity, FreeNet,..]
• DB query and indexing [Hellerstein, …]
• Event notification [Scribe]
• Naming systems [ChordDNS, Twine, ..]
• Communication primitives [I3, …]
Common thread: data is location-independent

The lookup problem
[Diagram: nodes N1–N6 on the Internet; a publisher does Put(key=“title”, value=file data…); a client issues Get(key=“title”) and does not know which node to ask (“?”)]
• At the heart of all DHTs

Centralized lookup (Napster)
[Diagram: Publisher@N4 holds key=“title”, value=file data… and registers SetLoc(“title”, N4) with a central DB; the client sends Lookup(“title”) to the DB; other nodes N1–N3 and N6–N9 surround it]
Simple, but O(N) state and a single point of failure

Flooded queries (Gnutella)
[Diagram: Publisher@N4 holds key=“title”, value=file data…; the client's Lookup(“title”) is flooded from node to node among N1–N9]
Robust, but worst case O(N) messages per lookup

Routed queries (Freenet, Chord, etc.)
[Diagram: the client's Lookup(“title”) is routed hop by hop among N1–N9 toward the publisher's node, which holds key=“title”, value=file data…]

Chord lookup algorithm properties
• Interface: lookup(key) → IP address
• Efficient: O(log N) messages per lookup
  • N is the total number of servers
• Scalable: O(log N) state per node
• Robust: survives massive failures
• Simple to analyze

Chord IDs
• Key identifier = SHA-1(key)
• Node identifier = SHA-1(IP address)
• SHA-1 distributes both uniformly
• How to map key IDs to node IDs?
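
As a minimal sketch of the ID scheme on the Chord IDs slide, the snippet below hashes a key and a node's IP address with SHA-1 so that both land in the same 160-bit identifier space. The names `sha1_id`, `key_id`, and `node_id`, the example key and address, and the choice to hash the IP address as a dotted-quad ASCII string are all assumptions made for illustration; the slides do not specify an encoding.

```python
import hashlib

ID_BITS = 160  # SHA-1 digests are 160 bits long


def sha1_id(data: bytes) -> int:
    """Interpret the SHA-1 digest of `data` as an unsigned integer ID."""
    return int.from_bytes(hashlib.sha1(data).digest(), "big")


def key_id(key: str) -> int:
    """Key identifier = SHA-1(key). UTF-8 encoding is an assumption."""
    return sha1_id(key.encode("utf-8"))


def node_id(ip_address: str) -> int:
    """Node identifier = SHA-1(IP address), hashed here as ASCII text."""
    return sha1_id(ip_address.encode("ascii"))


# Hypothetical inputs: both IDs fall in the range [0, 2**160).
k = key_id("title")
n = node_id("192.0.2.17")
print(f"key  ID: {k:040x}")
print(f"node ID: {n:040x}")
```

Because keys and nodes share one identifier space, a key ID can be compared directly against node IDs, which is what makes a key-to-node mapping rule possible.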
Chord Hashes a Key to its Successor
[Diagram: circular ID space with node IDs N10, N32, N60, N80, N100 and key IDs placed at their successors: K5, K10 at N10; K11, K30 at N32; K33, K40, K52 at N60; K65, K70 at N80; K100 at N100]
• Successor: node with next highest ID

Basic Lookup
[Diagram: ring of N5, N10, N20, N32, N40, N60, N80, N99, N110; the query “Where is key 50?” is passed around the ring and answered with “Key 50 is at N60”]
• Lookups find the ID’s predecessor
• Correct if successors are correct

Successor Lists Ensure Robust Lookup
[Diagram: ring in which each node is labeled with its successor list (r = 3)]
Node    Successor list
N5      10, 20, 32
N10     20, 32, 40
N20     32, 40, 60
N32     40, 60, 80
N40     60, 80, 99
N60     80, 99, 110
N80     99, 110, 5
N99     110, 5, 10
N110    5, 10, 20
• Each node remembers r successors
• Lookup can skip over dead nodes to find blocks

Chord “Finger Table” Accelerates Lookups
[Diagram: N80's fingers point ½, ¼, 1/8, 1/16, 1/32, 1/64, and 1/128 of the way around the ring]

Chord lookups take O(log N) hops
[Diagram: Lookup(K19) hops across the ring of N5, N10, N20, N32, N60, N80, N99, N110 toward K19, which lies between N10 and N20]

Simulation Results: ½ log2(N)
[Plot: average messages per lookup versus number of nodes]
• Error bars mark 1st and 99th percentiles

DHash Properties
• Builds key/value storage on Chord
• Replicates blocks for availability
• Caches blocks for load balance
• Authenticates block contents

DHash Replicates blocks at r successors
[Diagram: ring of N5, N10, N20, N40, N50, N60, N68, N80, N99, N110 showing where Block 17 is stored]
• Replicas are easy to find if successor fails
• Hashed node IDs ensure independent failure

DHash Data Authentication
• Two types of DHash blocks:
  • Content-hash: key = SHA-1(data)
  • Public-key: key is a public key, data is signed by that key
• DHash servers verify before accepting
• Clients verify result of get(key)

Ivy File System Properties
• Traditional file-system interface (almost)
• Read/write for multiple users
• No central components
• Trusted service from untrusted components

Straw Man: Shared Structure
[Diagram: Root Inode, Directory Block, File1 Inode, File2 Inode, File3 Inode]
• Standard meta-data in DHT blocks?
• What about locking during updates?
• Requires 100% trust
[Diagram, continued: File3 Data]

Ivy Design Overview
• Log structured
  • Avoids in-place updates
• Each participant writes only its own log
  • Avoids concurrent updates to DHT data
• Each participant reads all logs
• Private snapshots for speed

Ivy Software Structure
[Diagram: a user-level App makes system calls to the kernel NFS Client, which sends NFS RPCs to the user-level Ivy Server; the Ivy Server talks to a local DHT Node, which reaches other DHT Nodes over the Internet]

One Participant’s Ivy Log
[Diagram: Record 1, Record 2, Record 3 (immutable content-hash DHT blocks) and the Log Head (mutable public-key signed DHT block)]
• Log-head contains DHT key of most recent record
• Each record contains DHT key of previous record

Ivy I-Numbers
• Every file has a unique I-Number
• Log records contain I-Numbers
• Ivy returns I-Numbers to NFS client
• NFS requests contain I-Numbers
  • In the NFS file handle

NFS/Ivy Communication Example
Local NFS Client → Local Ivy Server: LOOKUP(“d”, I-Num=1000)
Local Ivy Server → Local NFS Client: I-Num=1000
Local NFS Client → Local Ivy Server: CREATE(“aaa”, I-Num=1000)
Local Ivy Server → Local NFS Client: I-Num=9956
Local NFS Client → Local Ivy Server: WRITE(“hello”, 0, I-Num=9956)
Local Ivy Server → Local NFS Client: OK
• echo hello > d/aaa
• LOOKUP finds the I-Number of directory “d”
• CREATE creates file “aaa” in directory “d”
• WRITE writes “hello” at offset 0 in file “aaa”

Log Records for File Creation
[Diagram: log records leading up to the Log Head]
Type: Create, I-num: 9956
…
Type: Link, Dir I-num: 1000, File I-num: 9956, Name: “aaa”
Type: Write, I-num: 9956, Offset: 0, Data: “hello”
• A log record describes a change to the file system

Scanning an Ivy Log
[Diagram: three log records]
Type: Link, Dir I-num: 1000, File I-num: 9956, Name: “aaa”
Type: Link, Dir I-num: 1000, File I-num: 9876, Name: “bbb”
Type: Remove, Dir I-num: 1000, Name: “aaa”
• A scan follows the log backwards in time
• LOOKUP(name, dir I-num): last Link, but stop at Remove
• READDIR(dir I-num): accumulate Links, minus Removes

Finding Other Logs: The View Block
[Diagram: the View Block lists Pub Key 1, Pub Key 2, Pub Key 3, which lead to Log Head 1, Log Head 2, Log Head 3]
• View block is immutable (content-hash DHT block)
• View block’s DHT key names the file system
• Example: /ivy/37ae5ff901/aaa

Reading Multiple Logs
[Diagram: two logs, Log Head 1 and Log Head 2, whose records are labeled 27, 20, 26, 31, 32 and 27, 28, 29, 30]
• Problem: how to interleave log records?
• Red numbers indicate real time of record creation
• But we cannot count on synchronized clocks

Vector Timestamps Encode Partial Order
[Diagram: the same two logs, Log Head 1 and Log Head 2, with records labeled 27, 20, 26, 31, 32 and 27, 28, 29, 30]
• Each log record contains vector of DHT keys
• One vector entry per log
• Entry points to log’s most recent record

Snapshots
• Scanning the logs is slow
• Each participant keeps a private snapshot
• Log pointers as of snapshot creation time
• Table of all I-nodes
• Each file’s attributes and contents
• Reflects all participants’ logs
• Participant updates periodically from logs
• All snapshots share storage in the DHT

Simultaneous Updates
• Ordinary file servers serialize all updates
• Ivy does not
• Most cases are not a problem:
  • Simultaneous writes to the same file
  • Simultaneous creation of different files in same directory
• Problem case:
  • Unlink(“a”) and rename(“a”, “b”) at same time
  • Ivy correctly lets only one take effect
  • But it may return “success” status for both

Integrity
• Can attacker corrupt my files?
• Not unless attacker is in my Ivy view
• What if a participant goes bad?
• Others can ignore participant’s whole log
• Ignore entries after some date
• Ignore just harmful records

Ivy Performance
• Half as fast as NFS on LAN and WAN
• Scalable w/ # of participants
• These results were taken yesterday…

Local Benchmark Configuration
[Diagram: App, NFS Client, Ivy Server, DHash Server]
• One log
• One DHash server
• Ivy+DHash all on one host

Ivy Local Performance on MAB
Phase      Ivy     NFS
Mkdir      0.6     0.5
Write      6.6     0.8
Stat       0.6     0.2
Read       1.0     0.8
Compile   10.0     5.3
Total     18.8     7.6
• Modified Andrew Benchmark times (seconds)
• NFS: client – LAN – server
• 7 seconds doing public key signatures, 3 in DHash

WAN Benchmark Details
• 4 DHash nodes at MIT, CMU, NYU, Cornell
• Round-trip times: 8, 14, 22 milliseconds
• No DHash replication
• 4 logs
• One active writer at MIT
• Whole-file read on open()
• Whole-file write on close()
• NFS client/server round-trip time is 14 ms

Ivy WAN Performance
Phase      Ivy     NFS
Mkdir      3.2     1.8
Write     24.6     9.6
Stat      12.0     9.6
Read      11.4    12.0
Compile   41.4    27.8
Total     92.6    60.8
• 47 seconds fetching log heads, 4 writing log head, 16 inserting log records, 22 in crypto and CPU

Ivy Performance w/ Many Logs
[Plot: run time (y-axis, 0 to 140) versus number of logs (x-axis, 4 to 16)]
• MAB on 4-node WAN
• One active writer
• Increasing cost due to growing vector timestamps

Related Work
• DHTs
  • Pastry, CAN, Tapestry
• File systems
  • LFS, Zebra, xFS
• Byzantine agreement
  • BFS, OceanStore, Farsite

Summary
• Exploring use of DHTs as a building block
  • Put/get API is general
  • Provides availability, authentication
  • Harnesses decentralized peer-to-peer groups
• Case study of DHT use: Ivy
  • Read/write peer-to-peer file system
  • Trustable system from untrusted pieces

http://pdos.lcs.mit.edu/chord
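
To make the summary's two ideas concrete (a general put/get interface with authenticated blocks, and a file system built from per-participant logs), here is a rough sketch under stated assumptions. The in-memory `dht` dictionary stands in for DHash, the `Log` class and the JSON record layout are hypothetical, and the log head is an ordinary attribute rather than a public-key signed block. Only the rules taken from the slides are intended to be faithful: a content-hash block's key is SHA-1 of its data, clients verify what get returns, each record holds the key of the previous record, and LOOKUP scans backwards for the last Link but stops at a Remove.

```python
import hashlib
import json

dht = {}  # stand-in for the DHT: key (hex string) -> block bytes


def put_content_hash(data: bytes) -> str:
    """Store an immutable block under key = SHA-1(data)."""
    key = hashlib.sha1(data).hexdigest()
    dht[key] = data
    return key


def get_content_hash(key: str) -> bytes:
    """Fetch a block and verify that it hashes to the requested key."""
    data = dht[key]
    if hashlib.sha1(data).hexdigest() != key:
        raise ValueError("block does not match its key")
    return data


class Log:
    """One participant's log (hypothetical layout, unsigned log head)."""

    def __init__(self):
        self.head = None  # DHT key of the most recent record

    def append(self, **fields) -> str:
        # Each record carries the DHT key of the previous record.
        record = dict(fields, prev=self.head)
        encoded = json.dumps(record, sort_keys=True).encode("utf-8")
        self.head = put_content_hash(encoded)
        return self.head

    def scan(self):
        """Yield records from newest to oldest."""
        key = self.head
        while key is not None:
            record = json.loads(get_content_hash(key))
            yield record
            key = record["prev"]


def lookup(log: Log, name: str, dir_inum: int):
    """Return the I-number from the last Link, or None after a Remove."""
    for rec in log.scan():
        if rec.get("dir") != dir_inum or rec.get("name") != name:
            continue
        if rec["type"] == "Remove":
            return None
        if rec["type"] == "Link":
            return rec["file"]
    return None


log = Log()
log.append(type="Link", dir=1000, file=9956, name="aaa")
log.append(type="Link", dir=1000, file=9876, name="bbb")
log.append(type="Remove", dir=1000, name="aaa")
print(lookup(log, "bbb", 1000))  # I-number of "bbb"
print(lookup(log, "aaa", 1000))  # None: the Remove is seen first
```

This sketch covers a single log only; merging several participants' logs by vector timestamp, snapshots, and signature checks on the log head are left out.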