Abduction using Neural Models

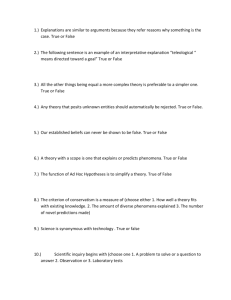

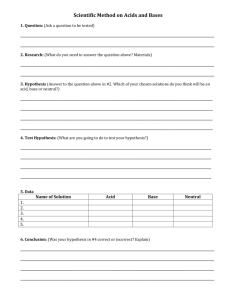

EEL 6876 Intelligent Diagnostics
Abduction using Neural Models
By Madan Bharadwaj
Instructor: Dr. Avelino Gonzalez

ABSTRACT

Addressing abductive reasoning effectively remains a formidable challenge to researchers the world over. Most of the work on abduction has used a logical or a probabilistic approach. In this paper, I review a few papers that discuss abduction in a neural framework. The networks proposed cover almost the complete range of abduction problems and provide elegant solutions, which can be interpreted as similar to abduction performed by human beings.

PROLOGUE

With the rising prominence of abduction as a vital form of reasoning in AI, many researchers have attempted to address abductive reasoning in a logical or a probabilistic framework. Some, however, have considered neural or connectionist models instead. Some of the first works using this approach date back to the late 1980s [3], when ‘connectionist’ ideas were floated, and were followed by more developed neural models [4] to address abduction. In the following sections we will see some of the more recent ideas, which have been shown experimentally to provide a framework for applying abduction to real-world problems.

It is rather surprising that abduction was recognized as a prime form of human reasoning only recently, when most of what we know can be thought of as knowledge abduced from real-life observations. Informally, abduction is the process of evolving a hypothesis from a set of observations. An elementary explanation of abduction can be found in [5]. Neural networks, on the other hand, are a class of algorithms that are taught to differentiate between similar and dissimilar data using a training data set and are then used to do the same on real-world data.
Though one cannot immediately make a connection between the two, they can be interpreted as doing the same thing.

ABDUCTION & NEURAL NETWORKS: AN ANALOGY

Let us consider an example to illustrate the point made in the previous section. A widely used and easily understood example of the application of neural networks is the character recognition task. A neural network is commonly used to recognize handwritten characters so that handwritten text can be fed to a computer automatically, without manual interpretation. For example, if we want our network to recognize the A’s and B’s of the English alphabet, we train it with a collection of handwritten A’s and B’s and ‘teach’ it to recognize them as two different classes of data. Figure 1 shows a sample training set of 5 A’s and 5 B’s (say, collected from your rough book) that can be used to train a neural network for this task. Once trained, the network implicitly creates classes to represent A’s and B’s. It can then be tested on real data from a real data source (an ‘A’ picked out from your handwritten class notes) to elicit a response. The neural network has the inherent capability to generalize from specific instances and hence will be able to recognize your ‘A’ once it sees it.

Figure 1: Handwritten Characters. A’s and B’s

Figure 2: After training the Neural Network classifies data into classes

Now, returning to our original discussion, abduction can be seen as generalizing from a set of observations to synthesize a hypothesis that explains the observations. In practice, we have a collection of hypotheses, and our abduction algorithm is expected to pick the one that best explains the observed data as it understands it. The observations can be intuitively linked to the handwritten A’s and B’s, and the collection of hypotheses to the classes that the neural network creates to partition the data.
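To make the analogy concrete, here is a minimal Python sketch that is not taken from any of the reviewed papers. It replaces the trained neural network with a much simpler nearest-centroid classifier, and the two-number feature vectors are made-up stand-ins for handwritten characters. Each class prototype plays the role of a hypothesis, and `best_hypothesis` (a hypothetical name) picks the one that best explains a new observation.

```python
import math

def centroid(points):
    """Average a list of equal-length feature vectors."""
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(len(points[0]))]

def best_hypothesis(observation, prototypes):
    """Return the label whose prototype lies closest to the observation."""
    return min(prototypes, key=lambda label: math.dist(observation, prototypes[label]))

# Hypothetical 2-feature descriptions of handwritten characters (made-up numbers).
training = {
    "A": [[0.9, 0.1], [0.8, 0.2], [1.0, 0.0]],
    "B": [[0.1, 0.9], [0.2, 0.8], [0.0, 1.0]],
}

# "Training" here is just averaging; a real network would learn the classes implicitly.
prototypes = {label: centroid(samples) for label, samples in training.items()}

# A new observation is explained by the closest class, i.e. the best hypothesis.
print(best_hypothesis([0.7, 0.3], prototypes))
```

The point of the sketch is only the selection step: given competing explanations, choose the one that fits the observed data best.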
Through this simple explanation we can associate the properties of abduction with the functioning of neural networks. With this introduction, let us look at the more concrete ideas for implementing the concept.

There are two basic approaches to designing a neural network architecture:
1. Designing the neural network architecture to reflect the problem dynamics
2. Designing an energy function to represent the same

The first paper [1] we are going to discuss follows the first approach and portrays a simple architecture that covers most domains of abduction, providing an elegant solution to our problem. The second paper [2] takes the latter approach and minimizes an energy function to attain the same end.

“A UNIFIED MODEL FOR ABDUCTION-BASED REASONING” by B. AYEB, S. WANG AND J. GE

The authors call the network UNIFY and, true to its name, it is a unified model for all classes of abduction problems, as opposed to the other model, which addresses only linear and monotonic abduction problems. The network is a simple 3-layered architecture built specifically to implement abduction. It hence bears a unique physical structure, unlike most other neural network models, which are used for more varied purposes. The model works on the basis of competition between cells (or nodes, as they are more commonly called in neural network literature) to encode the observations and maximize fitting hypotheses. The algorithm is discussed incrementally: the simple version is introduced first for better understanding, and minor modifications are then introduced to accommodate other classes of abduction problems. The modifications are general and do not affect the classes of abduction treated before the modification.

ABDUCTION CLASSES

Before any further discussion we need to understand the different types of abduction problems and what they mean, in order to understand their ramifications for the network architecture.
To define them briefly, four major types of abduction problems are encountered in the literature:
1. Independent Abduction Problems
2. Monotonic Abduction Problems
3. Open Abduction Problems
4. Incompatible Abduction Problems

An abduction problem is defined as AP (H, O, R), where H denotes a set of elementary hypotheses, O denotes the set of observable facts and R denotes a mapping from H to O.

If each causal relationship can be expressed in the form h → o, where h ∈ H and o ∈ O, then the problem is Independent. In other words, one and only one elementary hypothesis is needed to explain one observation.

If there is at least one causal relationship in which o needs to be derived from more than one hypothesis, as a conjunction of hypotheses from H, then the problem is Monotonic.

For an observation uplet (OP, OA, OU) (see note 1), if both OA and OU are non-empty, then the problem is Open. That is, at least one instance from the observation set is observed to be absent and at least one is explicitly stated as unknown.

For an observation uplet (OP, OA, OU) (see note 1), if there is at least one causal relationship that derives an observation o ∈ OA from hypotheses in H, then the problem is Incompatible. That is, at least one observation is observed to be absent but can be derived from the set of elementary hypotheses synthesized from the other observations.

These explanations are brief and do not give the clearest possible picture of the abduction classes. I urge the reader to refer to pages 2-3 of [1], where they are explained more clearly and elaborately with an example.

INITIAL NEURAL MODEL

The paper describes a simplified initial model to guide the reader through the process of building the comprehensive network from its smaller and simpler blocks. A pictorial representation of the idea is depicted in Figure 3.
Figure 3: The Initial Model. Reprinted from [1] (labels: Hypothesis Layer, Observation Layer, Inhibitory Weights, Excitatory Weights)

The network comprises two layers, one consisting of all the observations and the other consisting of all the hypotheses. The two are connected by weights as described in the mapping provided initially. Figure 4 illustrates the weights as described in the mapping rules.

Figure 4: An illustration to show weights in axioms

The weights intuitively represent the belief that we have in the presence of a hypothesis given the observation. By altering the weights we can alter our belief or disbelief in our hypotheses. Two types of weights are introduced in the architecture: excitatory weights and inhibitory weights. Excitatory weights lie between a hypothesis and an observation, whereas inhibitory weights lie between two hypotheses. The inhibitory weights serve to discredit an opposing hypothesis, weakening it and pushing it out of the competition.

WORKING

The network is initialized to its startup setting, where the observation cells are set to values based on the belief in the observation: a value of 0.5 for an observation cell means a 50% belief that the observation was observed. The excitatory weights are initialized to the values given by the mapping rules, and those not mentioned are set to zero. With this setting an observation does not affect hypotheses that are not related to it. The initialization of the inhibitory weights is not discussed; presumably they are set to zero. The hypothesis cells take an initial value dictated by an equation given in [1], in which A is a small value, say 0.001.

After initializing the network, the competition between the hypotheses begins. In every iteration, Stage II of the algorithm described in Figure 5 is executed until the termination condition is met or, in neural network terminology, ‘until the network stabilizes’.
When the termination condition is met, one can expect one or more hypotheses to have won the competition; these comprise the final set of elementary hypotheses that can fully explain the observation set.

Figure 5: Step by Step explanation of the algorithm. [1]

THE EQUATIONS

The unique structure of the network can be explained, and its usefulness realized, only when we analyze the equations that govern the dynamics of the network. Let us review them. There are four major equations that govern the activities of the network; their exact forms are given in [1].

In the hypothesis cell updating equation, EXi represents the excitatory input to the hypothesis cell and IHi represents the inhibitory input to it. Note that the excitatory input appears in the numerator of the equation whereas the inhibitory input appears in the denominator. Hence more excitation raises the value of the hypothesis cell and more inhibition lowers it, which translates into better or poorer chances for the hypothesis to win the competition against the other hypotheses.

In the excitatory weight updating equation, the weight is updated depending on the cell values of the hypothesis and observation concerned and on the weight it currently holds.

The inhibitory weight equation is interesting since it calculates the inhibitory weight from the differences between the previous and present cell values of the two hypothesis cells that it connects. If one difference is negative and the other positive, the weight increases and weakens the other hypothesis, effectively implementing the hypothesis negation found in a logical framework.

To summarize the working of the network, one can say that the network structure allows real-valued inputs to hypotheses and observations and hence can portray real-life situations more effectively than other approaches.
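The flow of initialization and competition described above can be illustrated with a small Python sketch. It is emphatically not the update rule of [1], whose equations are not reproduced in this review. It swaps in a generic competitive normalization in which each observation splits its belief among the hypotheses that explain it, so that excitation sits in the numerator and competing support in the denominator. The function name `compete`, the example weights and beliefs, the use of A directly as the start value, and the tolerance-based stopping rule are all assumptions made for illustration.

```python
def compete(beliefs, weights, a=0.001, tol=1e-4, max_iter=1000):
    """Run an illustrative competition between hypothesis cells.

    beliefs: {observation: belief in [0, 1]}
    weights: {hypothesis: {observation: excitatory weight}}
    Assumes every hypothesis explains at least one observation.
    """
    cells = {h: a for h in weights}  # small start value A (assumed form)
    for _ in range(max_iter):
        updated = {}
        for h, links in weights.items():
            total = 0.0
            for obs, w in links.items():
                # Excitation this hypothesis offers for the observation.
                excitation = w * cells[h]
                # Combined support from all hypotheses for the same observation.
                competition = sum(weights[k].get(obs, 0.0) * cells[k] for k in weights)
                if competition > 0.0:
                    total += beliefs.get(obs, 0.0) * excitation / competition
            updated[h] = total / len(links)
        change = max(abs(updated[h] - cells[h]) for h in cells)
        cells = updated
        if change < tol:  # 'until the network stabilizes'
            break
    return cells

# Hypothetical mapping rules: h1 explains o1 and o2, h2 explains o2, h3 explains o3.
weights = {"h1": {"o1": 1.0, "o2": 1.0}, "h2": {"o2": 1.0}, "h3": {"o3": 1.0}}
beliefs = {"o1": 1.0, "o2": 1.0, "o3": 0.0}

final = compete(beliefs, weights)
print({h: round(v, 3) for h, v in final.items()})
```

In this toy setting h1 gains strength because it alone explains o1, h2 loses the contest over o2, and h3 receives no support because o3 has zero belief. This mirrors, in spirit only, the winner-emerges behaviour reported for the network.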
The competition process may also be intuitively understood as similar to the one that happens in our minds, where we weigh different theories to explain an observation.

EXPERIMENTAL RESULTS

The authors have picked a neat example to illustrate the concept: a simple observation set and a mapping rule set are used to initialize the network, and the answers it produces can be verified easily within a logical framework.

Figure 6: A graph showing the hypotheses cell values at various instances. Reprinted from [1]

Figure 6 plots the values of the competing hypotheses at different time intervals. One can immediately see how some hypothesis cells decay to zero while two others survive until termination. In fact, one hypothesis emerges as the clear winner and another stabilizes at a value considerably greater than zero. The results show how the process debunks false or incomplete hypotheses and promotes correct or appropriate ones. Another immediate consequence is that the network gives real-valued outputs, which can be construed as beliefs in the resulting hypotheses. This is also representative of the results one can expect from real-world abduction problems.

UNIFIED MODEL

The initial model introduced in the last section provides the basis for the unified model presented in this section. The unified model is needed because the initial model cannot handle the more complicated monotonic, open and incompatible abduction problems. In particular, the initial model cannot resolve conjunctions of hypotheses; the unified model addresses all problems, including the simple ones, effectively.

The unified model consists of three layers, in place of the two of the initial model, to support conjunctions of hypotheses. The intermediate layer comes into play only if an observation has to be explained by a conjunction of hypotheses.
A node on the intermediate layer, connected to the respective hypotheses, acts as a middle man that channels the flow of information between the hypotheses and the observation. Using slightly modified equations, the unified model addresses the problem effectively and elegantly. Each node on the intermediate layer acts like a composite hypothesis representing the conjunction of all the associated hypotheses. This way the model can be treated like the initial model in its basic framework, with the changes needed to accommodate the intermediate layer implemented in the equations.

Figure 7: The Unified Model. Reprinted from [1]

Apart from this structural addition, there is one more major addition that ensures maximum coverage of the problem domain. To cover the class of Incompatible abduction problems, the hypothesis cells that constitute an incompatibility are connected by two connections. These act much like the inhibitory connections and in effect add to the inhibition of these hypotheses, so as to avoid incompatibility in the final solution set.

Let us now look at the equations that govern the network. The modified hypothesis cell equations are given in [1]. In them, ICi represents the connection weights of the incompatibility connections. Since they inhibit the hypothesis, they appear in the denominator. The negative influences of absent observations are realized using the EX+ and EX- notations, where EX- represents the observations that are observed to be absent. The cell values of the observation cells for these observations will be negative, hence inhibiting the hypothesis.

The incompatibility weights are updated according to an equation that uses two functions: g1(x) = 1 if x > 0 and 0 otherwise, and g2(x) = 1 if x is an exclusive hypothesis, in other words the only hypothesis that explains a particular observation.
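The two helper functions and the role of the intermediate layer can be sketched in Python as follows. This is only an illustration under assumptions: the rule format (a tuple of hypotheses paired with one observation), the function names `exclusive_hypotheses` and `intermediate_nodes`, and the example rules are hypothetical, and the sketch does not reproduce the incompatibility weight equation of [1].

```python
def g1(x):
    """Step function from the text: 1 if x > 0, otherwise 0."""
    return 1 if x > 0 else 0

def exclusive_hypotheses(rules):
    """Find hypotheses that are the only explanation of some observation.

    rules: list of (tuple_of_hypotheses, observation) pairs (assumed format).
    """
    explainers = {}
    for causes, obs in rules:
        explainers.setdefault(obs, set()).update(causes)
    return {next(iter(hs)) for hs in explainers.values() if len(hs) == 1}

def g2(hypothesis, exclusive):
    """Indicator from the text: 1 if the hypothesis is exclusive, otherwise 0."""
    return 1 if hypothesis in exclusive else 0

def intermediate_nodes(rules):
    """One intermediate node per conjunctive rule (more than one hypothesis)."""
    return [causes for causes, _ in rules if len(causes) > 1]

# Hypothetical mapping rules: h1 -> o1, h1 -> o2, h2 -> o2, (h2 and h3) -> o3.
rules = [
    (("h1",), "o1"),
    (("h1",), "o2"),
    (("h2",), "o2"),
    (("h2", "h3"), "o3"),
]

exclusive = exclusive_hypotheses(rules)
print(exclusive, intermediate_nodes(rules), g2("h1", exclusive), g2("h2", exclusive))
```

Here h1 is exclusive because nothing else explains o1, while the conjunctive rule for o3 gives rise to a single intermediate node standing for the pair (h2, h3).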
The major benefit of the equation is that it avoids discriminating against exclusive hypotheses but inhibits all other hypotheses that are connected, hence providing a way out of the incompatibility condition.

EXPERIMENTAL RESULTS

The algorithm was tested on various toy problems, and graphs were plotted to give the reader an intuitive understanding of the results. The algorithm was further tested on a problem constructed from a real-world murder case that went to trial, by axiomatizing the evidence and constructing the possible outcomes of the case as candidate hypotheses. The example is very interesting and shows the network in a very good light.

HOPFIELD AND ART NEURAL MODELS FOR ABDUCTION

In [2], Goel and Ramanujam put forward a more conventional approach that is time tested and has well-known properties. They propose two approaches, one using the Hopfield model and the other using ART (see note 2). They also clearly state the limitations of their study and the constraints of their approach.

The authors seem to prefer the energy function approach to the design of a neural network, with analog internal operating parts. This, they claim, has inherent advantages over digital circuitry. The idea is to develop a network that minimizes an energy function; finding the minima of the function amounts to finding the best hypothesis. To this end they perform some operations on the data set, partitioning it into domains and using subnets of the proposed neural architecture for each domain.

The paper starts by saying that the algorithm is implemented for linear and monotonic abduction problems. The first step of the process is to formulate the abduction task as a constrained optimization problem. This they achieve by representing the observations and hypotheses in data sets with certain easily deduced relationships between them.
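The energy-minimization idea can be illustrated with a short Python sketch. It assumes the textbook Hopfield energy E = -1/2 Σi Σj Wij Vi Vj with bipolar outputs (+1 or -1) and a symmetric weight matrix with a zero diagonal; the function actually used in [2] may contain further terms for the abduction constraints. The weight values and start state below are made up for illustration.

```python
def energy(weights, state):
    """Textbook Hopfield energy: -1/2 * sum_ij W[i][j] * V[i] * V[j]."""
    n = len(state)
    return -0.5 * sum(weights[i][j] * state[i] * state[j]
                      for i in range(n) for j in range(n))

def settle(weights, state, max_sweeps=100):
    """Update neurons one at a time until no output changes."""
    state = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(state)):
            # Net input from all other neurons.
            net = sum(weights[i][j] * state[j] for j in range(len(state)) if j != i)
            new = 1 if net >= 0 else -1
            if new != state[i]:
                state[i] = new
                changed = True
        if not changed:
            break
    return state

# Made-up symmetric weights: neurons 0 and 1 support each other, both oppose neuron 2.
W = [[0, 1, -1],
     [1, 0, -1],
     [-1, -1, 0]]

start = [1, -1, 1]
final = settle(W, start)
print(final, energy(W, start), energy(W, final))
```

With symmetric weights and one-at-a-time updates, each change can only keep the energy the same or lower it, so the network settles into a local minimum. In the abduction setting that minimum is read off as the chosen set of hypotheses.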
Then they partition the problem into as many sets as possible, or in other words create a power set (see note 3) for the observation set and the hypotheses set, and create a map between the two.

They then define the characteristics on which the classifier chooses the best hypothesis among its competitors. They broadly define the characteristics as:
1. Maximal Coverage
2. Maximum Belief
3. Minimal Cardinality

They then take into account the connections or associations that can be allowed between elementary hypotheses. With this framework in hand, they approach the problem with a Hopfield neural model whose neurons minimize an energy function (given in [2]) in which the W’s are the connection weights and Vi and Vj are the outputs of the neurons. By minimizing this function the network determines the best hypotheses.

The authors have also proposed an ART model for the same purpose, in which they split the domain into an observations domain and a hypotheses domain. Using local decisions taken by the observation neurons and the hypothesis neurons, together with the information exchanged between the two domains, a composite hypothesis set is synthesized.

I have made only a brief review of these models because they do not address all classes of abduction problems, and hence their significance is greatly reduced.

CRITIQUE: NEURAL MODELS FOR ABDUCTION

From this necessarily incomplete review of the literature and my other readings, I can see that more and more work is being done on developing models for abductive reasoning. Non-monotonic reasoning and reasoning under uncertainty are active research areas now. In my understanding, the framework for this kind of reasoning has to be in essence fuzzy.
A large part of real-world explanation in the human reasoning process is not purely axiomatized: evidence for a particular occurrence is not always 100% true but can be expressed with partial confidence, and the results of one hypothesis are evidence for some other hypothesis in real life. It is therefore necessary to maintain a fuzzy nature in the framework under which we discuss abduction. This property is inherently present in neural networks, which can hence prove to be an effective framework for abductive reasoning.

The downside of the approach is that the functioning of a neural network is still very much abstract and has not been completely understood by researchers. This discourages heavy investment in techniques that could backfire later and force researchers to redo and re-prove all their work using a different approach. Hence the credit that neural networks may deserve is lost to cynicism. However, recent studies, such as deriving from a neural network a rule base that explains its functioning [7], can help researchers overcome these fears and embrace neural networks as a test bed for their research.

FUTURE AVENUES OF RESEARCH

The UNIFY paper [1] concedes that it still cannot solve the problems posed by the cancellation class of abduction problems discussed in [8]. The authors have promised more research on that aspect, to develop or modify UNIFY to solve all the classes of abduction problems.

Other neural frameworks can be considered for the purpose. I would think ART can provide a far more elegant and flexible solution than the one described in [2]. Studying that avenue of thought would be an exciting experience. Genetic algorithms could also be explored to see if they can provide any basis for solving these problems.

SUMMARY

This paper reviewed a few interesting neural models proposed to solve abduction problems.
The UNIFY algorithm put forward by Ayeb et al. stands out for its simplicity, applicability and domain coverage. Other approaches using Hopfield networks and ART were also briefly discussed. The concluding sections critiqued the idea as a whole and provided some suggestions and explanations of the philosophy behind using neural networks for abduction.

NOTATIONS

1 - An observation uplet (OP, OA, OU) is defined primarily for multiple observations, which are applicable only to the Open and Incompatible classes of abduction problems. Here OP represents the observations that are known to be present, OA represents the observations that are known to be absent and OU represents the observations that are unknown.
2 - ART: Adaptive Resonance Theory
3 - A power set is a set containing all possible subsets of a set.

REFERENCES

[1] B. Ayeb, S. Wang and J. Ge, “A Unified Model for Abduction-Based Reasoning”, IEEE Transactions on Systems, Man and Cybernetics, Part A: Systems and Humans, Vol. 28, No. 4, July 1998.
[2] A.K. Goel and J. Ramanujam, “A Neural Architecture for a Class of Abduction Problems”, IEEE Transactions on Systems, Man and Cybernetics, Part B: Cybernetics, Vol. 26, No. 6, December 1996.
[3] _____, “A Connectionist Model for Diagnostic Problem Solving: Part II”, IEEE Transactions on Systems, Man and Cybernetics, Vol. 19, pp. 285-289, 1989.
[4] A. Goel, J. Ramanujam and P. Sadayappan, “Towards a ‘neural’ architecture of abductive reasoning”, in Proc. 2nd Int. Conf. Neural Networks, 1988, pp. I-681-I-688.
[5] D. Poole, A. Mackworth and R. Goebel, “Computational Intelligence: A Logical Approach”, pp. 319-343, Oxford University Press, 1998.
[6] C. Christodoulou and M. Georgiopoulos, “Applications of Neural Networks in Electromagnetics”, Boston: Artech House, 2001.
[7] J.L. Castro, C.J. Mantas and J.M. Benitez, “Interpretation of artificial neural networks by means of fuzzy rules”, IEEE Transactions on Neural Networks, Vol. 13, No. 1, pp. 101-116, Jan. 2002.
[8] T. Bylander, D. Allemang, M. C. Tanner and J. R. Josephson, “The computational complexity of abduction”, Artif. Intell., vol. 49, pp. 25-60, 1991.