

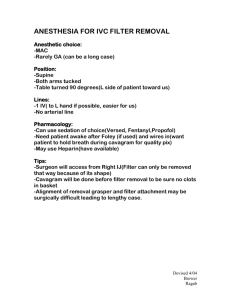

The CMS High Level Trigger

V. Brigljevic, G. Bruno, E. Cano, S. Cittolin, S. Erhan, D. Gigi, F. Glege, R. Gomez-Reino Garrido, M. Gulmini, J. Gutleber, C. Jacobs, M. Kozlovszky, H. Larsen, I. Magrans De Abril, F. Meijers, E. Meschi, S. Murray, A. Oh, L. Orsini, L. Pollet, A. Racz, D. Samyn, P. Scharff-Hansen, P. Sphicas, C. Schwick, J. Varela

Abstract: The High Level Trigger (HLT) system of the CMS experiment will consist of a series of reconstruction and selection algorithms designed to reduce the Level-1 trigger accept rate of 100 kHz to the 100 Hz forwarded to permanent storage. The HLT operates on events assembled by an event builder collecting detector data from the CMS front-end system at full granularity and resolution. The HLT algorithms will run on a farm of commodity PCs, the filter farm, with a total expected computational power of $10^{6}$ SpecInt95. The farm software, responsible for collecting, analyzing, and storing event data, consists of components from the data acquisition and the offline reconstruction domains, extended with the necessary glue components and implementation of interfaces between them. The farm is operated and monitored by the DAQ control system and must provide near-real-time feedback on the performance of the detector and the physics quality of data. In this paper, the architecture of the HLT farm is described, and the design of various software components reviewed. The status of software development is presented, with a focus on the integration issues. The physics and CPU performance of current reconstruction and selection algorithm prototypes is summarized in relation to projected parameters of the farm, taking into account the requirements of the CMS physics program. Finally, results from a prototype test stand and plans for the deployment of the final system are discussed.

Manuscript received October 22, 2003. V. Brigljevic, G. Bruno, E. Cano, S. Cittolin, D. Gigi, F. Glege, R. Gomez-Reino Garrido, M. Gulmini, J. Gutleber, C. Jacobs, M. Kozlovszky, H. Larsen, I. Magrans De Abril, F. Meijers, E. Meschi, S. Murray, A. Oh, L. Orsini, L. Pollet, A. Racz, D. Samyn, P. Scharff-Hansen, P. Sphicas, C. Schwick, and J. Varela are with CERN, European Center for Particle Physics, Geneva, Switzerland. Corresponding author e-mail: Emilio.Meschi@cern.ch. S. Erhan is with University of California at Los Angeles, Los Angeles, U.S.A. M. Gulmini is also with Laboratori Nazionali di Legnaro dell’INFN, Legnaro, Italy. P. Sphicas is also with University of Athens, Athens, Greece.

I. INTRODUCTION

The CMS experiment employs a general-purpose detector with nearly complete solid-angle coverage for the detection of electrons, photons and muons and the measurement of hadronic jets and total energy flow. The detector features accurate tracking based on solid-state pixel and silicon strip detectors and a 4 T superconducting solenoid.

The main purpose of the Data Acquisition (DAQ) and High-Level Trigger (HLT) systems is to read data from the detector front-end electronics for those events that are selected by the Level-1 Trigger, assemble them into complete events, and select, amongst those events, the most interesting ones for output to mass storage [1]. The proper functioning of the HLT at the desired performance, and its availability, will be a key element in reaching the full physics potential of the experiment.

The CMS Level-1 Trigger has to process information from the detector at the full beam-crossing rate of 40 MHz. This rate and the limited amount of buffering available in the detector front-end electronics limit the data accessible to the Level-1 Trigger for processing to a subset of CMS sub-detectors, namely the calorimeters and muon chambers.
These data do not represent the full information recorded in the CMS front-end electronics, but only a coarse-granularity and lower-resolution set. Given the total time available to the Level-1 Trigger processors, and the information sent to them, the Level-1 Trigger system is expected to reduce the event rate to 100 kHz at the design LHC luminosity of $10^{34}$ cm$^{-2}$ s$^{-1}$. At startup, the data acquisition system will be staged at about 50% of its final capability, or 50 kHz maximum average Level-1 accept rate. The HLT will receive, on average, one event of mean size 1 MB every 10 µs (20 µs at startup).

In the HLT the event rate must be further reduced to approximately 100 Hz, which is manageable by persistent storage and offline processing. This rate reduction must be achieved without introducing dead time, by executing algorithms that combine the rejection power and speed of traditional “Level-2” triggers with the flexibility and sophistication of “Level-3”. For this to be possible, event and calibration data, and the algorithms used by the HLT, must be essentially of “offline” quality, and include information from tracking detectors. Complete events are obtained from assembly of data from all detector front-ends in the CMS event builder [2]. Strategies to analyze and select events for permanent storage are the subject of the following sections.

II. ARCHITECTURE

Because of the event sizes and rates involved, the task of assembling events consisting of a large number of small data fragments in a single location (the physical memory of a computer) requires high-bandwidth I/O. The subsequent reconstruction and HLT selection, however, are essentially CPU-intensive.
Distributing the two tasks to different components, loosely connected by asynchronous protocols, allows the deployed CPU power to be tailored to varying running conditions, increases the fault tolerance of the system, and ensures “graceful” performance degradation, thanks to easy reconfiguration, in the event of failure of one or more of the computational nodes. Working in connection with the event builder, the HLT must be configured, controlled, and monitored using online control and monitor data paths and software tools. The general architecture of the CMS DAQ software framework supports this separation by providing all the basic tools to interoperate and exchange data between diverse components in a distributed DAQ cluster [3].

The global architecture of the CMS DAQ is sketched in Fig. 1. In the event builder, data from front-end electronic modules are assembled, by means of a complex of switching networks and intermediate merging modules, in a set of Builder Units (BU). A certain number of processing nodes of the HLT farm (Filter Units, FU) are connected to a BU. A BU serves complete events to the FU, which is subsequently responsible for processing them to reach the HLT decision and for transferring accepted events to persistent storage.

Fig. 1. A sub-farm consists of processing nodes (FU) and one head node (SM). The Filter Units are connected to the Builder Units by a switching network.

To ensure good scaling behavior as the number of processing nodes is increased, the HLT farm is organized as a collection of sub-farms, each connecting to several Builder Units via a switched interconnect, the Filter Data Network (FDN). Filter units in a sub-farm are managed by a head node, the Sub-farm Manager (SM). Control and monitor messages are transferred over a logically separate Filter Control Network (FCN). The functionality of the various software components of the FU and SM is discussed in more detail in the following sections.

A. Filter Unit

The FU software consists of four main components. The filter framework handles the FU data access and control layers on behalf of the filter tasks, which run HLT algorithms and perform the event selection in the context of the framework. The filter unit monitor, in conjunction with the framework, provides a consistent interface for monitoring of system-level and application-level parameters of the Filter Unit. In particular, it collects and elaborates statistical data on the HLT, and periodically sends updates of these data to the relevant consumers via the control network. The storage manager handles the transfer of accepted event data out of the FU.

B. Sub-farm Manager

Representing a single physical point of access to the computation nodes performing the HLT selection, the SM guarantees consistency of the operational parameters of the sub-farm by distributing configuration information and control messages from other actors in the DAQ system to filter units, and by centralizing access to data repositories. The SM provides state tracking, a monitor information cache, and local storage of output data for the sub-farm, so that each sub-farm can be operated as a separate entity. Fetching new calibration and run condition constants, as well as other parameters, directives, and algorithms defining the High Level Trigger “table”, is among the SM responsibilities. By coordinating the update of these parameters in the filter units, complete traceability of events accepted by the HLT is guaranteed, thus easing the task of the physicists performing data analysis in evaluating trigger efficiencies and cross sections. A block scheme of the SM is shown in Fig. 2.

Fig. 2. Block scheme of the Sub-farm Manager.

The various services are coordinated by an application server to provide a unique point of access for run control and monitor clients, and to give a uniform interface to the various functions of the sub-farm. A sub-farm monitor handles the subscription and collection of monitor and alarm information from all the FU nodes. Monitor information is stored in a transient cache for local elaboration and distribution. A monitor and alarm engine can elaborate and analyze this information, as well as state information of the FUs, the SM, and the local storage. Thresholds on various observables related to these components can be set here to produce alarms and warnings to be delivered to run control or other clients. Alarms originated by the FU, or raised locally, can be masked in the monitor engine. Control and configuration messages are distributed upon request from run control by the corresponding service, and a state tracking service collects responses to provide the real-time state of the system and acknowledge state transitions to run control. Through the configuration manager, the SM supervises the file system containing the reference code, configuration data, and the replica of the run condition and calibration databases; it also monitors the state of the local mass storage on behalf of run control. To give uniform access to the farm resources, the SM control and monitor interfaces are identical to the corresponding interfaces of the FU. The SM presenter elaborates dynamic representations of the monitor and state information for browsing by external clients via a standard web interface.

III. FILTER UNIT SOFTWARE INTEGRATION STRATEGY

Experience with traditional “Level-3” triggers shows that there is a fast turnover of reconstruction and selection algorithms as more effective strategies are developed offline and then exported to the “Level-3”.
Thus, an early design choice was to provide, in the filter farm, the same software framework available to offline reconstruction [4], allowing transparent migration of new algorithms and selections developed offline by analyzing data. In the FU, the DAQ framework and the reconstruction framework must coexist and operate concurrently (Fig. 3).

Fig. 3. The DAQ and extended reconstruction frameworks cooperate in the Filter Unit to run generic filter tasks. A limited set of interfaces connects the DAQ components to online-specific extensions of the offline framework. [Block labels in the figure: online-specific extensions to base services; offline base services (reused); online replacements for offline packages dealing with raw data; reconstruction and HLT algorithms (same as offline); Filter Unit Framework (DAQ); Executive (DAQ).]

Interoperability is achieved by a clear definition of the scope of each of the frameworks inside the FU, and subsequent careful design of a small set of interfaces between the two realms. Three separate areas of interaction were identified: event data input, control, and monitoring. The DAQ framework provides standardized interfaces for communication among DAQ applications through peer-to-peer message passing, and access to configuration parameters under the control of an executive program. The executive loads application components, dispatches configuration and control messages, provides data transport in the distributed system, and offers means to hook data delivery to specific software entry points.

The heart of the Filter Unit is a set of filter tasks that manage reconstruction and selection algorithms using the services offered by the offline framework. In the framework itself, the offline implementation of some of these services is replaced by the corresponding online one, built as an extension to the offline implementation that complies with the aforementioned interfaces.
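The replacement of an offline service by an online one behind a common interface can be illustrated with a minimal sketch. It assumes a single hypothetical raw-data service; the names `RawDataSource`, `OfflineFileSource`, `OnlineQueueSource`, `deliver`, and `filter_task` are invented for illustration and are not part of the CMS DAQ or reconstruction frameworks.

```python
from abc import ABC, abstractmethod
from collections import deque
from typing import Callable, Iterable, List, Optional


class RawDataSource(ABC):
    """Hypothetical service interface seen by a filter task."""

    @abstractmethod
    def next_event(self) -> Optional[dict]:
        """Return the next raw event, or None if none is available."""


class OfflineFileSource(RawDataSource):
    """Offline flavour: events come from an already available collection."""

    def __init__(self, events: Iterable[dict]) -> None:
        self._events = iter(events)

    def next_event(self) -> Optional[dict]:
        return next(self._events, None)


class OnlineQueueSource(RawDataSource):
    """Online flavour: events are pushed by the DAQ side into a queue."""

    def __init__(self) -> None:
        self._queue: deque = deque()

    def deliver(self, event: dict) -> None:
        # Assumed entry point that the DAQ layer would call on data arrival.
        self._queue.append(event)

    def next_event(self) -> Optional[dict]:
        return self._queue.popleft() if self._queue else None


def filter_task(source: RawDataSource,
                select: Callable[[dict], bool]) -> List[dict]:
    """Run the same selection code regardless of where events come from."""
    accepted = []
    while (event := source.next_event()) is not None:
        if select(event):
            accepted.append(event)
    return accepted
```

In this sketch the selection code passed to `filter_task` is unaware of which source it is given, which is the property the integration strategy relies on: only the service implementation changes between the offline and online environments.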
The most important of these extensions allows the filter task to collect event (raw) data via the DAQ components of the FU, and dispatch them to the reconstruction modules. Event objects are created in the FU upon response from the BU to a request message, and placed in a queue, where they can be accessed by a filter task. Partial transfer of event fragments from the BU is supported to ease the load on the data network. Raw data fragments are interpreted as needed, according to their pre-specified formats. Data formatting is implemented as an extension to the reconstruction code, so that it is equally available for preliminary studies of data volumes and CPU requirements while the design of the front-end systems is being finalized. Formatting on demand minimizes CPU needs by transforming into software objects only the data needed to reach the HLT decision. Other extensions transfer control over reconstruction and selection configuration and related parameters, and provide facilities that enable the filter tasks to publish and update monitor information in the SM using online protocols. This strategy allows the assembly of filter tasks as modular “application libraries” consisting mainly of offline components, with only a few online-specific modules, which are completely interchangeable with their offline counterparts.

Managing and maintaining a software project that makes use of a large base of external components can be cumbersome, especially if concurrent development and release of many of these components is expected. To overcome this problem, the farm software development integrates the same configuration and release management used for offline [5], concentrating in a single releasable unit all the software that has dependencies in both realms.

IV. HLT SELECTION ALGORITHMS

The CMS Collaboration has put extensive effort into the early development and benchmarking of effective HLT reconstruction and selection algorithms [1].
This development profits from a coherent object-oriented framework [4], allowing simple integration of reconstruction modules and providing the basic tools for sophisticated event-driven, “on demand” reconstruction. To optimize CPU usage, reconstruction is performed in a limited region of the detector, identified on the basis of the results of previous reconstruction steps, starting from Level-1 information. The resulting set of reconstruction and selection algorithms has been tested on large samples of simulated data to estimate their rate reduction power and physics efficiency. Events were generated for all physical processes expected to contribute to the Level-1 trigger output rate. The Level-1 trigger rate budget was allocated to the various physics channels according to their relevance for the CMS physics program. A safety factor of 3 was used to account for machine background processes (beam halo etc.), which were not simulated. To study the LHC startup scenario, a total Level-1 rate of only 16 kHz was allocated.

As an example of the detailed performance analysis carried out on candidate HLT algorithms, the selection of electrons and photons is discussed in some detail. A quarter of the total Level-1 rate is assigned to the undifferentiated single and double electron/photon stream. The relative rates for the single and double triggers are assigned by optimizing efficiencies. The first step in the selection consists of the accurate measurement of the candidates’ transverse energy in the electromagnetic calorimeter (ECAL). A special clustering algorithm is applied to recover possible bremsstrahlung photons, and tighter thresholds are subsequently applied. This is commonly referred to as “Level-2 selection”. The accurate energy and position measurement in the CMS ECAL is used to extrapolate the direction of an electron track into the pixel layers of the CMS tracker.
By requiring that two out of three pixel layers have a hit inside the extrapolation road (pixel matching), the electron hypothesis is excluded for a large fraction of the background from jets, while preserving very good efficiency for true electrons (Fig. 4). Events with one or two candidates passing this selection (the “Level-2.5”) enter the electron stream, whereas those that fail are tested for the photon hypothesis. For candidates in the electron stream, electron tracks are subsequently reconstructed, and track quality cuts applied. By looking for tracks in a cone around the electron direction, a very effective isolation cut can also be applied. This is collectively referred to as “Level-3” selection. For the photon stream, tighter transverse energy cuts are applied at “Level-3”, along with isolation cuts. The selection is almost 80% efficient on electrons from W decays in the fiducial area of the detector. The double photon selection is measured to be more than 80% efficient on H → γγ decays within the fiducial cuts.

The CPU performance of the HLT algorithms has been evaluated for single and combined selections, to estimate the computational power needs of the HLT farm. As an example, Fig. 5 shows the distribution of the CPU time needed to run the electron/photon selection on events passing Level-1. The CPU time to run a given logical level is only accounted for if the event passes the previous selection. Processing times are found to be well behaved, and to fit well to a log-normal distribution. This result guarantees that the system does not get stuck because of pathological events in the far tails of the distribution.

Fig. 5. CPU time distribution for the electron HLT selection on a reference 1 GHz Pentium III PC. Inset: the log(t) distribution is fitted to a Gaussian.

Table I summarizes results of the detailed CPU analysis for the baseline HLT selection described in [1]. The mean processing time is 271 ms per Level-1 accept.
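The accounting rule stated above (the CPU time of a logical level is charged only to events that passed the previous level) can be sketched as follows. This is an illustrative sketch only: the `Level` class, the per-level costs, and the pass/fail flags are hypothetical and are not taken from the measured HLT timings.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass
class Level:
    name: str
    cpu_ms: float                   # assumed fixed cost of this level per event
    passes: Callable[[dict], bool]  # selection decision of this level


def run_levels(event: dict, levels: Sequence[Level]) -> Tuple[bool, float]:
    """Run levels in order, stopping at the first failure.

    Returns (accepted, cpu_ms), where cpu_ms counts only the levels
    that were actually executed for this event.
    """
    spent = 0.0
    for level in levels:
        spent += level.cpu_ms
        if not level.passes(event):
            return False, spent
    return True, spent


def mean_cpu_ms(events: Sequence[dict], levels: Sequence[Level]) -> float:
    """Mean CPU time per input event under early rejection."""
    return sum(run_levels(e, levels)[1] for e in events) / len(events)


# Hypothetical three-level chain with made-up costs.
levels = [
    Level("Level-2", 10.0, lambda e: e["l2"]),
    Level("Level-2.5", 20.0, lambda e: e["l25"]),
    Level("Level-3", 100.0, lambda e: e["l3"]),
]
events = [
    {"l2": False, "l25": False, "l3": False},  # rejected at Level-2
    {"l2": True, "l25": False, "l3": False},   # rejected at Level-2.5
    {"l2": True, "l25": True, "l3": False},    # rejected at Level-3
    {"l2": True, "l25": True, "l3": True},     # accepted
]
```

With these made-up inputs the expensive last level is charged to only two of the four events, which is why ordering cheap, high-rejection steps first keeps the mean time per Level-1 accept low.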
This mean does not include the time required to format raw event data as received from the DAQ. Some preliminary results on the impact of raw data formatting are discussed in Section V.

Fig. 4. Efficiency vs. jet rejection for the electron selection algorithm based on pixel tracking at design LHC luminosity. The two curves are for different acceptance cuts of the pixel detector.

TABLE I. SUMMARY OF CPU TIMES AND RATES FOR HLT SELECTIONS EVALUATED ON AN INTEL PENTIUM III MACHINE AT 1 GHZ

| Main physics object | CPU time/L1 (ms) | Level-1 rate (kHz) | HLT output rate (Hz) | Total CPU time (s) |
|---|---|---|---|---|
| Electron/Photon | 160 | 4.3 | 43 | 688 |
| Muon | 710 | 3.6 | 29 | 2556 |
| Tau | 130 | 3.0 | 4 | 390 |
| Jet and missing ET | 50 | 3.4 | 14 | 170 |
| Electron + Jet | 165 | 0.8 | 2 | 132 |
| B-jets | 300 | 0.5 | 5 | 150 |

The estimated capacity of the 1 GHz Pentium-III CPU is 41 SpecInt95. Thus, the total CPU power required by the HLT farm to process the maximum Level-1 rate of 100 kHz is $1.2 \times 10^{6}$ SpecInt95, but only $6 \times 10^{5}$ at startup. These figures are finally extrapolated to 2007 assuming a CPU power increase by a factor of two every 1.5 years, yielding an average of approximately 40 ms per event, or a total of ~2,000 CPUs at startup.

V. PROTOTYPES AND RESULTS

Thanks to the clear separation of responsibility between the acquisition and reconstruction software realms, components of the filter unit interacting with the DAQ are implemented and tested in a pure DAQ context. In particular, the protocol for data transfer between the BU and the FU was developed and tested early on. Tests show that a Gigabit Ethernet-based FDN can sustain event rates of up to 100 Hz out of the BU, assuming events of 1 MB average size. With the current DAQ design figures, two outgoing Gigabit Ethernet connections are needed for the BU to sustain its design output event rate of 200 Hz. Filter unit software development has subsequently concentrated on the integration issues mentioned in Section III.
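The farm-sizing and link-count figures quoted above can be cross-checked with a short calculation. The sketch below uses only numbers from the text (271 ms per Level-1 accept, 41 SpecInt95 per 1 GHz CPU, a doubling of CPU power every 1.5 years, 1 MB events). The function names are hypothetical, and two inputs are assumptions made here: an extrapolation span of 4 years (the baseline year is not stated in the text) and 1 MB taken as 10^6 bytes on links usable at their nominal 1 Gb/s.

```python
import math

SI95_PER_CPU = 41.0   # 1 GHz Pentium III capacity, from the text
MEAN_TIME_S = 0.271   # mean HLT time per Level-1 accept, from the text


def cpus_needed(l1_rate_hz: float, time_per_event_s: float) -> float:
    """Number of CPUs kept busy at a given input rate and mean event time."""
    return l1_rate_hz * time_per_event_s


def farm_power_si95(l1_rate_hz: float) -> float:
    """Farm capacity in SpecInt95 if built from the reference CPUs."""
    return cpus_needed(l1_rate_hz, MEAN_TIME_S) * SI95_PER_CPU


def extrapolated_time_s(time_s: float, years: float,
                        doubling_years: float = 1.5) -> float:
    """Mean event time after `years` of CPU power doubling."""
    return time_s / 2.0 ** (years / doubling_years)


def links_needed(event_rate_hz: float, event_bytes: float = 1e6,
                 link_bps: float = 1e9) -> int:
    """Links required, assuming the full nominal link rate is usable."""
    return math.ceil(event_rate_hz * event_bytes * 8 / link_bps)


if __name__ == "__main__":
    print(f"farm power at 100 kHz: {farm_power_si95(100e3):.3g} SpecInt95")
    print(f"farm power at 50 kHz:  {farm_power_si95(50e3):.3g} SpecInt95")
    t_2007 = extrapolated_time_s(MEAN_TIME_S, years=4.0)  # assumed span
    print(f"extrapolated time per event: {t_2007 * 1e3:.0f} ms")
    print(f"CPUs at startup (40 ms, 50 kHz): {cpus_needed(50e3, 0.040):.0f}")
    print(f"links for 200 Hz of 1 MB events: {links_needed(200.0)}")
```

Under these assumptions the calculation gives about 1.1 × 10^6 SpecInt95 at 100 kHz, slightly below the 1.2 × 10^6 quoted; the text does not say how its figure was rounded or whether it includes a margin. The extrapolated event time comes out a little above 40 ms, and 200 Hz of 1 MB events corresponds to 1.6 Gb/s, hence two Gigabit Ethernet links.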
To establish the data path and task coordination required to run the HLT algorithms in the context of the FU, the necessary extensions to the offline framework were designed and implemented. These enable delivery of data from the DAQ segment, steering of the reconstruction execution, and access to reconstruction parameters and monitor information. In order to use simulated data, the ability to generate and interpret arbitrary raw data formats was introduced in the reconstruction, and a first set of formats based on current knowledge of the front-end hardware design was implemented. By analyzing occupancy information in simulated events, the design hypothesis of 1 MB average total size for events was verified and event-by-event size fluctuations were estimated [6].

The raw data access and formatting were analyzed to get a first indication of their impact on HLT execution times. As an example, Fig. 6 shows the estimated access and formatting time for the electromagnetic calorimeter data. Preliminary results indicate that, without any optimization, these times range between 10 and 20% of the total processing time.

Fig. 6. CPU time distribution for access and formatting of CMS ECAL data on a reference 1 GHz Pentium-III.

A complete filter unit running the available HLT algorithms was then assembled and functionally tested. This prototype filter unit obtains simulated raw events from a builder unit, and processes them under DAQ control. HLT parameters can be configured and modified through DAQ interfaces, and monitor information collected from a central server. A prototype of the sub-farm manager based on the Apache Tomcat servlet container [7] was implemented. Relevant functionality is provided by servlets, which use DAQ protocols to communicate with the FU, to issue configuration and control messages, and to receive status and monitor information. Good scaling behavior of the SM when controlling up to 28 filter units was verified.
A constant cold-start time of 9 seconds was measured when configuring and starting the whole sub-farm from scratch. Collection and collation of monitoring information in the SM was tested to scale up to 64 FU when 400 sample monitor objects (50-bin double-precision 1D histograms) are updated every 5 seconds.

The software was deployed on a small-scale test stand consisting of 9 rack-mount computers with dual Pentium-IV Xeon CPUs at 2.4 GHz, connected through dual Gigabit Ethernet links over a 24-port switch. The HLT selections run by the filter units were the same as those used to produce the baseline results of Table I. To fully exploit the CPUs, multiple instances of the filter unit were deployed on each machine, and performance was found to scale up to 4 FU per node, since processes profit from the hyper-threaded Xeon architecture. The development of a FU with multiple processing threads is planned for when thread-safe reconstruction code becomes available. A repetition of the offline benchmarks of HLT selections is currently ongoing. Preliminary results indicate that processing times scale well with machine clock speed: a test-stand processing node is capable of handling an average event rate of 20 Hz.

Expansion of the current setup is foreseen for 2004 to reach the planned size of a sub-farm, spanning 8 or 16 BU. Development is ongoing to increase robustness and provide the complete functionality of the FU and SM, including local mass storage of accepted events. Performance improvements are expected from optimization of reconstruction and selection code until the very start of data taking, in 2007.

VI. REFERENCES

[1] CMS Collaboration, Data Acquisition and High-Level Trigger Technical Design Report, CERN/LHCC 2002-26.
[2] V. Brigljevic et al., The CMS Event Builder, 2003 Conference on Computing in High Energy Physics, San Diego, CA, 24-28 March 2003.
[3] J. Gutleber et al., Towards a Homogeneous Architecture for High Energy Physics Data Acquisition Systems, Comp. Phys. Comm. 153/2 (2003), 155.
[4] V. Innocente et al., CMS Software Architecture, Comp. Phys. Comm. 140 (2001), 31.
[5] SCRAM, Software Configuration Release And Management, http://lcgapp.cern.ch/project/spi/scram/index.html
[6] See e.g. O. Kodolova et al., Expected Data Rates from the Silicon Strip Tracker, CMS Note-2002/047 (2002).
[7] The Jakarta Project: Apache Tomcat, http://jakarta.apache.org/tomcat