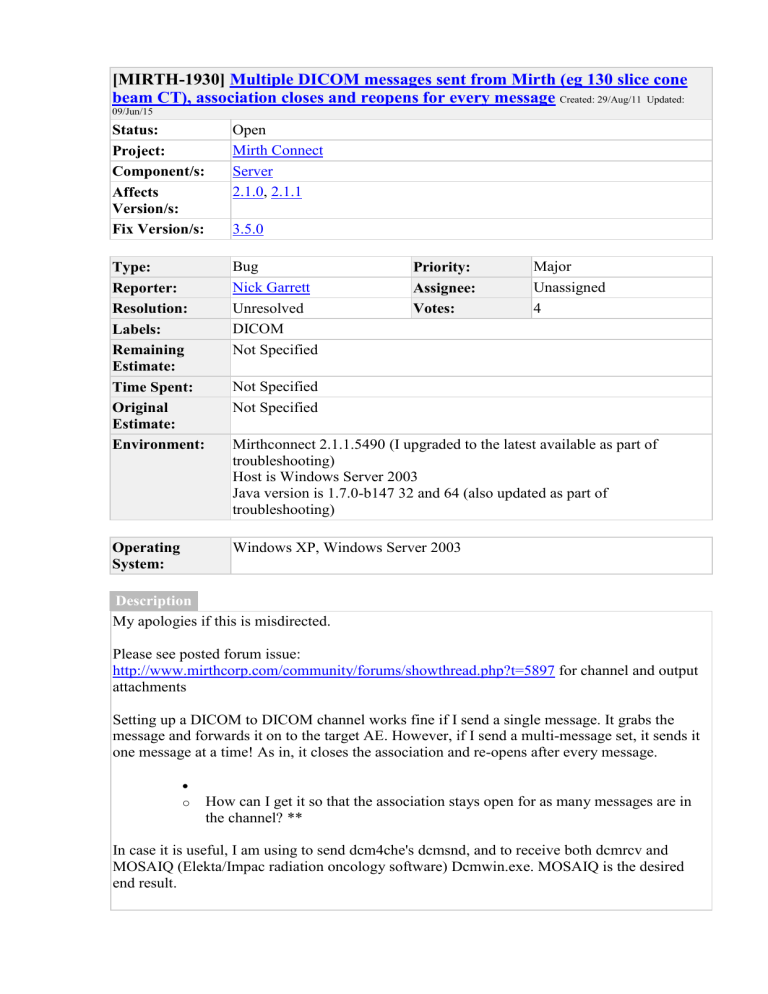

Mirth Connect DICOM Issue: Association Reopens for Each Message

[MIRTH-1930]

Multiple DICOM messages sent from Mirth (e.g. a 130-slice cone beam CT): association closes and reopens for every message

Created: 29/Aug/11

Updated: 09/Jun/15

Status: Open

Project: Mirth Connect

Component/s: Server

Affects Version/s: 2.1.0, 2.1.1

Fix Version/s: 3.5.0

Type: Bug

Priority: Major

Reporter: Nick Garrett

Assignee: Unassigned

Resolution: Unresolved

Votes: 4

Labels: DICOM

Remaining Estimate: Not Specified

Time Spent: Not Specified

Original Estimate: Not Specified

Environment: Mirthconnect 2.1.1.5490 (I upgraded to the latest available as part of troubleshooting)

Host is Windows Server 2003

Java version is 1.7.0-b147 32 and 64 (also updated as part of troubleshooting)

Operating System: Windows XP, Windows Server 2003

Description

My apologies if this is misdirected.

Please see posted forum issue: http://www.mirthcorp.com/community/forums/showthread.php?t=5897 for channel and output attachments

Setting up a DICOM to DICOM channel works fine if I send a single message. It grabs the message and forwards it on to the target AE. However, if I send a multi-message set, it sends it one message at a time! As in, it closes the association and re-opens after every message.

How can I keep the association open for as many messages as are in the channel?

In case it is useful, I am using dcm4che's dcmsnd to send, and both dcmrcv and MOSAIQ (Elekta/Impac radiation oncology software) Dcmwin.exe to receive. MOSAIQ is the desired end result.

Many oncology and medical imaging destinations (MOSAIQ, CHARM, Epic EMR, Pinnacle, Eclipse, etc.) require multi-image sets to be sent in a single association in order to be imported correctly into the patient image store.

Comments

Comment by Adam Martin

[ 11/Oct/11 ]

I believe this issue correlates directly with issue 1573: http://www.mirthcorp.com/community/issues/browse/MIRTH-1573 which has been around for over a year. I think the performance issue is caused by the opening and closing of the DICOM association.

Comment by Nick Garrett

[ 11/Oct/11 ]

I can confirm that switching to a *nix server host (tried Debian, Solaris 11 Express, and Scientific Linux, which is basically RHEL) makes no difference. I used the same versions of the software and Java (carefully NOT using the OpenJDK on the Linux installations). I tried this just in case the cause was the Windows networking.

Not sure if that helps.

In all cases removing Mirthconnect from the equation lets the DICOM msgs flow smoothly in a single association.

Comment by Garry Christensen

[ 07/Mar/12 ]

I have just started testing MirthConnect as a DICOM tool and I also see each image sent as a separate association, significantly increasing TCP and DICOM overhead. I tried to send a large chest CT (6000 slices) through MirthConnect to get an indication of timing. After less than 600 images, it crashed.

I can understand why MirthConnect sends each image in its own association: it comes from an HL7 pedigree where this is the norm and where it is unknown from image to image whether more are to come. It would be good if the association with the destination could remain open until the inbound association closes or until there has been no activity on the association for a set time (say 10 seconds). Alternatively, the images could be queued until the inbound association is closed, and the queued images then sent in a single association. This assumes an asynchronous channel.

Just some thoughts after some initial testing.
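The two ideas above (flush when the inbound association closes, or after a period of inactivity) can be sketched roughly as follows. This is only an illustration of the batching logic, not Mirth Connect code: the class `AssociationBatcher`, its methods, the `send_batch` callback, and the `assoc_id` key are all hypothetical names, and the actual DICOM transfer is left to whatever `send_batch` does.

```python
import time
from typing import Callable, Dict, List


class AssociationBatcher:
    """Buffers messages per inbound association and flushes them as one batch.

    Hypothetical sketch: none of these names exist in Mirth Connect.
    """

    def __init__(self,
                 send_batch: Callable[[List[bytes]], None],
                 idle_timeout: float = 10.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        # send_batch is assumed to open ONE outbound association,
        # send every message in the list, then release the association.
        self._send_batch = send_batch
        self._idle_timeout = idle_timeout
        self._clock = clock
        self._pending: Dict[str, List[bytes]] = {}
        self._last_seen: Dict[str, float] = {}

    def on_message(self, assoc_id: str, message: bytes) -> None:
        """Queue a message received on the given inbound association."""
        self._pending.setdefault(assoc_id, []).append(message)
        self._last_seen[assoc_id] = self._clock()

    def on_inbound_closed(self, assoc_id: str) -> None:
        """The sender released its association: forward everything queued."""
        self._flush(assoc_id)

    def poll(self) -> None:
        """Call periodically; flushes associations idle for too long."""
        now = self._clock()
        for assoc_id in list(self._pending):
            if now - self._last_seen[assoc_id] >= self._idle_timeout:
                self._flush(assoc_id)

    def _flush(self, assoc_id: str) -> None:
        batch = self._pending.pop(assoc_id, [])
        self._last_seen.pop(assoc_id, None)
        if batch:
            self._send_batch(batch)
```

With this shape, a 130-slice set received on one inbound association would reach `send_batch` as a single list of 130 messages, and the idle timeout (10 seconds here, following the figure suggested above) only acts as a fallback when the inbound side never signals a close.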

Comment by Adam Martin

[ 08/Mar/12 ]

I agree, Garry, that a timeout is the best way to handle associations. A good majority of the DICOM devices I work with have a user-specified timeout period. That way, if you are, for example, receiving some large mammo images over a slow WAN connection, you can increase your timeout to an appropriate value. Another example is ultrasound: if the study consists of multiple cine loops, the interval at which images are received could vary significantly. The ultrasound example assumes that you would be sending directly from a modality to Mirth, which I see as a rare occasion.

I would really like to see some progress on this, and am willing to help in any way possible.

Generated at Tue Feb 09 08:29:51 PST 2016 using JIRA 6.2.7#6265sha1:91604a8de81892a3e362e0afee505432f29579b0.