Principle of Orthogonality


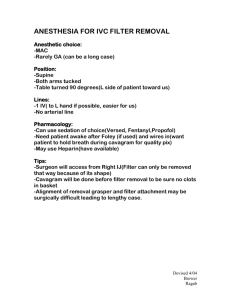

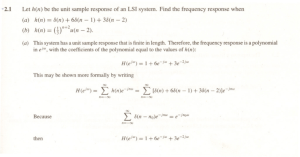

Advanced Digital Signal Processing Final Tutorial

Introduction of Wiener Filter

Student ID: R98943133
Name: 馮紹惟
Instructor: Prof. 丁建均

Contents

Abstract
I. Introduction of Wiener Filter
II. Wiener Filter - The Linear Optimal Filtering Problem
   a. Linear Estimation with Mean-Square Error Criterion
III. The Real-Valued Case
   a. Minimization of performance function
   b. Error performance surface for FIR filtering
   c. Explicit form of J(w)
   d. Canonical form of J(w)
IV. Principle of Orthogonality
   a. See the Wiener-Hopf Equation by Principle of Orthogonality
   b. More Of Principle of Orthogonality
V. Normalized Performance Function
VI. The Complex-Valued Case
VII. Application
   a. Modelling
   b. Inverse Modelling
VIII. Implementation Issues
Summary
Reference

Abstract

The Wiener filter was introduced by Norbert Wiener in the 1940's and published in 1949. A major contribution was the use of a statistical model for the estimated signal (the Bayesian approach!). The Wiener filter solves the signal estimation problem for stationary signals. Because the theory behind this filter assumes that the inputs are stationary, a Wiener filter is not an adaptive filter. Under this assumption the filter is optimal in the minimum mean-square error (MMSE) sense. The Wiener filter's main purpose is to reduce the amount of noise present in a signal by comparison with an estimate of the desired noiseless signal. As we shall see, the Kalman filter solves the corresponding filtering problem in greater generality, for non-stationary signals. We shall focus here on the discrete-time version of the Wiener filter.

I. Introduction of Wiener Filter

Wiener filters are a class of optimum linear filters which involve linear estimation of a desired signal sequence from another related sequence. In the statistical approach to the solution of the linear filtering problem, we assume the availability of certain statistical parameters (e.g. mean and correlation functions) of the useful signal and the unwanted additive noise. The goal of the Wiener filter is to filter out noise that has corrupted a signal. The problem is to design a linear filter that takes the noisy data as input and minimizes the effect of the noise at the filter output according to some statistical criterion.
A useful approach to this filter-optimization problem is to minimize the mean-square value of the error signal, defined as the difference between some desired response and the actual filter output. For stationary inputs, the resulting solution is commonly known as the Wiener filter. The Wiener filter is inadequate for situations in which nonstationarity of the signal and/or noise is intrinsic to the problem. In such situations, the optimum filter has to assume a time-varying form. A highly successful solution to this more difficult problem is found in the Kalman filter.

We now summarize some characteristics of Wiener filters.

Assumption: The signal and the (additive) noise are stationary linear stochastic processes with known spectral characteristics, or known autocorrelation and cross-correlation.

Requirement: We want to find the linear MMSE estimate of $s_k$ based on (all or part of) $y_k$.

So there are three versions of this problem:
a. The causal filter, which uses only present and past observations.
b. The non-causal filter, which may also use future observations.
c. The FIR filter, which uses a finite number of the most recent observations.

In this tutorial we consider the FIR case for simplicity.

II. Wiener Filter - The Linear Optimal Filtering Problem

Consider the signal model shown in Fig. 1, where $u(n)$ is the measured value of the desired signal $d(n)$.

Fig. 1 A signal model

Some examples of the measurement process are:

Additive noise problem: $u(n) = d(n) + v(n)$
Linear measurement: $u(n) = d(n) * h(n) + v(n)$
Simple delay: $u(n) = d(n-1) + v(n)$, which becomes a prediction problem
Interference problem: $u(n) = d(n) + i(n) + v(n)$

The main filtering problem is to find an estimate of $d(n)$ by applying a linear filter to $u(n)$.

Fig. 2 The filtering goal

When the filter is restricted to be a linear filter, the problem is a linear filtering problem.
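The four measurement models listed above can be sketched numerically. The following is only an illustration: the sinusoidal desired signal, the impulse response `h`, the interference `i` and the noise level are all hypothetical choices that do not come from the tutorial, and the helper name `measurement_models` is made up for this sketch.

```python
import numpy as np

def measurement_models(d, h, i, v):
    """Build the four example measurements u(n) from the desired signal d(n)."""
    n = len(d)
    additive = d + v                                 # u(n) = d(n) + v(n)
    linear = np.convolve(d, h)[:n] + v               # u(n) = d(n) * h(n) + v(n)
    delayed = np.concatenate(([0.0], d[:-1])) + v    # u(n) = d(n-1) + v(n)
    interference = d + i + v                         # u(n) = d(n) + i(n) + v(n)
    return {"additive": additive, "linear": linear,
            "delay": delayed, "interference": interference}

rng = np.random.default_rng(0)
N = 1000
t = np.arange(N)
d = np.sin(2 * np.pi * 0.01 * t)          # hypothetical desired signal
h = np.array([1.0, 0.5, 0.25])            # hypothetical measurement impulse response
i = 0.3 * np.sin(2 * np.pi * 0.2 * t)     # hypothetical narrowband interference
v = 0.1 * rng.standard_normal(N)          # white measurement noise

models = measurement_models(d, h, i, v)
```

In each case the filtering task is the same: recover an estimate of `d` from the corresponding entry of `models`.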
The filter is then designed to optimize a performance index of the filtering process, such as

$$\min_{w_k} |y(n) - d(n)|, \qquad \min_{w_k} |y(n) - d(n)|^2, \qquad \min_{w_k} E[|y(n) - d(n)|^2], \qquad \max_{w_k} \text{SNR of } y(n), \qquad \cdots$$

Solving for $w_{opt}$ is a linear optimal filtering problem. The whole process is shown in Fig. 3.

Fig. 3

where the output is

$$y(n) = \hat{d}(n) = \sum_{k=0}^{\infty} w_k^* u(n-k), \qquad n = 0, 1, 2, \cdots$$

a. Linear Estimation with Mean-Square Error Criterion

Fig. 4 shows the block schematic of a linear discrete-time filter $W(z)$ for estimating a desired signal $d(n)$ based on an excitation $u(n)$. We assume that both $u(n)$ and $d(n)$ are random processes (discrete-time random signals). The filter output is $y(n)$ and $e(n)$ is the estimation error.

Fig. 4

To find the optimum filter parameters, a cost function or performance function must be selected. In choosing a performance function the following points have to be considered:

1. The performance function must be mathematically tractable.
2. The performance function should preferably have a single minimum so that the optimum set of filter parameters can be selected unambiguously.

The number of minima of a performance function is closely related to the filter structure. Recursive (IIR) filters, in general, result in performance functions that may have many minima, whereas non-recursive (FIR) filters are guaranteed to have a single global minimum if a proper performance function is used. In the Wiener filter, the performance function is chosen to be

$$J(w) = E[|e(n)|^2]$$

This is also called the "mean-square error criterion".

III. Wiener Filter - The Real-Valued Case

Fig. 5 shows a transversal (FIR) filter with tap weights $W_0, W_1, \ldots, W_{N-1}$.

Fig. 5

Let

$$W = [W_0, W_1, \ldots, W_{N-1}]^T$$

$$U(n) = [u(n), u(n-1), \ldots, u(n-N+1)]^T$$

The output is

$$y(n) = \sum_{i=0}^{N-1} w_i u(n-i) = W^T U(n) = U^T(n) W \tag{1}$$

Thus we may write

$$e(n) = d(n) - y(n) = d(n) - U^T(n) W \tag{2}$$

The performance function, or cost function, is then given by

$$\begin{aligned} J(w) &= E[|e(n)|^2] \\ &= E\left[(d(n) - W^T U(n))(d(n) - U^T(n) W)\right] \\ &= E[d^2(n)] - W^T E[U(n)d(n)] - E[U^T(n)d(n)]\, W + W^T E[U(n)U^T(n)]\, W \end{aligned} \tag{3}$$

Now we define the $N \times 1$ cross-correlation vector

$$P \equiv E[U(n)d(n)] = [p_0, p_1, \ldots, p_{N-1}]^T$$

and the $N \times N$ autocorrelation matrix

$$R \equiv E[U(n)U^T(n)] = \begin{bmatrix} r_{00} & r_{01} & \cdots & r_{0,N-1} \\ r_{10} & r_{11} & \cdots & r_{1,N-1} \\ \vdots & \vdots & \ddots & \vdots \\ r_{N-1,0} & r_{N-1,1} & \cdots & r_{N-1,N-1} \end{bmatrix}$$

Also we note that

$$E[d(n)U^T(n)] = P^T, \qquad W^T P = P^T W$$

Thus we obtain

$$J(w) = E[d^2(n)] - 2 W^T P + W^T R W \tag{4}$$

Equation 4 is a quadratic function of the tap-weight vector $W$ with a single global minimum. We note that $R$ has to be a positive definite matrix in order to have a unique minimum point in the $w$-space.

a. Minimization of performance function

To obtain the set of tap weights that minimizes the performance function, we set

$$\frac{\partial J(w)}{\partial w_i} = 0, \qquad i = 0, 1, \cdots, N-1$$

or

$$\nabla J(w) = 0$$

where $\nabla$ is the gradient operator defined as the column vector

$$\nabla = \left[\frac{\partial}{\partial w_0}, \frac{\partial}{\partial w_1}, \cdots, \frac{\partial}{\partial w_{N-1}}\right]^T$$

and the zero vector $0$ is the $N$-component vector

$$0 = [0, 0, \cdots, 0]^T$$

Equation 4 can be expanded as

$$J(w) = E[d^2(n)] - 2\sum_{l=0}^{N-1} p_l w_l + \sum_{l=0}^{N-1}\sum_{m=0}^{N-1} w_l w_m r_{lm}$$

and the double sum can be expanded as

$$\sum_{l=0}^{N-1}\sum_{m=0}^{N-1} w_l w_m r_{lm} = \sum_{\substack{l=0 \\ l \neq i}}^{N-1}\sum_{\substack{m=0 \\ m \neq i}}^{N-1} w_l w_m r_{lm} + w_i \sum_{\substack{l=0 \\ l \neq i}}^{N-1} w_l r_{li} + w_i \sum_{\substack{m=0 \\ m \neq i}}^{N-1} w_m r_{im} + w_i^2 r_{ii}$$

Then we obtain

$$\frac{\partial J(w)}{\partial w_i} = -2p_i + \sum_{l=0}^{N-1} w_l (r_{li} + r_{il}), \qquad i = 0, 1, 2, \cdots, N-1 \tag{5}$$

By setting $\partial J(w)/\partial w_i = 0$, we obtain

$$\sum_{l=0}^{N-1} w_l (r_{li} + r_{il}) = 2p_i$$

Note that

$$r_{li} = E[u(n-l)u(n-i)] = R_{uu}(i-l)$$

By the symmetry property of the autocorrelation function of a real-valued signal, we have the relation

$$r_{li} = r_{il}$$

The condition above then becomes

$$\sum_{l=0}^{N-1} r_{il} w_l = p_i$$

In matrix notation, we then obtain

$$R W_{op} = P \tag{6}$$

where $W_{op}$ is the optimum tap-weight vector. Equation 6 is also known as the Wiener-Hopf equation, which has the solution

$$W_{op} = R^{-1} P$$

assuming that $R$ is invertible.

b. Error performance surface for FIR filtering

From $J \equiv E[|e(n)|^2]$, it can be deduced that $J = \text{function}[w \mid d(n), u(n)] = \text{function}[w \mid \text{data}]$. Viewed as a function of $w$ alone, $J = \text{function}[w]$ is defined as the error performance surface over all possible weights $w$.

c. Explicit form of J(w)

$$\begin{aligned} J &= E\left[\left(d(n) - \sum_{k=0}^{M-1} w_k^* u(n-k)\right)\left(d^*(n) - \sum_{i=0}^{M-1} w_i u^*(n-i)\right)\right] \\ &= \sigma_d^2 - \sum_{k=0}^{M-1} w_k^* p(-k) - \sum_{k=0}^{M-1} w_k p^*(-k) + \sum_{k=0}^{M-1}\sum_{i=0}^{M-1} w_k^* w_i r(i-k) \end{aligned}$$

This is a quadratic function of $w_0, w_1, \cdots, w_{M-1}$, that is, a surface in $(M+1)$-dimensional space.

d. Canonical form of J(w)

$J(w)$ can be written in matrix form as

$$J(w) = \sigma_d^2 - w^H p - p^H w + w^H R w$$

Using

$$\begin{aligned} (w - R^{-1}p)^H R (w - R^{-1}p) &= w^H R w - p^H R^{-1} R w - w^H R R^{-1} p + p^H R^{-1} R R^{-1} p \\ &= w^H R w - p^H w - w^H p + p^H R^{-1} p \end{aligned}$$

where the Hermitian property of $R^{-1}$, $(R^{-1})^H = R^{-1}$, has been used, $J(w)$ can be put into a perfect-square form:

$$J(w) = \sigma_d^2 - p^H R^{-1} p + (w - R^{-1}p)^H R (w - R^{-1}p)$$

The squared form yields the minimum MSE explicitly. Writing $w_o = R^{-1}p$,

$$J(w) = \sigma_d^2 - p^H R^{-1} p + (w - w_o)^H R (w - w_o)$$

so that when $w = w_o$ the minimum value $J_{min}$ is reached. In the real-valued notation,

$$J_{min} = E[d^2(n)] - W_{op}^T P = E[d^2(n)] - W_{op}^T R W_{op} \tag{7}$$

Equation 7 can also be expressed as

$$J_{min} = E[d^2(n)] - P^T R^{-1} P$$

and the squared form becomes

$$J(w) = J_{min} + (w - w_o)^H R (w - w_o) = J_{min} + \varepsilon^H R \varepsilon$$

where $\varepsilon = w - w_o$ is the tap-weight error.

If $R$ is written in terms of its eigen-decomposition, $J(w)$ can be put into a more informative form called the canonical form. Using $R = Q \Lambda Q^H$, we have

$$J(w) = J_{min} + (w - w_o)^H Q \Lambda Q^H (w - w_o) = J_{min} + v^H \Lambda v \qquad \text{(the canonical form)}$$

where $v \equiv Q^H \varepsilon$ is the transformed vector of $\varepsilon$ in the eigenspace of $R$.
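As a numerical check of the real-valued results above, the sketch below estimates $R$ and $P$ from sample averages, solves the Wiener-Hopf equation 6, and compares equation 4 with the canonical form. The white input, the "true" system `g` and the noise level are hypothetical choices made for this illustration only, and sample averages stand in for the expectations.

```python
import numpy as np

rng = np.random.default_rng(1)
n_samples, n_taps = 20000, 4

# Hypothetical setup: d(n) is an FIR response to white u(n), plus noise
u = rng.standard_normal(n_samples)
g = np.array([0.8, -0.4, 0.2, 0.1])            # hypothetical "true" system
d = np.convolve(u, g)[:n_samples] + 0.1 * rng.standard_normal(n_samples)

# Row n holds the tap-input vector U(n) = [u(n), u(n-1), ..., u(n-N+1)]
U = np.column_stack(
    [np.concatenate((np.zeros(k), u[:n_samples - k])) for k in range(n_taps)]
)

R = U.T @ U / n_samples        # sample estimate of R = E[U U^T]
P = U.T @ d / n_samples        # sample estimate of P = E[U d]
sigma_d2 = np.mean(d ** 2)     # sample estimate of E[d^2]

w_op = np.linalg.solve(R, P)   # Wiener-Hopf equation: R w_op = P
J_min = sigma_d2 - P @ w_op    # J_min = E[d^2] - P^T R^{-1} P

def J(w):
    """Performance function of equation 4: E[d^2] - 2 w^T P + w^T R w."""
    return sigma_d2 - 2 * w @ P + w @ R @ w

# Canonical form: J(w) = J_min + v^T Lambda v with v = Q^T (w - w_op)
lam, Q = np.linalg.eigh(R)     # R = Q diag(lam) Q^T for symmetric R
w_test = w_op + np.array([0.3, -0.2, 0.1, 0.05])
v = Q.T @ (w_test - w_op)
J_canonical = J_min + np.sum(lam * v ** 2)
```

Here `np.linalg.solve` is used instead of forming $R^{-1}$ explicitly, which is a common numerical preference rather than something the tutorial prescribes. `J(w_test)` and `J_canonical` should agree to rounding error.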
In the eigenspace, the $v_k$'s in $v = [v_1, v_2, \ldots, v_M]^T$ are the decoupled tap-weight errors, and we have

$$J(w) = J_{min} + v^H \Lambda v = J_{min} + \sum_{k=1}^{M} \lambda_k v_k^* v_k = J_{min} + \sum_{k=1}^{M} \lambda_k |v_k|^2$$

where $|v_k|^2$ is the error power of each coefficient in the eigenspace and $\lambda_k$ is the weighting (i.e. relative importance) of each coefficient error.

IV. Wiener Filter - Principle of Orthogonality

For the linear filtering problem, the Wiener solution can be generalized into a principle through the following steps.

1. The MSE cost function (or performance function) is given by

$$J(w) = E[e^2(n)]$$

By the chain rule,

$$\frac{\partial J(w)}{\partial w_i} = E\left[2e(n)\frac{\partial e(n)}{\partial w_i}\right], \qquad i = 0, 1, 2, \cdots, N-1$$

where

$$e(n) = d(n) - y(n)$$

Since $d(n)$ is independent of $w_i$, we get

$$\frac{\partial e(n)}{\partial w_i} = -\frac{\partial y(n)}{\partial w_i} = -u(n-i)$$

Then we obtain

$$\frac{\partial J(w)}{\partial w_i} = -2E[e(n)u(n-i)], \qquad i = 0, 1, 2, \cdots, N-1 \tag{8}$$

2. When the Wiener filter tap weights are set to their optimal values,

$$\frac{\partial J(w)}{\partial w_i} = 0$$

Hence, if $e_0(n)$ is the estimation error when the $w_i$ are set to their optimal values, then equation 8 becomes

$$E[e_0(n)u(n-i)] = 0, \qquad i = 0, 1, 2, \cdots, N-1$$

For an ergodic random process,

$$E_\Omega[u(n-i)e^*(n)] = \sum_n u(n-i)e^*(n) = \langle u(n-i), e(n) \rangle$$

so that $\langle u(n-i), e(n) \rangle = 0$ means $u(n-i) \perp e(n)$.

In other words, the estimation error is orthogonal to the input $u(n-i)$ for all $i$ (note that $u(n-i)$ and $e(n)$ are treated as vectors here). That is, the estimation error is uncorrelated with the filter tap inputs $u(n-i)$. This is known as "the principle of orthogonality".

3. We can also show that the optimal filter output is uncorrelated with the estimation error. That is,

$$E[e_0(n)y_0(n)] = 0$$

This result indicates that the optimized Wiener filter output and the estimation error are "orthogonal".

The orthogonality principle is important in that it can be generalized to many complicated linear filtering or linear estimation problems.

a. See the Wiener-Hopf Equation by Principle of Orthogonality

The Wiener-Hopf equation is a special case of the orthogonality principle when $d(n) = u(n)$ and the estimate is

$$\hat{u}(n) = \sum_{k=1}^{M} w_k^* u(n-k)$$

which is the linear prediction problem. We can derive the Wiener-Hopf equation from the principle of orthogonality:

$$E[u(n-k)e^*(n)] = 0$$

$$\Longrightarrow E\left[u(n-k)\left(d^*(n) - \sum_{i=1}^{M} w_i u^*(n-i)\right)\right] = 0$$

$$\Longrightarrow E\left[\sum_{i=1}^{M} w_i u(n-k)u^*(n-i)\right] = E[u(n-k)d^*(n)]$$

$$\Longrightarrow \sum_{i=1}^{M} w_i r(i-k) = E[u(n-k)u^*(n)] = r(-k), \qquad k = 1, \ldots, M$$

This is the Wiener-Hopf equation $Rw = r^*$.

For the optimal FIR filtering problem, $d(n)$ need not be $u(n)$, and the Wiener-Hopf equation is then $Rw = p$, with $p$ being the cross-correlation vector of $u(n-k)$ and $d(n)$.

b. More Of Principle of Orthogonality

Since $J$ is a quadratic function of the $w_i$'s, i.e.,

$$J(w_0, w_1, \cdots, w_0 w_1, w_1 w_2, \cdots, w_0^2, w_1^2, \cdots)$$

it has a bowl shape in the hyperspace of $(w_0, w_1, \cdots)$, with a unique extreme point at $w_{opt}$. Therefore $\nabla_k J = 0$ is also a sufficient condition for minimizing $J$. This is the principle of orthogonality.

For the 2D case $w = [w_0, w_1]^T$, the error surface is shown in Fig. 6.

Fig. 6 Error surface of 2D case

The filter output is also orthogonal to the error, $y(n) \perp e(n)$, since

$$E[e^*(n)y(n)] = E\left[e^*(n)\sum_k w_k^* u(n-k)\right] = \sum_k w_k^* E[e^*(n)u(n-k)] = 0$$

Hence both the input and the output of the filter are orthogonal to the estimation error $e(n)$.

The vector space interpretation

From $y_0 \perp e_0$ and $e_0 = d - y_0$, i.e., $e_{opt}(n) = d(n) - y_{opt}(n)$, we have $d = e_0 + y_0$. That is, $d$ is decomposed into two orthogonal components.

Fig. 7 Estimation value + Estimation error = Desired signal

In geometric terms, $\hat{d}$ is the projection of $d$ on $e_0^\perp$. This is a reasonable result!

V. Normalized Performance Function

1. If the optimal filter tap weights are denoted by $w_{0,l}$, $l = 0, 1, 2, \cdots, N-1$, the estimation error is given by

$$e_0(n) = d(n) - \sum_{l=0}^{N-1} w_{0,l}\, u(n-l)$$

and then

$$d(n) = e_0(n) + y_0(n)$$

$$E[d^2(n)] = E[e_0^2(n)] + E[y_0^2(n)] + 2E[e_0(n)y_0(n)]$$

2. We note that $E[e_0^2(n)] = J_{min}$ and, by orthogonality, $E[e_0(n)y_0(n)] = 0$, so we obtain

$$J_{min} = E[d^2(n)] - E[y_0^2(n)]$$

3. Define $\rho$ as the normalized performance function:

$$\rho = \frac{J}{E[d^2(n)]}$$

Since $J_{min} \geq 0$, we have $E[d^2(n)] \geq E[y_0^2(n)]$.

4. $\rho = 1$ when $y(n) = 0$.

5. $\rho$ reaches its minimum value, $\rho_{min}$, when the filter tap weights are chosen to achieve the minimum mean-square error. This gives

$$\rho_{min} = 1 - \frac{E[y_0^2(n)]}{E[d^2(n)]}$$

and we have $0 \leq \rho_{min} \leq 1$.

VI. Wiener Filter - The Complex-Valued Case

In many practical applications, the random signals are complex-valued, for example the baseband signals of QPSK and QAM in data transmission systems. In the Wiener filter for processing complex-valued random signals, the tap weights are assumed to be complex variables. The estimation error $e(n)$ is also complex-valued. We may write

$$J = E[|e(n)|^2] = E[e(n)e^*(n)]$$

The tap weight $w_i$ is expressed as

$$w_i = w_{i,R} + j w_{i,I}$$

The gradient of a function with respect to a complex variable $w = w_R + j w_I$ is defined as

$$\nabla_w^C \equiv \frac{\partial}{\partial w_R} + j\frac{\partial}{\partial w_I} \tag{9}$$

The optimum tap weights of the complex-valued Wiener filter are obtained from the criterion

$$\nabla_{w_i}^C J = 0, \qquad i = 0, 1, 2, \cdots, N-1$$

That is,

$$\frac{\partial J}{\partial w_{i,R}} = 0 \quad \text{and} \quad \frac{\partial J}{\partial w_{i,I}} = 0$$

Since $J = E[e(n)e^*(n)]$, we have

$$\nabla_{w_i}^C J = E[e(n)\nabla_{w_i}^C e^*(n) + e^*(n)\nabla_{w_i}^C e(n)] \tag{10}$$

Noting that

$$e(n) = d(n) - \sum_{k=0}^{N-1} w_k u(n-k)$$

we get

$$\nabla_{w_i}^C e(n) = -u(n-i)\nabla_{w_i}^C w_i, \qquad \nabla_{w_i}^C e^*(n) = -u^*(n-i)\nabla_{w_i}^C w_i^*$$

Applying definition 9, we obtain

$$\nabla_{w_i}^C w_i = \frac{\partial w_i}{\partial w_{i,R}} + j\frac{\partial w_i}{\partial w_{i,I}} = 1 + j(j) = 0$$

and

$$\nabla_{w_i}^C w_i^* = \frac{\partial w_i^*}{\partial w_{i,R}} + j\frac{\partial w_i^*}{\partial w_{i,I}} = 1 + j(-j) = 2$$

Thus, equation 10 becomes

$$\nabla_{w_i}^C J = -2E[e(n)u^*(n-i)]$$

The optimum filter tap weights are obtained when $\nabla_{w_i}^C J = 0$. This gives

$$E[e_0(n)u^*(n-i)] = 0, \qquad i = 0, 1, 2, \cdots, N-1 \tag{11}$$

where $e_0(n)$ is the optimum estimation error. Equation 11 is the "principle of orthogonality" for the case of complex-valued signals in the Wiener filter.
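The complex-valued orthogonality principle of equation 11, and the normalized performance function of Section V, can be checked numerically with a small sketch. Everything specific here is an assumption made for illustration: the circular white Gaussian input, the hypothetical system `g`, and the use of a least-squares fit over sample averages in place of true expectations.

```python
import numpy as np

rng = np.random.default_rng(2)
n_samples, n_taps = 20000, 3

def cnoise(n):
    """Unit-variance circular complex white Gaussian noise."""
    return (rng.standard_normal(n) + 1j * rng.standard_normal(n)) / np.sqrt(2)

u = cnoise(n_samples)
g = np.array([0.5 + 0.5j, -0.3j, 0.2])         # hypothetical system
d = np.convolve(u, g)[:n_samples] + 0.2 * cnoise(n_samples)

# Row n holds u(n), u(n-1), ..., u(n-N+1)
U = np.column_stack(
    [np.concatenate((np.zeros(k, dtype=complex), u[:n_samples - k]))
     for k in range(n_taps)]
)

# Minimise the sample mean of |e(n)|^2 with e(n) = d(n) - sum_k w_k u(n-k)
w_o, *_ = np.linalg.lstsq(U, d, rcond=None)
y_o = U @ w_o                                   # optimum filter output
e_o = d - y_o                                   # optimum estimation error

# Equation 11: sample version of E[e_o(n) u*(n-i)] for each tap i
orth_inputs = U.conj().T @ e_o / n_samples
# The output is orthogonal to the error as well (vdot conjugates its first argument)
orth_output = np.vdot(y_o, e_o) / n_samples

# Normalized performance function at the optimum
rho_min = np.mean(np.abs(e_o) ** 2) / np.mean(np.abs(d) ** 2)
```

Both `orth_inputs` and `orth_output` should vanish up to rounding error, and `rho_min` should lie between 0 and 1.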
The Wiener-Hopf equation can be derived as follows. Define

$$u(n) \equiv [u(n)\ \ u(n-1)\ \cdots\ u(n-N+1)]^T$$

and

$$w \equiv [w_0^*\ \ w_1^*\ \cdots\ w_{N-1}^*]^T$$

We can also write

$$u(n) \equiv [u^*(n)\ \ u^*(n-1)\ \cdots\ u^*(n-N+1)]^H$$

and

$$w \equiv [w_0\ \ w_1\ \cdots\ w_{N-1}]^H$$

where $H$ denotes the complex-conjugate transpose, or Hermitian. Noting that

$$e(n) = d(n) - w_o^H u(n) \tag{12}$$

and that equation 11 can be written in vector form as

$$E[u(n)e_0^*(n)] = 0$$

we obtain, by substituting equation 12,

$$E[u(n)(d^*(n) - u^H(n) w_o)] = 0$$

and then

$$R w_o = P \tag{13}$$

where

$$R = E[u(n)u^H(n)] \quad \text{and} \quad P = E[u(n)d^*(n)]$$

Equation 13 is the Wiener-Hopf equation for the case of complex-valued signals. The minimum performance function is then expressed as

$$J_{min} = E[|d(n)|^2] - w_o^H R w_o$$

Remarks: In the derivation of the above Wiener filter we have assumed that it is causal and has a finite impulse response, for both real-valued and complex-valued signals.

VII. Wiener Filter - Application

a. Modelling

Consider the modelling problem depicted in Fig. 8.

Fig. 8 The model of modelling

$\mu(n)$, $\nu_o(n)$ and $\nu_i(n)$ are assumed to be stationary, zero-mean and uncorrelated with one another. The input to the Wiener filter is given by

$$x(n) = \mu(n) + \nu_i(n)$$

and the desired output is given by

$$d(n) = g_n * \mu(n) + \nu_o(n)$$

where $g_n$ is the impulse response of the plant. The optimum unconstrained Wiener filter transfer function is

$$W_o(z) = \frac{R_{dx}(z)}{R_{xx}(z)}$$

Note that

$$\begin{aligned} R_{xx}(k) &= E[x(n)x(n-k)] \\ &= E[(\mu(n) + \nu_i(n))(\mu(n-k) + \nu_i(n-k))] \\ &= E[\mu(n)\mu(n-k)] + E[\mu(n)\nu_i(n-k)] + E[\nu_i(n)\mu(n-k)] + E[\nu_i(n)\nu_i(n-k)] \\ &= R_{\mu\mu}(k) + R_{\nu_i\nu_i}(k) \end{aligned} \tag{14}$$

where the cross terms vanish because $\mu(n)$ and $\nu_i(n)$ are zero-mean and uncorrelated. Taking the Z-transform on both sides of equation 14, we get

$$R_{xx}(z) = R_{\mu\mu}(z) + R_{\nu_i\nu_i}(z)$$

To calculate $W_o(z) = R_{dx}(z)/R_{xx}(z)$, we must first find the expression for $R_{dx}(z)$. We can show that

$$R_{dx}(z) = R_{d'\mu}(z)$$

where $d'(n)$ is the plant output when the additive noise $\nu_o(n)$ is excluded. Moreover, we have

$$R_{d'\mu}(z) = G(z)R_{\mu\mu}(z)$$

Thus

$$R_{dx}(z) = G(z)R_{\mu\mu}(z)$$

and we obtain

$$W_o(z) = \frac{R_{\mu\mu}(z)}{R_{\mu\mu}(z) + R_{\nu_i\nu_i}(z)}\, G(z) \tag{15}$$

We note that $W_o(z)$ is equal to $G(z)$ only when $R_{\nu_i\nu_i}(z)$ is equal to zero, that is, when $\nu_i(n)$ is zero for all values of $n$. The noise sequence $\nu_i(n)$ may be thought of as introduced by a transducer that is used to get samples of the plant input.

Replacing $z$ by $e^{j\omega}$ in equation 15, we obtain

$$W_o(e^{j\omega}) = \frac{R_{\mu\mu}(e^{j\omega})}{R_{\mu\mu}(e^{j\omega}) + R_{\nu_i\nu_i}(e^{j\omega})}\, G(e^{j\omega})$$

Define

$$K(e^{j\omega}) \equiv \frac{R_{\mu\mu}(e^{j\omega})}{R_{\mu\mu}(e^{j\omega}) + R_{\nu_i\nu_i}(e^{j\omega})}$$

We obtain

$$W_o(e^{j\omega}) = K(e^{j\omega})G(e^{j\omega})$$

With some mathematical manipulation, we can find the minimum mean-square error, $J_{min}$, expressed as

$$J_{min} = R_{\nu_o\nu_o}(0) + \frac{1}{2\pi}\int_{-\pi}^{\pi} \left(1 - K(e^{j\omega})\right) R_{\mu\mu}(e^{j\omega})\, |G(e^{j\omega})|^2\, d\omega$$

The best performance that one can expect from the unconstrained Wiener filter is

$$J_{min} = R_{\nu_o\nu_o}(0)$$

and this happens when $\nu_i(n) = 0$.

The Wiener filter attempts to estimate that part of the target signal $d(n)$ that is correlated with its own input $x(n)$ and leaves the remaining part of $d(n)$ (i.e. $\nu_o(n)$) unaffected. This is known as "the principle of correlation cancellation".

b. Inverse Modelling

Fig. 9 depicts a channel equalization scenario.

Fig. 9 The model of inverse modelling

$s(n)$: data samples
$H(z)$: system function of the communication channel
$\nu(n)$: additive channel noise, which is uncorrelated with $s(n)$
$W(z)$: filter function used to process the received noisy signal samples to recover the original data samples

When the additive noise at the channel output is absent, the equalizer has the following trivial solution:

$$W_o(z) = \frac{1}{H(z)}$$

This implies that $y(n) = s(n)$ and thus $e(n) = 0$ for all $n$.

When the channel noise $\nu(n)$ is non-zero, this trivial solution may no longer be optimal. In that case the equalizer input and the desired response are

$$x(n) = h_n * s(n) + \nu(n)$$

and

$$d(n) = s(n)$$

where $h_n$ is the impulse response of the channel $H(z)$.
Applying the relation $W_o(z) = R_{dx}(z)/R_{xx}(z)$ from the modelling case, we obtain

$$R_{xx}(z) = R_{ss}(z)|H(z)|^2 + R_{\nu\nu}(z)$$

Also

$$R_{dx}(z) = R_{sx}(z) = H(z^{-1})R_{ss}(z)$$

With $|z| = 1$, we may also write

$$R_{dx}(z) = H^*(z)R_{ss}(z)$$

And then

$$W_o(z) = \frac{H^*(z)R_{ss}(z)}{R_{ss}(z)|H(z)|^2 + R_{\nu\nu}(z)} \tag{16}$$

This is the general solution to the equalization problem when there is no constraint on the equalizer length and the equalizer is also allowed to be non-causal. Equation 16 can be rewritten as

$$W_o(z) = \frac{1}{1 + \dfrac{R_{\nu\nu}(z)}{R_{ss}(z)|H(z)|^2}} \cdot \frac{1}{H(z)}$$

Let $z = e^{j\omega}$ and define the parameter

$$\rho(e^{j\omega}) \equiv \frac{R_{ss}(e^{j\omega})|H(e^{j\omega})|^2}{R_{\nu\nu}(e^{j\omega})}$$

where $R_{ss}(e^{j\omega})|H(e^{j\omega})|^2$ and $R_{\nu\nu}(e^{j\omega})$ are the signal power spectral density and the noise power spectral density, respectively, at the channel output. We obtain

$$W_o(e^{j\omega}) = \frac{\rho(e^{j\omega})}{1 + \rho(e^{j\omega})} \cdot \frac{1}{H(e^{j\omega})}$$

We note that $\rho(e^{j\omega})$ is a non-negative quantity, since it is the signal-to-noise power spectral density ratio at the equalizer input. Also,

$$0 \leq \frac{\rho(e^{j\omega})}{1 + \rho(e^{j\omega})} \leq 1$$

Cancellation of ISI and noise enhancement

Consider the optimized equalizer with frequency response given by

$$W_o(e^{j\omega}) = \frac{\rho(e^{j\omega})}{1 + \rho(e^{j\omega})} \cdot \frac{1}{H(e^{j\omega})}$$

In the frequency regions where the noise is almost absent, the value of $\rho(e^{j\omega})$ is very large and hence

$$W_o(e^{j\omega}) \approx \frac{1}{H(e^{j\omega})}$$

The ISI will be eliminated without any significant enhancement of noise. On the other hand, in the frequency regions where the noise level is high, the value of $\rho(e^{j\omega})$ is not large and hence the equalizer does not approximate the channel inverse well. This is, of course, to prevent noise enhancement.

VIII. Wiener Filter - Implementation Issues

The Wiener (or MSE) solution exists in the correlation domain: it needs the ensemble $X_\Omega(n)$ to find the autocorrelation function (ACF) and cross-correlation function (CCF) in the Wiener-Hopf equation. This is the original theory developed by Wiener for the linear prediction case.

Fig. 10 The family of Wiener filters in adaptive operation

The existence of the Wiener solution depends on the availability of the desired signal $d(n)$. The requirement for $d(n)$ may be avoided by using a model of $d(n)$ or by turning it into a constraint (e.g., LCMV, linearly constrained minimum variance).

Another requirement for the existence of the Wiener solution is that the random process must be stationary, to ensure the existence of the ACF and CCF representation. Kalman (minimum variance) filtering is the major theory developed for making the Wiener solution adapt to nonstationary signals.

For real-time implementation, the requirement for signal statistics (ACF and CCF) must be avoided. The solution is then either searched for using LMS or estimated blockwise using least squares (LS).

Another concern for real-time implementation is the computational cost of finding the ACF, the CCF and the inverse of the autocorrelation matrix based on $x(n)$ instead of $X_\Omega(n)$.

Recursive algorithms for finding the Wiener solution were developed for the above cases. The Levinson-Durbin algorithm was developed for linear prediction filtering. Complicated recursive algorithms are used in Kalman filtering and RLS. LMS is the simplest recursive algorithm. SVD is the major technique for solving the LS problem.

In conclusion, existence and computation are the two major problems in finding the Wiener solution. The existence problem concerns $R$, $p$, $d(n)$ and $R^{-1}$, while the computation problem is primarily that of finding $R^{-1}$.

Summary

In this tutorial, we described the discrete-time version of Wiener filter theory, which has evolved from the pioneering work of Norbert Wiener on linear optimum filters for continuous-time signals. The importance of the Wiener filter lies in the fact that it provides a frame of reference for the linear filtering of stochastic signals, assuming wide-sense stationarity. The main purpose of the Wiener filter is to bring the filter output close to the desired response by filtering out the noise that interferes with the signal.

The Wiener filter has two important properties:

1. The principle of orthogonality: The error signal (estimation error) produced by the Wiener filter is orthogonal to its tap inputs.
2. Statistical characterization of the error signal as white noise: This condition is attained when the filter length matches the order of the multiple regression model describing the generation of the observable data (i.e., the desired response).

Reference

Norbert Wiener, Extrapolation, Interpolation, and Smoothing of Stationary Time Series. New York: Wiley, 1949. ISBN 0-262-73005-7.
Simon Haykin, Adaptive Filter Theory, 4th ed. Prentice-Hall, 2002.
Paulo S. R. Diniz, Adaptive Filtering. Kluwer, 1994.
D. G. Manolakis et al., Statistical and Adaptive Signal Processing, 2000.
Wikipedia, "Wiener filter".
Prof. 張文輝, "Adaptive Signal Processing" (適應性訊號處理) course lecture notes.
Prof. 魏哲和, "Adaptive Signal Processing" course lecture notes.
Prof. 曹建和, "Adaptive Signal Processing" course lecture notes.