Module 07 - Lync Ignite - Network Assessment Tools and Processes

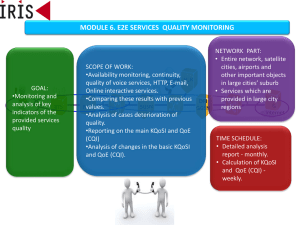

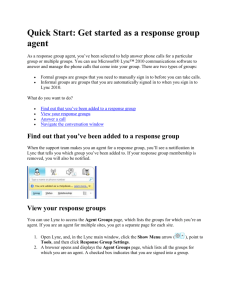

Criteria for choosing an assessment tool:

- Ease of deployment
- Licensing for use by a services organization
- Cost
- Features

Components of a typical tool:

- A console where tests are configured and results are collected
  - This may be on-premises or it may be offered as a cloud service
- Several probes that generate the simulated voice/video traffic
  - Hardware shipped to the datacenter site and the several branch sites involved in the assessment
  - Or available as software to install on customer-provided PCs

Since we are most concerned with pre-deployment planning, we assume you are using a toolset that performs an active test. However, this module also discusses how the Lync monitoring server can provide post-deployment data without “sniffing”.

Hardware probes:

- No concern regarding PC performance interfering with tests
- No customer equipment required
- Easier to deploy (self-contained)

Software probes:

- No shipping/customs concerns
- Faster to deploy (no shipping delays)
- Addresses the security concern of connecting unknown hardware to the network

Before connecting probes to the customer network:

- Understand what data they generate, capture, and report
- Understand what services they run
- We are only simulating expected future traffic based on the bandwidth planning

If a customer has already deployed Lync, the monitoring server reports can reveal many of the same metrics as the synthetic testing tools. Reports are best interpreted in aggregate, but individual calls can be analyzed as well.

QoE data collection:

- After a media session is complete, the endpoint sends Quality of Experience (QoE) data to its home pool
- The UDC agent captures this data and stores it in the LYSS database
- All media endpoints have these capabilities: Lync clients, Mediation Server, AVMCU, Edge
- Look for VQReportEvent in the Lync client UccApilog
- These reports are based on RTCP exchanges between the two endpoints

CDR data collection:

- The UDC agent monitors all traffic that passes by the Front End service and stores it in the LYSS database
- This includes call records, diagnostic errors, invites, responses, etc.
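The tip about looking for VQReportEvent in the client UccApilog can be sketched as a small log scan. This is a minimal sketch, not a supported tool: the function names `find_vq_reports` and `scan_log` are hypothetical, the file encoding is an assumption you may need to change, and the sample lines in the usage note are synthetic rather than real log output.

```python
MARKER = "VQReportEvent"  # string named in the module; everything else here is illustrative


def find_vq_reports(lines):
    """Return (line_number, text) pairs for every line that mentions the marker."""
    hits = []
    for number, line in enumerate(lines, start=1):
        if MARKER in line:
            hits.append((number, line.rstrip("\n")))
    return hits


def scan_log(path, encoding="utf-8"):
    """Scan a log file on disk. The encoding is an assumption; adjust it for your logs."""
    with open(path, encoding=encoding, errors="replace") as handle:
        return find_vq_reports(handle)
```

For example, `find_vq_reports(["first line\n", "xx VQReportEvent yy\n"])` returns only the second line with its line number. Each hit marks where the client submitted a quality report, which is a starting point for reading the surrounding log entries.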
To view the UDC agent's capture process, enable component logging for the UDCAgent component.

Components involved:

- Front End Server
- Lync Storage Service
- CDR and QoE Adaptors
- CDR/QoE Data Replication for HA (within a pool)

Working with the CDR and QoE databases:

- Needed to write custom SQL queries
- This is a good reference for understanding metrics and associated thresholds
- Most in-box reports are based on pre-defined SQL views
  - CDR: Conferences, ConferenceSessionDetails, Registration, SessionDetails, VoIPDetails
  - QoE: AudioStreamDetail, MediaLine, QoEReportsCallDetail, Session, VideoStreamDetail

Key audio quality metrics:

- The ratio of auto-generated audio data to real speech data, i.e. audio data that was delayed or missed due to network connectivity issues
- Average packet loss rate during the call
- Maximum network jitter during the call
- Round-trip time from RTCP statistics
- The amount the Network MOS was reduced because of jitter and packet loss

“Thanks for reporting your issue – do you have logs with that?”

- Both diagnostic codes and media quality are collected and reported
- Correlate the user’s reported issue with the associated session reported via CDR/QoE
- Provides an objective view of the experience
  - Who else is impacted?
  - How often does this happen?
  - Why is it happening?

Understanding deployment health:

- Reviewing reports should be part of our daily operations
- Gives you a snapshot of usage, quality trending, worst performing servers, and top failures
- The SCOM management pack can monitor the QoE database to identify locations performing badly

From users:

- What was wrong with this call?
- Why did I see a User Facing Diagnostic (UFD)?
- I was just on a call and it dropped midstream. Why?

From administrators:

- How do I read the in-box reports?
- How can I determine what the value of X in a particular report means?
- Where do I start?

Scenario review:

- Overview – Where do I start?
- Report – Peer-to-peer audio call report
- Report – Conference call report
- Report – Top failures
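The audio metrics described in this module (concealed-audio ratio, packet loss rate, maximum jitter, round-trip time, and Network MOS degradation) lend themselves to a simple threshold check rolled up by location, in the spirit of reading reports in aggregate. The sketch below is illustrative only: the field names, the `AudioStream` record, and every threshold value are assumptions made for the example, not the actual QoE schema or official limits. Substitute the column names and thresholds from your own reference documentation.

```python
from dataclasses import dataclass

# Placeholder thresholds (assumptions for illustration, not official values).
THRESHOLDS = {
    "packet_loss_rate": 0.10,   # average fraction of packets lost
    "jitter_max_ms": 30.0,      # maximum network jitter, milliseconds
    "round_trip_ms": 500.0,     # round-trip time from RTCP, milliseconds
    "degradation_mos": 1.0,     # Network MOS lost to jitter and packet loss
    "ratio_concealed": 0.07,    # auto-generated audio relative to real speech
}


@dataclass
class AudioStream:
    """One audio stream's metrics; a hypothetical record, not a real table row."""
    site: str
    packet_loss_rate: float
    jitter_max_ms: float
    round_trip_ms: float
    degradation_mos: float
    ratio_concealed: float


def exceeded(stream):
    """List the metric names whose values are above their placeholder threshold."""
    return [name for name, limit in THRESHOLDS.items() if getattr(stream, name) > limit]


def poor_ratio_by_site(streams):
    """Fraction of streams per site with at least one metric over threshold."""
    totals, poor = {}, {}
    for stream in streams:
        totals[stream.site] = totals.get(stream.site, 0) + 1
        if exceeded(stream):
            poor[stream.site] = poor.get(stream.site, 0) + 1
    return {site: poor.get(site, 0) / count for site, count in totals.items()}
```

Fed with rows exported from a custom SQL query, `poor_ratio_by_site` gives a per-location view that helps answer "who else is impacted?", while `exceeded` explains why an individual call was flagged.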