2-sample t-tests, ANOVA


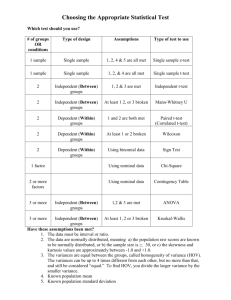

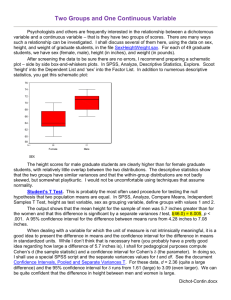

REVIEW OF T-TESTS
And then… an “F” for everyone

T-TESTS
• 1-sample t-test (univariate t-test)
  – Compares a sample mean with a population mean on the same variable
  – Assumes knowledge of the population mean (rare)
• 2-sample t-test (bivariate t-test)
  – Compares two sample means (very common)
  – Nominal (dummy) IV and interval-ratio (I-R) dependent variable
  – Tests the difference between means across categories of the IV
  – Example: Do males and females differ on # of hours watching TV?

THE T DISTRIBUTION
• Unlike Z, the t distribution changes with sample size (technically, df)
• As sample size increases, the t distribution becomes more and more “normal”
• At df = 120, t-critical values are almost exactly the same as z-critical values

T AS A “TEST STATISTIC”
• All test statistics indicate how different our finding is from what is expected under the null
  – Mean difference predicted under the null hypothesis? ZERO
  – t indicates how different our finding is from zero
• There is an exact probability associated with every value of a test statistic
  – One route is to find a “critical value” for the test statistic that is associated with the stated alpha (what t value is associated with .05 or .01?)
  – SPSS generates the exact probability associated with any value of a test statistic

T-SCORE IS “MEANINGFUL”
• Numerator (top half) of the equation = measure of difference
• Denominator = converts/standardizes the difference to “standard errors” rather than the original metric
  – Imagine mean differences in “yearly income” versus differences in “# cars owned in lifetime”
  – Very different metrics, so they cannot be compared directly (e.g., a difference of “2” would have a very different meaning)
• t = the number of standard errors that separates the means
  – One sample: x̄ versus µ
  – Two sample: x̄(males) versus x̄(females)

T-TESTING IN SPSS
• Analyze → Compare Means → Independent-Samples T Test
  – Must define the categories of the IV (the dummy variable)
  – How were the categories numerically coded?
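
The idea that t counts the number of standard errors separating two means can be sketched outside SPSS. The snippet below is a minimal illustration, not the SPSS procedure itself: the function name `two_sample_t` and the TV-hours data are hypothetical, and the standard error is computed without assuming equal variances.

```python
import math
import statistics


def two_sample_t(group_a, group_b):
    """Return (mean difference, standard error, t) for two independent samples.

    The standard error does not assume equal variances in the two groups.
    """
    mean_a = statistics.mean(group_a)
    mean_b = statistics.mean(group_b)
    # Sample variances (n - 1 in the denominator)
    var_a = statistics.variance(group_a)
    var_b = statistics.variance(group_b)
    # Standard error of the difference between the two means
    se = math.sqrt(var_a / len(group_a) + var_b / len(group_b))
    diff = mean_a - mean_b
    # t = how many standard errors separate the two means
    return diff, se, diff / se


# Hypothetical data: hours of TV watched per day (made up for illustration)
males = [2, 3, 4, 5, 6]
females = [1, 2, 3, 4, 5]

diff, se, t = two_sample_t(males, females)
print(f"difference = {diff}, standard error = {se:.2f}, t = {t:.2f}")
```

With these made-up numbers the means differ by 1 hour and the standard error is also 1, so the two means sit exactly one standard error apart (t = 1.00).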
• Output
  – Group Statistics = mean values
  – Levene's test: not really important; if it is significant, use the t value and sig value from the “equal variances not assumed” row
  – t = “t-obtained”; there is no need to find “t-critical,” because SPSS gives you “Sig.,” the exact probability of obtaining the t-obtained under the null

2-SAMPLE HYPOTHESIS TESTING IN SPSS
Independent Samples t Test output: testing the H0 that there is no difference in the number of prior felonies between a sample of offenders who went through “drug court” and a control group.

Group Statistics: Prior Felonies

| Group status | N | Mean | Std. Deviation | Std. Error Mean |
|---|---|---|---|---|
| control | 165 | 3.95 | 5.374 | .418 |
| drug court | 167 | 2.71 | 3.197 | .247 |

INTERPRETING SPSS OUTPUT

Independent Samples Test: Prior Felonies

| | Levene's Test F | Levene's Test Sig. | t | df | Sig. (2-tailed) | Mean Difference | Std. Error Difference | 95% CI Lower | 95% CI Upper |
|---|---|---|---|---|---|---|---|---|---|
| Equal variances assumed | 29.035 | .000 | 2.557 | 330 | .011 | 1.239 | .485 | .286 | 2.192 |
| Equal variances not assumed | | | 2.549 | 266.536 | .011 | 1.239 | .486 | .282 | 2.196 |

• Mean Difference = difference in mean # of prior felonies between those who went to drug court and the control group
• t and df = the t statistic, with its degrees of freedom
• “Sig. (2-tailed)” = the exact probability of obtaining this mean difference (and associated t value) under the null hypothesis

SIGNIFICANCE (“SIG”) VALUE & PROBABILITY
• The number under the “Sig.” column is the exact probability of obtaining that t value (or of finding that mean difference) if the null is true
  – When probability > alpha, we do NOT reject H0
  – When probability < alpha, we DO reject H0
• As test statistics (here, “t”) increase, they indicate larger differences between our obtained finding and what is expected under the null
• Therefore, as the test statistic increases, the probability associated with it decreases

SPSS AND 1-TAIL / 2-TAIL
• SPSS only reports “2-tailed” significance tests
• To obtain a 1-tailed test, simply divide the “Sig.” value in half (valid when the difference is in the predicted direction)
  – Sig. (2-tailed) = .10 → Sig. (1-tailed) = .05
  – Sig. (2-tailed) = .03 → Sig. (1-tailed) = .015

FACTORS IN THE PROBABILITY OF REJECTING H0 FOR T-TESTS
1. The size of the observed difference(s)
2. The alpha level
3. The use of one- or two-tailed tests
4. The size of the sample

SPSS EXAMPLE
• Data from one of our graduate students' survey of you deviants
• Go to www.d.umn.edu/~jmaahs, get the “ttest example” data, and open it in SPSS
• H1: Sex is related to GPA
• H2: Those who use Adderall are more likely to engage in other sorts of crime
• Use alpha = .01

ANALYSIS OF VARIANCE
• What happens if you have more than two means to compare?
• IV (grouping variable) = more than two categories
  – Examples: risk level (low, medium, high); race (white, black, Native American, other)
• DV = still I/R (mean)
• Results in an F-TEST

ANOVA = F-TEST
• The purpose is very similar to the t-test
• HOWEVER, it computes the test statistic “F” instead of “t”
• And it does this using different logic, because you cannot calculate a single distance between three or more means

ANOVA
• Why not use multiple t-tests?
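
One way to see the problem with running a separate t-test for every pair of means is to count the pairs and ask how likely at least one false rejection becomes. The sketch below makes a simplifying assumption that is not in the slides: it treats the pairwise tests as independent, which they are not exactly, so the numbers are a rough guide only. The function name `familywise_error` is hypothetical.

```python
from math import comb


def familywise_error(num_groups, alpha=0.05):
    """Return (number of pairwise tests, chance of at least one Type I error).

    Assumes, for illustration only, that the pairwise tests are independent.
    """
    pairs = comb(num_groups, 2)          # every pair of group means gets a t-test
    error = 1 - (1 - alpha) ** pairs     # 1 minus P(no false rejection in any test)
    return pairs, error


for k in (3, 4, 5):
    pairs, error = familywise_error(k)
    print(f"{k} groups: {pairs} t-tests, familywise error = {error:.3f}")
```

Under this assumption, four groups already require six t-tests, and the chance of at least one false rejection at alpha = .05 climbs to roughly .26.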
• Error compounds at every stage: the probability of making an error gets too large
• The F-test is therefore EXPLORATORY
• The independent variable can be any level of measurement
  – Technically true, but most useful if the categories are limited (e.g., 3-5)

HYPOTHESIS TESTING WITH ANOVA
• Different route to calculate the test statistic
• 2 key concepts for understanding ANOVA:
  – SSB = between-group variation (sum of squares)
  – SSW = within-group variation (sum of squares)
• ANOVA compares these 2 types of variance
  – The greater the SSB relative to the SSW, the more likely that the null hypothesis (of no difference among sample means) can be rejected

TERMINOLOGY CHECK
• “Sum of Squares” = sum of squared deviations from the mean = $\sum (X_i - \bar{X})^2$
• Variance = sum of squares divided by sample size = $\frac{\sum (X_i - \bar{X})^2}{N}$ = “Mean Square” (in the ANOVA table, each sum of squares is divided by its df)
• Standard deviation = the square root of the variance = $s$
• ALL INDICATE LEVEL OF “DISPERSION”

THE F RATIO
• Indicates the variance between the groups, relative to the variance within the groups

$F = \frac{\text{Mean square between}}{\text{Mean square within}}$

• Between-group variance tells us how different the groups are from each other
• Within-group variance tells us how different or alike the cases are within each group

ANOVA EXAMPLE
• Recidivism, measured as mean # of crimes committed in the year following release from custody
• 90 individuals randomly receive 1 of the following sentences:
  – Prison (mean = 3.4)
  – Split sentence: prison & probation (mean = 2.5)
  – Probation only (mean = 2.9)
• These groups have different sample means
• ANOVA tells you whether the differences are statistically significant, that is, bigger than they would be due to chance alone

[Figure: # OF NEW OFFENSES: DEMO OF BETWEEN & WITHIN GROUP VARIANCE. Axis from 2.0 to 4.0. GREEN: PROBATION (mean = 2.9); BLUE: SPLIT SENTENCE (mean = 2.5); RED: PRISON (mean = 3.4)]

[Figure: # OF NEW OFFENSES: WHAT WOULD LESS “WITHIN GROUP VARIATION” LOOK LIKE? Same axis and groups]

ANOVA EXAMPLE, CONTINUED
• Differences (variance) between groups are also called “explained variance” (explained by the sentence the different groups received)
• Differences within groups (how much individuals within the same group vary) are referred to as “unexplained variance”
  – Differences among individuals in the same group can't be explained by the different “treatment” (e.g., type of sentence)

F STATISTIC
• When there is more within-group variance than between-group variance, we are essentially saying that there is more unexplained than explained variance
• In this situation, we always fail to reject the null hypothesis
• This is the reason the F(critical) table (Healey Appendix D) has no values < 1

SPSS EXAMPLE
• Example: 1994 county-level data (N = 295)
• Sentencing outcomes (prison versus other [jail or noncustodial sanction]) for convicted felons
• Breakdown of counties by region:

| REGION | Frequency | Percent | Valid Percent | Cumulative Percent |
|---|---|---|---|---|
| MW | 67 | 22.7 | 22.7 | 22.7 |
| NE | 43 | 14.6 | 14.6 | 37.3 |
| S | 140 | 47.5 | 47.5 | 84.7 |
| W | 45 | 15.3 | 15.3 | 100.0 |
| Total | 295 | 100.0 | 100.0 | |

SPSS EXAMPLE
• Question: Is there a regional difference in the percentage of felons receiving a prison sentence? (0 = none; 100 = all)
• Null hypothesis (H0): There is no difference across regions in the mean percentage of felons receiving a prison sentence
• Mean percents by region:

Report: TOT_PRIS

| REGION | Mean | N | Std. Deviation |
|---|---|---|---|
| MW | 44.033 | 66 | 21.6080 |
| NE | 45.917 | 43 | 17.9080 |
| S | 58.236 | 140 | 27.0249 |
| W | 28.574 | 44 | 16.3751 |
| Total | 48.775 | 293 | 25.4541 |

SPSS EXAMPLE
• These results show that we can reject the null hypothesis that there is no regional difference among the 4 sample means
• The differences between the samples are large enough to reject H0
  – The F statistic tells you there is almost 20 times more between-group variance than within-group variance
  – The number under “Sig.” is the exact probability of obtaining this F by chance
• The “Mean Square” column is a.k.a. “VARIANCE”

ANOVA: TOT_PRIS

| | Sum of Squares | df | Mean Square | F | Sig. |
|---|---|---|---|---|---|
| Between Groups | 32323.544 | 3 | 10774.515 | 19.850 | .000 |
| Within Groups | 156866.3 | 289 | 542.790 | | |
| Total | 189189.8 | 292 | | | |

ANOVA: POST HOC TESTS
• The ANOVA test is exploratory
  – It ONLY tells you there are sig. differences between means, but not WHICH means
• Post hoc (“after the fact”)
  – Use when the F statistic is significant
  – Run in SPSS to determine which means (of the 3+) are significantly different

OUTPUT: POST HOC TEST
• This post hoc test shows that 5 of the 6 mean differences are statistically significant (at the alpha = .05 level)
  – Each pair of regions appears twice in the table (e.g., MW vs. NE and NE vs. MW); the original slide color-codes these duplicate comparisons
• The p value (the number in the “Sig.” column) tells us whether the difference between a given pair of means is statistically significant

Multiple Comparisons. Dependent Variable: TOT_PRIS. Scheffe.

| (I) REG_NUM | (J) REG_NUM | Mean Difference (I-J) | Std. Error | Sig. | 95% CI Lower Bound | 95% CI Upper Bound |
|---|---|---|---|---|---|---|
| MW | NE | -1.884 | 4.5659 | .982 | -14.723 | 10.956 |
| MW | S | -14.203* | 3.4787 | .001 | -23.985 | -4.421 |
| MW | W | 15.460* | 4.5343 | .010 | 2.709 | 28.210 |
| NE | MW | 1.884 | 4.5659 | .982 | -10.956 | 14.723 |
| NE | S | -12.319* | 4.0620 | .028 | -23.742 | -.897 |
| NE | W | 17.344* | 4.9959 | .008 | 3.295 | 31.392 |
| S | MW | 14.203* | 3.4787 | .001 | 4.421 | 23.985 |
| S | NE | 12.319* | 4.0620 | .028 | .897 | 23.742 |
| S | W | 29.663* | 4.0266 | .000 | 18.340 | 40.986 |
| W | MW | -15.460* | 4.5343 | .010 | -28.210 | -2.709 |
| W | NE | -17.344* | 4.9959 | .008 | -31.392 | -3.295 |
| W | S | -29.663* | 4.0266 | .000 | -40.986 | -18.340 |

*. The mean difference is significant at the .05 level.

ANOVA IN SPSS
STEPS TO GET THE CORRECT OUTPUT…
• ANALYZE → COMPARE MEANS → ONE-WAY ANOVA
• INSERT…
  – INDEPENDENT VARIABLE IN THE BOX LABELED “FACTOR:”
  – DEPENDENT VARIABLE IN THE BOX LABELED “DEPENDENT LIST:”
• CLICK ON “POST HOC” AND CHOOSE “LSD”
• CLICK ON “OPTIONS” AND CHOOSE “DESCRIPTIVE”
• YOU CAN IGNORE THE LAST TABLE (HEADED “Homogeneous Subsets”) THAT THIS PROCEDURE WILL GIVE YOU
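
The F in the ANOVA table for TOT_PRIS can be approximately rebuilt by hand from the regional means, Ns, and standard deviations in the Report table. The sketch below is an illustration of the SSB/SSW logic, not a replacement for the SPSS output: the function name `anova_from_summary` is hypothetical, and because the inputs are rounded summary values, the results match the SPSS table only to within rounding.

```python
# (region, N, mean, standard deviation) copied from the Report table for TOT_PRIS
groups = [
    ("MW", 66, 44.033, 21.6080),
    ("NE", 43, 45.917, 17.9080),
    ("S", 140, 58.236, 27.0249),
    ("W", 44, 28.574, 16.3751),
]


def anova_from_summary(groups):
    """Rebuild a one-way ANOVA table from per-group N, mean, and SD."""
    total_n = sum(n for _, n, _, _ in groups)
    # Grand mean = N-weighted average of the group means
    grand_mean = sum(n * mean for _, n, mean, _ in groups) / total_n
    # SSB: how far each group mean sits from the grand mean, weighted by group size
    ssb = sum(n * (mean - grand_mean) ** 2 for _, n, mean, _ in groups)
    # SSW: squared deviations inside each group, recovered from SD^2 * (n - 1)
    ssw = sum((n - 1) * sd ** 2 for _, n, _, sd in groups)
    df_between = len(groups) - 1
    df_within = total_n - len(groups)
    ms_between = ssb / df_between
    ms_within = ssw / df_within
    return {
        "SSB": ssb,
        "SSW": ssw,
        "df_between": df_between,
        "df_within": df_within,
        "MS_between": ms_between,
        "MS_within": ms_within,
        "F": ms_between / ms_within,
    }


result = anova_from_summary(groups)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

The recovered degrees of freedom are 3 and 289, and F comes out close to the 19.850 that SPSS reports, which shows that the F ratio is simply the between-group mean square divided by the within-group mean square.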