Background for non-software engineering professionals - QI


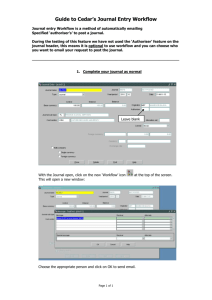

Program Update
December 13, 2012
With funding support provided by the National Institute of Standards and Technology
Andrew J. Buckler, MS, Principal Investigator, QI-Bench

Agenda
• Enterprise Architecture:
  – Requirements overview
  – Background for non-software engineering professionals
  – Enterprise architecture modeling
• Analysis library:
  – Current status and active extensions in progress
  – Drill-down on segmentation analysis activities
• Update on workflow engine for the Compute Services:
  – First demonstration of Kepler, using the segmentation analysis as example

Requirements Overview

Background for non-software engineering professionals
• MVC – Model, View, Controller
• Design Patterns
• Frameworks

Background for non-software engineering professionals: Model – View – Controller
• Model: Represents the state of what we are doing and how we think about it
• View: How we perceive and seem to manipulate the model
• Controller: Mediator between the Model and View
[Diagram: View, Controller, Model]

Background for non-software engineering professionals: Design Patterns

Background for non-software engineering professionals: Frameworks

Most familiar: Data Services

Also familiar: Compute Services

Less familiar to some, but foundational to the full vision: The Blackboard

Interfacing to existing ecosystem: Workstations

Internal components within QI-Bench to make it work: Controller and Model Layers

Internal components within QI-Bench to make it work: QI-Bench REST

Last but not least: QI-Bench Web GUI

(GO TO LATEST TOP LEVEL GUI CONCEPT DEMO)

Compute Services: Objects for the Analyze Library
[In Place]
• Capabilities to analyze literature, to extract:
  – Reported technical performance
  – Covariates commonly measured in clinical trials
• Capability to analyze data to:
  – Characterize image dataset quality
  – Characterize datasets of statistical outliers
• Capability to analyze technical performance of datasets to, e.g.:
  – Characterize effects due to scanner settings, sequence, geography, reader, scanner model, site, and patient status
  – Quantify sources of error and variability
  – Characterize intra- and inter-reader variability in the reading process
  – Evaluate image segmentation algorithms
[In Progress]
• Capability to analyze clinical performance, e.g.:
  – Response analysis in clinical trials
  – Analyze relative effectiveness of response criteria and/or read paradigms
  – Overcome metric's limitations and add complementarity
[In Queue]
  – Establish biomarker characteristics and/or value as a surrogate endpoint

Analyze Library: Coding View
• Core Analysis Modules:
  – AnalyzeBiasAndLinearity
  – PerformBlandAltmanAndCCC
  – ModelLinearMixedEffects
  – ComputeAggregateUncertainty
• Meta-analysis Extraction Modules:
  – CalculateReadingsFromMeanStdev (written in MATLAB to generate synthetic data)
  – CalculateReadingsFromStatistics (written in R to generate synthetic data; inputs are number of readings, mean, standard deviation, and inter- and intra-reader correlation coefficients)
  – CalculateReadingsAnalytically
• Utility Functions:
  – PlotBlandAltman
  – GapBarplot
  – Blscatterplotfn

Drill-down on segmentation analysis activities

| Metric | Purpose | Source | Language | Status |
| --- | --- | --- | --- | --- |
| STAPLE | To compute a probabilistic estimate of the true segmentation and a measure of the performance level by each segmentation | FDA | MATLAB | testing |
| STAPLE | Same as above | ITK | C++ | implemented |
| soft STAPLE | Extension of STAPLE to estimate performance from probabilistic segmentations | TBD | TBD | TBD |
| DICE | Metric evaluation of spatial overlap | ITK | C++ | implemented |
| Vote | Probability map | ITK | C++ | implemented |
| P-Map | Probability map | C. Meyer | Perl | TBD |
| Jaccard, Rand, DICE, etc. | Pixel-based comparisons | Versus (Peter Bajcsy) | JAVA | TBD |

More?

Update on Workflow Engine for the Compute Services
• allows users to create their own workflows and facilitates sharing and reusing of workflows.
• has a good interface for capture of the provenance of data.
• ability to work across different platforms (Linux, OSX, and Windows).
• easy access to a geographically distributed set of data repositories, computing resources, and workflow libraries.
• robust graphical interface.
• can operate on data stored in a variety of formats, locally and over the internet (APIs, RESTful web interfaces, SOAP, etc.).
• directly interfaces to R, MATLAB, ImageJ (or other viewers).
• ability to create new components or wrap existing components from other programs (e.g., C programs) for use within the workflow.
• provides extensive documentation.
• grid-based approaches to distributed computation.

Taverna and Kepler…
Two powerful suites for workflow management. However, Kepler improves on Taverna in these areas:
• grid-based approaches to distributed computation.
• directly interfaces to MATLAB, ImageJ (or other viewers).
• ability to wrap existing components from other programs (e.g., C programs) for use within the workflow.
• provides extensive documentation.

Value proposition of QI-Bench
• Efficiently collect and exploit evidence establishing standards for optimized quantitative imaging:
  – Users want confidence in the read-outs
  – Pharma wants to use them as endpoints
  – Device/SW companies want to market products that produce them without huge costs
  – Public wants to trust the decisions that they contribute to
• By providing a verification framework to develop precompetitive specifications and support test harnesses to curate and utilize reference data
• Doing so as an accessible and open resource facilitates collaboration among diverse stakeholders

Summary: QI-Bench Contributions
• We make it practical to increase the magnitude of data for increased statistical significance.
• We provide practical means to grapple with massive data sets.
• We address the problem of efficient use of resources to assess limits of generalizability.
• We make formal specification accessible to diverse groups of experts who are not skilled or interested in knowledge engineering.
• We map both medical and technical domain expertise into representations well suited to emerging capabilities of the semantic web.
• We enable a mechanism to assess compliance with standards or requirements within specific contexts for use.
• We take a “toolbox” approach to statistical analysis.
• We provide the capability in a manner which is accessible to varying levels of collaborative models, from individual companies or institutions to larger consortia or public-private partnerships to fully open public access.

QI-Bench Structure / Acknowledgements
• Prime: BBMSC (Andrew Buckler, Gary Wernsing, Mike Sperling, Matt Ouellette, Kjell Johnson, Jovanna Danagoulian)
• Co-Investigators
  – Kitware (Rick Avila, Patrick Reynolds, Julien Jomier, Mike Grauer)
  – Stanford (David Paik)
• Financial support as well as technical content: NIST (Mary Brady, Alden Dima, John Lu)
• Collaborators / Colleagues / Idea Contributors
  – Georgetown (Baris Suzek)
  – FDA (Nick Petrick, Marios Gavrielides)
  – UMD (Eliot Siegel, Joe Chen, Ganesh Saiprasad, Yelena Yesha)
  – Northwestern (Pat Mongkolwat)
  – UCLA (Grace Kim)
  – VUmc (Otto Hoekstra)
• Industry
  – Pharma: Novartis (Stefan Baumann), Merck (Richard Baumgartner)
  – Device/Software: Definiens, Median, Intio, GE, Siemens, Mevis, Claron Technologies, …
• Coordinating Programs
  – RSNA QIBA (e.g., Dan Sullivan, Binsheng Zhao)
  – Under consideration: CTMM TraIT (Andre Dekker, Jeroen Belien)
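
Supplementary sketch: Model – View – Controller
For readers new to the pattern, the three roles named on the Model – View – Controller background slide can be illustrated with a very small Python sketch. Every class and method name here (ReadingsModel, TextView, ReadingsController, submit) is hypothetical and chosen only for illustration; none of it is taken from the QI-Bench code base.

```python
class ReadingsModel:
    """Model: holds the state (here, a list of numeric readings)."""

    def __init__(self):
        self._readings = []

    def add_reading(self, value):
        self._readings.append(float(value))

    def readings(self):
        # Return a copy so callers cannot mutate the model's state directly.
        return list(self._readings)


class TextView:
    """View: turns model state into something a person can read."""

    def render(self, readings):
        if not readings:
            return "no readings"
        return "readings: " + ", ".join(f"{r:.1f}" for r in readings)


class ReadingsController:
    """Controller: mediates between user input, the model, and the view."""

    def __init__(self, model, view):
        self.model = model
        self.view = view

    def submit(self, raw_text):
        # Parse the user's input, update the model, then ask the view to redraw.
        self.model.add_reading(raw_text.strip())
        return self.view.render(self.model.readings())


controller = ReadingsController(ReadingsModel(), TextView())
first = controller.submit(" 12.5 ")   # "readings: 12.5"
second = controller.submit("7")       # "readings: 12.5, 7.0"
```

The point of the split is that the view could be swapped (for example, a web page instead of text) without touching the model, because only the controller knows about both.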
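
Supplementary sketch: spatial overlap metrics
The segmentation drill-down lists DICE and Jaccard as pixel-based overlap comparisons. As a rough illustration of what these metrics compute, the sketch below evaluates both on two flattened binary masks using the standard textbook definitions. It is not the ITK or Versus implementation referenced in the table, and the function names and example masks are assumptions made for this example.

```python
def _check_same_length(mask_a, mask_b):
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must have the same number of pixels")


def dice_coefficient(mask_a, mask_b):
    """Dice = 2 * |A and B| / (|A| + |B|) for flat binary masks."""
    _check_same_length(mask_a, mask_b)
    intersection = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    total = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    if total == 0:
        # Both masks are empty; treat them as perfectly agreeing.
        return 1.0
    return 2.0 * intersection / total


def jaccard_index(mask_a, mask_b):
    """Jaccard = |A and B| / |A or B| for flat binary masks."""
    _check_same_length(mask_a, mask_b)
    intersection = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    union = sum(1 for a, b in zip(mask_a, mask_b) if a or b)
    if union == 0:
        return 1.0
    return intersection / union


# Hypothetical six-pixel masks: 1 marks a pixel inside the segmentation.
reference = [0, 1, 1, 1, 0, 0]
candidate = [0, 0, 1, 1, 1, 0]

dice = dice_coefficient(reference, candidate)    # 2*2 / (3+3) = 0.666...
jaccard = jaccard_index(reference, candidate)    # 2 / 4 = 0.5
```

The two values are related by Dice = 2J / (1 + J), so they rank segmentations identically; reporting both is mainly a matter of convention.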
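
Supplementary sketch: Bland-Altman limits and CCC
The Analyze Library lists a PerformBlandAltmanAndCCC module. The deck does not show its internals, so the following is only a minimal sketch of the two standard statistics that name refers to: Bland-Altman bias with 95% limits of agreement, and Lin's concordance correlation coefficient (CCC). The function names, the 1.96 multiplier default, and the example data are assumptions for illustration.

```python
import statistics


def bland_altman_limits(x, y, z=1.96):
    """Return (bias, lower limit, upper limit) for paired measurements."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long series with at least 2 pairs")
    diffs = [a - b for a, b in zip(x, y)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation of differences
    return bias, bias - z * sd, bias + z * sd


def concordance_correlation(x, y):
    """Lin's CCC: 2*cov / (var_x + var_y + (mean_x - mean_y)^2)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long series with at least 2 pairs")
    n = len(x)
    mean_x = statistics.mean(x)
    mean_y = statistics.mean(y)
    # Lin's definition uses 1/n (population) moments.
    var_x = sum((a - mean_x) ** 2 for a in x) / n
    var_y = sum((b - mean_y) ** 2 for b in y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    denominator = var_x + var_y + (mean_x - mean_y) ** 2
    if denominator == 0:
        raise ValueError("CCC is undefined for identical constant series")
    return 2 * cov / denominator


# Hypothetical paired readings: method B reads exactly 1 unit higher than A.
method_a = [1.0, 2.0, 3.0, 4.0, 5.0]
method_b = [2.0, 3.0, 4.0, 5.0, 6.0]

bias, lower, upper = bland_altman_limits(method_a, method_b)
ccc = concordance_correlation(method_a, method_b)
```

In this example the two methods are perfectly correlated, yet the CCC comes out at 0.8 rather than 1 because of the constant offset of one unit. That sensitivity to systematic bias is why CCC is typically reported alongside Bland-Altman analysis rather than plain correlation.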