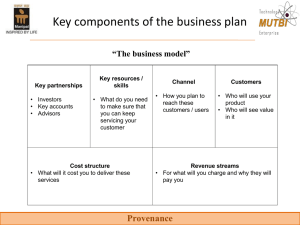

Slide - ProvenanceWeek

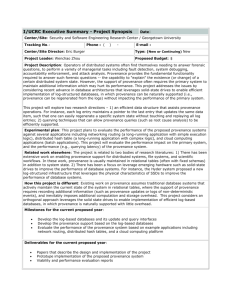

Approximated Provenance for Complex Applications
Susan B. Davidson, University of Pennsylvania
Eleanor Ainy, Daniel Deutch, Tova Milo, Tel Aviv University

Crowd Sourcing
The engagement of crowds of Web users for data procurement and knowledge creation.

Why now?
We are all connected, all the time!

Complexity?
• Many of the initial applications were quite simple
  – Specify a Human Intelligence Task (HIT) using e.g. Mechanical Turk, collect responses, aggregate to form the result.
• Newer ideas are multi-phase and complex, e.g. mining frequent fact sets from the crowd (OASSIS)
  – Model as workflows with global state

Outline
• “State-of-the-art” in crowd data provenance
• New challenges
• A proposal for modeling crowd data provenance

Crowd data provenance?
• TripAdvisor: aggregates reviews and presents average ratings
  – Individual reviews are part of the provenance
• Wikipedia: keeps extensive information about how pages are edited
  – ID of the user who generated the page, as well as changes to the page (when, who, summary)
  – Provides several views of this information, e.g. by page or by editor
• Mainly used for presentation and explanation

Challenges for crowd data provenance
• Complexity of processes and number of user inputs involved
  – Provenance can be very large, which makes it difficult to view and understand
• Need for
  – Summarization
  – Multidimensional views
  – Provenance mining
  – Compact representation for maintenance and cleaning

Summarization
• Large provenance size creates a need for abstraction
  – E.g., in heavily edited Wikipedia pages:
    • “x1, x2, x3 are formatting changes; y1, y2, y3, y4 add content; z1, z2 represent divergent viewpoints”
    • “u1, u2, u3 represent edits by robots; v1, v2 represent edits by Wikipedia administrators”
  – E.g., in a movie-rating application, to summarize the provenance of the average rating for “MatchPoint”:
    • “Audience crowd members gave higher ratings (8-10) whereas critics gave lower ratings (3-5).”

Multidimensional Views
• “Perspective” through which provenance can be viewed or mined
  – E.g., in TripAdvisor, if there is an “outlier” review, it would be useful to see other reviews by that person to “calibrate” it.
  – A “question” perspective could show which questions are bad or unclear

Maintenance and Cleaning
• May need update propagation to remove certain users, questions and/or answers
  – E.g. spammers or bad questions
• Mining of provenance may lag behind the aggregate calculation
  – E.g., detecting a spammer may only be possible once they have answered enough questions, or once enough answers have been obtained from other users.
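
The "perspective" and cleaning ideas above can be illustrated with a minimal sketch. It assumes provenance is kept as flat (reviewer, item, rating) records; the record layout, the reviewer and item names, and the helper functions `view_by` and `average_rating` are all hypothetical and not taken from the slides.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical provenance records: (reviewer, item, rating).
REVIEWS = [
    ("u1", "hotel_a", 5),
    ("u2", "hotel_a", 4),
    ("u3", "hotel_a", 1),  # an "outlier" review for hotel_a
    ("u3", "hotel_b", 1),
    ("u3", "hotel_c", 2),
    ("u1", "hotel_b", 4),
]


def view_by(records, key_index):
    """Group the same records along one dimension (0 = reviewer, 1 = item)."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key_index]].append(record)
    return dict(groups)


def average_rating(records, item, excluded=frozenset()):
    """Average rating for an item, ignoring reviewers listed in `excluded`."""
    ratings = [r for (u, i, r) in records if i == item and u not in excluded]
    return mean(ratings) if ratings else None


# Reviewer perspective: all reviews by u3, to "calibrate" the outlier.
by_reviewer = view_by(REVIEWS, 0)
print(by_reviewer["u3"])

# Item perspective: every review that contributed to hotel_a's average.
by_item = view_by(REVIEWS, 1)
print(by_item["hotel_a"])

# Cleaning: recompute the aggregate once u3 is judged unreliable.
print(average_rating(REVIEWS, "hotel_a"))
print(average_rating(REVIEWS, "hotel_a", excluded={"u3"}))
```

Recomputing from raw records, as done here, is the naive approach; the provenance expressions proposed later in the talk aim to make this kind of update possible without going back to the inputs.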

Crowd Sourcing Workflow
[Figure: crowd-sourcing workflow; labels: Movie reviews, Aggregator, Platform]

Provenance expression
[Figure: provenance expression]

Propagating provenance annotations through joins

R

| A | B | C | annotation |
|---|---|---|---|
| … | … | … | … |
| a | b | c | p |
| … | … | … | … |

S

| D | B | E | annotation |
|---|---|---|---|
| … | … | … | … |
| d | b | e | r |
| … | … | … | … |

R ⋈ S (JOIN on B)

| A | B | C | D | E | annotation |
|---|---|---|---|---|---|
| … | … | … | … | … | … |
| a | b | c | d | e | p*r |
| … | … | … | … | … | … |

The annotation p*r means joint use of data annotated by p and data annotated by r.
[Green, Karvounarakis, Tannen, Provenance Semirings. PODS 2007]

Propagating provenance annotations through unions and projections

R

| A | B | C | annotation |
|---|---|---|---|
| … | … | … | … |
| a | b | c1 | p |
| a | b | c2 | r |
| a | b | c3 | s |
| … | … | … | … |

πAB R (PROJECT)

| A | B | annotation |
|---|---|---|
| … | … | … |
| a | b | p+r+s |
| … | … | … |

+ means alternative use of data, which arises in both PROJECT and UNION.
[Green, Karvounarakis, Tannen, Provenance Semirings. PODS 2007]

Annotated Aggregate Expressions

Q = select Dept, sum(Sal) from R group by Dept

R

| Eid | Dept | Sal | annotation |
|---|---|---|---|
| 1 | d1 | 20 | p1 |
| 2 | d1 | 10 | p2 |
| 3 | d1 | 15 | p3 |

The sum of salaries for d1 can be represented by the expression (20 ⊗ p1 + 10 ⊗ p2 + 15 ⊗ p3).
This provenance-aware value “commutes” with deletion.
[Amsterdamer, Deutch, Tannen, Provenance for Aggregate Queries. PODS 2011]

Provenance expression: Benefits
• Can understand how movie ratings were computed.
• Can be used for data maintenance and cleaning
  – E.g. if U2 is discovered to be a spammer, “map” its provenance annotation to 0

Summarizing provenance
• Map annotations to a corresponding “summary”
  – h: Ann → Ann’, where |Ann’| << |Ann|
• E.g. in our example, let
  – h(Ui) = h(Si) = 1, h(Ai) = A, h(Ci) = C
  – Reducing the expression to [Figure: reduced expression]
  – Which simplifies to [Figure: simplified expression]

Constructing mappings?
• How do we define and find “good” mappings?
  – Provenance size
  – Semantic constraints (e.g. two annotations can only be mapped to the same annotation if they come from the same input table)
  – Distance between the original provenance expression and the mapped expression (e.g. grouping all young French people and giving them an average rating for some movie)

Conclusions
• Provenance is needed for crowd-sourcing applications to help understand the results and reason about their quality.
• Techniques from database/workflow provenance can be used, but there are special challenges and “opportunities”
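
The semiring annotations, the annotated aggregate, the "map to 0" cleaning step, and the summarization mapping h described in the proposal can be sketched together in a few lines. This is an illustrative toy, not the authors' implementation: the `Semiring` class, the monomial-tuple encoding of polynomials, and the summary labels "A" and "B" are assumptions made for the example. The annotation names p, r, s and p1 to p3, and the salary values, come from the slides.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Semiring:
    """A commutative semiring given by its two constants and two operations."""
    zero: object
    one: object
    plus: Callable
    times: Callable


BOOLEAN = Semiring(False, True, lambda a, b: a or b, lambda a, b: a and b)
COUNTING = Semiring(0, 1, lambda a, b: a + b, lambda a, b: a * b)

# Assumed encoding: a provenance polynomial is a list of monomials,
# and each monomial is a tuple of annotation names multiplied together.
JOIN_PROV = [("p", "r")]                 # p * r  (joint use)
PROJECT_PROV = [("p",), ("r",), ("s",)]  # p + r + s  (alternative use)


def evaluate(polynomial, valuation, semiring):
    """Evaluate a provenance polynomial under a valuation of its annotations."""
    total = semiring.zero
    for monomial in polynomial:
        term = semiring.one
        for annotation in monomial:
            term = semiring.times(term, valuation[annotation])
        total = semiring.plus(total, term)
    return total


# Aggregate provenance for sum(Sal) in d1: 20 (x) p1 + 10 (x) p2 + 15 (x) p3,
# encoded as (value, annotation) pairs.
SALARY_D1 = [(20, "p1"), (10, "p2"), (15, "p3")]


def evaluate_sum(terms, valuation):
    """Evaluate a SUM aggregate; mapping an annotation to 0 deletes its tuple."""
    return sum(value * valuation[annotation] for value, annotation in terms)


def summarize(terms, h):
    """Apply a summarization mapping h and merge terms with equal images."""
    merged = {}
    for value, annotation in terms:
        merged[h[annotation]] = merged.get(h[annotation], 0) + value
    return [(value, annotation) for annotation, value in merged.items()]


# The joined tuple disappears when either input tuple is absent.
print(evaluate(JOIN_PROV, {"p": True, "r": False}, BOOLEAN))
# Number of derivations of the projected tuple.
print(evaluate(PROJECT_PROV, {"p": 1, "r": 1, "s": 1}, COUNTING))
# Treat p2 as coming from a spammer: map it to 0 and re-evaluate.
print(evaluate_sum(SALARY_D1, {"p1": 1, "p2": 0, "p3": 1}))
# Hypothetical summary: p1 and p2 collapse into one group "A".
print(summarize(SALARY_D1, {"p1": "A", "p2": "A", "p3": "B"}))
```

The point of the encoding is that deletion and summarization are both just substitutions on annotations, so the aggregate can be updated or compressed without re-running the query on the original inputs.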