Computer Architecture


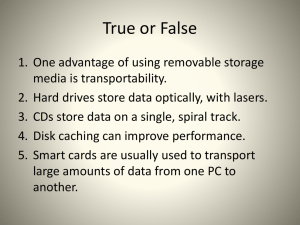

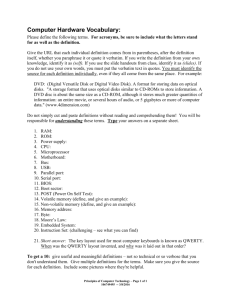

EEF011 Computer Architecture (計算機結構)
Chapter 7: Storage Systems
吳俊興, Department of Computer Science and Information Engineering, National University of Kaohsiung
December 2004

Chapter 7. Storage Systems
7.1 Introduction
7.2 Types of Storage Devices
7.3 Busses - Connecting IO Devices to CPU/Memory
7.4 Reliability, Availability and Dependability
7.5 RAID: Redundant Arrays of Inexpensive Disks
7.6 Errors and Failures in Real Systems
7.7 I/O Performance Measures
7.8 A Little Queuing Theory
7.9 Benchmarks of Storage Performance and Availability

7.1 Introduction
Motivation: Who cares about I/O?
• The gap between CPU and I/O system performance is getting worse!
  – CPU performance: improves 55% per year
  – I/O system performance is limited by mechanical delays (disk I/O): improves less than 10% per year (I/Os per second)
• Amdahl's Law: system speed-up is limited by the slowest part! Assume I/O takes 10% of overall system time:
  – 10x CPU → 5.26x performance, since 1 / (0.1 + 0.9/10) ≈ 5.26 (lose about 50% of the CPU gain)
  – 100x CPU → 9.17x performance, since 1 / (0.1 + 0.9/100) ≈ 9.17 (lose about 90% of the CPU gain)
• I/O bottleneck:
  – Diminishing fraction of time in CPU
  – Diminishing value of faster CPUs
• Magnetic disks (including hard disks and floppy disks) have dominated nonvolatile (permanent) storage since 1965

Who cares about CPUs?
• Why is it still important to keep CPUs busy vs. I/O devices ("CPU time"), as CPUs are not costly?
  – Moore's Law leads to both large, fast CPUs and very small, cheap CPUs
  – 2001 hypothesis: a 600 MHz PC is fast enough for office tools?
  – PC slowdown, since PCs are fast enough except for games and new apps?
• People care more about storing information and communicating information than calculating
  – "Information Technology" vs. "Computer Science"
  – 1960s to 1980s: Computing Revolution → Execution Time
  – 1990s and 2000s: Information Age → Dependability

I/O Systems
[Figure: I/O systems]

Storage Technology Drivers
• Driven by the prevailing computing paradigm
  – 1950s: migration from batch to on-line processing
  – 1990s: migration to ubiquitous computing
    • computers in phones, books, cars, video cameras, …
    • nationwide fiber optical network with wireless tails
• Effects on storage industry
  – Embedded storage
    • smaller, cheaper, more reliable, lower power
  – Data utilities
    • high capacity, hierarchically managed storage

7.2 Types of Storage Devices
• Purpose
  – Long-term, nonvolatile storage
  – Large, inexpensive, slow level in the storage hierarchy
• Types
  – Magnetic storage: disk, floppy, tape
  – Optical storage: compact discs (CD), digital video/versatile discs (DVD)
  – Electrical storage: flash memory
• Bus Interface
  – IDE: ATA, S-ATA
  – SCSI: Small Computer System Interface
  – USB: Universal Serial Bus
  – 1394, Fibre Channel, etc.

Magnetic Disks
[Figure labels: Platter, Track, Sector, Cylinder, Head, Arm, Actuator; Electronics (controller) with Read Cache and Write Cache; Control and Data paths]
Disk latency = Controller overhead + Seek time + Rotation time + Transfer time
• Characteristics
  – Seek time (~8 ms avg)
  – Positional latency + rotational latency
• Transfer rate
  – 10-40 MByte/sec, in blocks
• Capacity
  – Approaching 400 Gigabytes
  – Quadruples every 2 years
• Example: 7200 RPM = 120 RPS => about 8 ms per revolution
  – Average rotational latency = about 4 ms (half a revolution)
  – 128 sectors per track => about 0.0625 ms per sector (8 ms / 128)
  – 1 KB per sector => about 16 MB/s
• Seek time depends on the number of tracks the arm must move and the seek speed of the disk
• Rotation time depends on how fast the disk rotates and how far the sector is from the head
• Transfer time depends on the data rate (bandwidth) of the disk (bit density) and the size of the request

Data Rate: Inner vs. Outer Tracks
• To keep things simple, disks originally kept the same number of sectors per track
  – Since the outer track is longer, it has lower bits per inch (BPI)
• Competition led designers to keep BPI the same for all tracks ("constant bit density")
  – More capacity per disk
  – More sectors per track toward the edge
  – Since the disk spins at constant speed, outer tracks have a faster data rate
• Bandwidth: outer track 1.7X inner track!
  – The inner track has the highest density and the outer track the lowest, so density is not really constant
  – 2.1X length of track outer / inner, 1.7X bits outer / inner

Photo of Disk Head, Arm, Actuator
[Photo labels: Spindle, Arm, Head, Actuator, Platters (12)]
State of the Art: Barracuda 180
• 181.6 GB, 3.5 inch disk
• 12 platters, 24 surfaces
• 24,247 cylinders
• 7,200 RPM (4.2 ms avg. latency)
• 7.4/8.2 ms avg. seek (r/w)
• 64 to 35 MB/s (internal)
• 0.1 ms controller time
• 10.3 watts (idle)

1 inch disk drive!
• 2000 IBM MicroDrive:
  – 1.7" x 1.4" x 0.2"
  – 1 GB, 3600 RPM, 5 MB/s, 15 ms seek
  – Digital camera, PalmPC?
• 2004 Apple iPod Mini MP3 Player
  – 4 GB, 249 USD
  – 3.6" x 2.0" x 0.5" case
  – iPod: 20-40 GB, 299 USD / 1.8" hard drive
• 2006 MicroDrive?
  – 9 GB, 50 MB/s!

Figure 7.2 Characteristics of three magnetic disks of 2000

Magnetic Disk History
[Figure: data density (Mbit/inch²) and capacity of unit shown (Megabytes) over time. Source: New York Times, 2/23/98, page C3, "Makers of disk drives crowd even more data into even smaller spaces"]

Historical Perspective
• 1956 IBM Ramac to early 1970s Winchester
  – Developed for mainframe computers, proprietary interfaces
  – Steady shrink in form factor: 27 in. to 14 in.
• Form factor and capacity drive the market more than performance
• 1970s: Mainframes, 14 inch diameter disks
• 1980s: Minicomputers, servers, 8" and 5 1/4" diameter disks
• Late 1980s/early 1990s: PCs, workstations
  – Mass market disk drives become a reality
    • industry standards: SCSI, IDE
  – Pizzabox PCs: 3.5 inch diameter disks
  – Laptops, notebooks: 2.5 inch disks
  – Palmtops didn't use disks, so 1.8 inch diameter disks didn't make it
• 2000s:
  – 1 inch for cameras, camcorders, cell phones?

The Future of Magnetic Disks
Disk Performance Model / Trends
• Capacity: +100%/year (2X / 1.0 yrs)
• Transfer rate (BW): +40%/year (2X / 2.0 yrs)
• Rotation + seek time: –8%/year (1/2 in 10 yrs)
• MB/$: >100%/year (2X / 1.0 yrs), from fewer chips + areal density

Areal Density
• Bits recorded along a track
  – Metric is Bits Per Inch (BPI)
• Number of tracks per surface
  – Metric is Tracks Per Inch (TPI)
• Disk designs brag about bit density per unit area
  – Metric is Bits Per Square Inch, called Areal Density
• Areal Density = BPI x TPI
  – through about 1988: 29% per year (double every three years)
  – from then until about 1996: 60% per year (quadruple every three years)
  – from 1997 to 2001: 100% per year (double every year)

  Year    Areal Density
  1973    1.7
  1979    7.7
  1989    63
  1997    3090
  2000    17100

Figure 7.3 Price per PC disk by capacity (MBytes) between 1983 and 2001
• 4000 times in 18 years
• Price declines become steeper, from 30% per year to 100% per year

Figure 7.4 Price per GByte of PC disk over time, dropping a factor of 10,000 between 1983 and 2001
The center point is the median price. The spread from min to max becomes large due to the increasing difference in price between ATA/IDE and SCSI disks.

Figure 7.5 Cost vs. access time for SRAM, DRAM, and magnetic disk
• DRAM latency is about 100,000 times less than disk, although bandwidth is only about 50 times larger
• Two-order-of-magnitude gap in cost and access times between semiconductor memory and rotating magnetic disks

Magnetic Tapes
Tape vs. Disk
• Magnetic tapes use technology similar to disks, and hence historically have followed the same density improvements
• Disk head flies above the surface; tape head lies on the surface
• Disk is fixed; tape is removable
• Inherent cost-performance is based on geometries: fixed rotating platters with gaps (random access, limited area, 1 media / reader) vs. removable long strips wound on a spool (sequential access, "unlimited" length, multiple tapes / reader)
• Tape has usually been 10-100x cheaper than disk per GB (good for backup), but in 2001 the prices of a 40 GB IDE disk and a 40 GB tape were almost the same
• Helical scan (developed for VCR, camcorder, etc.)
  – Spins the head at an angle to the tape to improve density by a factor of 20 to 50

Current Drawbacks to Tape
• Wear out
  – Tape wear out: 100s of passes for helical, 1000s for longitudinal
  – Head wear out: 2000 hours for helical
  – Both must be accounted for in the economic / reliability model
• Compatibility problem
  – Readers must be compatible with multiple generations of media
  – Disks are a closed system
• Longer times
  – Long rewind, eject, load, spin-up times
  – Long recovery time: it takes longer to restore data from tape than from disk
• Designed for archival use, and the market is small
  – PCs are a small market for tapes
  – The tape industry cannot afford a separate large research and development effort
• Get rid of tapes?
  – Replaced by networks and remote disks to replicate the data geographically

Automated Cartridge System: StorageTek Powderhorn 9310
[Photo: 7.7 feet by 10.7 feet; 8200 pounds, 1.1 kilowatts]
• 6000 x 50 GB 9830 tapes = 300 TBytes in 2000 (uncompressed)
  – Library of Congress: all information in the world; in 1992, ASCII of all books = 30 TB
  – Exchange up to 450 tapes per hour (8 secs/tape)
• 1.7 to 7.7 MByte/sec per reader, up to 10 readers

Flash Memory
• Flash memory: electronic solid state storage
  – Written by inducing the tunneling of charge from transistor gain to a floating gate
  – The floating gate acts as a potential well that stores the charge, and the charge cannot move from there without applying an external force
  – Restricts writes to multi-kilobyte blocks, increasing memory capacity per chip by reducing the area dedicated to control
  – Two basic types in 2001:
    » NOR: 1-2 s to erase 64 KB to 128 KB blocks, 10 µs/B to write, 0.1M write cycles
    » NAND: 5-6 ms to erase 4 KB to 8 KB blocks, 1.5 µs/B to write, 1M write cycles
• Applications
  – Nonvolatile storage: used for embedded devices and wireless portable devices (e.g., mobile phones, palmtops, MP3 players, digital cameras)
  – Rewritable ROM: allows software to be upgraded without having to replace chips
• Comparisons
  – Offers lower power consumption (<50 mW) than disks, and can be sold in small sizes
  – Offers read access times comparable to DRAMs
    » 150 ns for 128M and 65 ns for 16M in 2001
  – Writing is much slower and more complicated
    » Shares characteristics with older EPROM and EEPROM: must be erased first, then written

7.3 Busses - Connecting IO Devices to CPU/Memory
• Interconnect = glue that interfaces computer system components
• High speed hardware interfaces + logical protocols
• Networks, channels, backplanes

               Network            Channel           Backplane
  Connects     Machines           Devices           Chips
  Distance     >1000 m            10 - 100 m        0.1 m
  Bandwidth    10 - 1000 Mb/s     40 - 1000 Mb/s    320 - 2000+ Mb/s
  Latency      high (1 ms)        medium            low (nanosecs.)
  Reliability  low                medium            high
               Extensive CRC      Byte Parity       Byte Parity

  Network end of the spectrum: message-based, narrow pathways, distributed arbitration
  Backplane end of the spectrum: memory-mapped, wide pathways, centralized arbitration

A Computer System with One Bus: Backplane Bus
[Figure: Processor, Memory, and I/O Devices attached to a single Backplane Bus]
• A single bus (the backplane bus) is used for:
  – Processor to memory communication
  – Communication between I/O devices and memory
• Advantages: simple and low cost
• Disadvantages: slow, and the bus can become a major bottleneck
• Example: IBM PC-AT

A Two-Bus System
[Figure labels: Processor, Memory, Processor Memory Bus, three Bus Adaptors, three I/O Buses]
• I/O buses tap into the processor-memory bus via bus adaptors:
  – Processor-memory bus: mainly for processor-memory traffic
  – I/O buses: provide expansion slots for I/O devices
• Apple Macintosh-II
  – NuBus: processor, memory, and a few selected I/O devices
  – SCSI bus: the rest of the I/O devices

A Three-Bus System
[Figure labels: Processor, Memory, Processor Memory Bus, Bus Adaptor, Backplane Bus, two Bus Adaptors, two I/O Buses]
• A small number of backplane buses tap into the processor-memory bus
  – Processor-memory bus is only used for processor-memory traffic
  – I/O buses are connected to the backplane bus
• Advantage: loading on the processor bus is greatly reduced

Separate Bus Architecture: North/South Bridges
[Figure labels: Processor, Director, "backside cache", Processor Memory Bus, Memory, Backplane Bus, two Bus Adaptors, two I/O Buses]
• Separate sets of pins for different functions
  – Memory bus
  – Caches
  – Graphics bus (for fast frame buffer)
  – I/O busses are connected to the backplane bus
• Advantages:
  – Busses can run at different speeds
  – Much less overall loading!

Example: Intel Chipset
• 82925XE MCH (Memory Controller Hub)
  – System bus: 1066, 800 MHz
  – FSB/Mem: 1066/800, DDR2-533/400; 2 DIMMs/channel * 2; 4 GB
  – Interface: PCI Express * 16
• ICH6R ICH (I/O Controller Hub)
  – PCI support: 4 PCI Express
  – Storage interface: ATA 100, SATA 150/4, UDMA
  – USB: USB 2.0 * 8 ports
  – Audio: AC'97/20-bit audio
  – I/O: SMBus 2.0 / GPIO

Examples: VIA Chipsets
• PT880 + VT8837 for Intel P4
• K8T890 + VT8251 for AMD Athlon 64

Bus Design
[Figure: Master and Slave connected by Control Lines, Address Lines, and Data Lines]
• Synchronous bus: includes a clock in the control lines
  – A fixed protocol for communication that is relative to the clock
  – Advantage: involves very little logic and can run very fast
  – Disadvantages:
    • Every device on the bus must run at the same clock rate
    • To avoid clock skew, busses cannot be long if they are fast
• Asynchronous bus: not clocked
  – It can accommodate a wide range of devices
  – It can be lengthened without worrying about clock skew
  – It requires a handshaking protocol
• Bus master: has the ability to control the bus and initiates transactions
• Bus slave: module activated by the transaction
• Bus communication protocol: specification of the sequence of events and timing requirements in transferring information

Bus Design Options
[Figure: bus design options]

Summary of Parallel and Serial I/O Buses
[Figure: summary of parallel and serial I/O buses]

Interfacing I/O to CPU
The interface consists of setting up the device with what operation is to be performed:
• Read or Write
• Size of transfer
• Location on device
• Location in memory
Then the device is triggered to start the operation. When the operation completes, the device will interrupt.
Method 1: I/O instructions
I/O instructions (in, out) are distinct from memory access instructions:
  LDD R0,D,P   <-- Load R0 with the contents found at device D, port P.
Method 2: memory-mapped I/O
Device registers are mapped to look like regular memory:
  LD R0,Mem1   <-- Load R0 with the contents found at device D, port P.
This works because an initialization has correlated the device characteristics with location Mem1.
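
To make Method 2 concrete, here is a minimal Python sketch of memory-mapped I/O. It is a toy simulation, not real hardware access: the `Device` and `Bus` classes, the address `MEM1 = 0xFF00`, the port number, and the register value are all hypothetical and chosen only for illustration. The point it shows is that an ordinary load from `MEM1` is routed to the device register because an initialization step mapped the device at that address.

```python
class Device:
    """Hypothetical device with one readable data register per port."""

    def __init__(self, ports):
        self.ports = dict(ports)  # port number -> register contents

    def read(self, port):
        return self.ports[port]


class Bus:
    """Toy address space: RAM plus device registers mapped at chosen addresses."""

    def __init__(self):
        self.ram = {}
        self.mapped = {}  # address -> (device, port)

    def map_device(self, addr, device, port):
        # Initialization step: correlate a device port with a memory address.
        self.mapped[addr] = (device, port)

    def store(self, addr, value):
        if addr in self.mapped:
            raise NotImplementedError("device writes are omitted in this sketch")
        self.ram[addr] = value

    def load(self, addr):
        # The same load operation serves RAM and device registers.
        if addr in self.mapped:
            device, port = self.mapped[addr]
            return device.read(port)
        return self.ram.get(addr, 0)


MEM1 = 0xFF00                 # hypothetical address set aside for I/O
disk = Device({3: 0x5A})      # device D, port P = 3, register holds 0x5A
bus = Bus()
bus.map_device(MEM1, disk, port=3)
bus.store(0x0010, 42)         # ordinary RAM write

r0 = bus.load(MEM1)           # like "LD R0,Mem1": contents of device D, port P
r1 = bus.load(0x0010)         # ordinary RAM read
```

With Method 1, by contrast, the program would need a separate operation (the slide's `LDD R0,D,P`) that names the device and port explicitly instead of going through the address decoder.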

Memory Mapped I/O
• Some physical addresses are set aside; there is no REAL memory at these addresses
• Instead, when the processor sees these addresses, it knows to aim the instruction at the I/O processor
[Figure: address space with ROM, RAM, and virtual memory pointing at I/O space; CPU, IOC, and memory]
  (1) CPU issues an instruction to the IOC: OP, Device, Address (the target device and where the commands are)
  (2) IOC looks in memory for commands: OP, Addr, Cnt, Other (what to do, where to put data, how much, special requests)
  (3) Device to/from memory transfers are controlled by the IOC directly
  (4) IOC interrupts the CPU when done

Transfer Method 1: Programmed I/O (Polling)
[Flowchart: CPU asks IOC "Is the data ready?" (no: loop; yes: read data) → store data to memory → "done?" (no: repeat; yes: finish)]
• The busy-wait loop is not an efficient way to use the CPU unless the device is very fast!
• But checks for I/O completion can be dispersed among computationally intensive code

Device Interrupts
• An I/O interrupt is just like an exception except:
  – An I/O interrupt is asynchronous
  – Further information needs to be conveyed
• An I/O interrupt is asynchronous with respect to instruction execution:
  – An I/O interrupt is not associated with any instruction
  – An I/O interrupt does not prevent any instruction from completing
    • You can pick your own convenient point to take an interrupt
• An I/O interrupt is more complicated than an exception:
  – It needs to convey the identity of the device generating the interrupt
  – Interrupt requests can have different urgencies:
    • Interrupt requests need to be prioritized

Device Interrupts
User program (an external interrupt arrives at the "Hiccup(!)" point):
    add   $r1,$r2,$r3
    subi  $r4,$r1,#4
    slli  $r4,$r4,#2
          Hiccup(!)
    lw    $r2,0($r4)
    lw    $r3,4($r4)
    add   $r2,$r2,$r3
    sw    8($r4),$r2
"Interrupt Handler":
    Raise priority
    Reenable All Ints
    Save registers
    lw    $r1,20($r0)
    lw    $r2,0($r1)
    addi  $r3,$r0,#5
    sw    $r3,0($r1)
    Restore registers
    Clear current Int
    Disable All Ints
    Restore priority
    RTI
• Advantage:
  – User program progress is only halted during the actual transfer
• Disadvantage: special hardware is needed to:
  – Cause an interrupt (I/O device)
  – Detect an interrupt (processor)
  – Save the proper state to resume after the interrupt (processor)

Transfer Method 2: Interrupt Driven Data Transfer
[Figure: CPU, Memory, IOC, device. (1) I/O interrupt arrives during the user program (add, sub, and, or, nop); (2) save PC; (3) jump to interrupt service address; (4) interrupt service routine in memory: read, store, ..., rti]
User program progress is only halted during the actual transfer. Interrupt handler code does the transfer.
1000 transfers at 1000 bytes each:
• 1000 interrupts @ 2 µsec per interrupt
• 1000 interrupt services @ 98 µsec each
• Total: 1000 x (2 + 98) µsec = 0.1 CPU seconds
Device transfer rate = 10 MBytes/sec => 0.1 x 10^-6 sec/byte => 0.1 µsec/byte => 1000 bytes = 100 µsec
1000 transfers x 100 µsec = 100 ms = 0.1 CPU seconds
Still far from the device transfer rate! 1/2 of the time goes to interrupt overhead.

Delegating I/O Responsibility from CPU: DMA
[Figure: CPU, Memory, IOC, device]
• The CPU sends a starting address, direction, and length count to the IOC, then issues "start"
• Direct Memory Access (DMA):
  – External to the CPU
  – Acts as a master on the bus
  – Transfers blocks of data to or from memory without CPU intervention
• The IOC provides handshake signals for the peripheral controller, and memory addresses and handshake signals for memory

Transfer Method 3: Direct Memory Access
[Figure: CPU; memory-mapped I/O address space from 0 to n with ROM, RAM, Peripherals, and IO Buffers; Memory, IOC, device]
Time to do 1000 transfers at 1000 bytes each:
• 1 DMA set-up sequence @ 50 µsec
• 1 interrupt @ 2 µsec
• 1 interrupt service sequence @ 48 µsec
• Total: .0001 second of CPU time
The CPU sends a starting address, direction, and length count to the DMAC, then issues "start".
The IOC provides handshake signals for the peripheral controller, and memory addresses and handshake signals for memory.

7.5 RAID: Redundant Array of Independent Disks
Use Arrays of Small Disks?
Katz and Patterson asked in 1987:
• Can smaller disks be used to close the gap in performance between disks and CPUs?
  – Conventional: 4 disk designs (3.5", 5.25", 10", 14"), from low end to high end
  – Disk array: 1 disk design (3.5")

Array Reliability
• Reliability of N disks = Reliability of 1 disk ÷ N
  – 1,200,000 hours ÷ 100 disks = 12,000 hours
  – 1 year = 365 * 24 = 8760 hours
  – Disk system MTTF: drops from about 140 years to under 1.5 years (12,000 / 8760 ≈ 1.4)!
• Arrays (without redundancy) are too unreliable to be useful!
Hot spares support reconstruction in parallel with access: very high media availability can be achieved.

Redundant Arrays of Inexpensive Disks
Ideas
• Files are "striped" across multiple spindles
• Redundancy yields high data availability
  – Disks will fail
  – Contents are reconstructed from data redundantly stored in the array
    • Capacity penalty to store redundant info
    • Bandwidth penalty to update
Techniques
• Mirroring/Shadowing (high capacity cost)
• Parity

Figure 7.17 Standard RAID Levels

RAID 1: Disk Mirroring/Shadowing
[Figure: recovery group of a disk and its shadow]
• Each disk is fully duplicated onto its "shadow"
  – Very high availability can be achieved
• Bandwidth sacrifice on write: logical write = two physical writes
• Reads may be optimized
• Most expensive solution: 100% capacity overhead
Targeted for high I/O rate, high availability environments
(RAID 2 is not interesting, so skip it)

RAID 3: Bit-Interleaved Parity Disk
Logical record: 10010011 11001101 10010011 ...
Striped physical records (three data disks and parity disk P):
    Data:   1 0 0 1 0 0 1 1
    Data:   1 1 0 0 1 1 0 1
    Data:   1 0 0 1 0 0 1 1
    P:      1 1 0 0 1 1 0 1
• Parity is computed across the recovery group to protect against hard disk failures
• 33% capacity cost for parity in this configuration
• Wider arrays reduce capacity costs, decrease expected availability, and increase reconstruction time
• Arms logically synchronized, spindles rotationally synchronized
• Logically a single high capacity, high transfer rate disk
Targeted for high bandwidth applications: scientific, image processing

Inspiration for RAID 4
• RAID 3 relies on the parity disk to discover errors on read, but every sector has an error detection field
  – In RAID 3, every access (read/write) went to all disks
  – We can rely on the error detection field to catch errors on read, not on the parity disk
• How can independent reads to different disks be allowed simultaneously?
• RAID 4 and RAID 5: block-interleaved parity and distributed block-interleaved parity
  – The parity is stored as blocks
  – Smaller accesses are allowed to occur in parallel
  – A small write involves 2 disks instead of accessing all disks as in RAID 3

RAID 4: Block-Interleaved Parity (High I/O Rate Parity)
Insides of 5 disks (columns are disks, each row is a stripe, logical disk address increases downward):
    D0   D1   D2   D3   P
    D4   D5   D6   D7   P
    D8   D9   D10  D11  P
    D12  D13  D14  D15  P
    D16  D17  D18  D19  P
    D20  D21  D22  D23  P
    ...
Example: small read D0 & D5, large write D12-D15

Figure 7.18 Small write update on RAID 3 vs. RAID 4/5
• Assume 4 blocks of data and 1 block of parity
• RAID 3: read blocks D1, D2 and D3, add block D0' to calculate new P', and write D0' and P'
• RAID 4/5: read D0 and P, calculate new P', and write D0' and P'

Inspiration for RAID 5
    D0   D1   D2   D3   P
    D4   D5   D6   D7   P
• RAID 4 works well for small reads; for small writes:
  – Option 1: read the other data disks, create the new sum, and write it to the parity disk
  – Option 2: since P has the old sum, compare old data to new data and add the difference to P
  – Small writes are limited by the parity disk: writes to D0 and D5 both also write to the P disk
• RAID 5: distributed block-interleaved parity
  – Distribute parity blocks across the disks
• RAID 6: P+Q Redundancy
  – Parity-based schemes in RAID 1-5 protect against a single self-identifying failure only
  – A second check block allows recovery from a second failure (Reed-Solomon codes), at the cost of 1 extra Q disk
  – There are 6 disk accesses instead of 4 to update both P and Q information (read Q and write Q')

RAID 5: Distributed Block-Interleaved Parity (High I/O Rate Interleaved Parity)
Independent writes are possible because of interleaved parity (columns are disks, logical disk address increases downward):
    D0   D1   D2   D3   P
    D4   D5   D6   P    D7
    D8   D9   P    D10  D11
    D12  P    D13  D14  D15
    P    D16  D17  D18  D19
    D20  D21  D22  D23  P
    ...
Example: write to D0, D5 uses disks 0, 1, 3, 4

System Availability: Orthogonal RAIDs
[Figure: an Array Controller connected to several String Controllers, each driving a string of disks]
• Data Recovery Group: unit of data redundancy
• Redundant Support Components: fans, power supplies, controller, cables
• End to End Data Integrity: internal parity protected data paths

System-Level Availability
[Figure: two hosts, fully dual redundant I/O Controllers and Array Controllers, with duplicated paths to each Recovery Group]
• Goal: No Single Points of Failure
• With duplicated paths, higher performance can be obtained when there are no failures

Summary: RAID Techniques
Goal was performance; popularity is due to reliability of storage
• Disk Mirroring, Shadowing (RAID 1)
  – Each disk is fully duplicated onto its "shadow"
  – Logical write = two physical writes
  – 100% capacity overhead
• Parity Data Bandwidth Array (RAID 3)
  – Parity computed horizontally
  – Logically a single high data bandwidth disk
• High I/O Rate Parity Array (RAID 5)
  – Interleaved parity blocks
  – Independent reads and writes
  – Logical write = 2 reads + 2 writes
[Figure: bit patterns illustrating data and parity for each technique]

Summary
Chapter 7. Storage Systems
7.1 Introduction
7.2 Types of Storage Devices
7.3 Busses - Connecting IO Devices to CPU/Memory
7.4 Reliability, Availability and Dependability
7.5 RAID: Redundant Arrays of Inexpensive Disks
7.6 Errors and Failures in Real Systems
7.7 I/O Performance Measures
7.8 A Little Queuing Theory
7.9 Benchmarks of Storage Performance and Availability
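
The parity techniques covered in section 7.5 can be sketched in a few lines of Python. This is a minimal illustration, assuming byte-sized blocks and plain XOR parity; the function names `parity`, `reconstruct`, and `small_write_parity` are hypothetical and not taken from the slides. It uses the three data bytes shown on the RAID 3 slide, rebuilds a lost block from the survivors, and shows the RAID 4/5 small-write update, which needs only the old data and the old parity (2 reads + 2 writes).

```python
from functools import reduce


def parity(blocks):
    """XOR parity across the blocks of one recovery group."""
    return reduce(lambda a, b: a ^ b, blocks, 0)


def reconstruct(surviving_blocks, p):
    """Rebuild the single missing block from the survivors and the parity."""
    return parity(surviving_blocks) ^ p


def small_write_parity(old_data, new_data, old_p):
    """RAID 4/5 small write: new P' = old P xor old D xor new D'."""
    return old_p ^ old_data ^ new_data


# Data bytes from the RAID 3 slide.
data = [0b10010011, 0b11001101, 0b10010011]
p = parity(data)

# Lose the second disk and rebuild its contents from the other two plus P.
rebuilt = reconstruct([data[0], data[2]], p)

# Overwrite block 0 and update parity without touching the other data disks.
new_d0 = 0b00001111           # arbitrary new value, for illustration only
new_p = small_write_parity(data[0], new_d0, p)
```

The small-write shortcut gives the same parity as recomputing it from all data blocks, which is why RAID 4/5 can update one block by accessing two disks instead of the whole stripe.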