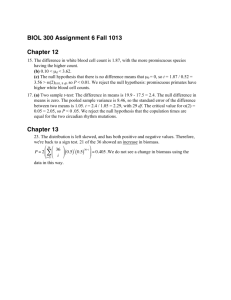

Day 1
