Data Reduction

advertisement

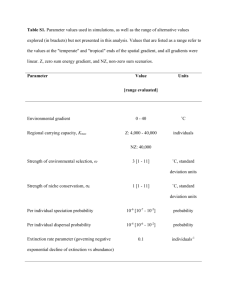

Noise & Data Reduction Paired Sample t Test Data Transformation - Overview From Covariance Matrix to PCA and Dimension Reduction Fourier Analysis - Spectrum Dimension Reduction Data Integration Automatic Concept Hierarchy Generation Testing Hypothesis Remember: Central Limit Theorem The sampling distribution of the mean of samples of size N approaches a normal (Gaussian) distribution as N approaches infinity. If the samples are drawn from a population with mean and standard deviation , then the mean of the sampling distribution is and its standard deviation is x N as N increases. These statements hold irrespective of the shape of the original distribution. Z Test standard deviation (population) N x 2 1 Z xi x / N N i1 t Test x t s/ N sample standard deviation N 1 s xi x N 1 i1 2 • when population standard deviation is unknown, samples are small population mean , sample mean x p Values Commonly we reject the H0 when the probability of obtaining a sample statistic given the null hypothesis is low, say < .05 The null hypothesis is rejected but might be true We find the probabilities by looking them up in tables, or statistics packages provide them The probability of obtaining a particular sample given the null hypothesis is called the p value By convention, one usually dose not reject the null hypothesis unless p < 0.05 (statistically significant) Example Five cars parked, mean price of the cars is 20.270 € and the standard deviation of the sample is 5.811€ The mean costs of cars in town is 12.000 € (population) H0 hypothesis: parked cars are as expensive as the cars in town 20270 12000 t 3.18 5811/ 5 For N-1 (degrees of freedom) t=3.18 has a value less than 0.025, reject H0! Paired Sample t Test Given a set of paired observations (from two normal populations) A B =A-B x1 y1 x1-x2 x2 y2 x2-y2 x3 y3 x3-y3 x4 y4 x4-y4 x5 y5 x5-y5 Calculate the mean x and the standard deviation s of the the differences H0: =0 (no difference) H0: =k (difference is a constant) x t ˆ s ˆ N Confidence Intervals ( known) Standard error from the standard deviation x Population N 95 Percent confidence interval for normal distribution is about the mean x 1.96 x Confidence interval when ( unknown) s ˆx N Standard error from the sample standard deviation 95 Percent confidence interval for t distribution (t0.025 from a table) is 0.025 x xt ˆ Previous Example: Zur Anzeige wird der QuickTime™ Dekompressor „TIFF (LZW)“ benötigt. Overview Data Transformation Reduce Noise Reduce Data Data Transformation Smoothing: remove noise from data Aggregation: summarization, data cube construction Generalization: concept hierarchy climbing Normalization: scaled to fall within a small, specified range min-max normalization z-score normalization normalization by decimal scaling Attribute/feature construction New attributes constructed from the given ones Data Transformation: Normalization Min-max normalization: to [new_minA, new_maxA] v' v minA (new _ maxA new _ minA) new _ minA maxA minA Ex. Let income range $12,000 to $98,000 normalized to [0.0, 1.0]. Then $73,000 is mapped to 73,600 12,000 (1.0 0) 0 0.716 98,000 12,000 Z-score normalization (μ: mean, σ: standard deviation): v' v A A Ex. Let μ = 54,000, σ = 16,000. Then Normalization by decimal scaling 73,600 54,000 1.225 16,000 How to Handle Noisy Data? (How to Reduce Features?) Binning Regression smooth by fitting the data into regression functions Clustering first sort data and partition into (equal-frequency) bins then one can smooth by bin means, smooth by bin median, smooth by bin boundaries, etc. detect and remove outliers Combined computer and human inspection detect suspicious values and check by human (e.g., deal with possible outliers) Data Reduction Strategies A data warehouse may store terabytes of data Data reduction Complex data analysis/mining may take a very long time to run on the complete data set Obtain a reduced representation of the data set that is much smaller in volume but yet produce the same (or almost the same) analytical results Data reduction strategies Data cube aggregation Dimensionality reduction—remove unimportant attributes Data Compression Numerosity reduction—fit data into models Discretization and concept hierarchy generation Simple Discretization Methods: Binning Equal-width (distance) partitioning: Divides the range into N intervals of equal size: uniform grid if A and B are the lowest and highest values of the attribute, the width of intervals will be: W = (B –A)/N. The most straightforward, but outliers may dominate presentation Skewed data is not handled well. Equal-depth (frequency) partitioning: Divides the range into N intervals, each containing approximately same number of samples Good data scaling Managing categorical attributes can be tricky. Binning Methods for Data Smoothing * Sorted data for price (in dollars): 4, 8, 9, 15, 21, 21, 24, 25, 26, 28, 29, 34 * Partition into (equi-depth) bins: - Bin 1: 4, 8, 9, 15 - Bin 2: 21, 21, 24, 25 - Bin 3: 26, 28, 29, 34 * Smoothing by bin means: - Bin 1: 9, 9, 9, 9 - Bin 2: 23, 23, 23, 23 - Bin 3: 29, 29, 29, 29 * Smoothing by bin boundaries (min and max are identified, bin value replaced by the closesed boundary value): - Bin 1: 4, 4, 4, 15 - Bin 2: 21, 21, 25, 25 - Bin 3: 26, 26, 26, 34 Cluster Analysis Regression y Y1 Y1’ y=x+1 X1 x Heuristic Feature Selection Methods There are 2d -1 possible sub-features of d features Several heuristic feature selection methods: Best single features under the feature independence assumption: choose by significance tests Best step-wise feature selection: • The best single-feature is picked first • Then next best feature condition to the first, ... Step-wise feature elimination: • Repeatedly eliminate the worst feature Best combined feature selection and elimination Optimal branch and bound: • Use feature elimination and backtracking Sampling: with or without Replacement Raw Data From Covariance Matrix to PCA Principal Component Analysis and Dimension Reduction X2 Y1 Y2 X1 Feature space Sample x (1) (k) , x ,.., x ,.., x x1 x 2 d x .. .. x d (2) xy (n ) d 2 (x y ) i i i1 Scaling A well-known scaling method consists of performing some scaling operations subtracting the mean and dividing the standard deviation (x i mi ) yi si mi sample mean si sample standard deviation According to the scaled metric the scaled feature vector is expressed as (x i mi ) 2 || y ||s 2 si i1 n shrinking large variance values stretching low variance values si > 1 si < 1 Fails to preserve distances when general linear transformation is applied! Covariance Measuring the tendency two features xi and xj varying in the same direction The covariance between features xi and xj is estimated for n patterns n c ij k1 x i mi x j (k ) n 1 (k ) mj c11 c12 c c 21 22 C .. .. c d1 c d 2 .. c1d .. c 2d .. .. .. c dd Correlation Covariances are symmetric cij=cji Covariance is related to correlation n rij x k1 (k ) i mi x j (k ) (n 1)si s j mj c ij si s j 1,1 Karhunen-Loève Transformation Covariance matrix C of (a d d matrix) Symmetric and positive definite U CU diag(1, 2 ,..., d ) T I Cu 0 There are d eigenvalues and eigenvectors Cui ui is the i ith eigenvalue of C and ui the ith column of U, the ith eigenvectors Eigenvectors are always orthogonal U is an orthonormal matrix UUT=UTU=I U defines the K-L transformation The transformed features by the K-L transformation are given by y Ux (linear Transformation) K-L transformation rotates the feature space into alignment with uncorrelated features Example 2 1 C 1 1 I C 0 1=2.618 2=0.382 0.618 1 u1 0 1.618u2 1 u(1)=[1 0.618] u(2)=[-1 1.618] 3 1 0 2 Zur Anzeige wird der QuickTime™ Dekompressor „TIFF (LZW)“ benötigt. Zur Anzeige wird der QuickTime™ Dekompressor „TIFF (LZW)“ benötigt. PCA (Principal Components Analysis) New features y are uncorrelated with the covariance Matrix Each eigenvector ui is associated with some variance associated by i Uncorrelated features with higher variance (represented by i) contain more information Idea: Retain only the significant eigenvectors ui Dimension Reduction How many eigenvectors (and corresponding eigenvector) to retain Kaiser criterion Discards eigenvectors whose eigenvalues are below 1 Problems Principal components are linear transformation of the original features It is difficult to attach any semantic meaning to principal components Fourier Analysis It is always possible to analyze „complex“ periodic waveforms into a set of sinusoidal waveforms Any periodic waveform can be approximated by adding together a number of sinusoidal waveforms Fourier analysis tells us what particular set of sinusoids go together to make up a particular complex waveform Spectrum In the Fourier analysis of a complex waveform the amplitude of each sinusoidal component depends on the shape of particular complex wave • Amplitude of a wave: maximum or minimum deviation from zero line • T duration of a period 1 • f T Zur Anzeige wird der QuickTime™ Dekompressor „TIFF (LZW)“ benötigt. Noise reduction or Dimension Reduction It is difficult to identify the frequency components by looking at the original signal Converting to the frequency domain If dimension reduction, store only a fraction of frequencies (with high amplitude) If noise reduction (remove high frequencies, fast change, smoothing) (remove low frequencies, slow change, remove global trends) Inverse discrete Fourier transform Zur Anzeige wird der QuickTime™ Dekompressor „TIFF (LZW)“ benötigt. Zur Anzeige wird der QuickTime™ Dekompressor „TIFF (LZW)“ benötigt. Zur Anzeige wird der QuickTime™ Dekompressor „TIFF (Unkomprimiert)“ benötigt. Zur Anzeige wird der QuickTime™ Dekompressor „TIFF (Unkomprimiert)“ benötigt. Dimensionality Reduction: Wavelet Transformation Haar2 Daubechie4 Discrete wavelet transform (DWT): linear signal processing, multi-resolutional analysis Compressed approximation: store only a small fraction of the strongest of the wavelet coefficients Similar to discrete Fourier transform (DFT), but better lossy compression, localized in space Method: Length, L, must be an integer power of 2 (padding with 0’s, when necessary) Each transform has 2 functions: smoothing, difference Applies to pairs of data, resulting in two set of data of length L/2 Applies two functions recursively, until reaches the desired length Data Integration Data integration: Schema integration: e.g., A.cust-id B.cust-# Integrate metadata from different sources Entity identification problem: Combines data from multiple sources into a coherent store Identify real world entities from multiple data sources, e.g., Bill Clinton = William Clinton Detecting and resolving data value conflicts For the same real world entity, attribute values from different sources are different Possible reasons: different representations, different scales, e.g., metric vs. British units Handling Redundancy in Data Integration Redundant data occur often when integration of multiple databases Object identification: The same attribute or object may have different names in different databases Derivable data: One attribute may be a “derived” attribute in another table, e.g., annual revenue Redundant attributes may be able to be detected by correlation analysis Careful integration of the data from multiple sources may help reduce/avoid redundancies and inconsistencies and improve mining speed and quality Automatic Concept Hierarchy Generation Some hierarchies can be automatically generated based on the analysis of the number of distinct values per attribute in the data set The attribute with the most distinct values is placed at the lowest level of the hierarchy Exceptions, e.g., weekday, month, quarter, year country 15 distinct values province_or_ state 365 distinct values city 3567 distinct values street 674,339 distinct values Paired Sample t Test Data Transformation - Overview From Covariance Matrix to PCA and Dimension Reduction Fourier Analysis - Spectrum Dimension Reduction Data Integration Automatic Concept Hierarchy Generation Mining Association rules Apriori Algorithm (Chapter 6, Han and Kamber)