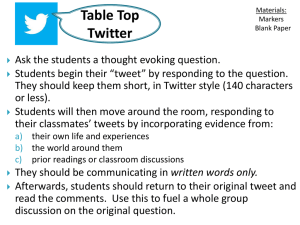

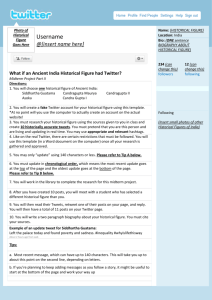

Relationship Analysis between User’s Contexts and Real Input Words through Twitter

Yutaka Arakawa, Shigeaki Tagashira and Akira Fukuda
Graduate School of Information Science and Electrical Engineering, Kyushu University, Fukuoka, Japan
744, Motooka, Nishi-ku, Fukuoka, Fukuoka, JAPAN, 819-0395
Email: {arakawa, shigeaki, fukuda}@f.ait.kyushu-u.ac.jp

Abstract. In this paper, we propose a method to evaluate the effectiveness of our previously proposed context-aware text entry by using Twitter. We focus on “geo-tagged” public tweets because they carry two important user contexts: the real location and the time of posting. We also focus on TV program listings because 50% of iPhone traffic in Japan is generated at home, where people often tweet while watching TV. A cyclical collecting system based on the Streaming API and the Search API of Twitter is proposed for gathering the target tweets efficiently. To find the relationship between user contexts and actually used words, we compare actually tweeted words with words obtained from the Local Search API of Yahoo! Japan, which is used by our context-aware text entry, and with words obtained from TV program listings. We analyze 471274 tweets collected from 15 December 2009 to 10 June 2010 to identify their relationship to landmark information and TV programs. As a result, we show that 5.1% of tweets include landmark words and 9% of tweets include TV program words. Additionally, we show that there are location-dependent words and time-dependent words.

I. INTRODUCTION

A recent survey in Japan [1] found that over fifty percent of Internet users access the Internet from mobile devices, and over eighty percent of them reach information not through the hierarchical menus of official sites but through search engines such as Google. Moreover, current mobile devices support not only text messaging but also web mail such as Gmail, which means that users have more opportunities to input long texts.
The increase of text input on mobile devices drives the demand for better text input methods. Recent mobile phones are generally equipped with intelligent text entry that provides predictive transform. This function consists of dictionaries, syntactic parsing, and learning. When a user inputs “a”, it picks up the words starting with “a” from a dictionary and recommends candidate words that seem appropriate according to syntactic parsing. In addition, it sorts the candidates based on the user’s input history, such as the frequency or the time stamp of the latest use. Nowadays, iWnn [2], a Japanese text entry system adopted in many kinds of mobile phones, suggests more appropriate words according to the current season, the time, the body of a received e-mail, and the relationship between superiors and subordinates. As another approach, distinct from text entry, “Google Suggest” provides frequently used keyword combinations for optimizing search terms and reducing keystrokes. Meanwhile, we have proposed a context-aware text entry [3] that suggests useful words based on the user’s context, such as location, presence, and time. Among the three above-mentioned components of predictive transform, we mainly focus on how to build the dictionary dynamically, because a word not included in the dictionary can never be recommended, and we are not specialists in linguistic syntactic parsing. In our proposed system, the dictionary in the mobile phone is updated periodically, based on the user’s current contexts, in cooperation with a dictionary creation server on the Internet, which generates the user’s current dictionary dynamically by using several public Web APIs. In our current prototype, “location” and “time” are adopted as the user’s context: the names of landmarks surrounding the user are added based on location, and TV program titles and performers’ names are added based on time. We use TV programs because 50% of iPhone traffic in Japan is transmitted through home WiFi networks.
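The paper gives no implementation of predictive transform, so the following is only a minimal Python sketch, under our own assumptions, of the two ideas in this paragraph: prefix lookup ranked by input history, and merging context-derived words (landmarks, TV titles) into the dictionary. The `Entry` schema, the helper names, and the ranking key (frequency, then recency, then alphabetical) are hypothetical; syntactic parsing, which real IMEs such as iWnn or OpenWnn also apply, is omitted.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Entry:
    """A dictionary word plus the usage history used for ranking (hypothetical schema)."""
    word: str
    frequency: int = 0      # how many times the user has selected this word
    last_used: float = 0.0  # timestamp of the latest selection (0.0 = never)


def candidates(prefix: str, dictionary: Dict[str, Entry], limit: int = 5) -> List[str]:
    """Return words starting with `prefix` (case-insensitive), most frequent first,
    ties broken by most recent use, then alphabetically."""
    matches = [e for e in dictionary.values() if e.word.lower().startswith(prefix.lower())]
    matches.sort(key=lambda e: (-e.frequency, -e.last_used, e.word))
    return [e.word for e in matches[:limit]]


def merge_context_words(base: Dict[str, Entry], context_words: List[str]) -> Dict[str, Entry]:
    """Add context words (e.g. nearby landmarks) without discarding existing history."""
    merged = dict(base)
    for w in context_words:
        merged.setdefault(w, Entry(w))
    return merged


general = {w: Entry(w) for w in ["hello", "hotel", "house"]}
general["hello"].frequency = 3
ctx = merge_context_words(general, ["Hyatt Regency Miami", "Bank of America"])
print(candidates("H", ctx))  # ['hello', 'Hyatt Regency Miami', 'hotel', 'house']
```

Keeping the context words in a separate merge step mirrors the paper’s design choice of updating only the dictionary, so the ranking logic does not need to know where a word came from.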
As a result, if you input “H” near the venue of Globecom 2010, the system may suggest “Hyatt Regency Miami” as one of the candidates. If you input “J” or “K” while watching the TV series “24”, the system may return “Jack Bauer” or “Kiefer Sutherland”, respectively. We have already constructed an OpenWnn-based prototype system on an Android terminal [4]. We have tried several system architectures and confirmed that one of them achieves sufficient response time [5]. We are also evaluating our system through demonstrations and questionnaires with several users.

Although our system looks effective, an important issue remains. Since we started this research with the assumption that such a system would be convenient, there is no evidence or quantitative evaluation of its effectiveness. It is hard to gather a large amount of results through questionnaire-based evaluation. In addition, if we log actually input sentences on a mobile phone, we must consider the privacy protection of personal data.

In this paper, we propose a method for evaluating context-aware text entry by using Twitter. Twitter is a micro-blogging service on the Internet where a short message of up to 140 characters, called a tweet, can be posted, and these tweets are generally open to the public. We focus on Twitter because 1) we can obtain a huge amount of public text written by various users, and 2) the Geotagging API released in November 2009 enables users to add a geocode to each tweet. This means that we can extensively and publicly collect real input sentences together with the users’ real locations. Therefore, we expect that analyzing the collected data will clarify the relationship between users’ contexts and real input words, and thus show the effectiveness of our proposed text entry quantitatively.

Fig. 1. Typical effective examples of context-aware text entry (train transit, e-mail, and map applications; the dictionary is dynamically generated by using public APIs on the Internet).

First, we construct a tweet collecting system that obtains Japanese tweets with geocodes, effectively combining two Twitter APIs, the Streaming API and the Search API, to gather a huge amount of tweets. Our system has already gathered half a million tweets since 15 December 2009. Next, we analyze the collected data by comparing it with data obtained from other APIs. In this paper, we use the “Yahoo! Local Search API” [6] to obtain landmark information, and a TV program listing on the Internet to obtain TV program titles and performers’ names. These are the same APIs that build the dictionary in our context-aware text entry. In our relationship analysis, both kinds of data are split into words by using the “Yahoo! Japanese language morphological analysis API” and the “Yahoo! Key phrase extraction API”. As a result, we show that 5.1% of tweets include landmark words and 9% of tweets include TV program words. Additionally, we show that there are location-dependent words and time-dependent words.

The rest of the paper is organized as follows. Section II presents our previously proposed context-aware input method editor. Section III explains Twitter. Section IV explains the relationship analysis. Finally, results are shown in Section V.

II. CONTEXT-AWARE TEXT ENTRY FOR MOBILE PHONE

Fig. 1 shows typical service examples in which our proposed context-aware text entry works effectively. It indicates that the importance of words varies with location (i.e., the user’s context).
For example, nearby station names are used at stations, landmark names are used at new places, and product names are used at bookstores and electronics retail stores. The most characteristic point is that the dictionary is automatically and dynamically updated by mashing up public Web APIs on the Internet. A nearest station API can be used to obtain the names of stations near the user, and a landmark information API can be adopted to search for landmark names surrounding the user. In addition, we introduced a learning process into this system. If a user selects a suggested word at a station, the system judges that the word may be used at the same place in the future. If the word is not used, the system judges that it is not useful at this place. By repeating these learning processes cyclically, words related to a certain place come to be suggested there, while normal words are suggested at other places.

Fig. 2. The architecture of the prototype system (local device with GPS and other sensors; internal server with a context estimation engine, context updater, API access modules, and a context-aware select & sort engine producing the “Personal Context Dictionary”; external Web APIs such as Yahoo, Google, GuruNavi, and route search).

A. System architecture

The architecture of the prototype system is shown in Fig. 2. It is composed of three parts: the local device, our server on the Internet, and general Web services on the Internet. The local device has various sensors such as GPS and an acceleration sensor.
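The learning rule described above (reward a suggested word when it is chosen at a place, penalize it when it is ignored) can be sketched as follows. This is an illustrative Python sketch, not the authors’ implementation: the ±1 score update, the `PlaceLearner` and `place_key` names, and quantizing coordinates to two decimal places (cells of roughly 1 km) as the definition of “the same place” are all our assumptions.

```python
from typing import Dict, Iterable, List, Optional, Tuple

Place = Tuple[float, float]


def place_key(lat: float, lon: float, precision: int = 2) -> Place:
    """Quantize coordinates into a coarse cell (about 1 km at 2 decimals); an assumption."""
    return (round(lat, precision), round(lon, precision))


class PlaceLearner:
    """Hypothetical per-place score: +1 when a suggested word is chosen, -1 when ignored."""

    def __init__(self) -> None:
        self.scores: Dict[Tuple[Place, str], int] = {}

    def feedback(self, place: Place, suggested: Iterable[str], chosen: Optional[str]) -> None:
        for word in suggested:
            delta = 1 if word == chosen else -1
            self.scores[(place, word)] = self.scores.get((place, word), 0) + delta

    def rank(self, place: Place, words: Iterable[str]) -> List[str]:
        # Stable sort: words with equal scores keep their incoming (e.g. general) order.
        return sorted(words, key=lambda w: -self.scores.get((place, w), 0))


station = place_key(35.6590, 139.7006)  # illustrative coordinates near Shibuya station
learner = PlaceLearner()
learner.feedback(station, ["Hachiko", "Hello"], chosen="Hachiko")
print(learner.rank(station, ["Hello", "Hachiko"]))                  # ['Hachiko', 'Hello']
print(learner.rank(place_key(33.59, 130.42), ["Hello", "Hachiko"]))  # ['Hello', 'Hachiko']
```

Because scores are keyed by place, “Hachiko” rises only near the station where it was chosen, and the general order is kept elsewhere, which matches the behavior the paragraph describes.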
In our prototype system, we use a PC as the local device and adopt the Google Maps API in place of a GPS sensor so that the user’s location can be set visually. The internal server in the center of Fig. 2 is the main part of our proposed system. It collects information, estimates the user’s context, creates the dynamic dictionary, and suggests words by utilizing the user’s context. These functions could also be constructed on the local device. However, we place them on a server on the network because not only the accuracy of the estimation algorithm but also the processing speed is important. Besides, we designed the system so that collecting sensor information and inputting text on the local device work asynchronously. The dictionary is updated whenever the location changes. As a result, the local device only searches a pre-constructed database when text is input. This architecture prevents the processing speed from slowing down as the number of external Web servers increases. The external servers on the right of Fig. 2 are not ours but are provided by several companies. In this prototype system, the server cooperates with the Yahoo Local Search API, Google Maps API, and GuruNavi API. Some of the words provided by these APIs become materials for the personal context-aware dictionary. We developed two prototype systems: one is an extension of OpenWnn on Android, and the other is an ATOK Direct Plug-in for Windows and Mac. “ATOK” [7] is one of the major text entry systems in Japan, as is Microsoft’s text entry.

B. Remaining Issue

Since we started this research with the assumption that such a system would be convenient, there is no evidence or quantitative evaluation of its effectiveness. It is hard to gather a large amount of results through questionnaire-based evaluation. In addition, if we log actually input sentences on a mobile phone, we must consider the privacy protection of personal data.

III. TWITTER

Twitter [8] is one of the major micro-blogging and social networking services today, in which a short message of up to 140 characters, called a tweet, can be posted. Tweets are generally open to the public as a “public timeline”. Since Twitter releases many kinds of APIs for general users, we can obtain other users’ tweets through these APIs. In this paper, we use the Streaming API and the Search API to obtain tweets. The Streaming API, officially released in January 2010, allows near-realtime access to users’ tweet timelines. Tweets created by a public account are candidates for inclusion in the Streaming API. However, the Streaming API only provides randomly sampled tweets, about 10% of all tweets. The Search API allows us to search tweets with a query in which we can set parameters such as target text, language, user ID, geocode, and time span. In this paper, we use this API to collect the past tweets of users who have posted with a geocode at least once. How to combine these two APIs is described in the following section.

IV. RELATIONSHIP ANALYSIS

To analyze the relationship between user contexts and actually input sentences, we construct a Twitter collecting system and compare the collected tweets with landmark information obtained from the Yahoo! Local Search API [6] and TV information obtained from an online TV program listing [9].

Fig. 3. Cyclical collecting system based on Streaming API and Search API (realtime tweets from the Streaming API, 10 to 15% of all tweets, and past tweets from the Search API are filtered for Japanese, geotagged tweets, less than 1% of the stream, and stored in a database).

Fig. 4. Flow of relationship analysis (tweets with time, location, and text are split into words by morphological analysis and compared with landmark names from the Yahoo! Local Search API and with TV program titles and performers’ names from the TV program listing; these APIs are the same as those used to build the dictionary in our previously proposed context-aware text entry).

A. Cyclical collecting system for Twitter

We construct a tweet collecting system that obtains Japanese tweets tagged with geocodes, effectively combining two Twitter APIs, the Streaming API and the Search API, to gather a huge amount of tweets. Fig. 3 shows the cyclical tweet collecting system based on the Streaming API and the Search API. We use two APIs for the following reason. Since tweets obtained through the Streaming API are written in various languages, we need to filter them and pick up the target tweets, which are written in Japanese and have geocodes, as shown on the left side of Fig. 3. As a result, less than 1% of the tweets remain. To add geocodes to their tweets, users must have a client that can tag their current location through the Twitter API. In other words, a user who has tagged a tweet once is able to post further tagged tweets. Therefore, we pick up the IDs of users who posted a geo-tagged tweet, and collect their past tweets through the Search API cyclically.

B. Matching Process

A tweet consists of three data items, time information, location information, and the input text, as shown in Fig. 4. From the location information and the “Yahoo! Local Search API”, we pick up the names of the landmarks within one kilometer of the user’s current location.
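One cycle of the collecting system in Fig. 3 can be sketched as below. This is a minimal Python sketch under stated assumptions, not the authors’ code: the Streaming and Search APIs are abstracted as a plain iterable and a hypothetical `search_user` callable, so no real Twitter client calls are shown, and the `Tweet` fields (`lang`, `geo` as a latitude/longitude pair) are our own simplified schema.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Optional, Set, Tuple


@dataclass(frozen=True)
class Tweet:
    id: int
    user: str
    lang: str
    geo: Optional[Tuple[float, float]]  # (latitude, longitude), or None if not geotagged
    text: str


def is_target(t: Tweet) -> bool:
    """Keep only Japanese tweets that carry a geocode."""
    return t.lang == "ja" and t.geo is not None


def collect_cycle(
    stream_sample: Iterable[Tweet],
    search_user: Callable[[str], Iterable[Tweet]],
    known_users: Set[str],
    database: Dict[int, Tweet],
) -> None:
    """One collection cycle; `stream_sample` and `search_user` are hypothetical
    stand-ins for the Streaming API and a per-user Search API query."""
    # Step 1: filter the sampled stream and remember every user who geotagged once.
    for t in stream_sample:
        if is_target(t):
            database[t.id] = t
            known_users.add(t.user)
    # Step 2: fetch past tweets of those users; keying by id deduplicates repeats.
    for user in sorted(known_users):
        for t in search_user(user):
            if is_target(t):
                database[t.id] = t


stream = [
    Tweet(1, "a", "ja", (33.59, 130.42), "..."),
    Tweet(2, "b", "en", (40.0, -74.0), "hi"),
    Tweet(3, "c", "ja", None, "..."),
]
past = {"a": [Tweet(10, "a", "ja", (33.6, 130.4), "x"), Tweet(11, "a", "ja", None, "y")]}
users: Set[str] = set()
db: Dict[int, Tweet] = {}
collect_cycle(stream, lambda u: past.get(u, []), users, db)
print(sorted(db))  # [1, 10]
```

Running the cycle repeatedly with the same `known_users` and `database` is what makes the collection “cyclical”: the user set grows from the stream, and each pass re-queries the search side for those users.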
Examples of typical landmarks are stations, city halls, schools, hospitals, post offices, and so on. From the time information and the TV program listing, we pick up performers’ names and TV program titles. As target channels, we adopt 12 key stations in the Tokyo and Fukuoka areas. Fukuoka, where our university is located, is one of the major cities in western Japan. Since it is hard to collect past TV program listings and the obtainable data are extremely large, we only analyze about one month of data (from 7 January 2010 to 2 February 2010). All the data are split into words by using the “Yahoo! Japanese language morphological analysis API” and the “Yahoo! Key phrase extraction API”. After that, we compare these words with each other and evaluate the matching rate.

Fig. 5. Distribution of collected tweets per day (start: 15 Dec. 2009, end: 10 June 2010; only the Streaming API was used in the initial period).

Fig. 6. Geographical distribution of a location-independent word: Noodle.

V. RESULTS

Fig. 5 shows the distribution of the 471274 tweets collected from 15 December 2009 to 10 June 2010. The geographical scope of the tweets is limited to the Japan area, from latitude 24 north and longitude 123 east to latitude 46 north and longitude 146 east. This limitation is due to the Yahoo! Local Search API, which is provided only by Yahoo! Japan. Since we used only the Streaming API at first, the number of tweets in the first month is 10 to 100 times smaller than in subsequent periods, which shows that our proposed cyclical tweet collecting system is very effective. The average length of the collected tweets is 48.8 characters, and tweets of about 30 characters are the majority. From these results, we think that abbreviated notation is often used in tweets. The average and maximum numbers of landmarks obtained at a certain position from the Yahoo! Local Search API are 22.9 and 71, respectively.
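The matching rate of Section IV.B can be illustrated with the two example tweets from Fig. 4. The paper splits Japanese text with Yahoo! Japan’s morphological analysis and key phrase extraction APIs; this sketch instead substitutes a naive English tokenizer (lowercase alphabetic runs minus a small, hypothetical stopword list) purely for illustration, and counts a tweet as matching if it shares at least one word with its own context vocabulary.

```python
import re
from typing import Iterable, List, Set, Tuple

# Hypothetical English stopword list; a stand-in for real morphological analysis.
STOPWORDS = {"a", "an", "and", "the", "of", "i", "am", "is", "at", "in", "on", "to"}


def words(text: str) -> Set[str]:
    """Naive tokenizer: lowercase alphabetic runs, minus stopwords and single letters."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if len(w) > 1 and w not in STOPWORDS}


def context_vocabulary(names: Iterable[str]) -> Set[str]:
    """Split landmark names or TV titles/performers into comparable words."""
    vocab: Set[str] = set()
    for name in names:
        vocab |= words(name)
    return vocab


def matching_rate(samples: List[Tuple[str, List[str]]]) -> float:
    """Fraction of tweets sharing at least one word with their own context vocabulary."""
    if not samples:
        return 0.0
    hits = sum(1 for text, names in samples if words(text) & context_vocabulary(names))
    return hits / len(samples)


landmarks = ["Hyatt Regency Miami", "Bank of America", "James L. Knight Center", "Miami Convention Center"]
tv_words = ["Jack Bauer", "Kiefer Sutherland", "Keisuke Honda", "Daisuke Matsui"]
tweet1 = "Honda and Matsui, Good job!! #worldcup"
tweet2 = "I am staying Hyatt Regency Miami."
print(sorted(words(tweet2) & context_vocabulary(landmarks)))  # ['hyatt', 'miami', 'regency']
print(matching_rate([(tweet1, tv_words), (tweet2, tv_words)]))  # 0.5
```

Pairing each tweet with its own landmark or TV vocabulary reflects that the context words depend on where and when that tweet was posted.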
At 10.2% of positions, no landmark information can be obtained from this API. The maximum number of landmarks per position is 66. Meanwhile, the average and maximum numbers of words obtained from the TV program listing are 149.1 words/hour and 790 words/hour, respectively, about 10 times more than for landmark information. The percentage of tweets including the words obtained according to the tweeted position is 5

Finally, we discuss the time and location dependency of words. Fig. 6 shows the geographical distribution of tweets that include “noodle”. Since the plots are widely distributed all over Japan, the word “noodle” can be regarded as a location-independent word. On the other hand, each set of plots (circles and pluses) in Fig. 7 is concentrated in a certain area. In this figure, circle plots and plus plots show the geographical distribution of tweets that include “Shinjuku” and “Shibuya”, respectively. The centers of the concentrated areas are Shinjuku station and Shibuya station of JR (Japan Railways). From this result, the words “Shinjuku” and “Shibuya” can be defined as location-dependent words.

Fig. 7. Geographical distribution of location-dependent words: Shibuya, Shinjuku (central Tokyo, around Shinjuku and Shibuya stations).

Fig. 8. Distribution of a time-dependent word: Ryoma-den (per day, 9 May to 6 June 2010, Sundays marked).

Fig. 8 and Fig. 9 show the distribution of tweets that include “Ryoma-den” per day and per hour, respectively. “Ryoma-den” is a popular TV drama currently broadcast by the Japan Broadcasting Corporation (NHK) at 20 o’clock every Sunday. As shown in Fig. 8, the number of tweets on every Sunday is clearly larger than on other days of the week.
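The paper judges location and time dependence visually from Figs. 6 to 9 and does not define a metric. As one possible quantification, offered only as an assumption of ours, the sketch below uses (a) the mean great-circle distance of a word’s tweets from their arithmetic centroid (small for “Shibuya”, large for “noodle”) and (b) the share of a word’s tweets falling in its busiest hour or weekday (near 1/24 or 1/7 when there is no time dependence). The coordinates and timestamps in the usage lines are illustrative, not data from the paper.

```python
import math
from collections import Counter
from datetime import datetime
from typing import Iterable, List, Tuple

EARTH_RADIUS_KM = 6371.0


def haversine_km(a: Tuple[float, float], b: Tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = math.sin((lat2 - lat1) / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def spatial_spread_km(points: List[Tuple[float, float]]) -> float:
    """Mean distance from the arithmetic centroid; small values suggest location dependence."""
    if not points:
        raise ValueError("no points")
    lat = sum(p[0] for p in points) / len(points)
    lon = sum(p[1] for p in points) / len(points)
    return sum(haversine_km((lat, lon), p) for p in points) / len(points)


def peak_share(times: Iterable[datetime], unit: str = "hour") -> float:
    """Share of tweets in the busiest hour or weekday; high values suggest time dependence."""
    if unit not in ("hour", "weekday"):
        raise ValueError("unit must be 'hour' or 'weekday'")
    keys = [t.hour if unit == "hour" else t.weekday() for t in times]
    if not keys:
        raise ValueError("no timestamps")
    return max(Counter(keys).values()) / len(keys)


# Illustrative inputs: two points about 3 km apart in Tokyo, and four timestamps,
# three of them on Sunday evenings around 20 o'clock.
print(round(spatial_spread_km([(35.69, 139.70), (35.66, 139.70)]), 2))
times = [datetime(2010, 5, 9, 20, 5), datetime(2010, 5, 16, 20, 1),
         datetime(2010, 5, 16, 20, 45), datetime(2010, 5, 18, 12, 0)]
print(peak_share(times), peak_share(times, unit="weekday"))  # 0.75 0.75
```

Averaging latitude and longitude directly is only a reasonable centroid for points within a small region; for nationwide comparisons it still separates clustered words from widely spread ones, which is all this sketch aims to show.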
Also, the number of tweets at 20 o’clock is remarkably larger than in other time slots. As a result, the word “Ryoma-den” highly depends on time. We are now picking up other typical words that highly depend on either time or location. We hope that by picking up such context-dependent words, our context-aware text entry system will be improved.

Fig. 9. Distribution of a time-dependent word: Ryoma-den (per hour).

VI. CONCLUSION AND FUTURE WORK

In this paper, we have proposed a cyclical tweet collecting system and have collected over half a million geo-tagged tweets written in Japanese to analyze the relationship between users’ contexts (location and time) and actually input words. We have collected 471274 tweets from 15 December 2009 to 10 June 2010. Statistical analysis shows that 5.1% of tweets include landmark words and 9% of tweets include TV program words. Additionally, geographical mapping provides evidence of the location and time dependence of actually input words. As a first step, we have focused on Japanese tweets, but this relationship should exist regardless of language. We are now trying to pick up words with high location dependency by calculating the geographical distribution ratio.

ACKNOWLEDGMENT

This work is carried out under a joint research program of the NTT Service Integration Laboratories and the National Institute of Informatics, and is performed using the facilities they provide.

REFERENCES

[1] rTYPE. (2009) Survey of mobile web site. (in Japanese). [Online]. Available: http://release.center.jp/2008/11/0502.html (last access: 2009/12/1)
[2] OMRON SOFTWARE. (2009) iWnn. (in Japanese). [Online]. Available: http://www.omronsoft.co.jp/SP/mobile/iwnn/ (last access: 2009/12/1)
[3] S. Suematsu, Y. Arakawa, S. Tagashira, and A. Fukuda, “Network-based context-aware input method editor,” in The Sixth International Conference on Networking and Services (ICNS 2010), 7 March 2010, pp. 1–6.
[4] Y. Arakawa, S. Suematsu, S. Tagashira, Y. Yamaguchi, Y. Tanaka, and A. Fukuda, “Implementation of network-based context-aware Japanese input method editor,” in IEICE Technical Reports, ser. MoMuC2009-58, vol. 109, no. 380, 21 January 2010, pp. 31–34, (in Japanese).
[5] S. Suematsu, Y. Arakawa, S. Tagashira, and A. Fukuda, “On improvement of response time for network-based context-aware Japanese input method editor,” in IEICE General Conference, no. B-15-18, 19 March 2010, (in Japanese).
[6] Yahoo Japan Corporation. (2009) Yahoo! developer network - map. (in Japanese). [Online]. Available: http://developer.yahoo.co.jp/webapi/map/ (last access: 2009/12/1)
[7] JustSystems Corporation. (2009) Atok.com. (in Japanese). [Online]. Available: http://www.atok.com/ (last access: 2009/12/1)
[8] Twitter. Twitter. [Online]. Available: http://twitter.com/
[9] Toshiba. Net de navi. [Online]. Available: http://tvsurf.jp/tv/