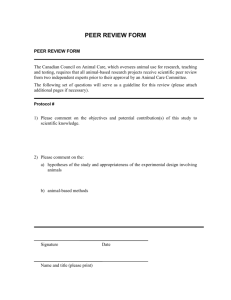

FP7 Grant Agreement 266632. Deliverable No and Title: 1.3. Peer advertisement