
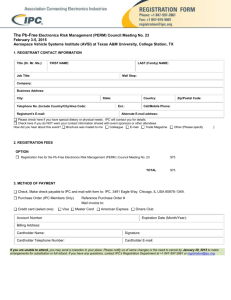


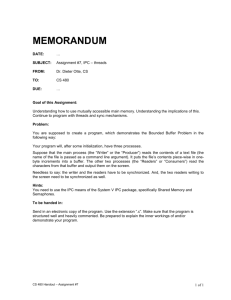

The Case for Thread Migration: Predictable IPC in a Customizable and Reliable OS

Gabriel Parmer
The George Washington University
Computer Science Department
gparmer@gwu.edu

Component-Based OSs and µ-kernels

- Configurability in RT/Embedded systems
  - scheduling policies
  - resource sharing protocols
  - interrupt scheduling
- Reliability in RT/Embedded Systems
  - limit scope of failures
- System policies/abstractions are components
- User-level, separate protection domains
- IPC implementation key for system performance and predictability

[Figure labels: Connection Manager, File Desc. API, HTTP Parser, Content Manager, Async Invs., CGI Service, CGI FD API, Static Content, TCP, Event Manager, Port Manager, MPD Manager, Timed Block, IP, Lock, vNIC, Scheduler, Timer Driver, Network Driver]

Gabe Parmer (CS@GWU), Thread Migration and Synchronous IPC

Synchronous IPC Between Threads

[Figure labels: C0, C1, C2, S0, S1; kernel; wait queue; thread structure]

- Communication operations:
  - Clients: send + wait reply = call
  - Servers: reply + wait msg = reply wait
- Threads bound to a component
  - separate scheduling parameters
  - communication end-points

Thread Migration

[Figure labels: kernel; Thread Structure]

- Invocation/procedure call semantics for communication
- Scheduling context migrates between components
- Separate execution context in each component
- Kernel thread structure tracks invocations

Synchronous IPC and Thread Migration

- Functionally identical
  - interface definition language (IDL)
- L4 and Composite performance
  - Similar published overheads
  - > 2/3 invocation cost is hardware overhead in Composite

Thread Migration vs. Synchronous IPC

- Qualitative comparison wrt predictability
  - worst-case overheads, relevant parameters
  - implications for system design

1. CPU Accounting and Scheduling
2. Communication End-point Contention
3. System Configurability

CPU Accounting, Scheduling: Thread Migration

[Figure labels: C0, C1, TCP, IP]

- Accounting and Scheduling
  - execution charged to, and scheduled wrt the client thread
- Resources within each component must avoid priority inversion
  - component-based locking policies

CPU Accounting, Scheduling: Sync. IPC

[Figure labels: C0, C1, TCP, IP]

1. Accounting
   - execution time not accounted to clients
   - bandwidth servers?
2. Scheduling
   - threads have independent priorities
   - possible priority inversion

CPU Accounting, Scheduling: L4 Implementations

- Lazy scheduling, direct switch
  - don't call scheduler, and directly switch to server
  - optimizations for performance
  - unpredictable scheduling and accounting
- Credo
  - decouple scheduling context from execution context
  - server runs with scheduling ctxt of client thread
  - tracking correct scheduling context O(n) in depth of invocations
- Sync IPC → thread migration

Communication End Points: Sync. IPC

[Figure labels: c0, c1, c2, S0, s1, s2; kernel wait queue]

- IPC worst-case execution-time?
  - O(length of wait queue)
- Client decides thread to invoke, server/kernel know which are available

Communication End Points: Thread Migration

- The target of invocation is the component
- server contains code to locate execution context
  - specialized policies
  - service differentiation
  - allocate new execution contexts

System Customizability

- Configurability for Real-Time/Embedded Systems
  - temporal policies
    - scheduling
    - synchronization
  - reliability vs. predictability

User-Level Component-Based Scheduling

- Composite supports component-based scheduling
  - no kernel scheduler, only dispatching
- Synchronous IPC requires scheduler interaction
  - switch between threads
  - activation of multiple threads
- Can double (or triple) invocation cost

Mutable Protection Domains

[Figure labels: fn, fn]

- Dynamically trade-off fault-isolation for performance
- Raise and lower protection domain boundaries between components
  - remove boundaries where communication overheads are significant
  - raise boundaries when "hot-paths" change
- Overhead of thread dispatching >> overhead of function call

Conclusions

Summary Chart:

|                                    | Sync. IPC: pure | Sync. IPC: ls/ds | Sync. IPC: credo | Thd Migration |
|------------------------------------|-----------------|------------------|------------------|---------------|
| Accounting/Scheduling              | ×               | ××               | ✓*               | ✓             |
| Communication End Point Contention | ×               | ×                | ×                | ✓*            |
| Customizability                    | ✓               | ✓                | ✓                | ✓✓            |

- Qualitative assessment of the predictability of the two models
- Moving sync. IPC implementations toward migrating thread increases predictability
- Migrating thread model good fit for predictable, reliable, configurable systems

? || /* */

Communication End Points: Sync. IPC II

[Figure labels: w, w]

- Execution contexts are the target of invocation
  - client decides which to invoke
  - kernel/server know which are busy

Communication End Points: Sync IPC III

- Synchronous IPC addressing the component
  - kernel manages server threads
- Synchronous IPC → Thread Migration

Stack Manager Component

[Figure label: Stack Manager]

- Upon "stack miss", invoke stack manager component
  - allocate new stack (shown)
  - priority inheritance
    - when a thread using a stack is done, it returns it to be used by the requesting thread
  - QoS-aware stack allocation
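
The accounting and scheduling contrast drawn in the CPU Accounting slides can be illustrated with a toy model. This is only a sketch under stated assumptions, not code from Composite or L4: the `Thread` class and the functions `sync_ipc_call` and `migrating_call` are hypothetical names invented for illustration, time is an abstract integer, and a priority is just a number attached to a thread. It shows where execution time is charged and whose priority the server-side work runs at in each model.

```python
from dataclasses import dataclass, field


@dataclass
class Thread:
    """Hypothetical stand-in for a kernel thread structure."""
    name: str
    priority: int
    cpu_time: int = 0
    # Components this thread is currently executing in (migration model only).
    invocation_stack: list = field(default_factory=list)


def sync_ipc_call(client: Thread, server_thread: Thread, work: int) -> int:
    """Synchronous IPC: a separate server thread performs the work.

    The time is charged to the server thread, and the work runs at the
    server thread's own priority, independent of the client's.
    """
    server_thread.cpu_time += work
    return server_thread.priority


def migrating_call(client: Thread, component: str, work: int) -> int:
    """Thread migration: the client's scheduling context enters the component.

    The time is charged to the client, and the work runs at the client's
    priority. The invocation stack records the cross-component call.
    """
    client.invocation_stack.append(component)  # track the invocation
    client.cpu_time += work                    # charged to the client
    ran_at = client.priority                   # scheduled wrt the client
    client.invocation_stack.pop()              # return from the invocation
    return ran_at


if __name__ == "__main__":
    client = Thread("client", priority=1)
    tcp_server = Thread("tcp_server", priority=5)

    ran_at = sync_ipc_call(client, tcp_server, work=10)
    print("sync IPC:  ran at priority", ran_at,
          "| client time", client.cpu_time,
          "| server time", tcp_server.cpu_time)

    ran_at = migrating_call(client, "tcp", work=10)
    print("migration: ran at priority", ran_at,
          "| client time", client.cpu_time)
```

In the synchronous IPC call the client's `cpu_time` is unchanged and the work runs at the server thread's priority, which is the setting in which the slides raise bandwidth servers and possible priority inversion. In the migrating call both the charge and the priority follow the client.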
