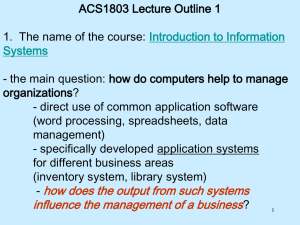

thesis-hes

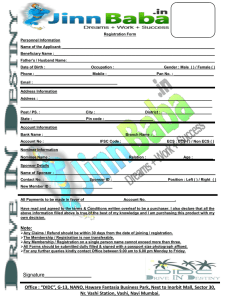

advertisement