Using Statistics in the application of MIM data


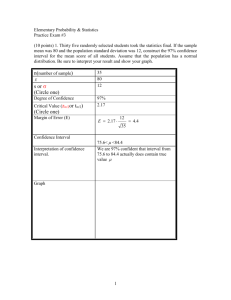

Using MIM Monitoring Data – Statistics Made Simple

Statistics are critical to proper interpretation of data collected using the Multiple Indicator Monitoring (MIM) protocols. They make it possible to reach management decisions when only a portion of the whole population has been sampled. Statistics provide a means of making unbiased assessments from field observations, provided those observations were made using the MIM procedures, including the collection of random samples and an adequate number of observations for the indicators being evaluated. What’s more, statistics provide a way of determining the reliability of our assessments.

Meeting the assumptions of parametric statistics – normal probability distributions

Parametric statistics provide the most powerful tests, but to use them we need to know whether our data fit a normal probability distribution. MIM indicators that are summarized by a mean value, like stubble height and bank alteration, often have a lot of small values and just a few large values. Such data are skewed rather than distributed symmetrically about the mean. You can graph your data using the “Histogram” plot in EXCEL to evaluate the distribution visually. Here are a few examples:

1. Stubble height – Often fits a normal probability distribution. Following is a typical set of data for a species, in this case Nebraska sedge, at a single DMA: Note that the frequency bars approximate a bell shape, with the tallest bars toward the center and shorter bars to the left and right of center.

2. Greenline-greenline width – This indicator usually fits a normal distribution, but in the following example a couple of outliers skew the distribution to the right.

3. Bank alteration – Bank alteration data are typically non-normal in distribution. That is because there are usually a lot of 0 and 1 values, fewer 2 and 3 values, and sometimes very few or no 4 and 5 values.
Thus we almost always transform the data, usually by taking the logarithm after adding 1 to each value (to remove zeros, whose logarithm is undefined). It is common for riparian data, like bank alteration, to have a lot of small values and just a few larger values. In such situations it is common practice to take the logarithm of the data so that they more closely fit a normal probability distribution, and then to conduct the statistical tests using the transformed values.

There are more sophisticated tests for evaluating fit to a normal probability distribution. You can download statistical software that works as an add-in to MS EXCEL and is very easy to use. Examples include XLSTAT and ANALYSE-IT, both of which contain standard tests for normality. You can easily produce normal probability plots and box plots from the data in your Data Analysis Module. Directions are provided on how to use the tests. Even more sophisticated tests of normality, like the Kolmogorov-Smirnov test, are included. For further information see: http://www.analyseit.com/products/standard/

Rules of thumb for deciding whether or not a parametric test is appropriate:

After we have transformed the data (assuming we decided the original raw data did not fit a normal probability distribution), we still need to decide whether the data can be used in a parametric test. Use the following rules of thumb (BLM Tech Reference 1730-1). If the variances of the samples being compared are within a factor of 2 to 3 of each other, the assumption of homogeneity of variances can be considered met. If a plot of the observations shows that they are not heavily skewed, you can assume the data are close enough to a normal distribution to use parametric statistics. A standard deviation that is about the same size as, or larger than, the mean indicates that the distribution is heavily skewed.

Comparing samples – a major purpose of monitoring

One of the primary objectives of monitoring is to determine if a change has occurred through time.
Did the parameter of interest go up or down? In addition, we typically use monitoring data to assess compliance. Did the average value for the parameter meet or exceed the management criteria? These are simple comparison tests, and they are important in making management decisions. For example, a management objective to achieve a bank stability rating of 80 or greater in the next 10 years often requires that positive change occur each year. Statistics help us know whether the change occurred and how reliable our conclusion is. Another management objective, commonly used in livestock grazing, is to allow no more than a certain level of stream bank alteration or to graze a key plant species to no less than a particular value. Statistical tests are used to show whether the management objective was achieved and the probability that it actually occurred.

As mentioned above, parametric tests are the most powerful tests, but they require that the data fit a normal probability distribution. If we are satisfied that the data, as-is or transformed, are normally distributed, then we might apply the t-test, a commonly used statistic for testing differences in the means of two samples (for example, trend differences from one year to another). This test is convenient because it comes standard within MS EXCEL (make sure you have loaded the Analysis ToolPak add-in by clicking on the OFFICE button and selecting EXCEL Options). The following example shows how you would conduct a t-test using samples from two Data Analysis Modules (a two-sample test) as a test of trend.

Test for trend – using Parametric Statistics:

In this test, we will examine the change in greenline-greenline width (GGW) at a site through time. The first sample was collected 5 years prior to the second. Through time the stream has been narrowing. First we articulate the null hypothesis:

Null Hypothesis: No change has occurred in the GGW.
If, through our significance test, we conclude that the observed change is unlikely to be due to chance alone, we reject the null hypothesis in favor of an alternative hypothesis: that there has been a change in the GGW through time. To test our null hypothesis, we need to specify the significance level of the test, that is, the threshold P value. In other words, how big a difference between sample means constitutes an observed change? The P value is the probability of obtaining a value of the test statistic as large as or larger than the one computed from the data when in reality there is no difference between the two populations. For GGW we will select a very small threshold value for P, P = .01.

Two sets of data are taken from the Data Analysis Modules for Bear Creek, one from 2005 and one from 2010, as follows (this is a partial list of the data; there were 80 samples in total): The means are 2.5 meters in 2005 and 1.9 meters in 2010.

In EXCEL we select “Data Analysis” and the following screen appears: Select “t-Test: Two-Sample Assuming Equal Variances”. This test assumes the samples came from separate populations (different years) but that the variances are approximately the same, which we would expect given that our data come from measurements using the same sampling protocol. The following screen appears, where we indicate the ranges of our data. Variable 1 is the 2010 set of data, Variable 2 the 2005 set. The output was placed in cell D1, and is as follows:

Note that there are two types of tests, one-tail and two-tail. For trend analysis we would typically use the two-tail test because we need to detect change in either possible direction (smaller or larger values of the mean). But a one-tail test can be used when only one direction of change is of interest, which is the present case: the stream has been narrowing, so the null hypothesis is that the mean GGW has not decreased. Based on the analysis above, the one-tail P value is .000789, less than our threshold value of .01, so we reject the null hypothesis and conclude that there is a difference. How big is this difference?
The difference between the sample means is 2.5 minus 1.9, or 0.6 meters. The P value tells us how reliable that conclusion is: if there had truly been no change in GGW, a difference this large would be expected by chance in only about .08% of cases (P = .000789). That is well below the 1% risk (P = .01) of wrongly rejecting the null hypothesis that we set as our threshold. When a difference is significant, the absolute value of “t Stat” in this table will be larger than the value for “t Critical one-tail”. (Here t Stat is negative because Variable 1, the 2010 mean, is smaller than Variable 2, the 2005 mean.) Remember that the significance level was set at .01.

Test for trend – using Non-Parametric Statistics:

In this test we examine the difference between two samples of bank stability through time. Bank stability and cover are simply presence/absence data. Either the bank is covered or it is not. It is either stable or it is not. Thus a sample is a yes/no observation rather than a measurement. The data actually describe a frequency. For example, if a stream bank is 80% stable, that means that 80 percent of the plots, or a frequency of 8 out of 10, were observed to be stable. Such data are analyzed using a contingency table and Fisher's exact test (see BLM Tech Reference 1730-1).

In 2005 Bear Creek had a bank stability of 66% and in 2010, 78%. We start with a contingency table like the following: In 2005 Bear Creek had 50 stable plots and 26 unstable plots. That changed in 2010 to 62 and 18 respectively. You can use a free on-line calculator to derive P values for the test. Here’s an example:

Null Hypothesis: No change has occurred in Bank Stability.

If, through our significance test, we conclude that the observed change is unlikely to be due to chance alone, we reject the null hypothesis in favor of an alternative hypothesis: that there has been a change in the Bank Stability through time. For bank stability, we might use a P value of .25 as the threshold value for change. Given that our computed P value is .1129, which is less than .25, we would reject the null hypothesis and conclude that bank stability had changed.

We can also use the Chi square statistic to validate the P values calculated using Fisher's exact test. The following steps are used:

1. Display the contingency table with proportions as follows:

| | Stable | Unstable | Totals |
|---|---|---|---|
| 2005 | 50 (.66) | 26 (.34) | 76 |
| 2010 | 62 (.78) | 18 (.22) | 80 |
| Totals | 112 | 44 | 156 |

2. Determine the number expected if there was no difference between 2005 and 2010 by using the average of the proportions as applied to each year. In the above example, the stable proportion is .66 in 2005 and .78 in 2010. The midpoint between these is .78 – (.78 – .66)/2 = .72. Thus .72 times the total of 76 in 2005 is 55. The “Expected” contingency table looks like this:

| | Stable | Unstable | Totals |
|---|---|---|---|
| 2005 | 55 (.72) | 22 (.28) | 76 |
| 2010 | 57 (.72) | 22 (.28) | 80 |
| Totals | 112 | 44 | 156 |

3. As described in BLM Tech Reference 1730-1, now compute the chi square statistic as follows (can be done in EXCEL):

$$\chi^2 = \sum \frac{(O - E)^2}{E}$$

Where: $\chi^2$ is the chi square statistic, $\sum$ is the summation symbol, $O$ is the number observed, and $E$ is the number expected. Applying this to the example:

$$\chi^2 = \frac{(50 - 55)^2}{55} + \frac{(26 - 22)^2}{22} + \frac{(62 - 57)^2}{57} + \frac{(18 - 22)^2}{22}$$

This formula is entered into EXCEL and gives a Chi Square value of 2.348.

4. Look up the Chi Square critical value in the table in BLM Tech Reference 1730-1 using the threshold value, .25, and the degrees of freedom, which for a 2x2 contingency table is 1. To see whether our Chi Square value of 2.348 is large enough to be significant, we compare it to the table value at 1 DF and .25, which is 1.3. Because 2.348 is larger than 1.3, the Chi Square test validates our conclusion from Fisher's exact test: the null hypothesis is rejected and we conclude that bank stability has changed.

Testing for compliance with a standard and/or criteria

Fortunately the MIM Data Analysis Module provides a simple way to test for compliance. Confidence intervals are provided with the metric summary data, and their use is explained in the MIM Technical Reference (1737-23).
There are two types of confidence intervals in the table. The first is the 95% confidence interval based upon the standard deviation of the sample data, and the second is the 95% confidence interval on observer variation as displayed in table F7 of the MIM Technical Reference Appendix. Values for these confidence intervals (CI values) are provided in the Summary worksheet of the Data Analysis Module for each displayed metric that is calculated as a mean value. Thus for stubble height, a CI of .96 is displayed. That is the confidence interval from the repeat data and represents the average difference between observers. Thus any result within .96 inch of the stubble height standard would not be considered different from the standard itself.

For example, on Meadow Creek we measured a mean stubble height of 4.4 inches from 80 plots and 142 individual observations involving several key species. The stubble height standard for Meadow Creek is to graze to an average stubble height of no less than 5 inches, plus or minus the 95% confidence interval. The stubble height standard was NOT exceeded, since 5” minus 4.4” equals .6”, which is within the confidence interval of .96. We then examine the other confidence interval computed by the Data Analysis Module. In this case the CI is only .3, so it would appear that the standard was exceeded, since 4.4 is outside the interval 4.7 to 5.3. However, we always default to the larger of the two confidence intervals, in this case 5” plus or minus .96”, and conclude that the stubble height standard was not exceeded. Refer to Appendix F in Technical Reference 1737-23 for more details about the use of the confidence interval methods.
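
The compliance decision described above can be sketched in a few lines of Python. This is only an illustration of the rule as stated here (use the larger of the two confidence intervals, then ask whether the measured mean falls within that distance of the standard). The function name `compliance_check` and its parameter names are hypothetical and are not part of the MIM Data Analysis Module.

```python
def compliance_check(mean, standard, ci_sample, ci_observer):
    """Compare a measured mean with a standard using the larger of two CIs.

    ci_sample   -- 95% CI based on the standard deviation of the sample data
    ci_observer -- 95% CI on observer variation (from repeat data)
    All names here are illustrative assumptions, not MIM module fields.
    """
    ci_used = max(ci_sample, ci_observer)   # always default to the larger CI
    difference = abs(standard - mean)       # distance of the mean from the standard
    return {
        "ci_used": ci_used,
        "difference": round(difference, 2),
        # True means the result is not considered different from the standard
        "within_ci": difference <= ci_used,
        # What the smaller, sample-based CI alone would have suggested
        "within_sample_ci": difference <= ci_sample,
    }


# Meadow Creek values from the text: mean 4.4", standard 5", CIs of .3 and .96
result = compliance_check(mean=4.4, standard=5.0, ci_sample=0.3, ci_observer=0.96)
print(result)
```

With the Meadow Creek values, the difference of .6 inch lies within the larger interval of .96 but outside the smaller interval of .3, which matches the reasoning in the example.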