06_Inference-issues-in-OLS


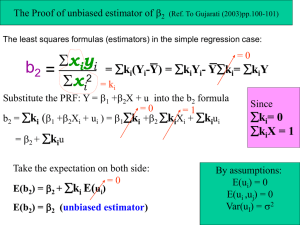

Inference issues in OLS

Amine Ouazad, Assistant Professor of Economics

Outline

1. Heteroscedasticity
2. Clustering
3. Generalized Least Squares
   1. For heteroscedasticity
   2. For autocorrelation

HETEROSCEDASTICITY

Issue

• The issue arises whenever the variance of the residual differs across observations, or depends on the value of the covariates.

Example #1

Conventional standard errors:

| Source | SS | df | MS |
|---|---|---|---|
| Model | 9202.81992 | 1 | 9202.81992 |
| Residual | 968.636231 | 998 | .970577386 |
| Total | 10171.4561 | 999 | 10.1816378 |

Number of obs = 1000, F(1, 998) = 9481.80, Prob > F = 0.0000, R-squared = 0.9048, Adj R-squared = 0.9047, Root MSE = .98518

| y | Coef. | Std. Err. | t | P>\|t\| | [95% Conf. | Interval] |
|---|---|---|---|---|---|---|
| x | 3.057914 | .0314036 | 97.37 | 0.000 | 2.996289 | 3.119538 |
| _cons | 1.023541 | .0311542 | 32.85 | 0.000 | .9624057 | 1.084676 |

[Figure: scatter plot of y against x]

. regress y x, robust

Linear regression: Number of obs = 1000, F(1, 998) = 3502.87, Prob > F = 0.0000, R-squared = 0.9048, Root MSE = .98518

| y | Coef. | Robust Std. Err. | t | P>\|t\| | [95% Conf. | Interval] |
|---|---|---|---|---|---|---|
| x | 3.057914 | .051667 | 59.19 | 0.000 | 2.956525 | 3.159302 |
| _cons | 1.023541 | .0311581 | 32.85 | 0.000 | .9623982 | 1.084684 |

Example #2

. regress y1 x

| Source | SS | df | MS |
|---|---|---|---|
| Model | 74981.0212 | 1 | 74981.0212 |
| Residual | 13409.9859 | 640 | 20.9531029 |
| Total | 88391.0071 | 641 | 137.895487 |

Number of obs = 642, F(1, 640) = 3578.52, Prob > F = 0.0000, R-squared = 0.8483, Adj R-squared = 0.8481, Root MSE = 4.5775

| y1 | Coef. | Std. Err. | t | P>\|t\| | [95% Conf. | Interval] |
|---|---|---|---|---|---|---|
| x | 16.07456 | .2687123 | 59.82 | 0.000 | 15.54689 | 16.60222 |
| _cons | 9.339686 | .2382014 | 39.21 | 0.000 | 8.871936 | 9.807437 |

. regress y1 x, robust

Linear regression: Number of obs = 642, F(1, 640) = 1168.94, Prob > F = 0.0000, R-squared = 0.8483, Root MSE = 4.5775

| y1 | Coef. | Robust Std. Err. | t | P>\|t\| | [95% Conf. | Interval] |
|---|---|---|---|---|---|---|
| x | 16.07456 | .4701565 | 34.19 | 0.000 | 15.15132 | 16.99779 |
| _cons | 9.339686 | .2079953 | 44.90 | 0.000 | 8.931251 | 9.748122 |

[Figure: scatter plot of y1 against x]

Here Var(y|x) is clearly increasing in x. Notice that the conventional standard error understates the width of the confidence interval for the coefficient on x.

Visual checks with multiple variables

• Use the vector of estimates b to predict E(Y|X) with the Stata command predict xb, xb.
• Draw the scatter plot of the dependent variable y against the prediction Xb on the horizontal axis.

. regress y3 x z

| Source | SS | df | MS |
|---|---|---|---|
| Model | 61926.4748 | 2 | 30963.2374 |
| Residual | 8288.16585 | 639 | 12.9705256 |
| Total | 70214.6407 | 641 | 109.539221 |

Number of obs = 642, F(2, 639) = 2387.20, Prob > F = 0.0000, R-squared = 0.8820, Adj R-squared = 0.8816, Root MSE = 3.6015

| y3 | Coef. | Std. Err. | t | P>\|t\| | [95% Conf. | Interval] |
|---|---|---|---|---|---|---|
| x | 1.835086 | .2183514 | 8.40 | 0.000 | 1.406313 | 2.263859 |
| z | 3.015666 | .0468961 | 64.31 | 0.000 | 2.923577 | 3.107755 |
| _cons | 1.104964 | .1878993 | 5.88 | 0.000 | .7359896 | 1.473939 |

. predict xb, xb

. scatter y3 xb

[Figure: scatter plot of y3 against the linear prediction]

Causes

• An unobservable that affects the variance of the residuals, but not their mean conditional on x.
– $y = a + bx + \varepsilon$,
– with $\varepsilon = \eta z$. The shock $\eta$ satisfies $E(\eta \mid x) = 0$, and $E(z \mid x) = 0$, but the variance $\mathrm{Var}(z \mid x)$ depends on an unobservable z.
– $E(\varepsilon \mid x) = 0$ (exogeneity), but $\mathrm{Var}(\varepsilon \mid x) = \mathrm{Var}(\eta z \mid x)$ depends on x (previous example #1).
• In practice, most regressions have heteroscedastic residuals.

Examples

• Variability of stock returns depends on the industry.
– $\text{Stock Return}_{i,t} = a + b\,\text{Market Return}_t + \varepsilon_{i,t}$.
• Variability of unemployment depends on the state/country.
– $\text{Unemployment}_{i,t} = a + b\,\text{GDP Growth}_t + \varepsilon_{i,t}$.
• Notice that both the inclusion of industry/state dummies and controlling for heteroscedasticity may be necessary.

Heteroscedasticity: the framework

$$\mathrm{Var}(\varepsilon_i \mid x_i) = \sigma_i^2 = \sigma^2 \omega_i$$

• We set the $\omega_i$ so that they are all positive and their sum is equal to n. The trace of the matrix $\Omega$ (see matrix appendix) is therefore equal to n.

Consequences

1. The OLS estimator is still unbiased, consistent and asymptotically normal (this only depends on A1-A3).
2. But the OLS estimator is then inefficient (the proof of the Gauss-Markov theorem relies on homoscedasticity).
3. And the confidence intervals calculated assuming homoscedasticity typically overstate the precision of the estimates: they are too narrow.

Variance-covariance matrix of the estimator

• Asymptotically:

$$\text{Asy. Var}(b) = \frac{\sigma^2}{n}\left(\operatorname{plim}\frac{X'X}{n}\right)^{-1}\left(\operatorname{plim}\frac{X'\Omega X}{n}\right)\left(\operatorname{plim}\frac{X'X}{n}\right)^{-1}$$

• At finite and fixed sample size:

$$\mathrm{Var}(b \mid X) = \sigma^2 (X'X)^{-1}\left(\sum_{i=1}^{n} \omega_i x_i x_i'\right)(X'X)^{-1}$$

• $x_i$ is the i-th vector of covariates, a vector of size K.
• Notice that if the $\omega_i$ are all equal to 1, we are back to the homoscedastic case and we get $\mathrm{Var}(b \mid X) = \sigma^2 (X'X)^{-1}$.
• We use the finite sample size formula to design an estimator of the variance-covariance matrix.

White heteroscedasticity-consistent estimator of the variance-covariance matrix

$$\text{Est. Asy. Var}(b) = (X'X)^{-1}\left(\sum_{i=1}^{n} e_i^2 x_i x_i'\right)(X'X)^{-1}$$

• The formula uses the estimated residuals $e_i$ of each observation, computed with the OLS estimator of the coefficients.
• This estimator is consistent (plim Est. Asy. Var(b) = Var(b)), but may be unreliable for small sample sizes.
• This is the formula used by the Stata robust option (up to a degrees-of-freedom adjustment).
• From this, the square root of the k-th diagonal element is the standard error of the k-th coefficient.

Test for heteroscedasticity

• Null hypothesis H0: $\sigma_i^2 = \sigma^2$ for all i = 1, 2, …, n.
• Alternative hypothesis Ha: at least one residual has a different variance.
• Steps:
1. Estimate the model by OLS and predict the residuals $e_i$.
2. Regress the square of the residuals on a constant, the covariates, their squares and their cross products (P regressors in total).
3. Under the null, all of the coefficients other than the constant should be equal to 0, and $nR^2$ of this regression is asymptotically distributed as a $\chi^2$ with P-1 degrees of freedom.

Suggests another visual check

• Examples #1 and #2 with one covariate.

[Figure: squared residuals (residsq) against x]

• Example with two covariates.

[Figures: squared residuals (resid3sq) against z, and against the linear prediction]

Stata take-aways

• Always use robust standard errors
– The robust option is available for most regressions.
– This is regardless of the use of covariates. Adding a covariate does not free you from the burden of heteroscedasticity.
• Test for heteroscedasticity:
– hettest (estat hettest in recent versions of Stata) reports a chi-squared statistic, its degrees of freedom, and the p-value; estat imtest, white runs the White test described above.
– A p-value lower than 0.05 rejects the null of homoscedasticity at the 5% significance level.
– With small sample sizes, the test may be used to decide whether robust standard errors can be avoided.

CLUSTERING

Clustering, example #1

• The typical problem with clustering is the existence of a common unobservable component…
– Common to all observations in a country, a state, a year, etc.
• Take $y_{it} = x_{it}\beta + \varepsilon_{it}$, a panel dataset where the residual is $\varepsilon_{it} = u_i + \eta_{it}$.
• Exercise: Calculate the variance-covariance matrix of the residuals.

Clustering, example #2

• Another occurrence of clustering is the use of data at a higher level of aggregation than the individual observation.
– Example: $y_{ij} = x_{ij}\beta + z_j\gamma + \varepsilon_{ij}$.
– In practice (though not necessarily in theory), this implies that $\mathrm{Cov}(\varepsilon_{ij}, \varepsilon_{i'j})$ is nonzero.
• Example:
– regression $\text{performance}_{it} = c + d\,\text{policy}_{j(i)} + \varepsilon_{it}$.
– regression $\text{stock return}_{it} = \text{constant} + b\,\text{Market}_t + \varepsilon_{it}$.

Moulton paper

The clustering model

• Notice that the variance-covariance matrix of the residuals can be designed by blocks, with one block per group.
• In this model, the OLS estimator is unbiased and consistent, but inefficient, and the conventionally estimated variance-covariance matrix is biased.

True variance-covariance matrix

• With all the covariates fixed within group, the variance-covariance matrix of the estimator is:

$$\mathrm{Var}(b \mid X) = \sigma^2 (X'X)^{-1}\,\bigl(1 + (m-1)\rho\bigr)$$

• where m = n/p is the number of observations per group (p being the number of groups) and $\rho$ is the correlation of the residuals within a group.
• This formula is not exact when there are individual-specific covariates, but the term $(1 + (m-1)\rho)$ can be used as an approximate correction factor.

[Table: Descriptive Statistics]

Stata

• regress y x, cluster(unit) robust.
– Clustering and robust standard errors should be used at the same time.
– This is the OLS estimator with corrected standard errors.
– If x includes unit-specific variables, we cannot add a unit (state/firm/industry) dummy as well.
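The approximate correction factor $(1 + (m-1)\rho)$ can be turned into a quick back-of-the-envelope check. The following is a minimal sketch in plain Python rather than Stata; the function names and the input values (group size, within-group correlation, conventional standard error) are hypothetical and chosen only for illustration.

```python
import math


def moulton_factor(m: float, rho: float) -> float:
    """Variance inflation 1 + (m - 1) * rho for groups of m observations."""
    return 1.0 + (m - 1.0) * rho


def moulton_corrected_se(conventional_se: float, m: float, rho: float) -> float:
    """Scale a conventional standard error by the square root of the factor,
    since the factor applies to the variance, not to the standard error."""
    return conventional_se * math.sqrt(moulton_factor(m, rho))


# Hypothetical inputs: 20 observations per group, within-group correlation 0.05,
# and a conventional standard error of 0.10.
factor = moulton_factor(m=20, rho=0.05)
corrected = moulton_corrected_se(conventional_se=0.10, m=20, rho=0.05)
print(f"variance inflation: {factor:.2f}, corrected s.e.: {corrected:.4f}")
```

Even a small within-group correlation inflates the variance noticeably when groups are large, which is why conventional standard errors can be badly understated with group-level covariates.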
Multi-way clustering

• Multi-way clustering:
– “Robust inference with multi-way clustering”, Cameron, Gelbach and Miller, NBER Technical Working Paper No. 327 (2006).
• Has become the new norm very recently.
• Example: clustering by year and state.
– $y_{it} = x_{it}\beta + z_i\gamma + w_t\delta + \varepsilon_{it}$
– What do you expect?
• ivreg2 , cluster(id year) .
• ssc install ivreg2.

GENERALIZED LEAST SQUARES

OLS is BLUE only under A4

• OLS is not BLUE if the variance-covariance matrix of the residuals is not of the form $\sigma^2 I$, that is, if it has nonzero off-diagonal terms or unequal diagonal terms.
• What should we do?
• Take the general linear model $Y = X\beta + \varepsilon$.
• And assume that $\mathrm{Var}(\varepsilon \mid X) = \sigma^2\Omega$.
• Then take the inverse square root of the matrix, $\Omega^{-1/2}$. This is a matrix that satisfies $\Omega^{-1} = (\Omega^{-1/2})'\,\Omega^{-1/2}$. This matrix exists for any positive definite matrix $\Omega$.

Sphericized model

• The sphericized model is: $\Omega^{-1/2} Y = \Omega^{-1/2} X\beta + \Omega^{-1/2}\varepsilon$
• This model satisfies A4 since $\mathrm{Var}(\Omega^{-1/2}\varepsilon \mid X) = \sigma^2 I$.

Generalized Least Squares

• The GLS estimator is:

$$\hat\beta_{GLS} = (X'\Omega^{-1}X)^{-1} X'\Omega^{-1}Y$$

• This estimator is BLUE. It is the efficient estimator of the parameter $\beta$.
• This estimator is also consistent and asymptotically normal.
• Exercise: prove that the estimator is unbiased, and that the estimator is consistent.

Feasible Generalized Least Squares

• The matrix $\Omega$ is in general unknown. We estimate it by $\hat\Omega$, using a procedure (see later) such that plim $\hat\Omega = \Omega$.
• Then the FGLS estimator $\hat\beta = (X'\hat\Omega^{-1}X)^{-1} X'\hat\Omega^{-1}Y$ is a consistent estimator of $\beta$.
• The typical problem is the estimation of $\Omega$. There is no one-size-fits-all estimation procedure.

GLS for heteroscedastic models

• Taking the formula of the GLS estimator, with a diagonal variance-covariance matrix:

$$\hat\beta_{GLS} = \left(\sum_{i=1}^{n} \frac{x_i x_i'}{\omega_i}\right)^{-1} \sum_{i=1}^{n} \frac{x_i y_i}{\omega_i}$$

• Each weight is the inverse of $\omega_i$, or equivalently the inverse of $\sigma_i^2$: scaling the weights has no impact.
• Stata application exercise:
– Calculate weights and use the weighted OLS estimator regress y x [aweight=w] to calculate the heteroscedastic GLS estimator, on a dataset of your choice.

GLS for autocorrelation

• Autocorrelation is pervasive in finance.
Assume that $\varepsilon_t = \rho\,\varepsilon_{t-1} + \eta_t$ (we say that $\varepsilon_t$ is AR(1)), where $\eta_t$ is the innovation, uncorrelated with $\varepsilon_{t-1}$.
• The problem is the estimation of $\rho$. A natural estimator of $\rho$ is the coefficient of the regression of $e_t$ on $e_{t-1}$.
• Exercise 1 (for advanced students): find the inverse of $\Omega$.
• Exercise 2 (for advanced students): find $\Omega$ for an AR(2) process.
• Exercise 3 (for advanced students): what about MA(2)?
• Variation: panel-specific AR(1) structure.

Autocorrelation example

. webuse invest2

. regress invest stock market

| Source | SS | df | MS |
|---|---|---|---|
| Model | 5532554.18 | 2 | 2766277.09 |
| Residual | 1570883.64 | 97 | 16194.6767 |
| Total | 7103437.82 | 99 | 71751.8972 |

Number of obs = 100, F(2, 97) = 170.81, Prob > F = 0.0000, R-squared = 0.7789, Adj R-squared = 0.7743, Root MSE = 127.26

| invest | Coef. | Std. Err. | t | P>\|t\| | [95% Conf. | Interval] |
|---|---|---|---|---|---|---|
| stock | .3053655 | .0435078 | 7.02 | 0.000 | .2190146 | .3917165 |
| market | .1050854 | .0113778 | 9.24 | 0.000 | .0825036 | .1276673 |
| _cons | -48.02974 | 21.48016 | -2.24 | 0.028 | -90.66192 | -5.397555 |

[Figure: scatter plot of invest against the linear prediction]

. xtset company time

panel variable: company (strongly balanced); time variable: time, 1 to 20; delta: 1 unit

. regress resid l.resid

| Source | SS | df | MS |
|---|---|---|---|
| Model | 1212772.8 | 1 | 1212772.8 |
| Residual | 338031.953 | 93 | 3634.75219 |
| Total | 1550804.76 | 94 | 16497.9229 |

Number of obs = 95, F(1, 93) = 333.66, Prob > F = 0.0000, R-squared = 0.7820, Adj R-squared = 0.7797, Root MSE = 60.289

| resid | Coef. | Std. Err. | t | P>\|t\| | [95% Conf. | Interval] |
|---|---|---|---|---|---|---|
| resid L1. | .9366451 | .051277 | 18.27 | 0.000 | .8348191 | 1.038471 |
| _cons | -1.86626 | 6.185532 | -0.30 | 0.764 | -14.1495 | 10.41698 |

[Figure: scatter plot of the residuals against the lagged residuals]

GLS for clustered models

• Correlation $\rho$ within each group.
• Exercise: write down the variance-covariance matrix $\Omega$ of the residuals.
• Put forward an estimator of $\rho$.
• What is the GLS estimator of $\beta$ in $Y = X\beta + \varepsilon$ with clustering?
• Estimation using xtreg, re (xtgls has no re option).

Applications of GLS

• The Generalized Least Squares model is seldom used.
In practice, the variance of the OLS estimator is corrected for heteroscedasticity or clustering.
– Take-away: use regress , cluster(.) robust
– Otherwise: xtgls, panels(hetero)
– xtgls, panels(correlated)
– xtgls, panels(hetero) corr(ar1)
• The GLS is mostly used for the estimation of random effects models.
– xtreg, re

CONCLUSION: NO WORRIES

Take-aways for this session

1. Use regress, robust; always, unless the sample size is small.
2. Use regress, robust cluster(unit) if:
– You believe there are common shocks at the unit level.
– You have included unit-level covariates.
3. Use ivreg2, cluster(unit1 unit2) for two-way clustering.
4. Use xtgls for the efficient FGLS estimator with correlated, AR(1) or heteroscedastic residuals.
– This might allow you to shrink the confidence intervals further, but beware that this is less standard than the previous methods.
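To make take-aways 1 and 2 concrete, here is a minimal sketch in plain Python (not Stata) of what the conventional, robust and cluster-robust standard errors compute for the slope of a regression with one covariate and a constant. The function name, the data and the cluster labels are made up for illustration, and the sketch applies no finite-sample adjustment to the robust and cluster formulas, so its numbers would not match Stata's output exactly.

```python
import math


def slope_standard_errors(x, y, cluster):
    """OLS slope of y on x (with a constant) and three standard errors:
    conventional, White (HC0), and cluster-robust."""
    n = len(x)
    xbar = sum(x) / n
    ybar = sum(y) / n
    dx = [xi - xbar for xi in x]              # demeaned covariate
    sxx = sum(d * d for d in dx)

    b = sum(d * (yi - ybar) for d, yi in zip(dx, y)) / sxx
    a = ybar - b * xbar
    e = [yi - a - b * xi for xi, yi in zip(x, y)]   # OLS residuals

    # Conventional: s^2 / Sxx, valid under homoscedasticity only.
    s2 = sum(ei * ei for ei in e) / (n - 2)
    se_conventional = math.sqrt(s2 / sxx)

    # White sandwich for the slope: sum of (dx_i * e_i)^2, divided by Sxx^2.
    se_white = math.sqrt(sum((d * ei) ** 2 for d, ei in zip(dx, e))) / sxx

    # Cluster-robust: sum dx_i * e_i within each cluster before squaring.
    scores = {}
    for d, ei, g in zip(dx, e, cluster):
        scores[g] = scores.get(g, 0.0) + d * ei
    se_cluster = math.sqrt(sum(s * s for s in scores.values())) / sxx

    return b, se_conventional, se_white, se_cluster


# Made-up data: six observations in three clusters of two.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [1.0, 3.2, 2.9, 5.1, 4.8, 7.4]
cluster = ["a", "a", "b", "b", "c", "c"]

b, se_c, se_w, se_cl = slope_standard_errors(x, y, cluster)
print(f"slope {b:.4f}: conventional {se_c:.4f}, White {se_w:.4f}, cluster {se_cl:.4f}")
```

The only difference between the White and the cluster-robust formulas is whether the products of the demeaned covariate and the residual are squared one by one or summed within each cluster first; with one observation per cluster the two coincide.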