Data Fitting

advertisement

Python Crash Course

Data Fitting

Sterrenkundig Practicum 2

V1.0

dd 07-01-2015

Hour 7

Data Fitting

We are given:

–

observational data

–

a model with a set of fit parameters

Goal:

–

Want to optimize some objective function.

–

E.g.: Want to minimize squared difference between some model and some given data

What we do not talk about:

–

How to choose your objective function.

–

How to choose your model.Least squares fitting

Data Fitting

Fitting Lines to Data

–

Define function to fit

–

Define range and step size for all data points

–

Calculate best fitting line

import numpy as np

import pylab as pl

# Data Fitting

# First, we'll generate some fake data to use

x = np.linspace(0,10,50) # 50 x points from 0 to 10

# Remember, you can look at the help for linspace too:

# help(np.linspace)

# y = m x + b

y = 2.5 * x + 1.2

# let's plot that

pl.clf()

pl.plot(x,y)

Data Fitting

# looks like a simple line.

pl.plot(x,y,marker='s')

Noise

# We need to add noise first

noise = pl.randn(y.size)

# What's y.size?

print y.size

print len(y)

50

50

But we want to see the individual data points

Data Fitting

# We can add arrays in python just like in IDL

noisy_flux = y + noise

# We'll plot it too, but this time without any lines

# between the points, and we'll use black dots

# ('k' is a shortcut for 'black', '.' means 'point')

pl.clf() # clear the figure

pl.plot(x,noisy_flux,'k.')

# We need labels, of course

pl.xlabel("Time")

pl.ylabel("Flux")

Data Fitting

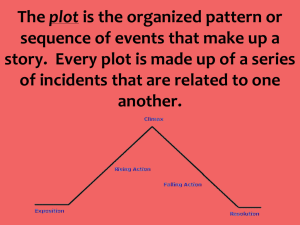

Now we're onto the fitting stage. We're going to fit a function of the form

y=m∗x+b

which is the same as

f(x)=p[1]∗x+p[0]

to the data. This is called "linear regression", but it is also a special case of a more general

concept: this is a first-order polynomial. "First Order" means that the highest exponent of x

in the equation is 1

#

#

p

#

We'll use polyfit to find the values of the coefficients.

parameter is the "order"

= np.polyfit(x,noisy_flux,1)

help(polyfit) if you want to find out more

The third

#

#

p

#

[

We'll use polyfit to find the values of the coefficients.

parameter is the "order"

= np.polyfit(x,noisy_flux,1)

help(polyfit) if you want to find out more

2.59289715 0.71443764]

The third

Data Fitting

Let's do the same thing with a noisier data set. I'm going to leave out most of the comments

this time.

noisy_flux = y+noise*10

p = polyfit(x,noisy_flux,1)

print p

[ 3.4289715 -3.65562359]

# plot it

pl.clf() # clear the figure

pl.plot(x,noisy_flux,'k.') # repeated from above

pl.plot(x,np.polyval(p,x),'r-',label="Best fit") # A red solid line

pl.plot(x,2.5*x+1.2,'b--',label="Input") # a blue dashed line showing the REAL line

pl.legend(loc='best') # make a legend in the best location

pl.xlabel("Time") # labels again

pl.ylabel("Flux")

Data Fitting

Despite the noisy data, our fit is still pretty good! One last plotting trick.

pl.clf() # clear the figure

pl.errorbar(x,noisy_flux,yerr=10,marker='.',color='k',linestyle='none') # errorbar

requires some extras to look nice

pl.plot(x,np.polyval(p,x),'r-',label="Best fit") # A red solid line

pl.plot(x,2.5*x+1.2,'b--',label="Input") # a blue dashed line showing the REAL line

pl.legend(loc='best') # make a legend in the best location

pl.xlabel("Time") # labels again

pl.ylabel("Flux")

Power Law Fitting

Power laws occur all the time in physis, so it's a good idea to learn how to use them.

What's a power law? Any function of the form:

f(t)=atb

where t is your independent variable, a is a scale parameter, and b is the exponent (the power).

When fitting power laws, it's very useful to take advantage of the fact that "a power law is linear

in log-space". That means, if you take the log of both sides of the equation (which is allowed)

and change variables, you get a linear equation!

ln(f(t))=ln(atb)=ln(a)+bln(t)

We'll use the substitutions y=ln(f(t)), A=ln(a), and x=ln(t), so that

y=a+bx

which looks just like our linear equation from before (albeit with different letters for the fit

parameters).

t

a

b

z

=

=

=

=

np.linspace(0.1,10)

1.5

2.5

a*t**b

pl.clf()

pl.plot(t,z)

Power Law Fitting

# Change the variables

# np.log is the natural log

y = np.log(z)

x = np.log(t)

pl.clf()

pl.plot(x,y)

pl.ylabel("log(z)")

pl.xlabel("log(t)")

Power Law Fitting

It's a straight line. Now, for our "fake data", we'll add the noise before transforming from

"linear" to "log" space

noisy_z = z + pl.randn(z.size)*10

pl.clf()

pl.plot(t,z)

pl.plot(t,noisy_z,'k.')

noisy_y = np.log(noisy_z)

pl.clf()

pl.plot(x,y)

pl.plot(x,noisy_y,'k.')

pl.ylabel("log(z)")

pl.xlabel("log(t)")

Power Law Fitting

Note how different this looks from the "noisy line" we plotted earlier. Power laws are much

more sensitive to noise! In fact, there are some data points that don't even show up on this

plot because you can't take the log of a negative number. Any points where the random

noise was negative enough that the curve dropped below zero ended up being "NAN", or

"Not a Number". Luckily, our plotter knows to ignore those numbers, but polyfit doesnt.

print noisy_y

[ 2.64567168 1.77226366

0.58257979 -0.50719229

-0.65048639 3.4827123

4.10072678 3.76322421

4.4608377

4.5945862

5.05915226 5.02661678

5.41827503 5.486903

5.77382726 5.82299095

6.13466015 6.12248799]

nan

1.84461177

2.60910913

4.06432005

4.66004888

5.05308181

5.50263105

5.92653466

1.49412348

2.72918637

3.48086587

4.23511474

4.8084364

5.26753675

5.59005234

5.93264584

nan 1.28855427

nan -1.02747374

3.84495668 3.90751514

4.26996586 4.25153639

4.81659305 4.97216304

5.23242563 5.36407125

5.6588601

5.7546482

5.99879722 6.07711596

Power Law Fitting

# try to polyfit a line

pars = np.polyfit(x,noisy_y,1)

print pars

[ nan nan]

In order to get around this problem, we need to mask the data. That means we have to tell

the code to ignore all the data points where noisy_y is nan.

nan == nan is False

OK = noisy_y == noisy_y

print OK

[ True True False True False

True True True True True

True True True True True

True True True True True

True True]

True

True

True

True

True

True

True

True

True

True

True

True

True

True

True

True

True False

True True

True True

True True

True

True

True

True

Power Law Fitting

This OK array is a "boolean mask". We can use it as an "index array", which is pretty neat.

print "There are %i OK values" % (OK.sum())

masked_noisy_y = noisy_y[OK]

masked_x = x[OK]

print "masked_noisy_y has length",len(masked_noisy_y)

There are 47 OK values

masked_noisy_y has length 47

# now polyfit again

pars = np.polyfit(masked_x,masked_noisy_y,1)

print pars

[ 1.50239281 1.9907672 ]

fitted_y = polyval(pars,x)

pl.plot(x, fitted_y, 'r--')

Power Law Fitting

The noise seems to have affected our fit.

# Convert bag to linear-space to see what it "really" looks like

fitted_z = np.exp(fitted_y)

pl.clf()

pl.plot(t,z)

pl.plot(t,noisy_z,'k.')

pl.plot(t,fitted_z,'r--')

pl.xlabel('t')

pl.ylabel('z')

Power Law Fitting

That's pretty bad. A "least-squares" approach, as with curve_fit, is probably going to be the

better choice. However, in the absence of noise, this approach should work

def powerlaw(x,a,b):

return a*(x**b)

pars,covar = curve_fit(powerlaw,t,noisy_z)

pl.clf()

pl.plot(t,z)

pl.plot(t,noisy_z,'k.')

pl.plot(t,powerlaw(t,*pars),'r--')

pl.xlabel('t')

pl.ylabel('z')

Introduction to language

End