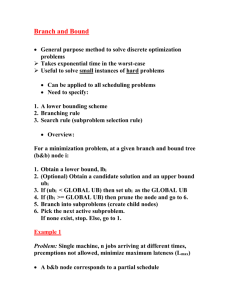

Feature Selection with Branch and Bound

Norman Poh

Steps

1. Construct an ordered tree satisfying the monotonicity condition $J_1(z_1) \ge J_2(z_1, z_2) \ge \dots \ge J_k(z_1, \dots, z_k)$, where $J_k$ is the criterion value after $k$ variables have been eliminated and the order of the $z_p$ is determined by a discrimination criterion.
2. Traverse the tree from right to left in a depth-first search pattern.
3. Evaluate the criterion at each level and sort the nodes by their criterion values.
4. Prune the tree.

The objective is to find the optimal value $J^* = \max_{z_1, \dots, z_k} J_k(z_1, \dots, z_k)$ while keeping a constantly updated lower bound $B$, the best criterion value found so far at a leaf.

Whenever the criterion evaluated for any node is less than the bound $B$, all successors of that node also have criterion values less than $B$, so we prune them.

Example tree: “6 choose 2”

How to construct the ordered tree?

- Determine the number of levels of the tree.
  - In the “6 choose 2” example, 4 variables have to be eliminated, so the number of levels is 4.
- Each child node should have a number greater than its parent's.
- The right-most leaf node (the node at the last level) should reach the largest variable index (6 in the “6 choose 2” example).
- A number of examples are given next: “5 choose 2”, “5 choose 3”, “6 choose 3”.

Your tutorial question:

1. Construct an ordered tree according to “4 choose 2”.
2. Eliminate features x1, x2, x3 and x4, then calculate the criterion and sort the values.
3. Put the tree

Reference

- Patrenahalli M. Narendra and Keinosuke Fukunaga, “A Branch and Bound Algorithm for Feature Subset Selection”, IEEE Transactions on Computers, vol. C-26, no. 9, September 1977.
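The tree-construction rules and the prune step above can be illustrated with a minimal Python sketch. It is not the implementation from the slides or the paper: the function names (`children`, `leaf_paths`, `branch_and_bound`), the toy additive criterion and its `SCORES` are all hypothetical, and the upper limit `m + level` on a child's index is an assumption chosen so that the right-most leaf reaches the largest variable index. For brevity the sketch visits children in fixed index order from right to left and omits step 3, the sorting of nodes by criterion value.

```python
from itertools import combinations
from math import comb


def children(removed, m):
    """Feature indices allowed below a node (assumes 1 <= m < n).

    A child's index is greater than its parent's and at most m + level,
    which is an assumed rule that lets the right-most leaf reach index n.
    """
    level = len(removed) + 1
    start = removed[-1] + 1 if removed else 1
    return range(start, m + level + 1)


def leaf_paths(n, m, removed=()):
    """Yield every leaf of the ordered tree as a tuple of eliminated features."""
    if len(removed) == n - m:
        yield removed
        return
    for z in children(removed, m):
        yield from leaf_paths(n, m, removed + (z,))


def branch_and_bound(n, m, criterion):
    """Return (best_subset, best_value, nodes_evaluated).

    `criterion` must be monotonic: removing a feature never increases it.
    """
    state = {"bound": float("-inf"), "subset": None, "evaluated": 0}

    def visit(removed):
        kept = tuple(f for f in range(1, n + 1) if f not in removed)
        value = criterion(kept)
        state["evaluated"] += 1
        if value <= state["bound"]:
            return  # prune: no successor can beat the current bound
        if len(removed) == n - m:
            # Leaf with a better value: update the lower bound B.
            state["bound"], state["subset"] = value, kept
            return
        for z in reversed(children(removed, m)):  # right to left, depth first
            visit(removed + (z,))

    for z in reversed(children((), m)):
        visit((z,))
    return state["subset"], state["bound"], state["evaluated"]


# Hypothetical per-feature scores; their sum is a monotonic toy criterion.
SCORES = {1: 9, 2: 1, 3: 4, 4: 7, 5: 2, 6: 5}


def toy_criterion(kept):
    return sum(SCORES[f] for f in kept)


if __name__ == "__main__":
    subset, value, evaluated = branch_and_bound(6, 2, toy_criterion)
    print("selected features:", subset, "criterion:", value)
    print("nodes evaluated:", evaluated)
```

Calling `list(leaf_paths(4, 2))` enumerates the leaves of a “4 choose 2” tree built under the same assumed rule, which may help when checking a hand-drawn tree for the tutorial question.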