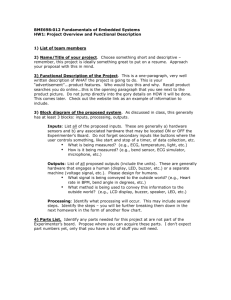

It's All About MeE: Design Space
