Extracting Information from Music Audio Dan Ellis


Extracting Information

from Music Audio

Dan Ellis

Laboratory for Recognition and Organization of Speech and Audio

Dept. Electrical Engineering, Columbia University, NY USA

http://labrosa.ee.columbia.edu/

1. Motivation: Learning Music

2. Notes Extraction

3. Drum Pattern Modeling

4. Music Similarity

Music Information Extraction - Ellis

2006-02-13

p. 1 /32

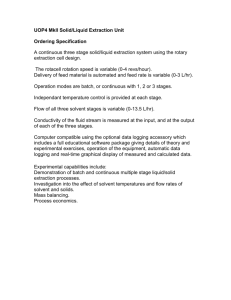

LabROSA Overview

[Diagram labels: Information Extraction; Machine Learning; Signal Processing; Music; Speech; Environment; Recognition; Separation; Retrieval]


1. Learning from Music

• A lot of music data available

e.g. 60 GB of MP3

≈ 1000 hr of audio, 15k tracks

• What can we do with it?

implicit definition of ‘music’

• Quality vs. quantity

Speech recognition lesson:

10x data, 1/10th annotation, twice as useful

• Motivating Applications

music similarity (recommendation, playlists)

computer (assisted) music generation

insight into music


Ground Truth Data

• A lot of unlabeled music data available

manual annotation is expensive and rare

[Figure: spectrogram of aimee.wav, frequency (500 to 7000 Hz) vs. time (0:02 to 0:18), with segments labeled "mus" and "vox"]

• Unsupervised structure discovery possible

.. but labels help to indicate what you want

• Weak annotation sources

artist-level descriptions

symbol sequences without timing (MIDI)

errorful transcripts

• Evaluation requires ground truth

limiting factor in Music IR evaluations?

Talk Roadmap

[Diagram boxes: Music audio; Melody extraction; Drums extraction; Event extraction; Fragment clustering; Eigenrhythms; Anchor models; Semantic bases; Similarity/recommend'n; Synthesis/generation; "?". The numerals 1 to 4 mark the talk sections.]

2. Notes Extraction

with Graham Poliner

• Audio → Score very desirable

for data compression, searching, learning

• Full solution is elusive

signal separation of overlapping voices

music constructed to frustrate!

• Maybe simplify problem:

“Dominant Melody” at each time frame

[Figure: spectrogram, Frequency (0 to 4000) vs. Time (0 to 5)]

Conventional Transcription

• Pitched notes have harmonic spectra

→ transcribe by searching for harmonics

e.g. sinusoid modeling + grouping

[Figure: sinusoid-track plots, freq / Hz (0 to 3000) vs. time / s (0 to 4), with these annotations:]

Break tracks: need to detect new ‘onset’ at single frequencies

Group by onset & common harmonicity: find sets of tracks that start around the same time, plus a stable harmonic pattern

Pass on to constraint-based filtering...

• Explicit expert-derived knowledge

Transcription as Classification

• Signal models typically used for transcription

harmonic spectrum, superposition

• But ... trade domain knowledge for data

transcription as pure classification problem:

Audio → Trained classifier → p("C0"|Audio), p("C#0"|Audio), p("D0"|Audio), p("D#0"|Audio), p("E0"|Audio), p("F0"|Audio), ...

single N-way discrimination for “melody”

per-note classifiers for polyphonic transcription
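The two decision styles named on this slide can be sketched as follows. This is a minimal illustration, not the system's actual code: the note list is a hypothetical six-class subset, the posterior values are made up, and the 0.5 threshold is an assumption.

```python
# Hypothetical subset of note classes; a real system would cover the full range.
NOTES = ["C0", "C#0", "D0", "D#0", "E0", "F0"]

def melody_label(posteriors):
    """Single N-way decision: return the note with the highest posterior."""
    best = max(range(len(posteriors)), key=lambda i: posteriors[i])
    return NOTES[best]

def active_notes(posteriors, threshold=0.5):
    """Independent per-note decisions: return every note above the threshold."""
    return [note for note, p in zip(NOTES, posteriors) if p > threshold]

frame = [0.05, 0.10, 0.60, 0.05, 0.15, 0.05]  # made-up p(note | audio frame)
print(melody_label(frame))                    # D0

poly = [0.9, 0.1, 0.2, 0.1, 0.8, 0.3]         # made-up per-note detector outputs
print(active_notes(poly))                     # ['C0', 'E0']
```

The melody case forces exactly one label per frame, while the polyphonic case lets any number of notes be active at once.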


Melody Transcription Features

• Short-time Fourier Transform Magnitude

(Spectrogram)

• Standardize over 50 pt frequency window
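One plausible reading of the standardization step is a local z-score: each spectrogram bin is normalized by the mean and standard deviation of the roughly 50 bins around it in the same frame. The sketch below assumes that reading; the edge handling (truncated windows) and the `eps` guard are assumptions, and the input frame is synthetic.

```python
from statistics import mean, pstdev

def standardize_frame(mags, width=50, eps=1e-9):
    """Normalize each bin by the mean and std of the bins in a window around it."""
    half = width // 2
    out = []
    for k in range(len(mags)):
        lo = max(0, k - half)
        hi = min(len(mags), k + half)   # up to `width` bins, truncated at the edges
        window = mags[lo:hi]
        out.append((mags[k] - mean(window)) / (pstdev(window) + eps))
    return out

# Synthetic magnitude frame: flat background with one strong peak at bin 100.
frame = [1.0] * 256
frame[100] = 10.0
z = standardize_frame(frame)
print(round(z[100], 2), round(z[10], 2))
```

A local normalization of this kind removes broad spectral tilt and overall level, so the classifier sees peak structure rather than loudness.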


Training Data

• Need {data, label} pairs for classifier training

• Sources:

pre-mixing multitrack recordings + hand-labeling?

synthetic music (MIDI) + forced-alignment?

[Figure: spectrogram panels, freq / kHz (0 to 2) vs. time / sec (0 to 3.5)]

Melody Transcription Results

• Trained on 17 examples

.. plus transpositions out to +/- 6 semitones

All-pairs SVMs (Weka)

• Tested on ISMIR MIREX 2005 set

Table 1: Results of the formal MIREX 2005 Audio Melody Extraction evaluation from http://www.music-ir.org/evaluation/mirex-results/audio-melody/. Results marked with * are not directly comparable to the others because those systems did not perform voiced/unvoiced detection. Results marked † are artificially low due to an unresolved algorithmic issue.

| Rank | Participant | Overall Accuracy | Voicing d′ | Raw Pitch | Raw Chroma | Runtime / s |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | Dressler | 71.4% | 1.85 | 68.1% | 71.4% | 32 |
| 2 | Ryynänen | 64.3% | 1.56 | 68.6% | 74.1% | 10970 |
| 3 | Poliner | 61.1% | 1.56 | 67.3% | 73.4% | 5471 |
| 3 | Paiva 2 | 61.1% | 1.22 | 58.5% | 62.0% | 45618 |
| 5 | Marolt | 59.5% | 1.06 | 60.1% | 67.1% | 12461 |
| 6 | Paiva 1 | 57.8% | 0.83 | 62.7% | 66.7% | 44312 |
| 7 | Goto | 49.9%* | 0.59* | 65.8% | 71.8% | 211 |
| 8 | Vincent 1 | 47.9%* | 0.23* | 59.8% | 67.6% | ? |
| 9 | Vincent 2 | 46.4%* | 0.86* | 59.6% | 71.1% | 251 |
| 10 | Brossier | 3.2%* † | 0.14* † | 3.9% † | 8.1% † | 41 |

[Slide annotation: includes foreground/background detection]

Example...

[Excerpt from the accompanying paper, cut off at both ends:] ... however, give another picture: For pitch, our system is in a three-way tie for top performance with the top two ranked systems, and when octave errors are ignored we are insignificantly worse than the best system (Ryynänen in this case), and almost significantly better than the top-ranked ...

Polyphonic Transcription

• Train SVM detectors for every piano note

same features & classifier but different labels

88 separate detectors, independent smoothing

• Use MIDI syntheses, player piano recordings

[Figure: "Bach 847 Disklavier", freq / pitch (A1 to A6) vs. time / sec (0 to 9), level / dB (-20 to 20)]

about 30 min training data

Piano Transcription Results

• Significant improvement from classifier:

frame-level accuracy results:

Table 1: Frame level transcription results.

| Algorithm | Errs | False Pos | False Neg | d′ |
| --- | --- | --- | --- | --- |
| SVM | 43.3% | 27.9% | 15.4% | 3.44 |
| Klapuri & Ryynänen | 66.6% | 28.1% | 38.5% | 2.71 |
| Marolt | 84.6% | 36.5% | 48.1% | 2.35 |

• Breakdown by frame type:

[Figure: Classification error % vs. # notes present, split into False Negatives and False Positives]

http://labrosa.ee.columbia.edu/projects/melody/

Overall Accuracy (Acc): Overall accuracy is a frame-level version of the metric proposed by Dixon in [Dixon, 2000] defined as:

$$\mathrm{Acc} = \frac{N}{FP + FN + N} \tag{3}$$

where N is the number of correctly transcribed frames, FP is the number of unvoiced frames UV transcribed as voiced V, and FN is the number of voiced ...
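The accuracy metric Acc = N / (FP + FN + N) can be computed as in the sketch below. It assumes a piano-roll reading of the counts, where each frame is a set of active MIDI note numbers and N counts correctly reported note-frames; that interpretation, the function name, and the toy data are assumptions rather than the evaluation's actual code.

```python
def overall_accuracy(reference, estimate):
    """Frame-level Acc = N / (FP + FN + N) over per-frame sets of active notes."""
    n = fp = fn = 0
    for ref, est in zip(reference, estimate):
        n += len(ref & est)    # notes correctly reported in this frame
        fp += len(est - ref)   # notes reported but not present
        fn += len(ref - est)   # notes present but missed
    total = n + fp + fn
    return n / total if total else 1.0

# Toy example: four frames of reference and estimated note sets.
ref = [{60}, {60, 64}, {64}, set()]
est = [{60}, {60}, {64, 67}, {72}]
print(overall_accuracy(ref, est))   # 0.5
```

Because both error types sit in the denominator, the score drops to 0 when nothing is correct and reaches 1 only when there are no false positives and no false negatives.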

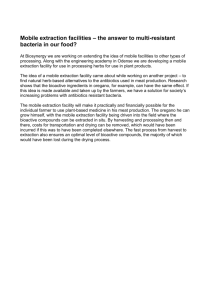

3. Eigenrhythms: Drum Pattern Space

with John Arroyo

• Pop songs built on repeating “drum loop”

variations on a few bass, snare, hi-hat patterns

• Eigen-analysis (or ...) to capture variations?

by analyzing lots of (MIDI) data, or from audio

• Applications

music categorization

“beat box” synthesis

insight


Aligning the Data

• Need to align patterns prior to modeling...

tempo (stretch): by inferring BPM & normalizing

downbeat (shift): correlate against ‘mean’ template
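The downbeat (shift) step can be sketched as a circular cross-correlation: try every rotation of a pattern and keep the one that best matches the template. The sketch assumes tempo normalization has already put every pattern on the same fixed-length grid; the eight-step patterns and function names are hypothetical.

```python
def circular_shift(pattern, shift):
    """Rotate a pattern so that element `shift` moves to position 0."""
    return pattern[shift:] + pattern[:shift]

def best_shift(pattern, template):
    """Return the rotation of `pattern` that correlates best with `template`."""
    def score(s):
        return sum(p * t for p, t in zip(circular_shift(pattern, s), template))
    return max(range(len(pattern)), key=score)

# Stand-in 'mean' template and the same pattern starting two steps late.
template = [1.0, 0.0, 0.5, 0.0, 0.8, 0.0, 0.5, 0.2]
pattern = [0.5, 0.2, 1.0, 0.0, 0.5, 0.0, 0.8, 0.0]

s = best_shift(pattern, template)
aligned = circular_shift(pattern, s)
print(s, aligned == template)   # 2 True
```

In practice the template would be re-estimated from the aligned patterns and the alignment repeated, since the mean itself depends on the shifts.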


Eigenrhythms (PCA)

• Need 20+ Eigenvectors for good coverage

of 100 training patterns (1200 dims)

• Eigenrhythms both add and subtract
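A minimal PCA sketch for this setting, using NumPy's SVD. The data here is random and only matches the slide's dimensions (100 patterns, 1200 dims), so the number of components it reports says nothing about real drum patterns; variable and function names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
# Stand-in data: 100 patterns x 1200 dims (random, NOT real drum patterns).
X = rng.random((100, 1200))

mean = X.mean(axis=0)
U, S, Vt = np.linalg.svd(X - mean, full_matrices=False)  # rows of Vt: eigenrhythms
explained = np.cumsum(S**2) / np.sum(S**2)               # cumulative variance fraction

def n_components_for(coverage):
    """Smallest number of leading components reaching the given variance fraction."""
    return int(np.searchsorted(explained, coverage) + 1)

k = 20
coeffs = (X[0] - mean) @ Vt[:k].T   # signed weights: components add and subtract
approx = mean + coeffs @ Vt[:k]     # resynthesis from k eigenrhythms
print(n_components_for(0.5), approx.shape)
```

The weights in `coeffs` can be negative, which is the "add and subtract" behaviour noted above: a component may remove beat-weight from the mean pattern as well as add it.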


Posirhythms (NMF)

[Figure: Posirhythms 1 to 6, each panel with rows HH (hi-hat), SN (snare), and BD (bass drum); horizontal axis in samples (0 to 400) and beats (1 to 4); color scale -0.1 to 0.1]

• Nonnegative: only adds beat-weight

• Capturing some structure
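A sketch of nonnegative matrix factorization using the Lee-Seung multiplicative updates, one common NMF algorithm; the slide does not say which algorithm was used, so that choice, the rank of 6, and the random stand-in data are all assumptions.

```python
import numpy as np

def nmf(V, rank, n_iter=200, seed=0, eps=1e-9):
    """Factor nonnegative V (patterns x dims) as W @ H with W, H >= 0."""
    rng = np.random.default_rng(seed)
    W = rng.random((V.shape[0], rank)) + eps
    H = rng.random((rank, V.shape[1])) + eps
    for _ in range(n_iter):
        H *= (W.T @ V) / (W.T @ W @ H + eps)   # multiplicative updates keep H >= 0
        W *= (V @ H.T) / (W @ H @ H.T + eps)   # and W >= 0
    return W, H

rng = np.random.default_rng(1)
V = rng.random((100, 60))    # stand-in nonnegative patterns, NOT real drum data
W, H = nmf(V, rank=6)        # rows of H play the role of the posirhythms
print(W.shape, H.shape, float(np.linalg.norm(V - W @ H)))
```

Since every entry of `W` and `H` stays nonnegative, a pattern is rebuilt purely by adding weighted bases, in contrast to the signed PCA weights.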


Eigenrhythms for Classification

• Projections in Eigenspace / LDA space

[Figure: scatter plots of the PCA(1,2) projection (16% corr) and the LDA(1,2) projection (33% corr); legend: blues, country, disco, hiphop, house, newwave, rock, pop, punk, rnb]

• 10-way Genre classification (nearest nbr):

PCA3: 20% correct

LDA4: 36% correct
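Nearest-neighbor classification in a projected space amounts to labeling a query with the genre of its closest training point. The sketch below assumes Euclidean distance and uses made-up 2-D coordinates in place of real PCA or LDA projections.

```python
import math

def nearest_neighbor(query, train_points, train_labels):
    """Return the label of the training point closest to `query` (Euclidean)."""
    best = min(range(len(train_points)),
               key=lambda i: math.dist(query, train_points[i]))
    return train_labels[best]

# Made-up projected coordinates for four training patterns.
train = [(0.0, 0.0), (0.2, 0.1), (5.0, 5.0), (5.2, 4.9)]
labels = ["blues", "blues", "disco", "disco"]
print(nearest_neighbor((4.8, 5.1), train, labels))   # disco
```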


Eigenrhythm BeatBox

• Resynthesize rhythms from eigen-space


4. Music Similarity

with Mike Mandel

and Adam Berenzweig

• Can we predict which songs

“sound alike” to a listener?

.. based on the audio waveforms?

many aspects to subjective similarity

• Applications

query-by-example

automatic playlist generation

discovering new music

• Problems

the right representation

modeling individual similarity


Music Similarity Features

• Need “timbral” features:

Mel-Frequency Cepstral Coeffs (MFCCs)

Spectrogram → log-domain, auditory-like frequency warping → Mel-Frequency Spectrogram → discrete cosine transform (orthogonalization) → Mel-Frequency Cepstral Coefficients
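The MFCC chain (spectrogram, mel warping, log, DCT) can be sketched in NumPy as below. The frame size, hop, 40 filters, 13 coefficients, and the 2595·log10(1 + f/700) mel formula are conventional choices assumed here, not values taken from the talk, and the test signal is a synthetic sine tone.

```python
import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def mel_filterbank(n_filters, n_fft, sr):
    """Triangular filters spaced evenly on the mel scale (one row per filter)."""
    edges = mel_to_hz(np.linspace(0.0, hz_to_mel(sr / 2), n_filters + 2))
    freqs = np.linspace(0.0, sr / 2, n_fft // 2 + 1)
    fb = np.zeros((n_filters, freqs.size))
    for i in range(n_filters):
        lo, mid, hi = edges[i], edges[i + 1], edges[i + 2]
        rising = (freqs - lo) / (mid - lo)
        falling = (hi - freqs) / (hi - mid)
        fb[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return fb

def mfcc(signal, sr, n_fft=512, hop=256, n_filters=40, n_ceps=13):
    window = np.hanning(n_fft)
    starts = range(0, len(signal) - n_fft + 1, hop)
    frames = np.array([signal[s:s + n_fft] * window for s in starts])
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2            # spectrogram
    mel_spec = power @ mel_filterbank(n_filters, n_fft, sr).T   # mel warping
    log_mel = np.log(mel_spec + 1e-10)                          # log domain
    n = np.arange(n_filters)
    dct = np.cos(np.pi / n_filters * (n[None, :] + 0.5) * np.arange(n_ceps)[:, None])
    return log_mel @ dct.T                                      # DCT-II -> cepstra

sr = 16000
t = np.arange(sr) / sr
coeffs = mfcc(np.sin(2 * np.pi * 440.0 * t), sr)   # one second of a 440 Hz tone
print(coeffs.shape)   # (61, 13)
```

Keeping only the first few DCT coefficients retains the smooth spectral envelope, which is what makes these features "timbral" rather than pitch-specific.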


Timbral Music Similarity

• Measure similarity of feature distribution

i.e. collapse across time to get density p(xi)

compare by e.g. KL divergence

• e.g. Artist Identification

learn artist model p(xi | artist X) (e.g. as GMM)

classify unknown song to closest model
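A simplified sketch of "classify to the closest model". The slide uses GMMs, for which KL divergence has no closed form; to stay self-contained this example swaps in a single diagonal-covariance Gaussian per model, where KL is exact. The model parameters, artist names, and the use of a symmetrized KL are all assumptions for illustration.

```python
import math

def kl_diag_gauss(mu_p, var_p, mu_q, var_q):
    """KL(p || q) for Gaussians with diagonal covariance (closed form)."""
    return 0.5 * sum(
        math.log(vq / vp) + (vp + (mp - mq) ** 2) / vq - 1.0
        for mp, vp, mq, vq in zip(mu_p, var_p, mu_q, var_q)
    )

def symmetric_kl(p, q):
    """Symmetrized divergence between two (mean, variance) models."""
    return kl_diag_gauss(*p, *q) + kl_diag_gauss(*q, *p)

# Hypothetical artist models and test-song model: (means, variances).
artists = {
    "artist_1": ([0.0, 0.0], [1.0, 1.0]),
    "artist_2": ([3.0, -1.0], [1.0, 2.0]),
}
song = ([0.2, 0.1], [1.1, 0.9])

closest = min(artists, key=lambda a: symmetric_kl(song, artists[a]))
print(closest)   # artist_1
```

With full GMMs the divergence would have to be approximated, for example by sampling, but the decision rule (pick the minimum) stays the same.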

[Diagram: Training: MFCCs → GMMs for Artist 1 and Artist 2; Test Song: MFCCs compared to each artist model by KL; Min → Artist]

“Anchor Space”

• Acoustic features describe each song

.. but from a signal, not a perceptual, perspective

.. and not the differences between songs

• Use genre classifiers to define new space

prototype genres are “anchors”
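The conversion to anchor space maps each feature frame x to a vector of class posteriors [p(a_1|x), ..., p(a_n|x)]. The sketch below stands in simple diagonal-Gaussian classifiers with equal priors for the genre classifiers; the talk does not specify the classifier here, so that choice, the two anchors, and their parameters are assumptions.

```python
import math

def log_gauss(x, mu, var):
    """Log density of a diagonal-covariance Gaussian at x."""
    return sum(-0.5 * (math.log(2 * math.pi * v) + (xi - m) ** 2 / v)
               for xi, m, v in zip(x, mu, var))

def anchor_posteriors(x, anchors):
    """Map a feature frame x to [p(a_1|x), ..., p(a_n|x)], equal priors assumed."""
    logs = [log_gauss(x, mu, var) for mu, var in anchors]
    top = max(logs)
    weights = [math.exp(l - top) for l in logs]   # subtract max for stability
    total = sum(weights)
    return [w / total for w in weights]

anchors = [([0.0, 0.0], [1.0, 1.0]),   # hypothetical "Country" anchor
           ([4.0, 4.0], [1.0, 1.0])]   # hypothetical "Electronica" anchor
vec = anchor_posteriors([0.5, 0.2], anchors)
print(vec)
```

Each song then becomes a cloud of such n-dimensional vectors, which can be modeled and compared in the same way as raw features.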

[Diagram: Audio Input (Class i) and Audio Input (Class j) each pass through a bank of Anchor classifiers (Conversion to Anchorspace), giving an n-dimensional vector [p(a1|x), p(a2|x), ..., p(an|x)] in "Anchor Space"; each is followed by GMM Modeling, then Similarity Computation (KL-d, EMD, etc.)]

Anchor Space

• Frame-by-frame high-level categorizations

compare to raw features?

[Figure: scatter plots for madonna and bowie. Cepstral Features: third cepstral coef vs. fifth cepstral coef. Anchor Space Features: Electronica vs. Country.]

properties in distributions? dynamics?

‘Playola’ Similarity Browser


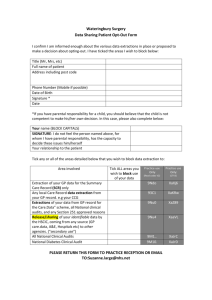

Ground-truth data

• Hard to evaluate Playola’s ‘accuracy’

user tests...

ground truth?

• “Musicseer” online survey:

ran for 9 months in 2002

> 1,000 users, > 20k judgments

http://labrosa.ee.columbia.edu/projects/musicsim/


Evaluation

• Compare Classifier measures against

Musicseer subjective results

“triplet” agreement percentage

Top-N ranking agreement score:

$$s_i = \sum_{r=1}^{N} \alpha_r^{\,r}\, \alpha_c^{\,k_r}, \qquad \alpha_r = \left(\tfrac{1}{2}\right)^{1/3}, \qquad \alpha_c = \alpha_r^{2}$$

First-place agreement percentage

- simple significance test

[Figure: "Top rank agreement" plot, % (0 to 80), for measures cei, cmb, erd, e3d, opn, kn2, rnd, ANK; legend: SrvKnw 4789x3.58, SrvAll 6178x8.93, GamKnw 7410x3.96, GamAll 7421x8.92]
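The Top-N ranking agreement score sums, over the top N reference items, the product α_r^r · α_c^(k_r) with α_r = (1/2)^(1/3) and α_c = α_r². The sketch below assumes k_r is the rank that the candidate measure gives to the reference list's r-th item (the slide does not define it), and that items missing from the candidate list contribute nothing; the item names are placeholders.

```python
ALPHA_R = 0.5 ** (1.0 / 3.0)
ALPHA_C = ALPHA_R ** 2

def top_n_score(reference, candidate, n=10):
    """s = sum over r = 1..N of ALPHA_R**r * ALPHA_C**k_r."""
    rank_in_candidate = {item: k for k, item in enumerate(candidate, start=1)}
    score = 0.0
    for r, item in enumerate(reference[:n], start=1):
        k_r = rank_in_candidate.get(item)
        if k_r is not None:   # assumption: unmatched items add nothing
            score += ALPHA_R ** r * ALPHA_C ** k_r
    return score

ref = ["a", "b", "c", "d"]
same = top_n_score(ref, ref)
swapped = top_n_score(ref, ["b", "a", "c", "d"])
print(same, swapped)
```

Both decay constants are below 1, so agreement near the top of either list counts most, and swapping the first two items costs more than swapping items further down.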

Using SVMs for Artist ID

• Support Vector Machines (SVMs) find hyperplanes in a high-dimensional space

relies only on matrix of distances between points

much ‘smarter’ than nearest-neighbor/overlap

want diversity of reference vectors...

[Figure: two classes, y_i = −1 and y_i = +1, with labels x1 and x2, separated by the hyperplane (w · x) + b = 0 with normal vector w; margin planes (w · x) + b = +1 and (w · x) + b = −1]
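For a linear SVM, classification reduces to the sign of (w · x) + b, and the gap between the two margin planes is 2 / ‖w‖. The sketch below uses hypothetical, hand-picked parameters rather than a trained model.

```python
import math

def decision(w, b, x):
    """Signed score (w . x) + b; its sign is the predicted class."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def margin_width(w):
    """Distance between the planes (w . x) + b = +1 and (w . x) + b = -1."""
    return 2.0 / math.sqrt(sum(wi * wi for wi in w))

w, b = [1.0, 1.0], -3.0               # hypothetical trained parameters
print(decision(w, b, [3.0, 2.0]))     # 2.0  (positive side)
print(decision(w, b, [0.5, 0.5]))     # -2.0 (negative side)
print(margin_width(w))
```

Training chooses w and b to make this margin as wide as possible while keeping the labeled points on their correct sides.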


Song-Level SVM Artist ID

• Instead of one model per artist/genre,

use every training song as an ‘anchor’

then SVM finds best support for each artist

[Diagram: Training: MFCCs → Song Features for each song of Artist 1 and Artist 2; distances (D) between songs → DAG SVM → Artist; the Test Song follows the same path]

Artist ID Results

• ISMIR/MIREX 2005 also evaluated Artist ID

• 148 artists, 1800 files (split train/test)

from ‘uspop2002’

• Song-level SVM clearly dominates

using only MFCCs!

Table 4: Results of the formal MIREX 2005 Audio Artist ID evaluation (USPOP2002) from http://www.music/evaluation/mirex-results/audio-artist/.

MIREX 05 Audio Artist (USPOP2002)

| Rank | Participant | Raw Accuracy | Normalized | Runtime / s |
| --- | --- | --- | --- | --- |
| 1 | Mandel | 68.3% | 68.0% | 10240 |
| 2 | Bergstra | 59.9% | 60.9% | 86400 |
| 3 | Pampalk | 56.2% | 56.0% | 4321 |
| 4 | West | 41.0% | 41.0% | 26871 |
| 5 | Tzanetakis | 28.6% | 28.5% | 2443 |
| 6 | Logan | 14.8% | 14.8% | ? |
| 7 | Lidy | Did not complete | | |


Playlist Generation

• SVMs are well suited to “active learning”

solicit labels on items closest to current boundary

• Automatic player with “skip” = Ground truth data collection

active-SVM automatic playlist generation
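The active-learning idea, asking for labels on the items closest to the current boundary, can be sketched as picking the songs whose SVM decision values are nearest zero. The scores and song ids below are made up, and `pick_queries` is a hypothetical helper, not part of any player described in the talk.

```python
def pick_queries(scores, n_queries=2):
    """Return the ids whose decision scores are closest to 0 (the boundary)."""
    return sorted(scores, key=lambda song: abs(scores[song]))[:n_queries]

# Hypothetical SVM decision values for unlabeled songs (sign = current guess).
scores = {"song_a": 1.7, "song_b": -0.1, "song_c": 0.4, "song_d": -2.3}
print(pick_queries(scores))   # ['song_b', 'song_c']
```

In a player, a "skip" on one of these songs would supply a negative label and letting it play a positive one, after which the SVM is retrained and the scores recomputed.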


Conclusions

[Diagram boxes: Music audio; Melody extraction; Drums extraction; Event extraction; Fragment clustering; Eigenrhythms; Anchor models; Semantic bases; Similarity/recommend'n; Synthesis/generation; "?"]

• Lots of data

+ noisy transcription

+ weak clustering

musical insights?
