Bioinspired Computing Lecture 5

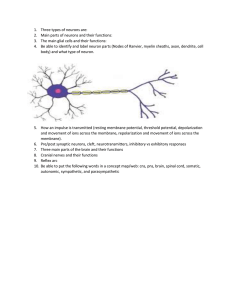

Biological Neural Networks
Netta Cohen

Last week: We introduced swarm intelligence. We saw how many simple agents can follow simple rules that allow them to collectively perform more complex tasks.

Today... Biological systems whose manifest function is information processing: computation, thought, memory, communication and control. We begin a dissection of a brain:
• How different is a brain from an artificial computer?
• How can we build and use artificial neural networks?

Investigating the brain

Imagine landing on an abandoned alien planet and finding thousands of alien computers. You and your crew’s mission is to find out how they work. What do you do?
• Summon Scottie, your engineer, to disassemble the machines into component parts, test each part (electronically, optically, chemically…), decode the machine language, and study how components are connected.
• Summon Data, your software wiz, to connect to the input & output ports of a machine, find a language to communicate with it & write computer programs to test the system’s response by measuring its speed, efficiency & performance at different tasks.

[Figure: part #373a with its inputs and outputs; the computer with an input program and its output.]

The brain as a computer

Higher level functions in animal behaviour
• Gathering data (sensation)
• Inferring useful structures in data (perception)
• Storing and recalling information (memory)
• Planning and guiding future actions (decision)
• Carrying out the decisions (behaviour)
• Learning consequences of these actions

Hardware functions and architectures
• 10 billion neurons in human cortex
• 10,000 synapses (connections) per neuron
• Machine language: 100 mV, 1-2 msec spikes (action potentials)
• Specialised regions & pathways (visual, auditory, language…)

The brain versus a computer
• Brain: Special task: program often hard-coded into system. Computer: Universal, general-purpose. Software: general, user-supplied.
• Brain: Hardware not hard: plastic, rewiring. Computer: Hardware is hard: only upgraded in discrete units.
• Brain: No clear hierarchy. Bi-directional feedback up & down the system. Computer: Obvious hierarchy: each component has a specific function.
• Brain: Unreliable components. Parallelism, redundancy appear to compensate. Computer: Once burned in, circuits run without failure for extended lifetimes.
• Brain: Output doesn’t always match input: internal state is important. Computer: Input-output relations are well-defined.
• Brain: Development & evolutionary constraints are crucial. Computer: Engineering design depends on engineer. Function is not an issue.

Neuroscience pre-history
• 200 AD: Greek physician Galen hypothesises that nerves carry signals back & forth between sensory organs & the brain.
• 17th century: Descartes suggests that nerve signals account for reflex movements.
• 19th century: Helmholtz discovers the electrical nature of these signals, as they travel down a nerve.
• 1838-9: Schleiden & Schwann systematically study plant & animal tissue. Schwann proposes the theory of the cell (the basic unit of life in all living things).
• Mid-1800s: anatomists map the structure of the brain.

but… The microscopic composition of the brain remains elusive. A raging debate surrounds early neuroscience research, until...

The neuron doctrine

Ramon y Cajal (1899)
1) Neurons are cells: distinct entities (or agents).
2) Inputs are received and outputs delivered at junctions called synapses.
3) Input & output ports are distinct. Signals are uni-directional from input to output.

Today, neurons (or nerve cells) are regarded as the basic information processing unit of the nervous system.

[Figure: inputs → neuron → outputs.]

The neuron as a transistor
• Both have well-defined inputs and outputs.
• Both are basic information processing units that make up computational networks.

If transistors can perform logical operations, maybe neurons can too?

Neuronal function is typically modelled by a combination of
• a linear operation (sum over inputs) and
• a nonlinear one (thresholding).
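The sum-and-threshold model above can be sketched in a few lines of Python. This is a minimal illustration, not the lecture's own formulation: the function name `threshold_neuron`, the per-input weights, and the specific threshold values are assumptions introduced here. It also shows, under those assumptions, how such a unit could act like a logic gate, in the spirit of the transistor comparison.

```python
from typing import Sequence


def threshold_neuron(inputs: Sequence[float],
                     weights: Sequence[float],
                     threshold: float) -> int:
    """Hypothetical model neuron: weighted sum of inputs, then a hard threshold.

    Returns 1 (a spike) if the weighted sum reaches the threshold, else 0 (rest).
    The weights and threshold are illustrative assumptions, not lecture values.
    """
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Linear operation: sum over (weighted) inputs.
    total = sum(w * x for w, x in zip(weights, inputs))
    # Nonlinear operation: thresholding gives an all-or-none output.
    return 1 if total >= threshold else 0


def logical_and(a: int, b: int) -> int:
    """Both inputs must be active for the summed input to reach 2."""
    return threshold_neuron([a, b], [1.0, 1.0], threshold=2.0)


def logical_or(a: int, b: int) -> int:
    """A single active input is enough to reach a threshold of 1."""
    return threshold_neuron([a, b], [1.0, 1.0], threshold=1.0)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

Changing only the threshold turns the same unit from an AND gate into an OR gate, which is one way to read the suggestion that neurons might perform logical operations.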
This simple representation relies on Cajal’s concept of a neuron with distinct input and output ports: input → neuron → output.

Machine language

Neurons represent the basic “bit” of information with spikes. The cell is said to be either at rest or active. A spike (action potential) is a strong, brief electrical pulse. Since these action potentials are mostly identical, we can safely refer to them as all-or-none signals.

Why Spikes?

Why don’t neurons use analog signals? One answer lies in the network architecture: signals cover long distances (both within the brain and throughout the body). Reliable transmission requires strong pulses.

[Figure: computation of a pyramidal neuron, showing many inputs (dendrites), the soma, and the axon carrying a single all-or-none output.]

From transistors to networks

We can now summarise our working principles:
• The basic computational unit of the brain is the neuron.
• The machine language is binary: spikes.
• Communication between neurons is via synapses.

However, we have not yet asked how information is encoded in the brain, how it is processed in the brain, and whether what goes on in the brain is really ‘computation’.

Information codes

[Figure: neural codes (temporal code, rate code), population/distributed code, and noise.]

Examples of both neural codes and distributed representations have been found in the brain. Examples in the visual system: colour representation, face recognition, orientation, motion detection, & more…
http://www.cs.stir.ac.uk/courses/31YF/Notes/Notes_NC.html

Information content

Example. A spike train produced by a neuron over an interval of 100 ms is recorded. Neurons can produce a spike every 2 ms, so the interval contains 50 time slots. The spike count can therefore take any value from 0 to 50, so 51 different rates (individual code words) can be produced by this neuron. In contrast, if the neuron were using temporal coding, each of the 50 slots could independently contain a spike or not, so up to 2^50 different words could be represented. In this sense, temporal coding is much more powerful.

Circuitry depends on neural code

Temporal codes rely on noise-free signal transmission.
Thus, we would expect to find very few ‘redundant’ neurons with co-varying outputs in that network. Accordingly, an optimal temporal coding circuit might tend to eliminate redundancy in the pattern of inputs to different neurons.

On the other hand, if neural information is carried by a noisy rate-based code, then noise can be averaged out over a population of neurons. Population coding schemes, in which many neurons represent the same information, would therefore be the norm in those networks.

Experiments on various brain systems find either coding system, and in some cases combinations of temporal and rate coding.

Neuronal computation

Having introduced neurons, neuronal circuits and even information codes with well-defined inputs and outputs, we still have not mentioned the term computation. Is neuronal computation anything like computer computation?

Tape: 1 1 1 1 0 1
If read 1, write 0, go right, repeat.
If read 0, write 1, HALT!
If read •, write 1, HALT!

In a computer program, variables have initial states, there are possible transitions, and a program specifies the rules. The same is true for machine language. To obtain an answer at the end of a computation, the program must HALT.

Does the brain initialise variables? Does the brain ever halt?

Association: an example of bio-computation

One recasting of biological brain function in these computational terms was proposed by John Hopfield in the 1980s as a model for associative memory.

Question: How does the brain associate some memory with a given input?
Answer: The input causes the network to enter an initial state. The state of the neural network then evolves until it reaches some new stable state. The new state is associated with the input state.

Association (cont.)
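The association process described in the answer above (input sets an initial state, the state evolves, a stable state is the recalled memory) can be sketched as a tiny Hopfield-style network. The lecture does not give update equations, so everything specific here is an assumption: units take values +1 or -1, patterns are stored with the textbook Hebbian outer-product rule, and units are updated one at a time by the sign of their summed input. The names `train_hopfield` and `recall` are hypothetical.

```python
from typing import List

Pattern = List[int]  # each unit is +1 (active) or -1 (at rest); an assumed coding


def train_hopfield(patterns: List[Pattern]) -> List[List[float]]:
    """Build a symmetric weight matrix from stored patterns (outer-product rule)."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += pattern[i] * pattern[j] / n
    return weights


def recall(weights: List[List[float]], cue: Pattern, max_sweeps: int = 100) -> Pattern:
    """Start from the cue and update units one by one until nothing changes."""
    state = list(cue)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(state)):
            # Linear sum over inputs from all other units, then a threshold at 0.
            field = sum(weights[i][j] * state[j] for j in range(len(state)))
            new_value = 1 if field >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:  # stable state reached: this is the associated memory
            break
    return state


if __name__ == "__main__":
    memory_a = [1, 1, 1, 1, -1, -1, -1, -1]
    memory_b = [1, -1, 1, -1, 1, -1, 1, -1]
    w = train_hopfield([memory_a, memory_b])
    noisy_cue = [-1, 1, 1, 1, -1, -1, -1, -1]  # memory_a with its first unit flipped
    print(recall(w, noisy_cue))
```

In this sketch the "program" never explicitly halts; recall simply stops once a full sweep leaves every unit unchanged, which is the stable state the answer refers to.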
[Figure: trajectories in a schematic state space.]

Whatever initial condition is chosen, the system will follow a well-defined route through state space that is guaranteed to always reach some stable point (i.e., a pattern of activity).

Hopfield’s ideas were strongly motivated by existing theories of self-organisation in neural networks. Today, Hopfield nets are a successful example of bio-inspired computing (but are no longer believed to model computation in the brain).

Learning

No discussion of the brain, or of nervous systems more generally, is complete without mention of learning.
• What is learning?
• How does a neural network ‘know’ what computation to perform?
• How does it know when it gets an ‘answer’ right (or wrong)?
• What actually changes as a neural network undergoes ‘learning’?

[Figure: the brain within the body within the environment, with sensory inputs and motor outputs.]

Learning (cont.)

Learning can take many forms:
• Supervised learning
• Reinforcement learning
• Association
• Conditioning
• Evolution

At the level of neural networks, the best understood forms of learning occur in the synapses, i.e., the strengthening and weakening of connections between neurons. The brain uses its own learning algorithms to define how connections should change in a network.

Learning from experience

How do neural networks form in the brain? Once formed, what determines how the circuit might change?

In 1949, Donald Hebb, in his book "The Organization of Behavior", showed how basic psychological phenomena of attention, perception & memory might emerge in the brain. Hebb regarded neural networks as a collection of cells that can collectively store memories. Our memories reflect our experience.

How does experience affect neurons and neural networks? How do neural networks learn?

Synaptic Plasticity

Definition of Learning: experience alters behaviour.

The basic experience in neurons is spikes. Spikes are transmitted between neurons through synapses. Hebb suggested that connections in the brain change in response to experience.
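Hebb's suggestion can be made concrete with a simple update rule. The sketch below is a minimal illustration under stated assumptions, none of which come from the lecture: spikes are binary (0 or 1) per time step, a pre-synaptic spike is taken to contribute to a post-synaptic spike if the latter follows exactly `delay` steps later, and each such pairing adds a fixed hypothetical `learning_rate` to the connection weight. The function name `hebbian_train` is also hypothetical.

```python
from typing import Sequence


def hebbian_train(pre_spikes: Sequence[int],
                  post_spikes: Sequence[int],
                  weight: float = 0.0,
                  learning_rate: float = 0.1,
                  delay: int = 1) -> float:
    """Strengthen a synapse each time a pre-synaptic spike precedes a post-synaptic one.

    pre_spikes / post_spikes: binary spike trains of equal length (assumed coding).
    delay: assumed number of time steps between the pre- and post-synaptic spike.
    Returns the weight after processing the whole recording.
    """
    if len(pre_spikes) != len(post_spikes):
        raise ValueError("spike trains must have the same length")
    for t in range(delay, len(post_spikes)):
        # Experience (paired spikes) enhances the connection; nothing else changes it.
        if pre_spikes[t - delay] == 1 and post_spikes[t] == 1:
            weight += learning_rate
    return weight


if __name__ == "__main__":
    pre = [1, 0, 1, 0, 0]
    post = [0, 1, 0, 1, 0]  # each post-synaptic spike follows a pre-synaptic spike
    print(hebbian_train(pre, post))
```

This version only ever strengthens connections. Weakening is mentioned in the lecture as part of synaptic learning, but modelling it would need a further assumption about when it happens, so it is left out here.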
[Figure: spikes of a pre-synaptic cell and a post-synaptic cell over time, separated by a delay.]

Hebbian learning: If the pre-synaptic cell causes the post-synaptic cell to fire a spike, then the connection between them will be enhanced. Eventually, this will lead to a path of ‘least resistance’ in the network.

Today... From biology to information processing

At the turn of the 21st century, “how does it work” remains an open question. But even the kernel of understanding and the simplified models we already have for various brain functions are priceless in providing useful intuition and powerful tools for bioinspired computation.

Next time... Artificial neural networks (part 1)

Focus on the simplest cartoon models of biological neural nets. We will build on lessons from today to design simple artificial neurons and networks that perform useful computational tasks.