
Journal rankings: Can’t live with ‘em, can’t live without ‘em!

Professor John Mingers

Kent Business School,

ECU, February 2014 j.mingers@kent.ac.uk

Components of the REF

– Components of the submission

• Staff data

• Output data (65%)

• Impact case studies and contextual narrative (20%)

• Research environment narrative, income and PhD data (15%)

– Outcome reported as a ‘quality profile’
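As a rough illustration of how the three weighted components could combine into one overall quality profile, here is a minimal Python sketch. The weights are the ones listed above; the sub-profile percentages are invented for illustration, and the sketch assumes the overall profile is a plain weighted sum of the sub-profiles (any rounding the REF applies is not modelled).

```python
# Weights as listed above: outputs 65%, impact 20%, environment 15%.
WEIGHTS = {"outputs": 0.65, "impact": 0.20, "environment": 0.15}
LEVELS = ["4*", "3*", "2*", "1*", "unclassified"]

# Hypothetical sub-profiles (% of activity at each level); not real REF data.
SUB_PROFILES = {
    "outputs": [20, 45, 30, 5, 0],
    "impact": [40, 40, 20, 0, 0],
    "environment": [25, 50, 25, 0, 0],
}


def overall_profile(sub_profiles, weights):
    """Weighted sum of the sub-profiles, level by level (assumed method)."""
    return {
        level: sum(weights[part] * sub_profiles[part][i] for part in weights)
        for i, level in enumerate(LEVELS)
    }


profile = overall_profile(SUB_PROFILES, WEIGHTS)
for level, share in profile.items():
    print(f"{level}: {share:.2f}%")
```

Because outputs carry 65% of the weight, the overall profile in this sketch stays close to the output sub-profile.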


Journal rankings

• Many different journal rankings each with its own biases and prejudices

• They are often based on arbitrary criteria. They can be based on peer review or on behavioural measures (e.g., impact factors)

• The original Kent ranking was simply a statistical combination of other rankings: “Objectivity results from a combination of subjectivities” (Ackoff)

• ABS ranking was based on a UWE peer review ranking that was developed for ABS

• Since the 2008 RAE it has become the de facto standard and yet is hugely contentious

Advantages of journal rankings

1. Problems of peer reviewing papers

   1. Simply too time consuming

   2. Disagreements between reviewers (cf. journal referees disagreeing)

   3. Bias

2. Makes more open and transparent what would otherwise be very judgmental and open to bias

3. Provides a common currency against which to discuss and judge research quality

4. Provides clear guidance and targets for people to aim at

5. Provides a lot of information for DoRs, ECRs, libraries etc.

Problems of journal rankings (ABS in particular)

1. History and development

• 2004 Version 1 Bristol BS “Not intended for general circulation”; based on journals submitted to RAE 2001 plus others; grades standardised to 2008 RAE 1* - 4*; decisions made by the editors

(In OR/MS there were only 19 journals, but 6 had the top grade.

In IS/IT there were 25 journals, of which 7 had the top grade.)

• 2007 Version 1 of ABS list; based on the BBS one but with input from subject specialists and use of impact factors; many journals downgraded

Who were the subject experts? How/why were they chosen? Why were disciplinary bodies, e.g., COPIOR, not included?

(In OR there are 40 journals; 5 have the top score, but 3 of these are statistics journals, leaving only 2 OR journals, both American: Management Science and Operations Research.

In IS there are 68 journals, but only 4 top ones, all US.

In both areas, all the UK/Euro ones had been demoted, leaving only US ones.

The people on the Panel were Chris Voss, an Ops Mgt person at LBS, and Bob O’Keefe, more an IS person, who had just returned from the US.)

• 2010 current version. Has become highly contentious, especially in particular fields such as OR, IS and Accounting and Finance.

2. General coverage of management

Numbers of journals in the RAE and the ABS list

| | 1996 | 2001 | 2008 |
|---|---|---|---|
| No. of submissions | 100 | 97 | 90 |
| No. of staff submitted | 2300+ | 3000+ | 3300 |
| Total no. of outputs | 8000+ | 9942 | 12575 |
| No. of journal papers (% of total) [a] | 5494 (69%) | 7973 (80%) | 11625 (92%) |
| No. of journal titles | 1275 | 1582 | 1639 |
| Mean outputs/journal | 4.3 | 5.0 | 7.1 |
| Mean outputs/institution | 80.0 | 102.5 | 139.7 |

Submission statistics for the last three RAEs

Adapted from Geary et al (2004), Bence and Oppenheim (2004), RAE (2009a). [a] Totals differ slightly between different sources. Figures for 2008 are after data cleaning as described later
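The two “mean” rows in the submission statistics can be reproduced from the other rows. The sketch below assumes that mean outputs/journal is journal papers divided by journal titles, and that mean outputs/institution is total outputs divided by submissions; it also treats the 1996 figure “8000+” as exactly 8000. Both are assumptions made for the check, not statements in the source.

```python
# Figures copied from the submission statistics; "8000+" is taken as 8000 (assumption).
RAE = {
    1996: {"submissions": 100, "outputs": 8000, "journal_papers": 5494, "journal_titles": 1275},
    2001: {"submissions": 97, "outputs": 9942, "journal_papers": 7973, "journal_titles": 1582},
    2008: {"submissions": 90, "outputs": 12575, "journal_papers": 11625, "journal_titles": 1639},
}


def derived_means(row):
    """Return (mean outputs per journal, mean outputs per institution), to 1 d.p."""
    per_journal = row["journal_papers"] / row["journal_titles"]
    per_institution = row["outputs"] / row["submissions"]
    return round(per_journal, 1), round(per_institution, 1)


means = {year: derived_means(row) for year, row in RAE.items()}
for year, (per_journal, per_institution) in means.items():
    print(year, per_journal, per_institution)
```

Under these assumptions the recomputed values match the reported ones, which shows the rise from 4.3 to 7.1 outputs per journal comes from journal papers growing much faster than the number of journal titles.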

| Output type | Description | 1996 | 2001 | 2008 |
|---|---|---|---|---|
| A | Authored book | | 431 | 285 |
| B | Edited book | | 77 | 60 |
| C | Chapter in book | | 863 | 332 |
| D | Journal article | | 7973 | 11374 |
| E | Conference contribution | | 295 | 85 |
| G | Software | | 3 | 1 |
| H | Internet publication | | 24 | 318 |
| N | Research report for external body | | 80 | 98 |
| T | Other form of assessable output | | 184 | 22 |
| | Total | | 9942 | 12575 |

Number of publications by output type

Adapted from Geary et al (2004), Bence and Oppenheim (2004), RAE (2009a). Categories with zero entries have been suppressed

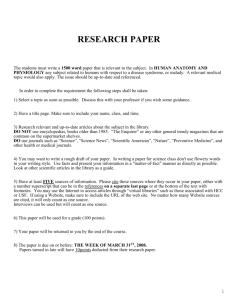

Figure 1 Pareto curve for the number of entries per journal in the 2008 RAE

3. Disciplinary coverage

• There are 22 different subject areas in the list, which seems like a lot. The categorisation is also very ad hoc:

“an eclectic mix of categories consisting of: academic disciplines, business functions, industries, sectors, issues or interests as well as more or less residual categories which includes many of the leading business and management journals”

Rowlinson, 2013

• Much of the list is devoted to reference disciplines rather than to B&M and applied areas – Economics (16%), Psychology (5%), Social science (7%) – nearly 30% in total.

But: Gen Man (4%), HR (4%), Marketing (7%), OR (4%), Strategy (2%)

(In OR, ABS had 35, but COPIOR list has 68 and is growing)

• Unequal proportions of 4* journals across subject areas:

Psychology (42%), Gen Mgt (23%), Soc Sci (20%), Econ (13%), HR (11%), Marketing (9%), Fin (7%), Ops Mgt (3%), Ethics/governance (0%), Mgt Ed (0%)

(In OR, there are four 4* journals, so apparently 11%, but in fact 2 are statistics journals, so in reality 6%)

• Specific disciplinary factors (e.g., why is Business History a 4*?). See Accounting Education December 2011 for critiques from an Accounting perspective

(The two 4* OR journals are American and specifically exclude Soft OR, which is one of the major British strengths)

• Over-reliance on ISI impact factors – journals not in ISI are ignored or at least lowly graded

4. Problems of process

• Lack of openness about how the list is created or updated

• Lack of engagement with disciplinary communities

(No members of COPIOR on the committee despite our offers)

• Few changes made despite protests; little attempt to address the criticisms

(COPIOR overtures were ignored, so reluctantly we produced our own ranking. This too has been ignored)

• Is it now seen as a money-making venture?

Problems with a single dominant list

1. Journals outside the list are inevitably marginalised

2. With the REF, journals at 1*, and increasingly at 2*, are devalued

3. It’s very hard for new journals to get started

4. The quality levels given in the list tend to be taken as the quality levels of the journal, then of the papers within it, and then of the authors: “It’s a 3* paper”, “Jane Bloggs is a 4* researcher”

5. Individual researchers are disciplined into channelling their work into ABS journals

6. It discourages cross-disciplinary or applied work

7. The particular focus of ABS appears to be on US journals, which tend to be highly theoretical, positivistic, and anti-pluralist. This leads to less practical and engaged work and more arcane theory

8. Ideas or work that is pushing the boundaries will not get published and hence will not get done

9. Journal fetishism - gimme that 4* “hit”

10. Potentially serious effect on people’s careers and sections of Schools – e.g., OR at Warwick, which was decimated

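| Grade | ABS grades: all journals (col 1) | ABS grades: journals not in RAE (col 2) | ABS grades: journals in RAE and our list (col 3) | RAE estimated grades: all our list (col 4) | RAE estimated grades: journals not in ABS (col 5) | RAE estimated grades: journals in ABS and our list (col 6) |
|---|---|---|---|---|---|---|
| 4* | 10% | 4% | 15% | 17% | 13% | 18% |
| 3* | 24% | 12% | 31% | 29% | 28% | 31% |
| 2* | 37% | 39% | 37% | 28% | 26% | 28% |
| 1* | 27% | 45% | 17% | 23% | 19% | 22% |
| 0* | | | | 3% | 13% | 2% |
| GPA | 2.17 | 1.74 | 2.43 | 2.34 | 2.09 | 2.41 |

Table 9 Proportions of journals in particular ranks comparing ABS with RAE grades

Note: we show the proportions in terms of % for ease of comparison but all Chi-Square tests were performed on the underlying frequencies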
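The GPA figures in Table 9 can be approximately recovered from the grade proportions. The sketch below assumes that GPA is the grade-weighted mean of the proportions (4 points for 4*, down to 0 for 0*), normalised by the column total because the rounded percentages do not always sum to 100. That definition is an assumption consistent with the reported values, not one stated in the source, and recomputing from rounded percentages can differ from the reported GPA in the second decimal place.

```python
GRADE_POINTS = {"4*": 4, "3*": 3, "2*": 2, "1*": 1, "0*": 0}

# Rounded percentages from Table 9, with the reported GPA for comparison.
COLUMNS = {
    "ABS: all journals": ({"4*": 10, "3*": 24, "2*": 37, "1*": 27}, 2.17),
    "ABS: not in RAE": ({"4*": 4, "3*": 12, "2*": 39, "1*": 45}, 1.74),
    "ABS: in RAE and our list": ({"4*": 15, "3*": 31, "2*": 37, "1*": 17}, 2.43),
    "RAE: all our list": ({"4*": 17, "3*": 29, "2*": 28, "1*": 23, "0*": 3}, 2.34),
    "RAE: not in ABS": ({"4*": 13, "3*": 28, "2*": 26, "1*": 19, "0*": 13}, 2.09),
    "RAE: in ABS and our list": ({"4*": 18, "3*": 31, "2*": 28, "1*": 22, "0*": 2}, 2.41),
}


def gpa(profile):
    """Grade-weighted mean of a profile given as {grade: share}."""
    total = sum(profile.values())
    weighted = sum(GRADE_POINTS[grade] * share for grade, share in profile.items())
    return weighted / total


recomputed = {name: gpa(profile) for name, (profile, _) in COLUMNS.items()}
for name, (_, reported) in COLUMNS.items():
    print(f"{name}: recomputed {recomputed[name]:.2f}, reported {reported:.2f}")
```

The recomputed values agree with the reported ones to within about 0.01, which is the size of discrepancy expected from working with rounded percentages rather than the underlying frequencies.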

Conclusions from Table 9

• Overall RAE grades were higher than overall ABS grades (cols 1, 4) but this was because of selectivity of submissions

• This can be seen by comparing the ABS journals not submitted with those submitted (cols 2, 3)

• Comparing those journals that are in common, the level of grading is very similar (cols 3, 6)

• In the RAE, ABS journals were graded more highly than non-ABS journals (cols 5, 6)

• 13% of non-ABS journals were graded 0*

Figure 3 Scattergram showing association between GPA and proportion of an institution’s submitted journals that are in ABS

There are at least 3 possible explanations of this, each linking a higher % of ABS journals, higher quality of department and better RAE grades in a different way:

• “RAE Bias”

• “Better depts. more mainstream”

• “Greater selectivity”

Problems with the whole RAE regime

• Current measurement regimes are hugely distorting to research:

• Narrow focus on types of outputs – i.e., “4*” English language journal articles

• Narrow focus on types of measurements

• Narrow focus on types of impact

• The RAE/REF has had a huge negative effect on the overall contribution of research in the UK – lack of innovation, opening up new areas; lack of major projects (books); lack of engaged research trying to deal with the real problems of our society and environment

• Concentration on peer review and rejection of bibliometrics (current REF Panel)

– leads to maintenance of the status quo – “The Golden Triangle”

• Should we stop now and develop a system that aims to evaluate quality in a variety of forms, a variety of media, through a variety of measures with the ultimate goal of answering significant questions?

N. Adler and A-W Harzing, 2009, “When Knowledge Wins: Transcending the Sense and Nonsense of Academic Rankings”, Academy of Management Learning and Education 8, 1, pp. 72-95

S. Hussain, 2013, “Journal Fetishism and the ‘Sign of the 4’ in the ABS guide: A Question of Trust?”, Organization (online publication)

S. Hussain, 2011, “Food for Thought on the ABS Academic Journal Quality Guide”, Accounting Education 20, pp. 545-559

H. Willmott, 2011, “Journal List Fetishism and the Perversion of Scholarship: Reactivity and the ABS List”, Organization 18, 4, pp. 429-442

J. Mingers and L. Leydesdorff, 2013, “Identifying Research Fields within Business and Management: A Journal Cross-Citation Analysis”, available from http://arxiv.org/abs/1212.6773

J. Mingers and H. Willmott, 2012, “Taylorizing Business School Research: On the ‘One Best Way’ Performative Effects of Journal Ranking Lists”, Human Relations, DOI: 10.1177/0018726712467048

J. Mingers, K. Watson and M. P. Scaparra, 2012, “Estimating Business and Management Journal Quality from the 2008 Research Assessment Exercise in the UK”, Information Processing and Management 48, 6, pp. 1078-1093, http://dx.doi.org/10.1016/j.ipm.2012.01.008

J. Mingers, F. Macri and D. Petrovici, 2011, “Using the h-index to Measure the Quality of Journals in the field of Business and Management”, Information Processing and Management 48, 2, pp. 234-241, http://dx.doi.org/10.1016/j.ipm.2011.03.009

J. Mingers, 2009, “Measuring the Research Contribution of Management Academics using the Hirsch-Index”, J. of the Operational Research Society 60, 8, pp. 1143-1153, doi 10.1057/jors.2008.94

J. Mingers and A.-W. Harzing, 2007, “Ranking Journals in Business and Management: A Statistical Analysis of the Harzing Database”, European J. of Information Systems 16, 4, pp. 303-316, http://www.palgravejournals.com/ejis/journal/v16/n4/pdf/3000696a.pdf

J. Mingers and Q. Burrell, 2006, “Modelling Citation Behavior in Management Science Journals”, Information Processing and Management 42, 6, pp. 1451-1464

H. Morris, C. Harvey, A. Kelly and M. Rowlinson, 2011, “Food for Thought? A Rejoinder on Peer Review and the RAE 2008 Evidence”, Accounting Education 20, pp. 561-573

D. Tourish, 2011, “Leading Questions: Journal Rankings, Academic Freedom, and Performativity: What is or Should be the Future of Leadership?”, Leadership 7, pp. 367-381