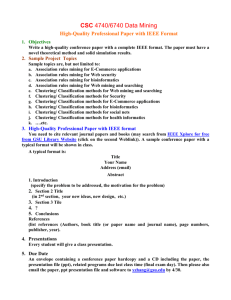


Machine Learning

CSE 5820: Machine Learning

Instructor: Jinbo Bi

Computer Science and Engineering Dept.


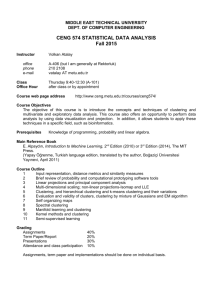

Course Information

Instructor: Dr. Jinbo Bi

– Office: ITEB 233

– Phone: 860-486-1458

– Email: jinbo@engr.uconn.edu

– Web: http://www.engr.uconn.edu/~jinbo/

– Time: Tue / Thur. 2:00pm – 3:15pm

– Location: BCH 302

– Office hours: Thur. 3:15-4:15pm

HuskyCT

– http://learn.uconn.edu

– Login with your NetID and password

– Illustration


Introduction of the instructor and TA

Ph.D. in Mathematics

Research interests: machine learning, data mining, optimization, biomedical informatics, bioinformatics

(Figure: example research projects: color of flowers; cancer, psychiatric disorders, …; subtyping; GWAS; EasyBreathing, http://labhealthinfo.uconn.edu/EasyBreathing)


Course Information

Prerequisites: basic linear algebra, calculus, optimization, and programming

Course textbooks (not required):

– Introduction to Data Mining (2005) by Pang-Ning Tan, Michael Steinbach, and Vipin Kumar

– Pattern Recognition and Machine Learning (2006) by Christopher M. Bishop

– Pattern Classification (2nd edition, 2000) by Richard O. Duda, Peter E. Hart, and David G. Stork

Additional class notes and copied materials will be distributed

Links to reading material will be provided


Course Information

Objectives:

– Introduce students to the basic concepts of machine learning and to state-of-the-art machine learning algorithms

– Focus on several high-demand application domains, with hands-on experience applying data mining / machine learning techniques

Format:

– Lectures, a micro-teaching assignment, quizzes, and a term project


Grading

Micro-teaching assignment (1): 20%

In-class/in-lab open-book, open-notes quizzes (4-5): 40%

Term project (1): 30%

Participation: 10%

There is one term project per team. A team can consist of one or two students. Each student on the team needs to specify his/her role in the project.

Term projects can be chosen from a list of pre-defined projects


Policy

Computers

Participation in micro-teaching sessions is very important and by itself accounts for 50% of the credit for the micro-teaching assignment

Quizzes are graded by the instructor

Final term projects will be graded by the instructor

If you miss two quizzes, there will be a take-home quiz to make up the credit (missing one may be OK for your final grade)


Micro-teaching sessions

Students in our class need to form THREE roughly even study groups

The instructor will help balance the study groups

Each study group will be responsible for teaching one specific topic chosen from the following:

– Support Vector Machines

– Spectral Clustering

– Boosting (PAC learning model)


Term Project

Each team needs to give two presentations: a progress or preparation presentation (10-15 min) and a final presentation in the last week (15-20 min)

Each team needs to submit a project report covering:

– Definition of the problem

– Data mining approaches used to solve the problem

– Computational results

– Conclusion (success or failure)


Machine Learning / Data Mining

Data mining (sometimes called data or knowledge discovery) is the process of analyzing data from different perspectives and summarizing it into useful information.

The ultimate goal of machine learning is the creation and understanding of machine intelligence.

– http://www.kdd.org/kdd2013/ (ACM SIGKDD conference)

– http://icml.cc/2013/ (ICML conference)

The main goal of statistical learning theory is to provide a framework for studying the problem of inference, that is, of gaining knowledge, making predictions, and making decisions from a set of data.

– http://nips.cc/Conferences/2012/ (NIPS conference)


Traditional Topics in Data Mining / AI

Fuzzy sets and fuzzy logic

– Fuzzy if-then rules

Evolutionary computation

– Genetic algorithms

– Evolutionary strategies

Artificial neural networks

– Back-propagation network (supervised learning)

– Self-organizing network (unsupervised learning, will not be covered)


Challenges in traditional techniques

Lack of theoretical analysis of the algorithms' behavior

Traditional techniques may be unsuitable due to:

– Enormity of data

– High dimensionality of data

– Heterogeneous, distributed nature of data

(Figure: overlapping fields: Statistics/AI, Machine Learning/Pattern Recognition, Soft Computing)


Recent Topics in Data Mining

Supervised learning, such as classification and regression

– Support vector machines

– Regularized least squares

– Fisher discriminant analysis (LDA)

– Graphical models (Bayesian nets)

– Boosting algorithms

Drawn from machine learning domains


Recent Topics in Data Mining

Unsupervised learning such as clustering

– K-means

– Gaussian mixture models

– Hierarchical clustering

– Graph based clustering (spectral clustering)

Dimension reduction

– Feature selection

– Compress the feature space into a low-dimensional space (principal component analysis)
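A minimal PCA sketch, assuming NumPy and a data matrix `X` with one example per row: center the data, take the SVD of the centered matrix, and project onto the top-k right singular vectors. The function name `pca_project` and the toy data (3-D points scattered near a line) are illustrative choices, not part of the course material.

```python
import numpy as np

def pca_project(X, k):
    """Project the rows of X onto the top-k principal components (a minimal sketch)."""
    Xc = X - X.mean(axis=0)                    # center each feature
    # Rows of Vt are principal directions, ordered by decreasing singular value
    _, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:k]                        # top-k directions, shape (k, d)
    Z = Xc @ components.T                      # low-dimensional coordinates, shape (n, k)
    explained = s[:k] ** 2 / np.sum(s ** 2)    # fraction of variance captured
    return Z, components, explained

rng = np.random.default_rng(0)
# 3-D points lying close to a 1-D line, plus small noise
t = rng.normal(size=(200, 1))
X = t @ np.array([[1.0, 2.0, -1.0]]) + 0.05 * rng.normal(size=(200, 3))
Z, comps, explained = pca_project(X, k=1)
print(Z.shape, explained)
```

Because almost all of the variance lies along one direction, a single component keeps nearly all of the information in the three original features.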


Statistical Behavior

There are many perspectives from which to analyze how an algorithm handles uncertainty

Simple examples:

– Consistency analysis

– Learning bounds (an upper bound on the test error of the constructed model or solution)

“Statistical”, not “deterministic”

– With probability p, the upper bound holds:

$P(\text{test error} \le \text{Upper\_bound}) \ge p$
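One concrete, standard instance of such a statement (not necessarily the bound used later in the course), assuming a single fixed classifier evaluated on n i.i.d. test examples with 0/1 loss: by Hoeffding's inequality, with probability at least 1 − δ the true error is at most the measured test error plus √(ln(1/δ)/(2n)). The simulation uses a made-up true error rate and counts how often the bound actually holds; bounds for a model selected from a whole hypothesis class need additional terms.

```python
import math
import random

def hoeffding_upper_bound(test_error, n, delta):
    """Bound on the true error that holds with probability >= 1 - delta
    (one-sided Hoeffding bound, fixed classifier, n i.i.d. test examples)."""
    return test_error + math.sqrt(math.log(1.0 / delta) / (2.0 * n))

def coverage(true_error=0.2, n=200, delta=0.05, trials=2000, seed=0):
    """Fraction of simulated test sets on which the bound holds."""
    rng = random.Random(seed)
    held = 0
    for _ in range(trials):
        # Each test example is misclassified independently with prob. true_error
        mistakes = sum(rng.random() < true_error for _ in range(n))
        if true_error <= hoeffding_upper_bound(mistakes / n, n, delta):
            held += 1
    return held / trials

print(hoeffding_upper_bound(0.15, n=200, delta=0.05))
print(coverage())
```

The observed coverage is typically well above 1 − δ, since Hoeffding's inequality is conservative.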


Tasks in Data Mining

Prediction tasks (supervised problems)

– Use some variables to predict unknown or future values of other variables.

Description tasks (unsupervised problems)

– Find human-interpretable patterns that describe the data.

From [Fayyad et al.], Advances in Knowledge Discovery and Data Mining, 1996


Classification: Definition

Given a collection of examples (training set)

– Each example contains a set of attributes; one of the attributes is the class.

Find a model for the class attribute as a function of the values of the other attributes.

Goal: previously unseen examples should be assigned a class as accurately as possible.

– A test set is used to determine the accuracy of the model. Usually, the given data set is divided into training and test sets, with the training set used to build the model and the test set used to validate it.


Classification Example

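Training set:

| Tid | Refund | Marital Status | Taxable Income | Cheat |
|---|---|---|---|---|
| 1 | Yes | Single | 125K | No |
| 2 | No | Married | 100K | No |
| 3 | No | Single | 70K | No |
| 4 | Yes | Married | 120K | No |
| 5 | No | Divorced | 95K | Yes |
| 6 | No | Married | 60K | No |
| 7 | Yes | Divorced | 220K | No |
| 8 | No | Single | 85K | Yes |
| 9 | No | Married | 75K | No |
| 10 | No | Single | 90K | Yes |

Test set:

| Refund | Marital Status | Taxable Income | Cheat |
|---|---|---|---|
| No | Single | 75K | ? |
| Yes | Married | 50K | ? |
| No | Married | 150K | ? |
| Yes | Divorced | 90K | ? |
| No | Single | 40K | ? |
| No | Married | 80K | ? |

(Diagram: Training Set → Learn Classifier → Model; the model is then applied to the Test Set)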
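To make the diagram concrete, here is a hand-written decision tree that is consistent with all ten training records. It is a hypothetical model, with splits picked by inspection rather than produced by any particular learning algorithm, and it is then applied to the six test records. Taxable income is in thousands.

```python
# Training set: (Tid, Refund, Marital Status, Taxable Income in K, Cheat)
TRAIN = [
    (1, "Yes", "Single", 125, "No"),
    (2, "No", "Married", 100, "No"),
    (3, "No", "Single", 70, "No"),
    (4, "Yes", "Married", 120, "No"),
    (5, "No", "Divorced", 95, "Yes"),
    (6, "No", "Married", 60, "No"),
    (7, "Yes", "Divorced", 220, "No"),
    (8, "No", "Single", 85, "Yes"),
    (9, "No", "Married", 75, "No"),
    (10, "No", "Single", 90, "Yes"),
]
# Test set: (Refund, Marital Status, Taxable Income in K); class unknown
TEST = [
    ("No", "Single", 75),
    ("Yes", "Married", 50),
    ("No", "Married", 150),
    ("Yes", "Divorced", 90),
    ("No", "Single", 40),
    ("No", "Married", 80),
]

def classify(refund, marital_status, income_k):
    """Hypothetical decision tree; the split values were chosen by hand."""
    if refund == "Yes":
        return "No"
    if marital_status == "Married":
        return "No"
    # Single or Divorced with no refund: split on taxable income
    return "Yes" if income_k >= 80 else "No"

train_accuracy = sum(
    classify(r, m, inc) == label for _, r, m, inc, label in TRAIN
) / len(TRAIN)
predictions = [classify(*row) for row in TEST]
print(train_accuracy, predictions)
```

Every test record happens to land in a "No" leaf of this particular tree; another tree that also fits all ten training records could predict differently, which is why a model is judged on held-out data rather than on its training accuracy.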


Classification: Application 1

High-Risk Patient Detection

– Goal: Predict whether a patient will suffer a major complication after a surgical procedure

– Approach:

Use patients' vital signs before and after the surgical operation.

– Heart rate, respiratory rate, etc.

Have expert medical professionals monitor patients and label which patients have complications and which do not.

Learn a model for the class of after-surgery risk.

Use this model to detect potential high-risk patients for a particular surgical procedure


Classification: Application 2

Face recognition

– Goal: Predict the identity of a face image

– Approach:

Align all images to derive the features

Model the class (identity) based on these features


Classification: Application 3

Cancer Detection

– Goal: To predict the class (cancer or normal) of a sample (person) based on microarray gene expression data

– Approach:

Use the expression levels of all genes as the features

Label each example as cancer or normal

Learn a model for the class of all samples


Classification: Application 4

Alzheimer's Disease Detection

– Goal: To predict the class (AD or normal) of a sample (person) based on neuroimaging data such as MRI and PET

– Approach:

Extract features from neuroimages

Label each example as AD or normal

Learn a model for the class of all samples

(Figure: reduced gray matter volume (colored areas) detected by MRI voxel-based morphometry in AD patients compared to normal healthy controls.)


Regression

Predict the value of a real-valued variable based on the values of other variables, assuming a linear or nonlinear model of dependency.

Extensively studied in the statistics and neural network fields.

Find a model to predict the dependent variable as a function of the values of the independent variables.

Goal: previously unseen examples should be predicted as accurately as possible.

– A test set is used to determine the accuracy of the model. Usually, the given data set is divided into training and test sets, with the training set used to build the model and the test set used to validate it.
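A minimal sketch of this train/test protocol for regression, assuming NumPy and synthetic data drawn from a made-up linear model (y ≈ 3x + 2 plus noise): fit ordinary least squares on the training part, then report mean squared error on the held-out test part.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 100
x = rng.uniform(0, 10, size=n)
y = 3.0 * x + 2.0 + rng.normal(scale=1.0, size=n)   # synthetic data

# Design matrix with a column of ones for the intercept
X = np.column_stack([np.ones(n), x])
# Hold out the last 30 examples as the test set (x is already random order)
X_train, X_test = X[:70], X[70:]
y_train, y_test = y[:70], y[70:]

# Ordinary least squares: minimize ||X w - y||^2 on the training set only
w, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)

test_mse = np.mean((X_test @ w - y_test) ** 2)
print("intercept, slope:", w, "test MSE:", test_mse)
```

The test MSE should come out close to the noise variance (1.0 here), since the fitted line is close to the true one.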


Regression application 1

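Goal: predict the possible loss from a customer

Training set (past transaction records, labeled with the loss):

| Tid | Refund | Marital Status | Taxable Income | Loss |
|---|---|---|---|---|
| 1 | Yes | Single | 125K | 100 |
| 2 | No | Married | 100K | 120 |
| 3 | No | Single | 70K | -200 |
| 4 | Yes | Married | 120K | -300 |
| 5 | No | Divorced | 95K | -400 |
| 6 | No | Married | 60K | -500 |
| 7 | Yes | Divorced | 220K | -190 |
| 8 | No | Single | 85K | 300 |
| 9 | No | Married | 75K | -240 |
| 10 | No | Single | 90K | 90 |

Test set (current data, on which we want to use the model to predict the loss):

| Refund | Marital Status | Taxable Income | Loss |
|---|---|---|---|
| No | Single | 75K | ? |
| Yes | Married | 50K | ? |
| No | Married | 150K | ? |
| Yes | Divorced | 90K | ? |
| No | Single | 40K | ? |
| No | Married | 80K | ? |

(Diagram: Training Set → Learn Regressor → Model; the model is then applied to the Test Set)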


Regression applications

Examples:

– Predicting sales amounts of a new product based on advertising expenditure.

– Predicting wind velocities as a function of temperature, humidity, air pressure, etc.

– Time series prediction of stock market indices.


Clustering Definition

Given a set of data points, each having a set of attributes, and a similarity measure among them, find clusters such that

– Data points in one cluster are more similar to one another.

– Data points in separate clusters are less similar to one another.

Similarity measures:

– Euclidean distance if attributes are continuous.

– Other problem-specific measures
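For continuous attributes, the two criteria can be checked directly by comparing the average pairwise Euclidean distance within clusters to the average distance between clusters. The six toy points and their cluster labels are made up for illustration.

```python
import math
from itertools import combinations

def euclidean(p, q):
    """Euclidean distance between two points given as equal-length sequences."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def intra_inter(points, labels):
    """Average pairwise distance within clusters and between clusters."""
    intra, inter = [], []
    for i, j in combinations(range(len(points)), 2):
        d = euclidean(points[i], points[j])
        (intra if labels[i] == labels[j] else inter).append(d)
    return sum(intra) / len(intra), sum(inter) / len(inter)

points = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (10, 10, 10), (11, 10, 10), (10, 11, 10)]
labels = [0, 0, 0, 1, 1, 1]
avg_intra, avg_inter = intra_inter(points, labels)
print(avg_intra, avg_inter)   # a good clustering has avg_intra much smaller than avg_inter
```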


Illustrating Clustering

Euclidean-distance-based clustering in 3-D space:

– Intracluster distances are minimized

– Intercluster distances are maximized


Clustering: Application 1

High-Risk Patient Detection

– Goal: Predict whether a patient will suffer a major complication after a surgical procedure

– Approach:

Use patients' vital signs before and after the surgical operation.

– Heart rate, respiratory rate, etc.

Find patients whose symptoms are dissimilar from those of most other patients.


Clustering: Application 2

Document Clustering:

– Goal: To find groups of documents that are similar to each other based on the important terms appearing in them.

– Approach: Identify frequently occurring terms in each document, form a similarity measure based on the frequencies of different terms, and use it to cluster.

– Gain: Information retrieval can use the clusters to relate a new document or search term to clustered documents.
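A small sketch of this approach, assuming term-frequency vectors compared with cosine similarity as the "similarity measure based on the frequencies of different terms" (the slide does not name a specific measure). The three toy documents and the stop-word list are made up.

```python
import math
import re
from collections import Counter

STOP_WORDS = {"the", "a", "of", "in", "and", "to"}   # hypothetical word filter

def term_frequencies(text):
    """Lower-case the text, split it into words, drop stop words, and count terms."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(w for w in words if w not in STOP_WORDS)

def cosine_similarity(tf1, tf2):
    """Cosine of the angle between two term-frequency vectors."""
    dot = sum(tf1[t] * tf2[t] for t in tf1.keys() & tf2.keys())
    norm1 = math.sqrt(sum(c * c for c in tf1.values()))
    norm2 = math.sqrt(sum(c * c for c in tf2.values()))
    return dot / (norm1 * norm2) if norm1 and norm2 else 0.0

docs = [
    "The team won the game in the final minute of the game",
    "A late goal won the game for the home team",
    "Stock prices fell as the market reacted to interest rates",
]
tfs = [term_frequencies(d) for d in docs]
print(cosine_similarity(tfs[0], tfs[1]), cosine_similarity(tfs[0], tfs[2]))
```

The two sports documents share terms and get a positive similarity, while the finance document shares none with the first and scores 0; a clustering algorithm run on these similarities would group the first two together.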


Illustrating Document Clustering

Clustering points: 3204 articles from the Los Angeles Times.

Similarity measure: how many words are common to these documents (after some word filtering).

| Category | Total Articles | Correctly Placed |
|---|---|---|
| Financial | 555 | 364 |
| Foreign | 341 | 260 |
| National | 273 | 36 |
| Metro | 943 | 746 |
| Sports | 738 | 573 |
| Entertainment | 354 | 278 |
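The results can be summarized with a little arithmetic: the overall fraction of articles placed correctly and the fraction per category. The counts are copied from the LA Times table.

```python
# (category, total articles, correctly placed), from the LA Times table
RESULTS = [
    ("Financial", 555, 364),
    ("Foreign", 341, 260),
    ("National", 273, 36),
    ("Metro", 943, 746),
    ("Sports", 738, 573),
    ("Entertainment", 354, 278),
]

total = sum(t for _, t, _ in RESULTS)
correct = sum(c for _, _, c in RESULTS)
print(f"overall: {correct}/{total} = {correct / total:.3f}")
for name, t, c in RESULTS:
    print(f"{name:<14} {c / t:.3f}")
```

This gives 2257 of 3204 articles (about 70%) placed correctly, with National the clear outlier at about 13%.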


Algorithms to solve these problems


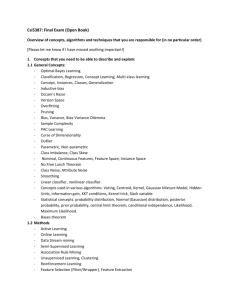

Classification algorithms

K-Nearest-Neighbor classifiers

Naïve Bayes classifier

Neural Networks

Linear Discriminant Analysis (LDA)

Support Vector Machines (SVM)

Decision Trees

Logistic Regression

Graphical models
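As an example of the first method in the list, here is a minimal k-nearest-neighbor classifier: predict the majority label among the k training points closest to the query in Euclidean distance. The toy 2-D data and the function name `knn_predict` are illustrative.

```python
import math
from collections import Counter

def knn_predict(train_X, train_y, x, k=3):
    """Majority vote among the k training points nearest to x (Euclidean distance)."""
    dists = sorted((math.dist(xi, x), yi) for xi, yi in zip(train_X, train_y))
    votes = Counter(label for _, label in dists[:k])
    return votes.most_common(1)[0][0]

train_X = [(1, 1), (1, 2), (2, 1), (6, 6), (6, 7), (7, 6)]
train_y = ["A", "A", "A", "B", "B", "B"]
print(knn_predict(train_X, train_y, (2, 2)), knn_predict(train_X, train_y, (5, 6)))   # A B
```

There is no training phase beyond storing the data; all the work happens at prediction time, which is why k-NN becomes expensive on large training sets.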


Regression methods

Linear Regression

Ridge Regression

LASSO – Least Absolute Shrinkage and

Selection Operator

Neural Networks
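Ridge regression adds an L2 penalty λ‖w‖² to the least-squares objective, and its minimizer has the closed form w = (XᵀX + λI)⁻¹Xᵀy. A minimal NumPy sketch on made-up data, showing that the weights shrink as λ grows; λ = 0 recovers ordinary linear regression, and no intercept is fitted, for brevity.

```python
import numpy as np

def ridge(X, y, lam):
    """Closed-form ridge solution: argmin_w ||X w - y||^2 + lam * ||w||^2."""
    d = X.shape[1]
    # Solve (X^T X + lam I) w = X^T y instead of forming an explicit inverse
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y)

rng = np.random.default_rng(1)
X = rng.normal(size=(50, 5))
true_w = np.array([2.0, -1.0, 0.0, 0.5, 3.0])
y = X @ true_w + 0.1 * rng.normal(size=50)

for lam in [0.0, 1.0, 10.0, 100.0]:
    w = ridge(X, y, lam)
    print(lam, np.round(w, 3), "norm:", round(float(np.linalg.norm(w)), 3))
```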


Clustering algorithms

K-Means

Hierarchical clustering

Graph-based clustering (spectral clustering)

Semi-supervised clustering

Others
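A minimal sketch of K-means using Lloyd's algorithm, the standard way it is computed: alternate between assigning each point to its nearest center and moving each center to the mean of its assigned points. Initializing with randomly chosen data points and the toy data are assumptions made for this sketch.

```python
import math
import random

def kmeans(points, k, iters=100, seed=0):
    """Lloyd's algorithm for K-means on a list of equal-length tuples."""
    rng = random.Random(seed)
    centers = rng.sample(points, k)          # initialize with k distinct data points
    for _ in range(iters):
        # Assignment step: index of the nearest center for every point
        labels = [min(range(k), key=lambda c: math.dist(p, centers[c])) for p in points]
        # Update step: move each center to the mean of its assigned points
        new_centers = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centers.append(tuple(sum(col) / len(members) for col in zip(*members)))
            else:
                new_centers.append(centers[c])   # keep an empty cluster's center
        if new_centers == centers:               # converged: centers stopped moving
            break
        centers = new_centers
    return centers, labels

points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (8.5, 9.0), (9.0, 8.0)]
centers, labels = kmeans(points, k=2)
print(centers, labels)
```

Lloyd's algorithm only finds a local optimum that depends on the initialization, so in practice it is often restarted from several random initializations.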


Challenges of Data Mining

Scalability

Dimensionality

Complex and Heterogeneous Data

Data Quality

Data Ownership and Distribution

Privacy Preservation


Basics of probability

An experiment (random variable) is a well-defined process with observable outcomes.

The set or collection of all outcomes of an experiment is called the sample space, S.

An event E is any subset of outcomes from S.

If all outcomes in S are equally likely, the probability of an event E is P(E) = (number of outcomes in E) / (number of outcomes in S).
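With equally likely outcomes, P(E) is just a ratio of counts. A tiny sketch using one roll of a fair six-sided die (an example chosen here, not from the slides) and exact fractions:

```python
from fractions import Fraction

S = {1, 2, 3, 4, 5, 6}                 # sample space: one roll of a fair die
E = {s for s in S if s % 2 == 0}       # event: the roll is even

def prob(event, sample_space):
    """P(E) = |E| / |S|, valid only when all outcomes are equally likely."""
    return Fraction(len(event & sample_space), len(sample_space))

print(prob(E, S))                          # 1/2
print(prob({s for s in S if s > 4}, S))    # 1/3
```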


Probability Theory

Apples and Oranges

X: identity of the fruit (a = apple, o = orange)

Y: identity of the box (r = red, b = blue)

Assume P(Y=r) = 40%, P(Y=b) = 60% (prior)

P(X=a|Y=r) = 2/8 = 25%

P(X=o|Y=r) = 6/8 = 75%

P(X=a|Y=b) = 3/4 = 75%

P(X=o|Y=b) = 1/4 = 25%

Marginal: P(X=a) = 11/20, P(X=o) = 9/20

Posterior: P(Y=r|X=o) = 2/3, P(Y=b|X=o) = 1/3


Probability Theory

Marginal Probability

Conditional Probability

Joint Probability


Probability Theory

Sum Rule

The marginal probability of X equals the sum of the joint probability of X and Y over Y: $p(X) = \sum_Y p(X, Y)$

Product Rule

The joint probability of X and Y equals the product of the conditional probability of Y given X and the probability of X: $p(X, Y) = p(Y \mid X)\, p(X)$


Illustration

(Figure: the joint distribution p(X,Y) for Y=1 and Y=2, the marginals p(X) and p(Y), and the conditional p(X|Y=1))


The Rules of Probability

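Sum Rule

$p(X) = \sum_Y p(X, Y)$

Product Rule

$p(X, Y) = p(Y \mid X)\, p(X) = p(X \mid Y)\, p(Y)$

Bayes’ Rule

$p(Y \mid X) = \dfrac{p(X \mid Y)\, p(Y)}{p(X)}, \quad p(X) = \sum_Y p(X \mid Y)\, p(Y)$

posterior ∝ likelihood × prior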


Application of Prob Rules

Assume P(Y=r) = 40%, P(Y=b) = 60%

P(X=a|Y=r) = 2/8 = 25%

P(X=o|Y=r) = 6/8 = 75%

P(X=a|Y=b) = 3/4 = 75%

P(X=o|Y=b) = 1/4 = 25%

p(X=a) = p(X=a,Y=r) + p(X=a,Y=b)

= p(X=a|Y=r)p(Y=r) + p(X=a|Y=b)p(Y=b)

= 0.25*0.4 + 0.75*0.6 = 11/20

P(X=o) = 1 − P(X=a) = 9/20

p(Y=r|X=o) = p(Y=r,X=o)/p(X=o)

= p(X=o|Y=r)p(Y=r)/p(X=o)

= 0.75*0.4 / (9/20) = 2/3
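The same calculation in code, using exact fractions so the results can be compared directly with 11/20, 9/20 and 2/3; the dictionary layout is just one convenient encoding of the prior and the conditionals.

```python
from fractions import Fraction as F

prior = {"r": F(2, 5), "b": F(3, 5)}       # P(Y=r) = 40%, P(Y=b) = 60%
likelihood = {                              # P(X | Y)
    "r": {"a": F(2, 8), "o": F(6, 8)},
    "b": {"a": F(3, 4), "o": F(1, 4)},
}

def marginal(x):
    """Sum rule + product rule: P(X=x) = sum_y P(X=x | Y=y) P(Y=y)."""
    return sum(likelihood[y][x] * prior[y] for y in prior)

def posterior(y, x):
    """Bayes' rule: P(Y=y | X=x) = P(X=x | Y=y) P(Y=y) / P(X=x)."""
    return likelihood[y][x] * prior[y] / marginal(x)

print(marginal("a"), marginal("o"))                # 11/20 9/20
print(posterior("r", "o"), posterior("b", "o"))    # 2/3 1/3
```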


Mean and Variance

The mean of a random variable X is the average value X takes, weighted by probability: $E[X] = \sum_x x\, p(x)$.

The variance of X is a measure of how dispersed the values that X takes are: $\mathrm{Var}[X] = E[(X - E[X])^2]$.

The standard deviation is simply the square root of the variance.


Simple Example

X ∈ {1, 2} with P(X=1) = 0.8 and P(X=2) = 0.2

Mean

– 0.8 × 1 + 0.2 × 2 = 1.2

Variance

– 0.8 × (1 − 1.2)² + 0.2 × (2 − 1.2)² = 0.032 + 0.128 = 0.16
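The example can be checked in a few lines. Floating-point rounding means the printed values may differ from 1.2, 0.16 and 0.4 in the last digits.

```python
import math

values = [1, 2]
probs = [0.8, 0.2]   # P(X=1) = 0.8, P(X=2) = 0.2

mean = sum(x * p for x, p in zip(values, probs))                    # E[X]
variance = sum(p * (x - mean) ** 2 for x, p in zip(values, probs))  # E[(X - E[X])^2]
std = math.sqrt(variance)                                           # standard deviation
print(mean, variance, std)
```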


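The Gaussian Distribution

$\mathcal{N}(x \mid \mu, \sigma^2) = \dfrac{1}{(2\pi\sigma^2)^{1/2}} \exp\left(-\dfrac{(x - \mu)^2}{2\sigma^2}\right)$

Gaussian Mean and Variance

$E[x] = \mu, \quad \mathrm{var}[x] = \sigma^2$

The Multivariate Gaussian

$\mathcal{N}(\mathbf{x} \mid \boldsymbol{\mu}, \boldsymbol{\Sigma}) = \dfrac{1}{(2\pi)^{D/2} |\boldsymbol{\Sigma}|^{1/2}} \exp\left(-\dfrac{1}{2} (\mathbf{x} - \boldsymbol{\mu})^T \boldsymbol{\Sigma}^{-1} (\mathbf{x} - \boldsymbol{\mu})\right)$

(Figure: a two-dimensional Gaussian density over axes x and y)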
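A NumPy sketch of the univariate Gaussian density N(x | μ, σ²) and its D-dimensional counterpart with mean vector μ and covariance Σ. A simple Riemann sum on a fine grid checks that the 1-D density integrates to about 1 with mean μ and variance σ²; the parameter values are arbitrary.

```python
import numpy as np

def gaussian_pdf(x, mu, sigma2):
    """Univariate Gaussian density N(x | mu, sigma2)."""
    return np.exp(-(x - mu) ** 2 / (2 * sigma2)) / np.sqrt(2 * np.pi * sigma2)

def mvn_pdf(x, mu, cov):
    """Multivariate Gaussian density N(x | mu, cov) for a D-dimensional x."""
    D = len(mu)
    diff = x - mu
    quad = diff @ np.linalg.solve(cov, diff)        # (x - mu)^T cov^{-1} (x - mu)
    norm_const = np.sqrt((2 * np.pi) ** D * np.linalg.det(cov))
    return np.exp(-0.5 * quad) / norm_const

# Numerical check of total probability, mean, and variance in 1-D
mu, sigma2 = 1.5, 0.49
xs = np.linspace(mu - 10, mu + 10, 200001)
dx = xs[1] - xs[0]
p = gaussian_pdf(xs, mu, sigma2)
total = np.sum(p) * dx
mean = np.sum(xs * p) * dx
var = np.sum((xs - mean) ** 2 * p) * dx
print(total, mean, var)

print(mvn_pdf(np.array([0.0, 0.0]), np.zeros(2), np.eye(2)))   # approximately 1 / (2 pi)
```

With a diagonal covariance the multivariate density factors into a product of 1-D densities, which gives a convenient sanity check of `mvn_pdf`.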


References

SC_prob_basics1.pdf (necessary)

SC_prob_basic2.pdf

Uploaded to HuskyCT


Basics of Linear Algebra


Matrix Multiplication

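The product of two matrices: for an m × n matrix A and an n × p matrix B, C = AB is the m × p matrix with $c_{ij} = \sum_{k=1}^{n} a_{ik} b_{kj}$

Special cases: vector-vector product, matrix-vector product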
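The definition c_ij = Σ_k a_ik b_kj written as explicit loops and compared against NumPy's `@` operator. The vector-vector and matrix-vector products are the special cases where one of the dimensions is 1. The matrices are arbitrary examples.

```python
import numpy as np

def matmul(A, B):
    """C = AB with C[i][j] = sum_k A[i][k] * B[k][j]; A is m x n, B is n x p."""
    m, n, p = len(A), len(B), len(B[0])
    assert all(len(row) == n for row in A), "inner dimensions must agree"
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(p)] for i in range(m)]

A = [[1, 2, 3],
     [4, 5, 6]]          # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]           # 3 x 2
C = matmul(A, B)         # 2 x 2
print(C)                                                       # [[58, 64], [139, 154]]
print(np.array_equal(np.array(C), np.array(A) @ np.array(B)))  # True

# Special cases: vector-vector (inner) product and matrix-vector product
u = [[1, 2, 3]]          # 1 x 3 row vector
v = [[4], [5], [6]]      # 3 x 1 column vector
print(matmul(u, v))      # [[32]]
print(matmul(A, v))      # [[32], [77]]
```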


Rules of Matrix Multiplication

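A numerical check of several standard properties of matrix multiplication (associativity, distributivity, the transpose rule (AB)ᵀ = BᵀAᵀ, and the lack of commutativity), using random NumPy matrices of compatible shapes; the shapes and seed are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.normal(size=(3, 4))
B = rng.normal(size=(4, 2))
C = rng.normal(size=(2, 5))
D = rng.normal(size=(4, 2))   # same shape as B, for distributivity

assert np.allclose((A @ B) @ C, A @ (B @ C))       # associativity
assert np.allclose(A @ (B + D), A @ B + A @ D)     # distributivity
assert np.allclose((A @ B).T, B.T @ A.T)           # transpose of a product

# Not commutative in general, even when both products are defined
P = rng.normal(size=(3, 3))
Q = rng.normal(size=(3, 3))
print(np.allclose(P @ Q, Q @ P))   # False for almost every random P, Q
```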


Orthogonal Matrix

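A square matrix Q is orthogonal if $Q^T Q = Q Q^T = I$, where I is the identity matrix (ones on the diagonal, zeros elsewhere)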
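A quick check of the definition with a 2-D rotation matrix, a standard example of an orthogonal matrix; the angle is arbitrary. Multiplying by an orthogonal matrix also preserves Euclidean length, since ‖Qx‖² = xᵀQᵀQx = xᵀx.

```python
import numpy as np

theta = 0.3                                   # arbitrary rotation angle (radians)
Q = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta),  np.cos(theta)]])

I = np.eye(2)
print(np.allclose(Q.T @ Q, I), np.allclose(Q @ Q.T, I))   # True True

x = np.array([3.0, 4.0])
print(np.linalg.norm(x), np.linalg.norm(Q @ x))           # both 5.0 up to rounding
```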


Square Matrix – EigenValue, EigenVector

(λ, x) is an eigenpair of A if and only if $Ax = \lambda x$, where A is an n × n matrix and x is a nonzero vector.

λ is the eigenvalue

x is the eigenvector
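One way to compute an eigenpair numerically is power iteration, sketched here under the assumption that A has a single eigenvalue of largest magnitude: repeatedly multiplying a vector by A and renormalizing converges to the corresponding eigenvector. The example matrix is arbitrary, and the result is checked against the definition Ax = λx.

```python
import numpy as np

def power_iteration(A, iters=200, seed=0):
    """Approximate dominant eigenpair (lambda, x) of a square matrix A, with ||x|| = 1."""
    rng = np.random.default_rng(seed)
    x = rng.normal(size=A.shape[0])    # random start vector
    for _ in range(iters):
        y = A @ x                      # multiply by A ...
        x = y / np.linalg.norm(y)      # ... and renormalize
    lam = x @ A @ x                    # Rayleigh quotient (x has unit length)
    return lam, x

A = np.array([[2.0, 1.0],
              [1.0, 2.0]])             # eigenvalues 3 and 1
lam, x = power_iteration(A)
print(lam, x)
print(np.allclose(A @ x, lam * x))     # checks A x = lambda x
```

The convergence speed depends on the ratio of the two largest eigenvalue magnitudes (1/3 here); library routines such as `numpy.linalg.eig` use more robust methods.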


Symmetric Matrix – EigenValue EigenVector

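A is symmetric if $A = A^T$

Eigen-decomposition of A: $A = U \Lambda U^T$, where U is orthogonal (its columns are eigenvectors of A) and $\Lambda = \mathrm{diag}(\lambda_1, \ldots, \lambda_n)$ holds the real eigenvalues.

$A \in \mathbb{R}^{n \times n}$ is symmetric and positive semi-definite if $x^T A x \ge 0$ for any $x \in \mathbb{R}^n$; equivalently, $\lambda_i \ge 0$, $i = 1, \ldots, n$.

$A \in \mathbb{R}^{n \times n}$ is symmetric and positive definite if $x^T A x > 0$ for any nonzero $x \in \mathbb{R}^n$; equivalently, $\lambda_i > 0$, $i = 1, \ldots, n$.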
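For symmetric matrices, `numpy.linalg.eigh` returns real eigenvalues (in ascending order) and orthonormal eigenvectors, so both the decomposition A = UΛUᵀ and the eigenvalue test for positive (semi-)definiteness can be checked directly. The matrix B is an arbitrary example; any matrix of the form BᵀB is symmetric positive semi-definite because xᵀBᵀBx = ‖Bx‖² ≥ 0. The helper `is_psd` and its tolerance are illustrative choices.

```python
import numpy as np

B = np.array([[1.0, 2.0],
              [3.0, 4.0],
              [5.0, 6.0]])
A = B.T @ B                          # symmetric PSD by construction

eigvals, U = np.linalg.eigh(A)       # A = U diag(eigvals) U^T, U orthogonal
print(eigvals)
print(np.allclose(U @ np.diag(eigvals) @ U.T, A))   # True
print(np.allclose(U.T @ U, np.eye(2)))              # True

def is_psd(M, tol=1e-10):
    """A symmetric M is PSD iff all its eigenvalues are >= 0 (up to round-off)."""
    return bool(np.all(np.linalg.eigvalsh(M) >= -tol))

print(is_psd(A), is_psd(np.array([[1.0, 2.0], [2.0, 1.0]])))   # True False
```

The second test matrix has eigenvalues 3 and −1, so it is symmetric but not positive semi-definite.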


Matrix Norms and Trace

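Frobenius norm: $\|A\|_F = \sqrt{\sum_{i,j} a_{ij}^2} = \sqrt{\operatorname{tr}(A^T A)}$, where the trace $\operatorname{tr}(A) = \sum_i a_{ii}$ is the sum of the diagonal entries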
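The Frobenius norm and the trace are linked by ‖A‖_F² = tr(AᵀA). A small NumPy check on an arbitrary 2 × 2 matrix:

```python
import numpy as np

A = np.array([[1.0, -2.0],
              [3.0, 4.0]])

fro_direct = np.sqrt(np.sum(A ** 2))       # square root of the sum of squared entries
fro_trace = np.sqrt(np.trace(A.T @ A))     # sqrt(tr(A^T A))
fro_numpy = np.linalg.norm(A, "fro")       # NumPy's built-in Frobenius norm
print(fro_direct, fro_trace, fro_numpy)    # all equal sqrt(30)
print(np.trace(A))                         # sum of diagonal entries: 5.0
```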


Singular Value Decomposition

$A = U \Sigma V^T$, where U and V are orthogonal and Σ is diagonal, with the nonnegative singular values of A on its diagonal
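A NumPy sketch of the decomposition A = UΣVᵀ: `numpy.linalg.svd` returns U, the singular values, and Vᵀ (with `full_matrices=False`, U has orthonormal columns rather than being square). Keeping only the largest singular values gives the best low-rank approximation in the Frobenius norm (the Eckart–Young theorem), and the error of that approximation is determined by the discarded singular values. The random matrix is an arbitrary example.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.normal(size=(5, 3))

U, s, Vt = np.linalg.svd(A, full_matrices=False)   # U: 5x3, s: (3,), Vt: 3x3
print(np.allclose(U @ np.diag(s) @ Vt, A))         # A = U Sigma V^T
print(np.allclose(U.T @ U, np.eye(3)), np.allclose(Vt @ Vt.T, np.eye(3)))
print(s)                                           # nonnegative, in descending order

# Best rank-1 approximation; its Frobenius error comes from the discarded singular values
A1 = s[0] * np.outer(U[:, 0], Vt[0])
print(np.linalg.norm(A - A1, "fro"), np.sqrt(np.sum(s[1:] ** 2)))
```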


References

SC_linearAlg_basics.pdf (necessary)

SVD_basics.pdf

Uploaded to HuskyCT


Summary

This is the end of the FIRST chapter of this course

Next class: cluster analysis

– General topics

– K-means

Slides after this one are backup slides; you can also check them to learn more
