
Computational Linguistics

Dragomir Radev

Wrocław, Poland

July 29, 2009

Example (from a famous movie)

Dave Bowman: Open the pod bay doors, HAL.

HAL: I’m sorry, Dave. I’m afraid I can’t do that.

Instructor

• Dragomir Radev, Professor, Computer Science and Information, Linguistics, University of Michigan

• radev@umich.edu

Natural Language Understanding

• … about teaching computers to make sense of naturally occurring text.

• … involves programming, linguistics, artificial intelligence, etc.

• … includes machine translation, question answering, dialogue systems, database access, information extraction, game playing, etc.

Example

I saw her fall

• How many different interpretations does the above sentence have? How many of them are reasonable/grammatical?

Silly sentences

• Children make delicious snacks

• Stolen painting found by tree

• I saw the Grand Canyon flying to New York

• Court to try shooting defendant

• Ban on nude dancing on Governor’s desk

• Red tape holds up new bridges

• Iraqi head seeks arms

• Blair wins on budget, more lies ahead

• Local high school dropouts cut in half

• Hospitals are sued by seven foot doctors

• In America a woman has a baby every 15 minutes. How does she do that?

Types of ambiguity

• Morphological: Joe is quite impossible. Joe is quite important.

• Phonetic: Joe’s finger got number.

• Part of speech: Joe won the first round.

• Syntactic: Call Joe a taxi.

• PP attachment: Joe ate pizza with a fork. Joe ate pizza with meatballs. Joe ate pizza with Mike. Joe ate pizza with pleasure.

• Sense: Joe took the bar exam.

• Modality: Joe may win the lottery.

• Subjectivity: Joe believes that stocks will rise.

• Scoping: Joe likes ripe apples and pears.

• Negation: Joe likes his pizza with no cheese and tomatoes.

• Referential: Joe yelled at Mike. He had broken the bike. Joe yelled at Mike. He was angry at him.

• Reflexive: John bought him a present. John bought himself a present.

• Ellipsis and parallelism: Joe gave Mike a beer and Jeremy a glass of wine.

• Metonymy: Boston called and left a message for Joe.

NLP

• Information extraction

• Named entity recognition

• Trend analysis

• Subjectivity analysis

• Text classification

• Anaphora resolution, alias resolution

• Cross-document cross-referencing

• Parsing

• Semantic analysis

• Word sense disambiguation

• Word clustering

• Question answering

• Summarization

• Document retrieval (filtering, routing)

• Structured text (relational tables)

• Paraphrasing and paraphrase/entailment identification

• Text generation

• Machine translation

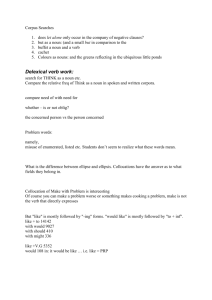

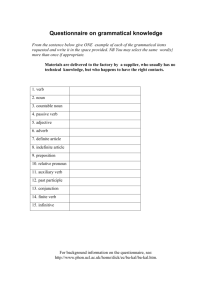

Syntactic categories

• Substitution test:

Nathalie likes { black | Persian | tabby | small | easy to raise } cats.

• Open (lexical) and closed (functional) categories:

Open: No-fly-zone, yadda yadda yadda

Closed: the, in

Jabberwocky (Lewis Carroll)

’Twas brillig, and the slithy toves

Did gyre and gimble in the wabe:

All mimsy were the borogoves,

And the mome raths outgrabe.

"Beware the Jabberwock, my son!

The jaws that bite, the claws that catch!

Beware the Jubjub bird, and shun

The frumious Bandersnatch!"

Phrase structure

[S [NP That man] [VP [VBD caught] [NP the butterfly] [PP [IN with] [NP a net]]]]

Sample phrase-structure grammar

S → NP VP

NP → AT NNS

NP → AT NN

NP → NP PP

VP → VP PP

VP → VBD

VP → VBD NP

PP → IN NP

AT → the

NNS → children | students | mountains

VBD → slept | ate | saw

IN → in | of

NN → cake
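The sketch below counts how many parse trees the sample grammar above assigns to a sentence. It is a minimal Python version that assumes a CKY-style chart, which the slide does not prescribe. VP → VBD is the only rule that is not binary, so it is applied as a unary step to each chart cell. The test sentences use only words from the grammar's lexicon.

```python
from collections import defaultdict

# The sample grammar above, split into binary rules, one unary rule, and a lexicon.
BINARY = [
    ("S", "NP", "VP"),
    ("NP", "AT", "NNS"),
    ("NP", "AT", "NN"),
    ("NP", "NP", "PP"),
    ("VP", "VP", "PP"),
    ("VP", "VBD", "NP"),
    ("PP", "IN", "NP"),
]
UNARY = [("VP", "VBD")]
LEXICON = {
    "the": ["AT"], "children": ["NNS"], "students": ["NNS"], "mountains": ["NNS"],
    "slept": ["VBD"], "ate": ["VBD"], "saw": ["VBD"],
    "in": ["IN"], "of": ["IN"], "cake": ["NN"],
}


def apply_unary(cell):
    # One pass is enough here: VP -> VBD cannot feed another unary rule.
    for parent, child in UNARY:
        if cell[child]:
            cell[parent] += cell[child]


def count_parses(words, start="S"):
    """Count the distinct parse trees rooted in `start` that cover all of `words`."""
    n = len(words)
    # chart[i][j][A] = number of ways category A can span words[i:j]
    chart = [[defaultdict(int) for _ in range(n + 1)] for _ in range(n + 1)]
    for i, word in enumerate(words):
        for tag in LEXICON.get(word, []):
            chart[i][i + 1][tag] += 1
        apply_unary(chart[i][i + 1])
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            j = i + length
            for k in range(i + 1, j):  # split point between the two children
                left, right = chart[i][k], chart[k][j]
                for parent, b, c in BINARY:
                    if left[b] and right[c]:
                        chart[i][j][parent] += left[b] * right[c]
            apply_unary(chart[i][j])
    return chart[0][n][start]


print(count_parses("the children ate the cake".split()))                       # 1
print(count_parses("the children saw the students in the mountains".split()))  # 2
```

The second sentence gets two trees because the PP "in the mountains" can attach either to the VP (VP → VP PP) or to the object NP (NP → NP PP). This is the high vs. low attachment ambiguity.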

Phrase structure grammars

• Local dependencies

• Non-local dependencies

• Subject-verb agreement

The women who found the wallet were given a reward.

• wh-extraction

Should Peter buy a book?

Which book should Peter buy?

• Empty nodes

Subcategorization

Subject: [The children] eat candy.

Object: The children eat [candy].

Prepositional phrase: She put the book [on the table].

Predicative adjective: We made the man [angry].

Bare infinitive: She helped me [walk].

To-infinitive: She likes [to walk].

Participial phrase: She stopped [singing that tune] at the end.

That-clause: She thinks [that it will rain tomorrow].

Question-form clauses: She asked me [what book I was reading].

Phrase structure ambiguity

• Grammars are used for generating and parsing sentences

• Parses

• Syntactic ambiguity

• Attachment ambiguity: Our company is training workers.

• The children ate the cake with a spoon.

• High vs. low attachment

• Garden path sentences: The horse raced past the barn fell. Is the book on the table red?

Sentence-level constructions

• Declarative vs. imperative sentences

• Imperative sentences: S → VP

• Yes-no questions: S → Aux NP VP

• Wh-type questions: S → Wh-NP VP

• Fronting (less frequent): On Tuesday, I would like to fly to San Diego

Semantics and pragmatics

• Lexical semantics and compositional semantics

• Hypernyms, hyponyms, antonyms, meronyms and holonyms (part-whole relationship: tire is a meronym of car), synonyms, homonyms

• Senses of words, polysemous words

• Homography (bass).

• Collocations: white hair, white wine

• Idioms: to kick the bucket

Discourse analysis

• Anaphoric relations:

1. Mary helped Peter get out of the car. He thanked her.

2. Mary helped the other passenger out of the car. The man had asked her for help because of his foot injury.

• Information extraction problems (entity cross-referencing):

Hurricane Hugo destroyed 20,000 Florida homes. At an estimated cost of one billion dollars, the disaster has been the most costly in the state’s history.

Pragmatics

• The study of how knowledge about the world and language conventions interact with literal meaning.

• Speech acts

• Research issues: resolution of anaphoric relations, modeling of speech acts in dialogues

Coordination

• Coordinate noun phrases:

– NP → NP and NP

– S → S and S

– Similar for VP, etc.

Agreement

• Examples:

– Do any flights stop in Chicago?

– Do I get dinner on this flight?

– Does Delta fly from Atlanta to Boston?

– What flights leave in the morning?

– * What flight leave in the morning?

• Rules:

– S → Aux NP VP

– S → 3sgAux 3sgNP VP

– S → Non3sgAux Non3sgNP VP

– 3sgAux → does | has | can …

– Non3sgAux → do | have | can …

Agreement

• We now need similar rules for pronouns, also for number agreement, etc.

– 3SgNP → (Det) (Card) (Ord) (Quant) (AP) SgNominal

– Non3SgNP → (Det) (Card) (Ord) (Quant) (AP) PlNominal

– SgNominal → SgNoun | SgNoun SgNoun

– etc.

Combinatorial explosion

• What other phenomena will cause the grammar to expand?

• Solution: parameterization with feature structures (see Chapter 11)

Parsing as search

S → NP VP

S → Aux NP VP

S → VP

NP → Det Nominal

NP → Proper-Noun

Nominal → Noun

Nominal → Noun Nominal

Nominal → Nominal PP

VP → Verb

VP → Verb NP

Det → that | this | a

Noun → book | flight | meal | money

Verb → book | include | prefer

Aux → does

Proper-Noun → Houston | TWA

Prep → from | to | on

Parsing as search

Book that flight.

[S [VP [Verb Book] [NP [Det that] [Nom [Noun flight]]]]]

Two types of constraints on the parses: (a) some that come from the input string, (b) others that come from the grammar.

Top-down parsing

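(Figure: expanding top-down search space, one level per line.)

1. [S]

2. [S NP VP], [S Aux NP VP], [S VP]

3. [S [NP Det Nom] VP], [S [NP PropN] VP], [S Aux [NP Det Nom] VP], [S Aux [NP PropN] VP], [S [VP V NP]], [S [VP V]]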
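The following is a minimal top-down parser for the "Parsing as search" grammar. It works depth-first and left-to-right, backtracking when an expansion fails, and is written with Python generators. It rests on two assumptions. First, the left-recursive rule Nominal → Nominal PP is dropped, because a naive top-down parser would keep expanding Nominal without consuming any input. Second, words are lowercased and a trailing period is stripped.

```python
# Grammar from the "Parsing as search" slide, minus the left-recursive rule
# Nominal -> Nominal PP (naive top-down expansion would loop on it forever).
GRAMMAR = {
    "S": [["NP", "VP"], ["Aux", "NP", "VP"], ["VP"]],
    "NP": [["Det", "Nominal"], ["Proper-Noun"]],
    "Nominal": [["Noun"], ["Noun", "Nominal"]],
    "VP": [["Verb"], ["Verb", "NP"]],
}
LEXICON = {
    "Det": {"that", "this", "a"},
    "Noun": {"book", "flight", "meal", "money"},
    "Verb": {"book", "include", "prefer"},
    "Aux": {"does"},
    "Proper-Noun": {"houston", "twa"},
    "Prep": {"from", "to", "on"},
}


def expand(symbol, words, i):
    """Yield (tree, j) for every way `symbol` can derive words[i:j]."""
    if symbol in LEXICON:  # part-of-speech symbol: must match the next word
        if i < len(words) and words[i] in LEXICON[symbol]:
            yield (symbol, words[i]), i + 1
        return
    for rhs in GRAMMAR[symbol]:  # try each expansion in order; exhausted generators = backtracking
        for children, j in expand_sequence(rhs, words, i):
            yield (symbol, *children), j


def expand_sequence(symbols, words, i):
    """Yield (list_of_trees, j) for every way `symbols` can derive words[i:j]."""
    if not symbols:
        yield [], i
        return
    for first, j in expand(symbols[0], words, i):
        for rest, k in expand_sequence(symbols[1:], words, j):
            yield [first] + rest, k


def parse(sentence):
    words = sentence.lower().rstrip(".?").split()
    # Keep only the derivations of S that consume the whole input.
    return [tree for tree, j in expand("S", words, 0) if j == len(words)]


print(parse("Book that flight."))
# [('S', ('VP', ('Verb', 'book'), ('NP', ('Det', 'that'), ('Nominal', ('Noun', 'flight')))))]
```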

Bottom-up parsing

(Figure: expanding bottom-up search space for "Book that flight". Each word is first assigned its possible parts of speech (Book: Noun or Verb; that: Det; flight: Noun), and successive levels build Nom, NP, and VP constituents over those tags.)
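The bottom-up direction can be sketched as a breadth-first search over sequences of symbols. The search starts from every combination of part-of-speech tags for the words. It then repeatedly replaces any substring that matches a rule's right-hand side with that rule's left-hand side. This minimal Python version is a recognizer only: it returns True or False, not trees. Breadth-first order is just one possible strategy. Unlike in the top-down case, Nominal → Nominal PP can stay in the grammar.

```python
from collections import deque
from itertools import product

# Rules from the "Parsing as search" slide as (left-hand side, right-hand side).
# Nominal -> Nominal PP is kept: left recursion is harmless when reducing bottom-up.
RULES = [
    ("S", ("NP", "VP")), ("S", ("Aux", "NP", "VP")), ("S", ("VP",)),
    ("NP", ("Det", "Nominal")), ("NP", ("Proper-Noun",)),
    ("Nominal", ("Noun",)), ("Nominal", ("Noun", "Nominal")), ("Nominal", ("Nominal", "PP")),
    ("VP", ("Verb",)), ("VP", ("Verb", "NP")),
]
LEXICON = {
    "that": ["Det"], "this": ["Det"], "a": ["Det"],
    "book": ["Noun", "Verb"], "flight": ["Noun"], "meal": ["Noun"], "money": ["Noun"],
    "include": ["Verb"], "prefer": ["Verb"], "does": ["Aux"],
    "houston": ["Proper-Noun"], "twa": ["Proper-Noun"],
    "from": ["Prep"], "to": ["Prep"], "on": ["Prep"],
}


def recognize(sentence, goal="S"):
    """Breadth-first bottom-up search: reduce any substring that matches a rule's
    right-hand side until the whole input has been reduced to `goal`."""
    words = sentence.lower().rstrip(".?").split()
    # Start states: every combination of the words' possible parts of speech.
    start = [tuple(tags) for tags in product(*(LEXICON.get(w, []) for w in words))]
    frontier, seen = deque(start), set(start)
    while frontier:
        state = frontier.popleft()
        if state == (goal,):
            return True
        for lhs, rhs in RULES:
            for i in range(len(state) - len(rhs) + 1):
                if state[i:i + len(rhs)] == rhs:
                    reduced = state[:i] + (lhs,) + state[i + len(rhs):]
                    if reduced not in seen:  # never revisit a sequence
                        seen.add(reduced)
                        frontier.append(reduced)
    return False


print(recognize("Book that flight."))  # True
print(recognize("that that flight"))   # False
```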

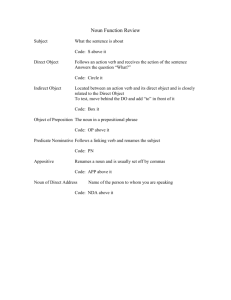

Grammatical Relations and Free Ordering of Subject and Object

SVO

- Кого увидел Вася? / Who did Vasya see?

- Вася увидел Машу. / Vasya saw Masha.

SOV

- Кого же Вася увидел? / Who did Vasya see?

- Вася Машу увидел. / Vasya saw Masha.

VSO

- Увидел Вася кого? / (Actually,) who did Vasya see?

- Увидел Вася Машу. / Vasya saw Masha.

OSV

- Кого же Вася увидел? / Who did Vasya see?

- Машу Вася увидел. / Vasya saw Masha.

OVS

- Да кого увидел Вася? / Well, whom did Vasya see?

- Машу увидел Вася. / It was Masha whom Vasya saw.

VOS

- Увидел Машу кто? / Who saw Masha, at the end?

- Увидел Машу Вася. / It was Vasya who saw Masha.

Slide from Lori Levin, originally by Leonid Iomdin

Features and unification

• Grammatical categories have properties

• Constraint-based formalisms

• Example: this flights: agreement is difficult to handle at the level of grammatical categories

• Example: many water: count/mass nouns

• Sample rule that takes into account features: S → NP VP (but only if the number of the NP is equal to the number of the VP)

Feature structures

[CAT NP, NUMBER SINGULAR, PERSON 3]

[CAT NP, AGREEMENT [NUMBER SG, PERSON 3]]

Feature paths: {x AGREEMENT NUMBER}

Unification

[NUMBER SG] ⊔ [NUMBER SG] = [NUMBER SG] (succeeds)

[NUMBER SG] ⊔ [NUMBER PL] (fails)

[NUMBER SG] ⊔ [NUMBER []] = [NUMBER SG]

[NUMBER SG] ⊔ [PERSON 3] = ?
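Unification over feature structures can be sketched in Python by encoding each structure as a nested dict, with {} standing for the unspecified value []. This minimal version assumes no reentrancy (shared substructures), which full unification over DAGs has to handle. The last call answers the question on the slide.

```python
def unify(a, b):
    """Unify two feature structures given as nested dicts with string atoms.
    The empty dict {} stands for the unspecified value []. Returns None on failure."""
    if isinstance(a, dict) and isinstance(b, dict):
        result = dict(a)
        for feature, value in b.items():
            if feature in result:
                merged = unify(result[feature], value)
                if merged is None:  # a clash anywhere makes the whole unification fail
                    return None
                result[feature] = merged
            else:
                result[feature] = value
        return result
    if a == {}:  # [] unifies with anything
        return b
    if b == {}:
        return a
    return a if a == b else None  # two atoms must be identical


print(unify({"NUMBER": "SG"}, {"NUMBER": "SG"}))  # {'NUMBER': 'SG'}
print(unify({"NUMBER": "SG"}, {"NUMBER": "PL"}))  # None
print(unify({"NUMBER": "SG"}, {"NUMBER": {}}))    # {'NUMBER': 'SG'}
print(unify({"NUMBER": "SG"}, {"PERSON": "3"}))   # {'NUMBER': 'SG', 'PERSON': '3'}
```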

Agreement

• S → NP VP

{NP AGREEMENT} = {VP AGREEMENT}

• Does this flight serve breakfast?

• Do these flights serve breakfast?

• S → Aux NP VP

{Aux AGREEMENT} = {NP AGREEMENT}

Agreement

• These flights

• This flight

• NP → Det Nominal

{Det AGREEMENT} = {Nominal AGREEMENT}

• Verb → serve

{Verb AGREEMENT NUMBER} = PL

• Verb → serves

{Verb AGREEMENT NUMBER} = SG

Subcategorization

• VP → Verb

{VP HEAD} = {Verb HEAD}

{VP HEAD SUBCAT} = INTRANS

• VP → Verb NP

{VP HEAD} = {Verb HEAD}

{VP HEAD SUBCAT} = TRANS

• VP → Verb NP NP

{VP HEAD} = {Verb HEAD}

{VP HEAD SUBCAT} = DITRANS

Eliza [Weizenbaum, 1966]

User: Men are all alike

ELIZA: IN WHAT WAY

User: They’re always bugging us about something or other

ELIZA: CAN YOU THINK OF A SPECIFIC EXAMPLE?

User: Well, my boyfriend made me come here

ELIZA: YOUR BOYFRIEND MADE YOU COME HERE

User: He says I’m depressed much of the time

ELIZA: I AM SORRY TO HEAR THAT YOU ARE DEPRESSED

Eliza-style regular expressions

Step 1: replace first person references with second person references

Step 2: use additional regular expressions to generate replies

Step 3: use scores to rank possible transformations

s/.* YOU ARE (depressed|sad) .*/I AM SORRY TO HEAR YOU ARE \1/

s/.* YOU ARE (depressed|sad) .*/WHY DO YOU THINK YOU ARE \1/

s/.* all .*/IN WHAT WAY/

s/.* always .*/CAN YOU THINK OF A SPECIFIC EXAMPLE/
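Here is a minimal Python sketch of the three steps, using the `re` module instead of sed. The pronoun-swap table, the scores, and the PLEASE GO ON fallback reply are hypothetical choices for illustration. They are not Weizenbaum's actual script.

```python
import re

# Step 1 (hypothetical table): first-person words -> second-person words.
SWAPS = {"i": "YOU", "i'm": "YOU ARE", "me": "YOU", "my": "YOUR", "am": "ARE"}

# Steps 2 and 3: (score, pattern, reply template). The patterns mirror the sed rules
# above; the scores and the fallback reply are made-up values for illustration.
RULES = [
    (3, r".*\bYOU ARE (depressed|sad)\b.*", r"I AM SORRY TO HEAR YOU ARE \1"),
    (2, r".*\bYOU ARE (depressed|sad)\b.*", r"WHY DO YOU THINK YOU ARE \1"),
    (1, r".*\ball\b.*", "IN WHAT WAY"),
    (1, r".*\balways\b.*", "CAN YOU THINK OF A SPECIFIC EXAMPLE"),
]
FALLBACK = "PLEASE GO ON"


def swap_person(sentence):
    words = sentence.replace("\u2019", "'").split()  # normalize curly apostrophes
    return " ".join(SWAPS.get(w.lower(), w) for w in words)


def reply(sentence):
    text = swap_person(sentence.rstrip(".!?"))
    candidates = []
    for score, pattern, template in RULES:
        match = re.fullmatch(pattern, text, flags=re.IGNORECASE)
        if match:
            candidates.append((score, match.expand(template).upper()))
    # Rank the applicable transformations and keep the best-scoring one.
    return max(candidates)[1] if candidates else FALLBACK


print(reply("Men are all alike"))                       # IN WHAT WAY
print(reply("He says I'm depressed much of the time"))  # I AM SORRY TO HEAR YOU ARE DEPRESSED
```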

Finite-state automata

• Finite-state automata (FSA)

• Regular languages

• Regular expressions

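Finite-state automata (machines)

Sheep language: baa!, baaa!, baaaa!, baaaaa!, ... (regular expression: baa+!)

(Figure: an FSA for baa+! with states q0 to q4. The machine goes from q0 to q1 on b, from q1 to q2 on a, and from q2 to q3 on a. At q3 it loops back to itself on a, and it goes from q3 to the final state q4 on !. Labels mark a state, a transition, and the final state. A second panel shows the machine in the start state q0 reading an input tape containing a b a ! b.)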

Finite-state automata

• Q: a finite set of N states q0, q1, …, q(N−1)

• Σ: a finite input alphabet of symbols

• q0: the start state

• F: the set of final states, F ⊆ Q

• δ(q, i): the transition function; given a state q ∈ Q and an input symbol i ∈ Σ, it returns a new state q′ ∈ Q

State-transition tables

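| State | Input: b | Input: a | Input: ! |
|---|---|---|---|
| 0 | 1 | ∅ | ∅ |
| 1 | ∅ | 2 | ∅ |
| 2 | ∅ | 3 | ∅ |
| 3 | ∅ | 3 | 4 |
| 4 (final) | ∅ | ∅ | ∅ |

(∅: no transition is defined, so the input is rejected.)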
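The table can drive a recognizer directly. The recognizer reads one symbol at a time, looks up the next state, and fails as soon as it hits an empty entry. In this minimal Python sketch, None stands in for the empty entries.

```python
# The state-transition table above as a dict; None plays the role of the empty (fail) entry.
TABLE = {
    0: {"b": 1, "a": None, "!": None},
    1: {"b": None, "a": 2, "!": None},
    2: {"b": None, "a": 3, "!": None},
    3: {"b": None, "a": 3, "!": 4},
    4: {"b": None, "a": None, "!": None},
}
START, FINAL = 0, {4}


def recognize(tape):
    """Table-driven deterministic recognition: follow one transition per symbol."""
    state = START
    for symbol in tape:
        state = TABLE[state].get(symbol)  # symbols outside {b, a, !} also fail
        if state is None:
            return False
    # Accept only if the whole tape was read and we stopped in a final state.
    return state in FINAL


for tape in ["baa!", "baaaaa!", "ba!", "baa", "abab!"]:
    print(tape, recognize(tape))
```

Only the first two tapes are accepted. "baa" is rejected because the machine stops in q3, which is not a final state.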

Morphemes

• Stems, affixes

• Affixes: prefixes, suffixes, infixes: hingi (borrow) – humingi (agent) in Tagalog; circumfixes: sagen – gesagt in German

• Concatenative morphology

• Templatic morphology (Semitic languages): lmd (learn), lamad (he studied), limed (he taught), lumad (he was taught)

Morphological analysis

• rewrites (re- + write + -s)

• unbelievably (un- + believe + -able + -ly)

Inflectional morphology

• Tense, number, person, mood, aspect

• Five verb forms in English

• 40+ forms in French

• Six cases in Russian, seven in Polish

• Up to 40,000 forms in Turkish (you will cause X to cause Y to … do Z)

Derivational morphology

• Nominalization: computerization, appointee, killer, fuzziness

• Formation of adjectives: computational, embraceable, clueless

Finite-state morphological parsing

• Cats: cat +N +PL

• Cat: cat +N +SG

• Cities: city +N +PL

• Geese: goose +N +PL

• Ducks: (duck +N +PL) or (duck +V +3SG)

• Merging: merge +V +PRES-PART

• Caught: (catch +V +PAST-PART) or (catch +V +PAST)
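A toy analyzer can reproduce the input/output pairs above, but it is not a finite-state transducer. It is a hypothetical Python sketch with a tiny hand-written lexicon, a table of irregular forms, and two suffix rules: -s with the y → ies spelling change, and -ing with e-deletion. It only illustrates what a morphological parser has to output.

```python
# Toy lexicon (hypothetical): stems and the categories they belong to.
NOUN_STEMS = {"cat", "city", "goose", "duck"}
VERB_STEMS = {"duck", "merge", "catch"}
# Irregular forms are listed outright instead of being derived by rule.
IRREGULAR = {
    "geese": ["goose +N +PL"],
    "caught": ["catch +V +PAST-PART", "catch +V +PAST"],
}


def analyze(word):
    """Return every analysis (stem plus feature tags) of a surface word."""
    w = word.lower()
    analyses = list(IRREGULAR.get(w, []))
    if w in NOUN_STEMS:
        analyses.append(f"{w} +N +SG")
    # -s suffix: plural noun or third-person-singular verb; "ies" undoes y -> ie.
    stems = []
    if w.endswith("ies"):
        stems.append(w[:-3] + "y")
    elif w.endswith("s"):
        stems.append(w[:-1])
    for stem in stems:
        if stem in NOUN_STEMS:
            analyses.append(f"{stem} +N +PL")
        if stem in VERB_STEMS:
            analyses.append(f"{stem} +V +3SG")
    # -ing suffix: present participle; also try restoring a deleted final e.
    if w.endswith("ing"):
        for stem in (w[:-3], w[:-3] + "e"):
            if stem in VERB_STEMS:
                analyses.append(f"{stem} +V +PRES-PART")
    return analyses


for word in ["Cats", "Cities", "Geese", "Ducks", "Merging", "Caught"]:
    print(word, analyze(word))
```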

Phonetic symbols

• IPA

• Arpabet

• Examples

Using WFST for language modeling

• Phonetic representation

• Part-of-speech tagging

Dependency grammars

• Lexical dependencies between head words

• Top-level predicate of a sentence is the root

• Useful for free word order languages

• Also simpler to parse

Dependencies

[S [NP [NNP John]] [VP [VBZ likes] [NP [JJ tabby] [NNS cats]]]]

Dependencies (head → dependent): likes → John, likes → cats, cats → tabby

Discourse, dialogue, anaphora

• Example: John went to Bill’s car dealership to check out an Acura Integra. He looked at it for about half an hour.

• Example: I’d like to get from Boston to San Francisco, on either December 5th or December 6th. It’s okay if it stops in another city along the way.

Information extraction and discourse analysis

• Example: First Union Corp. is continuing to wrestle with severe problems unleashed by a botched merger and a troubled business strategy. According to industry insiders at Paine Webber, their president, John R. Georgius, is planning to retire by the end of the year.

• Problems with summarization and generation

Reference resolution

• The process of reference (associating “John” with “he”).

• Referring expressions and referents.

• Needed: discourse models

• Problem: many types of reference!

Example (from Webber 91)

• According to John, Bob bought Sue an Integra, and Sue bought Fred a Legend.

• But that turned out to be a lie. (referent: a speech act)

• But that was false. (referent: a proposition)

• That struck me as a funny way to describe the situation. (referent: a manner of description)

• That caused Sue to become rather poor. (referent: an event)

• That caused them both to become rather poor. (referent: a combination of several events)

Reference phenomena

• Indefinite noun phrases: I saw an Acura Integra today.

• Definite noun phrases: The Integra was white.

• Pronouns: It was white.

• Demonstratives: this Acura.

• Inferrables: I almost bought an Acura Integra today, but a door had a dent and the engine seemed noisy.

• Mix the flour, butter, and water. Knead the dough until smooth and shiny.

Constraints on coreference

• Number agreement: John has an Acura. It is red.

• Person and case agreement: (*) John and Mary have Acuras. We love them (where We = John and Mary)

• Gender agreement: John has an Acura. He/it/she is attractive.

• Syntactic constraints:

– John bought himself a new Acura.

– John bought him a new Acura.

– John told Bill to buy him a new Acura.

– John told Bill to buy himself a new Acura.

– He told Bill to buy John a new Acura.

Preferences in pronoun interpretation

• Recency: John has an Integra. Bill has a Legend. Mary likes to drive it.

• Grammatical role: John went to the Acura dealership with Bill. He bought an Integra.

• (?) John and Bill went to the Acura dealership. He bought an Integra.

• Repeated mention: John needed a car to go to his new job. He decided that he wanted something sporty. Bill went to the Acura dealership with him. He bought an Integra.

Preferences in pronoun interpretation

• Parallelism: Mary went with Sue to the Acura dealership. Sally went with her to the Mazda dealership.

• ??? Mary went with Sue to the Acura dealership. Sally told her not to buy anything.

• Verb semantics: John telephoned Bill. He lost his pamphlet on Acuras. John criticized Bill. He lost his pamphlet on Acuras.

Salience weights in Lappin and Leass

| Factor | Weight |
|---|---|
| Sentence recency | 100 |
| Subject emphasis | 80 |
| Existential emphasis | 70 |
| Accusative emphasis | 50 |
| Indirect object and oblique complement emphasis | 40 |
| Non-adverbial emphasis | 50 |
| Head noun emphasis | 80 |

Lappin and Leass (cont’d)

• Recency: weights are cut in half after each sentence is processed.

• Examples:

– An Acura Integra is parked in the lot. (subject)

– There is an Acura Integra parked in the lot. (existential predicate nominal)

– John parked an Acura Integra in the lot. (object)

– John gave Susan an Acura Integra. (indirect object)

– In his Acura Integra, John showed Susan his new CD player. (demarcated adverbial PP)

Algorithm

1. Collect the potential referents (up to four sentences back).

2. Remove potential referents that do not agree in number or gender with the pronoun.

3. Remove potential referents that do not pass intrasentential syntactic coreference constraints.

4. Compute the total salience value of the referent by adding any applicable values for role parallelism (+35) or cataphora (-175).

5. Select the referent with the highest salience value. In case of a tie, select the closest referent in terms of string position.

Example

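• John saw a beautiful Acura Integra at the dealership last week. He showed it to Bill. He bought it.

| Referent | Rec | Subj | Exist | Obj | Ind Obj | Non Adv | Head N | Total |
|---|---|---|---|---|---|---|---|---|
| John | 100 | 80 | | | | 50 | 80 | 310 |
| Integra | 100 | | | 50 | | 50 | 80 | 280 |
| dealership | 100 | | | | | 50 | 80 | 230 |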

Example (cont’d)

| Referent | Phrases | Value |
|---|---|---|
| John | {John} | 155 |
| Integra | {a beautiful Acura Integra} | 140 |
| dealership | {the dealership} | 115 |

Example (cont’d)

| Referent | Phrases | Value |
|---|---|---|
| John | {John, he1} | 465 |
| Integra | {a beautiful Acura Integra} | 140 |
| dealership | {the dealership} | 115 |

Example (cont’d)

| Referent | Phrases | Value |
|---|---|---|
| John | {John, he1} | 465 |
| Integra | {a beautiful Acura Integra, it} | 420 |
| dealership | {the dealership} | 115 |

Example (cont’d)

| Referent | Phrases | Value |
|---|---|---|
| John | {John, he1} | 465 |
| Integra | {a beautiful Acura Integra, it} | 420 |
| Bill | {Bill} | 270 |
| dealership | {the dealership} | 115 |

Example (cont’d)

| Referent | Phrases | Value |
|---|---|---|
| John | {John, he1} | 232.5 |
| Integra | {a beautiful Acura Integra, it1} | 210 |
| Bill | {Bill} | 135 |
| dealership | {the dealership} | 57.5 |
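The bookkeeping in the tables above can be reproduced with a short Python sketch. It makes three assumptions. Pronouns have already been resolved and added to their referent's equivalence class. Each referent is mentioned at most once per sentence, which holds in this example. The caller has already applied steps 2 and 3 (agreement and syntactic filtering). The string-position tie-break of step 5 is left out.

```python
# Salience factor weights from the Lappin and Leass table above.
WEIGHTS = {
    "recency": 100, "subject": 80, "existential": 70, "object": 50,
    "indirect_or_oblique": 40, "non_adverbial": 50, "head_noun": 80,
}


def update(salience, sentence):
    """Halve all existing values (recency decay), then add each new mention's weights.
    `sentence` is a list of (referent, factors) pairs with pronouns already resolved;
    each referent is assumed to be mentioned at most once per sentence."""
    salience = {ref: value / 2 for ref, value in salience.items()}
    for referent, factors in sentence:
        salience[referent] = salience.get(referent, 0) + sum(WEIGHTS[f] for f in factors)
    return salience


def resolve(salience, candidates, bonus=None):
    """Step 5: pick the candidate with the highest salience plus any step-4 bonus
    (e.g. +35 for role parallelism). Candidates must already be filtered."""
    bonus = bonus or {}
    return max(candidates, key=lambda ref: salience[ref] + bonus.get(ref, 0))


NP_FACTORS = ["recency", "non_adverbial", "head_noun"]  # shared by every NP in the example
s1 = [("John", NP_FACTORS + ["subject"]), ("Integra", NP_FACTORS + ["object"]),
      ("dealership", NP_FACTORS)]
s2 = [("John", NP_FACTORS + ["subject"]), ("Integra", NP_FACTORS + ["object"]),
      ("Bill", NP_FACTORS + ["indirect_or_oblique"])]

after_s1 = update({}, s1)        # John 310, Integra 280, dealership 230
after_s2 = update(after_s1, s2)  # John 465, Integra 420, Bill 270, dealership 115
before_s3 = {ref: v / 2 for ref, v in after_s2.items()}  # John 232.5, Integra 210, ...
# "He" in sentence 3: John gets the parallelism bonus (subject -> subject).
print(resolve(before_s3, ["John", "Bill"], bonus={"John": 35}))  # John
```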

Observations

• Lappin & Leass: tested on computer manuals, 86% accuracy on unseen data.

• Centering (Grosz, Joshi, Weinstein): additional concept of a “center”: at any time in discourse, an entity is centered.

• Backward-looking center; forward-looking centers (a set).

• Centering has not been automatically tested on actual data.

Part of speech tagging

• Problems: transport, object, discount, address

• More problems: content

• French: est, président, fils

• “Book that flight”: what is the part of speech associated with “book”?

• POS tagging: assigning parts of speech to words in a text.

• Three main techniques: rule-based tagging, stochastic tagging, transformation-based tagging

Rule-based POS tagging

• Use dictionary or FST to find all possible parts of speech

• Use disambiguation rules (e.g., ART+V)

• Typically hundreds of constraints can be designed manually

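Example in French

La teneur moyenne en uranium des rivières, bien que délicate à calculer … (“The average uranium content of rivers, although tricky to calculate …”)

| Word | Candidate tags | Correct reading |
|---|---|---|
| `<S>` | ^ | beginning of sentence |
| La | rf b nms u | article |
| teneur | nfs nms | noun feminine singular |
| moyenne | jfs nfs v1s v2s v3s | adjective feminine singular |
| en | p a b | preposition |
| uranium | nms | noun masculine singular |
| des | p r | preposition |
| rivières | nfp | noun feminine plural |
| , | x | punctuation |
| bien_que | cs | subordinating conjunction |
| délicate | jfs | adjective feminine singular |
| à | p | preposition |
| calculer | v | verb |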

Sample rules

BS3 BI1: A BS3 (3rd person subject personal pronoun) cannot be followed by a BI1 (1st person indirect personal pronoun). In the example “il nous faut” (we need), “il” has the tag BS3MS and “nous” has the tags [BD1P BI1P BJ1P BR1P BS1P]. The negative constraint “BS3 BI1” rules out “BI1P”, and thus leaves only 4 alternatives for the word “nous”.

N K: The tag N (noun) cannot be followed by a tag K (interrogative pronoun); an example in the test corpus would be “... fleuve qui ...” (...river, that...). Since “qui” can be tagged both as an “E” (relative pronoun) and a “K” (interrogative pronoun), the tagger will choose “E”, since an interrogative pronoun cannot follow a noun (“N”).

R V: A word tagged with R (article) cannot be followed by a word tagged with V (verb): for example “l’appelle” (calls him/her). The word “appelle” can only be a verb, but “l’” can be either an article or a personal pronoun. Thus, the rule will eliminate the article tag, giving preference to the pronoun.
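One way to apply such negative constraints is sketched below in Python. A tag is removed from a word when it is forbidden next to every remaining tag of the neighboring word, and the process repeats until nothing changes. Three details are assumptions, not given on the slide: tags are matched by prefix (so BS3 covers BS3MS), a word never loses its last tag, and the sweep runs left to right.

```python
# Negative constraints from the slide: a tag beginning with the first prefix
# may not be immediately followed by a tag beginning with the second.
CONSTRAINTS = [("BS3", "BI1"), ("N", "K"), ("R", "V")]


def forbidden(left, right):
    return any(left.startswith(a) and right.startswith(b) for a, b in CONSTRAINTS)


def apply_constraints(candidates):
    """candidates: one set of possible tags per word. A tag is removed when it is
    forbidden next to every remaining tag of its neighbor; a word never loses its
    last tag. Repeats until no tag can be removed."""
    tags = [set(c) for c in candidates]
    changed = True
    while changed:
        changed = False
        for left, right in zip(tags, tags[1:]):
            for t in sorted(left):
                if len(left) > 1 and all(forbidden(t, u) for u in right):
                    left.discard(t)
                    changed = True
            for u in sorted(right):
                if len(right) > 1 and all(forbidden(t, u) for t in left):
                    right.discard(u)
                    changed = True
    return tags


# "il nous faut": the BS3 BI1 constraint removes BI1P, leaving 4 tags for "nous".
print(apply_constraints([{"BS3MS"}, {"BD1P", "BI1P", "BJ1P", "BR1P", "BS1P"}]))
```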

Confusion matrix

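| | IN | JJ | NN | NNP | RB | VBD | VBN |
|---|---|---|---|---|---|---|---|
| IN | - | .2 | | | .7 | | |
| JJ | .2 | - | 3.3 | 2.1 | 1.7 | .2 | 2.7 |
| NN | | 8.7 | - | | | | .2 |
| NNP | .2 | 3.3 | 4.1 | - | .2 | | |
| RB | 2.2 | 2.0 | .5 | | - | | |
| VBD | | .3 | .5 | | | - | 4.4 |
| VBN | | 2.8 | | | | 2.6 | - |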

Most confusing: NN vs. NNP vs. JJ, VBD vs. VBN vs. JJ

HMM Tagging

• $\hat{T} = \arg\max_T P(T \mid W)$, where $T = t_1, t_2, \ldots, t_n$

• By Bayes’s theorem: $P(T \mid W) = P(T)\,P(W \mid T) / P(W)$

• Thus we are attempting to choose the sequence of tags that maximizes the right-hand side of the equation

• $P(W)$ can be ignored, since it is the same for every candidate tag sequence

• $P(T)\,P(W \mid T) = ?$

• $P(T)$ is called the prior, $P(W \mid T)$ is called the likelihood.

HMM tagging (cont’d)

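• $P(T)\,P(W \mid T) = \prod_{i=1}^{n} P(w_i \mid w_1 t_1 \ldots w_{i-1} t_{i-1} t_i)\, P(t_i \mid t_1 \ldots t_{i-2} t_{i-1})$

• Simplification 1: $P(W \mid T) \approx \prod_{i=1}^{n} P(w_i \mid t_i)$

• Simplification 2: $P(T) \approx \prod_{i=1}^{n} P(t_i \mid t_{i-1})$

• $\hat{T} = \arg\max_T P(T \mid W) \approx \arg\max_T \prod_{i=1}^{n} P(w_i \mid t_i)\, P(t_i \mid t_{i-1})$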

Estimates

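• $P(\text{NN} \mid \text{DT}) = C(\text{DT}, \text{NN}) / C(\text{DT}) = 56509 / 116454 \approx .49$

• $P(\text{is} \mid \text{VBZ}) = C(\text{VBZ}, \text{is}) / C(\text{VBZ}) = 10073 / 21627 \approx .47$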
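Both estimates are relative frequencies. The minimal Python sketch below computes them from a tagged corpus. The `<s>` start pseudo-tag is an assumption. Like the slide, the code divides by the plain count of the conditioning tag, and it assumes that tag was seen in training.

```python
from collections import Counter


def estimate(tagged_sentences):
    """Relative-frequency (maximum likelihood) estimates, as on the slide:
    P(t | prev) = C(prev, t) / C(prev) and P(w | t) = C(t, w) / C(t)."""
    tag_count, bigram_count, emission_count = Counter(), Counter(), Counter()
    for sentence in tagged_sentences:
        prev = "<s>"  # hypothetical sentence-start pseudo-tag
        tag_count[prev] += 1
        for word, tag in sentence:
            tag_count[tag] += 1
            bigram_count[(prev, tag)] += 1
            emission_count[(tag, word)] += 1
            prev = tag

    def p_transition(tag, prev):
        return bigram_count[(prev, tag)] / tag_count[prev]

    def p_emission(word, tag):
        return emission_count[(tag, word)] / tag_count[tag]

    return p_transition, p_emission


# The slide's counts, plugged into the same formula:
print(round(56509 / 116454, 2), round(10073 / 21627, 2))  # 0.49 0.47
```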

Example

• Secretariat/NNP is/VBZ expected/VBN to/TO race/VB tomorrow/NR

• People/NNS continue/VBP to/TO inquire/VB the/AT reason/NN for/IN the/AT race/NN for/IN outer/JJ space/NN

• TO: to+VB (to sleep), to+NN (to school)

Example

Secretariat/NNP is/VBZ expected/VBN to/TO race/VB tomorrow/NR

Secretariat/NNP is/VBZ expected/VBN to/TO race/NN tomorrow/NR

Example (cont’d)

• P(NN|TO) = .00047

• P(VB|TO) = .83

• P(race|NN) = .00057

• P(race|VB) = .00012

• P(NR|VB) = .0027

• P(NR|NN) = .0012

• P(VB|TO)P(NR|VB)P(race|VB) = .00000027

• P(NN|TO)P(NR|NN)P(race|NN) = .00000000032
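The comparison can be checked with a few lines of Python. As on the slide, only the factors that differ between the two tag sequences are multiplied.

```python
# Probabilities copied from the slide, keyed (previous tag, tag) and (tag, word).
P_TRANS = {("TO", "VB"): .83, ("TO", "NN"): .00047, ("VB", "NR"): .0027, ("NN", "NR"): .0012}
P_EMIT = {("VB", "race"): .00012, ("NN", "race"): .00057}


def score(tag):
    """P(tag | TO) * P(NR | tag) * P(race | tag): the factors that differ
    between the two candidate tag sequences for 'race'."""
    return P_TRANS[("TO", tag)] * P_TRANS[(tag, "NR")] * P_EMIT[(tag, "race")]


for tag in ("VB", "NN"):
    print(tag, f"{score(tag):.2g}")   # VB 2.7e-07, then NN 3.2e-10
print(max(("VB", "NN"), key=score))  # VB
```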

Decoding

• Finding what sequence of states is the source of a sequence of observations

• Viterbi decoding (dynamic programming): finding the optimal sequence of tags

• Input: HMM and sequence of words; output: sequence of states
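Here is a minimal Viterbi sketch in Python for the bigram HMM above. It multiplies raw probabilities for readability; a practical tagger would add log probabilities to avoid underflow. It runs in O(n·|T|²) time for n words and |T| tags. The usage example reuses the "to race tomorrow" probabilities from the slides. Setting P(to|TO) = 1 and P(tomorrow|NR) = 1 is a simplifying assumption.

```python
def viterbi(words, tags, p_start, p_trans, p_emit):
    """Most probable tag sequence for `words` under a bigram HMM.
    p_start[t] = P(t | start), p_trans[(prev, t)] = P(t | prev), p_emit[(t, w)] = P(w | t);
    missing entries count as probability 0."""
    if not words:
        return []
    # best[i][t] = (probability of the best tag path ending in t at word i, previous tag)
    best = [{t: (p_start.get(t, 0.0) * p_emit.get((t, words[0]), 0.0), None) for t in tags}]
    for i in range(1, len(words)):
        column = {}
        for t in tags:
            # Best predecessor s for tag t: dynamic programming over the previous column.
            prev, prob = max(
                ((s, best[i - 1][s][0] * p_trans.get((s, t), 0.0)) for s in tags),
                key=lambda pair: pair[1],
            )
            column[t] = (prob * p_emit.get((t, words[i]), 0.0), prev)
        best.append(column)
    # Backtrace from the most probable final tag.
    path = [max(tags, key=lambda t: best[-1][t][0])]
    for i in range(len(words) - 1, 0, -1):
        path.append(best[i][path[-1]][1])
    return path[::-1]


print(viterbi(
    ["to", "race", "tomorrow"], ["TO", "VB", "NN", "NR"],
    p_start={"TO": 1.0},
    p_trans={("TO", "VB"): .83, ("TO", "NN"): .00047, ("VB", "NR"): .0027, ("NN", "NR"): .0012},
    p_emit={("TO", "to"): 1.0, ("VB", "race"): .00012, ("NN", "race"): .00057,
            ("NR", "tomorrow"): 1.0},
))  # ['TO', 'VB', 'NR']
```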