Tokenization

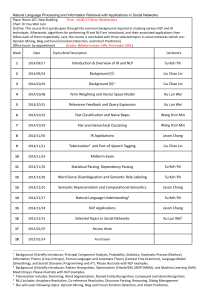

Survey of NLP

JILLIAN K. CHAVES

CUBRC, Inc.

Survey of NLP

Module 1

Introduction

Tokenization

Sentence Breaking

Module 2

Part-of-Speech (POS) Tagging

N-gram Analysis

Module 3

Phrase Structure Parsing

Syntactic Parsing

Module 4

Semantic Analysis

NLP & Ontologies

Introduction

What is Natural Language?

A set of subconscious rules about the pronunciation (phonology), order (syntax), and meaning (semantics) of linguistic expressions.

What is Linguistics?

The scientific study of language use, acquisition, and evolution.

What is Computation?

Computation is the manipulation of information according to a specific method (e.g., an algorithm) for determining an output value from a set of input values.

What is Computational Linguistics?

The study of the computational processes that are necessary for the generation and understanding of natural language.

Introduction

Processing natural language is far from trivial

Language is:

• based on very large vocabularies (roughly 20,000 words)

• rich in meaning (sometimes vague and context-dependent)

• regulated by complicated patterns and subconscious rules

• massively ambiguous (resolved only by world knowledge)

• noisy (speakers routinely produce, and are tolerant of, errors)

• produced and comprehended very quickly (and usually effortlessly)

Humans are specially equipped to handle these difficulties, but machines are not (yet). Is it possible to make a machine understand and use natural language as a human does, or even approximate the same utility?

A Typical NLP Pipeline

More-or-less standardized approach

Tokenization: Isolate all words and word parts

Sentence Segmentation: Isolate each individual sentence

POS Tagging: Assign part(s) of speech for each word

Phrase Structure Parsing: Isolate constituent boundaries

Syntactic Parsing: Identify argument structures

Semantic Analysis: Divine the meaning of a sentence

Ontology Translation: Map meaning to a concept model

Problems for NLP: Ambiguity

Speech Segmentation

Misheard song lyrics, for example

Discourse phenomena such as casual speech

Lexical Categorization

I saw her duck.

She fed her baby carrots.

Lexical/Phrasal Structure

British Prime Minister

The Prime Minister of Britain?

A Prime Minister (of some unknown country) who is of British descent?

Unlockable

Something that can be unlocked?

Something that cannot be locked?

Analogous to mathematical order of operations: is 12 ÷ 2 + 1 equal to 7, i.e., (12 ÷ 2) + 1, or 4, i.e., 12 ÷ (2 + 1)?

Problems for NLP: Ambiguity

Sentence Structure

People with kids who use drugs should be locked up.

I forgot how good beer tastes.

Semantic Structure

Someone always wins the game. [reference ambiguity]

Every arrow hit a target. [scope ambiguity]

Implicitness

Can you open the door?

A) Are you able to open the door? B) Open the door!

What is the dog doing in the garage?

A) What activity is the dog carrying out? B) The dog doesn’t belong there.

Yeah, right.

A) Yes, that is correct. (= agreement) B) No, that is incorrect. (= sarcasm)


Tokenization

Type (= 𝜑)

The set of “word form” types in a language is the lexicon

Token (= 𝜃)

A single instance of a linguistic type (word or contracted word)

I am hungry. { I | am | hungry | . } (𝜑 = 4; 𝜃 = 4)

He’s Mary’s friend? { He | ’s | Mary | ’s | friend | ? } (𝜑 = 5; 𝜃 = 6)

The blue car chased the red car. { The | blue | car | chased | the | red | car | . } (𝜑 = 6; 𝜃 = 8; The and the count as one type)
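The counts above can be reproduced with a toy tokenizer. The regular expression is an assumption and not a standard tokenizer. It splits off the clitic ’s, keeps runs of word characters together, and treats every other non-space character as its own token. When counting types it case-folds, so The and the count as a single type.

```python
import re

# Toy tokenizer (an assumption for illustration): the clitic "’s"/"'s" is split
# off, runs of word characters stay together, and every other non-space
# character (".", "?", ...) becomes its own token.
TOKEN_RE = re.compile(r"[’']s\b|\w+|[^\w\s]")


def tokenize(text):
    """Return the list of tokens (θ) in text."""
    return TOKEN_RE.findall(text)


def type_token_counts(text):
    """Return (number of types φ, number of tokens θ); types are case-folded."""
    tokens = tokenize(text)
    types = {token.lower() for token in tokens}
    return len(types), len(tokens)


for sentence in ["I am hungry.", "He’s Mary’s friend?", "The blue car chased the red car."]:
    print(sentence, tokenize(sentence), type_token_counts(sentence))
```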

Types vs. Tokens in Comparative Corpora

| Corpus | Types (𝜑) | Tokens (𝜃) |
|---|---|---|
| Switchboard Corpus | 20,000 | 2,400,000 |
| Shakespeare | 31,000 | 884,000 |
| Google Books (Ngram Viewer) | 13,000,000 | 1,000,000,000 |

Tokenization

Tokenization

The process of individuating/indexing all tokens in a text

Very difficult in writing systems with lax compounding rules or flexible word boundaries

German: der Donaudampfschifffahrtsgesellschaftskapitän

THE DANUBE· STEAMBOAT· VOYAGE· COMPANY· CAPTAIN

(“The Danube Steamship Company captain”)

English: gonna, wanna, shoulda, hafta, …
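One common way to tokenize German compounds like the example above is dictionary-based compound splitting. The word is segmented recursively into known lexicon entries, and a linking element (the German "Fugen-s") may be skipped between parts. The sketch below assumes a tiny hypothetical lexicon. Real splitters use large word lists and frequency scores to choose among competing segmentations.

```python
from functools import lru_cache

# Hypothetical mini-lexicon; a real splitter needs a large German word list.
LEXICON = {"donau", "dampf", "schiff", "fahrt", "gesellschaft", "kapitän"}
# Linking elements that may sit between compound parts (here only the Fugen-s).
LINKERS = ("s",)


def split_compound(word):
    """Split a compound into known lexicon parts, or return None if impossible."""
    word = word.lower()

    @lru_cache(maxsize=None)
    def split_from(i):
        # Segment word[i:]; return a tuple of parts, or None if no segmentation exists.
        if i == len(word):
            return ()
        for j in range(len(word), i, -1):  # try the longest candidate part first
            part = word[i:j]
            if part not in LEXICON:
                continue
            rest = split_from(j)
            if rest is not None:
                return (part,) + rest
            for link in LINKERS:  # otherwise, skip a linking element after the part
                if word.startswith(link, j):
                    rest = split_from(j + len(link))
                    if rest is not None:
                        return (part,) + rest
        return None

    parts = split_from(0)
    return list(parts) if parts is not None else None


print(split_compound("Donaudampfschifffahrtsgesellschaftskapitän"))
```

The linking s is dropped from the output because it is not a lexical part. Words that cannot be fully covered by the lexicon return None rather than a partial split.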

Every token has a unique (within context) part-of-speech category and semantics

Cross-POS homography

• Verb/Noun: record, progress, attribute, ...

Syncretism

• Simple past and past participle: bought, cost, led, meant, …

Tokenization

The problem is token delineation

Spaces: United States of America

Hyphens: well-rounded; father-in-law

Multiple “spellings”:

US, USA, U.S., U.S.A., United States, …

1/11/11, 01/11/11, January 11, 2011, 11 January 2011, 2011-01-11, …

(716) 555-5555, 716-555-5555, 716.555.55.55, …

The solution is normalization

Lemmatization: identifying the root (lemma) of each token

Lemma: open

• Inflectional Paradigm: open, opens, opening, opened, …

Lemma: be

• Inflectional Paradigm: am, is, are, was, were, being, been, isn’t, aren’t, …
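The spelling variants listed earlier (country names, dates, phone numbers) can be normalized with lookup tables and format parsing. Every detail below is an assumption for illustration:

• the variant table is hypothetical;
• slash dates are read as US month/day/year;
• English month names assume the default English (C) locale;
• phone numbers are assumed to be 10-digit North American numbers.

```python
import re
from datetime import datetime

# Assumed input formats; slash dates are read as US month/day/year, and %B
# expects English month names (default C locale).
DATE_FORMATS = ("%m/%d/%y", "%B %d, %Y", "%d %B %Y", "%Y-%m-%d")

# Hypothetical variant table for a single entity.
CANONICAL_NAMES = {"us": "USA", "usa": "USA", "u.s.": "USA", "u.s.a.": "USA",
                   "united states": "USA", "united states of america": "USA"}


def normalize_date(text):
    """Return an ISO 8601 (YYYY-MM-DD) date string, or None if no format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def normalize_name(text):
    """Map a known spelling variant to its canonical form (unknown text unchanged)."""
    return CANONICAL_NAMES.get(text.lower(), text)


def normalize_phone(text):
    """Reformat a 10-digit phone number as (AAA) EEE-NNNN, or return None."""
    digits = re.sub(r"\D", "", text)  # keep digits only
    if len(digits) != 10:
        return None
    return f"({digits[:3]}) {digits[3:6]}-{digits[6:]}"


for d in ["1/11/11", "01/11/11", "January 11, 2011", "11 January 2011", "2011-01-11"]:
    print(d, "->", normalize_date(d))
```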

Lemmatization

Lemma ≈ linguistic type

The set of possible words is much bigger than 𝜑, thanks to derivation and inflection

Nouns/verbs

bike, skate, shelf, fax, email, Facebook, Google, …

Plural (-s) combines with most singular common nouns

Cat(s), table(s), day(s), idea(s), …

Genitive (-’s) combines with most nominals (simple or complex)

John’s cat, the black cat’s food, the Queen of England’s hat, the girl I met yesterday’s car

Progressive (-ing) attaches to almost any verb

Biking, skating, shelving, faxing, emailing, Facebooking, Googling, …

…which again can be ambiguous with another POS, e.g., shelving
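A lemmatizer for forms like these can be sketched as two parts. The first is a table of irregular forms. The second is a set of suffix-stripping rules whose output is accepted only if it is a known lemma. WordNet's morphological processor (Morphy) uses the same detach-and-check idea. The lemma list and rules below are hypothetical and deliberately tiny. A form like "shelving" would still need POS information to choose between shelf and shelve.

```python
# Hypothetical lemma list and irregular-form table (illustration only).
LEMMAS = {"open", "be", "cat", "table", "idea", "bike", "skate", "email", "google"}
IRREGULAR = {"am": "be", "is": "be", "are": "be", "was": "be", "were": "be",
             "being": "be", "been": "be"}
# (suffix to strip, string to append), tried in order; e.g. biking -> bik + e.
SUFFIX_RULES = [("ing", ""), ("ing", "e"), ("ed", ""), ("ed", "e"), ("s", "")]


def lemmatize(token):
    """Return the lemma of token, or the lowercased token if none is found."""
    word = token.lower()
    if word in IRREGULAR:
        return IRREGULAR[word]
    if word in LEMMAS:
        return word
    for suffix, replacement in SUFFIX_RULES:
        if word.endswith(suffix):
            candidate = word[: -len(suffix)] + replacement
            if candidate in LEMMAS:  # accept only known lemmas
                return candidate
    return word


print([lemmatize(w) for w in ["opens", "opening", "were", "Biking", "emailing", "Googling"]])
```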

Inflection and Derivation

Inflection

The paradigm (aka conjugation) of a single verb to account for person, number, and tense agreement

Regular

I act, he acts, you acted, we are acting, they have acted, he will act

Irregular

I go, he goes, you went, we are going, they have gone, she will go

I catch, he catches, you caught, we are catching, they have caught, she will catch

New/introduced verbs (e.g., tweet, Google) have regular inflection
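Because new verbs follow the regular paradigm, their forms can be generated by rule. The sketch below uses orthographic rules only:

• it adds -es after s, x, z, ch, and sh;
• it drops a final -e before -ed and -ing;
• it deliberately ignores consonant doubling (chat → chatting) and y → i (carry → carries).

It is therefore not meant for irregular verbs such as go or catch.

```python
def regular_paradigm(verb):
    """Regular English verb forms (sketch; no consonant doubling, no y -> i)."""
    # 3rd person singular present: -es after sibilant spellings, else -s
    third = verb + ("es" if verb.endswith(("s", "x", "z", "ch", "sh")) else "s")
    if verb.endswith("e"):
        past, progressive = verb + "d", verb[:-1] + "ing"  # Google -> Googled, Googling
    else:
        past, progressive = verb + "ed", verb + "ing"      # tweet -> tweeted, tweeting
    return {"base": verb, "3sg": third, "past": past,
            "past_participle": past, "progressive": progressive}


print(regular_paradigm("tweet"))
print(regular_paradigm("Google"))
```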

Derivation

The process of deriving new words from a single root word

Nation (n.) → national (adj.) → nationalize (v.) → nationalization (n.)

The Importance of Accurate Tokenization

Better downstream syntactic parsing

Stochastic (statistical) parsing thrives on high-quality input

Better downstream semantic assessment

Stable but rare lexical composition patterns

• Anti-tank-missile (= a missile that targets tanks)

• Anti-missile-missile (= a missile that targets missiles)

• Anti-anti-missile-missile-missile (= a missile that targets anti-missile-missiles)

• Great-grandfather (= a grandparent’s father)

• Great-great-grandfather (= a grandparent’s parent’s father)

• Great-great-great-grandfather …

Reliable lexical decomposition, especially with new/nonce words

I Yandexed it. {v|Yandex} simple past

I’m a Yandexer. {v|Yandex} agentive nominalization

I can’t stop Yandexing. {v|Yandex} progressive aspect
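The recursive anti-…-missile compounds can be analyzed with a tiny recursive grammar, W → tank | missile | anti W missile. In that grammar, the inner W names what the missile targets. The sketch below assumes that grammar, which is hypothetical and covers only these examples. It parses a compound and paraphrases it.

```python
def parse_anti(parts):
    """Parse W -> 'tank' | 'missile' | 'anti' W 'missile'.

    Returns (tree, remaining_parts); a tree is a bare noun string or
    ("missile", target_tree) for a missile that targets target_tree.
    """
    if not parts:
        raise ValueError("unexpected end of compound")
    if parts[0] == "anti":
        target, rest = parse_anti(parts[1:])  # recursion: the targeted item
        if not rest or rest[0] != "missile":
            raise ValueError("expected 'missile' after the targeted item")
        return ("missile", target), rest[1:]
    if parts[0] in ("tank", "missile"):
        return parts[0], parts[1:]
    raise ValueError(f"unexpected part {parts[0]!r}")


def surface(tree):
    """Rebuild the hyphenated compound from a tree."""
    if isinstance(tree, str):
        return tree
    return "anti-" + surface(tree[1]) + "-missile"


def gloss(compound):
    """Paraphrase a compound such as 'anti-tank-missile'."""
    tree, rest = parse_anti(compound.lower().split("-"))
    if rest:
        raise ValueError(f"leftover parts: {rest}")
    if isinstance(tree, str):
        return f"a {tree}"
    return f"a missile that targets {surface(tree[1])}s"


print(gloss("Anti-anti-missile-missile-missile"))
```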


Sentence Segmentation

Naïve approach to identifying a sentence boundary:

1. If the current token is a period, it is the end of a sentence

2. If the preceding token is on a list of known abbreviations, then the period might not end the sentence

3. If the following token is capitalized, then the period ends the sentence

Shockingly: 95% accuracy!
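The three rules can be sketched as a naive sentence breaker. The sketch makes these assumptions:

• tokens are whitespace-separated;
• only a period is a candidate boundary (rule 1);
• the abbreviation list is a small hypothetical sample;
• rule 2 is applied before rule 3, so a known abbreviation blocks the break even before a capitalized word.

An initial such as "F." that is missing from the list still triggers a false break, as the last usage line shows.

```python
# Hypothetical abbreviation list; real systems use much longer, domain-tuned lists.
ABBREVIATIONS = {"mr.", "mrs.", "dr.", "co.", "inc.", "jan.", "aug.", "dec."}


def split_sentences(text):
    """Naive sentence breaker implementing the three rules (whitespace tokens)."""
    tokens = text.split()
    sentences, current = [], []
    for i, token in enumerate(tokens):
        current.append(token)
        if not token.endswith("."):
            continue  # rule 1: only a period can end a sentence
        is_last = i == len(tokens) - 1
        if token.lower() in ABBREVIATIONS and not is_last:
            continue  # rule 2: a known abbreviation blocks the break
        if is_last or tokens[i + 1][0].isupper():
            sentences.append(" ".join(current))  # rule 3: next token is capitalized
            current = []
    if current:
        sentences.append(" ".join(current))
    return sentences


print(split_sentences("Mr. Smith arrived. He sat down."))
print(split_sentences("He worked for the Ford Motor Co. Buffalo Stamping Plant."))
print(split_sentences("Judge Kenneth F. Case presided."))  # false break after "F."
```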

Demo: An Online Sentence Breaker

1. Mr. and Mrs. Jack Giancarlo of Lancaster celebrated their 50th wedding anniversary with a family cruise to the Bahamas. Mr. Giancarlo and Patricia Keenan were married September 28, 1963, in Holy Angels Catholic Church, Buffalo. He is a retired inspector for the Ford Motor Co. Buffalo Stamping Plant; she is working as a tax preparer for H&R Block. They have five children and 13 grandchildren. [1]

2. The bookkeeper/office manager at an Amherst jewelry store has admitted stealing more than $51,000.00 in cash from daily sales at the business. Rena Carrow, 44, of Lancaster, pleaded guilty to third-degree grand larceny in the theft at Andrews Jewelers on Transit Road, according to Erie County District Attorney Frank A. Sedita III. Carrow admitted that between Aug. 31, 2011 and Dec. 5, 2012 she stole $51,069.14. She faces up to seven years in prison when she is sentenced Jan. 16 by Erie County Judge Kenneth F. Case. [2]

[1] Adapted from http://www.buffalonews.com/life-arts/golden-weddings/patricia-and-jack-giancarlo-20131010, accessed 10 October 2013.

[2] Adapted from http://www.buffalonews.com/city-region/amherst/jewelry-store-bookkeeper-admits-to-stealing-more-than-51000-20131010, accessed 10 October 2013.

End of Module 1

Questions?


Parts of Speech

Closed class (function words)

Pronouns: I, me, you, he, his, she, her, it, …

Possessive: my, mine, your, his, her, their, its, …

Wh-pronouns: who, what, which, when, whom, whomever, …

Prepositions: in, under, to, by, for, about, …

Determiners: a, an, the, each, every, some, ...

Conjunctions

Coordinating: and, or, but, nor, …

Subordinating: that, than, who, because, …

Particles: up, down, off, on, …

Numerals: one, two, three, first, second, …

Auxiliary verbs: can, may, should, could, …

Open class (content words)

Nouns

Proper nouns: Jackie, Microsoft, France, Jupiter, …

Common nouns

• Count nouns: cat, table, dream, height, …

• Mass (non-count) nouns: milk, oil, mail, music, furniture, fun, …

Verbs: read, eat, paint, think, tell, sleep, …

Adjectives: purple, bad, false, original, …

Adverbs: quietly, always, very, often, never, …

POS Annotation Tagsets

Penn Treebank

A syntactically annotated corpus of 5M words, using a set of 45 POS tags devised by UPenn (sampling of tagset below)

| Tag | Description |
|---|---|
| CC | Coordinating conjunction |
| CD | Cardinal number |
| DT | Determiner |
| EX | Existential there |
| IN | Preposition/subordinating conjunction |
| JJ | Adjective, bare |
| JJR | Adjective, comparative |
| JJS | Adjective, superlative |
| MD | Modal verb |
| NN | Noun, singular |
| NNS | Noun, plural |
| NNP | Proper noun, singular |
| NNPS | Proper noun, plural |
| POS | Possessive marker |
| PRP | Personal pronoun |
| PRP$ | Possessive pronoun |
| RB | Adverb, bare |
| RBR | Adverb, comparative |
| RBS | Adverb, superlative |
| TO | to |
| UH | Discourse interjection |
| VB | Verb, infinitive (base) |
| VBD | Verb, past tense |
| VBG | Verb, gerund |
| VBN | Verb, past participle |
| VBP | Verb, non-3rd sing. pres. form |
| VBZ | Verb, 3rd sing. pres. form |
| . | Sentence-final punct (. ? !) |
| LRB | Left-rounded parenthesis |
| RRB | Right-rounded parenthesis |

POS Annotation Tagsets

Comparison

| Corpus | Word count | Tagset size |
|---|---|---|
| Penn Treebank | 4.5M | 45 |
| British National Corpus (BNC) | 100M | 61 |
| Brown Corpus (Brown University) | 1M | 82 |
| Corpus of Contemporary American English (COCA) | 450M | 137 |
| Global Web-Based English (GloWBE) | 1.9B | 137 |

Why such a range across tagsets?

Occurrence of “complex” tags

• Penn: [isn’t] is/VBZ n’t/RB

• Brown: [isn’t] VBZ* (‘*’ indicates negation)

Most category distinctions are recoverable by context

A more exhaustive list of corpora is available here.

POS Annotation Tagsets

Each token is assigned its possible POS tags

Ambiguity resolved with statistical likelihood measures

• e.g., nouns more likely than verbs to begin sentences, etc.

Bill: NN, NNP, VB, VBP

saw: NN, VB, VBD

her: PRP, PRP$

father: NN, VB, VBP

’s: POS, VBZ

bike: NN, VB, VBP

yesterday: NN, RB

.: .

4 × 3 × 2 × 3 × 2 × 3 × 2 × 1 = 864 possible tag combinations

Given the syntactic patterns of English, only 1 is statistically likely:

Bill/NNP saw/VBD her/PRP$ father/NN ’s/POS bike/NN yesterday/RB ./.
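The lattice size is just the product of the number of candidate tags per token. The sketch below recomputes it and uses brute-force enumeration to confirm that the likely tag sequence is one of the candidates. The candidate lists are copied from the slide. Enumeration is feasible here, but it grows exponentially with sentence length, which is why statistical taggers use dynamic programming (e.g., Viterbi decoding) instead.

```python
from itertools import product
from math import prod

# Candidate tags per token, copied from the slide.
LATTICE = [
    ("Bill", ["NN", "NNP", "VB", "VBP"]),
    ("saw", ["NN", "VB", "VBD"]),
    ("her", ["PRP", "PRP$"]),
    ("father", ["NN", "VB", "VBP"]),
    ("’s", ["POS", "VBZ"]),
    ("bike", ["NN", "VB", "VBP"]),
    ("yesterday", ["NN", "RB"]),
    (".", ["."]),
]

# Number of tag sequences = product of per-token candidate counts.
n_sequences = prod(len(tags) for _, tags in LATTICE)

# Brute-force enumeration of every tag sequence.
all_sequences = list(product(*(tags for _, tags in LATTICE)))
likely = ("NNP", "VBD", "PRP$", "NN", "POS", "NN", "RB", ".")
print(n_sequences, len(all_sequences), likely in all_sequences)
```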

POS Annotation Tagsets

Lexical ambiguity metrics: Brown Corpus

11.5% of words (types) are ambiguous

However, those 11.5% tend to be the most frequent types:

• I know that/IN she is honest.

• Yes, that/DT concert was fun.

• I’m not that/RB hungry.

In fact, those 11.5% of types account for 40% of the tokens in the Brown corpus!

Methods & Accuracy

Rule-based POS Tagging: 50.0% - 90.0%

Probability-based (Trigram HMM): 55.0% - 95.0%

Maximum Entropy P(t|w): 93.7% - 82.6%

TnT (HMM++): 96.2% - 86.9%

MEMM Tagger: 96.9% - 86.9%

Dependency Parser (Stanford): 97.2% - 90.0%

Manual (Human): 98% upper bound

“Current part-of-speech taggers work rapidly and reliably, with per-token accuracies of slightly over 97%. [...] Good taggers have sentence accuracies around 55-57%.”

Source: Manning 2011

Rule-based Method

Create a list of words with their most likely parts of speech

For each word in a sentence, tag it by looking up its most likely tag

e.g., dog/NN > dog/VB > dog/VBP

Correct for errors with tag-changing rules

Contextual rules: revise the tag based on the surrounding words or the tags of the surrounding words

• IN DT NEXTTAG NN (IN becomes DT if next tag is NN)

• that/IN cat/NN → that/DT cat/NN

Lexical rules: revise the tag based on an analysis of the stemmed word, in concert with the understanding of derivational rules of English
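The lookup-then-correct procedure can be sketched as follows. The most-likely-tag lexicon is hypothetical. Only the NEXTTAG rule template from the example is implemented. Brill-style taggers learn many rule templates and their order from annotated data.

```python
# Hypothetical most-likely-tag lexicon (illustration only).
MOST_LIKELY_TAG = {"i": "PRP", "know": "VBP", "saw": "VBD", "that": "IN",
                   "she": "PRP", "cat": "NN", "dog": "NN"}

# Contextual rules as (from_tag, to_tag, template, trigger_tag).
CONTEXTUAL_RULES = [("IN", "DT", "NEXTTAG", "NN")]


def initial_tags(words, default="NN"):
    """Step 1: tag each word with its most likely tag (unknown words get default)."""
    return [MOST_LIKELY_TAG.get(w.lower(), default) for w in words]


def apply_contextual_rules(tags, rules=CONTEXTUAL_RULES):
    """Step 2: revise tags left to right with tag-changing rules."""
    tags = list(tags)
    for from_tag, to_tag, template, trigger in rules:
        if template != "NEXTTAG":
            raise NotImplementedError(template)
        for i in range(len(tags) - 1):
            if tags[i] == from_tag and tags[i + 1] == trigger:
                tags[i] = to_tag
    return tags


words = ["I", "saw", "that", "cat"]
print(list(zip(words, apply_contextual_rules(initial_tags(words)))))
```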

Stemming

Affixation

Regular but not universal

• -ize: modernize, legalize, finalize; but *newize, *lawfulize, *permanentize

• un-: unhealthy, unhappy, unstable; but *unsick, *unsad, *unmiserable

• -s (plural): cats, dogs, birds; but *oxs (oxen), *mouses (mice), *hippopotamuss (hippopotami or hippopotamuses)

Irregular verbs

Root form changes for tense/aspect

• sink, sank, sunk

• begin, began, begun

• go, went, gone

• do, did, done

Unstable paradigms

• dive: dove? dived? (= usually a dialectal variation)

Stemming: Variation Predictability

Pluralization via affix (cf. root change, e.g., man → men)

1. A singular root that does not end in “s”, “z”, “sh”, “ch”, “dg” sounds or a vowel will take ‘-s’ in the plural form.

• cat, dog, lab, map, batter, seagull, button, firm, …

2. A singular root ending in “s”, “z”, “sh”, “ch”, or “dg” sounds will take ‘-es’ in the plural form; if this results in an overlapping orthographic ‘e’, they will collapse.

• loss + es = losses / bus + es = buses / house + es = houses / …

• buzz + es = buzzes / waltz + es = waltzes / … ash + es = ashes / match + es = matches / hedge + es = hedges / …

A. Corollary: A singular root ending in a singular ‘z’ will geminate in the plural form.

• quiz + es = quizzes / …

Predictable variation can be captured with rules
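Rules 1 and 2 and corollary A fit in a few lines. The rules are stated over sounds, so this sketch approximates them with spellings, which is an assumption: the endings s, z, sh, ch and the e-final spellings -se, -ze, -dge stand in for the sibilant sounds. The sketch has three known limitations:

• it misfires on words like stomach, where ch is pronounced /k/;
• it adds plain -s to vowel-final roots, a case the rules leave open;
• it restricts gemination to a z after a vowel so that waltz is left alone.

```python
# Spellings standing in for the sibilant sounds named in rules 1 and 2 (an assumption).
SIBILANT_ENDINGS = ("s", "z", "sh", "ch", "se", "ze", "dge")
VOWELS = "aeiou"


def pluralize(noun):
    """Regular plural by rules 1 and 2 and corollary A (orthographic sketch)."""
    # Corollary A: a single final 'z' after a vowel geminates (quiz -> quizz-).
    if noun.endswith("z") and not noun.endswith("zz") and len(noun) > 1 and noun[-2] in VOWELS:
        noun += "z"
    # Rule 2: sibilant-final roots take -es; a root-final 'e' collapses with it.
    if noun.endswith(SIBILANT_ENDINGS):
        return noun + ("s" if noun.endswith("e") else "es")
    # Rule 1: everything else takes -s.
    return noun + "s"


print([pluralize(n) for n in ["cat", "loss", "house", "buzz", "waltz", "match", "hedge", "quiz"]])
```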


N-grams

Probabilistic language modeling

Goal: determine probability P of a sequence of words

Applications:

POS Tagging

• P(She/PRP bikes/VBZ) > P(She/PRP bikes/NNS)

Spellchecking

• P(their cat is sick) > P(there cat is sick)

Speech Recognition

• P(I can forgive you) > P(I can for give you)

Machine translation, natural language generation, language identification, authorship (genre) identification, word similarity, sentiment analysis, etc.

N-grams

N-gram: a sequence of n words

Unigram: occurrence of a single isolated word

Bigram: a sequence of two words

Trigram: a sequence of three words

4-gram: a sequence of four words

…
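Extracting n-grams from a token sequence amounts to sliding a window of width n over it. A minimal sketch, with illustrative example tokens:

```python
def ngrams(tokens, n):
    """Return every contiguous sequence of n tokens, in order."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


tokens = "it is easy to see the".split()
for n in (1, 2, 3):
    print(n, ngrams(tokens, n))
```

A sequence of k tokens yields k − n + 1 n-grams, so a text shorter than n yields none.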

Resources/demonstrations

Online N-gram calculator

GoogleBooks N-gram Viewer

Automatic random language generation (based on N-gram probabilities of input text)

N-grams: Scope of Usefulness

In a text…

Individual bigrams recur often, many with high frequencies

Individual trigrams recur less often than bigrams

…

Almost every 15-gram probably occurs only once

Zipf’s Law (long tail phenomenon): the frequency of a word is inversely proportional to its rank in the frequency table, so a few words are very frequent and most words are rare

Related Task

Compute probability of an upcoming word:

“The probability of the next word being w₅ given the preceding environment w₁ followed by w₂ followed by w₃ followed by w₄.”

$$P(w_5 \mid w_1, w_2, w_3, w_4)$$

Example:

What is the value of P(the|is,easy,to,see)?

N-grams: Scope of Usefulness

What is the value of P(the|it,is,easy,to,see)?

Approach #1: Counting!

$$P(\text{the} \mid \text{it is easy to see}) = \frac{C(\text{it is easy to see the})}{C(\text{it is easy to see})}$$

Per Google (as of 22-Oct-2013):

$$P(\text{the} \mid \text{it is easy to see}) = \frac{C(\text{it is easy to see the})}{C(\text{it is easy to see})} = \frac{50{,}700{,}000}{126{,}000{,}000} \approx 0.402$$

Problem: not all possible sequences occur very often

$$C(\text{it is easy to see Donna}) = 1$$
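Approach #1 is maximum-likelihood estimation from counts. The sketch below computes it over a toy token list; the corpus is invented for illustration and is not Google's counts. It also shows the sparsity problem: any continuation that never occurs gets probability 0.

```python
from collections import Counter


def count_ngrams(tokens, n):
    """Count every contiguous n-token sequence in tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def mle_next_word(tokens, history, word):
    """P(word | history) = C(history + word) / C(history), estimated from tokens."""
    history = tuple(history)
    denominator = count_ngrams(tokens, len(history))[history]
    if denominator == 0:
        return 0.0  # unseen history: the estimate is undefined; report 0.0 here
    numerator = count_ngrams(tokens, len(history) + 1)[history + (word,)]
    return numerator / denominator


corpus = "it is easy to see the cat and it is easy to see a dog".split()
history = ["it", "is", "easy", "to", "see"]
print(mle_next_word(corpus, history, "the"))    # 0.5
print(mle_next_word(corpus, history, "Donna"))  # 0.0
print(round(50_700_000 / 126_000_000, 3))       # 0.402, the Google counts above
```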

N-grams: Scope of Usefulness

What is the value of P(the|it,is,easy,to,see)?

Approach #2: Estimate with N-grams

Joint probabilities

$$P(w_1, \ldots, w_n) = P(w_1)\,P(w_2 \mid w_1)\,P(w_3 \mid w_1, w_2) \cdots P(w_n \mid w_1, w_2, \ldots, w_{n-1})$$

Complex, time-consuming, and, in the end, not very helpful
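The chain rule is exact, but it needs counts for ever-longer histories. The usual fix is the Markov assumption, which the slide does not spell out, so treat this as a swapped-in illustration. Each word is conditioned only on the previous word, which gives a bigram model: P(w_k | w_1 … w_{k-1}) ≈ P(w_k | w_{k-1}). The sketch uses maximum-likelihood bigram estimates, sentence-boundary markers <s> and </s>, and no smoothing. The three-sentence training corpus is a toy example.

```python
from collections import Counter


def train_bigrams(sentences):
    """Count history tokens and bigrams, padding each sentence with <s> and </s>."""
    history_counts, bigram_counts = Counter(), Counter()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        history_counts.update(tokens[:-1])             # every token that precedes another
        bigram_counts.update(zip(tokens, tokens[1:]))
    return history_counts, bigram_counts


def sentence_probability(model, sentence):
    """P(sentence) ~= product of P(w_k | w_{k-1}); maximum likelihood, no smoothing."""
    history_counts, bigram_counts = model
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    probability = 1.0
    for previous, current in zip(tokens, tokens[1:]):
        if history_counts[previous] == 0:
            return 0.0  # unseen history
        probability *= bigram_counts[(previous, current)] / history_counts[previous]
    return probability


corpus = ["I am Sam", "Sam I am", "I do not like green eggs and ham"]
model = train_bigrams(corpus)
print(sentence_probability(model, "I am Sam"))  # (2/3)(2/3)(1/2)(1/2) = 1/9, about 0.111
```

Without smoothing, any unseen bigram zeroes out the whole sentence. This is the same sparsity problem as Approach #1, only reduced.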

Limitations

N-gram probability analysis doesn’t give the whole picture

“Garden path” sentences

The man that I saw with her bikes to work every day.

The man that I saw with her bikes was a thief.

News headlines (“Journalese”)

Corn maze cutter stalks fall fun across country

After Earth Lost To Both Fast & Furious And Now You See Me At Friday Box Office

Jury awards $6.5M in CA case of nozzle thought gun

Recurring Problem: Non-linearity

Predictive sequence models fail because they assume that:

Syntax is linear (cf. hierarchical)

• “She sent a postcard to her friend from Australia.”

• L: She sent a postcard to [her friend from Australia].

• H: [She sent a postcard] to her friend [from Australia].

All dependencies are local (cf. long-distance)

• Which instrument did you play?

• Deconstruction: Determine the value of x such that x is an instrument and you play x

• Which instrument did your college roommate try to annoy you by playing?

• Deconstruction:

Define set v that is identical to the set of your roommates

Define subset x of set v as the set of roommates from college

Define subset y of set v that played an instrument w

Define subset z of set v that played w to annoy you

Determine the value of w

End of Module 2

Questions?


Phrase Structures

Computational Analogy: base-10 arithmetic

Lexicon:

N → 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9

O → + | - | x | =

Grammar: N → N O N

N: (1+2) x (3+4) = 3 x 7 = 21

N: 9 – ((2 x 3) + 1) = 9 – (6 + 1) = 9 – 7 = 2
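The arithmetic grammar can be turned into a small recursive-descent evaluator. One assumption goes beyond the slide. N → N O N is ambiguous (the same order-of-operations problem as 12 ÷ 2 + 1 in the introduction), so this sketch resolves it with conventional precedence, where x binds tighter than + and -, and with parentheses. It also leaves out '=' and accepts only single digits, as in the lexicon.

```python
def evaluate(expression):
    """Recursive-descent evaluator for the toy grammar: digits, + - x, parentheses."""
    # Treat the en dash used on the slide as minus; ignore whitespace.
    tokens = [ch for ch in expression.replace("–", "-") if not ch.isspace()]
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else None

    def advance():
        nonlocal pos
        token = tokens[pos]
        pos += 1
        return token

    def factor():  # factor -> digit | "(" expr ")"
        token = advance()
        if token == "(":
            value = expr()
            if advance() != ")":
                raise SyntaxError("expected ')'")
            return value
        if token.isdigit():
            return int(token)
        raise SyntaxError(f"unexpected token {token!r}")

    def term():  # term -> factor ("x" factor)*
        value = factor()
        while peek() == "x":
            advance()
            value *= factor()
        return value

    def expr():  # expr -> term (("+" | "-") term)*
        value = term()
        while peek() in ("+", "-"):
            op = advance()
            value = value + term() if op == "+" else value - term()
        return value

    result = expr()
    if pos != len(tokens):
        raise SyntaxError("trailing input")
    return result


print(evaluate("(1+2) x (3+4)"))      # 21
print(evaluate("9 – ((2 x 3) + 1)"))  # 2
```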

Phrase Structures

Natural language has a bigger lexicon and more rules

How?

Recursion: a phrase defined in terms of itself

• A noun phrase can be rewritten as (for instance):

NP → DT N (“the dog”)

N → N PP (“dog in the yard”)

• A prepositional phrase is rewritten as a preposition (relational term) and a noun phrase:

PP → P NP

These three rules alone allow for infinite recursion!

Example:

• “Put the ring in the box on the table at the end of the hallway.”

• Where is the ring now? Where is it going?

Phrase Structures

Phrasal rewrite rules

Additional rules of English

S → NP VP: [the dog] [barked]

NP → DT N: [the dog]

VP → IV: [barked]

N → AdjP N: [big] [dog]

VP → TV NP: [gnawed] [the bone]

VP → DTV NP NP: [gave] [Mary] [a kiss]

VP → DTV NP PP: [gave] [a kiss] [to Mary]

PDV → DTV: [was given]

VP → PDV NP PP: [was given] [a kiss] [by the dog]

VP → VP PP: [went] [to the park]

PP → P NP: [to] [the park]
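Rewrite rules like these can be checked mechanically with a small memoized recognizer that asks whether a symbol can cover a span of words. The sketch rests on several assumptions:

• the lexicon is hypothetical;
• the proper name Mary is listed directly as an NP;
• the passive PDV rule is left out;
• N → N PP from the recursion slide is included.

Every symbol must cover at least one word, so left-recursive rules such as VP → VP PP only ever recurse on shorter spans, and the search terminates.

```python
from functools import lru_cache

# Rewrite rules from the slides; each right-hand side is a tuple of symbols.
GRAMMAR = {
    "S": [("NP", "VP")],
    "NP": [("DT", "N")],
    "N": [("AdjP", "N"), ("N", "PP")],
    "VP": [("IV",), ("TV", "NP"), ("DTV", "NP", "NP"), ("DTV", "NP", "PP"), ("VP", "PP")],
    "PP": [("P", "NP")],
}

# Hypothetical toy lexicon: word -> set of preterminal categories.
# "mary" is listed directly as an NP, a shortcut for proper names.
LEXICON = {
    "the": {"DT"}, "a": {"DT"}, "big": {"AdjP"},
    "dog": {"N"}, "bone": {"N"}, "kiss": {"N"}, "park": {"N"}, "mary": {"NP"},
    "barked": {"IV"}, "went": {"IV"}, "gnawed": {"TV"}, "gave": {"DTV"},
    "to": {"P"},
}


def recognize(sentence, start="S"):
    """Return True if the grammar derives the sentence from the start symbol."""
    words = sentence.lower().split()

    @lru_cache(maxsize=None)
    def derives(symbol, i, j):
        # Can symbol cover words[i:j]?
        if j - i == 1 and symbol in LEXICON.get(words[i], set()):
            return True
        return any(sequence_derives(rhs, i, j) for rhs in GRAMMAR.get(symbol, []))

    @lru_cache(maxsize=None)
    def sequence_derives(rhs, i, j):
        # Can the symbols in rhs, in order, cover words[i:j], each taking >= 1 word?
        if len(rhs) == 1:
            return derives(rhs[0], i, j)
        return any(derives(rhs[0], i, m) and sequence_derives(rhs[1:], m, j)
                   for m in range(i + 1, j - len(rhs) + 2))

    return bool(words) and derives(start, 0, len(words))


for s in ["the big dog gnawed the bone", "the dog gave mary a kiss", "dog the barked"]:
    print(s, recognize(s))
```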

Phrase Structures

Syntactic tree structure

“The woman called a friend from Australia.”

Parse #1:

The woman [called] a friend [from Australia].

Parse #2:

The woman called [a friend from Australia].

Is this parse predicted by the grammar rules?

[The woman] called a friend [from Australia].

Phrase Structures

Other common sources of recursion

Complex/non-canonical phrases

VP → AUX VP

• By this time next month, I [will [have [been [married]]]] for 10 years.

NP → GerundVP

• [Swimming] is fun. (GerundVP → VBG)

• [Going to the beach] is a great way to relax. (GerundVP → VBG PP)

• [Visiting the cemetery] was very sad. (GerundVP → VBG NP)

Reiteration within rules

NP → DT AdjP N: “the big dog”

AdjP → Adj*: “big brown furry”

AdjP → (Adv*) Adj*: “[awesomely [big]] [really [furry]]”

Phrase Structures

How do we know phrase structure rules exist?

Ability to parse novel grammatical sentences

“They laboriously cavorted with intrepid neighbors.”

Ability to intuit when a sentence is ungrammatical.

“Like almost eyes feel been have fully indigo.”

How many rules are there?

Nobody knows! Open problem since the 1950s.

The statistical universals have been identified:

Existing phrase structure rules account for roughly 97% of natural language constructions

Psycholinguists focus on the remaining 3% via the grammaticality/acceptability interface


Online Parsers

Phrase Structure Parsers

Probabilistic LFG F-structure parsing

Link Grammar

ZZCad

Dependency Parsers

Stanford Parser

Connexor

ROOT The woman called a friend.

det(woman-2, the-1) nsubj(called-3, woman-2) root(root-0, called-3) det(friend-5, a-4) dobj(called-3, friend-5)
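Typed-dependency output in the relation(head-index, dependent-index) notation is easy to load for downstream processing. A minimal sketch follows. The regular expression assumes exactly the format on the slide: one space after the comma, and each word-index pair joined by its last hyphen.

```python
import re

# relation(head-index, dependent-index), e.g. "nsubj(called-3, woman-2)"
DEPENDENCY_RE = re.compile(r"(\w+)\((\S+)-(\d+), (\S+)-(\d+)\)")


def parse_dependencies(text):
    """Return (relation, (head, head_index), (dependent, dependent_index)) tuples."""
    return [
        (relation, (head, int(head_index)), (dependent, int(dependent_index)))
        for relation, head, head_index, dependent, dependent_index in DEPENDENCY_RE.findall(text)
    ]


def root_word(dependencies):
    """The dependent of the artificial root node."""
    return next(dep for relation, _, dep in dependencies if relation == "root")


def dependents(dependencies, head):
    """All (relation, dependent) pairs governed by head."""
    return [(relation, dep) for relation, h, dep in dependencies if h == head]


deps = parse_dependencies("det(woman-2, the-1) nsubj(called-3, woman-2) root(root-0, called-3) "
                          "det(friend-5, a-4) dobj(called-3, friend-5)")
print(root_word(deps), dependents(deps, root_word(deps)))
```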

Long-distance Dependencies

Local

Which instrument did you play?

det(instrument-2, which-1) dobj(play-5, instrument-2) aux(play-5, did-3) nsubj(play-5, you-4) root(root-0, play-5)

Long-distance

Which instrument did your college roommate try to annoy you by playing?

det(instrument-2, which-1) dep(try-7, instrument-2) aux(try-7, did-3) poss(roommate-6, your-4) nn(roommate-6, college-5) nsubj(try-7, roommate-6) xsubj(annoy-9, roommate-6) root(root-0, try-7) aux(annoy-9, to-8) xcomp(try-7, annoy-9) dobj(annoy-9, you-10) prep(annoy-9, by-11) pobj(by-11, playing-12)

End of Module 3

Questions?


The Syntax-Semantics Interface

Can we automate the process of associating semantic representations with parsed natural language expressions?

Is the association even systematic?

The Syntax-Semantics Interface

The meaning of an expression is a function of the meanings of its parts and the way the parts are combined syntactically

[The cat] chased the dog.

[The cat] was chased by the dog.

The dog chased [the cat].

The meaning of [the cat] is fairly stable, but its role in the sentence is determined by syntax

The primary tenet of the syntax-semantics interface is this Principle of Compositionality

Compositionality

Semantic λ-calculus

Notational extension of First-Order Logic

Grammar is extended with semantic representations

Proper names: (PN; tom) → Tom; (PN; mia) → Mia

Intrans. verbs: (IV; λx.snore(x)) → snores

Transitive verbs: (TV; λy.λx.like(x, y)) → likes

Phrasal rules:

Sentence: (S; (𝜓)(𝜑)) → (NP; 𝜑)(VP; 𝜓)

Noun Phrase: (NP; 𝜑) → (PN; 𝜑)

Intransitive VP: (VP; 𝜑) → (IV; 𝜑)

Transitive VP: (VP; (𝜑)(𝜓)) → (TV; 𝜑)(NP; 𝜓)
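The sketch below shows how such rules compose meanings. λ-terms are modeled as Python functions, and Python function application stands in for β-reduction. One assumption should be flagged: in S → NP VP, the sketch applies the VP meaning to the NP meaning. Entity-denoting proper names such as tom and mia require that order. A grammar with type-raised NPs would apply the NP to the VP instead.

```python
# Meanings modeled as Python values: proper names denote constants (strings);
# verbs denote curried functions that build first-order formulas.
LEXICON = {
    "Tom": ("PN", "tom"),
    "Mia": ("PN", "mia"),
    "snores": ("IV", lambda x: f"snore({x})"),
    "likes": ("TV", lambda y: lambda x: f"like({x}, {y})"),
}


def np_meaning(word):
    """(NP; φ) -> (PN; φ): an NP means whatever its proper name means."""
    category, meaning = LEXICON[word]
    if category != "PN":
        raise ValueError(f"{word!r} is not a proper name")
    return meaning


def vp_meaning(words):
    """(VP; φ) -> (IV; φ), or (VP; φ(ψ)) -> (TV; φ)(NP; ψ)."""
    category, meaning = LEXICON[words[0]]
    if category == "IV" and len(words) == 1:
        return meaning
    if category == "TV" and len(words) == 2:
        return meaning(np_meaning(words[1]))  # apply the verb to its object
    raise ValueError(f"cannot build a VP from {words!r}")


def sentence_meaning(sentence):
    """S -> NP VP: apply the VP meaning to the subject NP meaning."""
    subject, *rest = sentence.split()
    return vp_meaning(rest)(np_meaning(subject))


print(sentence_meaning("Tom snores"))     # snore(tom)
print(sentence_meaning("Tom likes Mia"))  # like(tom, mia)
```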

Compositionality

Event Structure

The problem of determining the number of arguments for a given verb is complicated by the addition of non-essential expressions

I ate.

I ate a sandwich.

I ate a sandwich in my car.

I ate in my car.

I ate a sandwich for lunch.

I ate a sandwich for lunch yesterday.

I ate a sandwich around noon.

Linguistic approach: [in my car], [for lunch], [yesterday], and [around noon] are not required arguments of the verb; rather, they are modifiers.

Event Structure

For that approach to work, we must assert that there are mutually exclusive sets of events and states.

State: A fact that is true of a single point in time

Larry died.

*Larry died for two hours.

Event: A state change

Activities: have no particular endpoint

• Larry ran in the park.

Accomplishments: have a natural endpoint

• Larry ran to the park.

Achievements: true of a single point in time but yield a result state

• Larry found his car.

• The tire popped.

Event Structure

Event/state distinctions remove the need to know the number of arguments of a verb

Instead, participants are categorized by thematic role

| Thematic Role | Definition | Example |
|---|---|---|
| AGENT | Volitional causer of an event | The waiter spilled the soup. |
| EXPERIENCER | Experiencer of an event | John has a headache. |
| FORCE | Non-volitional causer of an event | The wind blew debris into the yard. |
| THEME | Participant most directly affected by an event | The skaters broke the ice. |
| RESULT | The end product of an event | They built a golf course… |
| CONTENT | The proposition or content of a propositional event | He asked, “Have you graduated yet?” |
| INSTRUMENT | An instrument used in an event | He hit the nail with a hammer. |
| BENEFICIARY | The beneficiary of an event | She booked the flight for her boss. |
| SOURCE | Origin of an object of a transfer event | I just arrived from Paris. |
| GOAL | Destination of an object of a transfer event | I sailed to Cape Cod. |

Computational Lexical Semantics

Hypernyms/Hyponyms

primate → simian → ape → orangutan, gorilla (→ silverback), chimpanzee

primate → simian → monkey → baboon, macaque, vervet

primate → hominid → homo erectus, homo sapiens (→ Cro-magnon, homo sapiens sapiens)

Computational Lexical Semantics

If X is a hyponym of Y, then:

Example: daffodil is a hyponym of flower.

Every daffodil is a flower, but not every flower is a daffodil.

If X is a hypernym of Y, then:

Example: jet is a hypernym of Boeing 737.

Not every jet is a Boeing 737, but every Boeing 737 is a jet.

Entailment

X entails Y ⟷ whenever X is true, Y is also true.

Downward-entailing verbs

Hate, dislike, fear, …

Upward-entailing verbs

See, have, buy, …
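The hyponym test and the two entailment directions can be sketched over a small is-a table. The table is a hand-built assumption: a fragment of the primate example plus daffodil/flower. The verb lists are the slide's examples. A real system would query a lexical resource such as WordNet instead.

```python
# Hand-built hypernym (is-a) links: child -> parent (illustration only).
HYPERNYM = {
    "silverback": "gorilla", "gorilla": "ape", "orangutan": "ape", "chimpanzee": "ape",
    "ape": "simian", "baboon": "monkey", "monkey": "simian", "simian": "primate",
    "daffodil": "flower",
}

UPWARD = {"see", "have", "buy"}       # upward-entailing verbs from the slide
DOWNWARD = {"hate", "dislike", "fear"}  # downward-entailing verbs from the slide


def hypernym_chain(word):
    """All hypernyms of word, nearest first."""
    chain = []
    while word in HYPERNYM:
        word = HYPERNYM[word]
        chain.append(word)
    return chain


def is_hyponym(x, y):
    """True if every x is a y (x lies below y in the hierarchy)."""
    return y in hypernym_chain(x)


def verb_entails(verb, obj_x, obj_y):
    """Does 'I <verb> <obj_x>' entail 'I <verb> <obj_y>'? (monotonicity sketch)"""
    if obj_x == obj_y:
        return True
    if verb in UPWARD:
        return is_hyponym(obj_x, obj_y)  # have a daffodil => have a flower
    if verb in DOWNWARD:
        return is_hyponym(obj_y, obj_x)  # hate flowers => hate daffodils
    return False


print(hypernym_chain("silverback"))
print(verb_entails("have", "daffodil", "flower"), verb_entails("hate", "flower", "daffodil"))
```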

Computational Lexical Semantics

WordNet (English WordNet: Link)

Hierarchical lexical database of open-class synonyms, antonyms, hypernyms/hyponyms, and meronyms/holonyms

• 115,000+ entries

Each entry belongs to a synset, a set of sense-based synonyms

Example: “bank”

{08437235}<noun.group> depository financial institution, banking company (a financial institution that accepts deposits) “He cashed a check at the bank.”

{02790795}<noun.artifact> bank building (a building in which the business of banking transacted) “The bank is on the corner of Main and Elm.”

{02315835}<verb.possession> deposit (put into a bank account) “She banked the check.”

{00714537}<verb.cognition> count, bet, depend, swear, rely, reckon (have faith or confidence in) “He’s banking on that promotion.”

Computational Lexical Semantics

Word-sense similarity technology is applied to:

Intelligent web searches

Question answering

Plagiarism detection

Word-sense disambiguation (WSD)

Supervised

Input: hand-annotated corpora

• Time-intensive and unreliable

1. Start with sense-annotated training data

2. Extract features describing the contexts of the target word

3. Train a classifier with some machine-learning algorithm

4. Apply the classifier to unlabeled data
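The four supervised steps can be sketched end to end for the word "bank". The sense labels and the six training sentences are hypothetical. The features are a plain bag of context words. The classifier is a naive Bayes model with add-one smoothing, which is one common choice and not the only one.

```python
import math
from collections import Counter, defaultdict

# Step 1: hypothetical sense-annotated training data for "bank".
TRAINING = [
    ("he cashed a check at the bank", "bank.financial"),
    ("she opened an account at the bank", "bank.financial"),
    ("the bank raised its interest rate", "bank.financial"),
    ("we fished from the bank of the river", "bank.river"),
    ("the river overflowed its bank", "bank.river"),
    ("grass grew along the muddy bank", "bank.river"),
]


def features(sentence, target="bank"):
    """Step 2: bag-of-words context features (every word except the target)."""
    return [w for w in sentence.lower().split() if w != target]


def train(examples):
    """Step 3: collect naive Bayes counts (sense priors and per-sense word counts)."""
    sense_counts, word_counts, vocab = Counter(), defaultdict(Counter), set()
    for sentence, sense in examples:
        sense_counts[sense] += 1
        for w in features(sentence):
            word_counts[sense][w] += 1
            vocab.add(w)
    return sense_counts, word_counts, vocab


def classify(model, sentence):
    """Step 4: choose the sense with the highest log posterior (add-one smoothing)."""
    sense_counts, word_counts, vocab = model
    total = sum(sense_counts.values())
    best_sense, best_score = None, -math.inf
    for sense, count in sense_counts.items():
        score = math.log(count / total)
        denominator = sum(word_counts[sense].values()) + len(vocab)
        for w in features(sentence):
            score += math.log((word_counts[sense][w] + 1) / denominator)
        if score > best_score:
            best_sense, best_score = sense, score
    return best_sense


model = train(TRAINING)
print(classify(model, "I deposited a check at the bank"))
print(classify(model, "the boat drifted toward the river bank"))
```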

Computational Lexical Semantics

Precision and Recall

Machine-learning algorithms and training models are calibrated and scored with precision and recall metrics

Precision: How specifically relevant are my results?

• The number of correct answers retrieved relative to the total number of retrieved answers

Recall: How generally relevant are my results?

• The number of correct answers retrieved relative to the total number of correct answers (retrieved or not)

F-score

• The harmonic mean of precision and recall

$$F = \frac{2 \cdot \text{precision} \cdot \text{recall}}{\text{precision} + \text{recall}}$$
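The three scores can be computed directly from the set of retrieved answers and the set of correct answers. A minimal sketch with toy sets:

```python
def precision_recall_f1(retrieved, relevant):
    """Score a set of retrieved answers against the set of correct (relevant) ones."""
    retrieved, relevant = set(retrieved), set(relevant)
    true_positives = len(retrieved & relevant)  # correct answers that were retrieved
    precision = true_positives / len(retrieved) if retrieved else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    return precision, recall, f1


p, r, f = precision_recall_f1({"a", "b", "c", "d"}, {"a", "b", "e"})
print(p, r, f)  # precision 0.5, recall 2/3, F 4/7
```

Because the harmonic mean is dominated by the smaller value, a system cannot earn a high F-score by maximizing only one of the two metrics.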


NLP & Ontologies

WordNet is a primitive ontology

Hierarchical organization of concepts

Noun

• Act

• Animal

• Artifact

• …

Verb

• Motion

• Perception

• Stative

• …

Ontologies are a model-specific mechanism for knowledge representation

NLP & Ontologies

The input to NLP (for the sake of argument) can be any disparate data

The output of NLP is an index of extracted linguistic phenomena

Sentences, words, verb semantics, argument structure, etc.

When aligned to an ontology model, the output of NLP is easily integrated with information extraction efforts

Semantic concepts (entities, events) are mapped to classes

Arcs (relations, attributes) are mapped to properties
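The sketch below maps NLP output onto an ontology model. It reuses the dependency analysis of "The woman called a friend." Extracted concepts become typed instances, and extracted relations become properties between them. The class names, property names, and the _1 instance-naming scheme are hypothetical. rdf:type is the standard RDF predicate for asserting class membership.

```python
# Hypothetical mappings from NLP output to ontology terms (illustration only).
CLASS_MAP = {"woman": "Person", "friend": "Person", "called": "CommunicationEvent"}
PROPERTY_MAP = {"nsubj": "hasAgent", "dobj": "hasPatient"}


def to_triples(event, arguments):
    """Map an extracted event and its (relation, entity) arguments to triples."""
    event_id = f"{event}_1"
    triples = [(event_id, "rdf:type", CLASS_MAP[event])]  # concept -> class
    for relation, entity in arguments:
        entity_id = f"{entity}_1"
        triples.append((entity_id, "rdf:type", CLASS_MAP[entity]))
        triples.append((event_id, PROPERTY_MAP[relation], entity_id))  # relation -> property
    return triples


for triple in to_triples("called", [("nsubj", "woman"), ("dobj", "friend")]):
    print(triple)
```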

NLP & Ontologies

Domain specificity

In most industry applications, a whole-world representative model is neither required nor useful

Domain-specific ontologies exploit the set of target entities and properties

e.g., biomedical ontologies, military ground-force ontologies, etc.

End of Module 4

Questions?