What is an event?


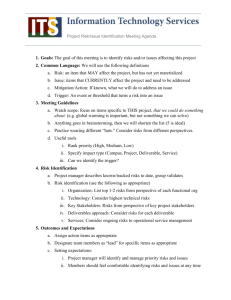

EVENT EXTRACTION
Heng Ji
jih@rpi.edu
October 4, 2015

Outline
• Event Definition
• Event Knowledge Networks Construction
• Basic Event Extraction Approach
• Advanced Event Extraction Approaches
  – Information Redundancy for Inference
  – Co-training
• Event Attribute Labeling
• Event Coreference Resolution
• Event Relation
• Challenges

From Entity-Centric to Event-Centric
• State-of-the-art Information Extraction and Knowledge Discovery techniques are entity-centric
• Event-centric Knowledge Discovery from unstructured data organizes knowledge in a network structure
• Construct high-quality event networks
  – Node: an event (a cluster of coreferential event mentions)
    – Event Mention Extraction based on Joint Modeling with Structured Prediction
    – Event Coreference Resolution based on semantic attributes
  – Edge: relation between events
    – Some are horizontal and some are vertical, forming an event cone

From Entity-Centric to Event-Centric
[Figure: example event network built from five documents (Doc1, 2010/03/03; Doc2, 2013/10/01; Doc3 and Doc4, 2013/10/02; Doc5, 2013/10/03). SIGN, MEET, CLOSE-ORG and LEAVE events are connected by CAUSAL, SUB-EVENT and COREFERENCE edges. Participants include Barack Obama (Title: President; Employer: US), John Boehner (Title: House Speaker; Member of: Republican; Residence: Ohio), the US Congress, the US government, NSF, DARPA, Lockheed Martin and 3000 workers.]

What is an event?
• An Event is a specific occurrence involving participants.
• An Event is something that happens.
• An Event can frequently be described as a change of state.
[Chart from (Dölling, 2011), annotated "Most of current NLP work focused on this"]

What is an event?
Annotation schemes shown on the chart:
• Topic Detection and Tracking (TDT)
• Automatic Content Extraction (ACE) & Entities, Relations and Events (ERE)
• Abstract Meaning Representation (AMR)
• Propbank, Nombank
• Timebank, Discourse Treebank

Characteristics shown on the chart:
• Temporal order of coarse-grained groups of events (“topics”)
• Defined 33 types of events, each event mention includes a trigger word and arguments with roles
• Rich semantic roles on concepts, no typing on predicates
• Each predicate is an event type, no arguments
• Some nominals and adjectives allowed

Chart adapted from (Strassel, 2012)

Event Extraction
• Task Definition
• Basic Event Extraction Approach
• Advanced Event Extraction Approaches
  – Information Redundancy for Inference
  – Co-training
• Event Attribute Labeling
• Event Coreference Resolution

Event Mention Extraction: Task
• An event is a specific occurrence that implies a change of state
• Event trigger: the main word which most clearly expresses an event occurrence
• Event arguments: the mentions that are involved in an event (participants)
• Event mention: a phrase or sentence within which an event is described, including trigger and arguments
• Automatic Content Extraction (ACE) defined 8 types of events, with 33 subtypes

| ACE event type/subtype | Event mention example |
|---|---|
| Life/Die | Kurt Schork died in Sierra Leone yesterday |
| Transaction/Transfer | GM sold the company in Nov 1998 to LLC |
| Movement/Transport | Homeless people have been moved to schools |
| Business/Start-Org | Schweitzer founded a hospital in 1913 |
| Conflict/Attack | the attack on Gaza killed 13 |
| Contact/Meet | Arafat’s cabinet met for 4 hours |
| Personnel/Start-Position | She later recruited the nursing student |
| Justice/Arrest | Faison was wrongly arrested on suspicion of murder |

In the first example, "died" is the trigger and "Kurt Schork" is an argument with role=victim.

Supervised Event Mention Extraction: Methods
• Staged classifiers
  – Trigger Classifier: to distinguish event instances from non-events, to classify event instances by type
  – Argument Classifier: to distinguish arguments from non-arguments
  – Role Classifier: to classify arguments by argument role
  – Reportable-Event Classifier: to determine whether there is a reportable event instance
• Can choose any supervised learning method, such as MaxEnt and SVMs
(Ji and Grishman, 2008)

Typical Event Mention Extraction Features

Trigger Labeling
• Lexical
  – Tokens and POS tags of candidate trigger and context words
• Dictionaries
  – Trigger list, synonym gazetteers
• Syntactic
  – the depth of the trigger in the parse tree
  – the path from the node of the trigger to the root in the parse tree
  – the phrase structure expanded by the parent node of the trigger
  – the phrase type of the trigger
• Entity
  – the entity type of the syntactically nearest entity to the trigger in the parse tree
  – the entity type of the physically nearest entity to the trigger in the sentence

Argument Labeling
• Event type and trigger
  – Trigger tokens
  – Event type and subtype
• Entity
  – Entity type and subtype
  – Head word of the entity mention
• Context
  – Context words of the argument candidate
• Syntactic
  – the phrase structure expanding the parent of the trigger
  – the relative position of the entity with respect to the trigger (before or after)
  – the minimal path from the entity to the trigger
  – the shortest length from the entity to the trigger in the parse tree
(Chen and Ji, 2009)

Event Trigger Identification Missing Errors
• OOV
  – this morning in Michigan, a second straight night and into this morning, hundreds of people have been rioting [attack] in Benton harbor.
• Scene understanding
  – This was the Italian ship that was taken -- that was captured [transfer-ownership] by Palestinian terrorists back in 1985 and some may remember the story of Leon clinghover, he was in a cheal chair and the terrorists shot him and pushed him over the side of the ship into the Mediterranean where he obviously, died.
  – The gloved one claims the label has been releasing new albums and Jackson five merchandise without giving [transfer-money] him "a single dollar."
  – Airlines are getting [transport] flyers to destinations ontime more often.
  – Three Young Boys, ages 2, 5 and 10 survived and are in critical [injure] condition after spending in 18 hours in the cold.

Event Trigger Identification Spurious Errors
• Lara hijacking in 1995 has been captured [arrest-jail] by U.S. forces in or near Baghdad.
• I want to take [transport] this opportunity to stand behind the Mimi and proclaim my solidarity.
• But when we moved [transport] in closer and started to scan the area, then I saw the 2-year-old waving from next to the airplane.
• He's left [transport] a lot on the table.
• Barbara, Bill Bennet's Glam gambling loss [die] changed my opinion.
• Stewart has found the road to fortune wherever she has traveled [transport].
• And it's hard to win back that sort of brand equity that she's lost [end-position].
• Police measure the spot where ten months old Miana Williams landed after she was thrown [transport] from a seventh floor window at this apartment building.
• Reason, not result:
  – The baby fell [die] 80 feet.
  – These tree branches cushioned her fall [die] and saved her life.

Event Trigger Identification Spurious Errors (Cont'd)
• He -- After landing and taking a brief tour of part of the ship, he also went [transport] out on to the flight deck and observed some of the flight operations of some of the f-18 aircraft that are now leaving these aircraft…
• still hurts [attack] me to read this.
  – Metaphor features: semantic categories (noun.animal, noun.cognition) or degree of abstractness
• Any farm kid knows that certain animals cannot see for a few days after they're born [born], including puppies.
• We happen to be at a very nice spot by the beach where this is a chance for people to get [transport] away from CNN coverage, everything, and kind of relax.
• He bought the machinery, moved [transport] to a new factory, rehired some of the old workers and started heritage programs.
• Under the reported plans, Blackstone Group would buy Vivendi's theme park division, including Universal Studios Hollywood, Universal Orlando in Florida and Universal's ownership interests in parks in Spain and Japan, a source close to the negotiations [meet] told the paper.

Event Trigger Classification Errors
• a man in New York is facing attempted murder charges after allegedly throwing [transport → attack] his baby seven stories to the ground below.
• He's also being fined [transfer-money → fine] $3 million and was ordered to pay $1.2 million in restitution to the New York State Tax Commission.
• ``They admitted to having nuclear capability and weapons at this moment,'' said Rep. Curt Weldon, who headed a delegation of U.S. lawmakers that visited [meet → transport] Pyongyang for three days ending Sunday.
• Blasphemy is punishable by death [die → execute] under the Pakistan Penal Code.
• We discussed [phone-write → meet] the Middle East peace process.

Event Argument Identification Missing Errors
• Scene understanding:
  – This was the Italian ship that was taken -- that was captured by Palestinian terrorists back in 1985 [die_time] and some may remember the story of Leon clinghover, he was in a cheal chair and the terrorists shot him and pushed him over the side of the ship into the Mediterranean [die_place] where he [die_victim] obviously, died.
  – She [die_victim] was going to fight to the death.
  – Then police say the baby's mother pulled out a kitchen knife [die_instrument] opinion on the 911 tape you can hear Williams tape say "go ahead kill me."
• Coreference:
  – another story out of Belgrade [attack_place], violence at the highest form.
• Unusual syntactic structure:
  – Two f-14 Tomcats struck the targets, the same area was a site [attack_place] of heavy bombing yesterday.
  – The EU foreign ministers met hours after U.S. President George W. Bush gave Saddam [attack_target] 48 hours to leave Iraq or face invasion.

Event Argument Identification Missing Errors (Cont'd)
• OOV:
  – We've seen in the past in Bosnia for example, you held elections and all of the old ethnic thugs [election_person] get into power because they have organization and they have money and they stop the process of genuine building of democracy.
  – There are charges, U.S. charges which have expired but could, I am told, possibly be re -- restarted for piracy, hostage taking and conspiracy [charge_crime].
  – Stewart and former imclone CEO Sam Waksal shared a broker who now also faces charges of obstruction of justice and perjury [charge_crime].
  – I was born four years too late to have slept with JFK [born_time]?
  – He called the case and I'm quoting now, a judge's worst nightmare, but he noted that Maryland Parole Boards and the facility where Michael Serious was held, the institution made what the judge called the final decision on whether to release serious [release-parole_person].
  – Last week Williamson, a mother of four, was found stabbed to death at a condominium [die_place] in Greenbelt, Maryland.
  – A source tell US Enron is considering suing its own investment bankers for giving

Event Argument Identification Spurious Errors
• He's been in Libya and he's been living under the protection of Saddam Hussein in Baghdad [die_place], but he is wanted for murder in Italy.
• The EU foreign ministers met hours after U.S. President George W. Bush [meet_entity] gave Saddam 48 hours to leave Iraq or face invasion.

Event Argument Role Classification Errors
• His private plane [vehicle → artifact] arrived at Heathrow Airport.
• a cruise ship [instrument → target] is being searched off the Hawaii coast after two notes threatening terrorist attack were found on board.

Why Trigger Labeling is so Hard?

| POS tag | Trigger | Context |
|---|---|---|
| DT | this | “this is the largest pro-troops demonstration that has ever been in San Francisco” |
| RP | forward | “We've had an absolutely terrific story, pushing forward north toward Baghdad” |
| WP | what | “what happened in” |
| RB | back | “his men back to their compound” |
| IN | over | “his tenure at the United Nations is over” |
| IN | out | “the state department is ordering all non-essential diplomats” |
| CD | nine eleven | “nine eleven” |
| RB | formerly | “McCarthy was formerly a top civil servant at” |

Why Trigger Labeling is so Hard?
• A suicide bomber detonated explosives at the entrance to a crowded
• medical teams carting away dozens of wounded victims
• dozens of Israeli tanks advanced into the northern Gaza Strip
• Many nouns such as “death”, “deaths”, “blast”, “injuries” are missing

Why Argument Labeling is so Hard?
• Two 13-year-old children were among those killed in the Haifa bus bombing, Israeli public radio said, adding that most of the victims were youngsters
• Israeli forces staged a bloody raid into a refugee camp in central Gaza targeting a founding member of Hamas
• Israel's night-time raid in Gaza involving around 40 tanks and armoured vehicles
• Eight people, including a pregnant woman and a 13-year-old child were killed in Monday's Gaza raid
• At least 19 people were killed and 114 people were wounded in Tuesday's southern Philippines airport
• The waiting shed literally exploded
  – Wikipedia: “A shed is typically a simple, single-storey structure in a back garden or on an allotment that is used for storage, hobbies, or as a workshop."

Why Argument Labeling is so Hard?
• Two 13-year-old children were among those killed in the Haifa bus bombing, Israeli public radio said, adding that most of the victims were youngsters
• Fifteen people were killed and more than 30 wounded Wednesday as a suicide bomber blew himself up on a student bus in the northern town of Haifa
• Two 13-year-old children were among those killed in the Haifa bus bombing

State-of-the-art and Remaining Challenges

State-of-the-art Performance (F-score)
• English: Trigger 70%, Argument 45%
• Chinese: Trigger 68%, Argument 52%
• Single human annotator: Trigger 72%, Argument 62%

Remaining Challenges
• Trigger Identification
  – Generic verbs
  – Support verbs such as “take” and “get”, which can only represent an event mention together with other verbs or nouns
  – Noun- and adjective-based triggers
• Trigger Classification
  – Does “named” represent a “Personnel_Nominate” or a “Personnel_Start-Position”?
  – Does “hacked to death” represent a “Life_Die” or a “Conflict_Attack”?
• Argument Identification
  – Capture long contexts
• Argument Classification
  – Capture long contexts
  – Temporal roles
(Ji, 2009; Li et al., 2011)

IE in Rich Contexts
[Figure: IE surrounded by its contexts: Texts, Authors, Venues, Time/Location/Cost Constraints, Information Networks, Human Collaborative Learning]

Capture Information Redundancy
• When the data grows beyond a certain size, the IE task is naturally embedded in rich contexts; the extracted facts become inter-dependent
• Leverage Information Redundancy from:
  – Large Scale Data (Chen and Ji, 2011)
  – Background Knowledge (Chan and Roth, 2010; Rahman and Ng, 2011)
  – Inter-connected facts (Li and Ji, 2011; Li et al., 2011; e.g. Roth and Yih, 2004; Gupta and Ji, 2009; Liao and Grishman, 2010; Hong et al., 2011)
  – Diverse Documents (Downey et al., 2005; Yangarber, 2006; Patwardhan and Riloff, 2009; Mann, 2007; Ji and Grishman, 2008)
  – Diverse Systems (Tamang and Ji, 2011)
  – Diverse Languages (Snover et al., 2011)
  – Diverse Data Modalities (text, image, speech, video…)
• But how?
Such knowledge might be overwhelming…

Cross-Sent/Cross-Doc Event Inference Architecture
[Figure: the test doc passes through the Within-Sent Event Tagger and Cross-Sent Inference, giving Candidate Events & Confidence. The UMASS INDRI IR engine retrieves a Cluster of Related Docs, which passes through the same Within-Sent Event Tagger and Cross-Sent Inference, giving Related Events & Confidence. Cross-Doc Inference combines the two into Refined Events.]

Baseline Within-Sentence Event Extraction
1. Pattern matching
  • Build a pattern from each ACE training example of an event
    – "British and US forces reported gains in the advance on Baghdad" gives the pattern: PER report gain in advance on LOC
2. MaxEnt models
  ① Trigger Classifier
    • to distinguish event instances from non-events, to classify event instances by type
  ② Argument Classifier
    • to distinguish arguments from non-arguments
  ③ Role Classifier
    • to classify arguments by argument role
  ④ Reportable-Event Classifier
    • to determine whether there is a reportable event instance

Global Confidence Estimation
• Within-Sentence IE system produces local confidence
• IR engine returns a cluster of related docs for each test doc
• Document-wide and Cluster-wide Confidence
  – Frequency weighted by local confidence
  – XDoc-Trigger-Freq(trigger, etype): the weighted frequency of string trigger appearing as the trigger of an event of type etype across all related documents
  – XDoc-Arg-Freq(arg, etype): the weighted frequency of arg appearing as an argument of an event of type etype across all related documents
  – XDoc-Role-Freq(arg, etype, role): the weighted frequency of arg appearing as an argument of an event of type etype with role role across all related documents
  – Margin between the most frequent value and the second most frequent value, applied to resolve classification ambiguities
  – ……

Cross-Sent/Cross-Doc Event Inference Procedure
• Remove triggers and argument annotations with local or cross-doc confidence lower than thresholds
  – Local-Remove: Remove annotations with low local confidence
  – XDoc-Remove: Remove annotations with low cross-doc confidence
• Adjust trigger and argument identification and classification to achieve document-wide and cluster-wide consistency
  – XSent-Iden/XDoc-Iden: If the highest frequency is larger than a threshold, propagate the most frequent type to all unlabeled candidates with the same strings
  – XSent-Class/XDoc-Class: If the margin value is higher than a threshold, propagate the most frequent type and role to replace low-confidence annotations

Experiments: Data and Setting
• Within-Sentence baseline IE trained on 500 English ACE05 texts (from March to May of 2003)
• Use 10 ACE05 newswire texts as the development set to optimize the global confidence thresholds, then apply them in the blind test
• Blind test on 40 ACE05 texts; for each test text, 25 related texts were retrieved from the TDT5 corpus (278,108 texts, from April to Sept. of 2003)

Selecting Trigger Confidence Thresholds to optimize Event Identification F-measure on Dev Set
[Figure: F-measure against confidence threshold; labels on the plot: 73.8%, 69.8%, 69.8%, Best, F=64.5%]

Selecting Argument Confidence Thresholds to optimize Argument Labeling F-measure on Dev Set
[Figure: F-measure against confidence threshold; labels on the plot: 51.2%, 48.0%, 48.2%, 48.3%, F=42.3%, 43.7%]

Experiments: Trigger Labeling Performance

| System/Human | Precision | Recall | F-Measure |
|---|---|---|---|
| Within-Sent IE (Baseline) | 67.6 | 53.5 | 59.7 |
| After Cross-Sent Inference | 64.3 | 59.4 | 61.8 |
| After Cross-Doc Inference | 60.2 | 76.4 | 67.3 |
| Human Annotator 1 | 59.2 | 59.4 | 59.3 |
| Human Annotator 2 | 69.2 | 75.0 | 72.0 |
| Inter-Adjudicator Agreement | 83.2 | 74.8 | 78.8 |

Experiments: Argument Labeling Performance

| System/Human | Identification P | Identification R | Identification F | Classification Accuracy | Identification + Classification P | Identification + Classification R | Identification + Classification F |
|---|---|---|---|---|---|---|---|
| Within-Sent IE | 47.8 | 38.3 | 42.5 | 86.0 | 41.2 | 32.9 | 36.3 |
| After Cross-Sent Inference | 54.6 | 38.5 | 45.1 | 90.2 | 49.2 | 34.7 | 40.7 |
| After Cross-Doc Inference | 55.7 | 39.5 | 46.2 | 92.1 | 51.3 | 36.4 | 42.6 |
| Human Annotator 1 | 60.0 | 69.4 | 64.4 | 85.8 | 51.6 | 59.5 | 55.3 |
| Human Annotator 2 | 62.7 | 85.4 | 72.3 | 86.3 | 54.1 | 73.7 | 62.4 |
| Inter-Adjudicator Agreement | 72.2 | 71.4 | 71.8 | 91.8 | 66.3 | 65.6 | 65.9 |

Global Knowledge based Inference for Event Extraction
• Cross-document inference (Ji and Grishman, 2008)
• Cross-event inference (Liao and Grishman, 2010)
• Cross-entity inference (Hong et al., 2011)
• All-together (Li et al., 2011)

Leveraging Redundancy with Topic Modeling
• Within a cluster of topically-related documents, the distribution is much more convergent; closer to its distribution in the collection of topically related documents than the uniform training corpora
  – e.g. In the overall information networks only 7% of “fire” indicate “End-Position” events, while all of “fire” in a topic cluster are “End-Position” events
  – e.g. “Putin” appeared in different roles, including “meeting/entity”, “movement/person”, “transaction/recipient” and “election/person”, but only played as an “election/person” in one topic cluster
• Topic Modeling can enhance information network construction by grouping similar objects, event types and roles together

Bootstrapping Event Extraction
• Both systems rely on expensive human-labeled data and thus suffer from data scarcity (much more expensive than other NLP tasks due to the extra tagging tasks of entities and temporal expressions)
Questions:
• Can the monolingual system benefit from bootstrapping techniques with a relatively small set of training data?
• Can a monolingual system (in our case, the Chinese event extraction system) benefit from the other resource-rich monolingual system (the English system)?

Cross-lingual Co-Training
Intuition: The same event has different “views” described in different languages, because the lexical unit, the grammar and sentence construction differ from one language to the other.
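
As a rough illustration of the two-view intuition, the sketch below runs a toy co-training loop over aligned trigger pairs. It is not the (Chen and Ji, 2009) system: the function names (`train`, `predict`, `co_train`), the count-based "classifier", the 0.9 threshold, the example words and the assumption that each bitext is already reduced to one aligned trigger pair are all hypothetical simplifications chosen to keep the example self-contained.

```python
from collections import Counter, defaultdict


def train(samples):
    """Count how often each trigger word carries each event type."""
    model = defaultdict(Counter)
    for word, event_type in samples:
        model[word][event_type] += 1
    return model


def predict(model, word):
    """Return (event_type, confidence); (None, 0.0) for unseen words."""
    counts = model.get(word)
    if not counts:
        return None, 0.0
    event_type, hits = counts.most_common(1)[0]
    return event_type, hits / sum(counts.values())


def co_train(labeled_a, labeled_b, bitexts, rounds=2, threshold=0.9):
    """Each view labels the aligned pairs it is confident about and
    projects that label into the other view's training set."""
    labeled_a, labeled_b = list(labeled_a), list(labeled_b)
    pool = list(bitexts)
    for _ in range(rounds):
        model_a, model_b = train(labeled_a), train(labeled_b)
        remaining = []
        for word_a, word_b in pool:
            type_a, conf_a = predict(model_a, word_a)
            type_b, conf_b = predict(model_b, word_b)
            if conf_a >= threshold and conf_a >= conf_b:
                labeled_b.append((word_b, type_a))  # project A -> B
            elif conf_b >= threshold:
                labeled_a.append((word_a, type_b))  # project B -> A
            else:
                remaining.append((word_a, word_b))  # neither view is sure
        pool = remaining
    return train(labeled_a), train(labeled_b)


# Hypothetical seed data; the pinyin strings stand in for Chinese triggers.
english = [("attack", "Conflict/Attack"), ("died", "Life/Die")]
chinese = [("xiji", "Conflict/Attack")]
bitexts = [("died", "siwang"), ("raid", "xiji"), ("strike", "kongxi")]

model_en, model_zh = co_train(english, chinese, bitexts)
print(predict(model_en, "raid"))    # label learned from the Chinese view
print(predict(model_zh, "siwang"))  # label learned from the English view
print(predict(model_en, "strike"))  # still unknown to both views
```

In this toy run, "raid" is labeled only because the Chinese view already knew its aligned word, and "siwang" only because the English view knew "died"; the pair that neither view recognizes stays unlabeled.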
• This satisfies the sufficiency assumption

Cross-lingual Co-Training for Event Extraction (Chen and Ji, 2009)
[Figure: labeled samples in Language A and Language B train the event extraction systems for A and B. Unlabeled bitexts are selected at random into a bilingual pool of constant size. Each system labels the pool; its high-confidence samples go through cross-lingual projection and become projected samples for the other language.]
• Bootstrapping: n=1: trust yourself and teach yourself
• Co-training: n=2 (Blum and Mitchell, 1998)
  – the two views are individually sufficient for classification
  – the two views are conditionally independent given the class

Cross-lingual Projection
• A key operation in the cross-lingual co-training algorithm
• In our case, project the triggers and the arguments from one language into the other language according to the alignment information provided by bitexts.

Experiments (Chen and Ji, 2009)
Data
• ACE 2005 corpus
  – 560 English documents
  – 633 Chinese documents
• LDC Chinese Treebank English Parallel corpus
  – 159 bitexts with manual alignment

Experiment results
[Figure: Self-training and Co-training (English-labeled & Combined-labeled) for Trigger Labeling]
[Figure: Self-training and Co-training (English-labeled & Combined-labeled) for Argument Labeling]

Analysis
• Self-training: a small gain of 0.4% above the baseline for trigger labeling and a loss of 0.1% below the baseline for argument labeling. The deterioration tendency of the self-training curve indicates that entity extraction errors do have counteractive impacts on argument labeling.
• Trust-English method: a gain of 1.7% for trigger labeling and 0.7% for argument labeling.
• Combination method: a gain of 3.1% for trigger labeling and 2.1% for argument labeling. The third method outperforms the second method.

Event Coreference Resolution: Task
1. An explosion in a cafe at one of the capital's busiest intersections killed one woman and injured another Tuesday
2. Police were investigating the cause of the explosion in the restroom of the multistory Crocodile Cafe in the commercial district of Kizilay during the morning rush hour
3. The blast shattered walls and windows in the building
4. Ankara police chief Ercument Yilmaz visited the site of the morning blast
5. The explosion comes a month after
6. a bomb exploded at a McDonald's restaurant in Istanbul, causing damage but no injuries
7. Radical leftist, Kurdish and Islamic groups are active in the country and have carried out the bombing in the past

Typical Event Mention Pair Classification Features

| Category | Feature | Description |
|---|---|---|
| Event type | type_subtype | pair of event type and subtype |
| Trigger | trigger_pair | trigger pairs |
| Trigger | pos_pair | part-of-speech pair of triggers |
| Trigger | nominal | if the trigger of EM2 is nominal |
| Trigger | exact_match | if the triggers exactly match |
| Trigger | stem_match | if the stems of triggers match |
| Trigger | trigger_sim | trigger similarity based on WordNet |
| Distance | token_dist | the number of tokens between triggers |
| Distance | sentence_dist | the number of sentences between event mentions |
| Distance | event_dist | the number of event mentions between EM1 and EM2 |
| Argument | overlap_arg | the number of arguments with entity and role match |
| Argument | unique_arg | the number of arguments only in one event mention |
| Argument | diffrole_arg | the number of coreferential arguments but role mismatch |

Incorporating Event Attribute as Features

| Event Attribute | Event Mention | Attribute Value |
|---|---|---|
| Modality | Toyota Motor Corp. said Tuesday it will promote Akio Toyoda, a grandson of the company's founder who is widely viewed as a candidate to some day head Japan's largest automaker. | Other |
| Modality | Managing director Toyoda, 46, grandson of Kiichiro Toyoda and the eldest son of Toyota honorary chairman Shoichiro Toyoda, became one of 14 senior managing directors under a streamlined management system set to be… | Asserted |
| Polarity | At least 19 people were killed in the first blast | Positive |
| Polarity | There were no reports of deaths in the blast | Negative |
| Genericity | An explosion in a cafe at one of the capital's busiest intersections killed one woman and injured another Tuesday | Specific |
| Genericity | Roh has said any pre-emptive strike against the North's nuclear facilities could prove disastrous | Generic |
| Tense | Israel holds the Palestinian leader responsible for the latest violence, even though the recent attacks were carried out by Islamic militants | Past |
| Tense | We are warning Israel not to exploit this war against Iraq to carry out more attacks against the Palestinian people in the Gaza Strip and destroy the Palestinian Authority and the peace process. | Future |

• Attribute values as features: whether the attributes of an event mention and its candidate antecedent event conflict or not; 6% absolute gain (Chen et al., 2009)

Clustering Method 1: Agglomerative Clustering
Basic idea:
• Start with singleton event mentions, sorted by their order of occurrence in the document
• Traverse the event mentions from left to right; iteratively merge the active event mention into a prior event (the one with the largest probability, if it is higher than some threshold) or start the event mention as a new event

Clustering Method 2: Spectral Graph Clustering

| Trigger | Arguments |
|---|---|
| explosion | Role = Place: a cafe; Role = Time: Tuesday |
| explosion | Role = Place: restroom; Role = Time: morning rush hour |
| explosion | Role = Place: building |
| blast | Role = Place: site; Role = Time: morning |
| explosion | Role = Time: a month after |
| exploded | Role = Place: restaurant |
| bombing | Role = Attacker: groups |

(Chen and Ji, 2009)

Spectral Graph Clustering
[Figure: graph with weighted edges partitioned into groups A and B; the edges crossing the partition have weights 0.1, 0.2, 0.2 and 0.3]
cut(A,B) = 0.1 + 0.2 + 0.2 + 0.3 = 0.8

Spectral Graph Clustering (Cont'd)
• Start with a fully connected graph; each edge is weighted by the coreference value
• Optimize the normalized-cut criterion (Shi and Malik, 2000)

$$\min \mathrm{NCut}(A,B) = \frac{\mathrm{cut}(A,B)}{\mathrm{vol}(A)} + \frac{\mathrm{cut}(A,B)}{\mathrm{vol}(B)}$$

• vol(A): the total weight of the edges from group A
• Maximize weight of within-group coreference links
• Minimize weight of between-group coreference links

State-of-the-art Performance
• MUC metric does not prefer clustering results with many singleton event mentions (Chen and Ji, 2009)

Remaining Challenges
• The performance bottleneck of event coreference resolution comes from the poor performance of event mention labeling

Beyond ACE Event Coreference
Annotate events beyond the ACE coreference definition
• ACE does not identify events as coreferent when one mention refers only to a part of the other
• In ACE, the plural event mention is not coreferent with mentions of the component individual events.
• ACE does not annotate:
  – “Three people have been convicted…Smith and Jones were found guilty of selling guns…”
  – “The gunman shot Smith and his son. ..The attack against Smith.”

CMU Event Coref Corpus
• Annotate related events at the document level, including subevents.
Examples:
• “drug war” (contains subevents: attacks, crackdowns, bullying…)
• “attacks” (contains subevents: deaths, kidnappings, assassination, bombed…)

Applications
• Complex Question Answering
  – Event questions: Describe the drug war events in Latin America.
  – List questions: List the events related to attacks in the drug war.
  – Relationship questions: Who is attacking who?

Drug War events
We don't know who is winning the drug war in Latin America, but we know who's losing it -- the press. Over the past six months, six journalists have been killed and 10 kidnapped by drug traffickers or leftist guerrillas -- who often are one and the same -- in Colombia.
Over the past 12 years, at least 40 journalists have died there. The attacks have intensified since the Colombian government began cracking down on the traffickers in August, trying to prevent their takeover of the country.

• drug war (contains subevents: attacks, crackdowns, bullying …)
  – lexical anchor: drug war
• crackdown
  – lexical anchor: cracking down
  – arguments: Colombian government, traffickers, August
• attacks (contains subevents: deaths, kidnappings, assassination, bombed..)
• attacks (set of attacks)
  – lexical anchor: attacks
  – arguments: (inferred) traffickers, journalists

Events to annotate
• Events that happened
  – “Britain bombed Iraq last night.”
• Events which did not happen
  – “Hall did not speak about the bombings.”
• Planned events: planned, expected to happen, agree to do…
  – “Hall planned to meet with Saddam.”

Other cases
• Event that is pre-supposed to have happened
  – Stealing event: “It may well be that theft will become a bigger problem.”
• Habitual in present tense
  – “It opens at 8am.”

Annotating related entities
• In addition to event coreference, we also annotate entity relations between events.
  – e.g. Agents of bombing events may be related via an “ally” relation.
  – e.g. “the four countries cited, Colombia, Cuba, Panama and Nicaragua, are not only where the press is under greatest attack …” Four locations of attack are annotated and the political relation (CCPN) is linked.

Other Features
Arguments of events
• Annotated events may have arguments.
• Arguments (agent, patient, location, etc.) are also annotated.
Each instance of the same event type is assigned a unique id, e.g. attacking-1, attacking-2

Annotating multiple intersecting meaning layers
Three types of annotations have been added with the GATE tool
• What events are related? Which events are subevents of what events? (Event Coreference)
• What type of relationships between entities? (Entity Relations)
• How certain are these events to have occurred? (Committed Belief)

Limitations of Previous Event Representations
• Coarse-grained predicate-argument representations defined in Propbank (Palmer et al., 2005, Xue and Palmer, 2009) and FrameNet (Baker et al., 1998)
  – No attempt to cluster the predicates
  – Assign coarse-grained roles to arguments
• Fine-grained event trigger and argument representations defined in ACE and ERE
  – Only covers 33 subtypes; e.g., some typical types of emergent events such as “donation” and “evacuation” in response to a natural disaster are missing
• Both representations are missing:
  – Event hierarchies, relations among events
  – Measurement to quantify uncertainty, implicit argument roles
  – The narrator’s role, profile and behavior intent and temporal effects
  – Hard to construct a timeline of events across documents
  – Efforts are being made by the DEFT event working group (NAACL13/ACL14 Event Workshops and this DEFT PI meeting Event Workshop)

Specific Characteristics of Emergent Events
[Figure: timeline running from Planning and Organizing (sensing the environment to identify precursor events) through the Event and the Behavioral Response (taking non-routine actions) to Resolution; phases labeled Organizing Activities, Taking Actions and Event Conclusion]

Process of Behavioral Responses to Emerging Events
• Obtain, Understand, Trust, Personalize actionable information
• Diffuse contradictory information, discredit trusted sources
• Seek/Obtain confirmation
• Discredit trusted sources, block information flow
• Take action
• Disturb actions
(Tyshchuk et al., 2014)

A New Representation for Events
[Figure, with one region annotated "Current NLP’s Focus"]

An Example: Input
[Oct.25 News] WNYC: The Office of Emergency Management situation room is now open, and Mayor Michael Bloomberg said they are monitoring the progress of the storm. "We saw that hurricanes like Irene can really do damage and we have to take them seriously, but we don't expect based on current forecasts to have anything like that," Bloomberg said. "But we're going to make sure we're prepared." He advised residents in flood-prone areas be prepared to evacuate.
[Oct.29 Tweets] OMG!!! NYC IS GETTING THE EYE OF HURRICANE SANDY NEXT WEEK....SCARY!!! I HAVE TO EVACUATE MY NEIGHBORHOOD!
An Example: Output
[Figure: output for the example]

4-tuple Temporal Representation (Ji et al., 2011)
• Challenges:
  – Capture incomplete information
  – Accommodate uncertainty
  – Accommodate different granularities
• Solution:
  – Express constraints on the start and end times of a slot value
  – 4-tuple <t1, t2, t3, t4>: t1 < tstart < t2 and t3 < tend < t4

| Document text (2001-01-01) | T1 | T2 | T3 | T4 |
|---|---|---|---|---|
| Chairman Smith | -infinite | 20010101 | 20010101 | +infinite |
| Smith, who has been chairman for two years | -infinite | 19990101 | 20010101 | +infinite |
| Smith, who was named chairman two years ago | 19990101 | 19990101 | 19990101 | +infinite |
| Smith, who resigned last October | -infinite | 20001001 | 20001001 | 20001031 |
| Smith served as chairman for 7 years before leaving in 1991 | 19840101 | 19841231 | 19910101 | 19911231 |
| Smith was named chairman in 1980 | 19800101 | 19801231 | 19800101 | +infinite |

Open Domain Event Type Discovery
• A number of recent event extraction programs such as ACE identify several common types of events
  – Defining and identifying those types relies heavily on expert knowledge
  – Reaching an agreement among the experts or annotators may require extensive human labor
• Address the issue of portability
  – How can we automatically detect novel event types?
• General Approach
  – Follow social science theories (Tyshchuk et al., 2013) to discover salient emergent event types
  – Discover candidate event types based on event trigger clustering via paragraph discovery methods (Li et al., 2010)
  – Rank these clusters based on their salience and novelty in the target unlabeled corpus

Event Mention Extraction
• Traditional Pipelined Approach:
[Figure: pipeline with a falling PERFORMANCE CEILING, in slide order: Texts (100%); Name/Nominal/Time Mention Extraction (90%); Relation Extraction, Coreference Resolution (70%); Event Extraction (60%); Cross-doc Entity Clustering/Linking (50%); Slot Filling, KB (40%)]
• Errors are compounded from stage to stage
• No interaction between individual predictions
• Incapable of dealing with global dependencies

General Solutions
• Joint Inference (Model 1, Model 2, …, Model n → Inference → Prediction)
  – Constrained Conditional Models, ILP [Roth2004, Punyakanok2005, Roth2007, Chang2012, Yang2013]
  – Re-ranking [Ji2005, McClosky2011]
  – Dual decomposition [Rush2010]
• Joint Modeling (Task 1, Task 2, …, Task n → Prediction)
  – Probabilistic Graphical Models [Sutton2004, Wick2012, Singh2013]
  – Markov logic networks [Poon2007, Poon2010, Kiddon2012]

Joint Modeling with Structured Prediction
• One single unified model to extract all (Li et al., 2013; Li and Ji, 2014)
• Avoid error compounding
• Local predictions can be mutually improved
• Arbitrary global features can be automatically learned selectively from the entire structure at low cost
[Figure: the sentence “In Baghdad, a cameraman died when an American tank fired on the Palestine Hotel.” annotated with entity types (LOC, PER, VEH, FAC), event triggers (Die, Attack), and argument roles (place, victim, instrument, target)]

Event Mention Extraction Results
[Figure: bar chart of Trigger and Argument scores (axis 30 to 75) for five systems: Cross-entity in Hong et al. 2011; Sentence-level in Hong et al. 2011; Pipelined MaxEnt classifiers; Joint w/ local features; Joint w/ local + global features. Bar values as extracted: 68.3, 65.9, 65.7, 67.5, 59.7, 52.7, 48.3, 46.5, 43.9, 36.5]

Our Proposed Event Relation Taxonomy
• Focus: subevent, confirmation, causality and temporality

| Main Type | Subtype | Role |
|---|---|---|
| Inheritance | Reemergence | Reference/Target |
| Inheritance | Subevent | Ancestor/Descendant |
| Inheritance | Variation | Semina/Variance |
| Expansion | Conjunction | Generation/Neighborship |
| Expansion | Disjunction | Generation/Neighborship |
| Expansion | Confirmation | Generation/Instantiation |
| Comparison | Competition | Superior/Inferior/parallelism |
| Comparison | Negation | Initiator/Negator |
| Comparison | Opposite | N/A |
| Comparison | Concession | Prerequisite/Opposite to Expectation |
| Contingency | Causality | Cause/Result |
| Contingency | Conditionality | Condition/Emergence |
| Temporality | Asynchronism | Priority/Posteriority |
| Temporality | Synchronism | N/A |

Event Relation: Inheritance
Definition: if X clones or succeeds Y, or serves as a revolutionary change of Y
• Subtypes (roles): Reemergence (Reference/Target), Subevent (Ancestor/Descendant), Variation (Semina/Variance)
[Figure: example graph with the events “Eruption of Mount Vesuvius in 1944.”, “Eruption of Mount Vesuvius in 1906.”, “Mount Vesuvius keeps silent recently.” and “The eruption covered most of north area with ash.”; edge labels Inheritance.Reemergence, Inheritance.Variation, Inheritance.Subevent; role labels Role=Reference, Role=Descendant, Role=Variance]

Event Relation: Expansion
Definition: if X and Y jointly constitute a larger event or share the same subevent, or X serves as the fine-grain instance or evidence of Y
• Subtypes (roles): Conjunction (Generation/Neighborship), Disjunction (Generation/Neighborship), Confirmation (Generation/Instantiation)
• Expansion.Conjunction: X and Y jointly constitute a main event
• Expansion.Disjunction: X and Y share the same subevent
• Expansion.Confirmation: X serves as the fine-grain instance or evidence of Y
[Figure: example graph with the events “Boston Marathon Bombing.”, “First Explosion.”, “Second Explosion.”, “Dzhokhar was suspected”, “Appear on the scene.”, “Made bombs at home.”, “Arrest the suspect”, “Try the suspect”]
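The dotted relation labels used on these slides (for example Expansion.Confirmation) suggest a simple lookup from a label to its role names. The sketch below is one hypothetical encoding, not the authors' implementation. It assumes that subtypes and role sets pair up in the order they appear in the flattened taxonomy table. The names `TAXONOMY` and `roles_for` are illustrative only.

```python
# Hypothetical encoding of the event relation taxonomy.
# Assumption: subtypes and role sets are paired in the order they appear
# in the slide's table; "N/A" roles are stored as an empty tuple.
TAXONOMY = {
    "Inheritance": {
        "Reemergence": ("Reference", "Target"),
        "Subevent": ("Ancestor", "Descendant"),
        "Variation": ("Semina", "Variance"),
    },
    "Expansion": {
        "Conjunction": ("Generation", "Neighborship"),
        "Disjunction": ("Generation", "Neighborship"),
        "Confirmation": ("Generation", "Instantiation"),
    },
    "Comparison": {
        "Competition": ("Superior", "Inferior", "parallelism"),
        "Negation": ("Initiator", "Negator"),
        "Opposite": (),
        "Concession": ("Prerequisite", "Opposite to Expectation"),
    },
    "Contingency": {
        "Causality": ("Cause", "Result"),
        "Conditionality": ("Condition", "Emergence"),
    },
    "Temporality": {
        "Asynchronism": ("Priority", "Posteriority"),
        "Synchronism": (),
    },
}


def roles_for(label):
    """Return the role names for a dotted label such as 'Contingency.Causality'."""
    main, sep, sub = label.partition(".")
    if not sep:
        raise ValueError(f"expected 'MainType.Subtype', got {label!r}")
    try:
        # Tolerate stray spaces, as in the slide text "Inheritance. Reemergence".
        return TAXONOMY[main.strip()][sub.strip()]
    except KeyError:
        raise ValueError(f"unknown relation label: {label!r}") from None
```

Calling `roles_for("Contingency.Causality")` returns the Cause/Result pair, while a label that mixes a main type with a subtype from another branch raises `ValueError`, which is a cheap sanity check when relation labels are annotated by hand.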
Event Relation: Contingency
Definition: if X creates a condition for the emergence of Y, or results in Y
• Subtypes (roles): Causality (Cause/Result), Conditionality (Condition/Emergence)
• Contingency.Conditionality: X creates a condition for the emergence of Y
  – Role=Condition: The special hoisting equipment had been properly installed in the massive pick-up boat.
  – Role=Emergence: The operation to raise the Kursk submarine was scheduled to begin in about two weeks.
• Contingency.Causality: X results in the emergence of Y
  – Role=Cause: A violent storm would hit Barents sea.
  – Role=Result: Salvage plan was postponed indefinitely.
[Figure label: Primacy Effect]

Event Relation: Temporality
[Figure: the same passage annotated in two panels, “Original TimeBank**” and “TimeBank-Dense”]
There were four or five people inside, and they just started firing. Ms. Sanders was hit several times and was pronounced dead at the scene. The other customers fled, and the police said it did not appear that anyone else was injured.
[Figure legend: New relation]
From TimeBank to TimeBank-Dense
• Relations: BEFORE, AFTER, INCLUDES, INCLUDED_IN, SIMULTANEOUS, VAGUE
• Annotators must label all pairs within one sentence window
• Annotation guidelines address resulting difficult cases
• Evaluate and develop systems to decide whether a pair can be related
(Cassidy et al., 2014); ** (Pustejovsky et al., 2003)

Event Relation Extraction Approach
• Main Challenges
  – Fine-grained distinction / Implicit logic relations (Hong et al., 2012)
  – The text order by itself is a poor predictor of temporal order (3% correlation) (Ji et al., 2009)
• Deep background and commonsense knowledge acquisition
  – FrameNet / Google ngram / ConceptNet / Background news
  – Retrospective Historical Event Detection precursor prediction
  – Take advantage of rich event representation and Abstract Meaning Representation (Banarescu et al., 2013)
• Temporal Event Tracking (Ji et al., 2010) and Temporal Slot Filling (Ji et al., 2013; Cassidy and Ji, 2014)
  – Apply structured prediction to capture long syntactic contexts
  – Exploit global knowledge from related events and Wikipedia to conduct temporal reasoning and predict implicit time arguments

Representing Event-Event Relations in KB

Entity KB
• ID = E1: Name = Office of Emergency Management
• ID = E2: Name = Michael Bloomberg; Title = Mayor; Employer = E5
• ID = E3: Name = Residents in flood-prone areas
• ID = E4: Name = WNYC; Property = News
• ID = E5: Name = New York City
• ID = E6: Name = Tammi Delancy; #Followers = 211; #Friends = 770; Role = Participant & Commenter; City_of_residence = E5

Event KB
• ID = EV1
  – Properties: Type = Warning; Novelty = 1.0; Modality = Asserted; Tense = Past; Polarity = Positive; Genericity = Specific
  – Trigger: advised
  – Arguments: Advisor = E1, E2; Advisees = E3; Advice = Evacuation; Narrator = E4; Time = 10/25/2012; Place = E5
  – Result Event: EV2
  – Children Event: EV
• ID = EV2
  – Properties: Type = Evacuation; Novelty = 1.0; Modality = Asserted; Tense = Future; Polarity = Positive; Genericity = Specific; Time = 10/29/2012; Place = E5
  – Trigger: evacuate
  – Arguments: Narrator = E4
  – Cause Event: EV1
  – Parent Event: EV4

Pilot System
• We have a reasonable understanding of how to detect temporal relations (Ji et al., 2013; Cassidy and Ji, 2014) but not others
• Main Challenges
  – Clustering coreferential events: what is event coreference anyway (Hovy et al., 2013)?
  – Event-event relations are often logic relations without explicit linguistic indicators
  – Fine-grained distinction / Implicit logic relations (Hong et al., 2012)
• Deep background and commonsense knowledge acquisition
  – FrameNet / Google ngram / ConceptNet / Background news
  – Retrospective Historical Event Detection precursor prediction (Hong and Ji, 2014 submission)
  – Take advantage of rich knowledge representation such as AMR (Banarescu et al., 2013)
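The Event KB entries EV1 and EV2 link to each other through Result Event and Cause Event fields. The sketch below shows one way such records might be held in memory and checked for mirrored links. It is a minimal illustration under assumptions, not the pilot system's schema: the `Event` class, its field names, and `unmirrored_links` are hypothetical, and only the field values are copied from the slide.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    """Hypothetical in-memory record for one Event KB entry."""
    id: str
    type: str
    trigger: str
    time: str    # kept as the slide's date string, e.g. "10/25/2012"
    place: str   # entity ID from the Entity KB
    arguments: dict = field(default_factory=dict)
    result_events: list = field(default_factory=list)
    cause_events: list = field(default_factory=list)


# Values copied from the EV1 and EV2 entries on the slide.
EVENT_KB = {
    "EV1": Event("EV1", "Warning", "advised", "10/25/2012", "E5",
                 arguments={"Advisor": ["E1", "E2"], "Advisees": ["E3"],
                            "Advice": "Evacuation", "Narrator": "E4"},
                 result_events=["EV2"]),
    "EV2": Event("EV2", "Evacuation", "evacuate", "10/29/2012", "E5",
                 arguments={"Narrator": "E4"},
                 cause_events=["EV1"]),
}


def unmirrored_links(kb):
    """Return (cause_id, result_id) pairs that are recorded on one side only."""
    missing = []
    for ev in kb.values():
        for rid in ev.result_events:
            if rid not in kb or ev.id not in kb[rid].cause_events:
                missing.append((ev.id, rid))
        for cid in ev.cause_events:
            if cid not in kb or ev.id not in kb[cid].result_events:
                missing.append((cid, ev.id))
    return missing
```

With the two entries as given, `unmirrored_links(EVENT_KB)` returns an empty list, since EV1 names EV2 as its result and EV2 names EV1 as its cause. Storing both directions is redundant, but it makes a broken or half-recorded relation easy to detect.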