Support-Graph Preconditioning

advertisement

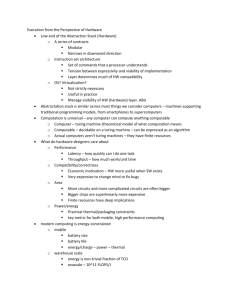

CSE 260 Parallel Computation Allan Snavely, Henri Casanova asnavely@cs.ucsd.edu casanova@cs.ucsd.edu http://www.sdsc.edu/~allans/cs260/cs260.htm Outline • Introductions • Why we need powerful computers • Why powerful computers are parallel • Issues in parallel performance • Parallel computers, yesterday and today • Class organization Introductions • Instructors: Allan Snavely, asnavely@cs.ucsd.edu, www.sdsc.edu/~allans Henri Casanova, casanova@cs.ucsd.edu, www.cs.ucsd.edu/~casanova/ • T.A.: Michael McCracken, mike@cs.ucsd.edu • Course web page: http://www.sdsc.edu/~allans/cs260/cs260.htm • HPCS experiment: more at end of class today. Thanks to Kathy Yelick and Jim Demmel , and John Gilbert at UCB for some of these slides. Why do we need powerful computers? Simulation: The Third Pillar of Science • Traditional scientific and engineering paradigm: 1) Do theory or paper design. 2) Perform experiments or build system. • Limitations: • • • • • Too difficult -- build large wind tunnels. Too expensive -- build a throw-away passenger jet. Too slow -- wait for climate or galactic evolution. Too dangerous -- weapons, drug design, climate experiments. Computational science paradigm: 3) Use high performance computer systems to simulate the phenomenon. • Base on known physical laws and efficient numerical methods. Some Challenging Computations • Science • • • • • Global climate modeling Astrophysical modeling Biology: genomics; protein folding; drug design Computational Chemistry Computational Material Sciences and Nanosciences • Engineering • • • • • Crash simulation Semiconductor design Earthquake and structural modeling Computation fluid dynamics (airplane design) Combustion (engine design) • Business • • Financial and economic modeling Transaction processing, web services and search engines • Defense • • Nuclear weapons -- test by simulation Cryptography Units of Measure in HPC • High Performance Computing (HPC) units are: • Flops: floating point operations • Flop/s: floating point operations per second • Bytes: size of data (double precision floating point number is 8) • Typical sizes are millions, billions, trillions… Mega Mflop/s = 106 flop/sec Giga Gflop/s = 109 flop/sec Tera Tflop/s = 1012 flop/sec Peta Pflop/s = 1015 flop/sec Exa Eflop/s = 1018 flop/sec Mbyte = 106 byte (also 220 = 1048576) Gbyte = 109 byte (also 230 = 1073741824) Tbyte = 1012 byte (also 240 = 10995211627776) Pbyte = 1015 byte (also 250 = 1125899906842624) Ebyte = 1018 byte Global Climate Modeling Problem • Problem is to compute: f(latitude, longitude, elevation, time) temperature, pressure, humidity, wind velocity • Approach: • Discretize the domain, e.g., a measurement point every 10 km • Devise an algorithm to predict weather at time t+1 given t • Uses: - Predict major events, e.g., El Nino - Use in setting air emissions standards Source: http://www.epm.ornl.gov/chammp/chammp.html Global Climate Modeling Computation • One piece is modeling the fluid flow in the atmosphere • Solve Navier-Stokes problem • Roughly 100 Flops per grid point with 1 minute timestep • Computational requirements: • • • • To match real-time, need 5x 1011 flops in 60 seconds = 8 Gflop/s Weather prediction (7 days in 24 hours) 56 Gflop/s Climate prediction (50 years in 30 days) 4.8 Tflop/s To use in policy negotiations (50 years in 12 hours) 288 Tflop/s • To double the grid resolution, computation is at least 8x • State of the art models require integration of atmosphere, ocean, sea-ice, land models, plus possibly carbon cycle, geochemistry and more • Current models are coarser than this High Resolution Climate Modeling on NERSC-3 – P. Duffy, et al., LLNL A 1000 Year Climate Simulation • Demonstration of the Community Climate Model (CCSM2) • A 1000-year simulation shows long-term, stable representation of the earth’s climate. • 760,000 processor hours used • Temperature change shown • • Warren Washington and Jerry Meehl, National Center for Atmospheric Research; Bert Semtner, Naval Postgraduate School; John Weatherly, U.S. Army Cold Regions Research and Engineering Lab Laboratory et al. http://www.nersc.gov/aboutnersc/pubs/bigsplash.pdf Climate Modeling on the Earth Simulator System Development of ES started in 1997 with the goal of enabling a comprehensive understanding of global environmental changes such as global warming. Construction was completed February, 2002 and practical operation started March 1, 2002 35.86 Tflops (87.5% of peak performance) on Linpack benchmark. 26.58 Tflops on a global atmospheric circulation code. Why are powerful computers parallel? Tunnel Vision by Experts • “I think there is a world market for maybe five computers.” • Thomas Watson, chairman of IBM, 1943. • “There is no reason for any individual to have a computer in their home” • Ken Olson, president and founder of Digital Equipment Corporation, 1977. • “640K [of memory] ought to be enough for anybody.” • Bill Gates, chairman of Microsoft,1981. Slide source: Warfield et al. Technology Trends: Microprocessor Capacity Moore’s Law Moore’s Law: #transistors/chip doubles every 1.5 years Microprocessors have become smaller, denser, and more powerful. Gordon Moore (co-founder of Intel) predicted in 1965 that the transistor density of semiconductor chips would double roughly every 18 months. Slide source: Jack Dongarra How fast can a serial computer be? 1 Tflop 1 TB sequential machine r = .3 mm • Consider the 1 Tflop sequential machine • data must travel some distance, r, to get from memory to CPU • to get 1 data element per cycle, this means 10^12 times per second at the speed of light, c = 3e8 m/s • so r < c/10^12 = .3 mm • Now put 1 TB of storage in a .3 mm^2 area • each word occupies ~ 3 Angstroms^2, the size of a small atom Scaling microprocessors • What happens when feature size shrinks by a factor of x? • Clock rate goes up by x • actually a little less • Transistors per unit area goes up by x2 • Die size also tends to increase • typically another factor of ~x • Raw computing power of the chip goes up by ~ x4 ! • of which x3 is devoted either to parallelism or locality “Automatic” Parallelism in Modern Machines • Bit level parallelism • within floating point operations, etc. • Instruction level parallelism • multiple instructions execute per clock cycle • Memory system parallelism • overlap of memory operations with computation • OS parallelism • multiple jobs run in parallel on commodity SMPs There are limits to all of these -- for very high performance, user must identify, schedule and coordinate parallel tasks Number of transistors per processor chip 100,000,000 10,000,000 Transistors R10000 Pentium 1,000,000 i80386 i80286 100,000 R3000 R2000 i8086 10,000 i8080 i4004 1,000 1970 1975 1980 1985 1990 1995 2000 2005 Year Number of transistors per processor chip 100,000,000 10,000,000 Instruction-Level Parallelism Transistors R10000 Pentium 1,000,000 i80386 i80286 100,000 R3000 R2000 i8086 10,000 i8080 i4004 1,000 1970 1975 1980 1985 1990 1995 2000 2005 Year Bit-Level Parallelism Thread-Level Parallelism? Issues in parallel performance Locality and Parallelism Conventional Storage Proc Hierarchy Cache L2 Cache Proc Cache L2 Cache Proc Cache L2 Cache L3 Cache L3 Cache Memory Memory Memory • Large memories are slow, fast memories are small • Storage hierarchies are large and fast on average • Parallel processors, collectively, have large, fast cache • the slow accesses to “remote” data we call “communication” • Algorithm should do most work on local data potential interconnects L3 Cache Finding Enough Parallelism: Amdahl’s Law • Suppose only part of an application seems parallel • Amdahl’s law • Let s be the fraction of work done sequentially, so (1-s) is the fraction parallelizable • Let P = number of processors Speedup(P) = Time(1)/Time(P) <= 1/(s + (1-s)/P) <= 1/s • Even if the parallel part speeds up perfectly, the sequential part limits overall performance. Load Imbalance • Load imbalance is the time that some processors in the system are idle due to • insufficient parallelism (during that phase) • unequal size tasks • Examples of the latter • adapting to “interesting parts of a domain” • tree-structured computations • fundamentally unstructured problems • Algorithm needs to balance load Parallel computers, yesterday and today • Dead supercomputers • Top 500 list • Flashmob computing (!?) Parallel Computing Today Japanese Earth Simulator machine Small class Beowulf cluster Course organization Course overview • Key ideas: • Algorithms • Programming models • Performance • Course outline – see home page Resources • Course home page: http://www.sdsc.edu/~allans/cs260/cs260.htm • Computing resources: • 128-multi-streaming processor (MSP) Cray X1 with 512 GB of memory and 21 terabytes of disk. The X1, named Klondike at Arctic Region Supercomputing Center (ARSC) • 1632 processor IBM Power4 SP: DataStar (SDSC) • Return the course questionnaire so we can create accounts! • No textbook – see course homepage for references Requirements • Four 2-week homework assignments • First one is assigned today!!!!! • Individual effort • 40% of course grade • Final project • Significant parallel programming project • Teams of three • Teams should be interdisciplinary (this is how real parallel software is built) • 50% of course grade • Scribe notes for one lecture • Due one week after lecture • Sign up for a day to scribe • 10% of course grade for scribing and class participation