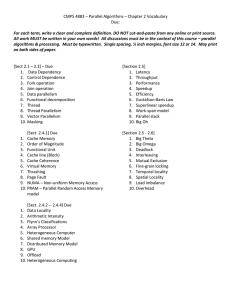

CS 240A Applied Parallel Computing John R. Gilbert

advertisement

CS 240A Applied Parallel Computing John R. Gilbert gilbert@cs.ucsb.edu http://www.cs.ucsb.edu/~cs240a Thanks to Kathy Yelick and Jim Demmel at UCB for some of their slides. Course bureacracy • Read course home page http://www.cs.ucsb.edu/~cs240a/homepage.html • Join Google discussion group (see course home page) • Accounts on Triton, San Diego Supercomputing Center: • Use “ssh –keygen –t rsa” and then email your “id_rsa.pub” file to Stefan Boeriu, stefan@engineering.ucsb.edu • If you weren’t signed up for the course as of last week, email me your registration info right away • Triton logon demo & tool intro coming soon– watch Google group for details Homework 1 • See course home page for details. • Find an application of parallel computing and build a web page describing it. • Choose something from your research area. • Or from the web or elsewhere. • Create a web page describing the application. • Describe the application and provide a reference (or link) • Describe the platform where this application was run • Find peak and LINPACK performance for the platform and its rank on the TOP500 list • Find the performance of your selected application • What ratio of sustained to peak performance is reported? • Evaluate the project: How did the application scale, ie was speed roughly proportional to the number of processors? What were the major difficulties in obtaining good performance? What tools and algorithms were used? • Send us (John and Hans) the link (we will post them) • Due next Monday, April 5 Two examples of big parallel problems • Bone density modeling: • Physical simulation • Lots of numerical computing • Spatially local • Vertex betweenness centrality: • Exploring an unstructured graph • Lots of pointer-chasing • Little numerical computing • No spatial locality Social newtork analysis Betweenness Centrality (BC) CB(v): Among all the shortest paths, what fraction of them pass through the node of interest? A typical software stack for an application enabled with the Combinatorial BLAS Brandes’ algorithm Betweenness Centrality using Sparse GEMM 1 4 3 AT X . (ATX) *¬X • Parallel breadth-first search is implemented with sparse matrix-matrix multiplication • Work efficient algorithm for BC 2 7 6 5 BC performance in distributed memory Millions TEPS score BC performance 250 Input: RMAT scale N 2N vertices Average degree 8 200 150 Scale 17 Scale 18 100 Scale 19 50 Scale 20 25 36 49 64 81 100 121 144 169 196 225 256 289 324 361 400 441 484 0 Number of Cores • TEPS: Traversed Edges Per Second • Batch of 512 vertices at each iteration • Code only a few lines longer than Matlab version Pure MPI-1 version. No reliance on any particular hardware Parallel Computers Today Two Nvidia 8800 GPUs > 1 TFLOPS Oak Ridge / Cray Jaguar > 1.75 PFLOPS TFLOPS = 1012 floating point ops/sec PFLOPS = 1,000,000,000,000,000 / sec (1015) Intel 80core chip > 1 TFLOPS AMD Opteron quad-core die Cray XMT (highly multithreaded shared memory) 1 2 i =n . . . 3 i =2 i =1 Programs running in parallel i =n Subproblem A i =3 4 . Su bproblem B . . i =1 Serial Code i =0 Concurrent threads of computation Subproblem A .... Hardware stream s (128) Unused streams Instruction Ready Pool; Pipeline of executing instructions • Top 500 List • http://www.top500.org/list/2009/11/100 The Computer Architecture Challenge Most high-performance computer designs allocate resources to optimize Gaussian elimination on large, dense matrices. Originally, because linear algebra is the middleware of scientific computing. Nowadays, mostly for bragging rights. P A = L x U Why are powerful computers parallel? Technology Trends: Microprocessor Capacity Moore’s Law Moore’s Law: #transistors/chip doubles every 1.5 years Microprocessors have become smaller, denser, and more powerful. Gordon Moore (co-founder of Intel) predicted in 1965 that the transistor density of semiconductor chips would double roughly every 18 months. Slide source: Jack Dongarra Scaling microprocessors • What happens when feature size shrinks by a factor of x? • Clock rate used to go up by x , but no longer • Clock rates are topping out due to power (heat) limits • Transistors per unit area goes up by x2 • Die size also tends to increase • Typically another factor of ~x • Raw computing capability of the chip goes up by ~ x3 ! • But it’s all for parallelism, not speed How fast can a serial computer be? 1 Tflop 1 TB sequential machine r = .3 mm • Consider the 1 Tflop sequential machine • data must travel some distance, r, to get from memory to CPU • to get 1 data element per cycle, this means 10^12 times per second at the speed of light, c = 3e8 m/s • so r < c/10^12 = .3 mm • Now put 1 TB of storage in a .3 mm^2 area • each word occupies ~ 3 Angstroms^2, the size of a small atom “Automatic” Parallelism in Modern Machines • Bit level parallelism • within floating point operations, etc. • Instruction level parallelism • multiple instructions execute per clock cycle • Memory system parallelism • overlap of memory operations with computation • OS parallelism • multiple jobs run in parallel on commodity SMPs There are limits to all of these -- for very high performance, user must identify, schedule and coordinate parallel tasks Number of transistors per processor chip 100,000,000 10,000,000 Transistors R10000 Pentium 1,000,000 i80386 i80286 100,000 R3000 R2000 i8086 10,000 i8080 i4004 1,000 1970 1975 1980 1985 1990 1995 2000 2005 Year Number of transistors per processor chip 100,000,000 10,000,000 Instruction-Level Parallelism Transistors R10000 Pentium 1,000,000 i80386 i80286 100,000 R3000 R2000 i8086 10,000 i8080 i4004 1,000 1970 1975 1980 1985 1990 1995 2000 2005 Year Bit-Level Parallelism Thread-Level Parallelism? Generic Parallel Machine Architecture Storage Hierarchy Proc Cache L2 Cache Proc Cache L2 Cache Proc Cache L2 Cache L3 Cache L3 Cache Memory Memory Memory potential interconnects L3 Cache • Key architecture question: Where is the interconnect, and how fast? • Key algorithm question: Where is the data?