203_CS257_final - Department of Computer Science

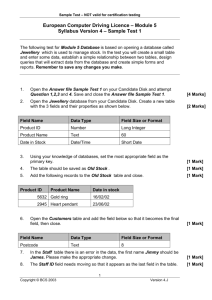

advertisement

SECONDARY STORAGE MANAGEMENT

SECTIONS 13.1 – 13.3

05/11/09 15:18

Sanuja Dabade & Eilbroun Benjamin

CS 257 – Dr. TY Lin

Presentation Outline

• 13.1 The Memory Hierarchy
o 13.1.1 The Memory Hierarchy
o 13.1.2 Transfer of Data Between Levels
o 13.1.3 Volatile and Nonvolatile Storage
o 13.1.4 Virtual Memory
• 13.2 Disks
o 13.2.1 Mechanics of Disks
o 13.2.2 The Disk Controller
o 13.2.3 Disk Access Characteristics


Presentation Outline (con’t)

• 13.3 Accelerating Access to Secondary Storage
o 13.3.1 The I/O Model of Computation
o 13.3.2 Organizing Data by Cylinders
o 13.3.3 Using Multiple Disks
o 13.3.4 Mirroring Disks
o 13.3.5 Disk Scheduling and the Elevator Algorithm
o 13.3.6 Prefetching and Large-Scale Buffering


13.1.1 Memory Hierarchy

• Several components with different data capacities are available for data storage

• The cost per byte to store data also varies

• The devices with the smallest capacity offer the fastest speed, at the highest cost per bit


Memory Hierarchy Diagram

[Figure: memory hierarchy. Levels: Cache, Main Memory, Disk, Tertiary Storage. Other labels: Programs, Main-Memory DBMS's; As Virtual Memory; File System; DBMS.]


13.1.1 Memory Hierarchy

• Cache
o Lowest level of the hierarchy
o Data items are copies of certain locations of main memory
o Sometimes, values in the cache are changed and the corresponding changes to main memory are delayed
o The machine looks for instructions, as well as the data for those instructions, in the cache
o Holds a limited amount of data


13.1.1 Memory Hierarchy (con't)

• In a single-processor computer, there is no need to update the data in main memory immediately

• In a multiprocessor system, changes are written to main memory immediately; this is called write-through

• Data and instructions are moved to the cache from main memory when they are needed by the processor.


Main Memory

• Everything that happens in the computer, i.e., instruction execution and data manipulation, works on information that is resident in main memory

• Main memories are random access: one can obtain any byte in the same amount of time


Secondary storage

• Used to store data and programs when they

are not being processed

• More permanent than main memory, as data

and programs are retained when the power is

turned off

• E.g., magnetic (hard) disks


Tertiary Storage

• Holds data volumes measured in terabytes

• Used for databases much larger than what

can be stored on disk

• Higher read/write times than secondary

storage.

• Retrieval takes seconds or minutes.


13.1.2 Transfer of Data Between Levels

• Data moves between adjacent levels of the hierarchy

• At the secondary or tertiary levels, accessing the desired data or finding the desired place to store the data takes a lot of time

• The disk is organized into blocks

• Entire blocks are moved to and from a region of main memory called a buffer


13.1.2 Transfer of Data Between Levels (cont'd)

• A key technique for speeding up database operations is to arrange the data so that when one piece of data on a block is needed, it is likely that other data on the same block will be needed at the same time

• The same idea applies to the other levels of the hierarchy


13.1.3 Volatile and Nonvolatile Storage

• A volatile device forgets the data stored on it when the power is turned off

• A nonvolatile device retains its data even when the device is turned off

• All secondary and tertiary storage devices are nonvolatile, while main memory is volatile


13.1.4 Virtual Memory

• Typical software executes in virtual memory

• The address space is typically 32 bits, i.e., 2^32 bytes or 4 GB

• Transfer between memory and disk is in terms

of blocks


13.2.1 Mechanics of Disks

• Use of secondary storage is one of the important characteristics of a DBMS

• A disk has 2 moving pieces:
1. the disk assembly
2. the head assembly

• The disk assembly consists of 1 or more platters

• Platters rotate around a central spindle

• Bits are stored on the upper and lower surfaces of the platters


13.2.1 Mechanics of Disks (con't)

• Each surface is organized into tracks
o The tracks at a fixed radius from the center, among all the surfaces, form one cylinder

• Tracks are organized into sectors

• Sectors are segments of the circle separated by gaps; the gaps help identify the beginnings of sectors.

• The head assembly holds the disk heads.

• A head reads or alters the magnetism passing under it.


13.2.2 Disk Controller

• One or more disks are controlled by a disk controller

• Disk controllers are capable of:
o Controlling the mechanical actuator that moves the head assembly
o Selecting a sector from among all those in the cylinder at which the heads are positioned
o Transferring bits between the desired sector and main memory
o Possibly buffering an entire track in the local memory of the disk controller


13.2.3 Disk Access Characteristics

• Accessing (reading or writing) a block requires 3 steps:
o The disk controller positions the head assembly at the cylinder containing the track on which the block is located. The time this takes is the 'seek time'.
o The disk controller waits while the first sector of the block moves under the head. This is the 'rotational latency'.
o All the sectors of the block, and the gaps between them, pass under the head while the disk controller reads or writes data in these sectors. This is the 'transfer time'.
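The three steps add up to the time needed to access one block. The sketch below is a minimal illustration, assuming the Megatron 747 figures quoted in 13.3 (6.46 ms average seek, 8.33 ms per rotation) and taking the average rotational latency as half a rotation. The 0.13 ms transfer time for one 16K block is an assumed value, chosen so that the total agrees with the 10.76 ms average used in Ex 13.4; `block_access_ms` is a hypothetical helper name.

```python
def block_access_ms(avg_seek_ms: float, rotation_ms: float, transfer_ms: float) -> float:
    """Average time to read or write one block: seek + rotational latency + transfer."""
    # On average the first sector of the block is half a rotation away from the head.
    avg_rotational_latency_ms = rotation_ms / 2
    return avg_seek_ms + avg_rotational_latency_ms + transfer_ms


# Megatron 747 figures: 6.46 ms average seek, 8.33 ms per rotation.
# The 0.13 ms transfer time is an assumption, not a figure from the slides.
print(f"{block_access_ms(6.46, 8.33, 0.13):.3f} ms")  # roughly 10.76 ms
```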


SECONDARY STORAGE MANAGEMENT

SECTION 13.3

05/11/09 15:21

Eilbroun Benjamin

CS 257 – Dr. TY Lin

Presentation Outline

• 13.3 Accelerating Access to Secondary Storage
o 13.3.1 The I/O Model of Computation
o 13.3.2 Organizing Data by Cylinders
o 13.3.3 Using Multiple Disks
o 13.3.4 Mirroring Disks
o 13.3.5 Disk Scheduling and the Elevator Algorithm
o 13.3.6 Prefetching and Large-Scale Buffering


13.3 Accelerating Access to Secondary Storage

• Several approaches for accessing data in secondary storage more efficiently:
o Place blocks that are accessed together in the same cylinder.
o Divide the data among multiple disks.
o Mirror disks.
o Use disk-scheduling algorithms.
o Prefetch blocks into main memory.

• Scheduling latency: added delay in accessing data caused by a disk-scheduling algorithm.

• Throughput: the number of disk accesses per second that the system can accommodate.


13.3.1 The I/O Model of Computation

• The number of block accesses (disk I/O's) is a good approximation of the running time of an algorithm.
o This number should be minimized.

• Ex 13.3: Suppose we have an index on relation R that identifies the block on which the desired tuple appears, but not where on the block it resides.
o For the Megatron 747 (M747) example, it takes 11 ms to read a 16K block.
o A standard microprocessor can execute millions of instructions in 11 ms, making any delay in searching the block for the desired tuple negligible.


13.3.2 Organizing Data by Cylinders

• If we read all blocks on a single track or cylinder consecutively, we can neglect all but the first seek time and the first rotational latency.

• Ex 13.4: We request 1024 blocks of the M747.
o If the data is randomly distributed, the average time per block is 10.76 ms (Ex 13.2), making the total about 11 s.
o If all blocks are stored consecutively on 1 cylinder:
o 6.46 ms + 8.33 ms * 16 ≈ 139.7 ms (1 average seek, plus 16 rotations at 8.33 ms per rotation)
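The arithmetic of Ex 13.4 can be checked with a short script. It only restates the slide's numbers (10.76 ms per random block access, one 6.46 ms average seek, 16 rotations of 8.33 ms); the function and constant names are illustrative.

```python
AVG_ACCESS_MS = 10.76   # average time per random block access (Ex 13.2)
AVG_SEEK_MS = 6.46      # one average seek
ROTATION_MS = 8.33      # one full rotation


def random_read_ms(n_blocks: int) -> float:
    # Every block pays its own seek, rotational latency and transfer.
    return n_blocks * AVG_ACCESS_MS


def cylinder_read_ms(rotations: int) -> float:
    # One seek to the cylinder, then whole rotations; later seeks and latencies vanish.
    return AVG_SEEK_MS + ROTATION_MS * rotations


print(f"{random_read_ms(1024) / 1000:.1f} s")   # 11.0 s
print(f"{cylinder_read_ms(16):.1f} ms")         # 139.7 ms
```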


13.3.3 Using Multiple Disks

• If we have n disks, read/write performance can increase by up to a factor of n.

• Striping: distributing a relation R across multiple disks following this pattern:
o Data on disk 1: R1, R(1+n), R(1+2n), …
o Data on disk 2: R2, R(2+n), R(2+2n), …
o …
o Data on disk n: Rn, R(2n), R(3n), …

• Ex 13.5: We request 1024 blocks with n = 4.
o 6.46 ms + (8.33 ms * (16/4)) ≈ 39.8 ms (1 average seek, plus 16/4 = 4 rotations at 8.33 ms per rotation)
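A minimal sketch of the striping pattern and of the Ex 13.5 estimate. Blocks are numbered from 1 as on the slide; `disk_for_block` and `striped_read_ms` are hypothetical names, and the timing formula simply divides the 16 rotations of Ex 13.4 evenly among the n disks, as Ex 13.5 does.

```python
def disk_for_block(i: int, n: int) -> int:
    """Disk (numbered 1..n) that holds block Ri (i = 1, 2, ...) under striping."""
    return (i - 1) % n + 1


def striped_read_ms(rotations: int, n: int, seek_ms: float = 6.46,
                    rotation_ms: float = 8.33) -> float:
    # The n disks work in parallel, so each one needs only 1/n of the rotations.
    return seek_ms + rotation_ms * rotations / n


print([disk_for_block(i, 4) for i in range(1, 9)])  # [1, 2, 3, 4, 1, 2, 3, 4]
print(f"{striped_read_ms(16, 4):.2f} ms")           # 39.78 ms
```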


13.3.4 Mirroring Disks

• Mirroring disks: having 2 or more disks hold identical copies of data.

• Benefit 1: If n disks are mirrors of each other, the system can survive the crash of up to n-1 disks.

• Benefit 2: If we have n disks, read performance can increase by up to a factor of n.

• Performance increases further by having the controller select, for each read, the disk whose head is closest to the desired data block.
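The closest-head rule for reads can be sketched as follows, assuming the controller knows the current cylinder of each mirror's head. `choose_mirror` and the head positions are hypothetical.

```python
def choose_mirror(head_positions: list[int], target_cylinder: int) -> int:
    """Index of the mirror whose head is closest to the target cylinder."""
    return min(range(len(head_positions)),
               key=lambda d: abs(head_positions[d] - target_cylinder))


# Hypothetical head positions of three mirrored disks.
print(choose_mirror([100, 5000, 9000], 6000))  # 1
```

Because every mirror holds identical data, any of them can serve the read; picking the nearest head shortens the seek.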


13.3.5 Disk Scheduling and the Elevator Problem

• The disk controller runs this algorithm to select which of several pending requests to process first.

• Pseudocode:

requests[]   // array of all unprocessed data requests

upon receiving a new data request:
    requests[].add(new request)

while (requests[] is not empty)
    move head to next location
    if (head location is at data in requests[])
        retrieve data
        remove data from requests[]
    if (head reaches end)
        reverse head direction


13.3.5 Disk Scheduling and the Elevator Problem (con't)

Events (in order):
• Head starting point
• Request data at 8000
• Request data at 24000
• Request data at 56000
• Get data at 8000
• Request data at 16000
• Get data at 24000
• Request data at 64000
• Get data at 56000
• Request data at 40000
• Get data at 64000
• Get data at 40000
• Get data at 16000

[Figure: head position (cylinders 8000 to 64000) versus current time.]

data     time
8000     4.3
24000    13.6
56000    26.9
64000    34.2
40000    45.5
16000    56.8


13.3.5 Disk Scheduling and the Elevator Problem (con't)

Elevator Algorithm        FIFO Algorithm
data     time             data     time
8000     4.3              8000     4.3
24000    13.6             24000    13.6
56000    26.9             56000    26.9
64000    34.2             16000    42.2
40000    45.5             64000    59.5
16000    56.8             40000    70.8
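Both columns of the comparison can be reproduced with a small simulation. This is a sketch under assumptions that are not stated on the slides but are consistent with every time in the tables: the head starts at cylinder 8000, the six requests arrive at times 0, 0, 0, 10, 20 and 30 (in the order of the events listed earlier), moving the head d > 0 cylinders takes 1 ms + d/4000 ms, and rotational latency plus transfer adds a fixed 4.3 ms per request. `Request` and `simulate` are hypothetical names. Unlike the pseudocode's "head reaches end" test, this elevator reverses as soon as no pending request lies ahead, which is what the slide's timings reflect.

```python
from dataclasses import dataclass

# Assumed timing model (it reproduces the slide's tables).
ROTATE_AND_TRANSFER_MS = 4.3


def seek_ms(distance: int) -> float:
    return 0.0 if distance == 0 else 1.0 + distance / 4000


@dataclass
class Request:
    cylinder: int
    arrival_ms: float


def simulate(requests, start_cylinder, policy="elevator"):
    """Serve requests with 'elevator' or 'fifo'; return (cylinder, finish time) pairs."""
    pending = sorted(requests, key=lambda r: r.arrival_ms)
    head, now, going_up = start_cylinder, 0.0, True
    served = []
    while pending:
        ready = [r for r in pending if r.arrival_ms <= now]
        if not ready:                        # disk idle: wait for the next arrival
            now = pending[0].arrival_ms
            continue
        if policy == "fifo":
            nxt = ready[0]                   # oldest request first
        else:
            ahead = [r for r in ready
                     if (r.cylinder >= head if going_up else r.cylinder <= head)]
            if not ahead:                    # nothing left in this direction: reverse
                going_up = not going_up
                continue
            nxt = min(ahead, key=lambda r: abs(r.cylinder - head))
        pending.remove(nxt)
        now += seek_ms(abs(nxt.cylinder - head)) + ROTATE_AND_TRANSFER_MS
        head = nxt.cylinder
        served.append((nxt.cylinder, round(now, 1)))
    return served


# Hypothetical arrival times, consistent with the order of events on the slide.
reqs = [Request(8000, 0), Request(24000, 0), Request(56000, 0),
        Request(16000, 10), Request(64000, 20), Request(40000, 30)]
print(simulate(reqs, 8000, "elevator"))
print(simulate(reqs, 8000, "fifo"))
```

Under these assumptions the elevator finishes all six requests at 56.8 ms, while FIFO needs 70.8 ms because it sweeps back and forth across the disk.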


13.3.6 Prefetching and Large-Scale Buffering

• If, at the application level, we can predict the order in which blocks will be requested, we can load them into main memory before they are needed.

• This is called prefetching or sometimes double

buffering.
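A minimal sketch of double buffering, assuming the order of blocks is known in advance. `prefetching_scan`, `read_block` and `process` are hypothetical names; one background thread fetches block i+1 while the caller processes block i.

```python
from concurrent.futures import ThreadPoolExecutor


def prefetching_scan(block_ids, read_block, process):
    """Process blocks in a known order while the next block is fetched in the background."""
    results = []
    ids = iter(block_ids)
    first = next(ids, None)
    if first is None:
        return results
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(read_block, first)          # fetch the first block
        for next_id in ids:
            data = pending.result()                       # wait for the block in flight
            pending = pool.submit(read_block, next_id)    # prefetch the following block...
            results.append(process(data))                 # ...while this one is processed
        results.append(process(pending.result()))
    return results


blocks = {1: "alpha", 2: "beta", 3: "gamma"}   # stand-in for the disk
print(prefetching_scan([1, 2, 3], blocks.__getitem__, str.upper))
# ['ALPHA', 'BETA', 'GAMMA']
```

Two buffers are in use at any moment: the block being processed and the block being read, hence the name double buffering.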


Questions


• Disk failure ways and their mitigation


Ways in which disks can fail:
• Intermittent failure.
• Media Decay.
• Write failure.
• Disk Crash.

Intermittent Failures.

• A read or write operation on a sector fails on the first try but succeeds after repeated tries.

• The most common form of failure.

• Parity checks can be used to detect this kind of

failure.

Media Decay.

• Serious form of failure.

• One or more bits are permanently corrupted.

• Impossible to read a sector correctly even after

many trials.

• Stable storage technique for organizing a disk is

used to avoid this failure.

Write failure

• We attempt to write a sector, but the write cannot be completed successfully.

• An attempt to retrieve the previously written sector is also unsuccessful.

• Possible reason – power outage while writing of the

sector.

• Stable Storage Technique can be used to avoid this.

Disk Crash

• Most serious form of disk failure.

• Entire disk becomes unreadable, suddenly and

permanently.

• RAID techniques can be used for coping with disk

crashes.

More on Intermittent failures…

• We try to read a sector, but the correct content of that sector is not delivered to the disk controller.

• If the controller has a way to tell whether the sector is good or bad (checksums), it can reissue the read request when bad data is read.

More on Intermittent Failures..

• The controller can attempt to write a sector, but the contents of the sector are not what was intended.

• The only way to check this is to let the disk go around again and read the sector.

• One way to perform the check is to read the sector and compare it with the sector we intended to write.

Contd..

• Instead of performing the complete comparison at the disk controller, a simpler way is to read the sector and see whether a good sector was read.

• If it is a good sector, then the write was correct; otherwise the write was unsuccessful and must be repeated.

Checksums.

• Technique used to determine the good/bad status of

a sector.

• Each sector has some additional bits, called the checksum, that are set depending on the values of the data bits in that sector.

• If the checksum is not correct on reading, then there was an error in reading.

Checksums(contd..)

• There is a small chance that the block was not read

correctly even if the checksum is proper.

• The probability of correctness can be increased by

using many checksum bits.

Checksum calculation.

• Checksum is based on the parity of all bits in the

sector.

• If there is an odd number of 1's among a collection of bits, the bits are said to have odd parity, and a parity bit '1' is added.

• If there is an even number of 1's, the collection of bits is said to have even parity, and a parity bit '0' is added.

Checksum calculation(contd..)

• The number of 1's among a collection of bits and their parity bit is always even.

• During a write operation, the disk controller calculates the parity bit and appends it to the sequence of bits written in the sector.

• Every sector will therefore have even parity.

Examples…

• The sequence of bits 01101000 has an odd number of 1's, so the parity bit is 1, and the sequence with the parity bit is 011010001.

• The sequence of bits 11101110 has an even number of 1's, so it has even parity. With the parity bit 0, the sequence is 111011100.

Checksum calculation(contd..)

• Any one-bit error in reading or writing the bits results

in a sequence of bits that has odd-parity.

• The disk controller can count the number of 1’s and

can determine if the sector has odd parity in the

presence of an error.
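The parity rule can be sketched on bit strings; `parity_bit`, `with_parity` and `looks_good` are illustrative names, and a real controller works on the bits of a sector rather than on text.

```python
def parity_bit(bits: str) -> str:
    """'1' if bits has an odd number of 1's, else '0', so the total becomes even."""
    return str(bits.count("1") % 2)


def with_parity(bits: str) -> str:
    return bits + parity_bit(bits)


def looks_good(stored: str) -> bool:
    """A stored sequence (data plus parity bit) passes the check if its parity is even."""
    return stored.count("1") % 2 == 0


print(with_parity("01101000"))   # 011010001
print(with_parity("11101110"))   # 111011100
print(looks_good("111011101"))   # False: one flipped bit is detected
print(looks_good("001011100"))   # True: two flipped bits go unnoticed
```

The last line shows why a single parity bit is not enough: an even number of errors leaves the parity unchanged.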

Odds.

• More than one bit may be corrupted, and then the error can go unnoticed.

• Increasing the number of parity bits increases the chance of detecting errors.

• In general, if there are n independent bits as checksum, the chance that an error goes undetected is only one in 2^n.

Stable Storage.

• Checksums can detect the error but cannot correct

it.

• Sometimes we overwrite the previous contents of a

sector and yet cannot read the new contents

correctly.

• To deal with these problems, Stable Storage policy

can be implemented on the disks.

Stable-Storage(contd..)

• Sectors are paired and each pair represents one

sector-contents X.

• The left copy of the sector is represented as XL and the right copy as XR.

Assumptions.

• We assume that copies are written with a sufficient number of parity bits that the chance of a bad sector looking good (passing its parity checks) is negligible.

• Also, If the read function returns a good value w for

either XL or XR then it is assumed that w is the true

value of X.

Stable -Storage Writing Policy:

1. Write the value of X into XL. Check that the value has status "good", i.e., the parity-check bits are correct in the written copy. If not, repeat the write. If after a set number of write attempts we have not successfully written X in XL, assume that there is a media failure in this sector. A fix-up such as substituting a spare sector for XL must be adopted.

2. Repeat (1) for XR.

Stable-Storage Reading Policy:

• The policy is to alternate trying to read XL and XR

until a good value is returned.

• If a good value is not returned after a preset number of tries, then X is assumed to be truly unreadable.

Error-Handling capabilities:

Media failures:

• If after storing X in sectors XL and XR, one of them

undergoes media failure and becomes permanently

unreadable, we can read from the second one.

• If both the sectors have failed to read, then sector X

cannot be read.

• The probability of both failing is extremely small.

Error-Handling

Capabilities(contd..)

Write Failure:

• When writing X, if there is a system failure (like a power outage), the X in main memory is lost and the copy of X being written will be erroneous.

• Half of the sector may be written with part of the new value of X, while the other half remains as it was.

Error-Handling

Capabilities(contd..)

• The possible cases when the system becomes available again:

1. The failure occurred while writing XL. Then XL is considered bad. Since XR was never changed, its status is good. We can copy XR into XL, which restores the old value of X.

2. The failure occurred after XL was written. Then XL has good status and holds the new value of X, while XR, which holds the old value of X, may have bad status. We can copy the new value of X from XL to XR.
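The writing policy, reading policy and crash recovery above can be simulated in memory. This is a sketch only: `Sector`, `StableItem` and `MAX_TRIES` are hypothetical, a sector's `good` flag stands in for its parity checks, and the "substitute a spare sector" fix-up is represented by raising an error.

```python
MAX_TRIES = 3  # hypothetical "set number" of attempts


class Sector:
    """Simulated sector: a value plus whether its parity checks pass."""

    def __init__(self, value=None, good=True):
        self.value = value
        self.good = good and value is not None

    def read(self):
        return self.value if self.good else None  # None models a failed parity check


class StableItem:
    """One logical value X stored in a pair of sectors [XL, XR]."""

    def __init__(self, x):
        self.copies = [Sector(x), Sector(x)]

    def write(self, x):
        for i in (0, 1):                      # step 1 for XL, then step 2 for XR
            for _ in range(MAX_TRIES):
                self.copies[i] = Sector(x)    # a real disk write could leave this bad
                if self.copies[i].read() == x:
                    break                     # status "good": move on
            else:
                raise OSError(f"media failure in copy {i}; substitute a spare sector")

    def read(self):
        for attempt in range(2 * MAX_TRIES):  # alternate XL, XR, XL, ...
            value = self.copies[attempt % 2].read()
            if value is not None:
                return value
        raise OSError("X is unreadable")

    def recover(self):
        """After a crash during write(), make XL and XR agree again."""
        left, right = self.copies
        if not left.good:                     # case 1: crash while writing XL
            self.copies[0] = Sector(right.value)   # restore the old X
        else:                                 # case 2: crash after XL was written
            self.copies[1] = Sector(left.value)    # propagate the new X


item = StableItem("old")
item.copies[0] = Sector("ne", good=False)    # crash halfway through writing XL
item.recover()
print(item.read())   # old
```

The sketch assumes, as the slides do, that both copies are never bad at the same time.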

Recovery from Disk Crashes.

• To reduce data loss from disk crashes, schemes that involve redundancy, extending the idea of parity checks or duplicate sectors, can be applied.

• The term used for these strategies is RAID or

Redundant Arrays of Independent Disks.

• In general, if the mean time to failure of disks is n

years, then in any given year, 1/nth of the surviving

disks fail.

Recovery from Disk

Crashes(contd..)

• Each of the RAID schemes has data disks and

redundant disks.

• Data disks are one or more disks that hold the data.

• Redundant disks are one or more disks that hold

information that is completely determined by the

contents of the data disks.

• When one of the disks crashes, the other disks can be used to restore the failed disk, avoiding a permanent loss of information.

Disk Failures

Xiaqing He

ID: 204

Dr. Lin

Content

1) Focus on:
"How to recover from disk crashes"
common term: RAID, "Redundant Arrays of Independent Disks"

2) Several schemes to recover from disk crashes:
• Mirroring: RAID level 1;
• Parity checks: RAID 4;
• Improvement: RAID 5;
• RAID 6.

1) Mirroring

• The simplest scheme to recover from disk crashes

• How does mirroring work?
-- making two or more copies of the data on different disks

• Benefits:
-- the data survives if one disk fails;
-- reads can be divided among several disks, allowing several blocks to be accessed at once

1) Mirroring (con’t)

• For mirroring, when can data be lost?
-- The only way data can be lost is if there is a second (mirror/redundant) disk crash while the first (data) disk crash is being repaired.

• Probability:
Suppose:
• One disk: mean time to failure = 10 years;
• Either of the two disks fails on average once every 5 years;
• Replacing the failed disk takes 3 hours = 1/2920 year;
So:
• The probability that the mirror disk fails during the repair = 1/10 * 1/2,920 = 1/29,200;
• The probability of data loss by mirroring (per year) = 1/5 * 1/29,200 = 1/146,000
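The mirroring arithmetic can be checked with exact fractions. The sketch only restates the slide's assumptions (10-year mean time to failure per disk, one failure of either disk every 5 years, 3-hour repair) and takes a year as 365 days.

```python
from fractions import Fraction

MTTF_ONE_DISK = 10                        # years
repair_time = Fraction(3, 24 * 365)       # 3 hours, in years
p_mirror_fails = Fraction(1, MTTF_ONE_DISK) * repair_time
loss_per_year = Fraction(1, 5) * p_mirror_fails   # either disk fails once per 5 years

print(repair_time)         # 1/2920
print(p_mirror_fails)      # 1/29200
print(loss_per_year)       # 1/146000
print(1 / loss_per_year)   # 146000, i.e. about one data loss per 146,000 years
```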

2)Parity Blocks

• Why change?
-- The disadvantage of mirroring: it uses many redundant disks

• What's new?
-- RAID level 4 uses only one redundant disk

• How does this one redundant disk work?
-- modulo-2 sum:
-- the jth bit of the redundant disk is the modulo-2 sum of the jth bits of all the data disks.

• Example

2)Parity Blocks(con’t)___Example

Data disks:

• Disk1: 11110000

• Disk2: 10101010

• Disk3: 00111000

Redundant disk:

• Disk4: 01100010
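The modulo-2 sum of corresponding bits is a bitwise XOR, so the redundant block can be sketched with blocks as 8-bit integers; `parity_block` is an illustrative name.

```python
def parity_block(*blocks: int) -> int:
    """Modulo-2 sum (bitwise XOR) of the corresponding bits of all blocks."""
    result = 0
    for block in blocks:
        result ^= block
    return result


data = [0b11110000, 0b10101010, 0b00111000]   # Disks 1-3
print(format(parity_block(*data), "08b"))      # 01100010 (Disk 4)
```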

2)RAID 4 (con’t)

• Reading
-- Same as reading blocks from any disk;

• Writing
1) change the data disk;
2) change the corresponding block of the redundant disk;

• Why?
-- The redundant disk holds the parity checks for the corresponding blocks of all the data disks

2)RAID 4 (con’t) _ writing

For a total of N data disks:

1) Naïve way:
• read the corresponding blocks of the other N-1 data disks and compute their modulo-2 sum together with the new block;
• rewrite the redundant disk with this modulo-2 sum;

2) Better way:
• take the modulo-2 sum of the old and new versions of the data block that was rewritten;
• flip the bits of the redundant disk in the positions that are 1 in this modulo-2 sum;

2)RAID 4 (con’t) _ writing_Example

Data disks:
Disk1: 11110000
Disk2: 10101010 → 01100110
Disk3: 00111000

To do:
Modulo-2 sum of the old and new versions of Disk2: 11001100
So, we need to change positions 1, 2, 5, 6 of the redundant disk.

Redundant disk:
Disk4: 01100010 → 10101110
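The better way needs only the old and new versions of the rewritten block and the old redundant block, however many data disks there are. A sketch with blocks as 8-bit integers; `updated_parity` is an illustrative name.

```python
def updated_parity(old_parity: int, old_block: int, new_block: int) -> int:
    """Flip the redundant bits wherever the data block changed."""
    changed = old_block ^ new_block      # modulo-2 sum of the old and new versions
    return old_parity ^ changed


old_disk2, new_disk2 = 0b10101010, 0b01100110
print(format(old_disk2 ^ new_disk2, "08b"))                              # 11001100
print(format(updated_parity(0b01100010, old_disk2, new_disk2), "08b"))  # 10101110

# Same result as the naive way (recompute from all data disks):
assert updated_parity(0b01100010, old_disk2, new_disk2) == 0b11110000 ^ new_disk2 ^ 0b00111000
```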

2)RAID 4 (con’t) _failure recovery

• Redundant disk crash:
-- swap in a new disk and recompute its data from all the data disks;

• One of the data disks crashes:
-- swap in a new disk;
-- recompute its data from the other disks, including the data disks and the redundant disk;

• How to recompute? (The rule is the same in both cases, which is what makes an improvement possible.)
-- take the modulo-2 sum of all the corresponding bits of all the other disks
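The recovery rule can be sketched the same way: the lost block is the XOR of the corresponding blocks on every surviving disk, whether the lost disk held data or parity. `rebuild_disk` is an illustrative name.

```python
from functools import reduce
from operator import xor


def rebuild_disk(surviving_blocks):
    """A crashed disk's block is the modulo-2 sum of the corresponding blocks of all other disks."""
    return reduce(xor, surviving_blocks, 0)


# Disk 2 crashed; the survivors are Disks 1 and 3 (data) and Disk 4 (redundant).
print(format(rebuild_disk([0b11110000, 0b00111000, 0b01100010]), "08b"))  # 10101010
```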

3) An Improvement: RAID 5

• Why is an improvement needed?
-- Shortcoming of RAID level 4: the redundant disk is a bottleneck, since every update of a data disk must also read and write the redundant disk;

• Principle of RAID level 5 (RAID 5):
-- treat each disk as the redundant disk for some of the blocks;

• Why is it feasible?
The rule of failure recovery for the redundant disk and the data disks is the same:
"take the modulo-2 sum of all the corresponding bits of all the other disks"
So there is no need to treat one disk as the redundant disk and the others as data disks.

3) RAID 5 (con’t)

• How do we decide which blocks of each disk use that disk as the redundant disk?
-- If there are n+1 disks, labeled 0 to n, then we can treat the ith cylinder of disk j as redundant if j is the remainder when i is divided by n+1;

• Example:

3) RAID 5 (con’t)_example

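n = 3 (four disks, labeled 0 to 3):
• The first disk, labeled 0: cylinders 4, 8, 12, …;
• The second disk, labeled 1: cylinders 1, 5, 9, …;
• The third disk, labeled 2: cylinders 2, 6, 10, …;
• The fourth disk, labeled 3: cylinders 3, 7, 11, ….

Suppose writes are equally likely to go to any of the 4 disks. For a given disk, the probability of being written is the chance that it is the disk being written (1/4), plus the chance that another disk is written (3/4) and this disk holds the redundant block for it (1/3):
• 1/4 + 3/4 * 1/3 = 1/2
• With m disks in total: 1/m + (m-1)/m * 1/(m-1) = 2/m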
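Both the redundant-disk rule and the write probability can be sketched in a few lines. `redundant_disk` and `p_disk_written` are illustrative names, and the probability follows the slide's assumption that writes are spread evenly over the disks and the redundant blocks are spread evenly too.

```python
from fractions import Fraction


def redundant_disk(cylinder: int, n: int) -> int:
    """Disk (0..n) holding the redundant block for this cylinder, with n+1 disks in all."""
    return cylinder % (n + 1)


def p_disk_written(m: int) -> Fraction:
    """Chance that a given disk is touched by a random write, with m disks in all."""
    written_directly = Fraction(1, m)
    holds_redundant_block = Fraction(m - 1, m) * Fraction(1, m - 1)
    return written_directly + holds_redundant_block


n = 3
for disk in range(n + 1):
    print(disk, [c for c in range(1, 13) if redundant_disk(c, n) == disk])
# 0 [4, 8, 12]
# 1 [1, 5, 9]
# 2 [2, 6, 10]
# 3 [3, 7, 11]
print(p_disk_written(4))   # 1/2
```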

4) Coping with multiple disk crashes

• RAID 6
– can deal with any number of disk crashes if enough redundant disks are used

• Example
a system of seven disks (four data disks, numbered 1-4, and 3 redundant disks, numbered 5-7);

• How to set up this 3*7 matrix?
(Why 3 rows? There are 3 redundant disks.)
1) Every possible column of three 0's and 1's appears, except the column of all three 0's;
2) The column of each redundant disk has a single 1;
3) The column of each data disk has at least two 1's;

4) Coping with multiple disk crashes (con’t)

• Reading:

• read from the data disks and ignore the redundant disks

• Writing:

• Change the data disk

• change the corresponding bits of all the

redundant disks

4) Coping with multiple disk crashes (con’t)

• In a system that has 4 data disks and 3 redundant disks, how can up to 2 disk crashes be corrected?

• Suppose disks a and b failed:
• find some row r (in the 3*7 matrix) in which the columns for a and b are different (suppose a has 0 and b has 1 in row r);
• compute the correct b by taking the modulo-2 sum of the corresponding bits from all the disks other than b that have 1 in row r;
• after getting the correct b, compute the correct a with all the other disks available;

• Example

4) Coping with multiple disk crashes

(con’t)_example

3*7 matrix

              data disks        redundant disks
disk number   1   2   3   4     5   6   7
row 1         1   1   1   0     1   0   0
row 2         1   1   0   1     0   1   0
row 3         1   0   1   1     0   0   1

4) Coping with multiple disk crashes

(con’t)_example

First block of all the disks

disk contents

1) 11110000

2) 10101010

3) 00111000

4) 01000001

5) 01100010

6) 00011011

7) 10001001

4) Coping with multiple disk crashes

(con’t)_example

Two disks crashes;

disk contents

1) 11110000

2) ?????????

3) 00111000

4) 01000001

5) ?????????

6) 00011011

7) 10001001

4) Coping with multiple disk crashes

(con’t)_example

In the 3*7 matrix, row 2 has different values for disks 2 and 5: disk 2's value is 1 and disk 5's value is 0.

So: compute the first block of disk 2 as the modulo-2 sum of all the corresponding bits of disks 1, 4 and 6;

then compute the first block of disk 5 as the modulo-2 sum of all the corresponding bits of disks 1, 2 and 3;

1) 11110000
2) ???????? => 10101010
3) 00111000
4) 01000001
5) ???????? => 01100010
6) 00011011
7) 10001001

13.5 Arranging data on disk

Meghna Jain

ID-205

CS257

Prof: Dr. T.Y.Lin


Data elements are represented as records, which are stored in consecutive bytes of the same disk block.

Basic layout techniques for storing data:

Fixed-Length Records

Records have fixed-length fields, one for each attribute of the tuple.

Allocation criterion: data should start at a word boundary.

Fixed Length record header

1. A pointer to record schema.

2. The length of the record.

3. Timestamps to indicate last modified or last read.

4. Pointers to the fields of the records.

Example

CREATE TABLE employee(

name CHAR(30) PRIMARY KEY,

address VARCHAR(255),

gender CHAR(1),

birthdate DATE

);

The record should start at a word boundary and contain a header and four fields: name, address, gender and birthdate.
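A minimal sketch of placing this record's fields at word boundaries. The field sizes are taken from the offsets in the Movie Star record figure later in this deck (name 30, address 256, gender 1, birth date 10 bytes); the 4-byte word and the 12-byte header are assumptions for illustration, and `align` and `layout` are hypothetical names.

```python
WORD = 4      # assumed word size in bytes
HEADER = 12   # hypothetical header size in bytes

# (field, size in bytes); sizes follow the Movie Star figure (offsets 0, 30, 286, 287, 297).
FIELDS = [("name", 30), ("address", 256), ("gender", 1), ("birthdate", 10)]


def align(offset: int) -> int:
    """Round an offset up to the next multiple of WORD."""
    return (offset + WORD - 1) // WORD * WORD


def layout(fields, header=HEADER):
    """Return each field's starting offset and the padded record length."""
    offsets, pos = {}, align(header)
    for name, size in fields:
        offsets[name] = pos
        pos = align(pos + size)
    return offsets, pos


offsets, length = layout(FIELDS)
print(offsets)   # {'name': 12, 'address': 44, 'gender': 300, 'birthdate': 304}
print(length)    # 316
```

The padding costs a few bytes per record but lets every field be read with word-aligned accesses.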

Packing Fixed-Length Records into Blocks:

Records are stored in blocks on the disk, and they are moved into main memory when we need to access or update them.

A block header is written first, and it is followed by a series of records.

The block header contains the following information:

• Links to one or more blocks that are part of a

network of blocks.

• Information about the role played by this block in such

a network.

• Information about the relation, the tuples in this block

belong to.

• A "directory" giving the offset of each record in the

block.

• Time stamp(s) to indicate time of the block's last

modification and/or access.

Example

Along with the header, we can pack as many records as fit in one block, as shown in the figure; the remaining space is unused.
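The number of fixed-length records that fit in a block is a simple division. The numbers below are hypothetical (a 4096-byte block, a 12-byte block header, 316-byte records), and `records_per_block` is an illustrative name.

```python
def records_per_block(block_size: int, header_size: int, record_size: int) -> int:
    """How many fixed-length records fit after the block header; the rest is unused."""
    return (block_size - header_size) // record_size


n = records_per_block(4096, 12, 316)
print(n)                      # 12
print(4096 - 12 - n * 316)    # 292 bytes unused
```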

Thank You

13.6 REPRESENTING BLOCK AND RECORD ADDRESSES

Ramya Karri

CS257 Section 2

ID: 206

INTRODUCTION

• Address of a block and a record
o In main memory: the address of the block is the virtual-memory address of its first byte, and the address of a record within the block is the virtual-memory address of the first byte of the record
o In secondary memory: a sequence of bytes describes the location of the block in the overall system

• The sequence of bytes describing the location of the block includes the device ID for the disk, the cylinder number, etc.

• The record's address is the block address plus the offset of the first byte of the record within the block.

ADDRESSES IN CLIENT-SERVER SYSTEMS

• Addresses in the address space are represented in two ways:
o Physical addresses: byte strings that determine the place within the secondary storage system where the record can be found.
o Logical addresses: arbitrary strings of bytes of some fixed length

• Physical address bits are used to indicate:
o Host to which the storage is attached
o Identifier for the disk
o Number of the cylinder
o Number of the track
o Offset of the beginning of the record

ADDRESSES IN CLIENT-SERVER SYSTEMS (CONTD..)

• A logical address is an arbitrary string of bytes of some fixed length.

• A map table relates logical addresses to physical addresses.

[Figure: map table with columns Logical and Physical, relating a logical address to a physical address.]

LOGICAL AND STRUCTURED ADDRESSES

• What is the purpose of a logical address?

• It gives more flexibility when we:
o Move the record around within the block
o Move the record to another block

• It also gives us options for deciding what to do when a record is deleted

[Figure: block with an offset table. Labels: Header, Offset table, Unused, Record 4, Record 3, Record 2, Record 1.]
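A toy sketch of a block with an offset table, assuming records are placed from the end of the block and an outside pointer is the pair (block, slot). `Block` and its methods are hypothetical, and there is no free-space or overflow checking. Moving a record changes only its offset-table entry, and a deleted slot keeps a null entry (a "tombstone"), so existing (block, slot) addresses never silently point at a different record.

```python
class Block:
    """Toy block: an offset table maps slot numbers to (offset, length) or None."""

    def __init__(self, size: int = 4096):
        self.data = bytearray(size)
        self.table: list = []        # slot -> (offset, length), or None once deleted
        self.free_end = size         # records are placed from the end of the block

    def insert(self, record: bytes) -> int:
        self.free_end -= len(record)
        self.data[self.free_end:self.free_end + len(record)] = record
        self.table.append((self.free_end, len(record)))
        return len(self.table) - 1   # slot number, used in the record's address

    def read(self, slot: int):
        entry = self.table[slot]
        if entry is None:
            return None
        off, n = entry
        return bytes(self.data[off:off + n])

    def move(self, slot: int, new_offset: int) -> None:
        """Relocate a record within the block; only its offset-table entry changes."""
        off, n = self.table[slot]
        self.data[new_offset:new_offset + n] = self.data[off:off + n]
        self.table[slot] = (new_offset, n)

    def delete(self, slot: int) -> None:
        self.table[slot] = None      # tombstone: the slot is never reused


b = Block(64)
s1 = b.insert(b"alpha")
s2 = b.insert(b"beta")
b.move(s1, 20)                       # s1 is still the record's address
b.delete(s2)
print(b.read(s1), b.read(s2))        # b'alpha' None
```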

POINTER SWIZZLING

• Pointers are common in object-relational database systems

• It is important to learn about the management of pointers

• Every data item (block, record, etc.) has two addresses:
o database address: its address on the disk
o memory address, if the item is in virtual memory

• When the item is in main memory, it is more efficient to use the memory address.

• A translation table translates the database addresses of items currently in virtual memory to their current memory addresses.

POINTER SWIZZLING (CONTD…)

• Translation table: maps database addresses to memory addresses

[Figure: translation table with columns Dbaddr (database address) and Mem-addr (memory address).]

• All addressable items in the database have entries in the map table, while only those items currently in memory are mentioned in the translation table

POINTER SWIZZLING (CONTD…)

• A pointer consists of the following two fields:
o A bit indicating the type of address
o The database or memory address

[Figure, Example 13.17: Block 1 on disk and its copy in memory. The pointer within Block 1 is swizzled; the pointer to Block 2, which is still on disk, is unswizzled.]

EXAMPLE 13.7

• Block 1 has a record with pointers to a second record on the same block and to a record on another block

• If Block 1 is copied to memory:
o The first pointer, which points within Block 1, can be swizzled so that it points directly to the memory address of the target record
o Since Block 2 is not in memory, we cannot swizzle the second pointer

POINTER SWIZZLING (CONTD…)

• Three types of swizzling:
o Automatic swizzling: as soon as a block is brought into memory, swizzle all relevant pointers.
o Swizzling on demand: only swizzle a pointer if and when it is actually followed.
o No swizzling: pointers are never swizzled; items are accessed using the database address.
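Swizzling on demand can be sketched with a translation table held in a dictionary. Everything here is hypothetical: a Python object reference stands in for a memory address, a dictionary `disk` stands in for secondary storage, and `load_item` stands in for the buffer manager.

```python
class Pointer:
    """Pointer field: a bit telling which kind of address it holds, plus the address."""

    def __init__(self, db_address):
        self.swizzled = False        # False: `address` is a database (disk) address
        self.address = db_address    # once swizzled: the in-memory item itself


translation_table = {}   # database address -> item currently in memory


def load_item(db_address, disk):
    """Hypothetical buffer-manager step: copy an item into memory and record it."""
    item = disk[db_address]
    translation_table[db_address] = item
    return item


def follow(ptr, disk):
    """Swizzling on demand: translate a pointer only the first time it is followed."""
    if not ptr.swizzled:
        item = translation_table.get(ptr.address)
        if item is None:                  # not in memory yet: bring it in
            item = load_item(ptr.address, disk)
        ptr.address, ptr.swizzled = item, True
    return ptr.address


disk = {("block2", 0): "record on Block 2"}   # hypothetical (block, slot) address
p = Pointer(("block2", 0))
print(follow(p, disk), p.swizzled)           # record on Block 2 True
```

Later calls to `follow(p, disk)` skip the table lookup entirely, which is the point of swizzling.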

PROGRAMMER CONTROL OF SWIZZLING

The programmer calls for pointers to be swizzled only as needed.

• Unswizzling
o When a block is moved from memory back to disk, all its pointers must go back to database (disk) addresses
o Use the translation table again
o It is important to have an efficient data structure for the translation table

PINNED RECORDS AND BLOCKS

• A block in memory is said to be pinned if it cannot be written back to disk safely.

• The header of the block has one bit telling whether or not the block is pinned.

• If block B1 has a swizzled pointer to an item in block B2, then B2 is pinned.

• To unpin a block, we must unswizzle any pointers to it:
o Keep in the translation table the places in memory holding swizzled pointers to that item
o Unswizzle those pointers (use the translation table to replace the memory addresses with database (disk) addresses)
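A sketch of pin tracking and unswizzling, assuming each translation-table entry records where the swizzled pointers to its item live. `Pointer`, `TranslationEntry`, `swizzle`, `is_pinned` and `unpin` are hypothetical names; the check is per item, and a block would be pinned if any item in it is.

```python
class Pointer:
    def __init__(self, db_address):
        self.swizzled, self.address = False, db_address


class TranslationEntry:
    """Translation-table entry that also remembers the swizzled pointers to its item."""

    def __init__(self, db_address, item):
        self.db_address, self.item = db_address, item
        self.swizzled_from = []          # pointers currently holding the memory address


def swizzle(ptr, entry):
    ptr.address, ptr.swizzled = entry.item, True
    entry.swizzled_from.append(ptr)


def is_pinned(entry):
    """The item cannot safely be written back while swizzled pointers refer to it."""
    return bool(entry.swizzled_from)


def unpin(entry):
    """Unswizzle every pointer to the item, restoring its database (disk) address."""
    for ptr in entry.swizzled_from:
        ptr.address, ptr.swizzled = entry.db_address, False
    entry.swizzled_from.clear()


e = TranslationEntry(("block2", 0), "record on Block 2")
p = Pointer(("block2", 0))
swizzle(p, e)
print(is_pinned(e))                  # True
unpin(e)
print(is_pinned(e), p.address)       # False ('block2', 0)
```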

Thank you

Eswara Satya Pavan Rajesh Pinapala

CS 257

ID: 221

Topics

• Records with Variable-Length Fields
• Records with Repeating Fields
• Variable-Format Records
• Records That Do Not Fit in a Block
• BLOBs

Example

Fig 1: Movie star record with four fields: name (offset 0), address (offset 30), gender (offset 286) and birth date (offset 287); the record ends at offset 297.

Records with Variable-Length Fields

An effective way to represent variable-length records is as follows:
• Fixed-length fields are kept ahead of the variable-length fields
• The record header contains:
o The length of the record
o Pointers to the beginnings of all variable-length fields except the first one

Records with Variable-Length Fields

[Figure 2: A Movie Star record with name and address implemented as variable-length character strings. Labels: header information (record length, to address), gender, birth date, name, address.]
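A minimal sketch of this layout using Python's `struct` module. The header format (two little-endian 4-byte integers: record length and offset of `address`) is an assumption; `pack_star` and `unpack_star` are hypothetical names. The fixed fields come first, the name needs no pointer because it always starts right after them, and only the address offset is stored.

```python
import struct

# Assumed header: total record length, then offset of `address` (4 bytes each).
HEADER = struct.Struct("<II")
FIXED_SIZE = 1 + 10          # gender (1 byte) + birth date (10 bytes), as in Fig 1


def pack_star(name: str, address: str, gender: str, birthdate: str) -> bytes:
    fixed = gender.encode("ascii") + birthdate.encode("ascii")
    if len(fixed) != FIXED_SIZE:
        raise ValueError("gender must be 1 character and birthdate 10 characters")
    name_b, addr_b = name.encode("utf-8"), address.encode("utf-8")
    name_off = HEADER.size + FIXED_SIZE   # first variable field: offset is implied
    addr_off = name_off + len(name_b)     # later variable fields: offset kept in header
    length = addr_off + len(addr_b)
    return HEADER.pack(length, addr_off) + fixed + name_b + addr_b


def unpack_star(rec: bytes):
    length, addr_off = HEADER.unpack_from(rec)
    start = HEADER.size
    gender = rec[start:start + 1].decode("ascii")
    birthdate = rec[start + 1:start + FIXED_SIZE].decode("ascii")
    name = rec[start + FIXED_SIZE:addr_off].decode("utf-8")
    address = rec[addr_off:length].decode("utf-8")
    return name, address, gender, birthdate


rec = pack_star("Jane Doe", "123 Maple St.", "F", "1970-01-01")   # hypothetical data
print(len(rec))           # 40
print(unpack_star(rec))   # ('Jane Doe', '123 Maple St.', 'F', '1970-01-01')
```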

Records with Repeating Fields

• A record contains a variable number of occurrences of a field F

• All occurrences of field F are grouped together, and the record header contains a pointer to the first occurrence of field F

• L bytes are devoted to each instance of field F

• Locating an occurrence of field F within the record:
o Add to the offset of field F the integer multiples of L, starting with 0, L, 2L, 3L and so on
o Stop upon reaching the offset of the field following F
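Locating the occurrences is a stepped range. A sketch with a hypothetical record in which F starts at byte 48, the next field starts at byte 80, and each occurrence takes L = 8 bytes; `occurrence_offsets` is an illustrative name.

```python
def occurrence_offsets(offset_f: int, offset_next: int, L: int) -> list[int]:
    """Offsets of each L-byte occurrence of repeating field F."""
    # Add 0, L, 2L, ... to F's offset, stopping at the offset of the field after F.
    return list(range(offset_f, offset_next, L))


print(occurrence_offsets(48, 80, 8))   # [48, 56, 64, 72]
```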

Records with Repeating Fields

[Figure 3: A record with a repeating group of references to movies. Labels: other header information, record length, to address, to movie pointers, name, address, pointers to movies.]

Records with Repeating Fields

[Figure 4: Storing variable-length fields separately from the record. Labels: record header information, to name, length of name, to address, length of address, to movie references, number of references, name, address.]

Records with Repeating Fields

Advantage

• Keeping the record itself fixed-length allows records to be searched more efficiently, minimizes the overhead in the block headers, and allows records to be moved within or among blocks with minimum effort.

Disadvantage

• Storing variable length components on another block

increases the number of disk I/O’s needed to examine

all components of a record.


Records with Repeating Fields

A compromise strategy is to allocate a fixed portion of the record for the repeating fields:

• If there are fewer occurrences than the allocated space can hold, some space is left unused.

• If there are more occurrences than the allocated space can hold, the extra occurrences are stored in a different location, and a pointer to that location plus a count of the additional occurrences is stored in the record.

Variable Format Records

• Records that do not have a fixed schema

• Variable format records are represented by a sequence of tagged fields.

• Each tagged field consists of:

o Attribute or field name

o Type of the field

o Length of the field

o Value of the field

• Why use tagged fields?

o Information-integration applications

o Records with a very flexible schema

Variable Format Records

Fig 5: A record with tagged fields

Field code                     Type code          Length   Value
N (code for name)              S (string type)    14       Clint Eastwood
R (code for restaurant owned)  S (string type)    16       Hog’s Breath Inn
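The tagged layout of Fig 5 can be encoded as below. The one-byte field code, one-byte type code, and one-byte length are assumptions made for illustration (so values are limited to 255 bytes); the codes N, R, and S are taken from the figure, and the function names are hypothetical.

```python
def encode_tagged(fields: list[tuple[str, str, str]]) -> bytes:
    """Encode (field code, type code, value) triples as tagged fields.

    Assumed layout per field: 1-byte field code, 1-byte type code,
    1-byte length, then the value bytes.
    """
    out = bytearray()
    for field_code, type_code, value in fields:
        data = value.encode("ascii")
        out += field_code.encode("ascii") + type_code.encode("ascii")
        out.append(len(data))  # one byte: raises ValueError for values over 255 bytes
        out += data
    return bytes(out)

def decode_tagged(record: bytes) -> list[tuple[str, str, str]]:
    """Walk the record field by field, using each length to find the next tag."""
    fields, pos = [], 0
    while pos < len(record):
        field_code = chr(record[pos])
        type_code = chr(record[pos + 1])
        length = record[pos + 2]
        value = record[pos + 3:pos + 3 + length].decode("ascii")
        fields.append((field_code, type_code, value))
        pos += 3 + length
    return fields
```

Fields that a record does not have are simply absent, which is what makes this representation suitable for flexible schemas.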

Records that do not fit in a block

• When the length of a record is greater than the block size, the record is divided and placed into two or more blocks.

• The portion of the record in each block is referred to as a RECORD FRAGMENT.

• A record with two or more fragments is called a SPANNED RECORD.

• A record that does not cross a block boundary is called an UNSPANNED RECORD.

Spanned Records

• Spanned records require the following extra header information:

o A bit indicating whether or not it is a fragment

o If it is a fragment, bits indicating whether it is the first or last fragment of its record

o Pointers to the next and/or previous fragment of the same record, if they exist
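A sketch of how a long record might be cut into fragments carrying the extra header information described above. It assumes, for simplicity, that every block offers the same amount of free space, and it uses fragment indices as stand-ins for real block pointers; `Fragment` and `split_record` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Fragment:
    is_fragment: bool     # bit: is this piece a fragment of a spanned record?
    is_first: bool        # bit: first fragment of its record
    is_last: bool         # bit: last fragment of its record
    prev: Optional[int]   # previous fragment (an index standing in for a block pointer)
    next: Optional[int]   # next fragment
    data: bytes

def split_record(record: bytes, space_per_block: int) -> list[Fragment]:
    """Cut a record into pieces that each fit into one block's free space."""
    chunks = [record[i:i + space_per_block] for i in range(0, len(record), space_per_block)]
    last = len(chunks) - 1
    return [
        Fragment(
            is_fragment=last > 0,          # a record that fits in one block is unspanned
            is_first=i == 0,
            is_last=i == last,
            prev=i - 1 if i > 0 else None,
            next=i + 1 if i < last else None,
            data=chunk,
        )
        for i, chunk in enumerate(chunks)
    ]
```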

Records that do not fit in a block

Figure 6: Storing spanned records across blocks (block 1 holds a block header, record 1, and fragment 2-a; block 2 holds a block header, fragment 2-b, and record 3; each record or fragment begins with a record header)

BLOBS

• Large binary objects are called BLOBS, e.g., audio files and video files.

• Storage of BLOBS: they must be stored on a sequence of blocks, preferably allocated consecutively on one or more cylinders of the disk so that they can be retrieved efficiently. They can also be striped across several disks for faster retrieval.

• Retrieval of BLOBS: pass only the small fields of the record to the client first, and let the client request the blocks of the BLOB one at a time.

Record Modifications

Chapter 13

Section 13.8

Neha Samant

CS 257

(Section II) Id 222

5/14/2009

Modification types

• Insertion

• Deletion

• Update


Insertion

• Insertion of records without order

o Records can be placed in any block with empty space, or in a new block.

• Insertion of records in fixed order

o Space available in the block

o No space available in the block (outside the block)

Structured address

A pointer to a record from outside the block. It consists of the block address and the location of the record’s entry in the offset table.

Insertion in fixed order

Space available within the block

• Use an offset table in the header of each block, with pointers to the location of each record in the block.

• The records are slid within the block, and the pointers in the offset table are adjusted.

(Figure: a block with the header and offset table at the front, unused space in the middle, and records 4, 3, 2, 1 stored from the unused space to the end of the block)
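The following sketch illustrates insertion with an offset table. Assumptions: a hypothetical 64-byte block with 2-byte offset entries, and offset-table entries kept in a Python list rather than in header bytes. Entries are only ever appended, so a structured address (block, entry number) stays valid while records slide; a separate `order` list records the key order.

```python
import bisect
from typing import Optional

BLOCK_SIZE = 64   # hypothetical block size in bytes
ENTRY_SIZE = 2    # hypothetical size of one offset-table entry

class Block:
    """Offset table grows from the front; records are packed toward the end in key order."""

    def __init__(self) -> None:
        self.data = bytearray(BLOCK_SIZE)
        self.offsets: list[int] = []   # offset table: entry number -> record offset
        self.lengths: list[int] = []   # record length per entry
        self.keys: list[str] = []      # record key per entry
        self.order: list[int] = []     # entry numbers in key order
        self.low = BLOCK_SIZE          # start of the record area

    def free_space(self) -> int:
        return self.low - ENTRY_SIZE * len(self.offsets)

    def insert(self, key: str, record: bytes) -> Optional[int]:
        n = len(record)
        if n + ENTRY_SIZE > self.free_space():
            return None                # no room in this block
        pos = bisect.bisect_left([self.keys[e] for e in self.order], key)
        gap_end = self.offsets[self.order[pos]] if pos < len(self.order) else BLOCK_SIZE
        # Slide the records with smaller keys n bytes toward the front of the block.
        self.data[self.low - n:gap_end - n] = self.data[self.low:gap_end]
        self.data[gap_end - n:gap_end] = record
        for e in self.order[:pos]:     # adjust the offset-table pointers that moved
            self.offsets[e] -= n
        entry = len(self.offsets)
        self.offsets.append(gap_end - n)
        self.lengths.append(n)
        self.keys.append(key)
        self.order.insert(pos, entry)
        self.low -= n
        return entry

    def read(self, entry: int) -> bytes:
        """Follow an offset-table entry to its record."""
        start = self.offsets[entry]
        return bytes(self.data[start:start + self.lengths[entry]])
```

A return value of `None` corresponds to the case where no space is available in the block.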

Insertion in fixed order

No space available within the block (outside the block)

• Find space on a “nearby” block.

o If there is no space in block B1, look at the following block B2 in the sorted order of blocks.

o If space is available in B2, move the highest records of B1 to B2 and slide the records around on both blocks.

• Create an overflow block.

o Records can be stored in the overflow block.

o Each block has room in its header for a pointer to an overflow block.

o The overflow block can in turn point to a second overflow block.

(Figure: block B with a pointer to the overflow block for B)

Deletion

• Recover space after deletion

o When using an offset table, the records can be slid around the block so that there is one unused region in the center that can be recovered.

o If we cannot slide records, an available-space list can be maintained in the block header.

o The list head goes in the block header, and the available regions hold the links in the list.

Deletion

• Use of tombstone

o A tombstone is left in place of a deleted record so that pointers to the deleted record do not end up pointing to a new record stored in its place.

o The tombstone is permanent; it must remain until the entire database is reconstructed.

o Where a tombstone is placed depends on the nature of the record pointers.

o If pointers go to fixed locations from which the location of the record is found, we put the tombstone in that fixed location. For example, with an offset table the tombstone can be a null pointer in the record’s offset-table entry.

o A map table is used to translate logical record addresses to physical addresses; the tombstone can then be a null pointer in place of the physical address.
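A map-table tombstone can be sketched as follows; the `MapTable` class and its methods are hypothetical. Deleting a record replaces its physical address with `None` (the null pointer), and logical addresses are never reused, so an old pointer can never reach a new record even if the physical slot is reused.

```python
from typing import Optional

class MapTable:
    """Hypothetical map table: logical record address -> (block, offset)."""

    def __init__(self) -> None:
        self.entries: dict[int, Optional[tuple[int, int]]] = {}
        self.next_logical = 0

    def add(self, block: int, offset: int) -> int:
        logical = self.next_logical
        self.next_logical += 1          # logical addresses are never reused
        self.entries[logical] = (block, offset)
        return logical

    def delete(self, logical: int) -> None:
        self.entries[logical] = None    # tombstone: null pointer instead of an address

    def lookup(self, logical: int) -> Optional[tuple[int, int]]:
        return self.entries[logical]    # None means the record was deleted
```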

Deletion

• Use of tombstone

o If we need to replace records themselves by tombstones, place the bit that serves as the tombstone at the beginning of the record.

o This bit remains at the record’s location, and the subsequent bytes can be reused for another record.

(Figure: record 1 and record 2, each with a tombstone bit at its beginning)

Record 1 can be replaced, but the tombstone remains; record 2 has no tombstone and can be seen when we follow a pointer to it.

Update

• Fixed-length update

o No effect on the storage system, since the record occupies the same space as before the update.

• Variable-length update

o Longer length

o Shorter length

Update

Variable-length update (longer length)

• Stored on the same block:

o Slide records

o Create an overflow block

• Stored on another block:

o Move records around that block

o Create a new block for storing the variable-length fields

Update

Variable-length update (shorter length)

• Same as deletion:

o Recover space

o Consolidate space

B-Trees & Bitmap Indexes

14.2

DATABASE SYSTEMS – The Complete Book

Presented by: Maciej Kicinski

Under the supervision of: Dr. T.Y. Lin

B-Trees

Structure

• A balanced tree: all paths from the root to a leaf have the same length.

• Typically there are three layers: the root, an intermediate layer, and the leaves.

• There is a parameter n associated with each B-tree block. Each block has space for n search keys and n+1 pointers.

• The root may hold as few as one key (two pointers), but all other blocks must be at least half full.

Structure

● A typical node has n search keys and n+1 pointers.

● A typical interior node has pointers pointing to child nodes (such as leaves), not to record values.

● A typical leaf has pointers pointing to records.


Application

• The (n+1)st pointer of a leaf node points to the next leaf.

• Typical ways to use a B-tree with a data file:

o The search key of the B-tree is the primary key for the data file.

o The data file is sorted by its primary key.

o The data file is sorted by an attribute that is not a key, and this attribute is the search key for the B-tree.

Lookup

At an interior node, choose the pointer to follow by comparing the search value with the node’s keys.

At a leaf node, find the key that matches the search value; the pointer associated with that key leads to the data.
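A lookup sketch over a structure like the one described above, assuming integer keys and strings standing in for record pointers; `Leaf`, `Interior`, and `lookup` are hypothetical names. At an interior node, `bisect_right` picks the child whose key range contains the search value.

```python
import bisect
from dataclasses import dataclass
from typing import Optional, Union

@dataclass
class Leaf:
    keys: list[int]
    records: list[str]               # stand-ins for pointers to records
    next: Optional["Leaf"] = None    # the (n+1)st pointer: next leaf

@dataclass
class Interior:
    keys: list[int]
    children: list[Union["Interior", Leaf]]   # len(children) == len(keys) + 1

def lookup(node, key: int) -> Optional[str]:
    # Interior node: key < keys[0] goes to children[0];
    # keys[i-1] <= key < keys[i] goes to children[i].
    while isinstance(node, Interior):
        node = node.children[bisect.bisect_right(node.keys, key)]
    # Leaf: find the matching key; its pointer leads to the record.
    i = bisect.bisect_left(node.keys, key)
    if i < len(node.keys) and node.keys[i] == key:
        return node.records[i]
    return None
```

Each iteration of the `while` loop corresponds to one block read in a disk-resident B-tree.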

Insertion

• Use lookup to find the leaf in which the new key belongs, and insert the key and its pointer to the data there.

• If the leaf is full, create a new node and split the keys between the two.

• Insert a key and pointer for the new node into the parent; if the parent is also full, split it too, recursively moving up the tree.

• If the root itself is full and splits, the process ends by creating a new root.
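The leaf-splitting step can be sketched as below, assuming a leaf holds at most n keys; `insert_into_leaf` is a hypothetical name. Only the split of one leaf is shown; inserting the separator key into the parent (and splitting the parent if it is full) follows the same pattern recursively. The first ⌈(n+1)/2⌉ keys stay in the old node, so both halves are at least half full.

```python
import bisect

def insert_into_leaf(keys: list[int], key: int, n: int):
    """Insert key into a sorted leaf holding at most n keys.

    Returns (left, right, separator). If no split is needed, right and
    separator are None. On a split, separator (the smallest key of the new
    right node) must be inserted into the parent.
    """
    keys = keys.copy()
    bisect.insort(keys, key)
    if len(keys) <= n:
        return keys, None, None
    mid = (n + 2) // 2          # ceil((n+1)/2) keys stay in the old node
    left, right = keys[:mid], keys[mid:]
    return left, right, right[0]
```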

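Deletion

Perform a lookup to find the key to delete, and delete it from its node. If the node is no longer at least half full, either move a key from an adjacent sibling that has more than the minimum (and adjust the key in the parent), or join the node with an adjacent sibling and recursively delete the corresponding key and pointer from the parent.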

Efficiency

B-trees allow lookup, insertion, and deletion of records using very few disk I/Os.

Each level of a B-tree requires one block read; at each level we follow a pointer to the next level, until the final read.

Efficiency

Three levels are usually sufficient for B-trees: if each block holds 255 pointers, a three-level tree can reach 255^3 ≈ 16.6 million records.

Disk I/Os can be reduced further by keeping upper levels of the B-tree in main memory. Keeping the root block (255 pointers) in memory reduces the B-tree reads per lookup to 2, and it is even possible to keep the 255 blocks of the second level in memory as well, reducing the reads to 1.
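The arithmetic above, written out as a quick check. The fan-out of 255 comes from the slide; the read counts cover B-tree blocks only, not the extra read that fetches the record itself. The function names are hypothetical.

```python
POINTERS_PER_BLOCK = 255   # fan-out used on the slide

def reachable_records(levels: int, fanout: int = POINTERS_PER_BLOCK) -> int:
    """Approximate number of records a B-tree with this many levels can point to."""
    return fanout ** levels

def btree_block_reads(levels: int, levels_in_memory: int) -> int:
    """Disk reads of B-tree blocks per lookup when the top levels stay in memory."""
    return max(levels - levels_in_memory, 0)
```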


B-Trees & Bitmap Indexes

14.7

DATABASE SYSTEMS – The Complete Book

Presented by: Deepti Kundu

Under the supervision of: Dr. T.Y. Lin

Bitmap Indexes

Definition

A bitmap index for a field F is a collection of bit-vectors of length n, one for each possible value that may appear in field F, where n is the number of records.[1]

The vector for value v has 1 in position i if the ith record has v in field F, and it has 0 there if not.

What does that mean?

• Assume relation R with:

o 2 attributes A and B, where A is of type Integer and B is of type String

o 6 records, numbered 1 through 6 as shown:

Record   A    B
1        30   foo
2        30   bar
3        40   baz
4        50   foo
5        40   bar
6        30   baz

Example Continued…

• A bitmap for attribute B is:

Value   Vector
foo     100100
bar     010010
baz     001001

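Building the bit-vectors for the example relation takes one pass over the records. In this sketch (`bitmap_index` is a hypothetical name) bit-vectors are Python strings of '0' and '1' for readability; a real system would pack them into machine words.

```python
def bitmap_index(values: list) -> dict:
    """One bit-vector per distinct value; bit i is 1 iff record i+1 has that value."""
    n = len(values)
    vectors: dict = {}
    for i, v in enumerate(values):
        vectors.setdefault(v, ["0"] * n)[i] = "1"
    return {v: "".join(bits) for v, bits in vectors.items()}
```

Applied to column B it reproduces the table above; applied to column A it gives one vector each for 30, 40, and 50.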

Where does this lead?

• A bitmap index is a special kind of database index that uses bitmaps.[2]

• Bitmap indexes have traditionally been considered to work well for data such as gender, which has a small number of distinct values (e.g., male and female) but many occurrences of those values.[2]

A little more…

• A bitmap index for attribute A of relation R is:

o A collection of bit-vectors

o The number of bit-vectors = the number of distinct values of A in R.

o The length of each bit-vector = the cardinality of R.

o The bit-vector for value v has 1 in position i if the ith record has v in attribute A, and it has 0 there if not.[3]

• Records are allocated permanent numbers.[3]

• There is a mapping between record numbers and record addresses.[3]

Motivation for Bitmap Indexes

• Very efficient when used for partial-match queries.[3]

• They offer the advantage of buckets:[2]

o We can find tuples with several specified attribute values without first retrieving all the records that match each of the attributes.

• They can also help answer range queries.[3]

Another Example

Six records, each a pair of an integer and a character:

{(5,d),(79,t),(4,d),(79,d),(5,t),(6,a)}

5 = 100010
79 = 010100
4 = 001000
6 = 000001
d = 101100
t = 010010
a = 000001

Example Continued…

{(5,d),(79,t),(4,d),(79,d),(5,t),(6,a)}

Searching is easy: just AND the bit-vectors together.

To search for (5,d):

5 = 100010

d = 101100

100010 AND 101100 = 100000

Only the first bit is set, so the matching record is record 1, (5,d).
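The AND step can be checked with Python integers serving as bit-vectors. A minimal sketch; `and_vectors` and `matching_records` are hypothetical helper names.

```python
def and_vectors(*vectors: str) -> str:
    """Bitwise AND of equal-length bit-vectors written as '0'/'1' strings."""
    n = len(vectors[0])
    result = int(vectors[0], 2)
    for v in vectors[1:]:
        result &= int(v, 2)
    return format(result, f"0{n}b")   # keep leading zeros

def matching_records(vector: str) -> list[int]:
    """1-based record numbers whose bit is set."""
    return [i + 1 for i, bit in enumerate(vector) if bit == "1"]
```

For example, searching for (79,d) ANDs 010100 with 101100, leaving only record 4.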

Compressed Bitmaps

• Assume:

o The number of records in R is n.

o Attribute A has m distinct values in R.

• The size of a bitmap index on attribute A is m*n bits.

• If m is large, the fraction of 1’s in each bit-vector will be around 1/m.

o This gives an opportunity to encode (compress) the bit-vectors.

• A common encoding approach is called run-length encoding.[1]

Run-length encoding

• Represents runs:

o A run is a sequence of i 0’s followed by a 1; it is represented by some suitable binary encoding of the integer i.

• A run of i 0’s followed by a 1 is encoded by:

o First computing how many bits are needed to represent i in binary; say k.

o Then representing the run by k-1 1’s and a single 0, followed by the k bits that represent i in binary.

o The encoding for i = 1 is 01 (k = 1).

o The encoding for i = 0 is 00 (k = 1).

• We concatenate the codes for each run together, and the resulting sequence of bits is the encoding of the entire bit-vector.
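Encoding and decoding with this scheme can be sketched as follows; the function names are hypothetical. Trailing 0’s after the last 1 are not represented, so decoding recovers the bit-vector only up to its last 1.

```python
def encode_run(i: int) -> str:
    """Code for a run of i 0's followed by a 1."""
    bits = format(i, "b")   # i in binary; i = 0 gives "0", so k is at least 1
    k = len(bits)
    return "1" * (k - 1) + "0" + bits

def encode_bitvector(vector: str) -> str:
    """Concatenate the codes of all runs; 0's after the last 1 are not encoded."""
    codes, zeros = [], 0
    for bit in vector:
        if bit == "0":
            zeros += 1
        else:
            codes.append(encode_run(zeros))
            zeros = 0
    return "".join(codes)

def decode_runs(code: str) -> list[int]:
    """Recover the run lengths i from a concatenation of run codes."""
    runs, pos = [], 0
    while pos < len(code):
        k = 1
        while code[pos] == "1":   # k-1 leading 1's ...
            k += 1
            pos += 1
        pos += 1                  # ... then a single 0
        runs.append(int(code[pos:pos + k], 2))
        pos += k
    return runs

def runs_to_bitvector(runs: list[int]) -> str:
    return "".join("0" * i + "1" for i in runs)
```

The code is self-delimiting: the count of leading 1’s tells the decoder how many bits of i to read next, so no separators are needed between runs.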

Understanding with an Example

• Let us decode the sequence 11101101001011.

• Starting at the beginning (leftmost bit):

o First run: the first 0 is at position 4, so k = 4. The next 4 bits are 1101, so the first integer is i = 13.

o Second run: the remaining bits are 001011. The first bit is already 0, so k = 1, and the next bit is 0, so i = 0.

o Last run: the remaining bits are 1011. The first 0 is at position 2, so k = 2, and the next 2 bits are 11, so i = 3.

• The run lengths are thus 13, 0, 3, hence our bit-vector is:

0000000000000110001

Managing Bitmap Indexes

1) How do you find the bit-vector for a specific value efficiently?

2) After selecting the records that match, how do you retrieve them efficiently?

3) When data is changed, how do you alter the bitmap index?

1) Finding bit-vectors

• Think of each bit-vector as a record whose key is the corresponding attribute value.[1]

• Any secondary-storage technique for retrieving records by key will then retrieve the bit-vectors efficiently.[1]

• Create a secondary index with the attribute value as the search key:[3]

o B-tree

o Hash table

2) Finding Records

• Create a secondary index with the record number as the search key.[3]

• In other words:

o Once you learn that you need record k, you can look it up through a secondary index whose search key is the record number, k.[1]

3) Handling Modifications

Two things to remember:

• Record numbers must remain fixed once assigned.

• Changes to the data file require changes to the bitmap index.

Deletion

• A tombstone replaces the deleted record.

• The corresponding bit is set to 0.

Insertion

• The record is assigned the next record number.

• A bit of value 0 or 1 is appended to each bit-vector.

• If the new record contains a new value of the attribute, add one bit-vector.

Modification

• Change the bit corresponding to the old value of the modified record to 0.

• Change the bit corresponding to the new value of the modified record to 1.

• If the new value is a new value of A, then insert a new bit-vector.
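The three modification rules can be sketched as a small class; `BitmapIndex` and its methods are hypothetical, bit-vectors are plain lists of 0/1, and the caller is assumed to pass the record’s old value (which a real system would read from the data file).

```python
class BitmapIndex:
    """Bitmap index on one attribute; record numbers are 1-based and never reused."""

    def __init__(self) -> None:
        self.vectors: dict = {}   # attribute value -> list of 0/1 bits
        self.n = 0                # record numbers assigned so far

    def insert(self, value) -> int:
        """Assign the next record number and append a bit to every bit-vector."""
        for vec in self.vectors.values():
            vec.append(0)
        if value not in self.vectors:          # new value: add a bit-vector
            self.vectors[value] = [0] * (self.n + 1)
        self.vectors[value][self.n] = 1
        self.n += 1
        return self.n

    def delete(self, record: int, value) -> None:
        """The record number stays taken (tombstone); its bit is set to 0."""
        self.vectors[value][record - 1] = 0

    def modify(self, record: int, old, new) -> None:
        self.vectors[old][record - 1] = 0
        if new not in self.vectors:            # new value of A: insert a new bit-vector
            self.vectors[new] = [0] * self.n
        self.vectors[new][record - 1] = 1
```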

References

[1] H. Garcia-Molina, J. D. Ullman, and J. Widom, Database Systems: The Complete Book.

[2] http://en.wikipedia.org/wiki/Bitmap_index#Example

[3] faculty.kfupm.edu.sa/ICS/adam/ICS541/L10-md-bitmap-indexing.ppt

[4] http://csis.bitspilani.ac.in/faculty/goel/Data%20Warehousing/Lecture%20Notes/Lecture%20%239%20%20Bitmap%20Indexes%20in%20DW.doc (a good document for reading about the concepts of bitmap indexes)

Concurrency Control

18.1 – 18.2

Chiu Luk

CS257 Database Systems Principles

Spring 2009

Concurrency Control

• Concurrency control in database management systems (DBMS) ensures that database transactions are performed concurrently without violating the data integrity of the database.

• Executed transactions should follow the ACID rules. The DBMS must guarantee that only serializable (unless serializability is intentionally relaxed) and recoverable schedules are generated.

• It also guarantees that no effect of committed transactions is lost, and that no effect of aborted (rolled back) transactions remains in the database.

Transaction ACID rules

Atomicity - Either the effects of all or none of its operations remain when a transaction is completed; in other words, to the outside world the transaction appears to be indivisible, atomic.

Consistency - Every transaction must leave the database in a consistent state.

Isolation - Transactions cannot interfere with each other. Providing isolation is the main goal of concurrency control.

Durability - The effects of successful transactions must persist through crashes.

Serial and Serializable Schedules

• In the field of databases, a schedule is a list of actions (i.e., reading, writing, aborting, committing) from a set of transactions.

• In this example, schedule D consists of 3 transactions T1, T2, T3. The schedule describes the actions of the transactions as seen by the DBMS. T1 reads and writes object X, then T2 reads and writes object Y, and finally T3 reads and writes object Z. This is an example of a serial schedule, because the actions of the 3 transactions are not interleaved.

Serial and Serializable Schedules

• A schedule that is equivalent to a serial schedule has the serializability property.

• In schedule E, the order in which the actions of the transactions are executed is not the same as in D, but in the end, E gives the same result as D.

Serial schedule: T1 precedes T2

Initially A = 25, B = 25.

T1: Read(A); A := A+100; Write(A);   A = 125
T1: Read(B); B := B+100; Write(B);   B = 125
T2: Read(A); A := A*2; Write(A);     A = 250
T2: Read(B); B := B*2; Write(B);     B = 250

Serial schedule: T2 precedes T1

Initially A = 25, B = 25.

T2: Read(A); A := A*2; Write(A);     A = 50
T2: Read(B); B := B*2; Write(B);     B = 50
T1: Read(A); A := A+100; Write(A);   A = 150
T1: Read(B); B := B+100; Write(B);   B = 150

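Serializable, but not serial, schedule

Initially A = 25, B = 25.

T1: Read(A); A := A+100; Write(A);   A = 125
T2: Read(A); A := A*2; Write(A);     A = 250
T1: Read(B); B := B+100; Write(B);   B = 125
T2: Read(B); B := B*2; Write(B);     B = 250

r1(A); w1(A); r2(A); w2(A); r1(B); w1(B); r2(B); w2(B);

The final state (A = 250, B = 250) is the same as for the serial schedule in which T1 precedes T2.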

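Nonserializable schedule

Initially A = 25, B = 25.

T1: Read(A); A := A+100; Write(A);   A = 125
T2: Read(A); A := A*2; Write(A);     A = 250
T2: Read(B); B := B*2; Write(B);     B = 50
T1: Read(B); B := B+100; Write(B);   B = 150

The final state (A = 250, B = 150) matches neither serial order.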

Schedule that is serializable only because of the detailed behavior of the transactions

T2’ multiplies by 1 instead of 2. Initially A = 25, B = 25.

T1:  Read(A); A := A+100; Write(A);   A = 125
T2’: Read(A); A := A*1; Write(A);     A = 125
T2’: Read(B); B := B*1; Write(B);     B = 25
T1:  Read(B); B := B+100; Write(B);   B = 125

Regardless of the consistent initial state, the final state will be consistent (here A = 125, B = 125).
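The five schedules on these slides can be replayed with a few lines of Python to confirm the final values of A and B. Each step performs Read(X); X := f(X); Write(X) on a local copy, which matches the slides because no two transactions interleave inside one of these steps. The function and constant names are hypothetical.

```python
def plus_100(x: int) -> int:
    return x + 100

def times_2(x: int) -> int:
    return x * 2

def times_1(x: int) -> int:   # T2' multiplies by 1
    return x * 1

def run(schedule, a: int = 25, b: int = 25) -> tuple[int, int]:
    db = {"A": a, "B": b}
    for _txn, element, f in schedule:
        t = db[element]       # Read(X)
        t = f(t)              # X := f(X), computed on a local copy
        db[element] = t       # Write(X)
    return db["A"], db["B"]

SERIAL_T1_T2 = [("T1", "A", plus_100), ("T1", "B", plus_100), ("T2", "A", times_2), ("T2", "B", times_2)]
SERIAL_T2_T1 = [("T2", "A", times_2), ("T2", "B", times_2), ("T1", "A", plus_100), ("T1", "B", plus_100)]
SERIALIZABLE = [("T1", "A", plus_100), ("T2", "A", times_2), ("T1", "B", plus_100), ("T2", "B", times_2)]
NONSERIALIZABLE = [("T1", "A", plus_100), ("T2", "A", times_2), ("T2", "B", times_2), ("T1", "B", plus_100)]
DETAILED = [("T1", "A", plus_100), ("T2'", "A", times_1), ("T2'", "B", times_1), ("T1", "B", plus_100)]
```

Running `DETAILED` from any state with A = B keeps A = B, while `NONSERIALIZABLE` breaks that constraint.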

A Notation for Transactions and Schedules

• An action is an expression of the form ri(X) or wi(X), meaning that transaction Ti reads or writes, respectively, the database element X.

• A transaction Ti is a sequence of actions with subscript i.

• A schedule S of a set of transactions is a sequence of actions in which the actions of each transaction appear in the same order as in that transaction.

Non-Conflicting Actions

Two actions are non-conflicting if, whenever they occur consecutively in a schedule, swapping them does not affect the final state produced by the schedule. Otherwise, they are conflicting.

Conflicting Actions: General Rules

• Two actions of the same transaction always conflict, because the order of a transaction’s actions is fixed:

o r1(A); w1(B)

• Two actions of different transactions on the same database element conflict if at least one of them is a write:

o r1(A); w2(A)

o w1(A); w2(A)

Conflicting Actions

• Two or more actions are said to be in conflict if:

o The actions belong to different transactions.

o At least one of the actions is a write operation.

o The actions access the same object (read or write).

• The following set of actions is conflicting:

o T1:R(X), T2:W(X), T3:W(X)

• The following sets of actions are not:

o T1:R(X), T2:R(X), T3:R(X)

o T1:R(X), T2:W(Y), T3:R(X)
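The general rules translate directly into a predicate; `Action` and `conflicts` are hypothetical names used for illustration.

```python
from typing import NamedTuple

class Action(NamedTuple):
    txn: int       # i in ri(X) / wi(X)
    op: str        # "r" or "w"
    element: str   # the database element X

def conflicts(a: Action, b: Action) -> bool:
    """True if adjacent actions a and b may not be swapped."""
    if a.txn == b.txn:
        return True                 # actions of one transaction keep their order
    return a.element == b.element and "w" in (a.op, b.op)
```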

Conflict Serializable

• We may take any schedule and make as many nonconflicting swaps as we wish, with the goal of turning the schedule into a serial schedule.

• If we can do so, then the original schedule is serializable, because its effect on the database state remains the same as we perform each of the nonconflicting swaps.

Conflict Serializable

• A schedule is said to be conflict-serializable when it is conflict-equivalent to one or more serial schedules.

• An equivalent definition: a schedule is conflict-serializable if and only if its precedence graph (serializability graph) is acyclic.

• The example schedule is conflict-equivalent to the serial schedule <T1,T2>, but not to <T2,T1>.
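The precedence-graph test can be sketched with Python’s standard `graphlib` module (Python 3.9 or later): an arc from Ti to Tj is added when an action of Ti precedes a conflicting action of Tj, and a topological sort either yields a conflict-equivalent serial order or raises `CycleError`. Encoding a schedule as (transaction, op, element) tuples is an assumption made for illustration.

```python
from graphlib import CycleError, TopologicalSorter

def precedence_graph(schedule):
    """Map each transaction to the set of transactions that must precede it."""
    preds = {txn: set() for txn, _, _ in schedule}
    for i, (ti, op_i, x_i) in enumerate(schedule):
        for tj, op_j, x_j in schedule[i + 1:]:
            # Conflict: different transactions, same element, at least one write.
            if ti != tj and x_i == x_j and "w" in (op_i, op_j):
                preds[tj].add(ti)   # arc Ti -> Tj: Ti must come first
    return preds

def serial_order(schedule):
    """A conflict-equivalent serial order, or None if the precedence graph has a cycle."""
    try:
        return list(TopologicalSorter(precedence_graph(schedule)).static_order())
    except CycleError:
        return None
```

The test schedules are the serializable and nonserializable interleavings of T1 and T2 from the earlier slides.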

Conflict equivalent / conflict-serializable

• Let Ai and Aj be consecutive non-conflicting actions that belong to different transactions. We can swap Ai and Aj without changing the result.

• Two schedules are conflict-equivalent if they can be turned one into the other by a sequence of nonconflicting swaps of adjacent actions.

• We shall call a schedule conflict-serializable if it is conflict-equivalent to a serial schedule.

conflict-serializable

(Figures: a sequence of nonconflicting swaps of adjacent R and W actions of T1 and T2 on elements A and B, ending in a serial schedule)



Concurrency Control

By Donavon Norwood

Ankit Patel

Aniket Mulye

INTRODUCTION

• Enforcing serializability by locks

o Locks

o Locking scheduler

o Two-phase locking

• Locking systems with several lock modes

o Shared and exclusive locks

o Compatibility matrices

o Upgrading/update locks

o Increment locks

Locks

A scheduler uses a lock table to help perform its job. It works as follows:

• A transaction sends a request to the scheduler.

• The scheduler checks the lock table.

• The scheduler generates a serializable schedule of actions.

Consistency of transactions

• Actions and locks must relate to each other:

o A transaction can read or write an element only if it holds a lock on that element and has not released the lock.

o A transaction that locks an element must later unlock it.

• Legality of schedules

o No two transactions may hold a lock on the same element at the same time: a transaction can acquire the lock only after the previous holder has released it.

Locking scheduler

• Grants a lock request only if the resulting schedule is legal.

• The lock table stores information about the current locks on the elements.

• The lock table is a relation Locks(element, transaction), consisting of pairs (X, T) such that transaction T currently has a lock on database element X.

The locking scheduler (contd.)

• The example shows a legal schedule of consistent transactions that, unfortunately, is not serializable.

Locking scheduler (contd.)

• The locking scheduler delays requests that would result in an illegal schedule.

Two-phase locking

• Two-phase locking (2PL) guarantees that a legal schedule of consistent transactions is conflict-serializable.

• In every transaction, all lock requests precede all unlock requests.

• The growing phase:

o Locks are obtained; no unlocks are allowed.

• The shrinking phase:

o Locks are released; no new locks are allowed.
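Whether a single transaction obeys 2PL can be checked mechanically: once any unlock has occurred, no further lock may appear. A minimal sketch, assuming actions are written as hypothetical (kind, element) pairs with kinds "l" (lock), "u" (unlock), "r" (read), and "w" (write).

```python
def is_two_phase(actions: list[tuple[str, str]]) -> bool:
    """True if no lock action of the transaction follows one of its unlock actions."""
    shrinking = False
    for kind, _element in actions:
        if kind == "u":
            shrinking = True          # the shrinking phase has begun
        elif kind == "l" and shrinking:
            return False              # a lock in the shrinking phase breaks 2PL
    return True
```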

Working of Two-Phase locking

• Assures serializability.

• Two variants of 2PL:

o Strict two-phase locking: a transaction holds all its exclusive locks until it commits or aborts.

o Rigorous two-phase locking: a transaction holds all its locks until it commits or aborts.

• If a transaction Ti does not follow 2PL, it is possible to find a 2PL transaction Tj and a schedule S of Ti and Tj that is not conflict-serializable.

Failure of 2PL

• 2PL does not protect against deadlocks.

Concurrency Control: 18.4

Locking Systems with Several Lock Modes

CS257 Spring/2009

Professor: Tsau Lin

Student: Suntorn Sae-Eung

ID: 212

18.4 Locking Systems with Several Lock Modes

• In 18.3, a transaction must lock a database element X whether it reads or writes X.

o Yet there is no reason why several transactions could not read X at the same time, as long as none writes X.

• This motivates two lock modes:

o Shared/read lock (for reading)

o Exclusive/write lock (for writing)

18.4.1 Shared & Exclusive Locks

• Transaction consistency:

o A transaction cannot write without an exclusive lock.

o A transaction cannot read without holding some lock.

o The lock for writing is considered “stronger” than the lock for reading.

• This works on 2 principles:

1. A read action may proceed only if the transaction holds a shared or an exclusive lock.

2. A write action may proceed only if the transaction holds an exclusive lock.

• All locks need to be unlocked by the time the transaction commits.

18.4.1 Shared & Exclusive Locks (cont.)

• Two-phase locking (2PL) of transactions: each Ti locks, reads/writes, and unlocks, with all lock actions preceding all unlock actions.

• Notation:

o sli(X): Ti requests a shared lock on DB element X

o xli(X): Ti requests an exclusive lock on DB element X

o ui(X): Ti relinquishes whatever lock it holds on X

18.4.1 Shared & Exclusive Locks (cont.)

• Legality of schedules

o An element may be locked either by one transaction in exclusive (write) mode or by several transactions in shared (read) mode, but not both.

18.4.2 Compatibility Matrices

• A convenient way to describe lock-management policies:

o Rows correspond to a lock held on an element by another transaction.

o Columns correspond to the mode of lock requested.

o Example:

                 Lock requested
Lock held        S      X
S                YES    NO
X                NO     NO

18.4.3 Upgrading Locks

• A transaction T that takes a shared lock is friendly toward other transactions.

• When T wants to read X and then write a new value of X:

1. T takes a shared lock on X.

2. T performs operations on X (which may take a long time).

3. When T is ready to write the new value, it “upgrades” the shared lock to an exclusive lock on X.

18.4.3 Upgrading Locks (cont.)

• Observe the example (figure: T1’s request for an exclusive lock on B must wait; once the other lock on B is released, T1 retries and succeeds).

• T1 cannot take an exclusive lock on B until all other locks on B are released.

18.4.3 Upgrading Locks (cont.)

• Upgrading can easily cause a “deadlock”:

o Two transactions hold shared locks on the same element, and both want to upgrade.

o Each waits for the other to release its shared lock, so both transactions wait forever!

18.4.4 Update locks

• A third lock mode, which resolves this deadlock problem. Its rules are:

o Only an “update lock” can later be upgraded to a write (exclusive) lock.

o An update lock on X can be granted when there are already shared locks on X.

o Once there is an update lock on X, it prevents any additional lock of any kind on X, and it can later be changed to a write (exclusive) lock.

• Notation: uli(X)

18.4.4 Update locks (cont.)

• Example

18.4.4 Update locks (cont.)

• Compatibility matrix (asymmetric)

                 Lock requested
Lock held        S      X      U
S                YES    NO     YES
X                NO     NO     NO
U                NO     NO     NO
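The update-lock matrix above can be used directly as a lookup table; `COMPAT` and `can_grant` are hypothetical names. A request is granted only if it is compatible with every lock other transactions already hold on the element; upgrading U to X is simply an X request checked against the other holders.

```python
# Rows: lock already held by another transaction; columns: lock requested.
COMPAT = {
    "S": {"S": True,  "X": False, "U": True},
    "X": {"S": False, "X": False, "U": False},
    "U": {"S": False, "X": False, "U": False},
}

def can_grant(requested: str, held_by_others: list[str]) -> bool:
    """Grant only if the request is compatible with every lock held by other transactions."""
    return all(COMPAT[held][requested] for held in held_by_others)
```

The asymmetry shows up as `can_grant("U", ["S"])` being true while `can_grant("S", ["U"])` is false.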

18.4.5 Increment Locks

• A useful lock for transactions that increase or decrease a value, e.g., a money transfer between two bank accounts.

• If 2 transactions (T1, T2) add constants to the same database element X:

o It doesn’t matter which goes first, but no reads of X are allowed in between.

• Consider the following cases.

18.4.5 Increment Locks (cont.)

CASE 1: A = 5; T1: INC(A,2) gives A = 7; then T2: INC(A,10) gives A = 17.

CASE 2: A = 5; T2: INC(A,10) gives A = 15; then T1: INC(A,2) gives A = 17.

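18.4.5 Increment Locks (cont.)

• What if the reads and writes of T1: INC(A,2) and T2: INC(A,10) interleave?

o Both transactions read A = 5.

o If T1 writes last, A = 7; if T2 writes last, A = 15.

o Either way, A != 17: one of the increments is lost.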

18.4.5 Increment Locks (cont.)

• INC(A, c): an increment action writing database element A; it is an atomic execution consisting of:

1. READ(A,t);

2. t = t+c;

3. WRITE(A,t);

• Notation:

o ili(X): action of Ti requesting an increment lock on X

o inci(X): action of Ti incrementing X by some constant; we don’t care about the value of the constant.
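The point of the increment lock is that INC actions commute. This sketch (hypothetical function names) applies increments atomically in every possible order, and then shows what happens when the reads and writes of two increments interleave, reproducing the A != 17 case above.

```python
import itertools

def apply_increments(start: int, increments: list[int]) -> int:
    """Run INC(A, c) actions one after another; each is an atomic read-add-write."""
    a = start
    for c in increments:
        t = a        # READ(A, t)
        t = t + c    # t = t + c
        a = t        # WRITE(A, t)
    return a

def all_orders_agree(start: int, increments: list[int]) -> bool:
    """True if every order of the increments yields the same final value."""
    results = {apply_increments(start, list(p)) for p in itertools.permutations(increments)}
    return len(results) == 1

def interleaved(start: int, c1: int, c2: int) -> int:
    """T1 and T2 both READ A before either WRITEs it; T1 writes last."""
    t1 = start            # T1: READ(A, t1)
    t2 = start            # T2: READ(A, t2)
    a = t2 + c2           # T2: WRITE(A, t2 + c2)
    a = t1 + c1           # T1: WRITE(A, t1 + c1) overwrites T2's increment
    return a
```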

18.4.5 Increment Locks (cont.)

• Example

18.4.5 Increment Locks (cont.)

• Compatibility matrix

                 Lock requested
Lock held        S      X      I
S                YES    NO     NO
X                NO     NO     NO
I                NO     NO     YES


Concurrency Control

Chapter 18

Section 18.5

Presented by

Khadke, Suvarna

CS 257

(Section II) Id 213

Overview

• Assume knowledge of:

o Locks

o Two-phase locking

o Lock modes: shared, exclusive, update

• A simple scheduler architecture based on the following principles:

o Insert lock actions into the stream of reads, writes, and other actions.

o Release locks when the transaction manager tells the scheduler that the transaction will commit or abort.

Scheduler That Inserts Lock Actions into the Transactions’ Request Stream

Scheduler That Inserts Lock Actions

The scheduler works in two parts:

• Part I: takes the stream of requests generated by the transactions and inserts the appropriate lock actions ahead of the database operations (read, write, or update).

• Part II: takes the actions (a lock or a database operation) passed on by Part I and executes them.

• Part II determines the transaction T that the action belongs to and the status of T (delayed or not). If T is delayed, the action is itself delayed. If T is not delayed:

1. A database access action is transmitted to the database and executed.

2. If a lock action is received by Part II, it checks the lock table to see whether the lock can be granted:

i> If granted, the lock table is modified to include the granted lock.

ii> If not granted, the lock table is updated to record the requested lock, and Part II delays transaction T.

3. When T commits or aborts, Part I is notified by the transaction manager and releases all of T’s locks. If any transactions are waiting for these locks, Part I notifies Part II.

4. When Part II is notified that a lock on some DB element is available, it determines the next transaction T’ that gets the lock and can continue.

The Lock Table

• A relation that associates database elements with locking information about those elements.

• Implemented with a hash table, using database elements as the hash key.

• Its size is proportional to the number of locked elements only, not to the size of the entire database.

(Figure: the entry for DB element A holds the lock information for A)

Lock Table Entries Structure

Some sort of information found in a lock table entry:

1> Group mode

• S: only shared locks are held

• X: one exclusive lock and no other locks

• U: one update lock and zero or more shared locks

2> Wait: whether at least one transaction is waiting for a lock on A

3> A list of the transactions that currently hold locks on A or are waiting for a lock on A

Handling Lock Requests

• Suppose transaction T requests a lock on A.

• If there is no lock table entry for A, then there are no locks on A, so create the entry and grant the lock request.

• If the lock table entry for A exists, use the group mode to guide the decision about the lock request.

Handling Lock Requests

• If the group mode is U (update) or X (exclusive):

o No other lock can be granted.

o Deny the lock request by T.

o Place an entry on the list saying T requests a lock, and set Wait? = ‘yes’.

• If the group mode is S (shared):

o Another shared or update lock can be granted (a request for an X lock is denied, as above).

o Grant the request for an S or U lock.

o Create an entry for T on the list with Wait? = ‘no’.

o Change the group mode to U if the new lock is an update lock.

Handling Unlock Requests

• Now suppose transaction T unlocks A.

• Delete T’s entry on the list for A.

• If T’s lock is not the same as the group mode, there is no need to change the group mode.

• Otherwise, check the entire list for the new group mode:

o T held S: new group mode is S or nothing

o T held U: new group mode is S or nothing

o T held X: nothing
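The request and unlock rules above can be sketched as a small lock table. The classes and method names are hypothetical; the table is a Python dict keyed by element, and choosing which waiting request to grant next (the policies on the following slide) is deliberately left out.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Request:
    txn: str
    mode: str      # "S", "U" or "X"
    wait: bool     # the Wait? flag: True while the request is not granted

@dataclass
class Entry:
    group_mode: Optional[str]                  # None: no locks currently held
    requests: list = field(default_factory=list)

    @property
    def waiting(self) -> bool:                 # is anyone waiting for a lock here?
        return any(r.wait for r in self.requests)

class LockTable:
    """Hash table keyed by DB element; only elements with locks or waiters have entries."""

    def __init__(self) -> None:
        self.table: dict[str, Entry] = {}

    def request(self, txn: str, element: str, mode: str) -> bool:
        entry = self.table.get(element)
        if entry is None:                      # no entry, so no locks: create and grant
            self.table[element] = Entry(mode, [Request(txn, mode, False)])
            return True
        if entry.group_mode == "S" and mode in ("S", "U"):
            entry.requests.append(Request(txn, mode, False))
            if mode == "U":
                entry.group_mode = "U"         # one U lock plus the existing S locks
            return True
        # Group mode U or X, an X request, or only waiters left: deny and wait.
        entry.requests.append(Request(txn, mode, True))
        return False

    def unlock(self, txn: str, element: str) -> None:
        entry = self.table[element]
        held = next(r for r in entry.requests if r.txn == txn and not r.wait)
        entry.requests.remove(held)
        if held.mode == entry.group_mode:
            # Only S locks can remain, so the new group mode is S or nothing.
            still_held = [r for r in entry.requests if not r.wait]
            entry.group_mode = "S" if still_held else None
        if not entry.requests:
            del self.table[element]            # no locks and no waiters left
```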

Handling Unlock Requests

• If the value of Wait? is “yes”, we need to grant one or more locks, using one of the following approaches:

o First-come-first-served: grant the lock to the longest-waiting request. No starvation (waiting forever for a lock).

o Priority to shared locks: grant all waiting S locks, then one U lock. Grant an X lock only if no others are waiting.

o Priority to upgrading: if there is a U lock waiting to upgrade to an X lock, grant that first.


Concurrency Control

Managing Hierarchies of Database Elements

(18.6)

Presented by

Ronak Shah

(214)

March 9, 2009

Managing Hierarchies of Database Elements

• Two problems arise with locks when there is a tree structure to the data:

o When the tree structure is a hierarchy of lockable elements: determine how locks are granted for both large elements (relations) and smaller elements (blocks containing tuples, or individual tuples).

o When the data itself is organized as a tree (B-tree indexes): this will be discussed in the next section.

Locks with Multiple Granularity

• A database element can be a relation, a block, or a tuple.

• Different systems use different database elements to determine the size of the lock.

• Thus some may require small database elements such as tuples or blocks, and others may require large elements such as relations.

Example of Multiple Granularity Locks

• Consider a database for a bank

o Choosing relations as database elements means we would have one lock for an entire relation

o If we were dealing with a relation of account balances, this kind of lock would be very inflexible and would allow very little concurrency

o Why? Because transactions that update balances require exclusive locks, so only one transaction could update any account at a time

o But since each account is independent of the others, we could perform transactions on different accounts simultaneously

Example of Multiple Granularity Locks (con’t)

• Thus it makes sense to use a block as the element for locking, so that two accounts on different blocks can be updated simultaneously

• Another example is a database of documents

o Here most transactions retrieve whole documents, so it is better to use a large element (a complete document) as the unit of locking

Warning (Intention) Locks

• These are required to manage locks at different granularities

o In the bank example, if a transaction obtains a shared lock on the relation while other transactions hold exclusive locks on individual tuples, unserializable behavior occurs

• The rules for managing locks on a hierarchy of database elements constitute the warning protocol

• Three levels of database elements:

o Relations are the largest lockable elements

o Each relation is composed of blocks or pages

o Each block contains one or more tuples

Database Elements Organized in Hierarchy

Rules of Warning Protocol

• These involve both ordinary (S and X) and warning (IS and IX) locks

• The rules are:

o Begin at the root of the hierarchy

o If we are at the desired element, request the S or X lock on it

o If the desired element is further down the hierarchy, place a warning lock on the current node (IS if we want S, IX if we want X)

o When the warning lock is granted, proceed to the appropriate child node and repeat the above steps until the desired node is reached
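The warning-protocol rules amount to a walk down one root-to-leaf path. In this sketch the path is given as a list of element names (the names "R", "B1", "t3" are made up), and `try_lock` is a hypothetical hook standing in for the scheduler: it returns True when the requested lock is granted.

```python
from typing import Callable, List, Optional, Tuple

Lock = Tuple[str, str]  # (element, lock mode)

def acquire_path(path: List[str], mode: str,
                 try_lock: Callable[[str, str], bool]) -> Tuple[List[Lock], Optional[Lock]]:
    """Walk from the root to the desired element under the warning protocol.

    path: element names from the root down to the desired element,
          e.g. ["R", "B1", "t3"] for relation R, block B1, tuple t3.
    mode: "S" or "X", the ordinary lock wanted on the desired element.
    try_lock: hypothetical scheduler hook; True if the lock is granted.
    Returns the locks acquired so far and the lock we are blocked on (or None).
    """
    if mode not in ("S", "X"):
        raise ValueError("mode must be 'S' or 'X'")
    warning = "I" + mode  # IS when we want S, IX when we want X
    acquired: List[Lock] = []
    for depth, node in enumerate(path):
        # Ancestors get the warning lock; the desired element gets S or X.
        lock = mode if depth == len(path) - 1 else warning
        if not try_lock(node, lock):
            return acquired, (node, lock)  # must wait here before descending
        acquired.append((node, lock))
    return acquired, None
```

If `try_lock` refuses a lock, the transaction waits at that node; the warning locks it already holds higher up stay in place, which is what keeps a conflicting coarse lock from being granted above it.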

Compatibility Matrix for Shared, Exclusive and Intention Locks

| Requested / Held | IS | IX | S | X |
|---|---|---|---|---|
| IS | Yes | Yes | Yes | No |
| IX | Yes | Yes | No | No |
| S | Yes | No | Yes | No |
| X | No | No | No | No |

• The above matrix applies only to locks held by

other transactions
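The matrix can be encoded directly as a lookup table. The names `COMPATIBLE` and `can_grant` are illustrative; as the note above says, only locks held by other transactions are checked.

```python
# COMPATIBLE[requested][held] is True when a lock of mode `requested` can be
# granted while another transaction holds a lock of mode `held` on the element.
COMPATIBLE = {
    "IS": {"IS": True,  "IX": True,  "S": True,  "X": False},
    "IX": {"IS": True,  "IX": True,  "S": False, "X": False},
    "S":  {"IS": True,  "IX": False, "S": True,  "X": False},
    "X":  {"IS": False, "IX": False, "S": False, "X": False},
}

def can_grant(requested: str, held_by_others: list) -> bool:
    """True if `requested` is compatible with every lock other transactions hold."""
    return all(COMPATIBLE[requested][held] for held in held_by_others)
```

For instance, if T1 holds IX on the accounts relation because it is updating one tuple, `can_grant("S", ["IX"])` is False, so T2 cannot take a shared lock on the whole relation: exactly the unserializable situation from the bank example.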

Group Modes of Intention Locks

• A transaction can request both S and IX locks on the same element (to read the entire element and then modify some of its sub-elements)

• This can be considered as another lock mode,

SIX, having restrictions of both the locks i.e. No