simple linear regression

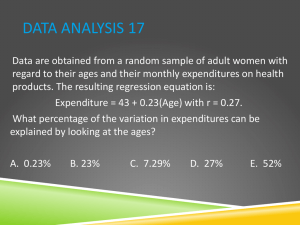

SIMPLE LINEAR REGRESSION

Last week

Discussed the ideas behind hypothesis testing, random sampling error (RSE), and statistical significance, alpha, and p-values.

Examined correlation, specifically Pearson’s r: what it’s used for, when to use it (and when not to use it), its statistical assumptions, and the interpretation of r (direction/magnitude) and p.

Tonight

Extend our discussion of correlation into simple linear regression. Correlation and regression are linked together, conceptually and mathematically, and you will often see correlations paired with regression. Regression is nothing but one step past r. You’ve all done it in high school math.

First…brief review…

Quick Review/Quiz

A health researcher plans to determine if there is an association between physical activity and body composition. Specifically, the researcher thinks that people who are more physically active (PA) will have a lower percent body fat (%BF). Write out a null and an alternative hypothesis.

PA and %BF

HO: There is no association between PA and %BF

HA: People with ↑ PA will have ↓ %BF

The researcher will use a Pearson correlation to determine this association. He sets alpha ≤ 0.05. Write out what that means (alpha ≤ 0.05).

Alpha

If the researcher sets alpha ≤ 0.05, this means that he/she will reject the null hypothesis if the p-value of the correlation is equal to or less than 0.05. This is the level of confidence/risk the researcher is willing to accept.

If the p-value of the test is greater than 0.05, there is a greater than 5% chance that the result could be due to ___________________, rather than a real effect/association.

Results

The researcher runs the correlation in SPSS and this is in the output: n = 100, r = -0.75, p = 0.02

1) What is the direction of the correlation? What does this mean?
2) What is the sample size?
3) Describe the magnitude of the association.
4) Is this result statistically significant?
5) Did he/she fail to reject the null hypothesis OR reject the null hypothesis?
Results defined

There is a negative, moderate-to-strong relationship between PA and %BF (r = -0.75, p = 0.02). Those with higher levels of physical activity tended to have lower %BF (or vice versa). Reject the null hypothesis and accept the alternative.

Based on this correlation alone, does PA cause %BF to change? Why or why not?

Error

Assume the association seen here between PA and %BF is REAL (not due to RSE). What type of error is made if the researcher fails to reject the null hypothesis (and accepts HO)? The researcher says there is no association when there really is: Type II Error.

Assume the association seen here between PA and %BF is due to RSE (not REAL). What type of error is made if the researcher rejects the null hypothesis (and accepts HA)? The researcher says there is an association when there really is not: Type I Error.

HA: There is an association between PA and %BF

HO: There is not an association between PA and %BF

| What is True | Our Decision: Reject HO | Our Decision: Accept HO |
|---|---|---|
| HO | Type I Error | Correct |
| HA | Correct | Type II Error |

Questions…?

Back to correlations

Recall, correlations provide two critical pieces of information about a relationship between two variables:

1) Direction (+ or -)
2) Strength/Magnitude

However, the correlation coefficient (r) can also be used to describe how well one variable can be used to predict the other, which is a frequent goal of statistics. For example…

Association vs Prediction

Is undergrad GPA associated with grad school GPA? Can grad school GPA be predicted by undergrad GPA?

Are skinfold measurements associated with %BF? Can %BF be predicted by skinfolds?

Is muscular strength associated with injury risk? Can muscular strength be predictive of injury risk?

Is event attendance associated with ticket price? Can event attendance be predicted by ticket price? (i.e., what ticket price will maximize profits?)

Correlation and Prediction

This idea should seem reasonable. Look at the three correlations below.
In which of the three do you think it would be easiest (most accurate) to predict the y variable from the x variable?

[Figure: three scatterplots, labeled A, B, and C]

Correlation and Prediction

The stronger the relationship between two variables, the more accurately you can use information from one of those variables to predict the other.

Which do you think you could predict more accurately? Bench press repetitions from body weight? Or 40-yard dash from 10-yard dash?

Explained Variance

The idea that a stronger relationship allows a more accurate prediction is called “explained variance” or “variance accounted for”.

Variance = the spread of the data around the center (why the values are different for everyone).

It is calculated by squaring the correlation coefficient, r². For the correlation above (10-yard dash and 40-yard dash): r = 0.624 and r² = 0.389.

Coefficient of Determination, r²

r² is also known as the coefficient of determination: what percentage of the variability in y is explained by x.

The 10-yard dash explains 39% of the variance in the 40-yard dash. So about 61% (100% - 39% = 61%) of the variance remains unexplained (is due to other things). If we could explain 100% of the variance, we’d be able to make a perfect prediction.

The more variance you can explain, the better the prediction. The less variance that is explained, the more error in the prediction.

Examples, notice how quickly the prediction degrades (r² values are rounded):

| r | r² |
|---|---|
| 1.00 | 100% |
| 0.87 | 75% |
| 0.71 | 50% |
| 0.50 | 25% |
| 0.22 | 5% |

Example with BP…

Variance: BP

Average systolic blood pressure in the United States: Mean = 119 mmHg, SD = 20, N = 22,270.

Note the mean, and the variation (variance) in the values. Why are these values so spread out? What things influence blood pressure? Age, gender, physical activity, diet, stress.

Which of these variables do you think is most important? Least important?
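To make the r to r² examples listed earlier concrete, here is a minimal Python sketch that squares each correlation from the notes. The function name `explained_variance` is made up for illustration; the r values are the ones quoted above (including r = 0.624 for the 10-yard and 40-yard dash). Expect small differences from the slide values, which are rounded.

```python
# Square each correlation coefficient to get the share of variance explained.
# The r values are the ones quoted in the notes; the function name is illustrative.
r_values = [1.00, 0.87, 0.71, 0.50, 0.22, 0.624]


def explained_variance(r):
    """Return r squared, the coefficient of determination for one predictor."""
    return r ** 2


for r in r_values:
    r2 = explained_variance(r)
    # The unexplained share is whatever is left over from 100%.
    print(f"r = {r:.3f}  r^2 = {r2:.3f}  explained = {r2:.0%}  unexplained = {1 - r2:.0%}")
```

Even a correlation of 0.50, which looks respectable, leaves three quarters of the variance unexplained.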
If we could measure all of these, could we perfectly predict blood pressure? Correlating each variable with BP would allow us to answer these questions using r².

Beyond r²

Obviously you want to have an estimate of how well a prediction might work, but r² does not tell you how to make that prediction. For that we use some form of regression.

Regression is a generic term (like correlation). There are several different methods to create a prediction equation: Simple Linear Regression, Multiple Linear Regression, Logistic Regression (pregnancy test), and many more…

Example using Height to predict Weight

Let’s start with a scatterplot between the two variables…

[Figure: scatterplot of Weight (y-axis, 80 to 170) against Height (x-axis, 55 to 75), r = 0.81]

Note the correlation coefficient above (r = 0.81, r² ≈ 0.65).

SPSS is going to do all the work. It will use a process called Least Squares Estimation.

Least squares estimation: think of it as a process where SPSS draws every possible line through the points until it finds the line where the squared vertical deviations from that line add up to the smallest total.

[Figure: the same scatterplot with a green candidate line and blue arrows marking the vertical deviations from it]

The green line indicates a possible line, and the blue arrows indicate the deviations: longer arrows = bigger deviations. This is a poor attempt; it will keep trying new lines until it finds the best one.

Eventually, SPSS will get it right, finding the line that minimizes deviations, known as the Line of Best Fit. The Line of Best Fit is the end-product of regression.

This line will have a certain slope…

[Figure: the same scatterplot with the line of best fit, annotated “SLOPE: up so many units in so many others”]

And it will have a value on the y-axis for the zero value of the x-axis: the INTERCEPT (here, -234). The intercept can be seen more clearly if we redraw the graph with appropriate axes…

[Figure: the line of best fit redrawn with Height from 0 to 80 on the x-axis and Weight from -300 to 200 on the y-axis; the line meets the y-axis at -234 lbs]

The intercept will sometimes be a nonsense value. In this case, nobody is 0 inches tall or weighs -234 lbs.

From the line (its equation), we can predict that an increase in height of 1 inch predicts a rise in weight of 5.4 lbs.

[Figure: the scatterplot with the line of best fit, Slope = 5.4; a Height of 68 maps to a predicted Weight of 135 lbs]

We can now estimate weight from height. A person who is 68 inches tall should weigh about 135 lbs.

SPSS will output the equation, among a number of other items if you ask for them.

SPSS output: Coefficients (a)

| Model 1 | B (Unstandardized) | Std. Error | Beta (Standardized) | t | Sig. |
|---|---|---|---|---|---|
| (Constant) | -234.681 | 71.552 | | -3.280 | .005 |
| Height (in inches) | 5.434 | 1.067 | .806 | 5.092 | .000 |

a. Dependent Variable: Weight (in pounds)

The (Constant) row is the INTERCEPT of the line. The unstandardized B coefficient for Height is the SLOPE of the line.

The p-value is still here (the Sig. column). In this case, height is a statistically significant predictor of weight (association likely NOT due to RSE).

We can use those two values to write out the equation for our line. Depending on your high school math teacher: Y = b + mX (m is the SLOPE, b is the INTERCEPT) or Y = a + bX (b is the SLOPE, a is the INTERCEPT).

Weight = -234.681 + 5.434 (Height)

Model Fit?

Once you create your regression equation, this equation is called the ‘model’, i.e., we just modeled (created a model for) the association between height and weight.

How good is the model? How well do the data fit?

Can use r² for a general comparison of how well one variable can predict the other. Lower r² means less variance accounted for, more error. Our r = 0.81 for height/weight (0.806 before rounding), so r² = 0.65.

We can also use the Standard Error of the Estimate: how good, generally, is the fit?

Standard error of the estimate (SEE)

Imagine we used our prediction equation to predict weight for each subject in our dataset (X to predict Y). Will our equation perfectly estimate each Y from X?
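As a quick check on the height/weight equation, here is a minimal Python sketch that plugs numbers into it. The intercept and slope are the SPSS values reported above; the function name `predict_weight` is made up for illustration.

```python
# Coefficients copied from the SPSS output reported in the notes.
INTERCEPT = -234.681  # the (Constant) row, in pounds
SLOPE = 5.434         # the Height row, in pounds per inch


def predict_weight(height_in):
    """Predicted weight in pounds for a height in inches (illustrative helper)."""
    return INTERCEPT + SLOPE * height_in


# A person who is 68 inches tall: the notes round this to about 135 lbs.
print(round(predict_weight(68), 1))

# One extra inch of height changes the prediction by exactly the slope.
print(round(predict_weight(69) - predict_weight(68), 3))

# At a height of 0 the prediction is just the intercept, a nonsense value here.
print(predict_weight(0))
```

The equation is only trustworthy inside the range of heights that were actually measured (roughly 55 to 75 inches in the scatterplot), which is why the intercept can be meaningless on its own.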
Unless r² = 1.0, there will be some error between the real Y and the predicted Y. The SEE is the standard deviation of those differences: the standard deviation of actual Y’s about predicted Y’s. It estimates the typical size of the error in predicting Y (sort of). It is critically related to r², but SEE is more specific to your equation.

Let’s go back to our line of best fit (this line represents the predicted value of Y for each X). SEE is the standard deviation of all these errors.

[Figure: the Weight against Height scatterplot with the line of best fit; individual points are labeled Large Error, Small Error, and Very Small Error according to their vertical distance from the line]

Notice some real Y’s are closer to the line than others.

SEE

Why calculate the ‘standard deviation’ of these errors instead of just calculating the ‘average error’? By using standard deviation instead of the mean, we can describe what percentage of estimates are within 1 SEE of the line. In other words, if we used this prediction equation, we would expect that:

68% fall within 1 SEE

95% fall within 2 SEE

99.7% fall within 3 SEE

Knowing “How often is this accurate?” is probably more important than asking “What’s the average error?”

Of course, how large the SEE is depends on your r² and your sample size (larger samples make more accurate predictions).

Let’s go back to our line of best fit: SEE is the standard deviation of the residuals.

[Figure: the same scatterplot with the line of best fit; individual points are labeled Large Residual, Small Residual, and Very Small Residual]

In regression, we call these errors/deviations “residuals”.

Residual = Real Y - Predicted Y

Notice that some of the residuals are - and some are +, where we over-estimated (-) or under-estimated (+) weight.

Residuals

The line of best fit is a line where the residuals are minimized (least error). The residuals will sum to 0, so the mean of the residuals will also be 0. The Line of Best Fit is the ‘balance point’ of the scatterplot. The standard deviation of the residuals is the SEE.

Recognize this concept/terminology: if there is a residual, that means the effect of other variables is creating error. Confounding variables create residuals.

QUESTIONS…?

Statistical Assumptions of Simple Linear Regression

See last week’s notes on assumptions of correlation…

Variables are normally distributed

Homoscedasticity of variance

Sample is representative of population

Relationship is linear (remember, y = a + bX)

The variables are ratio/interval (continuous). Can’t use nominal or ordinal variables …at least pretend for now, we’ll break this one next week.

Simple Linear Regression: Example

Let’s start simple, with two variables we know to be very highly correlated: 40-yard dash and 20-yard dash. Can we predict 40-yard dash from 20-yard dash?

SLR

Trimmed the dataset down to just two variables. Let’s look at a scatterplot first. All my assumptions are good, so we should be able to produce a decent prediction. Next step, correlation.

Correlation

Strength? Direction? Statistically significant correlations will (usually) produce statistically significant predictors. r² = ?? (0.66)

Now, run the regression in SPSS.

SPSS

The ‘predictor’ is the independent variable.

Model Outputs

Adjusted r² adjusts the r² value based on sample size. Small samples tend to overestimate the ability to predict the DV with the IV (our sample is 428, so the adjusted value is similar).

Notice our SEE of 0.06 seconds. 68% of residuals are within 0.06 seconds of predicted, and 95% of residuals are within 0.12 seconds of predicted.

The ‘ANOVA’ portion of the output tells you if the entire model is statistically significant. However, since our model just includes one variable (20-yard dash), the p-value here will match the one to follow.

Outputs

Y-intercept = 1.259, Slope = 1.245. The 20-yard dash is a statistically significant predictor. What is our equation to predict 40-yard dash?

Equation

40-yard dash time = 1.245(20-yard dash time) + 1.259

If a player ran the 20-yard dash in 2.5 seconds, what is their estimated 40-yard dash time?
1.245(2.5) + 1.259 = 4.37 seconds

If the player actually ran 4.53 seconds, what is the residual?

Residual = Real - Predicted: 4.53 - 4.37 = 0.16 seconds

Significance vs. Importance in Regression

A statistically significant model/variable does NOT mean the equation is good at predicting.

The p-value tells you if the independent variable (predictor) can be used as a predictor of the dependent variable.

The r² tells you how good the independent variable might be as a predictor (variance accounted for).

The SEE tells you how good the predictor (model) is at predicting.

QUESTIONS…?

Upcoming…

In-class activity…

Homework: Cronk Section 5.3; Holcomb Exercises 29, 44, 46 and 33

Multiple Linear Regression next week
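The 40-yard dash example can be reproduced in a few lines of Python. This is only a sketch: the intercept, slope, and SEE are the values quoted from the SPSS output in these notes, and the helper names `predict_40` and `residual` are made up for illustration.

```python
# Values quoted in the notes for the 20-yard to 40-yard dash model.
INTERCEPT = 1.259  # seconds
SLOPE = 1.245      # seconds of 40-yard time per second of 20-yard time
SEE = 0.06         # standard error of the estimate, in seconds


def predict_40(time_20):
    """Predicted 40-yard dash time (s) from a 20-yard dash time (s)."""
    return SLOPE * time_20 + INTERCEPT


def residual(actual, predicted):
    """Residual = Real - Predicted; positive means we under-estimated."""
    return actual - predicted


predicted = predict_40(2.5)
res = residual(4.53, predicted)

print(f"predicted 40-yard time: {predicted:.2f} s")
print(f"residual: {res:+.2f} s")

# Bands around the prediction, using the 68% / 95% rule from the notes.
for k, share in [(1, "68%"), (2, "95%")]:
    low, high = predicted - k * SEE, predicted + k * SEE
    print(f"about {share} of players expected between {low:.2f} and {high:.2f} s")

# How many SEEs away from the line did this player land?
print(f"residual is {abs(res) / SEE:.1f} SEE from the line")
```

With these numbers the player's residual is more than 2 SEE from the line, so this would be an unusually large miss for the model, even though the 20-yard dash is a statistically significant predictor.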