PowerPoint - BYU Computer Science Students Homepage Index


Chapter 4

Processing Text

Processing Text

Modifying/Converting documents to index terms

Convert the many forms of words into more consistent index

terms that represent the content of a document

What are the problems?

Matching the exact string of characters typed by the user is too

restrictive, e.g., case-sensitivity, punctuation, stemming

• it doesn’t work very well in terms of effectiveness

Sometimes not clear where words begin and end

• Not even clear what a word is in some languages, e.g., in

Chinese and Korean

Not all words are of equal value in a search, and understanding

the statistical nature of text is critical


Indexing Process

Text acquisition: identifies and stores documents for indexing

• Output is text + metadata (doc type, structure, features, size, etc.)

Text transformation (or text processing): transforms documents into index terms or features

Index creation: takes index terms and creates data structures (indexes) to support fast searching


Text Statistics

Huge variety of words used in text but many statistical

characteristics of word occurrences are predictable

e.g., distribution of word counts

Retrieval models and ranking algorithms depend heavily

on statistical properties of words

e.g., important/significant words occur often in documents

but are not high frequency in collection


Zipf’s Law

Distribution of word frequencies is very skewed

Few words occur very often, many hardly ever occur

e.g., “the” and “of”, two common words, make up about 10%

of all word occurrences in text documents

Zipf’s law:

The frequency f of a word in a corpus is inversely proportional

to its rank r (assuming words are ranked in order of

decreasing frequency)

f = k / r, or equivalently f × r = k

where k is a constant for the corpus
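As a minimal sketch of the relationship above, the following Python counts word frequencies and reports rank × frequency, which Zipf's law predicts should be roughly constant. The function name `rank_frequency` and the toy token list are illustrative assumptions; a meaningful check needs a large corpus such as AP89.

```python
from collections import Counter

def rank_frequency(tokens):
    """Return (rank, word, frequency, rank * frequency) rows, most frequent first."""
    counts = Counter(tokens)
    rows = []
    for rank, (word, freq) in enumerate(counts.most_common(), start=1):
        rows.append((rank, word, freq, rank * freq))
    return rows

# Toy input (hypothetical); far too small to show the law convincingly.
tokens = "the cat and the dog and the bird".split()
for row in rank_frequency(tokens):
    print(row)
```

On real text, the last column stays close to a constant k only for mid-ranked words; the highest and lowest ranks usually deviate.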


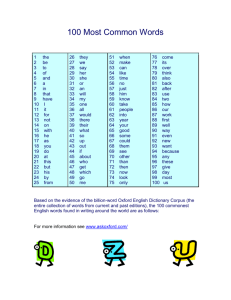

Top 50 Words from AP89


Zipf’s Law

Example. Zipf’s law for

AP89 with problems at

high and low frequencies

According to [Ha 02], Zipf’s law

• does not hold for rank > 5,000

• is valid when single words and n-gram phrases are considered together, combined in a single curve

[Ha 02] Ha et al. Extension of Zipf's Law to Words and Phrases. In Proc. of Int. Conf.

on Computational Linguistics. 2002.


Vocabulary Growth

Heaps’ Law, another prediction of word occurrence

As corpus grows, so does vocabulary size. However,

fewer new words when corpus is already large

Observed relationship (Heaps’ Law):

v = k × n^β

where

v is the vocabulary size (number of unique words)

n is the total number of words in corpus

k, β are parameters that vary for each corpus

(typical values given are 10 ≤ k ≤ 100 and β ≈ 0.5)

Predicting that the number of new words increases very

rapidly when the corpus is small
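A small sketch of the Heaps' law prediction, assuming illustrative parameter values (k = 50, β = 0.5) picked from the typical ranges quoted above rather than fitted to any real corpus. The function name `heaps_vocabulary` is hypothetical.

```python
def heaps_vocabulary(n, k, beta):
    """Predicted vocabulary size v = k * n**beta for a corpus of n words."""
    return k * n ** beta

# Illustrative parameters (assumed), not fitted to a real collection.
k, beta = 50, 0.5
for n in (10_000, 1_000_000, 100_000_000):
    print(n, round(heaps_vocabulary(n, k, beta)))
```

With β below 1, each 100-fold increase in corpus size multiplies the predicted vocabulary by much less than 100, which is the slowing growth described above.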


AP89 Example (40 million words)

[Figure: vocabulary growth for AP89 compared with the Heaps’ law prediction v = k × n^β]


Heaps’ Law Predictions

Number of new words increases very rapidly when the corpus is small, and continues to increase indefinitely

Predictions for TREC collections are accurate for large

numbers of words, e.g.,

First 10,879,522 words of the AP89 collection scanned

Prediction is 100,151 unique words

Actual number is 100,024

Predictions for small numbers of words (i.e., < 1000) are

much worse


Heaps’ Law on the Web

Heaps’ Law works with very large corpora

New words occurring even after seeing 30 million!

Parameter values different than typical TREC values

New words come from a variety of sources

Spelling errors, invented words (e.g., product, company

names), code, other languages, email addresses, etc.

Search engines must deal with these large and

growing vocabularies


Heaps’ Law vs. Zipf’s Law

As stated in [French 02]:

The observed vocabulary growth has a positive correlation

with Heaps’ law

Zipf’s law, on the other hand, is a poor predictor of high-ranked terms; it is adequate only for predicting medium- to low-ranked terms

While Heaps’ law is a valid model for vocabulary growth of

web data, Zipf’s law is not strongly correlated with web data

[French 02] J. French. Modeling Web Data. In Proc. of Joint Conf. on Digital Libraries

(JCDL). 2002.


Estimating Result Set Size

Word occurrence statistics can be used to estimate the size

of the results from a web search

How many pages (in the results) contain all of the query

terms (based on word occurrence statistics)?

Example. For the query “a b c”:

f_abc = N × (f_a / N) × (f_b / N) × (f_c / N) = (f_a × f_b × f_c) / N^2

f_abc: estimated size of the result set using joint probability

f_a, f_b, f_c: the number of documents that terms a, b, and c occur in, respectively

N is the total number of documents in the collection

Assuming that terms occur independently
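The independence estimate can be sketched in a few lines of Python. The function name `estimate_result_size` and the document frequencies in the usage line are made up for illustration; they are not GOV2 statistics.

```python
def estimate_result_size(doc_freqs, n_docs):
    """Estimate the result-set size as N * prod(f_i / N), assuming independent terms."""
    estimate = float(n_docs)
    for f in doc_freqs:
        estimate *= f / n_docs
    return estimate

# Hypothetical document frequencies for a three-term query over 1,000,000 documents.
print(estimate_result_size([50_000, 20_000, 10_000], 1_000_000))
```

Because real query terms tend to co-occur, this product typically underestimates the true result-set size.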


TREC GOV2 Example

Poor estimates, due to the independence assumption

Collection size (N) is 25,205,179


Estimating Collection Size

Important issue for Web search engines, in terms of coverage

Simple method: use the independence model, even though words are not truly independent

Given two words, a and b, that are independent, and N is the

estimated size of the document collection

f_ab / N = (f_a / N) × (f_b / N)

N = (f_a × f_b) / f_ab

Example. For GOV2

f_lincoln = 771,326

f_tropical = 120,990

f_(lincoln ∩ tropical) = 3,018

N = (120,990 × 771,326) / 3,018

= 30,922,045 (actual number is 25,205,179)
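The GOV2 arithmetic above can be reproduced with a short sketch. The function name `estimate_collection_size` is an assumption made for illustration; the three counts are the ones given in the example.

```python
def estimate_collection_size(f_a, f_b, f_ab):
    """N = (f_a * f_b) / f_ab, assuming words a and b occur independently."""
    if f_ab == 0:
        raise ValueError("the two words never co-occur; no estimate is possible")
    return f_a * f_b / f_ab

# Document counts for "lincoln", "tropical", and both together in GOV2.
n = estimate_collection_size(771_326, 120_990, 3_018)
print(round(n))  # 30922045, versus the actual 25,205,179
```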


Result Set Size Estimation

Poor estimates because words are not independent

Better estimates possible if co-occurrence info. available

P(a ∩ b ∩ c) = P(a ∩ b) × P(c | a ∩ b)

≈ P(a ∩ b) × P(c | b) = P(a ∩ b) × (P(b ∩ c) / P(b))

(approximating P(c | a ∩ b) by P(c | b))

f_(tropical ∩ aquarium ∩ fish) = f_(tropical ∩ aquarium) × f_(aquarium ∩ fish) / f_aquarium

= 1,921 × 9,722 / 26,480

= 705 (actual: 1,529)

f_(tropical ∩ breeding ∩ fish) = f_(tropical ∩ breeding) × f_(breeding ∩ fish) / f_breeding

= 5,510 × 36,427 / 81,885

= 2,451 (actual: 3,629)
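The two worked examples above can be checked with a minimal sketch. The function name `estimate_with_cooccurrence` is hypothetical; the counts are those quoted in the examples.

```python
def estimate_with_cooccurrence(f_ab, f_bc, f_b):
    """f_abc is approximated by f_ab * f_bc / f_b, i.e. P(c | a, b) is replaced by P(c | b)."""
    return f_ab * f_bc / f_b

# "tropical aquarium fish": b = aquarium
print(round(estimate_with_cooccurrence(1921, 9722, 26480)))   # 705
# "tropical breeding fish": b = breeding
print(round(estimate_with_cooccurrence(5510, 36427, 81885)))  # 2451
```

Both estimates are still below the actual counts, but they are much closer than the fully independent model allows.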


Result Set Estimation

Even better estimates using initial result set (word

frequency + current result set)

Estimate is simply C/s

• where s is the proportion of the total number of documents

that have been ranked (i.e., processed) & C is the number

of documents found that contain all the query words

Example. “tropical fish aquarium” in GOV2

• After processing 3,000 out of the 26,480 documents that contain “aquarium” (s ≈ 11%), C = 258

f_(tropical ∩ fish ∩ aquarium) = 258 / (3,000 ÷ 26,480) = 2,277 (actual: 1,529)

• After processing 20% of the documents (5,296 documents ranked), C = 356

f_(tropical ∩ fish ∩ aquarium) = 1,778 (actual: 1,529)
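A minimal sketch of the C/s estimate, using the first “tropical fish aquarium” figures above. The function name `estimate_from_partial_ranking` is an assumption made for illustration.

```python
def estimate_from_partial_ranking(c, processed, total):
    """Estimate the full result-set size as C / s, where s = processed / total."""
    s = processed / total  # proportion of candidate documents ranked so far
    return c / s

# 258 matches found after ranking 3,000 of the 26,480 "aquarium" documents.
print(round(estimate_from_partial_ranking(258, 3000, 26480)))  # 2277
```

The estimate can be refreshed as ranking proceeds, since s grows toward 1 and C toward the true count.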


Tokenizing

Forming words from sequence of characters

Surprisingly complex in English, can be harder in other

languages

Tokenization strategy of early IR systems:

Any sequence of alphanumeric characters of length 3 or

more

Terminated by a space or other special character

Upper-case changed to lower-case


Tokenizing

Example (Using the Early IR Approach).

“Bigcorp's 2007 bi-annual report showed profits rose 10%.”

becomes

“bigcorp 2007 annual report showed profits rose”

Too simple for search applications or even large-scale

experiments

Why? Too much information lost

Small decisions in tokenizing can have major impact on the

effectiveness of some queries
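The early IR strategy is simple enough to sketch with one regular expression. The function name `early_ir_tokenize` and its `min_length` parameter are illustrative assumptions; the input is the Bigcorp sentence from the example above.

```python
import re

def early_ir_tokenize(text, min_length=3):
    """Lower-case runs of alphanumeric characters, keeping those of min_length or more."""
    runs = re.findall(r"[A-Za-z0-9]+", text)  # any other character ends a token
    return [t.lower() for t in runs if len(t) >= min_length]

sentence = "Bigcorp's 2007 bi-annual report showed profits rose 10%."
print(" ".join(early_ir_tokenize(sentence)))
# bigcorp 2007 annual report showed profits rose
```

The possessive, the prefix "bi", and the figure "10" are all discarded, which shows how much information the length cutoff loses.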


Tokenizing Problems

Small words can be important in some queries, usually in

combinations

xp, bi, pm, cm, el paso, kg, ben e king, master p, world war II

Both hyphenated and non-hyphenated forms of many

words are common

Sometimes hyphen is not needed

• e-bay, wal-mart, active-x, cd-rom, t-shirts

At other times, hyphens should be considered either as part

of the word or a word separator

• winston-salem, mazda rx-7, e-cards, pre-diabetes, t-mobile,

spanish-speaking


Tokenizing Problems

Special characters are an important part of tags, URLs,

code in documents

Capitalized words can have different meaning from lower

case words

Bush, Apple, House, Senior, Time, Key

Apostrophes can be a part of a word/possessive, or just

a mistake

rosie o'donnell, can't, don't, 80's, 1890's, men's straw hats,

master's degree, england's ten largest cities, shriner's


Tokenizing Problems

Numbers can be important, including decimals

Periods can occur in numbers, abbreviations, URLs,

ends of sentences, and other situations

Nokia 3250, top 10 courses, united 93, quicktime 6.5 pro,

92.3 the beat, 288358

I.B.M., Ph.D., cs.umass.edu, F.E.A.R.

Note: tokenizing steps for queries must be identical to

steps for documents


Tokenizing Process

First step is to use parser to identify appropriate parts of

doc (e.g., markup/tags in markup language) to tokenize

An approach: defer complex decisions to other components,

such as stopping, stemming, & query transformation

Word is any sequence of alphanumeric characters, terminated

by a space or special character, converted to lower-case

Everything indexed

Example: 92.3 → 92 3 but search finds document with 92

and 3 adjacent

To enhance the effectiveness of query transformation,

incorporate some rules into the tokenizer to reduce

dependence on other transformation components


Tokenizing Process

Not that different than simple tokenizing process used in

the past

Examples of rules used with TREC

Apostrophes in words ignored

• o’connor → oconnor bob’s → bobs

Periods in abbreviations ignored

• I.B.M. → ibm Ph.D. → phd
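A rough sketch of a tokenizer with the two rules above, written under stated assumptions: the abbreviation pattern (two or more groups of one or two letters, each followed by a period) is a heuristic chosen for this example, not the rule set actually used with TREC, and the function name `tokenize` is hypothetical.

```python
import re

# Heuristic (assumed): an abbreviation is 2+ groups of 1-2 letters, each followed by a period.
ABBREVIATION = re.compile(r"\b(?:[A-Za-z]{1,2}\.){2,}")
TOKEN = re.compile(r"[a-z0-9]+")

def tokenize(text):
    """Drop apostrophes and abbreviation periods, then split into lower-case alphanumeric tokens."""
    text = text.replace("'", "").replace("\u2019", "")  # straight and curly apostrophes
    text = ABBREVIATION.sub(lambda m: m.group(0).replace(".", ""), text)
    return TOKEN.findall(text.lower())

print(tokenize("O'Connor met Bob's Ph.D. advisor at I.B.M."))
# ['oconnor', 'met', 'bobs', 'phd', 'advisor', 'at', 'ibm']
print(tokenize("92.3 the beat"))
# ['92', '3', 'the', 'beat']
```

The same function has to be applied to queries and documents so that both produce identical tokens.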


Stopping

Function words (conjunctions, prepositions, articles) have

little meaning on their own

High occurrence frequencies

Treated as stopwords (i.e., removed from the text during processing)

Reduces index space

Improves response time

Improves effectiveness

Can be important in combinations

e.g., “to be or not to be”
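A minimal sketch of stopword removal, showing why combinations matter. The stopword list here is a tiny made-up sample, and `remove_stopwords` is a hypothetical helper name; real lists are much longer and application-specific.

```python
# A tiny illustrative stopword list (assumed); real lists are much longer.
STOPWORDS = {"a", "an", "and", "be", "in", "not", "of", "or", "the", "to"}

def remove_stopwords(tokens, stopwords=STOPWORDS):
    """Return the tokens that are not in the stopword list, preserving order."""
    return [t for t in tokens if t not in stopwords]

print(remove_stopwords("the statistical nature of text".split()))
# ['statistical', 'nature', 'text']
print(remove_stopwords("to be or not to be".split()))
# []
```

The second query disappears entirely, which is one reason to index every word and decide about stopwords at query time.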


Stopping

Stopword list can be created from high-frequency words

or based on a standard list

Lists are customized for applications, domains, and even

parts of documents

e.g., “click” is a good stopword for anchor text

One of the policies is to index all words in docs, & make

decisions about which words to use at query time


Stemming

Many morphological variations of words to convey a

single idea

Inflectional (plurals, tenses)

Derivational (making verbs into nouns, etc.)

In most cases, these have the same or very similar

meanings

Stemmers attempt to reduce morphological variations of

words to a common stem

Usually involves removing suffixes

Can be done at indexing time/as part of query processing

(like stopwords)


Stemming

Two basic types

Dictionary-based: uses lists of related words

Algorithmic: uses program to determine related words

Algorithmic stemmers

Suffix-s: remove ‘s’ endings assuming plural

• e.g., cats → cat, lakes → lake, wiis → wii

• Some false positives: ups → up (find a relationship when

none exists)

• Many false negatives: countries → countrie

(Fail to find term relationship)
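The suffix-s stemmer fits in a few lines; the sketch below reproduces the examples above, including both kinds of error. The function name `suffix_s_stem` is an assumption made for illustration.

```python
def suffix_s_stem(word):
    """Strip a single trailing 's', assuming it marks a plural."""
    if len(word) > 1 and word.endswith("s"):
        return word[:-1]
    return word

for w in ("cats", "lakes", "wiis", "ups", "countries"):
    print(w, "->", suffix_s_stem(w))
```

Here "ups" is wrongly conflated with "up", while "countries" becomes "countrie" and so never matches "country".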


Porter Stemmer

Algorithmic stemmer used in IR experiments since the 70’s

Consists of a series of rules designed to remove the longest possible suffix at each step, e.g.,

Step 1a:

- Replace sses by ss (e.g., stresses → stress)

- Delete s if the preceding word part contains a vowel not immediately before the s (e.g., gaps → gap, but gas → gas)

- Replace ied or ies by i if preceded by more than one letter; otherwise, by ie (e.g., ties → tie, cries → cri)

Effective in TREC

Produces stems not words

Makes a number of errors and difficult to modify
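Step 1a can be sketched on its own, under two assumptions that are not stated on the slide: only a, e, i, o, u count as vowels, and words ending in "us" or "ss" are left unchanged (so that "stress" is not cut to "stres"). This covers a single step, not the full Porter algorithm, and the function name `step_1a` is hypothetical.

```python
VOWELS = set("aeiou")  # assumption: y is not treated as a vowel in this sketch

def step_1a(word):
    """Apply the Step 1a suffix rules, trying the longest suffix first."""
    if word.endswith("sses"):
        return word[:-2]                      # sses -> ss
    if word.endswith(("ied", "ies")):
        # preceded by more than one letter -> i, otherwise -> ie
        return word[:-2] if len(word) > 4 else word[:-1]
    if word.endswith(("us", "ss")):
        return word                           # assumed guard, not listed on the slide
    if word.endswith("s"):
        # delete s only if a vowel occurs before the letter that precedes the s
        if any(ch in VOWELS for ch in word[:-2]):
            return word[:-1]
    return word

for w in ("stresses", "gaps", "gas", "ties", "cries"):
    print(w, "->", step_1a(w))
```

The output "cri" illustrates that the stemmer produces stems rather than dictionary words.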


Errors of Porter Stemmer

It is difficult to capture all the subtleties of a language in

a simple algorithm

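[Figure: example Porter stemmer errors, grouped as false positives (a relationship is found where none exists) and false negatives (a real relationship is missed)]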

Porter2 stemmer addresses some of these issues

Approach has been used with other languages


Link Analysis

Links are a key component of the Web

Important for navigation, but also for search

e.g., <a href="http://example.com">Example website</a>

“Example website” is the anchor text

“http://example.com” is the destination link

both are used by search engines
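As a small sketch of how the two parts of a link might be pulled out of a page, the following uses Python's standard `html.parser` module. The class name `AnchorExtractor` and the helper `extract_anchors` are illustrative assumptions; a production crawler would also need to handle relative URLs and malformed markup.

```python
from html.parser import HTMLParser

class AnchorExtractor(HTMLParser):
    """Collect (destination link, anchor text) pairs from <a href=...> elements."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # href of the <a> element currently open, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None

def extract_anchors(html):
    parser = AnchorExtractor()
    parser.feed(html)
    parser.close()
    return parser.links

print(extract_anchors('<a href="http://example.com">Example website</a>'))
# [('http://example.com', 'Example website')]
```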


Anchor Text

Describe the content of the destination page

i.e., collection of anchor text in all links pointing to a page

used as an additional text field

Anchor text tends to be short, descriptive, and similar to

query text

Retrieval experiments have shown that anchor text has

significant impact on effectiveness for some types

of queries

i.e., more than PageRank


PageRank

Billions of web pages, some more informative than others

Links can be viewed as information about the popularity

(authority?) of a web page

Can be used by ranking algorithms

Inlink count could be used as simple measure

Link analysis algorithms like PageRank provide more reliable ratings and are less susceptible to link spam

PageRank of a page is the probability that the “random

surfer” will be looking at that page

Links from popular pages increase PageRank of pages

they point to, i.e., links tend to point to popular pages


PageRank

PageRank (PR) of page C = PR(A)/2 + PR(B)/1

More generally,

PR(u) = Σ_{v ∈ B_u} PR(v) / L_v

where u is a web page

B_u is the set of pages that point to u

L_v is the number of outgoing links from page v (not counting duplicate links)


PageRank

Don’t know PageRank values at start

Example. Assume equal values of 1/3, then

1st iteration: PR(C) = 0.33/2 + 0.33/1 = 0.5

PR(A) = 0.33/1 = 0.33

PR(B) = 0.33/2 = 0.17

2nd iteration: PR(C) = 0.33/2 + 0.17/1 = 0.33

PR(A) = 0.5/1 = 0.5

PR(B) = 0.33/2 = 0.17

3rd iteration: PR(C) = 0.5/2 + 0.17/1 = 0.42

PR(A) = 0.33/1 = 0.33

PR(B) = 0.5/2 = 0.25

Converges to PR(C) = 0.4

PR(A) = 0.4

PR(B) = 0.2
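The iteration above can be reproduced with a short sketch. It assumes the link structure implied by the three update equations (A links to B and C, B links to C, C links to A), uses no damping factor, and assumes every page has at least one outgoing link. The function name `pagerank` and the dictionary format are illustrative choices.

```python
def pagerank(out_links, iterations=50):
    """Repeatedly set PR(u) to the sum of PR(v) / L_v over pages v that link to u."""
    pages = list(out_links)
    pr = {p: 1.0 / len(pages) for p in pages}  # start from equal values
    for _ in range(iterations):
        new_pr = {p: 0.0 for p in pages}
        for v, targets in out_links.items():
            unique_targets = set(targets)      # duplicate links are not counted
            for u in unique_targets:
                new_pr[u] += pr[v] / len(unique_targets)
        pr = new_pr                            # all pages are updated together
    return pr

# Link structure assumed from the example: A -> B, A -> C, B -> C, C -> A.
graph = {"A": ["B", "C"], "B": ["C"], "C": ["A"]}
print({p: round(v, 2) for p, v in pagerank(graph).items()})
# {'A': 0.4, 'B': 0.2, 'C': 0.4}
```

Calling `pagerank(graph, iterations=1)` gives 0.33, 0.17, and 0.5 for A, B, and C, matching the first iteration above.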
