Basics of Statistics

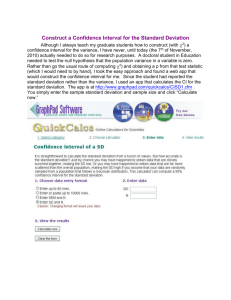
