Lecture Week 1.2

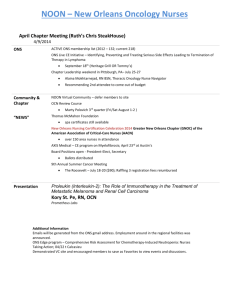

CSCI 561 Foundations of Artificial Intelligence
Week 1: Overview and Intelligent Robots/Agents
Fall 2013
Instructor: Wei-Min Shen
TA: Thomas Collins

Review
• Intelligence
  – Does the right thing given what it knows (rational)
  – The common underlying capabilities that enable a system to be general, literate, rational, autonomous and collaborative
• Artificial Intelligence
  – The scientific understanding of the mechanisms underlying thought and intelligent behavior and their embodiment in machines
• Intelligent Agents
  – Goals, knowledge, perception and action

Today's Lecture
• Agents and environments
• The concept of rational behavior
• Environments
• Agent types and variations
• Project 1 description and assignment

What is an (Intelligent) Agent?
• An over-used, overloaded, and misused term.
• Anything whose "behaviors" can be viewed as perceiving its environment through sensors and acting upon that environment through effectors to maximize progress towards its goals.
• PAGE (Percepts, Actions, Goals, Environment)
• ROBOT = Agent + Body (sensors and effectors)

Agents or Robots
How to design this?
[Figure: the Agent receives percepts from the Environment through Sensors, holds Goals, and sends actions to the Environment through Effectors.]

Questions?
• Is a thermostat an agent?
• Is an air conditioner an agent?
• Are you an agent?
• Is a Roomba an agent?
• Can you give some examples to differentiate agents from robots?
  – W, E, B, R, O, B,

Example Agent and Environment
Sensors=? (Percepts=?)
Effectors=? (Actions=?)
Goals=?
Behaviors=?

Is this SuperBot module an agent?
• Sensors/percepts=?
• Effectors/actions=?
• Goals=?
• Environment=?
• Behaviors=?

Where are the agents in this robot?
SuperBot Simulator

Example Agent and Environment
Your Project-1 Agent: From A to B
Sensors/Percepts=?
Effectors/Actions=?
Goals=?
Behaviors=?
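
As a hedged illustration of the PAGE view, the thermostat from the questions above can be written as a tiny sense-act loop. Everything in this sketch is an assumption made up for illustration: the `ThermostatAgent` class, the 20-degree setpoint, the tolerance band, and the toy room dynamics are not part of the course material or the SuperBot simulator.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ThermostatAgent:
    """Hypothetical PAGE-style agent: percept = temperature, goal = setpoint."""
    setpoint: float = 20.0  # Goal: keep the room near this value (assumed)
    band: float = 0.5       # Tolerance around the setpoint (assumed)

    def act(self, temperature: float) -> str:
        """Map the current percept to an action."""
        if temperature < self.setpoint - self.band:
            return "HEAT_ON"
        if temperature > self.setpoint + self.band:
            return "HEAT_OFF"
        return "NO_OP"


def step_room(temperature: float, heater_on: bool) -> float:
    """Toy environment: the heater adds 1.0 per step, the room loses 0.5."""
    return temperature + (1.0 if heater_on else 0.0) - 0.5


def run(agent: ThermostatAgent, temperature: float, steps: int) -> list[str]:
    """Sense-act loop: the sensor reads the room, the effector switches the heater."""
    heater_on = False
    actions = []
    for _ in range(steps):
        action = agent.act(temperature)  # percept -> action
        if action == "HEAT_ON":
            heater_on = True
        elif action == "HEAT_OFF":
            heater_on = False
        actions.append(action)
        temperature = step_room(temperature, heater_on)  # environment responds
    return actions


if __name__ == "__main__":
    print(run(ThermostatAgent(), temperature=18.0, steps=8))
```

Whether such a device deserves to be called an agent is exactly the question the slide raises; the sketch only shows that percepts, actions, a goal, and an environment can all be identified for it.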

Environments
• Environment: World in which the agent operates
  – To understand agent behavior, or to design a special-purpose agent, we need to understand its environment
• Simon's Ant: Complex behavior may arise from a simple program in a complex environment
• Rationality is defined, at least in part, in terms of the agent's environment
• PEAS description of the environment:
  – Performance: Measure for success/progress/quality
  – Environment: The world in which the agent operates
    • Environment in the narrow versus the broad context
  – Actuators: How the agent affects the environment
  – Sensors: How the agent perceives the environment

A Windshield Wiper Agent
How do we design an agent that can wipe the windshield when needed?
• Goals?
• Sensors? (Percepts?)
• Effectors? (Actions?)
• Environment?

A Windshield Wiper Agent (Cont'd)
• Goals: Keep windshields clean & maintain visibility
• Sensors: Camera (moisture sensor)
  – Percepts: Raining, Dirty
• Effectors: Wipers (left, right, back)
  – Actions: Off, Slow, Medium, Fast
• Environment: Inner city, freeways, highways, weather …

Intelligent Agents/Robots
• Agent and Robot
  – What can it see, do, think, and learn?
  – What does it want?
    • "Fame/fortune", goal, utility, solutions to problems
• Environment and world
  – What can be seen?
  – What can be changed?
  – Who else is there? (Obstacles and other agents)
• Cognitive Cycles

Cognitive Cycle
• Agent repeatedly decides what to do next
  – The cognitive cycle repeats for the agent's lifetime: Perception, Memory Access, Decision, Learning, Action
  – In humans, the cycle runs at ~50-100 ms
    • This is the minimum time to choose an action, but many such cycles can be combined to make harder choices
• On each cycle, the agent can be considered to be computing a function for decision making

Two Views of Agent Behavior
• View 1 (popular): The agent is a function that maps percept sequences to actions in the environment
  – f1: S* → A
  – [Dirty] → WIPE
  – [Car <20' away] → RUN
• View 2 (deeper): The agent is a function that maps percept sequences and actions in the environment to a sequence of predictions
  – f2: (S*, A) → P*
  – [Car <20' away], STAY → HIT
• Difference: f2 knows what to do, and why to do it.

Rationality & Rational Agents
• What is rational at a given time depends on:
  – What has been seen? Percept sequence to date (sensors)
  – What can you do? Actions
  – What do you know? Prior environment knowledge
  – What do you want? Performance measure
    • Ideally objective, external, based on what is to be achieved
• A rational agent chooses whichever action maximizes the expected value of the performance measure given the percept sequence to date and the prior environment knowledge
• Most human beings have only bounded rationality
  – My story of playing irrational Risk with my kids

Environment Types
• However you define the environment, its nature can dramatically impact the complexity of the required agent program as well as the difficulty of achieving goals in it
• The next few slides look at some key attributes of environments

Environment Types
• Fully vs. partially observable: An environment is fully observable when the sensors can detect all aspects that are relevant to the choice of action.
• Deterministic vs. stochastic: If the next environment state is completely determined by the current state and the executed action, then the environment is deterministic.
• Episodic vs. sequential (Markov or not): In an episodic environment the agent's experience can be divided into atomic steps where the agent perceives and then performs a single action. The choice of action depends only on the episode itself, not on previous actions/episodes.
• Static vs. dynamic: If the environment can change while the agent is choosing an action, the environment is dynamic.
• Discrete vs. continuous: This distinction can be applied to the state of the environment, the way time is handled, and the percepts/actions of the agent.
• Single vs. multi-agent: Does the environment contain more than one agent whose behavior interacts in some relevant way?

|                | Crossword | Backgammon | Part-Picking Robot | Robot Taxi |
|----------------|-----------|------------|--------------------|------------|
| Observable?    | FULL      | FULL       | PARTIAL            | PARTIAL    |
| Deterministic? | YES       | NO         | NO                 | NO         |
| Episodic?      | NO        | NO         | YES                | NO         |
| Static?        | YES       | YES        | NO                 | NO         |
| Discrete?      | YES       | YES        | NO                 | NO         |
| Single-agent?  | YES       | NO         | YES                | NO         |

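
The completed table can be encoded as data so that the four example environments can be compared programmatically. This is only an illustrative sketch: the `EnvironmentProfile` type and the `easy_property_count` score are hypothetical names introduced here, and treating the FULL/YES side of each distinction as the easier one, then simply counting, is a crude assumption rather than a measure defined in the lecture.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EnvironmentProfile:
    """One column of the table; True marks a FULL or YES entry."""
    fully_observable: bool
    deterministic: bool
    episodic: bool
    static: bool
    discrete: bool
    single_agent: bool

    def easy_property_count(self) -> int:
        """Count how many of the six properties take the FULL/YES value."""
        return sum(getattr(self, f.name) for f in fields(self))


# Values copied row by row from the table above.
ENVIRONMENTS = {
    "Crossword": EnvironmentProfile(True, True, False, True, True, True),
    "Backgammon": EnvironmentProfile(True, False, False, True, True, False),
    "Part-Picking Robot": EnvironmentProfile(False, False, True, False, False, True),
    "Robot Taxi": EnvironmentProfile(False, False, False, False, False, False),
}


if __name__ == "__main__":
    ranked = sorted(
        ENVIRONMENTS.items(),
        key=lambda item: item[1].easy_property_count(),
        reverse=True,
    )
    for name, profile in ranked:
        print(f"{name:20s} {profile.easy_property_count()} of 6")
```

Under this counting, the crossword scores highest and the robot taxi lowest, which matches the intuition that the taxi faces the hardest combination of properties.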
Environment Difficulty
• The simplest environment is
  – Fully observable, deterministic, episodic, static, discrete and single-agent
• Real-world situations are frequently
  – Partially observable, stochastic, sequential, dynamic, continuous and multi-agent
• Other factors that determine difficulty include
  – Difficulty of individual actions
    • E.g., crosswords, part picking
  – Size/combinatorics of environment
    • E.g., the game of Go has ~$3^{19 \times 19}$ (≈ $10^{172}$) states

Agent Types
• Four basic kinds of agent programs will be discussed:
  – Simple reflex agents
  – Model-based reflex agents
  – Goal-based agents
  – Utility-based agents
• All can be turned into learning agents
• Two additional, more complex variations
  – Hybrid agents
  – Reflective agents

Simple Reflex Agent
• Selects an action on the basis of only the current percept
• Large reduction in possible percept/action situations (next slide)
• May be implemented as condition-action rules
  – E.g., "If dirty then suck"

Model-Based Reflex Agent
• To tackle partially observable environments
  – Maintain internal state representing best estimate of current world situation
• Over time, update state using world knowledge
  – How the world changes
  – How actions affect the world
  ⇒ Model of World

Goal-Based Agent
• Goals describe what the agent wants
  – By changing goals, can change what the agent does in the same situation
• Combining models and goals enables determining which possible future paths could lead to goals
  – Typically investigated in search, problem solving and planning research

Utility-Based Agent
• Some goals can be achieved in different ways
  – Some solutions may be "better", i.e., have higher utility
• Utility function maps a (sequence of) state(s) onto a real number
  – Can think of goal achievement as 1 versus 0
• Can help in optimization or in arbitration among goals

Learning Agent
• All previous agent programs describe methods for selecting actions, yet they do not explain the origins of these programs
  – Learning programs can be used to do this
• Advantages
  – Robustness of the program in partially or totally unknown environments
  – Reduced programming effort
• Disadvantages
  – May do the unexpected in a disastrous manner

Nominal Structure of Learning Agent
• Performance element: selects actions based on percepts
  – Corresponds to the previous agent programs
• Learning element: introduces improvements in the performance element
• Critic: provides feedback on the agent's performance based on a fixed performance standard
• Problem generator: actively suggests actions that will lead to new and informative experiences
  – Exploration vs. exploitation

Self-Awareness Agent
Related to self-awareness and meta-level processing

Adaptive Agents
• New Task → Learning → New Knowledge/Skill
  – No a priori knowledge (e.g., baby swimming)
    • Don't-know-how → learning → know-how
• Recovery from unexpected failures or dynamics
  – Recover from the unexpected (e.g., adult with inverted vision)
    • Know-how → Failures → learning → recovery
8/28/13 USC-Polymorphic-Robotics-Lab

Surprise-Based Learning Agents
[Figure: surprise-based learning architecture. Components: Perception, Surprise Analyzer, Model Modifier, Model Synchronizer, Predictor, Model, Action Selector (Planner), Goals (Intentions), and the Task/Environment (black box). Signals: observation, prediction, plan, actions.]
• The Learner continuously makes predictions, detects surprises, analyzes surprises, extracts critical information from surprises, and improves and uses its action models
Surprise ==> Model ==> Prediction

Real vs Artificial Intelligence
• Real: Human mind as a network of thousands or millions of neural agents working in parallel.
• To produce artificial intelligence, this school holds, we should build systems that also contain many agents and systems for arbitrating among the agents' competing results.
• Distributed decision-making & control
• Challenges:
  – Action selection: What to do next?
  – Conflict resolution
[Figure: an Agency connected to the world through sensors and effectors]

Self-Reconfigurable Body/Mind
[Figure: an agent network receives percepts from the Environment through Sensors and sends actions through Effectors.]
What body should I become?
What behaviors should I do?

Other Views of Agent Types
• Knowledge
  – Fixed versus Flexible Knowledge
    • Is new knowledge learned?
  – Covers past, present, future
    • Past: Percept Sequence (Table)
    • Present: What to do now (Reflex)
    • Future: Enables prediction (Model)
• Success (/Goals)
  – Fixed versus Flexible
    • Reflex (and model-based) agents have fixed metrics of success
    • Goal/utility-based agents can change the metric by task
  – Binary (goal) versus Graded (utility)

Hybrid Agents
• Many practical agents combine two or more of the basic types
• For example, robots that must perform complex tasks in real time frequently combine reflex and model-based agents
  – Reactive: Reflexes provide fast responses
  – Deliberative: Models and goals enable thinking about the future

Your Project-1 Agent: From A to B
Sensors: GPS (you and goal), color camera
Actions: forward, backward, left, right
Goal: Go to goal, stay there, report goal color
Behavior: Your choice ("If X, then do Y")
[Figure: map showing the goal and my current location]

Project 1 Assignment
• Download the SuperBot simulator from http://www.isi.edu/robots/CS561
• Install the simulator on your computer
• Compile and run the random agent example
• Design and build your Project-1 agent
  – Go from A to B in an open environment
• Grade: We will test your agent on different A and B
• Due date: 24:00 on 9-18-2013.

Project 1 Details
• Sensors:
  – 1: Your current location
  – 2: Goal location
  – 3: Color of the object in front of you
• Actions:
  – Forward, backward, left, right
• Goal:
  – Go to the goal location, report the color of the goal object
• Environment:
  – Open environment with no other objects or agents
• Example: a random agent you can play
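
One possible "If X, then do Y" behavior for this task is a greedy rule that always shrinks the larger remaining coordinate gap. The sketch below is not SuperBot simulator code. The integer grid, the `choose_action` and `simulate` names, the `"stay"` marker, and the assumption that forward/backward move along y while left/right move along x are all hypothetical stand-ins for the real sensor and action interface.

```python
from typing import Dict, List, Tuple

Position = Tuple[int, int]

# Assumed effect of each action on (x, y); the real simulator may differ.
MOVES: Dict[str, Position] = {
    "forward": (0, 1),
    "backward": (0, -1),
    "left": (-1, 0),
    "right": (1, 0),
}


def choose_action(current: Position, goal: Position) -> str:
    """Reflex rule: close the larger coordinate gap first.

    Returns "stay" (a marker used only in this sketch) once at the goal.
    """
    dx = goal[0] - current[0]
    dy = goal[1] - current[1]
    if dx == 0 and dy == 0:
        return "stay"
    if abs(dx) >= abs(dy):
        return "right" if dx > 0 else "left"
    return "forward" if dy > 0 else "backward"


def simulate(start: Position, goal: Position, max_steps: int = 100) -> List[str]:
    """Run the rule in an open grid with no obstacles; return the actions taken."""
    position = start
    actions: List[str] = []
    for _ in range(max_steps):
        action = choose_action(position, goal)
        if action == "stay":
            break
        dx, dy = MOVES[action]
        position = (position[0] + dx, position[1] + dy)
        actions.append(action)
    return actions


if __name__ == "__main__":
    print(simulate(start=(0, 0), goal=(2, -1)))
```

The rule uses only the two location sensors, so it is a simple reflex agent in the lecture's terms. Reporting the goal color would need the third sensor and is left out of this sketch.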