
Grade Distribution: Examining nine years in three freshman service learning courses

Research Study

ENGL 5377

Spring 2007

Dr. Angela Eaton

Bobbie Latham

Tuesday, May 8, 2007

Latham 2

Introduction

Institutions of higher education admit, enroll, and graduate students, and to complete a college course a student must receive a grade. A common myth among administrators in higher education is that professors assign grades based on factors other than the academic work a student completes in a specific course. This myth is commonly referred to as grade inflation. Many articles debate whether grade inflation exists and, if it does, what causes it. Grade inflation has been defined as “an upward shift in the grade point average (GPA) of students over an extended period of time without a corresponding increase in student achievement” (Rosovsky and Hartley, 4). Others ask whether it is wrong for grades to increase if students master the coursework (French, 66). French declares that “grade increases do not equal grade inflation. Grade inflation results from inappropriate standards” (66).

This study examines whether grades in three freshman service learning courses at Texas Tech University have changed in a meaningful way over nine years. It examines the grade distribution for all fall semester sections of History 2300, English 1301, and Political Science 1301 from 1994 to 2002. Enrollment in these three courses is required of all freshmen unless they have received credit for the courses by examination.

With this study, I hope either to dispel the myth or to show that further research is needed into why grade inflation exists.

Background & Literature Review

The fact that “college grades and grading practices constantly spark controversy both inside and outside the academy” makes grade inflation an interesting subject (Hu, 1).

Grades first “came into being” at Yale University in 1783 (Milton, 3). Since then, many studies have compared the grade point averages (GPAs) of students enrolled at an institution of higher education in the past with those of students enrolled at the same institution today. The most frequently quoted studies have taken place at Ivy League schools such as Princeton and Harvard. Bradford Wilson reports that the median GPA for the Princeton class of 1973 “was 3.078, while the median GPA for the class of 1997 was 3.422,” and that the share of all variations of the “A” grade stood at 30.7 percent and rose to 42.5 percent within the period of 1992-97 (Wilson, 38). He also reports that at “Dartmouth, the average GPA rose from 3.06 to 3.23 from 1968 to 1994” (38). More important than the rise in GPA, however, are the percentages of each grade given to the student body.

Why is grade inflation so controversial? Authors hold strong and varied feelings about the subject. Words used to describe grade inflation include phenomenon (Wilson, 38), myth (Kohn, B7), and swindle (Seligman, 94). The emotions vary depending on whether the author is a member of the faculty, the administration, or the student body. Professor Harvey Mansfield, a faculty member at Harvard University, shows his frustration by stating that “grade inflation got started in the late ‘60s and early ‘70s. The grades that faculty members now give deserve to be a scandal” (Kohn, B7). Others argue that an “upward trend of college grades cannot be considered evidence of grade inflation” and that such trends matter only insofar as there is “evidence that higher grades are undeserved” (Hu, 15).


Richard Rothstein offers other explanations for rising grades. He suggests that higher grades “could be the result of smarter students” and that if “students are more proficient, there should be more A’s, even if grading is just as strict” (8). He also notes that classes are smaller, which may produce better learning and thus higher achievement (8). Yet another explanation he offers for the increasing number of “A” grades is that professors may “demand more reasoning and less memorization,” so that students learn the material by becoming involved in it (8).

Regardless of the feelings associated with grade inflation, the literature points to two types of analytical strategies for revealing it. The first examines the increase in average grades over time, while the second examines changes in the grade distribution over time (Hu, 15). If the number of students receiving “A” and “B” grades increases while the number receiving “C” and “D” grades decreases, “grade inflation may be present” (Hu, 15). While there are many sides to the debate, many institutions understand what grade inflation is but do not know how to combat it effectively. Even if grade inflation exists, many people place considerable trust in the grading systems institutions use. Hu recognizes that various groups, including parents and businesses, have faith in the current grading system and argues that “it is necessary to accurately identify problems in college grading practices and design effective policies to correct and prevent grading problems and optimize student learning” (5). In short, if grading problems exist, they should be fixed.
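Hu's two strategies can be illustrated with a short Python sketch. It assumes grade counts are available per year as a dictionary such as {"A": 20, "B": 30, ...}, uses the 0-4 grade points of the university's GPA scale, and compares only the first and last years. The function names and the example counts are hypothetical and are not data from this study.

```python
# Sketch of Hu's two strategies for spotting possible grade inflation.
# Assumptions: counts per year are plain dicts; grade points are A=4 ... F=0;
# the example counts below are invented for illustration only.
GRADE_POINTS = {"A": 4, "B": 3, "C": 2, "D": 1, "F": 0}


def summarize_year(counts):
    """Return (average grade points, share of A+B, share of C+D) for one year."""
    total = sum(counts.get(g, 0) for g in GRADE_POINTS)
    if total == 0:
        raise ValueError("no A-F grades recorded for this year")
    average = sum(GRADE_POINTS[g] * counts.get(g, 0) for g in GRADE_POINTS) / total
    ab_share = (counts.get("A", 0) + counts.get("B", 0)) / total
    cd_share = (counts.get("C", 0) + counts.get("D", 0)) / total
    return average, ab_share, cd_share


def compare_first_last(by_year):
    """Apply both strategies to the earliest and latest year in by_year."""
    years = sorted(by_year)
    avg0, ab0, cd0 = summarize_year(by_year[years[0]])
    avg1, ab1, cd1 = summarize_year(by_year[years[-1]])
    return {
        "average_change": avg1 - avg0,   # strategy 1: change in average grade
        "ab_share_change": ab1 - ab0,    # strategy 2: change in distribution
        "cd_share_change": cd1 - cd0,
        # Hu's pattern: more A/B grades and fewer C/D grades ("may be present")
        "hu_pattern": ab1 > ab0 and cd1 < cd0,
    }


example = compare_first_last({
    1994: {"A": 20, "B": 30, "C": 30, "D": 10, "F": 10},  # hypothetical
    2002: {"A": 30, "B": 30, "C": 25, "D": 5, "F": 10},   # hypothetical
})
```

Even when hu_pattern is true, the sketch indicates only that grade inflation may be present; as Rothstein points out, rising grades can also reflect stronger students.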

In the fall of 2006, I ran a pilot study using the same grade data as this study. It suggested that other statistical tests should be run on all grades for all years and the results compared. The tests I ran in the pilot study were descriptive in nature and showed no statistical significance. However, when I ran a test comparing all the grades for two years, I found a statistically significant difference. This study is an expansion of that earlier pilot study.

Methodology

To find out whether grade inflation exists at Texas Tech University, even to a small degree, I accessed and reviewed nine years of fall semester grades in Political Science 1301, English 1301, and History 2300. The Registrar at the university provided all data after approval was obtained from the appropriate human subjects review board (see attachment A). Identifiable information, such as the students receiving the grades or the professors giving them, was not accessed. Information retained for this study includes the course prefix and number and the number of A, B, C, D, and F grades for all sections in each fall semester from 1994 to 2002.

I chose this specific nine-year period because several new academic policies enacted in the fall of 2004 would, I believe, have skewed the data. One policy in place during the nine-year period that may be cause for concern is the grade replacement policy. Students may repeat a course in which they have earned a grade, then complete a grade replacement form and submit it to the Office of the Registrar. All preceding grades for that course are replaced with the most recent, highest grade, so some of the letter grades in the fall terms of the nine-year period may include replaced grades. The study originally used 10 years of data, but the final year, 2003, was excluded: in the fall of 2003, the English department stopped awarding credit for high CLEP scores.


Because of this change, enrollment in English 1301 jumped dramatically, along with the number of high grades given. This may be because students with high CLEP writing scores enrolled in the course after being denied credit.

Another policy change worth noting is the change in admission standards. Beginning in the fall of 1997, admission standards were raised. Before that time, students in the top 25% of their high school graduating class had to earn a minimum composite score of either 22 on the ACT or 1000 on the SAT. For students in the second 25% of their graduating class, the minimum composite scores were 27 on the ACT and 1180 on the SAT. The new standards for fall 1997 raised the minimums for the top 25% to 25 on the ACT and 1140 on the SAT, and for the second 25% to 28 on the ACT and 1230 on the SAT (Ganus). Higher admission standards may account for some apparent grade inflation, since better students can be expected to earn better grades.

Results and Discussion

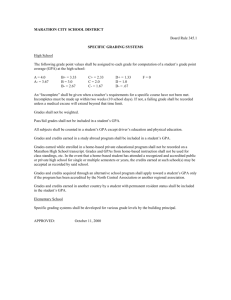

Initial Statistical Tests

I evaluated nine years of grade data in POLS 1301, ENGL 1301, and HIST 2300 using both inferential and descriptive statistics. I ran a one-way ANOVA to compare the grades across years. I assigned a number to each letter grade as follows: A = 5, B = 4, C = 3, D = 2, F = 1. I then multiplied the assigned grade number by the number of students earning that grade. I used a significance level of .05. Over the nine-year period, there was no statistically significant difference in the grades given in any of the three courses (table 1).

Course       Significance
POLS 1301    1.0 (p > .05)
ENGL 1301    1.0 (p > .05)
HIST 2300    .995 (p > .05)

Table 1

To investigate the data further, I individually listed the grades in HIST 2300 for 1994 and 1995 without assigning a number to each grade. The ANOVA on the individually listed grades for these two years returned a statistically significant difference (p < .05). This difference may be due to listing the grades individually or to comparing only two fall semesters of data. Further statistical analysis is needed to determine whether analyzing the grades individually affects statistical significance over the nine-year period.
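One way to analyze individually listed grades, sketched here in Python as an assumption rather than a reconstruction of the procedure actually used, is to expand each year's counts into one observation per student, coded A = 5 through F = 1 as in the ANOVA described above, and compute a one-way ANOVA F statistic with the standard library. The helper names and the tiny example counts are hypothetical. The sketch returns F and its degrees of freedom; the p-value would then come from the F distribution, for example with SciPy's scipy.stats.f.sf(F, df_between, df_within).

```python
# One-way ANOVA on individually listed (expanded) grades, standard library only.
# Assumption: grades coded A=5, B=4, C=3, D=2, F=1; example counts are invented.
CODES = {"A": 5, "B": 4, "C": 3, "D": 2, "F": 1}


def expand(counts, codes=CODES):
    """Turn {'A': 2, 'B': 1} into one coded observation per student: [5, 5, 4]."""
    return [codes[grade] for grade, n in counts.items() for _ in range(n)]


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of numeric samples."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


year_a = {"A": 2, "B": 1}  # hypothetical year
year_b = {"B": 1, "C": 2}  # hypothetical year
f_stat, df_b, df_w = one_way_anova([expand(year_a), expand(year_b)])
```

Shifting every code by the same constant (for example, A = 4 through F = 0) leaves F unchanged, so the 5-to-1 coding by itself does not affect the ANOVA result.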

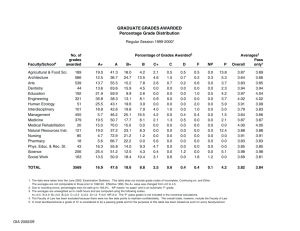

In addition to the inferential statistics above, I created several visual representations of the grade data (tables 2-5). To further describe the grade data, I calculated the course GPA for each year and course, as shown in table 2. At Texas Tech University, GPAs range from 0.00, indicating an average of “F,” to 4.00, indicating an average of “A.”

All of the GPAs in table 2 fall between 2.0 and 3.0 and indicate an average grade of “C.”
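Assuming the course GPAs in table 2 are ordinary quality-point averages on the 0.00 to 4.00 scale (A = 4, B = 3, C = 2, D = 1, F = 0) computed from the A through F counts only, the calculation for one course and year can be sketched in Python as follows. The function name and sample counts are illustrative.

```python
# Course GPA from letter-grade counts on a 0.00-4.00 scale.
# Assumption: only A-F grades are averaged (no plus/minus, W, or I grades).
POINTS = {"A": 4.0, "B": 3.0, "C": 2.0, "D": 1.0, "F": 0.0}


def course_gpa(counts):
    """Quality points divided by the number of A-F grades awarded."""
    graded = sum(counts.get(g, 0) for g in POINTS)
    if graded == 0:
        raise ValueError("no A-F grades to average")
    quality_points = sum(POINTS[g] * n for g, n in counts.items() if g in POINTS)
    return quality_points / graded


print(f"{course_gpa({'A': 3, 'C': 1}):.3f}")  # hypothetical counts; prints 3.500
```

Because each ANOVA code used earlier is one point higher than the corresponding grade points (A = 5 versus A = 4), the mean of the coded grades for a course equals its GPA plus 1.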


Breakdown of Course GPA by Year (fall terms only)

Year   POLS 1301 GPA   ENGL 1301 GPA   HIST 2300 GPA
1994   2.044           2.667           2.010
1995   2.482           2.498           2.113
1996   2.654           2.387           2.268
1997   2.516           2.71            2.245
1998   2.516           2.757           2.330
1999   2.496           2.900           2.375
2000   2.305           2.762           2.493
2001   2.516           2.812           2.407
2002   2.555           2.659           2.414

Table 2

Table 2 shows that the average class GPA changed in seemingly small increments over the nine-year period. Figures 1-3, however, use a smaller scale: their GPA axis runs only from 1.5 to 2.9, which makes the GPA changes over the nine-year period appear dramatic.

[Chart: Political Science 1301, Class GPA (axis 1.5 to 2.9) by Year]

Figure 1


[Chart: English 1301, Class GPA (axis 1.5 to 2.9) by Year]

Figure 2

[Chart: History 2300, Class GPA (axis 1.5 to 2.9) by Year]

Figure 3

The visual presentation above may be misconstrued. While table 2 shows little or no change to the naked eye, figures 1-3 present a different picture.

Based on the statistical tests run, the data are inconclusive with regard to grade inflation in the three freshman service learning courses at Texas Tech University over the nine-year period.


Additional Statistical Tests

To break down the statistics further, I ran a chi-square crosstabs test using SPSS (student version 15.0) to test for an association between grade and year across all nine years of data. I was interested in assessing the differences among the grade distributions over the nine years. I included all A, B, C, D, and F grades given in each course over the nine-year period, which amounts to a substantial amount of data: 15,765 grades in History 2300, 15,653 grades in Political Science 1301, and 15,970 grades in English 1301 from 1994 to 2002. The grade distribution charts for each course are shown in figures 4, 5, and 6, and the corresponding chi-square statistics in tables 3-5.
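The Pearson chi-square and likelihood-ratio statistics that SPSS reports in tables 3-5 can be sketched in Python for any table of counts with years as rows and grades as columns. For nine years and five grades the degrees of freedom are (9 - 1)(5 - 1) = 32, matching the tables. The p-value helper uses the closed-form chi-square tail that holds only for even degrees of freedom, which covers df = 32. The function names and the small example table are illustrative assumptions, not part of the study.

```python
import math


def chi_square_independence(table):
    """Pearson chi-square and likelihood-ratio G^2 for an r x c table of counts.

    Rows are assumed to be years and columns the grades A-F.
    Returns (chi2, g2, df, min_expected).
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = g2 = 0.0
    min_expected = math.inf
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n  # count under independence
            min_expected = min(min_expected, expected)
            chi2 += (observed - expected) ** 2 / expected
            if observed > 0:  # a zero cell contributes 0 to G^2
                g2 += 2 * observed * math.log(observed / expected)
    df = (len(table) - 1) * (len(table[0]) - 1)
    return chi2, g2, df, min_expected


def chi2_sf_even_df(x, df):
    """Upper-tail p-value P(X >= x) for a chi-square variable with EVEN df.

    Closed form: exp(-x/2) * sum_{k=0}^{df/2 - 1} (x/2)^k / k!
    """
    if df <= 0 or df % 2:
        raise ValueError("closed form requires a positive even df")
    half = x / 2
    term = total = 1.0
    for k in range(1, df // 2):
        term *= half / k
        total += term
    return math.exp(-half) * total


# Hypothetical 2-year x 3-grade table, for illustration only.
chi2, g2, df, min_exp = chi_square_independence([[10, 20, 30], [20, 20, 20]])
p = chi2_sf_even_df(chi2, df)
```

min_exp corresponds to the minimum expected count that SPSS reports in each table's footnote; the usual rule of thumb is that expected counts should be at least 5 for the chi-square approximation to be trusted. Applied to the reported History 2300 value, chi2_sf_even_df(351.933, 32) is far below .001, consistent with the .000 in table 3.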

[Bar chart: History 2300, number of A, B, C, D, and F grades by Year, 1994-2002 (axis 0 to 800)]

Figure 4

The bar chart above shows the number of A, B, C, D, and F grades given in each year of the nine-year period. The largest jump in any one grade occurs between 2000 and 2001 for the grade of B (green bar). The chi-square test for History 2300 showed a statistically significant difference (p < .05) in the grades given over the nine years (figure 4).


Chi-Square Tests

                      Value        df   Asymp. Sig. (2-sided)
Pearson Chi-Square    351.933(a)   32   .000
Likelihood Ratio      351.245      32   .000
N of Valid Cases      15765

a. 0 cells (.0%) have expected count less than 5. The minimum expected count is 157.25.

Table 3

[Chart: Political Science 1301, number of A, B, C, D, and F grades by Year, 1994-2002 (axis 0 to 600)]

Figure 5

In the Political Science 1301 graph, the number of “A” grades rises notably from 1994 to 1995 and again in 1996. The number of “D” grades given between 1996 and 1999 is also of interest: it rises and then falls off slightly in 1999.


Chi-Square Tests

                      Value        df   Asymp. Sig. (2-sided)
Pearson Chi-Square    454.083(a)   32   .000
Likelihood Ratio      476.172      32   .000
N of Valid Cases      15653

a. 0 cells (.0%) have expected count less than 5. The minimum expected count is 122.22.

Table 4

The chi-square test for Political Science 1301 shows a statistically significant difference (p < .05) in the grades given over the nine years (figure 5). The minimum expected count of 122.22 exceeds 5, so the test's expected-count assumption is satisfied.

[Chart: English 1301, number of A, B, C, D, and F grades by Year, 1994-2002 (axis 0 to 1,000)]

Figure 6

The English 1301 chart above shows the largest jump between 1998 and 1999, in the grade of “A” (blue bar): the number of “A” grades rose from 358 in 1998 to 568 in 1999.


Chi-Square Tests

                      Value        df   Asymp. Sig. (2-sided)
Pearson Chi-Square    668.732(a)   32   .000
Likelihood Ratio      662.849      32   .000
N of Valid Cases      15970

a. 0 cells (.0%) have expected count less than 5. The minimum expected count is 71.86.

Table 5

The chi-square test for English 1301 shows a statistically significant difference (p < .05) in the grades given over the nine years (figure 6).

Limitations to the Data

Although the two-variable chi-square tests show a statistically significant difference, they do not show the cause of these differences, so the cause remains unknown. Other studies of grade inflation have used additional factors or variables to show where differences in the data occur. Because only the year and the grades of A, B, C, D, and F were tested for differences in distribution, the results show only that a statistically significant difference exists, not where that difference lies.
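One common way to begin locating where an overall chi-square difference comes from, not attempted in this study, is to inspect each cell's Pearson residual, (O - E) / sqrt(E), where O is the observed count and E the count expected if grades were independent of year. The squared residuals add up to the chi-square statistic, so the year-and-grade cells with the largest absolute residuals contribute most to it. The Python sketch below assumes a years-by-grades table of counts; it is a rough descriptive guide rather than a formal post-hoc test, and the function names and example table are hypothetical.

```python
import math


def pearson_residuals(table):
    """Per-cell Pearson residuals (O - E) / sqrt(E) for an r x c table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    residuals = []
    for i, row in enumerate(table):
        out = []
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n  # count if year and grade were independent
            out.append((observed - expected) / math.sqrt(expected))
        residuals.append(out)
    return residuals


def top_cells(residuals, row_labels, col_labels, k=3):
    """Return the k (row, column, residual) cells with the largest |residual|."""
    cells = [(row_labels[i], col_labels[j], r)
             for i, row in enumerate(residuals) for j, r in enumerate(row)]
    return sorted(cells, key=lambda cell: abs(cell[2]), reverse=True)[:k]


# Hypothetical 2-year x 3-grade table, for illustration only.
example = [[10, 20, 30], [20, 20, 20]]
res = pearson_residuals(example)
chi2_from_residuals = sum(r * r for row in res for r in row)
```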

Conclusion

The debate over grade inflation in institutions of higher education is a fascinating one.

The answer depends on who is asked: faculty, staff, or students. Even within each of those groups, answers to the question of whether grade inflation exists differ. Members of any group within higher education should keep abreast of debates of this magnitude. While many believe that grade inflation is on the decrease, it is helpful to examine course grade distributions periodically. This study shows a statistically significant difference in grade distributions over nine years in each of the three freshman service learning courses examined. We must remember, however, that statistically significant does not necessarily mean practically different. Because only the grades and the years 1994 to 2002 were tested, there is no way to tell what causes these statistically significant results. The differences raise the question of whether this is grade inflation or simply a natural increase in grades over a period of years. Including additional variables, such as the number of teachers, class load, and the position of the faculty member teaching, might tell a different story about whether grade inflation exists at Texas Tech University.
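One way to gauge practical rather than statistical significance, not reported in this study, is an effect size such as Cramér's V, sqrt(chi2 / (N(min(r, c) - 1))), computed here from the chi-square values and case counts already reported in tables 3-5 for the 9-year by 5-grade tables. The Python sketch below prints V of about 0.075 for History 2300, 0.085 for Political Science 1301, and 0.102 for English 1301. Under Cohen's commonly cited conventions, which for a table of this shape correspond to roughly 0.05 for a small and 0.15 for a medium association, these values would suggest modest associations, although such benchmarks are rules of thumb rather than a definitive test of practical importance.

```python
import math


def cramers_v(chi2, n, n_rows, n_cols):
    """Cramer's V = sqrt(chi2 / (n * (min(rows, cols) - 1)))."""
    return math.sqrt(chi2 / (n * (min(n_rows, n_cols) - 1)))


# Pearson chi-square and N of valid cases as reported in tables 3-5.
REPORTED = {
    "HIST 2300": (351.933, 15765),
    "POLS 1301": (454.083, 15653),
    "ENGL 1301": (668.732, 15970),
}

for course, (chi2, n) in REPORTED.items():
    print(f"{course}: V = {cramers_v(chi2, n, 9, 5):.3f}")
```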


References

French, Donald. “Grade Inflation: Is Ranking Students the Answer?” Journal of College Science Teaching 34.6 (2005): 66-67.

Ganus, Dale. Personal interview. 4 May 2007.

Hu, Shouping. Beyond Grade Inflation: Grading Problems in Higher Education. San Francisco: Wiley Periodicals, Inc., 2005.

Kohn, Alfie. “The Dangerous Myth of Grade Inflation.” Chronicle of Higher Education 49.11 (2002): B7-B10.

Milton, Ohmer. Making Sense of College Grades. San Francisco: Jossey-Bass Publishers, 1986.

Rosovsky, Henry, and Matthew Hartley. Evaluation and the Academy: Are We Doing the Right Thing? Cambridge, Massachusetts: American Academy of Arts and Sciences, 2002.

Rothstein, Richard. “Lessons; Doubling of A’s at Harvard: Grade Inflation or Brains?” The New York Times 5 Dec. 2001, late ed.: 8.

Seligman, Dan. “The Grade Inflation Swindle.” Forbes 169.6 (2002): 94.

Wilson, Bradford P. “The Phenomenon of Grade Inflation in Higher Education.” National Forum: Phi Kappa Phi Journal 79.4 (Fall 1999): 38-41.


Attachment A

Official written request to the Office of the Registrar asking for grade distribution statistics for Political Science 1301, English 1301, and History 2300 for all fall sections from 1994 – 2002.

To: South University Office of the Registrar

From: Roberta Latham

I request a report in spreadsheet format that includes the following information:

Grade count for all fall sections of History 2300 from 1994-2003.

Grade count for all fall sections of English 1301 from 1994-2003.

Grade count for all fall sections of Political Science 1301 from 1994-2003.

The grade count should include the total number of A, B, C, D, and F grades, separated by year.

Please do not include any identifiable information, such as professor or student names.

If you have any questions pertaining to this request, please do not hesitate to contact Angela Eaton at angela.eaton@ttu.edu or Bobbie Latham at bobbie.latham@ttu.edu. We hope to hear from you soon.

Thank you.