Diagnosing performance changes by comparing request flows

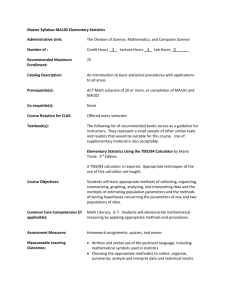

Diagnosing performance changes by comparing request flows
Raja Sambasivan
Alice Zheng★, Michael De Rosa✚, Elie Krevat, Spencer Whitman, Michael Stroucken, William Wang, Lianghong Xu, Greg Ganger
Carnegie Mellon, Microsoft Research★, Google✚
http://www.pdl.cmu.edu/ Raja Sambasivan © March 11

Perf. diagnosis in distributed systems
• Very difficult and time consuming
  • Root cause could be in any component
• Request-flow comparison
  • Helps localize performance changes
  • Key insight: Changes manifest as mutations in request timing/structure

Perf. debugging a feature addition
[Figure: system diagram with clients, NFS server, metadata server, and storage nodes]
• Before addition:
  • Every file access needs a MDS access
  [Figure: a request takes steps (1) through (6) among the client, metadata server, NFS server, and storage nodes]
• After: Metadata prefetched to clients
  • Most requests don't need MDS access
  [Figure: a request takes steps (1) through (3); components shown are the client, metadata server, NFS server, and storage nodes]
• Adding metadata prefetching reduced performance instead of improving it (!)
• How to efficiently diagnose this?

Request-flow comparison will show
[Figure: two request-flow graphs side by side. Precursor: NFS Lookup Call, MDS DB Lock, MDS DB Unlock, NFS Lookup Reply, with edge latencies 10μs, 20μs, 50μs. Mutation: NFS Lookup Call, MDS DB Lock, MDS DB Unlock, NFS Lookup Reply, with edge latencies 10μs, 350μs, 50μs.]
Root cause localized by showing how mutation and precursor differ
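
As a rough illustration of what such a comparison surfaces, the sketch below diffs the two graphs from the slide. It assumes each request flow is a simple chain of trace points and that the latencies attach to the edges in the order the slide lists them (10μs, 20μs, 50μs for the precursor; 10μs, 350μs, 50μs for the mutation). The `chain` and `diff_flows` helpers and the edge-map representation are hypothetical, not Spectroscope's actual data structures. Because the extracted slide text lists the same trace points for both graphs, this example only turns up a latency difference; a structural difference would show up in the "only in" lists.

```python
from typing import Dict, List, Tuple

Edge = Tuple[str, str]     # (source trace point, destination trace point)
Flow = Dict[Edge, float]   # edge -> latency in microseconds


def chain(points: List[str], latencies_us: List[float]) -> Flow:
    """Build a linear request flow from trace points and per-edge latencies."""
    if len(latencies_us) != len(points) - 1:
        raise ValueError("need exactly one latency per consecutive pair")
    return {(a, b): lat for a, b, lat in zip(points, points[1:], latencies_us)}


def diff_flows(precursor: Flow, mutation: Flow):
    """Return (edges only in precursor, edges only in mutation, latency deltas).

    Latency deltas cover edges present in both flows, sorted so the edge
    that slowed down the most comes first.
    """
    only_pre = sorted(set(precursor) - set(mutation))
    only_mut = sorted(set(mutation) - set(precursor))
    shared = set(precursor) & set(mutation)
    deltas = sorted(
        ((edge, mutation[edge] - precursor[edge]) for edge in shared),
        key=lambda item: item[1],
        reverse=True,
    )
    return only_pre, only_mut, deltas


POINTS = ["NFS Lookup Call", "MDS DB Lock", "MDS DB Unlock", "NFS Lookup Reply"]
precursor = chain(POINTS, [10, 20, 50])   # assumed edge order, see lead-in
mutation = chain(POINTS, [10, 350, 50])   # assumed edge order, see lead-in

only_pre, only_mut, deltas = diff_flows(precursor, mutation)
worst_edge, worst_delta = deltas[0]
print(f"largest slowdown: {worst_edge[0]} -> {worst_edge[1]} (+{worst_delta}us)")
```

Under these assumptions the interaction between the MDS DB Lock and MDS DB Unlock trace points accounts for the whole difference, which is the kind of localization the slide describes.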

Request-flow comparison
• Identifies distribution changes
  • Distinct from anomaly detection
    – E.g., Magpie, Pinpoint, etc.
• Satisfies many use cases
  • Performance regressions/degradations
  • Eliminating the system as the culprit

Contributions
• Heuristics for identifying mutations, precursors, and for ranking them
• Implementation in Spectroscope
• Use of Spectroscope to diagnose
  • Unsolved problems in Ursa Minor
  • Problems in Google services

Spectroscope workflow
[Figure: workflow stages: non-problem period graphs and problem period graphs; categorization; response-time mutation identification and structural mutation identification; ranking; UI layer]

Graphs via end-to-end tracing
• Used in research & production systems
  • E.g., Magpie, X-Trace, Google's Dapper
• Works as follows:
  • Tracks trace points touched by requests
  • Request-flow graphs obtained by stitching together trace points accessed
• Yields < 1% overhead w/req. sampling

Example: Graph for a striped read
[Figure: request-flow graph for a striped read with response time 8,120μs. Trace points: NFS Read Call, Cache Miss, SN1 Read Call, SN2 Read Call, Work on SN1, Work on SN2, SN1 Reply, SN2 Reply, NFS Reply. Edge latencies shown: 100μs; 10μs and 10μs; 1,000μs and 1,000μs; 3,500μs and 5,500μs; 1,500μs and 1,500μs; 10μs and 10μs.]
Nodes show trace points & edges show latencies

Categorization step
• Necessary since it is meaningless to compare individual request flows
• Groups together similar request flows
• Categories: basic unit for comparisons
  • Allows for mutation identification by comparing per-category distributions

Choosing what to bin into a category
• Our choice: identically structured reqs
  • Uses same path/similar cost expectation
• Same path/similar costs notion is valid
  • For 88–99% of Ursa Minor categories
  • For 47–69% of Bigtable categories
    – Lower value due to sparser trace points
    – Lower value also due to contention
Aside: Categorization can be used to localize problematic sources of variance

Type 1: Response-time mutations
• Requests that:
  • are structurally identical in both periods
  • have larger problem period latencies
• Root cause localized by…
  • identifying interactions responsible

Categories w/response-time mutations
• Identified via use of a hypothesis test
  • Sets apart natural variance from mutations
  • Also used to find interactions responsible
[Figure: two histograms of frequency vs. response time, one for Category 1 and one for Category 2]
[Figure annotations: Category 1 is ID'd as containing mutations; Category 2 is not ID'd as containing mutations]

Response-time mutation example
• Avg. non-problem response time: 110μs
• Avg. problem response time: 1,090μs
[Figure: request-flow graph with trace points NFS Read Call, SN1 Read Start, SN1 Read End, NFS Read Reply. Edge latencies: avg. 10μs; avg. 20μs changing to avg. 1,000μs; avg. 80μs.]
Problem localized by ID'ing responsible interaction

Type 2: Structural mutations
• Requests that:
  • take different paths in the problem period
• Root cause localized by…
  • identifying their precursors
    – Likely path during the non-problem period
  • id'ing how mutation & precursor differ

ID'ing categories w/structural mutations
• Assume similar workloads executed
  • Categories with more problem period requests contain mutations
  • Reverse true for precursor categories
• Threshold used to differentiate natural variance from categories w/mutations

Mapping mutations to precursors
[Figure: a structural mutation and its candidate precursors, labeled Read, Write, and Read, with a "?" marking the mapping to be determined. Per-category counts shown: NP: 1,000 / P: 150; NP: 480 / P: 460; NP: 300 / P: 800.]
• Accomplished using three heuristics
  • See paper for details

Example structural mutation
[Figure: two request-flow graphs side by side. Precursor: NFS Lookup Call, MDS DB Lock, MDS DB Unlock, NFS Lookup Reply, with edge latencies 10μs, 20μs, 50μs. Mutation: NFS Lookup Call, MDS DB Lock, MDS DB Unlock, NFS Lookup Reply, with edge latencies 10μs, 350μs, 50μs.]
Root cause localized by showing how mutation and precursor differ

Ranking of categories w/mutations
• Necessary for two reasons
  • There may be more than one problem
  • One problem may yield many mutations
• Rank based on:
  • # of reqs affected * Δ in response time

Putting it all together: The UI
[Figure: ranked list (1. Structural, 2. Response time, through 20. Structural) next to the precursor and mutation graphs of a structural entry: NFS Lookup Call, MDS DB Lock, MDS DB Unlock, NFS Lookup Reply, with edge latencies 10μs, 20μs, 50μs for the precursor and 10μs, 350μs, 50μs for the mutation]
[Figure: the same ranked list next to the graph of a response-time entry: NFS Read Call, SN1 Read Start, SN1 Read End, NFS Read Reply, with edge latencies avg. 10μs, avg. 20μs, avg. 100μs, avg. 80μs]

Outline
• Introduction
• End-to-end tracing & Spectroscope
• Ursa Minor & Google case studies
• Summary

Ursa Minor case studies
• Used Spectroscope to diagnose real performance problems in Ursa Minor
  • Four were previously unsolved
• Evaluated ranked list by measuring
  • % of top 10 results that were relevant
  • % of total results that were relevant

Quantitative results
[Figure: bar chart of "% top 10 relevant" and "% total relevant" (y-axis 0% to 100%) for each case study: Prefetch, Config., RMWs, Creates, 500us delay, Spikes. One entry is marked N/A.]

Ursa Minor 5-component config
• All case studies use this configuration
[Figure: a request takes steps (1) through (6) among the client, metadata server, NFS server, and storage nodes]

Case 1: MDS configuration problem
• Problem: Slowdown in key benchmarks seen after large code merge

Comparing request flows
• Identified 128 mutation categories:
  • Most contained structural mutations
  • Mutations and precursors differed only in the storage node accessed
• Localized bug to unexpected interaction between MDS & the data storage node
  • But, unclear why this was happening

Further localization
• Thought mutations may be caused by changes in low-level parameters
  • E.g., function call parameters, etc.
• Identified parameters that separated 1st-ranked mutation from its precursors
  • Showed changes in encoding params
  • Localized root cause to two config files
Root cause: Change to an obscure config file

Inter-cluster perf. at Google
• Load tests run on same software in two datacenters yielded very different perf.
  • Developers said perf. should be similar

Comparing request flows
• Revealed many mutations
  • High-ranked ones were response-time
• Responsible interactions found both within the service and in dependencies
  • Led us to suspect root cause was issue with the slower load test's datacenter
Root cause: Shared Bigtable in slower load test's datacenter was not working properly

Summary
• Introduced request-flow comparison as a new way to diagnose perf. changes
• Presented algorithms for localizing problems by identifying mutations
• Showed utility of our approach by using it to diagnose real, unsolved problems

Related work
[Abd-El-Malek05b]: Ursa Minor: versatile cluster-based storage. Michael Abd-El-Malek, William V. Courtright II, Chuck Cranor, Gregory R. Ganger, James Hendricks, Andrew J. Klosterman, Michael Mesnier, Manish Prasad, Brandon Salmon, Raja R. Sambasivan, Shafeeq Sinnamohideen, John D. Strunk, Eno Thereska, Matthew Wachs, Jay J. Wylie. FAST, 2005.

[Barham04]: Using Magpie for request extraction and workload modelling. Paul Barham, Austin Donnelly, Rebecca Isaacs, Richard Mortier. OSDI, 2004.

[Chen04]: Path-based failure and evolution management. Mike Y. Chen, Anthony Accardi, Emre Kiciman, Jim Lloyd, Dave Patterson, Armando Fox, Eric Brewer. NSDI, 2004.

[Cohen05]: Capturing, indexing, and retrieving system history. Ira Cohen, Steve Zhang, Moises Goldszmidt, Terrence Kelly, Armando Fox. SOSP, 2005.

[Fonseca07]: X-Trace: A Pervasive Network Tracing Framework. Rodrigo Fonseca, George Porter, Randy H. Katz, Scott Shenker, Ion Stoica. NSDI, 2007.

[Hendricks06]: Improving small file performance in object-based storage. James Hendricks, Raja R. Sambasivan, Shafeeq Sinnamohideen, Gregory R. Ganger. Technical Report CMU-PDL-06-104, 2006.

[Reynolds06]: Detecting the unexpected in distributed systems. Patrick Reynolds, Charles Killian, Janet L. Wiener, Jeffrey C. Mogul, Mehul A. Shah, Amin Vahdat. NSDI, 2006.

[Sambasivan07]: Categorizing and differencing system behaviours. Raja R. Sambasivan, Alice X. Zheng, Eno Thereska. HotAC II, 2007.

[Sambasivan10]: Diagnosing performance problems by visualizing and comparing system behaviours. Raja R. Sambasivan, Alice X. Zheng, Elie Krevat, Spencer Whitman, Michael Stroucken, William Wang, Lianghong Xu, Gregory R. Ganger. Technical Report CMU-PDL-10-103.

[Sambasivan11]: Automation without predictability is a recipe for failure. Raja R. Sambasivan and Gregory R. Ganger. Technical Report CMU-PDL-11-101, 2011.

[Sigelman10]: Dapper, a large-scale distributed tracing infrastructure. Benjamin H. Sigelman, Luiz André Barroso, Mike Burrows, Pat Stephenson, Manoj Plakal, Donald Beaver, Saul Jaspan, Chandan Shanbhag. Technical report 2010-1, 2010.

[Thereska06b]: Stardust: tracking activity in a distributed storage system. Eno Thereska, Brandon Salmon, John Strunk, Matthew Wachs, Michael Abd-El-Malek, Julio Lopez, Gregory R. Ganger. SIGMETRICS, 2006.
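
Backup sketch: ranking
The ranking rule from the "Ranking of categories w/mutations" slide (# of reqs affected * Δ in response time) can be sketched as follows. This is a minimal illustration rather than Spectroscope's implementation: the `MutationCategory` record, its field names, and all of the numbers are hypothetical, and only the scoring formula comes from the slides.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MutationCategory:
    name: str                 # hypothetical label for the category
    kind: str                 # "structural" or "response-time"
    requests_affected: int    # number of requests affected
    delta_response_us: float  # change in response time, in microseconds

    @property
    def score(self) -> float:
        # Ranking rule from the slides:
        # number of requests affected * change in response time
        return self.requests_affected * self.delta_response_us


def rank(categories: List[MutationCategory]) -> List[MutationCategory]:
    """Order categories so the largest estimated impact comes first."""
    return sorted(categories, key=lambda c: c.score, reverse=True)


# Made-up example input; not data from the talk.
categories = [
    MutationCategory("lookup via MDS", "structural", 500, 340.0),
    MutationCategory("striped read", "response-time", 2000, 980.0),
    MutationCategory("small write", "response-time", 50, 100.0),
]

ranked = rank(categories)
for position, cat in enumerate(ranked, start=1):
    print(f"{position}. {cat.kind}: {cat.name} (score {cat.score:.0f})")
```

Multiplying the two factors means that many mildly slower requests can outrank a handful of very slow ones, which fits the slide's stated reasons for ranking: several problems may coexist, and one problem may yield many mutations.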