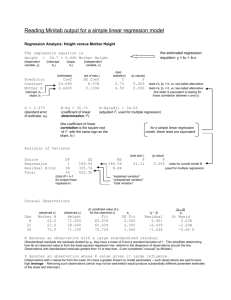

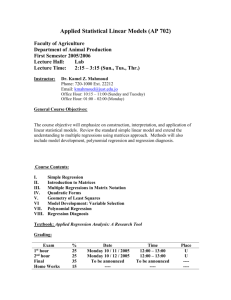

Bivariate Regression
