D2.R1 - DBGroup

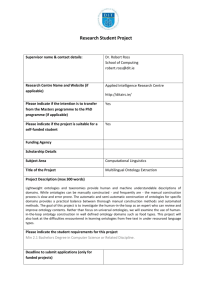
