
advertisement

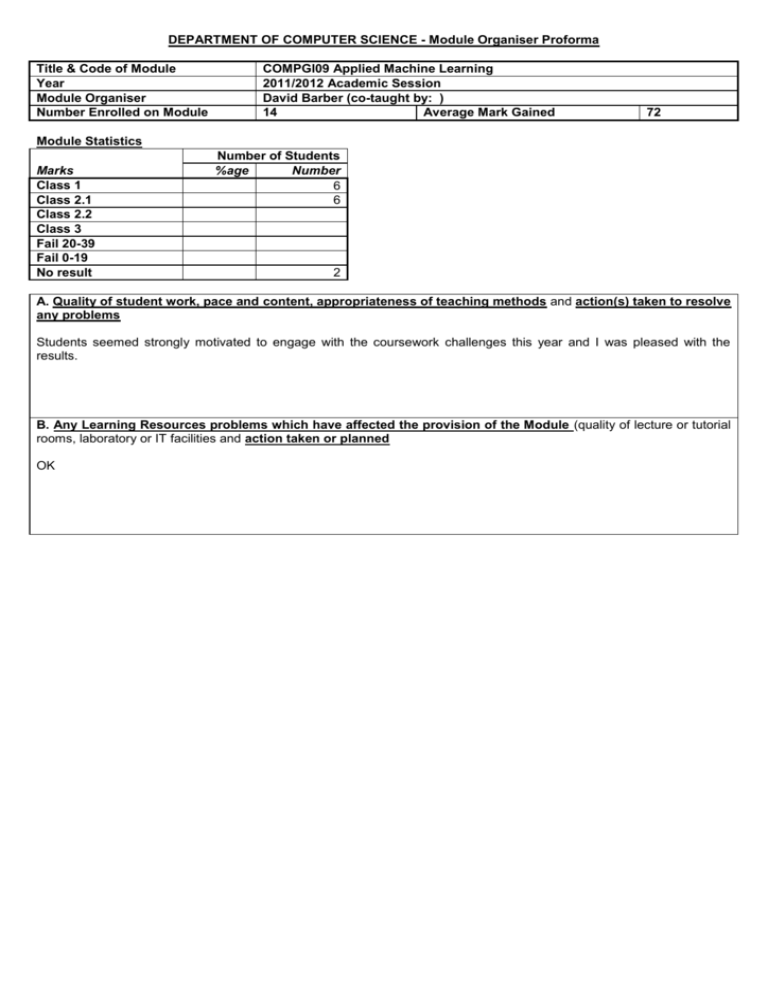

DEPARTMENT OF COMPUTER SCIENCE - Module Organiser Proforma

Title & Code of Module: COMPGI09 Applied Machine Learning
Year: 2011/2012 Academic Session
Module Organiser: David Barber (co-taught by: )
Number Enrolled on Module: 14
Average Mark Gained: 72

Module Statistics
Marks: Class 1, Class 2.1, Class 2.2, Class 3, Fail 20-39, Fail 0-19, No result
Number of Students: 6, 6, 2
%age Number:

A. Quality of student work, pace and content, appropriateness of teaching methods and action(s) taken to resolve any problems

Students seemed strongly motivated to engage with the coursework challenges this year and I was pleased with the results.

B. Any Learning Resources problems which have affected the provision of the Module (quality of lecture or tutorial rooms, laboratory or IT facilities) and action taken or planned

OK

C. Issues identified by students (from questionnaires, staff-student committees etc.) and action taken or planned

1) Excellent course with excellent lecturer. Very helpful that many of the mathematical material is proved and produced on the Board.

Response: OK

2) The theoretical material covered in this module is rather difficult for people without strong mathematical backgrounds. I think many students had expected the applied aspect of this module to be the main focus instead of the theoretical aspect.

Response: I wouldn't say that the material is 'theoretical'; the course aims to discuss methods that are applicable to real world problems, and students are encouraged to have a good understanding of the technical underpinnings of these methods. 50% of the module marks are from the coursework, which is completely about solving a real world problem. I'm surprised to hear that the mathematics was considered difficult, since this is not the focus of the course.

3) Good course! some suggestions while I procrastinate from revision:

Some things about the kaggle dataset which might help in picking a better challenge next year:
- Complex / messy / ambiguously-documented structure, with the anonymisation procedures run on it complicating things further
- Just a bit too big to comfortably work with on a laptop without spending a lot of time waiting for things to finish, on code optimisations, approximations, or limiting yourself to a subset of the data

Of course that's what real world problems are like, but given the relatively limited time and compute power available, these things together ended up rewarding quite a conservative approach -- that is, just piggybacking on the existing work of the intermediate challenge winners. Still learn something from that though, so not the end of the world. And like the idea of using one of the competitions as coursework.

Response: I agree that the coursework problem was rather complex and difficult. Nevertheless this did stimulate a lot of interesting debate. I'll take a look this year though to see if there might be a 'cleaner' problem.

4) Lectures were good, certain amount of overlap with some of the other courses, but the reinforcement of it, and the slightly different perspectives and extra practical tips/tricks were helpful.

Response: OK

5) One topic I think might be worth covering on an applied ML course would be some of the practical issues involved in scaling up ML algorithms to run on clusters etc. (Some access to a compute cluster for the assignment would be a great resource for this kind of course too.) For the external talk, maybe worth inviting someone from the open source community who works on ML-related tools or infrastructure (eg Hadoop or Mahout?) in?

Response: I agree. I'll try to get someone from industry to talk about this this year.

6) The slides miss valuable information that stops students understand the thing from course time.

Response: This comment is too vague to be useful. As students know, the slides are simply an accompaniment to the textbook, which contains full derivations and examples of all the material on the module.

D. Issues identified by External Examiners and action taken or planned

None

E. Chair of Teaching Committee's Comments and Action Taken or Planned (Where applicable. Where no comment is required, please see the overall departmental report)