Lab: Decision Trees


DECISION TREE ALGORITHMS

A particularly efficient method for producing classifiers from data is to generate a decision tree. The decision-tree

representation is the most widely used logic method. There is a large number of decision-tree induction algorithms

described primarily in the machine-learning and applied-statistics literature. They are supervised learning

methods that construct decision trees from a set of input-output samples. A typical decision-tree learning system

adopts a top-down strategy that searches for a solution in a part of the search space.

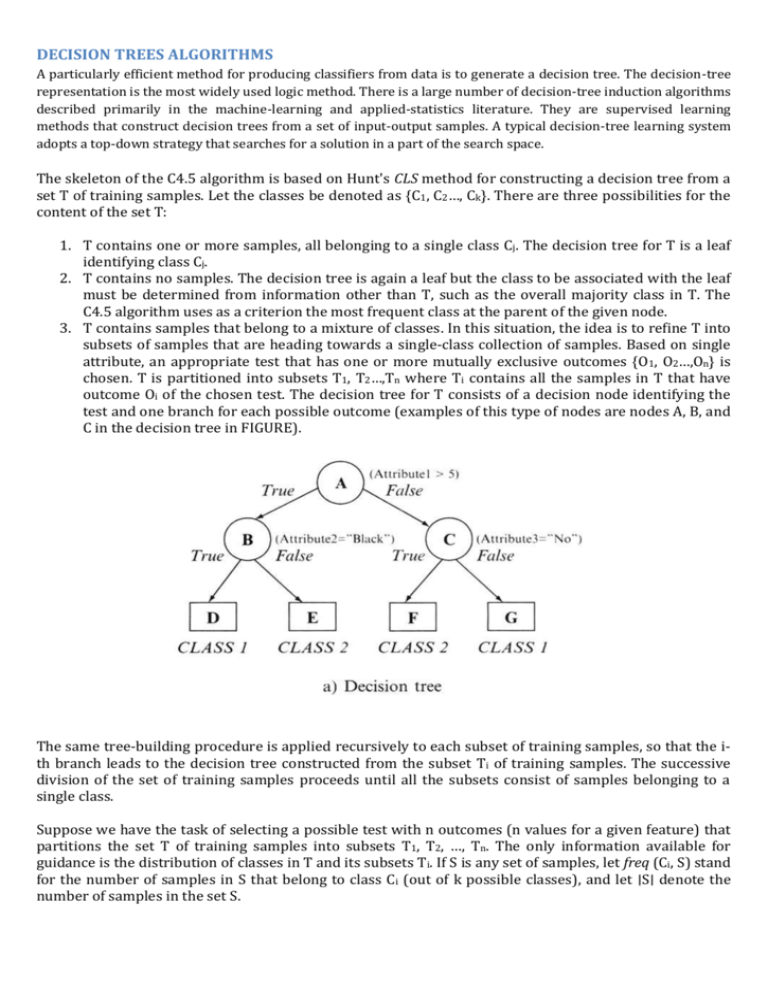

The skeleton of the C4.5 algorithm is based on Hunt's CLS method for constructing a decision tree from a

set T of training samples. Let the classes be denoted as {C1, C2, …, Ck}. There are three possibilities for the

content of the set T:

1. T contains one or more samples, all belonging to a single class Cj. The decision tree for T is a leaf

identifying class Cj.

2. T contains no samples. The decision tree is again a leaf but the class to be associated with the leaf

must be determined from information other than T, such as the overall majority class in the training data. The

C4.5 algorithm uses as a criterion the most frequent class at the parent of the given node.

3. T contains samples that belong to a mixture of classes. In this situation, the idea is to refine T into

subsets of samples that are heading towards a single-class collection of samples. Based on a single

attribute, an appropriate test that has one or more mutually exclusive outcomes {O1, O2, …, On} is

chosen. T is partitioned into subsets T1, T2, …, Tn, where Ti contains all the samples in T that have

outcome Oi of the chosen test. The decision tree for T consists of a decision node identifying the

test and one branch for each possible outcome (examples of this type of node are nodes A, B, and

C in the decision tree in the figure).

The same tree-building procedure is applied recursively to each subset of training samples, so that the ith branch leads to the decision tree constructed from the subset Ti of training samples. The successive

division of the set of training samples proceeds until all the subsets consist of samples belonging to a

single class.
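
The three cases and the recursive step can be sketched in Python as follows. This is a minimal illustration, not C4.5 itself: the names `build_tree` and `choose_attribute`, the `(features, label)` sample layout, and the nested-dictionary tree are assumptions made for this sketch. The rule for picking the test is passed in as a function, and the fallback used when no attributes remain is an addition that the text does not cover.

```python
from collections import Counter


def build_tree(samples, attributes, choose_attribute, parent_majority=None):
    """Recursively build a tree from (features_dict, class_label) pairs.

    Leaves are class labels; decision nodes are dictionaries.
    """
    # Case 2: T is empty, so the leaf takes the most frequent class of the parent.
    if not samples:
        return parent_majority

    labels = [label for _, label in samples]
    majority = Counter(labels).most_common(1)[0][0]

    # Case 1: every sample belongs to the same class, so T becomes a leaf.
    if len(set(labels)) == 1:
        return labels[0]

    # Assumption beyond the text: with no attribute left to test,
    # fall back to the majority class of T.
    if not attributes:
        return majority

    # Case 3: mixed classes. Choose a test, partition T, and recurse.
    attribute = choose_attribute(samples, attributes)
    subsets = {}
    for features, label in samples:
        subsets.setdefault(features[attribute], []).append((features, label))

    remaining = [a for a in attributes if a != attribute]
    return {
        "test": attribute,
        "branches": {
            outcome: build_tree(subset, remaining, choose_attribute, majority)
            for outcome, subset in subsets.items()
        },
    }


if __name__ == "__main__":
    # Hypothetical data and a trivial selection rule, for illustration only.
    data = [({"x": "a"}, "C1"), ({"x": "b"}, "C2"), ({"x": "b"}, "C2")]
    print(build_tree(data, ["x"], lambda s, attrs: attrs[0]))
```

Because this sketch partitions only on values that actually occur in T, the empty-set case arises only when the function is called directly with no samples; an implementation that branches on every value in an attribute's domain would reach it naturally.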

Suppose we have the task of selecting a possible test with n outcomes (n values for a given feature) that

partitions the set T of training samples into subsets T1, T2, …, Tn. The only information available for

guidance is the distribution of classes in T and its subsets Ti. If S is any set of samples, let freq (Ci, S) stand

for the number of samples in S that belong to class Ci (out of k possible classes), and let ∣S∣ denote the

number of samples in the set S.

The original ID3 algorithm used a criterion called gain to select the attribute to be tested, which is based

on the information-theoretic concept of entropy. The following relation gives the entropy

of the set S (measured in bits):

$$\text{Info}(S) = -\sum_{i=1}^{k} \frac{\text{freq}(C_i, S)}{|S|} \log_2 \frac{\text{freq}(C_i, S)}{|S|}$$

Now consider a similar measurement after

T has been partitioned in accordance with n outcomes of one attribute test X. The expected information

requirement can be found as the weighted sum of entropies over the subsets:

$$\text{Info}_x(T) = \sum_{i=1}^{n} \frac{|T_i|}{|T|} \, \text{Info}(T_i)$$

The quantity

$$\text{Gain}(X) = \text{Info}(T) - \text{Info}_x(T)$$

measures the information that is gained by partitioning T in accordance with the test X. The gain

criterion selects a test X to maximize Gain (X), i.e., this criterion will select an attribute with the highest

info-gain.
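
The entropy, the weighted entropy after a split, and the gain can be computed directly from class counts. The following is a minimal sketch; the function names `info`, `info_x`, and `gain`, and the representation of a split as one list of class counts per subset, are assumptions made for illustration.

```python
from math import log2


def info(class_counts):
    """Entropy in bits of a set described by its per-class sample counts."""
    total = sum(class_counts)
    # Classes with zero samples contribute nothing (0 * log 0 is taken as 0).
    return -sum((c / total) * log2(c / total) for c in class_counts if c > 0)


def info_x(subset_counts):
    """Weighted sum of subset entropies; one list of class counts per subset Ti."""
    total = sum(sum(counts) for counts in subset_counts)
    return sum(sum(counts) / total * info(counts) for counts in subset_counts)


def gain(subset_counts):
    """Information gained by the split: entropy of T minus the weighted sum."""
    # The class counts of T itself are the column sums over all subsets.
    totals = [sum(column) for column in zip(*subset_counts)]
    return info(totals) - info_x(subset_counts)


if __name__ == "__main__":
    print(info([1, 1]))             # two equally likely classes: 1.0 bit
    print(gain([[2, 0], [0, 2]]))   # a split into two pure subsets
    print(gain([[1, 1], [1, 1]]))   # a split that separates nothing
```

A split that leaves every subset with the same class mixture as T has zero gain, while a split into pure subsets gains the full entropy of T.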

Let us analyze the application of these measures and the creation of a decision tree for one simple

example. Suppose that the database T is given in a flat form in which each of fourteen examples

(cases) is described by three input attributes and belongs to one of two given classes: CLASS1 or CLASS2.

Nine samples belong to CLASS1 and five samples to CLASS2, so the entropy before splitting is

$$\text{Info}(T) = -\frac{9}{14}\log_2\frac{9}{14} - \frac{5}{14}\log_2\frac{5}{14} = 0.940 \text{ bits}$$

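After using Attribute1 to divide the initial set of samples T into three subsets (test x1 represents the

selection of one of three values A, B, or C), the resulting information is given by:

$$\text{Info}_{x_1}(T) = \frac{5}{14}\left(-\frac{2}{5}\log_2\frac{2}{5} - \frac{3}{5}\log_2\frac{3}{5}\right) + \frac{4}{14}\left(-\frac{4}{4}\log_2\frac{4}{4}\right) + \frac{5}{14}\left(-\frac{3}{5}\log_2\frac{3}{5} - \frac{2}{5}\log_2\frac{2}{5}\right) = 0.694 \text{ bits}$$

The information gained by this test x1 is

$$\text{Gain}(x_1) = 0.940 - 0.694 = 0.246 \text{ bits}$$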

If the test and splitting are based on Attribute3 (test x2 represents the selection of one of two values, True or

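False), a similar computation will give new results:

$$\text{Info}_{x_2}(T) = \frac{6}{14}\left(-\frac{3}{6}\log_2\frac{3}{6} - \frac{3}{6}\log_2\frac{3}{6}\right) + \frac{8}{14}\left(-\frac{6}{8}\log_2\frac{6}{8} - \frac{2}{8}\log_2\frac{2}{8}\right) = 0.892 \text{ bits}$$

and the corresponding gain is

$$\text{Gain}(x_2) = 0.940 - 0.892 = 0.048 \text{ bits}$$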


Based on the gain criterion, the decision-tree algorithm will select test x1 as an initial test for splitting the

database T because this gain is higher. To find the optimal test it will be necessary to analyze a test on

Attribute2, which is a numeric feature with continuous values. In general, C4.5 contains mechanisms for

proposing three types of tests:

1. The "standard" test on a discrete attribute, with one outcome and one branch for each possible

value of that attribute (in our example these are the tests x1 for Attribute1 and x2 for Attribute3).

2. If attribute Y has continuous numeric values, a binary test with outcomes Y≤Z and Y>Z could be

defined, by comparing its value against a threshold value Z.

3. A more complex test also based on a discrete attribute, in which the possible values are allocated

to a variable number of groups with one outcome and branch for each group.
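
The three kinds of test differ only in how a raw attribute value is mapped to an outcome; the partitioning of the samples is the same in each case. The sketch below illustrates this under assumed names (`discrete_outcome`, `threshold_outcome`, `grouped_outcome`, `partition`) and an assumed `(features, label)` sample layout; it is not taken from the C4.5 source.

```python
def discrete_outcome(value):
    """Type 1: one outcome per attribute value."""
    return value


def threshold_outcome(value, z):
    """Type 2: binary test on a numeric attribute against threshold z."""
    return "<=Z" if value <= z else ">Z"


def grouped_outcome(value, groups):
    """Type 3: one outcome per group of values; groups maps name -> set of values."""
    for name, members in groups.items():
        if value in members:
            return name
    raise ValueError(f"value {value!r} is not in any group")


def partition(samples, attribute, outcome_of):
    """Split (features, label) pairs into subsets keyed by test outcome."""
    subsets = {}
    for features, label in samples:
        outcome = outcome_of(features[attribute])
        subsets.setdefault(outcome, []).append((features, label))
    return subsets


if __name__ == "__main__":
    # Hypothetical samples, for illustration only.
    samples = [({"Y": 5, "D": "A"}, "C1"), ({"Y": 9, "D": "C"}, "C2")]
    print(partition(samples, "D", discrete_outcome))
    print(partition(samples, "Y", lambda v: threshold_outcome(v, 7)))
    print(partition(samples, "D", lambda v: grouped_outcome(v, {"g1": {"A", "B"}, "g2": {"C"}})))
```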

While we have already explained the standard test for categorical attributes, additional explanations are

necessary about a procedure for establishing tests on attributes with numeric values. It might seem that

tests on continuous attributes would be difficult to formulate, since they contain an arbitrary threshold

for splitting all values into two intervals.

But there is an algorithm for the computation of optimal threshold value Z.

The training samples are first sorted on the values of the attribute Y being considered. There are only a

finite number of these values, so let us denote them in sorted order as {v1, v2 …, vm}. Any threshold value

lying between vi and vi+1 will have the same effect as dividing the cases into those whose value of the

attribute Y lies in {v1, v2 …, vi} and those whose value is in {vi+1, vi+2, …, vm}. There are thus only m − 1

possible splits on Y, all of which should be examined systematically to obtain an optimal split. It is usual

to choose the midpoint of each interval, (vi + vi+1)/2, as the representative threshold. The algorithm C4.5

differs in choosing as the threshold the smaller value vi of every interval {vi, vi+1}, rather than the midpoint

itself. This ensures that the threshold values appearing in either the final decision tree or rules or both

actually occur in the database.
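
The threshold search described above can be sketched as follows. The function names `candidate_thresholds` and `best_threshold` are assumptions for this illustration, and the lower value of each interval is used as the candidate, following the C4.5 convention described in the text. Ties between equally good thresholds are resolved in favor of the smallest one, which is a choice made here and not something the text specifies.

```python
from collections import Counter
from math import log2


def info(labels):
    """Entropy in bits of a list of class labels."""
    total = len(labels)
    return -sum((c / total) * log2(c / total) for c in Counter(labels).values())


def candidate_thresholds(values):
    """The m - 1 candidate thresholds: every distinct value except the largest."""
    return sorted(set(values))[:-1]


def best_threshold(values, labels):
    """Return (Z, gain) for the binary test value <= Z with the highest gain.

    Returns None when the attribute has fewer than two distinct values.
    """
    base = info(labels)
    total = len(labels)
    best = None
    for z in candidate_thresholds(values):
        left = [label for v, label in zip(values, labels) if v <= z]
        right = [label for v, label in zip(values, labels) if v > z]
        split_info = len(left) / total * info(left) + len(right) / total * info(right)
        gain = base - split_info
        if best is None or gain > best[1]:
            best = (z, gain)
    return best


if __name__ == "__main__":
    # Hypothetical values and labels, for illustration only.
    print(candidate_thresholds([3, 1, 2, 2]))
    print(best_threshold([1, 2, 3, 4], ["a", "a", "b", "b"]))
```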

To illustrate this threshold-finding process, we could analyze, for our example of database T, the

possibilities of Attribute2 splitting. After a sorting process, the set of values for Attribute2 is {65, 70, 75,

78, 80, 85, 90, 95, 96} and the set of potential threshold values Z is {65, 70, 75, 78, 80, 85, 90, 95}. Out of

these eight values the optimal Z (with the highest information gain) should be selected. For our example,

the optimal Z value is Z = 80 and the corresponding process of information-gain computation for the test

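x3 (Attribute2 ≤ 80 or Attribute2 > 80) is the following:

$$\text{Info}_{x_3}(T) = \frac{9}{14}\left(-\frac{7}{9}\log_2\frac{7}{9} - \frac{2}{9}\log_2\frac{2}{9}\right) + \frac{5}{14}\left(-\frac{2}{5}\log_2\frac{2}{5} - \frac{3}{5}\log_2\frac{3}{5}\right) = 0.838 \text{ bits}$$

$$\text{Gain}(x_3) = 0.940 - 0.838 = 0.102 \text{ bits}$$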

Now, if we compare the information gain for the three attributes in our example, we can see that

Attribute1 still gives the highest gain of 0.246 bits and therefore this attribute will be selected for the

first splitting in the construction of a decision tree. The root node will have the test for the values of

Attribute1, and three branches will be created, one for each of the attribute values. This initial tree with

the corresponding subsets of samples in the children nodes is represented in Figure:

After initial splitting, every child node has several samples from the database, and the entire process of

test selection and optimization will be repeated for every child node. Because the child node for test x1:

Attribute1=B has four cases and all of them are in CLASS1, this node will be the leaf node, and no

additional tests are necessary for this branch of the tree.
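
The figures quoted for the first split can be checked numerically. The sketch below assumes per-branch class counts for Attribute1: the counts for B (four CLASS1, no CLASS2) come from the text, while the counts for A and C are an assumption chosen to be consistent with the nine/five class totals and the stated gain. The function names are also illustrative.

```python
from math import log2


def info(class_counts):
    """Entropy in bits from per-class sample counts."""
    total = sum(class_counts)
    return -sum((c / total) * log2(c / total) for c in class_counts if c > 0)


def gain(subset_counts):
    """Entropy of the whole set minus the weighted entropy of its subsets."""
    totals = [sum(column) for column in zip(*subset_counts)]
    total = sum(totals)
    weighted = sum(sum(counts) / total * info(counts) for counts in subset_counts)
    return info(totals) - weighted


# (CLASS1 count, CLASS2 count) per value of Attribute1.
# The A and C rows are assumed, not read from the source table.
attribute1_counts = {"A": (2, 3), "B": (4, 0), "C": (3, 2)}

if __name__ == "__main__":
    print(f"Info(T)  = {info([9, 5]):.4f} bits")
    print(f"Gain(x1) = {gain(list(attribute1_counts.values())):.4f} bits")
    # A child whose samples all share one class becomes a leaf.
    leaves = [v for v, counts in attribute1_counts.items() if min(counts) == 0]
    print("pure children:", leaves)
```

The unrounded gain is slightly above 0.246; the value quoted in the text results from subtracting the rounded entropies, 0.940 and 0.694.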

For the remaining child node where we have five cases in subset T1, tests on the remaining attributes can

be performed; an optimal test (with maximum information gain) will be test x4 with two alternatives:

Attribute2 ≤ 70 or Attribute2 > 70.

Using Attribute2 to divide T1 into two subsets (test x4 represents the selection of one of two intervals),

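the resulting information is given by:

$$\text{Info}_{x_4}(T_1) = \frac{2}{5}\left(-\frac{2}{2}\log_2\frac{2}{2}\right) + \frac{3}{5}\left(-\frac{3}{3}\log_2\frac{3}{3}\right) = 0 \text{ bits}$$

The information gained by this test is maximal:

$$\text{Gain}(x_4) = 0.971 - 0 = 0.971 \text{ bits}$$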

and two branches will create the final leaf nodes because the subsets of cases in each of the branches

belong to the same class.

A similar computation will be carried out for the third child of the root node. For the subset T3 of the

database T, the selected optimal test x5 is the test on Attribute3 values. Branches of the tree, Attribute3 =

True and Attribute3 = False, will create uniform subsets of cases which belong to the same class. The final

decision tree for database T is represented in Figure
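
A tree of this shape can be stored as a nested structure and used both to classify new samples and to list one decision rule per leaf. The sketch below is illustrative only. The tests at each node and the CLASS1 leaf under Attribute1 = B follow the text; the class labels on the other four leaves are assumptions, since the figure is not reproduced here. The names `TREE`, `classify`, and `rules` are hypothetical.

```python
# Decision nodes are dictionaries; leaves are class labels.
# Leaf labels other than the one under "B" are assumed for illustration.
TREE = {
    "attribute": "Attribute1",
    "branches": {
        "A": {
            "attribute": "Attribute2",
            "threshold": 70,
            "branches": {"<=": "CLASS1", ">": "CLASS2"},
        },
        "B": "CLASS1",
        "C": {
            "attribute": "Attribute3",
            "branches": {True: "CLASS2", False: "CLASS1"},
        },
    },
}


def classify(tree, sample):
    """Follow the tests from the root until a leaf (class label) is reached."""
    node = tree
    while isinstance(node, dict):
        value = sample[node["attribute"]]
        if "threshold" in node:
            key = "<=" if value <= node["threshold"] else ">"
        else:
            key = value
        node = node["branches"][key]
    return node


def rules(tree, conditions=()):
    """Yield one 'condition and condition -> class' rule per leaf."""
    if not isinstance(tree, dict):
        yield " and ".join(conditions) + " -> " + tree
        return
    for key, child in tree["branches"].items():
        if "threshold" in tree:
            condition = f"{tree['attribute']} {key} {tree['threshold']}"
        else:
            condition = f"{tree['attribute']} = {key}"
        yield from rules(child, conditions + (condition,))


if __name__ == "__main__":
    print(classify(TREE, {"Attribute1": "B", "Attribute2": 78, "Attribute3": True}))
    for rule in rules(TREE):
        print(rule)
```

Each path from the root to a leaf becomes one rule, so a tree with five leaves yields five rules.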

Exercises for students

1. Given a data set X with 3-dimensional categorical samples:

X: Attribute1 Attribute2 Class

T 1 C2

T 2 C1

F 1 C2

F 2 C2

Construct a decision tree using the computation steps given in the C4.5 algorithm.

2. Given a training data set Y:

Y: A B C Class

15 1 A C1

20 3 B C2

25 2 A C1

30 4 A C1

35 2 B C2

25 4 A C1

15 2 B C2

20 3 B C2

a. Find the best threshold (for the maximal gain) for attribute A.

b. Find the best threshold (for the maximal gain) for attribute B.

c. Find a decision tree for data set Y.

d. If the testing set is:

A B C Class

10 2 A C2

20 1 B C1

30 3 A C2

40 2 B C2

15 1 B C1

what is the percentage of correct classifications using the decision tree developed in c)?

e. Derive decision rules from the decision tree.

3. Use the C4.5 algorithm to build a decision tree for classifying the following objects: