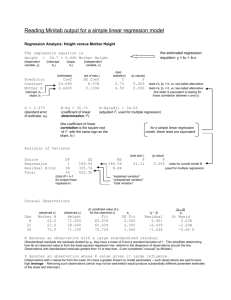

Addition rule:
