Distributed File Systems


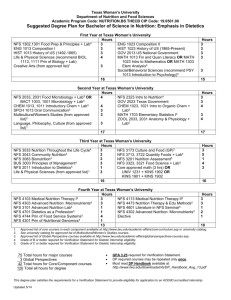

http://net.pku.edu.cn/~course/cs402/2010
Hongfei Yan
School of EECS, Peking University
7/29/2010

DFS@wikipedia (1/2)

• In computing, a distributed file system or network file system is any file system that allows access to files from multiple hosts sharing via a computer network.[1] This makes it possible for multiple users on multiple machines to share files and storage resources.
• The client nodes do not have direct access to the underlying block storage but interact over the network using a protocol. This makes it possible to restrict access to the file system depending on access lists or capabilities on both the servers and the clients, depending on how the protocol is designed.

DFS@wikipedia (2/2)

• In contrast, in a shared disk file system all nodes have equal access to the block storage where the file system is located. On these systems the access control must reside on the client.
• Distributed file systems may include facilities for transparent replication and fault tolerance. That is, when a limited number of nodes in a file system go offline, the system continues to work without any data loss.
• The difference between a distributed file system and a distributed data store can be vague, but DFSes are generally geared towards use on local area networks.

Outline

• File systems overview
• NFS & AFS (Andrew File System)
• Google File System

File Systems Overview

• System that permanently stores data
• Usually layered on top of a lower-level physical storage medium
• Divided into logical units called “files”
  – Addressable by a filename (“foo.txt”)
  – Usually supports hierarchical nesting (directories)

File Paths

• A file path joins file & directory names into a relative or absolute address to identify a file
  – Absolute: /home/aaron/foo.txt
  – Relative: docs/someFile.doc
• The shortest absolute path to a file is called its canonical path
• The set of all canonical paths establishes the namespace for the file system

What Gets Stored

• User data itself is the bulk of the file system's contents
• Also includes meta-data on a drive-wide and per-file basis:
  – Drive-wide: available space, formatting info, character set, ...
  – Per-file: name, owner, modification date, physical layout, ...

High-Level Organization

• Files are organized in a “tree” structure made of nested directories
• One directory acts as the “root”
• “Links” (symlinks, shortcuts, etc.) provide a simple means of providing multiple access paths to one file
• Other file systems can be “mounted” and dropped in as sub-hierarchies (other drives, network shares)

Low-Level Organization (1/2)

• File data and meta-data stored separately
• File descriptors + meta-data stored in inodes
  – Large tree or table at designated location on disk
  – Tells how to look up file contents
• Meta-data may be replicated to increase system reliability

Low-Level Organization (2/2)

• “Standard” read-write medium is a hard drive (other media: CD-ROM, tape, ...)
• Viewed as a sequential array of blocks
• Must address a ~1 KB chunk at a time
• Tree structure is “flattened” into blocks
• Overlapping reads/writes/deletes can cause fragmentation: files are often not stored with a linear layout
  – inodes store all block ids related to a file

Fragmentation

[Figure: successive disk layouts as files A, B, C, and D are written and deleted]
  A B C (free space)
  A B C A (free space)
  A (free space) C A (free space)
  A D C A D (free)

Design Considerations

• Smaller block size reduces the amount of wasted space
• Larger block size increases the speed of sequential reads (may not help random access)
• Should the file system be faster or more reliable?
• But faster at what: Large files? Small files? Lots of reading? Frequent writers, occasional readers?

File System Security

• File systems in multi-user environments need to secure private data
  – Notion of username is heavily built into FS
  – Different users have different access rights to files

UNIX Permission Bits

• World is divided into three scopes:
  – User – The person who owns (usually created) the file
  – Group – A list of particular users who have “group ownership” of the file
  – Other – Everyone else
• “Read,” “write” and “execute” permissions applicable at each level

UNIX Permission Bits: Limits

• Only one group can be associated with a file
• No higher-order groups (groups of groups)
• Makes it difficult to express more complicated ownership sets

Access Control Lists

• More general permissions mechanism
• Implemented in Windows
• Richer notion of privileges than r/w/x
  – e.g., SetPrivilege, Delete, Copy…
• Allow for inheritance as well as deny lists
  – Can be complicated to reason about and lead to security gaps

Process Permissions

• Important note: processes running on behalf of user X have the permissions associated with X, not those of the program file's owner Y
• So if root owns ls, user aaron cannot use ls to peek at other users’ files
• Exception: the special permission “setuid” sets the user-id associated with a running process to the owner of the program file

Disk Encryption

• Data storage medium is another security concern
  – Most file systems store data in the clear, rely on runtime security to deny access
  – Assumes the physical disk won’t be stolen
• The disk itself can be encrypted
  – Hopefully by using separate passkeys for each user’s files
  – (Challenge: how do you implement read access for group members?)
  – Metadata encryption may be a separate concern

Outline

• File systems overview
• NFS & AFS (Andrew File System)
• Google File System

Distributed Filesystems

• Support access to files on remote servers
• Must support concurrency
  – Make varying guarantees about locking, who “wins” with concurrent writes, etc.
  – Must gracefully handle dropped connections
• Can offer support for replication and local caching
• Different implementations sit in different places on the complexity/feature scale

Distributed File Systems

• General goal: Try to make a file system transparently available to remote clients.

[Figure: (a) The remote access model. (b) The upload/download model.]

Network File System (NFS)

• First developed in the 1980s by Sun
• Presented with standard UNIX FS interface
• Network drives are mounted into the local directory hierarchy
  – Type 'man mount' or 'mount' at the prompt some time if curious

NFS Protocol

• Initially completely stateless
  – Operated over UDP; did not use TCP streams
  – File locking, etc., implemented in higher-level protocols
• Modern implementations use TCP/IP & stateful protocols

NFS Architecture for UNIX systems

• NFS is implemented using the Virtual File System (VFS) abstraction, which is now used by many different operating systems.
• Essence: VFS provides a standard file system interface and hides the difference between accessing a local or a remote file system.

Server-side Implementation

• NFS defines a virtual file system
  – Does not actually manage local disk layout on server
• Server instantiates NFS volume on top of local file system
  – Local hard drives managed by concrete file systems (EXT, ReiserFS, ...)
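
The VFS idea above (one interface, with local and remote file systems hidden behind it) can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any kernel implements VFS: the class names `FileSystem`, `LocalFS`, `RemoteFS` and `VFS` are hypothetical, storage is an in-memory dictionary, and the "network" is a direct method call that is merely counted.

```python
from abc import ABC, abstractmethod


class FileSystem(ABC):
    """Hypothetical VFS-style interface: every backend exposes the same calls."""

    @abstractmethod
    def read(self, path: str) -> bytes: ...

    @abstractmethod
    def write(self, path: str, data: bytes) -> None: ...


class LocalFS(FileSystem):
    """Stands in for a concrete on-disk file system (in-memory here)."""

    def __init__(self):
        self._files = {}

    def read(self, path):
        return self._files[path]

    def write(self, path, data):
        self._files[path] = data


class RemoteFS(FileSystem):
    """Stands in for an NFS client: forwards every call to a 'server'."""

    def __init__(self, server: FileSystem):
        self._server = server
        self.calls = 0  # counts simulated network round trips

    def read(self, path):
        self.calls += 1
        return self._server.read(path)

    def write(self, path, data):
        self.calls += 1
        self._server.write(path, data)


class VFS:
    """Routes each path to the file system mounted at the longest matching prefix."""

    def __init__(self, root: FileSystem):
        self._mounts = {"/": root}

    def mount(self, prefix: str, fs: FileSystem) -> None:
        self._mounts[prefix.rstrip("/") + "/"] = fs

    def _resolve(self, path: str):
        prefix = max((p for p in self._mounts if path.startswith(p)), key=len)
        # Hand the backend a path relative to its own mount point.
        return self._mounts[prefix], "/" + path[len(prefix):]

    def read(self, path):
        fs, rel = self._resolve(path)
        return fs.read(rel)

    def write(self, path, data):
        fs, rel = self._resolve(path)
        fs.write(rel, data)


if __name__ == "__main__":
    server_disk = LocalFS()                 # the server's concrete file system
    vfs = VFS(LocalFS())                    # the client's local root
    vfs.mount("/mnt/nfs", RemoteFS(server_disk))
    vfs.write("/home/a.txt", b"local")      # handled by the local backend
    vfs.write("/mnt/nfs/b.txt", b"remote")  # forwarded to the server
    print(vfs.read("/mnt/nfs/b.txt"), server_disk.read("/b.txt"))
```

The caller uses the same `read` and `write` calls for both paths; only the mount table decides whether a request stays local or crosses the (simulated) network.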

[Figure: an NFS client and an NFS server. The user-visible filesystem and the server filesystem are layered over concrete file systems (EXT3, EXT2, ReiserFS) on Hard Drive 1 and Hard Drive 2.]

Typical implementation

• Assuming a Unix-style scenario in which one machine requires access to data stored on another machine:
  1. The server implements NFS daemon processes in order to make its data generically available to clients.
  2. The server administrator determines what to make available, exporting the names and parameters of directories.
  3. The server security-administration ensures that it can recognize and approve validated clients.
  4. The server network configuration ensures that appropriate clients can negotiate with it through any firewall system.
  5. The client machine requests access to exported data, typically by issuing a mount command.
  6. If all goes well, users on the client machine can then view and interact with mounted filesystems on the server within the parameters permitted.

    [webg@index1 ~]$ vi /etc/exports
    # the file /etc/exports serves as the access control list for
    # file systems which may be exported to NFS clients.
    /home/infomall/udata 192.168.100.0/24(rw,async) 222.29.154.11(rw,async)
    /home/infomall/hist 192.168.100.0/24(rw,async)

NFS Locking

• NFS v4 supports stateful locking of files
  – Clients inform server of intent to lock
  – Server can notify clients of outstanding lock requests
  – Locking is lease-based: clients must continually renew locks before a timeout
  – Loss of contact with server abandons locks

NFS Client Caching

• NFS clients are allowed to cache copies of remote files for subsequent accesses
• Supports close-to-open cache consistency
  – When client A closes a file, its contents are synchronized with the master, and the timestamp is changed
  – When client B opens the file, it checks that the local timestamp agrees with the server timestamp. If not, it discards the local copy.
  – Concurrent readers/writers must use flags to disable caching

NFS: Tradeoffs

• NFS volume managed by a single server
  – Higher load on central server
  – Simplifies coherency protocols
• Full POSIX system means it “drops in” very easily, but isn’t “great” for any specific need

Distributed FS Security

• Security is a concern at several levels throughout the DFS stack
  – Authentication
  – Data transfer
  – Privilege escalation
• How are these applied in NFS?

Authentication in NFS

• Initial NFS system trusted client programs
  – User login credentials were passed to the OS kernel, which forwarded them to the NFS server
  – A malicious client could easily subvert this
• Modern implementations use more sophisticated systems (e.g., Kerberos)

Data Privacy

• Early NFS implementations sent data in “plaintext” over the network
  – Modern deployments can tunnel traffic through SSH
• Double problem with UDP (connectionless) protocol:
  – Observers could watch which files were being opened and then insert “write” requests with fake credentials to corrupt data

Privilege Escalation

• Local file system username is used as NFS username
  – Implication: being “root” on the local machine gives you root access to the entire NFS cluster
• Solution: “root squash”
  – The NFS server de-escalates privileges from “root” down to “nobody” for all accesses (the root_squash export option)

RPCs in File System

• Observation: Many (traditional) distributed file systems deploy remote procedure calls to access files. When wide-area networks need to be crossed, alternatives need to be exploited.

File Sharing Semantics (1/2)

• Problem: When dealing with distributed file systems, we need to take into account the ordering of concurrent read/write operations, and the expected semantics (= consistency).
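
One concrete instance of such semantics is the close-to-open consistency described earlier for NFS client caching. The following is a minimal Python sketch of that rule, assuming whole-file caching and a per-file version counter in place of real timestamps; the `Server` and `Client` classes are hypothetical and are not part of any NFS API.

```python
class Server:
    """Holds the master copy of each file plus a version stamp (stand-in for a timestamp)."""

    def __init__(self):
        self.data = {}
        self.version = {}

    def store(self, name, content):
        self.data[name] = content
        self.version[name] = self.version.get(name, 0) + 1
        return self.version[name]


class Client:
    """Caches whole files; revalidates on open and writes back on close."""

    def __init__(self, server):
        self.server = server
        self.cache = {}     # name -> (version, content)
        self.dirty = set()  # names modified locally since the last close

    def open(self, name):
        current = self.server.version.get(name, 0)
        cached = self.cache.get(name)
        if cached is None or cached[0] != current:
            # Missing or stale: discard the local copy and refetch.
            self.cache[name] = (current, self.server.data.get(name, ""))
        return self.cache[name][1]

    def read(self, name):
        # Reads between open and close are served from the cache only.
        return self.cache[name][1]

    def write(self, name, content):
        version, _ = self.cache[name]
        self.cache[name] = (version, content)  # local until close
        self.dirty.add(name)

    def close(self, name):
        if name in self.dirty:
            _, content = self.cache[name]
            self.cache[name] = (self.server.store(name, content), content)
            self.dirty.discard(name)


if __name__ == "__main__":
    server = Server()
    server.store("f", "v1")
    a, b = Client(server), Client(server)
    a.open("f")
    b.open("f")
    a.write("f", "v2")
    a.close("f")        # A's change reaches the server only now
    print(b.read("f"))  # B still reads its cached copy
    print(b.open("f"))  # reopening revalidates and refetches
```

The sketch also shows why concurrent readers and writers need caching disabled: client B keeps reading its old copy until it reopens the file.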

File Sharing Semantics (2/2)

• UNIX semantics:
  – a read operation returns the effect of the last write operation => can only be implemented for remote access models in which there is only a single copy of the file
• Transaction semantics:
  – the file system supports transactions on a single file => the issue is how to allow concurrent access to a physically distributed file
• Session semantics:
  – the effects of read and write operations are seen only by the client that has opened (a local copy of) the file => the question is what happens when a file is closed (only one client may actually win)

Consistency and Replication

• Observation: In modern distributed file systems, client-side caching is the preferred technique for attaining performance; server-side replication is done for fault tolerance.
• Observation: Clients are allowed to keep (large parts of) a file, and will be notified when control is withdrawn => servers are now generally stateful

Fault Tolerance

• Observation: FT is handled by simply replicating file servers, generally using a standard primary-backup protocol.

AFS (The Andrew File System)

• Developed at Carnegie Mellon
• Strong security, high scalability
  – Supports 50,000+ clients at enterprise level
• AFS heavily influenced Version 4 of NFS.

Security in AFS

• Uses Kerberos authentication
• Supports a richer set of access control bits than UNIX
  – Separate “administer”, “delete” bits
  – Allows application-specific bits

Local Caching

• File reads/writes operate on a locally cached copy
• Local copy sent back to master when file is closed
• Open local copies are notified of external updates through callbacks

Local Caching - Tradeoffs

• Shared database files do not work well on this system
• Does not support write-through to shared medium

Replication

• AFS allows read-only copies of filesystem volumes
• Copies are guaranteed to be atomic checkpoints of the entire FS at the time of read-only copy generation
• Modifying data requires access to the sole r/w volume
  – Changes do not propagate to read-only copies

AFS Conclusions

• Not quite POSIX
  – Stronger security/permissions
  – No file write-through
• High availability through replicas, local caching
• Not appropriate for all file types

Outline

• File systems overview
• NFS & AFS (Andrew File System)
• Google File System

Motivation

• Google needed a good distributed file system
  – Redundant storage of massive amounts of data on cheap and unreliable computers
• Why not use an existing file system?
  – Google’s problems are different from anyone else’s
    • Different workload and design priorities
  – GFS is designed for Google apps and workloads
  – Google apps are designed for GFS

Assumptions

• High component failure rates
  – Inexpensive commodity components fail often
• “Modest” number of HUGE files
  – Just a few million
  – Each is 100 MB or larger; multi-GB files typical
• Files are write-once, mostly appended to
  – Perhaps concurrently
• Large streaming reads
• High sustained throughput favored over low latency

GFS Design Decisions

• Files stored as chunks
  – Fixed size (64 MB)
• Reliability through replication
  – Each chunk replicated across 3+ chunkservers
• Single master to coordinate access, keep metadata
  – Simple centralized management
• No data caching
  – Little benefit due to large data sets, streaming reads
• Familiar interface, but customize the API
  – Simplify the problem; focus on Google apps
  – Add snapshot and record append operations

GFS Client Block Diagram

[Figure: a client computer running a GFS-aware application. The POSIX API leads to the regular VFS with local and NFS-supported files and specific drivers; the GFS API provides a separate GFS view and reaches the GFS master and GFS chunkservers through the network stack.]

Cluster-Based Distributed File Systems

• Observation: When dealing with very large data collections, following a simple client-server approach is not going to work.
• Solution 1: For speeding up file accesses, apply striping techniques by which files can be fetched in parallel.

[Figure: (a) whole-file distribution, (b) file-striped system]

Example: Google File System

• Solution 2: Divide files into large 64 MB chunks, and distribute/replicate chunks across many servers.
• A couple of important details:
  – The master maintains only a (file name, chunk server) table in main memory => minimal I/O
  – Files are replicated using a primary-backup scheme; the master is kept out of the loop

Single master

• From distributed systems we know this is a:
  – Single point of failure
  – Scalability bottleneck
• GFS solutions:
  – Shadow masters
  – Minimize master involvement
    • never move data through it, use only for metadata (and cache metadata at clients)
    • large chunk size
    • master delegates authority to primary replicas in data mutations (chunk leases)
• Simple, and good enough!

Metadata (1/2)

• Global metadata is stored on the master
  – File and chunk namespaces
  – Mapping from files to chunks
  – Locations of each chunk’s replicas
• All in memory (64 bytes / chunk)
  – Fast
  – Easily accessible

Metadata (2/2)

• Master has an operation log for persistent logging of critical metadata updates
  – persistent on local disk
  – replicated
  – checkpoints for faster recovery

Mutations

• Mutation = write or append
  – must be done for all replicas
• Goal: minimize master involvement
• Lease mechanism:
  – master picks one replica as primary; gives it a “lease” for mutations
  – primary defines a serial order of mutations
  – all replicas follow this order
• Data flow decoupled from control flow

[Figure: Mutations diagram]

Mutation Example

1. Client 1 opens "foo" for modify. Replicas are named A, B, and C. B is declared primary.
2. Client 1 sends data X for chunk to chunk servers
3. Client 2 opens "foo" for modify. Replica B still primary
4. Client 2 sends data Y for chunk to chunk servers
5. Server B declares that X will be applied before Y
6. Other servers signal receipt of data
7. All servers commit X then Y
8. Clients 1 & 2 close connections
9. B's lease on chunk is lost

Atomic record append

• Client specifies data
• GFS appends it to the file atomically at least once
  – GFS picks the offset
  – works for concurrent writers
• Used heavily by Google apps
  – e.g., for files that serve as multiple-producer/single-consumer queues

Relaxed consistency model (1/2)

• “Consistent” = all replicas have the same value
• “Defined” = consistent, and the replica reflects the mutation
• Some properties:
  – concurrent writes leave the region consistent, but possibly undefined
  – failed writes leave the region inconsistent
• Some work has moved into the applications:
  – e.g., self-validating, self-identifying records

Relaxed consistency model (2/2)

• Simple, efficient
  – Google apps can live with it
  – what about other apps?
• Namespace updates atomic and serializable

Master’s responsibilities (1/2)

• Metadata storage
• Namespace management/locking
• Periodic communication with chunkservers
  – give instructions, collect state, track cluster health
• Chunk creation, re-replication, rebalancing
  – balance space utilization and access speed
  – spread replicas across racks to reduce correlated failures
  – re-replicate data if redundancy falls below threshold
  – rebalance data to smooth out storage and request load

Master’s responsibilities (2/2)

• Garbage Collection
  – simpler, more reliable than traditional file delete
  – master logs the deletion, renames the file to a hidden name
  – lazily garbage collects hidden files
• Stale replica deletion
  – detect “stale” replicas using chunk version numbers

Fault Tolerance

• High availability
  – fast recovery
    • master and chunkservers restartable in a few seconds
  – chunk replication
    • default: 3 replicas.
  – shadow masters
• Data integrity
  – checksum every 64 KB block in each chunk

Scalability

• Scales with available machines, subject to bandwidth
  – Rack- and datacenter-aware locality and replica creation policies help
• Single master has limited responsibility, does not rate-limit system

Scalability

• Microbenchmarks: 1-16 servers
• Read performance 75-80% efficient (good!)
• Write performance ~50% (network stack overhead)

[Figure: Performance]

Security

• Basically none
• Relies on Google’s network being private
• File permissions not mentioned in paper
  – Individual users / applications must cooperate

Deployment in Google

• 50+ GFS clusters
• Each with thousands of storage nodes
• Managing petabytes of data
• GFS underlies BigTable, etc.

Conclusion

• GFS demonstrates how to support large-scale processing workloads on commodity hardware
  – design to tolerate frequent component failures
  – optimize for huge files that are mostly appended and read
  – feel free to relax and extend FS interface as required
  – go for simple solutions (e.g., single master)
• GFS has met Google’s storage needs… it must be good!

References

• Some definitions of concepts from Wikipedia,
  – e.g., DFS, file system
• [Ghemawat, et al., 2003] S. Ghemawat, H. Gobioff, and S.-T. Leung, "The Google file system," SIGOPS Oper. Syst. Rev., vol. 37, pp. 29-43, 2003.
• Chapter 11 of [Tanenbaum, 2007]