A Practical Guide to Training Restricted Boltzmann Machines

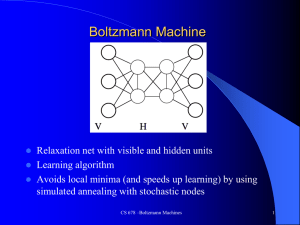

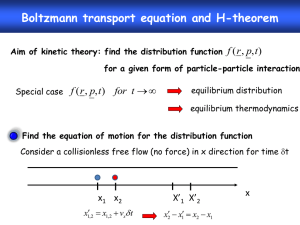

Restricted Boltzmann Machines and Deep Networks for Unsupervised Learning
Loris Bazzani, University of Verona
Istituto Italiano di Tecnologia, Genova, June 7th, 2011

Brief Intro
• Unsupervised learning
• Learning features from (visual) data
• Focus here on Restricted Boltzmann Machines

Presentation Outline
Theory
• Restricted Boltzmann Machines (RBMs)
  – Binary RBMs
  – Gaussian-binary RBMs
  – RBMs for Classification
  – Deep Belief Networks (DBNs)
• Learning Algorithms
Applications
• RBMs for Modeling Natural Scenes [Ranzato, CVPR 2010]
• Learning Attentional Policies [Bazzani, ICML 2011]

Restricted Boltzmann Machines
• Bipartite probabilistic graphical model
• W: parameters governing the interactions between visible and hidden units
• Property: “given the hidden units, all of the visible units become independent and given the visible units, all of the hidden units become independent”

Binary RBMs
• We can sample from the conditional distributions p(h | v) and p(v | h)
• Use the expected value of the hidden units, given the visible units, as features

Gaussian-binary RBMs
• Popular extension for modeling natural images
• Make the visible units conditionally Gaussian given the hidden units
• Conditional distributions: [equations not recovered]

RBMs for Classification
1) Feed the hidden representation into a standard classifier (e.g., multinomial logistic regression, SVM, random forest)
2) Embed the class into the visible units; the class vector is then sampled from [distribution not recovered]

Deep Belief Networks
• Goal: reach a high level of abstraction, so that classification becomes simple (e.g., linear)
• Multiple stacked RBMs
• Learning consists of greedily training each level in sequence, starting from the bottom
• Add fine-tuning with back-propagation
• Alternatively, a non-linear classifier can be used
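
The Binary RBMs slide above relies on the fact that hidden units are conditionally independent given the visible units, and vice versa. A minimal pure-Python sketch of those conditionals follows. The bias names `b` (visible) and `c` (hidden), the helper names, and the toy weights are assumptions made for illustration; only the weight matrix W is named in the slides.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def hidden_probs(v, W, c):
    """p(h_j = 1 | v) = sigmoid(c_j + sum_i v_i * W[i][j])."""
    return [sigmoid(c[j] + sum(v[i] * W[i][j] for i in range(len(v))))
            for j in range(len(c))]


def visible_probs(h, W, b):
    """p(v_i = 1 | h) = sigmoid(b_i + sum_j W[i][j] * h_j)."""
    return [sigmoid(b[i] + sum(W[i][j] * h[j] for j in range(len(h))))
            for i in range(len(b))]


def sample_bernoulli(probs, rng):
    """Draw one independent binary sample per unit."""
    return [1 if rng.random() < p else 0 for p in probs]


rng = random.Random(0)

# Toy model (hypothetical values): 3 visible units, 2 hidden units.
W = [[0.5, -1.0],
     [1.0, 0.0],
     [-0.5, 2.0]]
b = [0.0, 0.0, 0.0]   # visible biases (assumed name)
c = [0.0, -1.0]       # hidden biases (assumed name)

v = [1, 0, 1]

# Expected hidden values, used as features. Both pre-activations are
# exactly 0 for this input, so each feature equals sigmoid(0) = 0.5.
features = hidden_probs(v, W, c)

# One step of block Gibbs sampling: sample h given v, then compute p(v | h).
h = sample_bernoulli(features, rng)
v_recon = visible_probs(h, W, b)
```

Because each conditional factorizes over units, a whole layer can be sampled in one pass, which is what makes block Gibbs sampling cheap in an RBM.
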
• Open problem for Deep Belief Networks: how many layers should be used?

How to Learn the Parameters
• Maximum Likelihood (ML) techniques
• No closed-form solution for the maximization
• Problem: the partition function is usually not efficiently computable
• Solutions:
  – Approximate ML, which sacrifices convergence properties to make learning computationally feasible
  – Alternatives: variational methods, max-margin learning, etc.

ML Problem
• ML problem, marginalization, and gradient: [equations not recovered]
• Match the gradient of the free energy under the data distribution with the gradient under the model distribution

Contrastive Divergence (1)
• It is simply gradient descent with an approximate gradient
• At each step, it contrasts the data distribution with the model distribution
• E.g., binary RBM: [equation not recovered]

Contrastive Divergence (2)
• Algorithm for binary RBMs: [algorithm listing not recovered]

Modeling Natural Images
• Motivations:
  – Learning a generative model of natural images
  – Extracting features that capture regularities
  – As opposed to using engineered features
• RBM with two sets of hidden units:
  – One represents the pixel intensities
  – The other represents the pair-wise dependencies
• Called Mean-Covariance RBM (mc-RBM)
• It is still a Gaussian-binary RBM

mc-RBM Model (1)
• Capture pair-wise interactions with: [equation not recovered]
• Sketch: covariance hiddens [figure not recovered]

mc-RBM Model (2)
• Representation of mean pixel intensities: [equation not recovered]
• Conditional distributions: [equations not recovered]

mc-RBM Model (3)
• Final energy, including a regularization term: [equation not recovered]
• The free energy formulation is also computable
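
To make the Contrastive Divergence slides for binary RBMs more concrete, here is a minimal pure-Python sketch of one common variant, CD-1 with a single Gibbs step. It is not necessarily the listing shown on the original slide, which was not recovered. The function name `cd1_update`, the bias names `b` and `c`, the learning rate, and the toy data are all assumptions for illustration.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def hidden_probs(v, W, c):
    return [sigmoid(c[j] + sum(v[i] * W[i][j] for i in range(len(v))))
            for j in range(len(c))]


def visible_probs(h, W, b):
    return [sigmoid(b[i] + sum(W[i][j] * h[j] for j in range(len(h))))
            for i in range(len(b))]


def sample(probs, rng):
    return [1 if rng.random() < p else 0 for p in probs]


def cd1_update(v0, W, b, c, lr, rng):
    """Apply one CD-1 update in place; return the squared reconstruction error."""
    # Positive phase: statistics with the visible units clamped to the data.
    ph0 = hidden_probs(v0, W, c)
    h0 = sample(ph0, rng)
    # Negative phase: one Gibbs step stands in for a sample from the model.
    pv1 = visible_probs(h0, W, b)
    v1 = sample(pv1, rng)
    ph1 = hidden_probs(v1, W, c)
    # Contrast data statistics with (approximate) model statistics.
    for i in range(len(b)):
        for j in range(len(c)):
            W[i][j] += lr * (v0[i] * ph0[j] - v1[i] * ph1[j])
        b[i] += lr * (v0[i] - v1[i])
    for j in range(len(c)):
        c[j] += lr * (ph0[j] - ph1[j])
    return sum((v0[i] - pv1[i]) ** 2 for i in range(len(b)))


rng = random.Random(0)
n_visible, n_hidden = 4, 2
W = [[rng.gauss(0.0, 0.01) for _ in range(n_hidden)] for _ in range(n_visible)]
b = [0.0] * n_visible
c = [0.0] * n_hidden

# Two toy binary patterns (hypothetical data).
data = [[1, 1, 0, 0], [0, 0, 1, 1]]

for epoch in range(200):
    errors = [cd1_update(v, W, b, c, lr=0.1, rng=rng) for v in data]
```

The reconstruction error returned here is only a rough progress indicator; it is not the quantity that CD optimizes. Running more Gibbs steps in the negative phase (CD-k) brings the update closer to the ML gradient at a higher computational cost.
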
• Learning the mc-RBM with:
  – Stochastic gradient descent
  – Contrastive Divergence
  – Sampling using Hybrid Monte Carlo

Training Protocol for Recognition
• Images are pre-processed by PCA whitening
• Train the mc-RBM
• Extract features with the mc-RBM
• Train a classifier for object recognition:
  – Multinomial logistic classifier

Object Recognition on CIFAR 10
[results not recovered]

Where do you look?
Original video source: http://gpu4vision.icg.tugraz.at/index.php?content=subsites/prost/prost.php

Goal
• Human tracking and recognition is amazingly efficient and effective
• A large stream of data is filtered by attention
• We propose a model for tracking and recognition that takes inspiration from the human visual system
• Tracking and recognition of “something” that is moving in the scene
• Accumulate gaze data
• Plan where to look in the near future

Parallelism with Human Brain
Source image: http://www.waece.org/cd_morelia2006/ponencias/stoodley.htm

Sketch of the Model
[diagram; components: Classifier, Multi-fixation RBM, (mc-)RBM, Policy Learning]

Learning
• Offline training
  – Extract gaze data from a training dataset, from moving “things” with multiple saccades
  – Train the (mc-)RBM
  – Train the multi-fixation RBM (3 random gazes)
  – Train the multinomial logistic classifier
• Online learning
  – Hedge algorithm for policy learning
• Modularity: components can be swapped
  – (mc-)RBM: autoencoders, sparse coding, etc.
  – Classifier: SVM, random forest, etc.
  – Policy learning: other bandit techniques or Bayesian optimization

Experiments (1)
• 10 synthetic video sequences with moving and background digits (from the MNIST dataset)
• Reported measures: tracking error in pixels and classification accuracy [results not recovered]
Code available at: http://www.lorisbazzani.info/code-datasets/rbm-tracking/

Experiments (2)
Dataset available at: http://seqam.rutgers.edu/softdata/facedata/facedata.html

Summary
• Several RBM models
• How to train RBMs
• Their extensions for classification
• RBMs as building blocks for deep architectures
• They are useful for learning features from images, without engineering them
• Taking inspiration from human learning, DBNs have been used for tracking and recognition in video

References
• Loris Bazzani, Nando de Freitas, Hugo Larochelle, Vittorio Murino, and Jo-Anne Ting. Learning attentional policies for tracking and recognition in video with deep networks. International Conference on Machine Learning, 2011.
• Kevin Swersky, Bo Chen, Benjamin Marlin, and Nando de Freitas. Tutorial on Stochastic Approximation Algorithms for Training Restricted Boltzmann Machines and Deep Belief Nets. Information Theory and Applications (ITA) Workshop, 2010.
• Benjamin Marlin, Kevin Swersky, Bo Chen, and Nando de Freitas. Inductive Principles for Restricted Boltzmann Machine Learning. AISTATS, 2010.
• Marc'Aurelio Ranzato and Geoffrey E. Hinton. Modeling Pixel Means and Covariances Using Factorized Third-Order Boltzmann Machines. IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 2010.
• Marc'Aurelio Ranzato, Alex Krizhevsky, and Geoffrey E. Hinton. Factored 3-Way Restricted Boltzmann Machines For Modeling Natural Images. International Conference on Artificial Intelligence and Statistics, 2010.
• Marc'Aurelio Ranzato, Joshua Susskind, Volodymyr Mnih, and Geoffrey Hinton. On Deep Generative Models with Applications to Recognition. IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 2011.
• Hugo Larochelle and Geoffrey E. Hinton. Learning to combine foveal glimpses with a third-order Boltzmann machine. Neural Information Processing Systems, 2010.
• Mohammad Norouzi, Mani Ranjbar, and Greg Mori. Stacks of Convolutional Restricted Boltzmann Machines for Shift-Invariant Feature Learning. IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 2009.
• Matthew D. Zeiler, Dilip Krishnan, Graham W. Taylor, and Rob Fergus. Deconvolutional Networks. IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 2010.
• Honglak Lee, Roger Grosse, Rajesh Ranganath, and Andrew Ng. Convolutional deep belief networks for scalable unsupervised learning of hierarchical representations. International Conference on Machine Learning, 2009.
• Thomas Deselaers, Saša Hasan, Oliver Bender, and Hermann Ney. A deep learning approach to machine transliteration. Proceedings of the Fourth Workshop on Statistical Machine Translation, 2009.
• I. Sutskever and G. E. Hinton. Learning Multilevel Distributed Representations for High-dimensional Sequences. Proceedings of the Eleventh International Conference on Artificial Intelligence and Statistics, 2007.
• Miguel A. Carreira-Perpinan and Geoffrey E. Hinton. On Contrastive Divergence Learning. International Conference on Artificial Intelligence and Statistics, 2005.
• Graham W. Taylor, Rob Fergus, Yann LeCun, and Christoph Bregler. Convolutional learning of spatio-temporal features. Proceedings of the 11th European Conference on Computer Vision, 2010.
• Geoffrey E. Hinton. A Practical Guide to Training Restricted Boltzmann Machines. University of Toronto, TR2010-003, 2010.