
When Variational Auto-encoders meet Generative Adversarial Networks

Jianbo Chen Billy Fang Cheng Ju

14 December 2016

Abstract

Variational auto-encoders are a promising class of generative models. In this project, we first review variational auto-encoders (VAEs) and their popular variants, including the Conditional VAE [17], the VAE for semi-supervised learning [10], and DRAW [7]. We also investigate various applications of variational auto-encoders. Finally, we propose models that combine variants of the VAE with another popular deep generative model, generative adversarial networks [6], generalizing the idea proposed in [12].

1 Introduction

Unsupervised learning from large unlabeled datasets is an active research area. In practice, millions of images

and videos are unlabeled and one can leverage them to learn good intermediate feature representations via approaches in unsupervised learning, which can then be used for other supervised or semi-supervised learning tasks

such as classification. One approach for unsupervised learning is to learn a generative model. Two popular methods

in computer vision are variational auto-encoders (VAEs) [11] and generative adversarial networks (GANs) [6].

Variational auto-encoders are a class of deep generative models based on variational methods. With sophisticated

VAE models, one can not only generate realistic images, but also replicate consistent “style.” For example, DRAW

[7] was able to generate images of house numbers with number combinations not seen in the training set, but with

a consistent style/color/font of street sign in each image. Additionally, as models learn to generate realistic output,

they learn important features along the way, which potentially can be used for classification; we consider this in

the Conditional VAE and semi-supervised learning models. However, one main criticism of VAE models is that

their generated output is often blurry.

Generative adversarial networks are another class of deep generative models. GANs introduce a game theoretic

component to the training process, in which a discriminator learns to distinguish real images from generated images,

while at the same time the generator learns to fool the discriminator.

In this report, we make the following contributions:

• We survey the currently popular variants of variational auto-encoders and implement them in TensorFlow.

• Building on previous work [12], we combine popular variants of variational auto-encoders with generative adversarial networks to address the problem of blurry images.

• We investigate a few applications of generative models, including image completion, style transfer, and semi-supervised learning.

On the one hand, combining the Conditional VAE with a GAN produced less blurry images than the original Conditional VAE [17]. On the other hand, we did not obtain comparable accuracy in semi-supervised learning when we combined the VAE with a GAN, and DRAW yields strange results when combined with a GAN. These problems and their potential solutions are detailed in Section 5.

2 Overview of VAE models

2.1 Variational auto-encoders

In the variational auto-encoder (VAE) literature, we consider the following latent variable model for the

data x.

z ∼ N (0, I)

x | z ∼ f (x; z, θ).

Here, f (x; z, θ) is some distribution parameterized by z and θ. In our work, we mainly consider x | z being

[conditionally] independent Bernoulli random variables (one for each pixel) with parameters being some function

of the latent variable z and possibly other parameters θ.

We omit motivation of the variational approach, which is explained in more detail in [3]. We consider the

following lower bound, valid for any distribution Q(z | x).

$$\log p(x) \ge \mathbb{E}_{z \sim Q(\cdot \mid x)}[\log p(x \mid z)] - \mathrm{KL}(Q(z \mid x) \,\|\, p(z)) =: -L(x). \tag{1}$$
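For readers who want the step the report omits, here is a brief sketch of why (1) holds for any Q(z | x). It relies only on Bayes' rule; the symbol p(z | x) for the true posterior is introduced here for illustration and is not used elsewhere in the report.

$$
\begin{aligned}
\log p(x) &= \mathbb{E}_{z \sim Q(\cdot \mid x)}\left[\log \frac{p(x \mid z)\, p(z)}{p(z \mid x)}\right] \\
&= \mathbb{E}_{z \sim Q(\cdot \mid x)}[\log p(x \mid z)] - \mathrm{KL}(Q(z \mid x) \,\|\, p(z)) + \mathrm{KL}(Q(z \mid x) \,\|\, p(z \mid x)).
\end{aligned}
$$

Because the last KL term is nonnegative, dropping it gives the bound in (1), with equality exactly when Q(z | x) matches the true posterior.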

In variational inference, we would maximize the right-hand side over a family of distributions Q(z | x). Here

we restrict our attention to Q(z | x) being Gaussian with some mean µ(x) and diagonal covariance Σ(x) being

functions (namely, neural networks) of x. For the VAE framework however, we also learn p(x | z) = f (x; z, θ); this

too, is a neural network.

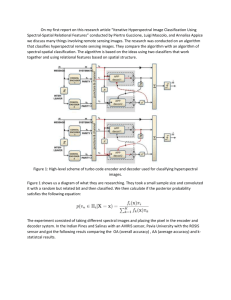

The VAE model is trained by maximizing the right-hand side of (1), and the structure is depicted in Figure 1. The first neural network, called the encoder, takes in the data x and outputs the mean µ(x) and variance Σ(x) of Q(z | x), which can then be used to compute the KL-divergence term in the loss. We then sample z ∼ Q(z | x) by computing Σ(x)^{1/2} · ε + µ(x), where ε ∼ N(0, I), and pass z through the second neural network, called the decoder, which allows us to compute log p(x | z). Since z ∼ Q(z | x), this is an unbiased estimate of E_{z∼Q(·|x)}[log p(x | z)].

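Figure 1: Variational auto-encoder structure. The encoder Q maps x to µ(x) and Σ(x), which determine the term KL(N(µ(x), Σ(x)) ‖ N(0, I)); a sample ε ∼ N(0, I) is scaled using Σ(x) and added to µ(x) to form z; the decoder P outputs f(·; z, θ), from which log p(x | z) is computed.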

To generate new data, we simply sample z ∼ N (0, I) and input it into the trained decoder. More information

can be found in the original paper [11] as well as in the tutorial [3].
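The training computation described in this section can be sketched in a few lines of plain Python. This is a minimal illustration, not the report's TensorFlow implementation: `toy_decode` is a hypothetical stand-in for the decoder network, the encoder outputs `mu` and `var` are supplied by hand, and the function names are chosen here for illustration.

```python
import math
import random


def sample_latent(mu, var, rng):
    """Reparameterization: z = mu + sqrt(var) * eps with eps ~ N(0, I)."""
    return [m + math.sqrt(v) * rng.gauss(0.0, 1.0) for m, v in zip(mu, var)]


def kl_to_standard_normal(mu, var):
    """Closed-form KL(N(mu, diag(var)) || N(0, I))."""
    return 0.5 * sum(m * m + v - math.log(v) - 1.0 for m, v in zip(mu, var))


def bernoulli_log_likelihood(x, probs, eps=1e-12):
    """log p(x | z) for independent Bernoulli pixels with means `probs`."""
    return sum(
        xi * math.log(pi + eps) + (1.0 - xi) * math.log(1.0 - pi + eps)
        for xi, pi in zip(x, probs)
    )


def vae_loss(x, mu, var, decode, rng):
    """One-sample estimate of L(x) = KL term minus E[log p(x | z)]."""
    z = sample_latent(mu, var, rng)
    return kl_to_standard_normal(mu, var) - bernoulli_log_likelihood(x, decode(z))


def toy_decode(z):
    # Hypothetical stand-in for the decoder network: a fixed sigmoid map
    # from a 2-dimensional latent to two pixel probabilities.
    return [
        1.0 / (1.0 + math.exp(-(z[0] - z[1]))),
        1.0 / (1.0 + math.exp(-z[1])),
    ]


rng = random.Random(0)
loss = vae_loss(x=[1.0, 0.0], mu=[0.3, -0.2], var=[0.5, 1.5], decode=toy_decode, rng=rng)
```

Sampling through `sample_latent` rather than drawing z directly is what keeps the estimate differentiable with respect to the encoder outputs in a real implementation.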

2.2 Conditional variational auto-encoders

One variant of the VAE is the Conditional variational auto-encoder (CVAE) [17], which makes use of

side information y, such as a label or part of an image. Mathematically the variational approach is the same as

above except with conditioning on y.

$$\log p(x \mid y) \ge \mathbb{E}_{z \sim Q(\cdot \mid x, y)}[\log p(x \mid y, z)] - \mathrm{KL}(Q(z \mid x, y) \,\|\, p(z \mid y)) =: -L(x, y).$$

The CVAE structure appears in Figure 2. Structurally, the only difference is that both the encoder and decoder

take the side information y as extra input.

At generation time, we input not only a fresh sample z ∼ N (0, I), but also some side information y into the

decoder. We explore different examples of side information in Section 4.4.

2.3 Semi-supervised learning

Figure 2: Conditional variational auto-encoder structure. The encoder Q takes x and y and outputs µ(x, y) and Σ(x, y), which determine the term KL(N(µ(x, y), Σ(x, y)) ‖ N(0, I)); a sample ε ∼ N(0, I) is scaled using Σ(x, y) and added to µ(x, y) to form z; the decoder P takes z and y and outputs f(·; z, y, θ), from which log p(x | z) is computed.

Kingma et al. [10] introduced a variant of the VAE framework tailored for semi-supervised learning (SSL), where the goal is classification after training on a dataset that has both labeled and unlabeled examples. Unlike the CVAE, where everything is conditioned on the label y, the SSL model gives y a categorical distribution. The generative model (called “M2” in [10]) is as follows.

y ∼ Cat(π)

z ∼ N (0, I)

x | y, z ∼ f (x; y, z, θ)

We maximize a lower bound on the log likelihood (of both the data x and the label y) over normal distributions Q(z | x, y) and categorical distributions Q(y | x). For unlabeled examples, we marginalize over y ∼ Q(y | x). This gives us the labeled and unlabeled losses L(x, y) and U(x) below.

$$\log p(x, y) \ge \mathbb{E}_{z \sim Q(z \mid x, y)}[\log p(x \mid y, z) + \log p(y)] - \mathrm{KL}(Q(z \mid x, y) \,\|\, p(z)) =: -L(x, y)$$

$$\log p(x) \ge \sum_{y} Q(y \mid x)\,(-L(x, y)) + H(Q(y \mid x)) =: -U(x).$$

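Figure 3: Model for semi-supervised learning. Encoder Q1 takes x and outputs Σ(x) and π(x); encoder Q2 outputs µ(x, y); these determine the term KL(N(µ(x, y), Σ(x)) ‖ N(0, I)); a sample ε ∼ N(0, I) is scaled using Σ(x) and added to µ(x, y) to form z; the decoder P computes f(z, y), from which log p(x | z) is computed.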

The total loss is

$$\sum_{\text{labeled } (x, y)} L(x, y) + \sum_{\text{unlabeled } x} U(x).$$

In [10], they also add an extra classification loss α E_{labeled (x, y)}[− log Q(y | x)], so that the predictive distribution Q(y | x) also contributes to the loss on labeled examples.

We can use this model for labeled image generation as before: input z ∼ N(0, I) and y into the decoder.

For classification, we input the unlabeled image into the encoder and return the class with the highest probability according to the categorical distribution parameterized by π(x).
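The unlabeled loss U(x) and the classification rule above can be sketched as follows. This is an illustrative sketch only: the per-class labeled losses L(x, y) are passed in as plain numbers (in a real model they would come from the encoder and decoder networks), and the function names are chosen here rather than taken from the report's code.

```python
import math


def entropy(q):
    """Entropy H(Q) of a categorical distribution given as a list of probabilities."""
    return -sum(p * math.log(p) for p in q if p > 0.0)


def unlabeled_loss(q_y_given_x, labeled_losses):
    """U(x) = sum_y Q(y | x) * L(x, y) - H(Q(y | x))."""
    expected = sum(q * loss for q, loss in zip(q_y_given_x, labeled_losses))
    return expected - entropy(q_y_given_x)


def predict_label(pi):
    """Return the class with the highest probability under pi(x)."""
    return max(range(len(pi)), key=pi.__getitem__)


# Two-class toy example: Q(y | x) is uniform and L(x, y) is 2.0 or 4.0.
u = unlabeled_loss([0.5, 0.5], [2.0, 4.0])
label = predict_label([0.1, 0.7, 0.2])
```

When Q(y | x) puts all its mass on one class, the entropy vanishes and U(x) reduces to the labeled loss for that class.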

2.4 DRAW

The Deep Recurrent Attentive Writer (DRAW) [7] is an extension of the vanilla variational auto-encoder generator. It applies the sequential variational auto-encoding framework to iteratively construct complicated images, with a soft attention mechanism. There are three main differences between DRAW and the vanilla VAE.

1. Both the encoder and decoder of DRAW are recurrent networks.

2. The decoder's outputs are successively added to the distribution that will ultimately generate the data (image), as opposed to emitting this distribution in a single step.

3. A dynamically updated attention mechanism restricts the input region observed by the encoder; this region is computed from the previous decoder output.

Figure 4: Conventional Variational Auto-Encoder (left) and DRAW Network [7] (right)

Figure 4 shows the structure of DRAW. The input to each encoder cell is a convolved image, where the convolution is computed by the previous decoder cell. Thus the input to each encoder cell is computed dynamically.

The loss comes from two parts. The decoder loss is the same as the vanilla VAE: only the final canvas matrix

(which is the cumulative sum of the previous decoder output) is used to compute the distribution of the data

D(x|cT ). If the input is binary, the natural choice for D is a Bernoulli distribution with means given by σ(cT ), and

the reconstruction loss is the negative log probability of x under D:

Lx = − log D(x|cT )

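The encoder loss is different. DRAW uses the cumulative Kullback-Leibler divergence across the T time steps as the encoder loss, with a standard normal prior P(Z_t):

$$L_z = \sum_{t=1}^{T} \mathrm{KL}\big(Q(Z_t \mid h_t^{enc}) \,\|\, P(Z_t)\big) = \frac{1}{2} \sum_{t=1}^{T} \big(\mu_t^2 + \sigma_t^2 - \log \sigma_t^2\big) - T/2$$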

The total loss L for the network is the expectation of the sum of the reconstruction and latent losses:

L = Ez∼Q (Lz + Lx ).
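As a quick check of the closed form for L_z, the sketch below evaluates it for scalar latents and compares it with the sum of per-step Gaussian KL divergences. It assumes one scalar latent per time step, as the formula is written; with d-dimensional latents the same expression would be summed over dimensions and the constant would become T·d/2. The function names are illustrative.

```python
import math


def gaussian_kl(mu, sigma):
    """KL(N(mu, sigma^2) || N(0, 1)) for a scalar Gaussian."""
    return 0.5 * (mu * mu + sigma * sigma - math.log(sigma * sigma) - 1.0)


def draw_latent_loss(mus, sigmas):
    """L_z = 1/2 * sum_t (mu_t^2 + sigma_t^2 - log sigma_t^2) - T/2."""
    num_steps = len(mus)
    total = sum(m * m + s * s - math.log(s * s) for m, s in zip(mus, sigmas))
    return 0.5 * total - num_steps / 2.0


mus = [0.5, -1.0, 0.2]
sigmas = [1.2, 0.8, 1.0]
loss_z = draw_latent_loss(mus, sigmas)
per_step_sum = sum(gaussian_kl(m, s) for m, s in zip(mus, sigmas))
```

The two quantities agree because the −T/2 term simply collects the −1/2 constant from each of the T per-step KL divergences.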

Conditional DRAW is an extension of vanilla DRAW analogous to the CVAE described above. The input of

the encoder and decoder of conditional DRAW is the same as vanilla DRAW, except with additional information

(e.g. the label of the image).

3 Combining generative adversarial networks with VAEs

In this section, we investigate how we can combine various variants of VAEs reviewed above with another

popular generative model: generative adversarial networks (GANs) [6]. In a GAN, a generator and a discriminator

are trained together. The discriminator is trained to distinguish “real” images from “fake” (i.e. generated), while

the generator is trained to generate images that the discriminator will classify as “real.” Abstractly, combining the

GAN framework with VAEs is straightforward: the generator in the GAN framework is the VAE. Below we discuss

the details and our results for various variants of VAEs.

3.1 VAE with GAN

The basic idea is the following. Let D(x) denote the output of the discriminator for an image x; this represents the probability that x is a real image. As in a GAN, we train the generator (which is a VAE here) and the discriminator alternately, by minimizing two losses separately.

The generator loss is formed by adding to the VAE loss an extra term − log D(Dec(z)), which penalizes generated images that are classified as fake. The discriminator loss is − log D(x) − log(1 − D(Dec(z))), the same as in a pure GAN.

Based on ideas in [12], we make the following two modifications to this simplified model:

• Replace the VAE decoder/reconstruction loss E_{z∼Q(·|x)} log p(x | z) with a dissimilarity loss L^{Dis_l}_{llike} based on the lth layer inside the discriminator. That is, we input both the original image x and a generated image Dec(z) into the discriminator, and use the L2 distance between the outputs of the lth layer of the discriminator as the decoder loss (this can be interpreted as maximizing the log likelihood of a Gaussian distribution) instead of the original pixel-based loss. The intuition is that the deeper layers of the discriminator will learn good features of the images, and that using this as the decoder/reconstruction loss will be more informative than using a pixel-based loss. Mathematically, the reconstruction loss is an ℓ2 loss:

$$L^{\mathrm{Dis}_l}_{\mathrm{llike}} = \|f_l(x) - f_l(\tilde{x})\|^2 / 2\sigma^2 + \mathrm{Const},$$

where f_l(x) is the output of the lth layer of the discriminator network for input x, and x̃ is the result of passing x through the encoder and then the decoder.

• Incorporate the classification of the reconstructed image Dec(Enc(x)) by adding − log D(Dec(Enc(x))) and − log(1 − D(Dec(Enc(x)))) to the VAE and discriminator losses respectively. Since the reconstructed image is close to the original image, this will help train the discriminator.

In summary, the generator loss is

$$L_G = L_{KL} + L^{\mathrm{Dis}_l}_{\mathrm{llike}} - \log D(\mathrm{Dec}(z)) - \log D(\mathrm{Dec}(\mathrm{Enc}(x))), \tag{2}$$

where z is generated from the prior p(z) = N(0, I) of the latent variables and L_KL = KL(Q(z | x) ‖ p(z)) is the same as the regularization term in the VAE. The discriminator loss is

$$L_D = -\log D(x) - \log(1 - D(\mathrm{Dec}(z))) - \log(1 - D(\mathrm{Dec}(\mathrm{Enc}(x)))), \tag{3}$$

where z is generated from the prior of the latent variables (N(0, I)).

The structure of the model is shown in Figure 5 [12].

Figure 5: VAE with GAN model from [12]
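To make the roles of the terms in (2) and (3) concrete, here is a small sketch that evaluates both losses from precomputed quantities. It is not the report's implementation: the discriminator outputs, the KL value, and the lth-layer features are passed in as plain numbers and lists, the constant in the dissimilarity loss is dropped, and all function and argument names are chosen here for illustration.

```python
import math


def feature_dissimilarity(f_real, f_recon, sigma=1.0):
    """||f_l(x) - f_l(x_tilde)||^2 / (2 sigma^2), dropping the additive constant."""
    squared = sum((a - b) ** 2 for a, b in zip(f_real, f_recon))
    return squared / (2.0 * sigma ** 2)


def generator_loss(kl, f_real, f_recon, d_prior_sample, d_recon):
    """Loss (2): KL + feature dissimilarity - log D(Dec(z)) - log D(Dec(Enc(x)))."""
    return (
        kl
        + feature_dissimilarity(f_real, f_recon)
        - math.log(d_prior_sample)
        - math.log(d_recon)
    )


def discriminator_loss(d_real, d_prior_sample, d_recon):
    """Loss (3): -log D(x) - log(1 - D(Dec(z))) - log(1 - D(Dec(Enc(x))))."""
    return (
        -math.log(d_real)
        - math.log(1.0 - d_prior_sample)
        - math.log(1.0 - d_recon)
    )


# Toy numbers: D outputs probabilities that an image is real.
loss_g = generator_loss(kl=0.2, f_real=[1.0, 2.0], f_recon=[0.5, 2.5],
                        d_prior_sample=0.3, d_recon=0.4)
loss_d = discriminator_loss(d_real=0.9, d_prior_sample=0.3, d_recon=0.4)
```

In alternating training, one step would lower `loss_g` with respect to the encoder and decoder parameters and the next would lower `loss_d` with respect to the discriminator parameters.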

3.2 CVAE with GAN

One generalization of VAE with GAN is to incorporate side information y, such as a label or part of an image. Our idea is to put a GAN on top of a Conditional VAE. To make full use of the side information y, the structure of the GAN discriminator is also modified. Suppose y is a label with k classes. Then the discriminator D outputs a distribution on k + 1 classes. We want the discriminator to assign the correct label among the k classes to a true example (x, y), but to assign generated images to the extra (k + 1)st class. Formally, define D as a function from the image space to a distribution on k + 1 classes:

$$\sum_{c=1}^{k+1} D_c(x) = 1, \quad D_c(x) \ge 0.$$

We still train the generator and the discriminator alternately. The generator loss is the sum of the loss of a Conditional VAE and the GAN loss, a cross-entropy loss over the output of the discriminator that penalizes generated images for being classified into a wrong class. The mathematical representation is shown below. Recall that the encoder and the decoder of a conditional VAE both take y as an input. The cross-entropy loss takes as input a distribution p on classes and a label y, and outputs − log p(Y = y).

$$
\begin{aligned}
L_G = {} & L_{KL} + L^{\mathrm{Dis}_l}_{\mathrm{llike}} \\
& + \mathrm{CrossEntropy}(D(\mathrm{Dec}(z, y)), y) \\
& + \mathrm{CrossEntropy}(D(\mathrm{Dec}(\mathrm{Enc}(x, y), y)), y),
\end{aligned}
$$

where z is generated from the prior p(z) of the latent variables. L_KL and L^{Dis_l}_{llike} are exactly the same as those in the previous section, except that we also feed in y when generating images from the decoder.

The discriminator loss is a cross-entropy loss over the output of the discriminator that penalizes classifying a real image into a wrong class or classifying a generated image into one of the first k classes. Concretely, the discriminator loss is

$$
\begin{aligned}
L_D = {} & \mathrm{CrossEntropy}(D(x), y) \\
& + \mathrm{CrossEntropy}(D(\mathrm{Dec}(z, y)), y_{fake}) \\
& + \mathrm{CrossEntropy}(D(\mathrm{Dec}(\mathrm{Enc}(x, y), y)), y_{fake}),
\end{aligned}
$$

where z is generated from the prior of the latent variables and y_fake is the label for the extra (k + 1)st class.
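The (k + 1)-class losses can be illustrated with a short sketch. It takes the discriminator's output distributions as plain lists and uses 0-based class indices, so the extra "fake" class has index k; both choices, and the function names, are assumptions made here for illustration rather than details from the report's code.

```python
import math


def cross_entropy(probs, label):
    """-log p(Y = label) for a distribution given as a list of probabilities."""
    return -math.log(probs[label])


def discriminator_loss(d_real, d_prior, d_recon, y, k):
    """Real image should get class y; both generated images should get the fake class."""
    fake = k  # 0-based index of the extra (k + 1)st class
    return (
        cross_entropy(d_real, y)
        + cross_entropy(d_prior, fake)
        + cross_entropy(d_recon, fake)
    )


def generator_adversarial_loss(d_prior, d_recon, y):
    """Adversarial part of L_G: generated images should be classified as class y."""
    return cross_entropy(d_prior, y) + cross_entropy(d_recon, y)


# Toy example with k = 2 real classes plus one fake class.
k = 2
d_real = [0.7, 0.2, 0.1]   # D(x)
d_prior = [0.3, 0.1, 0.6]  # D(Dec(z, y))
d_recon = [0.5, 0.1, 0.4]  # D(Dec(Enc(x, y), y))
loss_d = discriminator_loss(d_real, d_prior, d_recon, y=0, k=k)
loss_g_adv = generator_adversarial_loss(d_prior, d_recon, y=0)
```

The two losses pull the discriminator's outputs on generated images in opposite directions: the discriminator wants mass on the fake class, while the generator wants mass on the conditioning label y.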

3.3 SSL with GAN

The VAE tailored for semi-supervised learning (SSL) can also be combined with a (k + 1)-class GAN in a similar way to the CVAE. Recall that the VAE-SSL loss is divided into two parts: a loss for labeled data and a loss for unlabeled data. For labeled data, the method of incorporating a GAN into VAE-SSL is exactly the same as for the CVAE; refer to the previous section for details. For unlabeled data, recall that the VAE loss is

$$\sum_{y} Q(y \mid x)\,(-L(x, y)) + H(Q(y \mid x)) =: -U(x),$$

where a categorical distribution Q(y | x) is introduced. We mimic the idea that when a label y is needed as an input, the loss is averaged over Q(y | x). Mathematically:

$$
\begin{aligned}
L_{G,\mathrm{unobserved}} = L_{KL} - H(Q(y \mid x)) + \sum_{y} Q(y \mid x) \Big[ & L^{\mathrm{Dis}_l}_{\mathrm{llike}} \\
& + \mathrm{CrossEntropy}(D(\mathrm{Dec}(z, y)), y) \\
& + \mathrm{CrossEntropy}(D(\mathrm{Dec}(\mathrm{Enc}(x, y), y)), y) \Big],
\end{aligned}
$$

where we emphasize that L^{Dis_l}_{llike} also depends on y and needs to be averaged, and that the entropy term H(Q(y | x)) is introduced to regularize Q as before. The discriminator loss is again averaged over Q(y | x):

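$$
\begin{aligned}
L_D = \sum_{y} Q(y \mid x) \Big[ & \mathrm{CrossEntropy}(D(x), y) \\
& + \mathrm{CrossEntropy}(D(\mathrm{Dec}(z, y)), y_{fake}) \\
& + \mathrm{CrossEntropy}(D(\mathrm{Dec}(\mathrm{Enc}(x, y), y)), y_{fake}) \Big].
\end{aligned}
$$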

3.4 DRAW with GAN

Unlike the VAE, which generates only one image, DRAW generates a sequence of images. Thus, there are two potential ways to combine DRAW with a GAN: we could add a discriminator either on top of all the intermediate canvases or only on top of the final output. In this project, we consider only the latter. If we treat DRAW as a black-box generator like the vanilla VAE, the structure is exactly the same as VAE-GAN. The two losses that we minimize alternately take the same forms as (2) and (3), but instead use the decoder and the encoder from DRAW.

4 Experiments and Applications

In the project, we implemented all the models mentioned above and studied their performance. The code

will be made available at https://github.com/Jianbo-Lab/vae-gan. In this section, we discuss details about the

implementation, investigate applications of various models and compare the performances of various models. All

models were trained with mini-batch stochastic gradient descent (SGD) with a mini-batch size of 100. All weights

were initialized from a zero-centered Normal distribution. Batch-normalization [16] was added to all fully connected

and convolutional layers except the output layers. The Adam [9] optimizer with default hyperparameters in TensorFlow was used for optimization. For the VAEs without GANs, we used a learning rate of 0.001, while for all VAEs with GANs, we used a learning rate of 0.0001. Due to the page limit, we omit the detailed description of our implementations of VAE-GAN and refer interested readers to our GitHub repository for details.

4.1 Tools

We implemented all the models in TensorFlow. We used a GeForce GTX 770 GPU through Amazon for training.

Git and GitHub were also very helpful for collaboration and managing our codebase.

4.2 Dataset

We used the MNIST handwritten digits dataset [13] through TensorFlow as well as the Street View House Numbers (SVHN) dataset [15]. The MNIST dataset consists of a training set with 55,000 labeled handwritten digits, a validation set with 5,000 examples, and a test set with 10,000 examples. SVHN is a real-world image dataset similar in flavor to MNIST (e.g., the images are of small cropped digits), but it incorporates an order of magnitude more labeled data (over 600,000 digit images) and comes from a significantly harder, unsolved, real-world problem (recognizing digits and numbers in natural scene images) [15]. We treated each MNIST image as a vector of length 28 × 28 with pixel values in [0, 1], and each SVHN image as a vector of length 32 × 32 × 3 with RGB values in [0, 1]. No other data cleaning was needed.

4.3 CVAE vs. CVAE-GAN

This experiment compares CVAE with CVAE-GAN in terms of image generation. We feed a label (1 to 10) and a z drawn at random from the standard normal N(0, I) into a trained CVAE decoder, which then generates the handwritten digit corresponding to the label. Figure 6 shows ten generated samples of each digit after training on MNIST and SVHN using CVAE. Figure 7 shows ten generated samples of each digit after training on MNIST and SVHN using CVAE with GAN.

The networks of CVAE and CVAE-GAN are based on the structures used for DCGAN [1], with slight modifications. In particular, we replace pooling by strided convolutions (discriminator) and fractional-strided convolutions (decoder) and remove all unnecessary fully connected layers (except at the outputs). Below we briefly describe the network structure of the encoder, the decoder and the discriminator (for MNIST), following the notation used in class [4]. The discriminator network is used only in CVAE-GAN, while the encoder and decoder networks are used in both CVAE and CVAE-GAN. We use the same network structure for SVHN, except that the number of neurons at each layer increases by a factor of 8/7.

• Encoder:

Input x : [28 × 28] → conv5-28 → conv5-56 → conv5-108, combined with input y : [10], then → FC-50 → µ and → FC-50 → Σ

• Decoder:

Input z concat with y : [50 + 10] → FC-7*7*128 → reshape[7 × 7 × 128] → deconv5-64 → deconv5-32 → output x : [28 × 28]

Here deconv denotes what the literature also calls fractionally-strided convolutions [1].

• Discriminator:

Input x : [28 × 28] → conv3-16 → conv3-32 → conv3-64 → FC-512 → FC-500 → Softmax

The lth-layer output f_l(x) is read from an intermediate layer of this network.

Figure 6: Purely generated images for MNIST (left) and SVHN (right) by CVAE. Since the CVAE incorporates

labels into generation, we are able to generate a particular digit we choose.

Comparing the results of CVAE and CVAE-GAN, we see that CVAE-GAN generates less blurry MNIST images than CVAE. For the digit “2,” however, CVAE-GAN consistently generates strange, highly similar images. For the SVHN dataset, most images generated by CVAE-GAN are less blurry, but some are odd, like the digit “7” in the fourth column. We leave fixing these problems to future work.

4.4 CVAE applications

When implementing the CVAE model, we also investigated various applications of CVAE. In particular, we

show here how we use a simple fully-connected CVAE for image completion and style transfer.

4.4.1 Image completion

The side information y need not be a label. Here, we considered y as being the left half of an image. At

generation time, we can input a new half-image into the decoder, and it will attempt to complete the image based

on what it has learned from the training data. Our demonstration appears in Figure 8.
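Preparing the side information for image completion might look like the sketch below. It assumes images are stored as lists of rows and that the CVAE target x is the full flattened image while y is the flattened left half; the report does not state whether x is the full image or only the missing half, so that choice and the function names are assumptions.

```python
def split_halves(image):
    """Split a row-major image (list of rows) into left and right halves."""
    width = len(image[0])
    left = [row[: width // 2] for row in image]
    right = [row[width // 2:] for row in image]
    return left, right


def flatten(rows):
    """Flatten a list of rows into a single vector."""
    return [pixel for row in rows for pixel in row]


def make_cvae_example(image):
    """Return (x, y): x is the full flattened image, y the flattened left half."""
    left, _ = split_halves(image)
    return flatten(image), flatten(left)


# Toy 4 x 4 "image" with pixel values in [0, 1].
image = [[(r * 4 + c) / 15.0 for c in range(4)] for r in range(4)]
x, y = make_cvae_example(image)
```

At generation time, only y would be built from a new half-image and passed to the decoder together with a fresh z ∼ N(0, I).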

4.4.2 Style transfer

The CVAE can be used to transfer style from one image to the generated output. To do so, we input the base image (whose style we want to copy) into the encoder and obtain its latent “code” z. We then input this sampled z, which captures the features and style of the base image, along with an arbitrary label y into the decoder. The output is then a digit with label y, but with the style of the original image. As shown in Figure 9, we are able to copy boldness and slant in the case of MNIST, as well as font style, font color, and background color in the case of SVHN.

Figure 7: Purely generated images for MNIST (left) and SVHN (right) by CVAE-GAN.

Figure 8: Image completion for MNIST (left) and SVHN (right). We take half-images from the test data and input them into the decoder, which attempts to complete the image based on what it has learned from the training data.


Figure 9: Style transfer for MNIST (left) and SVHN (right). We transfer the style of the first column to all digits

0 to 9.
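The style-transfer procedure amounts to encoding once and decoding with each label in turn. The sketch below shows only that control flow: `toy_encode` and `toy_decode` are hypothetical stand-ins for the trained CVAE networks (the toy encoder is deterministic, whereas the real one samples z), and `style_transfer` is a name chosen here.

```python
def one_hot(label, num_classes=10):
    """One-hot encoding of a class label."""
    return [1.0 if c == label else 0.0 for c in range(num_classes)]


def style_transfer(base_image, base_label, encode, decode, num_classes=10):
    """Encode the base image once, then decode its code z with every label."""
    z = encode(base_image, one_hot(base_label, num_classes))
    return [decode(z, one_hot(y, num_classes)) for y in range(num_classes)]


def toy_encode(x, y):
    # Hypothetical stand-in: the "style" code is just the mean intensity.
    return [sum(x) / len(x)]


def toy_decode(z, y):
    # Hypothetical stand-in: paint the style value at the label's position.
    return [z[0] * yi for yi in y]


outputs = style_transfer([0.2, 0.4, 0.6], base_label=3,
                         encode=toy_encode, decode=toy_decode)
```

Each entry of `outputs` plays the role of one cell in a row of Figure 9: the same code z combined with a different label.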

4.5 Semi-supervised learning

This experiment compares the performance of VAE and that of VAE-GAN in semi-supervised learning. We

followed the “M2” model of [10] depicted in Figure 3.

We took the 55000 examples in the MNIST dataset and retained only 1000 or 600 of the labels (so that more

than 98% of the data is unlabeled!), with each label equally represented.
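One way to build such a class-balanced labeled subset is sketched below. The function name, the seeding, and the synthetic label list are illustrative assumptions; the report does not describe how the retained labels were chosen beyond requiring equal representation.

```python
import random
from collections import defaultdict


def split_labeled_unlabeled(labels, num_labeled, num_classes=10, seed=0):
    """Keep num_labeled labels, equally many per class; the rest become unlabeled."""
    per_class = num_labeled // num_classes
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    rng = random.Random(seed)
    labeled = []
    for c in range(num_classes):
        labeled.extend(rng.sample(by_class[c], per_class))
    labeled_set = set(labeled)
    unlabeled = [i for i in range(len(labels)) if i not in labeled_set]
    return sorted(labeled), unlabeled


# Synthetic stand-in for the 55000 MNIST training labels.
labels = [i % 10 for i in range(55000)]
labeled_idx, unlabeled_idx = split_labeled_unlabeled(labels, num_labeled=1000)
unlabeled_fraction = len(unlabeled_idx) / len(labels)
```

With 1000 retained labels, each of the ten classes contributes 100 labeled examples and the remaining 54000 examples are treated as unlabeled.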

We considered both a fully-connected architecture and a convolutional architecture. For the discriminator of

GAN, we still use the same structure as that of CVAE-GAN. Interested readers can refer to the previous section

for details. Below we describe the network structures of the encoder and the decoder.

• Fully connected

– In the fully connected model described below, each hidden layer has batch normalization and softplus

activation.

– Encoder:

Input x: [28 × 28] → FC-1024 → FC-1024 → FC-50 → Σ(x), with a parallel output FC-50 → π(x); h1 denotes a 1024-unit hidden layer on this path

h1 concat with y : [1024 + 10] → FC-1024 → FC-50 → µ(x, y)

– Decoder:

Input z concat with y: [50 + 10] → FC-1024 → FC-1024 → FC-28*28-sigmoid → output x: [28 × 28]

• Convolutional model

– For the convolutional version we replaced the hidden fully-connected layers with 5 × 5 convolutions (in

the encoder) and convolution transposes (“deconvolutions”). Below, all convolutions and deconvolutions

have stride 1. The argument of Conv and Deconv is the number of filters. 2 × 2 max pooling and 2 × 2

upsampling are denoted by ⇓ and ⇑ respectively.

– All layers have batch normalization except the output layers. All fully-connected layers have ReLU activation except the output layers.

Q1 : x, Conv(20), ⇓, Conv(50), ⇓, FC(1024), {FC for Σ, FC-sigmoid for π}

Q2 : (x, y), FC(1024), FC

P : z, FC(500), FC(7 × 7 × 50), ⇑ 2, Deconv(20), ⇑, Deconv(1), concat with y, FC(512), FC-sigmoid

– Encoder:

Input x: [28 × 28] → conv5-20 → ⇓ → conv5-50 → ⇓ → FC-1024 → FC-50 → Σ(x), with a parallel output FC-50 → π(x); h1 denotes the 1024-unit hidden layer on this path

h1 concat with y : [1024 + 10] → FC-1024 → FC-50 → µ(x, y)

– Decoder:

Input z: [50] → FC-500 → FC-7*7*50 → ⇑ → deconv5-20 → ⇑ → deconv5-1, concatenated with input y: [10] → FC-512 → FC-28*28-sigmoid → output x: [28 × 28]

• Convolutional with GAN: see Section 3.3

Table 1 shows the resulting classification error on the test dataset. Currently we are unable to perform as well as the original paper [10]. Some potential reasons are that we use pooling after convolutional layers, that we are not using the most appropriate activation functions in the discriminator and the generator, and that there are fully connected layers in the network. We are currently working on new network structures that replace pooling by strided convolutions (discriminator) and fractional-strided convolutions (decoder), replace ReLU in the discriminator by LeakyReLU, and remove unnecessary fully connected layers (as suggested in [1]).

| Model | 1000 labeled | 600 labeled |
| --- | --- | --- |
| Fully connected | 5.1% | 12.0% |
| Convolutional | 4.8% | 6.2% |
| Convolutional with GAN | 8.1% | 9.2% |
| Kingma et al. [10] | 2.4% | 2.6% |

Table 1: Test error on MNIST (55000 training examples)

4.6 DRAW vs. DRAW-GAN

This experiment compares the performance of DRAW and DRAW-GAN.

• Encoder: a standard LSTM cell. The size of the hidden state is 256.

• Decoder: a standard LSTM cell. The size of the hidden state is 256.

• Discriminator (for DRAW-GAN): we use the same discriminator as in CVAE-GAN:

Input x : [28 × 28] → conv3-16 → conv3-32 → conv3-64 → FC-512 → FC-500 → Softmax

The lth-layer output f_l(x) is read from an intermediate layer of this network.

Due to limited space, we report experimental results only for vanilla DRAW and DRAW-GAN (omitting conditional DRAW results). In addition, replacing the decoder loss with the GAN layer loss did not give satisfactory performance. Thus we used the reconstruction loss on the original image (the L2 or cross-entropy loss between the original image and the reconstructed image).

It is interesting to see how the style of the images generated by DRAW-GAN differs from that of the reconstructed images (Figure 11, right and left respectively). The reconstructed images are very similar to the real images, while the generated images are more winding than the real ones.

We see that simply taking DRAW as a black box and adding a GAN on top of it directly does not produce good

results. Some potential solutions include incorporating an attention mechanism in the GAN and investigating how

to appropriately add a GAN to each image in the sequence generated by DRAW.


Figure 10: Images on the left are reconstructed from the real input images using DRAW. Images on the right are generated from random Gaussian input using DRAW.

Figure 11: Images on the left are reconstructed from the real input images using DRAW-GAN. Images on the right are generated from random Gaussian input using DRAW-GAN.

5 Conclusion and Future Work

Variational auto-encoders and generative adversarial networks are two promising classes of generative models

which have their respective drawbacks. For example, VAEs generate blurry images while GANs are difficult to train

and generate images which lack variety. This work demonstrates several instances of combining various variants

of VAEs with GANs. In particular, we propose CVAE-GAN, SSL-VAE-GAN and DRAW-GAN. We also apply

generative models to the problems of image completion, style transfer and semi-supervised learning. We observe

that CVAE-GAN generates better images than the baseline model CVAE, but SSL-VAE-GAN and DRAW-GAN

fail to achieve expected performance. We propose several potential solutions to these problems and leave them to

future work.

6 Lessons Learned

• TensorFlow, although tricky to learn at first, became quite helpful when building more complicated models.

In particular, the TensorFlow-Slim library allowed us to access standard neural network layers easily so that

we did not have to manage weights and variables manually.

• Some of the models were difficult to train, and batch normalization was critical to reduce sensitivity to

hyperparameters (e.g. learning rate) and to help bring the loss down.

• Pooling layers are not helpful in generative models of images such as VAEs and GANs.

• Use a smaller learning rate (0.0001) to train GANs but use a higher one (0.001) to train VAEs.

7 Team Contributions

• Jianbo Chen (33.3%): implemented VAE framework on which all other implementations are based, improved

convolutional architecture, proposed and improved CVAE-GAN models, implemented SSL-VAE-GAN models,

contributed to poster, wrote report

• Billy Fang (33.3%): implemented CVAE (with digit generation), applied CVAE to image completion and style transfer, implemented SSL, implemented VAE-GAN and CVAE-GAN models, contributed to poster, wrote report

• Cheng Ju (33.3%): implemented and experimented with DRAW, conditional DRAW, and DRAW with GAN; built a general DRAW object with options to construct flexible combinations of DRAW variants (conditional/unconditional and vanilla/GAN); wrote report

References

[1] Alec Radford, Luke Metz, and Soumith Chintala. Unsupervised representation learning with deep convolutional generative adversarial networks. ICLR, 2016.

[2] Junyoung Chung, Kyle Kastner, Laurent Dinh, Kratarth Goel, Aaron C Courville, and Yoshua Bengio. A

recurrent latent variable model for sequential data. In Advances in neural information processing systems,

pages 2980–2988, 2015.

[3] Carl Doersch. Tutorial on variational autoencoders. arXiv preprint arXiv:1606.05908, 2016.

[4] Fei-Fei Li, Andrej Karpathy, and Justin Johnson. Lecture notes of cs231n. http://cs231n.github.io/, 2016.

[5] Ian Goodfellow, Yoshua Bengio, and Aaron Courville. Deep learning. Book in preparation for MIT Press,

2016.

[6] Ian J. Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron

Courville, and Yoshua Bengio. Generative adversarial networks. Advances in Neural Information Processing

Systems, 2014.

[7] Karol Gregor, Ivo Danihelka, Alex Graves, Danilo Jimenez Rezende, and Daan Wierstra. DRAW: A recurrent

neural network for image generation. arXiv preprint arXiv:1502.04623, 2015.

[8] Xianxu Hou, Linlin Shen, Ke Sun, and Guoping Qiu. Deep feature consistent variational autoencoder. arXiv

preprint arXiv:1610.00291, 2016.

[9] Diederik P Kingma and Jimmy Lei Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.

[10] Diederik P. Kingma, Danilo Jimenez Rezende, Shakir Mohamed, and Max Welling. Semi-supervised learning

with deep generative models. CoRR, abs/1406.5298, 2014.

[11] Diederik P Kingma and Max Welling. Auto-encoding variational bayes. arXiv preprint arXiv:1312.6114, 2013.

[12] Anders Boesen Lindbo Larsen, Søren Kaae Sønderby, and Ole Winther. Autoencoding beyond pixels using a

learned similarity metric. arXiv preprint arXiv:1512.09300, 2015.

[13] Yann LeCun, Léon Bottou, Yoshua Bengio, and Patrick Haffner. Gradient-based learning applied to document

recognition. Proceedings of the IEEE, 86(11):2278–2324, 1998.

[14] Marcus Liwicki and Horst Bunke. Iam-ondb-an on-line english sentence database acquired from handwritten

text on a whiteboard. In Eighth International Conference on Document Analysis and Recognition (ICDAR’05),

pages 956–961. IEEE.

[15] Yuval Netzer, Tao Wang, Adam Coates, Alessandro Bissacco, Bo Wu, and Andrew Y Ng. Reading digits in

natural images with unsupervised feature learning. 2011.

[16] Sergey Ioffe and Christian Szegedy. Batch normalization: Accelerating deep network training by reducing internal covariate shift. Journal of Machine Learning Research, 2016.

[17] Kihyuk Sohn, Honglak Lee, and Xinchen Yan. Learning structured output representation using deep conditional

generative models. In Advances in Neural Information Processing Systems, pages 3483–3491, 2015.

[18] Lucas Theis, Aäron van den Oord, and Matthias Bethge. A note on the evaluation of generative models. arXiv

preprint arXiv:1511.01844, 2015.
