James A. Edwards, Uzi Vishkin, University of Maryland


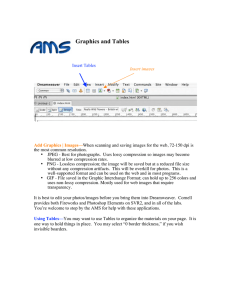

Introduction

Lossless data compression is a common tool for making better use of memory (e.g., disk space) and network bandwidth.

Burrows-Wheeler (BW) compression, e.g., bzip2, has a relatively high compression ratio (pro) but is slower (con). Snappy (Google) has lower compression ratios but is fast. Example: for MPI on large machines, speed is critical.

Our motivation: fast compression with a high compression ratio.

Unexpected: prior work unknown to us made the empirical follow-up … stronger.

Assumption throughout: a fixed, constant-size alphabet.

State of the field

Irregular algorithms are prevalent in the CS curriculum and in daily work (open-ended problems and programs). Yet they have very limited support on today's parallel hardware, and even more limited support with strong scaling. Low hardware support for irregular parallel code leads software developers to limit themselves to regular algorithms, and hardware benchmarks in turn optimize hardware for regular code … Parallel data compression is therefore of general interest: it is an undeniable application representing a big, underrepresented “application crowd”.

“Truly Parallel” BW compression

Existing parallel approach: break the input into blocks and compress the blocks in parallel. Practical drawback: good compression and speed only with large inputs. Theoretical drawback: it is not really parallel.

Truly parallel: compress the entire input using a parallel algorithm. This works for both large and small inputs and can be combined with the block-based approach.

Applications of small inputs: faster decompression and greater compression allow better use of main memory [ISCA05] and cache [ISCA12]. In warehouse-scale computers, the bandwidth between different pairs of nodes can differ enormously; for MPI and MapReduce, low bandwidth between pairs of nodes is debilitating [HP 5th ed.] (i.e., Snappy was a solution).

Attempts at truly parallel BW compression

A 2011 survey paper [Eirola] asserts that parallelizing BW compression could hardly work on GPGPUs, and that decompression would fall even further behind.
Portions require “very random memory accessing”, and “…it seems unlikely that efficient Huffman-tree GPGPU algorithms will be possible.”

The best GPGPU result is even more painful. In 2012, Patel et al. concurrently attempted to develop parallel code for BW compression on GPUs, but their best result was a 2.8x slowdown. Patel separately reported a 1.2x speedup for decompression (hence it is not referenced in the SPAA13 version).

Stages of BW compression and decompression

Compression: S → Block-Sorting Transform (BST) → SBST → Move-to-Front (MTF) encoding → SMTF → Huffman encoding → SBW

Decompression: SBW → Huffman decoding → SMTF → MTF decoding → SBST → Inverse Block-Sorting Transform (IBST) → S

Inverse Block-Sorting Transform

Serial algorithm:

1. Stably sort the characters of SBST; T[i] is the position of SBST[i] in the sorted order. The map i → T[i] forms a ring.
2. Starting with $, traverse the ring to recover S.

| i | 0 | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|---|
| SBST[i] | a | n | n | b | $ | a | a |
| T[i] | 1 | 5 | 6 | 4 | 0 | 2 | 3 |

Parallel algorithm (linked ring):

1. Use parallel integer sorting to find T[i].
2. Use parallel list ranking to traverse the ring.

Both steps require O(log n) time and O(n) work. On current parallel HW, list ranking gets you – why we chose this step.

Starting at $ (i = 4), the ring is 4 → 0 → 1 → 5 → 2 → 6 → 3, and it is broken after i = 3 (END):

| i | 4 | 0 | 1 | 5 | 2 | 6 | 3 (END) |
|---|---|---|---|---|---|---|---|
| rank[i] | 6 | 5 | 4 | 3 | 2 | 1 | 0 |
| SBST[i] | $ | a | n | a | n | a | b |

Reading the SBST[i] row from right to left gives S = banana$; equivalently, S[rank[i]] = SBST[i].

Conclusion and where to go from here?

Despite being originally described as a serial algorithm, BW compression can be accomplished by a parallel algorithm. Material for a few good exercises on prefix sums and list ranking? For a more detailed description of our algorithm, see reference [4] in our brief announcement.

This algorithm demonstrates the importance of parallel primitives such as prefix sums and list ranking. It requires support for fine-grained, irregular parallelism, and sometimes also strong scaling, which are issues on all current parallel hardware. Indeed, recent work from UC Davis (2012) on parallel BW compression on GPUs, which we missed, taxed about 20% of our originality (same Step 2), yet it failed to achieve any speedup on compression.
Instead, it was 2.8x slower. For decompression, it achieved a 1.2x speedup. On the UMD experimental Explicit Multi-Threading (XMT) architecture, we achieved speedups of 25x for compression and 13x for decompression [5]. On balance, the UC Davis paper is a huge gift: relative to the GPU, XMT is 70x faster for compression (a 25x speedup versus a 2.8x slowdown) and 11x faster for decompression (13x versus 1.2x).

Where to go from here? The remaining options for the community are to figure out how to do it on current hardware; or to bash PRAM; or to take the alternative we pursued: develop a parallel algorithm that will work well on buildable hardware designed to support the best-established parallel algorithmic theory.

Final thought, connecting to several other SPAA presentations: this is an example where MPI on large systems works in tandem with PRAM-like support on small systems. Within a node of a large system, use PRAM compression and decompression algorithms for inter-node MPI messages. This is a counter-argument to an often unstated position: that we need the same parallel programming model at both very large and small scales.

References

[4] J. A. Edwards and U. Vishkin. Parallel algorithms for Burrows-Wheeler compression and decompression. Technical report, University of Maryland, 2012. http://hdl.handle.net/1903/13299.

[5] J. A. Edwards and U. Vishkin. Empirical speedup study of truly parallel data compression. Technical report, University of Maryland, 2013. http://hdl.handle.net/1903/13890.

Block-Sorting Transform (BST)

Goal: bring occurrences of the same character together.

Serial algorithm:

1. Form a list of all rotations of the input string.
2. Sort the list lexicographically.
3. Take the last column of the sorted list as the output.

Because the input ends with the unique end marker $, sorting the rotations is equivalent to sorting the suffixes of the input string. For the input banana$:

| List of rotations | Sorted rotations | Last column |
|---|---|---|
| banana$ | $banana | a |
| anana$b | a$banan | n |
| nana$ba | ana$ban | n |
| ana$ban | anana$b | b |
| na$bana | banana$ | $ |
| a$banan | na$bana | a |
| $banana | nana$ba | a |

Output of BST: annb$aa

Parallel algorithm:

1. Find the suffix tree of S (O(log² n) time, O(n) work).
2. Find the suffix array SA of S by traversing the suffix tree (Euler tour technique: O(log n) time, O(n) work).
3. Permute the characters according to SA, taking SBST[i] = S[SA[i] − 1], where index −1 wraps around to n − 1 (O(1) time, O(n) work).

| i | 0 | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|---|
| S[i] | b | a | n | a | n | a | $ |
| SA[i] | 6 | 5 | 3 | 1 | 0 | 4 | 2 |
| S[SA[i] − 1] | a | n | n | b | $ | a | a |

Move-to-Front (MTF) encoding

Goal: assign low codes to repeated characters.

Serial algorithm: maintain a list of the characters in the order they were last seen; output each character's position in the list, then move that character to the front.

Parallel algorithm: use prefix sums to compute the MTF list for each character (O(log n) time, O(n) work). The associative binary operator is X + Y = Y concat (X − Y), where X − Y is X with the characters of Y removed. The initial list ($, a, b, n) serves as an assumed prefix, and since the alphabet has constant size, each application of the operator takes O(1) time.

| i | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| SBST[i] | a | n | n | b |
| MTF list L_i | $, a, b, n | a, $, b, n | n, a, $, b | n, a, $, b |
| SMTF[i] | 1 | 3 | 0 | 3 |

Here L_i is the list in effect when SBST[i] is encoded, and SMTF[i] is the position of SBST[i] in L_i. For example, L_1 = L_0 + (a) = (a) concat (($, a, b, n) − (a)) = (a, $, b, n).

Move-to-Front (MTF) decoding

Same algorithm as encoding, with the following changes.

Serial: the MTF lists are used in reverse: each code is looked up as a position in the current list to recover the character.

Parallel: instead of combining MTF lists, combine permutation functions. For SMTF = 1, 3, …, code 1 corresponds to the permutation 0 1 2 3 → 1 0 2 3 and code 3 to 0 1 2 3 → 3 0 1 2; combining them gives 0 1 2 3 → 3 1 0 2. Entry j of a permutation is the position in the previous list of the character now at position j, so applying the combined permutation to the initial list ($, a, b, n) gives (n, a, $, b), the list after decoding a and n.

Huffman Encoding

Goal: assign shorter bit strings to more frequent MTF codes. The parallelization of this step is already well known.

Serial algorithm:

1. Count the frequencies of the characters.
2. Build a Huffman table based on the frequencies.
3. Encode the characters using the table.

Parallel algorithm:

1. Use integer sorting to count frequencies (O(log n) time, O(n) work).
2. Build the Huffman table using the standard, heap-based serial algorithm (O(1) time and work, since the alphabet has constant size).
3. Encode the characters:
   (a) Compute the prefix sums of the code lengths to determine where in the output to write the code for each character (O(log n) time, O(n) work).
   (b) Write the output (O(1) time, O(n) work).

Huffman Decoding

Serial algorithm: read through the compressed data, decoding one character at a time.

Parallel algorithm: partition the input and apply the serial algorithm to each partition. Problem: decoding cannot start in the middle of a character's codeword. Solution: identify a set of valid starting bits using prefix sums (O(log n) time, O(n) work).

[Figure: example compressed bit string 1101000010]

How to identify valid starting positions: divide the input string into partitions of length l, the length of the longest Huffman codeword.

1. Assign a processor to each bit in the input. Processor i decodes the compressed input starting at index i and stops when it crosses a partition boundary, recording the index where it stopped (O(1) time, since l is bounded by a constant for a fixed alphabet; O(n) work). Now each partition has l pointers entering it, all of which originate from the immediately preceding partition.
2. Use prefix sums to merge (compose) consecutive pointers (O(log n) time, O(n) work). Now each partition still has l pointers entering it, but they all originate from the first partition.
3. For each bit in the input, mark it as a valid starting position if and only if the pointer that points to that bit originates from the first bit (index 0) of the first partition (O(1) time, O(n) work).

Lossless data compression on GPGPU architectures (2011)

Inverse BST: “Problems would possibly arise from poor GPU performance of the very random memory accessing caused by the scattering of characters throughout the string.”

MTF decoding: “Speeding up decoding on GPGPU platforms might be more challenging since the character lookup is already constant time on serial implementations, and starting decoding from multiple places is difficult since the state of the stack is not known at the other places.”

Huffman decoding: “Here again, decompression is harder. This is due to the fact that the decoder doesn’t know where one codeword ends and another begins before it has decoded the whole prior input.” “As for the codeword tables for the VLE, it seems unlikely that efficient Huffman-tree GPGPU algorithms will be possible.”
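To make the Block-Sorting Transform concrete, here is a minimal Python sketch. It is an illustration under assumptions, not the authors' implementation. The input is assumed to end with a unique `$` that sorts before all other characters. The suffix array is built by naively sorting suffix strings rather than through the suffix tree and Euler tour, so the sketch shows *what* the parallel algorithm computes, not *how*. The function names are hypothetical.

```python
def suffix_array(s: str) -> list[int]:
    """Start positions of the suffixes of s, in lexicographic order.

    Naive construction by sorting suffix strings; a stand-in for the
    suffix-tree + Euler-tour steps, which compute the same array in parallel.
    """
    return sorted(range(len(s)), key=lambda i: s[i:])


def bst(s: str) -> str:
    """BST output: the character preceding each sorted suffix.

    For i == 0, s[i - 1] is s[-1] in Python, i.e. it wraps to the last
    character ($), matching the rotation-based definition.
    """
    return "".join(s[i - 1] for i in suffix_array(s))


def bst_by_rotations(s: str) -> str:
    """Reference version: sort all rotations and take the last column."""
    rotations = sorted(s[i:] + s[:i] for i in range(len(s)))
    return "".join(r[-1] for r in rotations)


print(suffix_array("banana$"))  # [6, 5, 3, 1, 0, 4, 2]
print(bst("banana$"))           # annb$aa
```

Both versions agree whenever the end marker is unique, which is the equivalence between sorting rotations and sorting suffixes noted on the BST slide.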
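The inverse transform, including its list-ranking step, can be sketched in Python as follows. This hedged illustration uses hypothetical function names. A stable `sorted` stands in for parallel integer sorting, and the ring is ranked with Wyllie-style pointer jumping, simulated as synchronous rounds. Pointer jumping needs O(log n) rounds but performs O(n log n) work, so it does not meet the O(n) work bound of an optimal list-ranking algorithm.

```python
def lf_mapping(t: str) -> list[int]:
    """T[i] = position of t[i] in the *stable* sorted order of t.

    Python's sorted() is stable, so equal characters keep their original
    relative order; the ring traversal relies on this.
    """
    order = sorted(range(len(t)), key=lambda i: t[i])
    T = [0] * len(t)
    for pos, i in enumerate(order):
        T[i] = pos
    return T


def inverse_bst(t: str, end_marker: str = "$") -> str:
    """Recover S from SBST = t by list ranking over the ring i -> T[i]."""
    n = len(t)
    T = lf_mapping(t)
    start = t.index(end_marker)   # the traversal starts at $
    last = T.index(start)         # node whose successor is `start`: break the ring here
    nxt = T[:]
    nxt[last] = last              # the tail points to itself
    rank = [0 if i == last else 1 for i in range(n)]
    # Pointer jumping: after round k each pointer spans up to 2**k links,
    # so O(log n) synchronous rounds suffice.
    while any(p != last for p in nxt):
        rank = [rank[i] + rank[nxt[i]] for i in range(n)]  # reads the old nxt
        nxt = [nxt[nxt[i]] for i in range(n)]
    # rank[i] is the distance from i to the tail, and S[rank[i]] = t[i].
    s = [""] * n
    for i in range(n):
        s[rank[i]] = t[i]
    return "".join(s)


print(lf_mapping("annb$aa"))   # [1, 5, 6, 4, 0, 2, 3]
print(inverse_bst("annb$aa"))  # banana$
```

On the slide's example, the final ranks are exactly the rank[i] row of the list-ranking table (6, 5, 4, 3, 2, 1, 0 along the ring starting at $).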
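The prefix-sum formulation of MTF encoding from the slide above can be tried out with the following Python sketch. It assumes the initial list is passed as a string (here `$abn`). For brevity, it uses a Hillis-Steele scan simulated as synchronous rounds; that scan does O(n log n) work, so a work-efficient scan would be needed to reach the stated O(n) bound. `combine`, `scan`, `mtf_encode`, and `mtf_encode_serial` are hypothetical names, and the serial version is included only as a cross-check.

```python
def combine(x: tuple, y: tuple) -> tuple:
    """X + Y = Y concat (X - Y).

    Y holds the more recently seen characters, so they come first; X - Y keeps
    X's characters that do not occur in Y, in X's order. This is associative.
    """
    return y + tuple(c for c in x if c not in y)


def scan(items: list, op) -> list:
    """Inclusive prefix scan in ceil(log2 n) synchronous rounds (Hillis-Steele).

    Only associativity of `op` is required, not commutativity.
    """
    a = list(items)
    d = 1
    while d < len(a):
        a = [op(a[i - d], a[i]) if i >= d else a[i] for i in range(len(a))]
        d *= 2
    return a


def mtf_encode(t: str, alphabet: str) -> list[int]:
    """MTF encoding via prefix sums; `alphabet` is the initial list L0."""
    # Element 0 is the assumed prefix (the initial list); element i + 1 is t[i].
    lists = scan([tuple(alphabet)] + [(c,) for c in t], combine)
    # lists[i] is L_i, the MTF list in effect when t[i] is encoded.
    return [lists[i].index(c) for i, c in enumerate(t)]


def mtf_encode_serial(t: str, alphabet: str) -> list[int]:
    """Reference serial MTF: output the position, then move to front."""
    lst, out = list(alphabet), []
    for c in t:
        k = lst.index(c)
        out.append(k)
        lst.insert(0, lst.pop(k))
    return out


print(mtf_encode("annb$aa", "$abn"))  # [1, 3, 0, 3, 3, 3, 0]
```

The first four codes (1, 3, 0, 3) match the SMTF row of the slide's table. Because every list has at most alphabet-size entries, each `combine` call is constant time under the fixed-alphabet assumption.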
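MTF decoding by combining permutation functions can be sketched the same way. In this hypothetical Python sketch, a permutation p is a tuple with new_list[j] = old_list[p[j]], which reproduces the slide's example (codes 1 and 3 combine to 3 1 0 2). The scan is again a Hillis-Steele scan chosen for brevity, and all names are illustrative.

```python
def mtf_perm(k: int, sigma: int) -> tuple:
    """Permutation for MTF code k: new_list[j] = old_list[p[j]]."""
    return (k,) + tuple(j for j in range(sigma) if j != k)


def compose(p: tuple, q: tuple) -> tuple:
    """Apply p, then q: the result r satisfies r[j] = p[q[j]]."""
    return tuple(p[j] for j in q)


def scan(items: list, op) -> list:
    """Inclusive prefix scan in ceil(log2 n) synchronous rounds (Hillis-Steele)."""
    a = list(items)
    d = 1
    while d < len(a):
        a = [op(a[i - d], a[i]) if i >= d else a[i] for i in range(len(a))]
        d *= 2
    return a


def mtf_decode(codes: list[int], alphabet: str) -> str:
    """MTF decoding via prefix sums over permutations; `alphabet` is L0."""
    sigma = len(alphabet)
    identity = tuple(range(sigma))
    # perms[i] maps positions of L_i back to positions of L0,
    # i.e. L_i[j] = alphabet[perms[i][j]].
    perms = scan([identity] + [mtf_perm(k, sigma) for k in codes], compose)
    # Code k selects position k of L_i.
    return "".join(alphabet[perms[i][k]] for i, k in enumerate(codes))


print(compose(mtf_perm(1, 4), mtf_perm(3, 4)))    # (3, 1, 0, 2)
print(mtf_decode([1, 3, 0, 3, 3, 3, 0], "$abn"))  # annb$aa
```

Composing permutations avoids materializing every intermediate list. Each permutation has alphabet-size entries, so `compose` is constant time under the fixed-alphabet assumption.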
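Step 3 of parallel Huffman encoding can be sketched in Python as follows, under stated assumptions. `collections.Counter` stands in for counting by integer sorting. Ties in the heap are broken by insertion order; other tie-breaking rules give different but equally optimal codes. The write loop runs serially here, although its iterations are independent. The names `huffman_table` and `huffman_encode` are hypothetical.

```python
import heapq
from collections import Counter
from itertools import accumulate


def huffman_table(freq: dict) -> dict:
    """Standard heap-based Huffman construction (serial).

    With a constant-size alphabet, this loop runs a constant number of times.
    """
    # Heap entries: (weight, unique tie-breaker, {symbol: partial code}).
    heap = [(w, i, {sym: ""}) for i, (sym, w) in enumerate(sorted(freq.items()))]
    heapq.heapify(heap)
    if len(heap) <= 1:  # zero or one distinct symbol: give it a 1-bit code
        return {sym: "0" for _, _, d in heap for sym in d}
    tie = len(heap)
    while len(heap) > 1:
        w1, _, a = heapq.heappop(heap)
        w2, _, b = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in a.items()}
        merged.update({s: "1" + c for s, c in b.items()})
        heapq.heappush(heap, (w1 + w2, tie, merged))
        tie += 1
    return heap[0][2]


def huffman_encode(symbols: list) -> tuple[str, dict]:
    # Steps 1-2: count frequencies (Counter as a stand-in), build the table.
    table = huffman_table(Counter(symbols))
    # Step 3(a): exclusive prefix sums of the code lengths = output offsets.
    lengths = [len(table[s]) for s in symbols]
    offsets = [0] + list(accumulate(lengths))[:-1]
    # Step 3(b): every symbol writes its own bits at its offset; the
    # iterations are independent, so one processor per symbol could run them.
    out = [""] * sum(lengths)
    for s, off in zip(symbols, offsets):
        for j, bit in enumerate(table[s]):
            out[off + j] = bit
    return "".join(out), table


bits, table = huffman_encode([1, 3, 0, 3, 3, 3, 0])
print(table)  # {1: '00', 0: '01', 3: '1'}
print(bits)   # 0010111101
```

The prefix sums are what make step 3(b) parallel: once every symbol knows its offset, no symbol has to wait for the codes written before it.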
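Here is a Python sketch of the three-step search for valid starting positions, followed by independent per-partition decoding. It is an illustration under assumptions. The prefix code is a hypothetical dictionary from codewords to symbols. Pointers are stored as offsets into the next partition, with `None` for chains that fail or run off the end of the input. Step 2 uses a Hillis-Steele scan under pointer composition rather than a work-efficient prefix sum. All function names are hypothetical.

```python
def scan(items: list, op) -> list:
    """Inclusive prefix scan in ceil(log2 n) synchronous rounds (Hillis-Steele)."""
    a = list(items)
    d = 1
    while d < len(a):
        a = [op(a[i - d], a[i]) if i >= d else a[i] for i in range(len(a))]
        d *= 2
    return a


def codeword_length(bits: str, k: int, decode_map: dict, l: int):
    """Length of the codeword that starts at bit k, or None if none fits."""
    for length in range(1, l + 1):
        if k + length > len(bits):
            return None
        if bits[k:k + length] in decode_map:
            return length
    return None


def landing(bits: str, i: int, decode_map: dict, l: int):
    """Step 1: decode from bit i until crossing the next partition boundary.

    Returns the stopping index as an offset into the next partition, or None
    if decoding fails or runs off the end of the input.
    """
    boundary = (i // l + 1) * l
    k = i
    while k < boundary:
        length = codeword_length(bits, k, decode_map, l)
        if length is None:
            return None
        k += length
    return k - boundary if k < len(bits) else None


def then(f: tuple, g: tuple) -> tuple:
    """Merge consecutive pointers: follow f, then g (None propagates)."""
    return tuple(None if x is None else g[x] for x in f)


def valid_starts(bits: str, decode_map: dict) -> list:
    """First valid codeword start in each partition (None if there is none)."""
    l = max(len(c) for c in decode_map)  # partition length = longest codeword
    m = -(-len(bits) // l)               # number of partitions (ceiling)
    # Step 1: l pointers leave every partition except the last one.
    f = [tuple(landing(bits, p * l + o, decode_map, l) for o in range(l))
         for p in range(m - 1)]
    # Step 2: prefix scan under pointer composition. F[p][o] is where the
    # pointer chain from bit o of partition 0 enters partition p + 1.
    F = scan(f, then)
    # Step 3: only the chain that starts at bit 0 marks valid positions.
    return [0] + [None if Fp[0] is None else (p + 1) * l + Fp[0]
                  for p, Fp in enumerate(F)]


def parallel_decode(bits: str, decode_map: dict) -> list:
    l = max(len(c) for c in decode_map)
    out = []
    # Each partition is decoded independently from its valid start; a codeword
    # that straddles a boundary belongs to the partition it starts in.
    for p, start in enumerate(valid_starts(bits, decode_map)):
        end = min((p + 1) * l, len(bits))
        k = start
        while k is not None and k < end:
            length = codeword_length(bits, k, decode_map, l)
            out.append(decode_map[bits[k:k + length]])
            k += length
    return out


decode_map = {"00": 1, "01": 0, "1": 3}  # hypothetical prefix code
print(valid_starts("0010111101", decode_map))     # [0, 2, 5, 6, 8]
print(parallel_decode("0010111101", decode_map))  # [1, 3, 0, 3, 3, 3, 0]
```

Because every codeword is at most l bits long, each pointer from partition p lands inside partition p + 1, which is why composing pointers partition by partition is enough to trace the true codeword boundaries.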