server We have 1000 random times in the last year where...

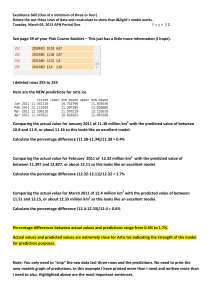

A UW database technician has brought you the following dataset, `server`, and explanation:

We have 1000 random times in the last year where we recorded the usage level on our server, and for each measurement we found the response time the server was taking per request at that time. We know that as the usage rises the response time for the server is longer, but we need to know more exactly what that relationship is. A few months ago we predicted badly and the server actually crashed for a few days. We don't want that to happen again.

We don't want to read a report. We just want:

1) A prediction equation that tells us the response time based on the usage level.
2) Predictions specifically for when the usage level is:
   a. At 200
   b. At 1000
   c. At 5000
3) A rule for how much we can expect the response time to climb per usage increase.
4) The code you used to analyze the data.
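One possible way to approach the four requests is a simple least-squares line, sketched below in plain Python. This is only an illustration under stated assumptions: the actual `server` data is not shown here, so the sketch generates synthetic stand-in values. The names `usage`, `response`, `fit_line`, and `predict` are hypothetical, and so are the numbers used to generate the stand-in data. It also assumes the relationship is roughly linear, which should be checked with a scatter plot of the real data before trusting the fit. If the plot curves, a transformation such as a logarithm of one or both variables may be more appropriate.

```python
import random


def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict(intercept, slope, usage_level):
    """Predicted response time at a given usage level."""
    return intercept + slope * usage_level


# Synthetic stand-in data, NOT the technician's measurements.
# Replace these two lists with the usage and response columns of `server`.
random.seed(0)
usage = [random.uniform(0, 6000) for _ in range(1000)]
response = [2.0 + 0.01 * u + random.gauss(0, 1.0) for u in usage]

intercept, slope = fit_line(usage, response)

# 1) Prediction equation
print(f"response = {intercept:.3f} + {slope:.5f} * usage")

# 2) Predictions at the requested usage levels
for level in (200, 1000, 5000):
    print(f"usage {level}: predicted response {predict(intercept, slope, level):.2f}")

# 3) Rule of thumb: the slope is the expected change per one-unit usage increase
print(f"each extra unit of usage adds about {slope:.5f} to the response time")
```

With the real data, the slope from the fit would serve as the rule in item 3. A prediction at a usage level outside the range actually observed (5000 may be one, depending on the data) is an extrapolation and deserves extra caution.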