Evaluate: Qualitative Methods, October 2, 2007


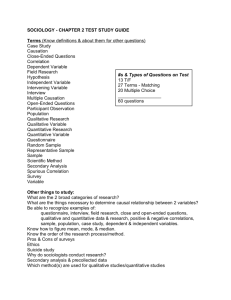

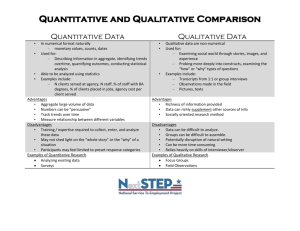

NEEDS, DESIGN, EVALUATE, IMPLEMENT

Evaluation
A little out of sequence due to scheduling
Will get more implementation over next two weeks
Imagine you’ve implemented your application
These are techniques you will need to design user study (end of project)

Methods for evaluating system
Qualitative
  – Rich, subjective
  – Exploring concepts
  – More useful for earlier input
Quantitative
  – Precise, objective, repeatable
  – Demonstrating claims
  – More useful at documenting improvement
  – Can be expensive

For your project
Will require aspects of both qualitative and quantitative methods
  – Qualitative: How users react to project, perceptions?
  – Quantitative: How users perform on project?
What would you improve on next iteration?
  – Perhaps users’ perceptions of performance more important than actual values
  – Elevator waiting story

Design evaluation methods!
Most important aspect of evaluation is upfront design!
  – Expensive to line up users, collect data
  – Design to collect right information
Pick appropriate method for what you want to learn

Applying an evaluation method
Determine the activity to observe
Develop the method
Human subjects review approval
Pilot test the method
Recruit participants
Collect the data
Inspect & analyze the data
Draw conclusions to resolve design problems, reflect on what you learned
Redesign and implement the revised interface

Demographic information
Demographic data
  – Age, gender, culture
  – Task expertise, experience
  – Motivation
  – Frequency of use
  – Education, literacy, training
No matter what method, collect demographic data

Environmental information
Besides info on the user, may also need info on the operating environment
  – Windows, Mac, Linux?
  – Firefox, Internet Explorer, Safari?
  – Wired ethernet, wireless, modem
  – Morning, afternoon, night
  – Office, mobile, home

Qualitative methods
“Discount” usability methods
  – Heuristic Evaluation
  – Cognitive Walkthrough
Questionnaire / Survey
Think aloud protocol
Co-discovery
Semi-structured interview
Deploy and observe in use

“Discount” usability methods
Enable evaluation at early stage, before prototype implemented
Conducted quickly, inexpensively
Early evaluation investment saves downstream development costs
  – Heuristic evaluation
  – Cognitive walkthrough

Heuristic Evaluation
Fancy way to describe expert review
  – HCI expert
  – Domain expert
Expert review identifies usability issues before implementation
Our grades on your homework are a form of heuristic evaluation

Evaluation heuristics
Visibility of system status
Match between system and the real world
User control and freedom
Consistency and standards
Error prevention
Recognition rather than recall
Flexibility and efficiency of use
Aesthetic and minimalist design
Help users recognize, diagnose, and recover from errors
Help and documentation

Heuristic evaluation method
Multiple experts individually review (around 5 experts find 75% of problems)
Observer records issues, answers questions, gives hints
Conduct using low fidelity prototype or task analysis with storyboards and scenarios
Generate list of usability problems according to heuristic compromised

Heuristic Evaluation analysis
After creating list of problems
  – Rank severity
  – Estimate fixability
  – Suggest possible fixes
Analysis may involve larger team

Heuristic Evaluation as rigorous design review
You can make a living out of doing Heuristic Evaluation
  – Substantial consulting market for conducting Heuristic Evaluation
You may pay a consultant to do a Heuristic Evaluation
  – Know what you’re paying for
  – Especially the Severity, Fixability, Potential Fix aspects

Learning more about Heuristic Evaluation
You can learn to do a Heuristic Evaluation:
http://www.useit.com/papers/heuristic/

Cognitive Walkthrough
Have user imagine walking through the process of using system
Can use low-fidelity prototyping, partially implemented prototype
Can use target user rather than expert
  – Pluralistic walkthrough uses experts, users, developers
Like a code walkthrough
C. Wharton et al. "The cognitive walkthrough method: a practitioner's guide" in J. Nielsen & R. Mack "Usability Inspection Methods" pp. 105-140.

Walkthrough procedure
Give user representation of interface and task
  – Can they discover how to accomplish goal with description of interface?
  – Can ask “From here, how would you like to accomplish…?”
Step through interface
  – User takes action, system provides response
  – Describe actions not depicted in interface representation
  – Somewhat like Wizard of Oz

Stepping through interface
Will user try to achieve the right goal?
  – Conceptual model of goals and tasks
Will user notice correct action is available?
  – Visibility
  – Understandability
Will user associate correct action with the goal to be achieved?
  – Aligning goals with sequence of actions
If correct action performed, will user see progress toward solution?
  – Feedback

Next assignment
Testing storyboard with one user
  – Effectively, this is a cognitive walkthrough
  – Create storyboard
  – Define task
  – Step through with one user

Questionnaires & surveys
User responses to specific questions
Preparation is expensive, administration relatively cheap
Oral vs. written
  – Oral provides interaction, followup, but takes more time
  – Written more efficient, can provide quantitative data

Designing questions
Design questions with analysis in mind
  – Closed format more precise, easier to analyze
    Convert qualitative to quantitative measures
    You give categories to users
  – Open-ended questions provide richer feedback, longer to analyze
    Users give you categories

Designing survey questions
Multiple choice
  – Collecting information
Ordinal ranking
  – Expressing relative preferences
Likert scales
  – Expressing personal reactions

Closed format styles
Multiple choice
  Which social networking systems do you use?
    facebook
    MySpace
    LinkedIn
    Orkut
    Other_____________
Ordinal ranking
  Rank frequency of use from 5 (most frequent) to 1 (least frequent); 0 = unused
    ___ facebook
    ___ MySpace
    ___ LinkedIn
    ___ Orkut
    ___ Other__________

Likert scales
Ask users to rate on a numeric scale
Odd number scale allows a neutral midpoint (5- or 7-point scale)
Even number scale forces taking a position (4- or 6-point scale)
“Anchors” give examples of points along the scale

Example question
How important is the Berkeley-Stanford Big Game?
Scale: Not Important to Very Important
Anchors:
  – Could not care less
  – Maybe I’ll go if my friends go
  – Most important event this Fall

Closed Format
Advantages
  – Clarify among alternatives
  – Easily quantifiable
  – Eliminate useless answers
  – Relatively quick to administer
Disadvantages
  – Must cover whole range
  – All choices should be similarly likely
  – Don’t get interesting, “different” reactions

Questions people can answer about themselves
What they do
How they do it
Opinions about current activities
Complaints about current activities
Comparing one thing with another
How often they have done something in the recent past

Questions people cannot answer about themselves
Predicting what they would do / like / want
Imagining a hypothetical scenario
Whether they would like a certain feature or product
Estimating how often they do things
What’s most important?

Web-based survey tools
Surveymonkey
  – http://www.surveymonkey.com/
Zoomerang
  – http://info.zoomerang.com/
Allows free basic analysis, more advanced features for fee
Can extend reach to large number of respondents

Thinking aloud protocol
Have subject “think out loud” while performing task
Borrowed from psychology to elicit cognition
Requires training task
Facilitator actively prompts if subject falls silent for more than 10 seconds
  – “What are you thinking now?”
  – “So, you are trying to…?”
  – “And now you are…?”

Exercise: Volunteer
Never used Photoshop before

Co-discovery
Have two people work on a task together (even though the task is normally done by one person)
Coordination with each other naturally elicits cognition

Exercise: Two volunteers
Never used Photoshop before

Think aloud and co-discovery
Valuable to evaluate tasks that require cognition
Time intensive
Rich feedback
Think aloud requires training

Semi-structured interviews
Interactively asking questions (face-to-face, telephone)
Give users chance to explain “why” to complement “what” they did, subjective user’s viewpoint
Can help with design questions
  – “What improvements would you suggest?”
Can be done individually or in groups

Semi-structured interviews
Begin with list of open-ended questions
  – Ask all users these questions
  – Let users elaborate
  – Flexibility to ask follow-up questions
Must audio-record
Interviewer should attend to user (not notepad or laptop), use audio record for data (note timestamps)

Questionnaire Issues
Language
  – Beware terminology, jargon
Clarity
  – “How effective was the system?” (ambiguous)
Avoid leading questions
  – Phrase neutrally rather than positive or negative
    “How easy or hard was it to accomplish the task?”

Questionnaire Issues (2)
Prestige bias
  – People answer a certain way because they want you to think that way about them
Embarrassing questions
  – “What did you have the most problems with?”
Hypothetical questions
“Halo effect”
  – When estimate of one feature affects estimate of another (e.g. intelligence/looks)
  – Aesthetics & usability, one example in HCI

Interviews
Disadvantages
  – Subjective view
  – Interviewer(s) can bias the interview
  – Problem of inter-rater or inter-experimenter reliability (agreement)
  – Time-consuming
  – Hard to quantify

Pilot test observation method
Pilot test method with some target users
  – Debug the questions, methods
  – Also debug logistics
  – Don’t count pilot data in analysis
Make changes now before collecting data (want method for collecting data to be consistent)

Methods used in combination
Mix of closed format, open-ended questions
Surveys, questionnaires often used with quantitative performance measures to assess how users feel about interactions

Mechanics of user testing
Readings give more detailed nuts and bolts
Common sense structuring of the experience to help it run smoothly

Analyzing qualitative data
Rich, open-ended data
Goal: Structure to characterize, describe, summarize data
Sounds harder than it is

Analyzing qualitative data
Exercise to immerse in data
  – Develop categories to count
    Range
    Average
  – Identify common patterns
    Allows identifying the interesting, unusual, exceptions
Also look for correlations

Exercise: Analyzing conceptual map of Berkeley
Example of rich, qualitative data
See if we can detect some patterns
Characterize set of qualitative data

Berkeley map
Number of features?
Format of map
Common features
  – Landmarks
  – Roadways
Unusual features
Assessments
Correlations

Qualitative analysis
Start with things you can count
  – Average, range, median
Look for patterns that are in common
Recognize features that are unusual, interesting
Look for correlations
Reflect on what the data is saying

Qualitative study of your project
What do you want to learn
  – User reactions, perceptions
  – Conceptual model problems
  – Areas to improve design
  – Does the design work?

Next time
Quantitative methods
Readings
  – "A face(book) in the crowd: social Searching vs. social browsing"
  – "iPod distraction: effects of portable music-player use on driver performance"
Questions on Project Proposal assignment?
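
Sketch: counting features in qualitative data
The "start with things you can count" step from the Qualitative analysis slide could look roughly like the sketch below, applied to coded conceptual maps. Everything in it is an assumption made for illustration: the participant IDs, the feature lists, the `summarize` helper, and the thresholds for "common" (appears in more than half of the maps) and "unusual" (appears in exactly one map). It is not data or a procedure from the lecture.

```python
from collections import Counter
from statistics import mean, median

# Hypothetical coded observations: the features each participant drew on
# a conceptual map. Participant IDs and feature lists are invented.
maps = {
    "P1": ["Campanile", "Telegraph Ave", "Sather Gate"],
    "P2": ["Campanile", "University Ave"],
    "P3": ["Campanile", "Telegraph Ave", "Memorial Stadium", "BART"],
    "P4": ["Sather Gate", "Telegraph Ave", "Campanile"],
}


def summarize(maps):
    """Count features per map, then separate common from unusual features."""
    counts = [len(features) for features in maps.values()]

    # Number of maps each feature appears in; set() guards against a
    # feature being listed twice on the same map.
    frequency = Counter(f for features in maps.values() for f in set(features))

    n = len(maps)
    # Assumed thresholds: "common" = more than half of the maps,
    # "unusual" = exactly one map.
    common = sorted(f for f, c in frequency.items() if c > n / 2)
    unusual = sorted(f for f, c in frequency.items() if c == 1)

    return {
        "average": mean(counts),
        "median": median(counts),
        "range": (min(counts), max(counts)),
        "common": common,
        "unusual": unusual,
    }


summary = summarize(maps)
for key, value in summary.items():
    print(f"{key}: {value}")
```

The counts only give a starting structure. The unusual features and any correlations still need to be read in context, as the slide notes.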